WTF Bites

-

SYSTEMD CAN FAIL TO BOOT IF RANDOM NUMBERS AREN'T SUFFICIENTLY DIFFERENT!

I work with payment terminals. One of the standard bits of information that's sent when you pay with a card is called the Unpredictable Number.

We got a test failure back on our new V2 software because the unpredictable number isn't the same as in V1. Yes, the unpredictable number was too unpredictable.

-

@levicki said in WTF Bites:

@sebastian-galczynski said in WTF Bites:

Of course you can't run normal Debian on it

Did you try first updating BIOS on the mainboard?

Hmm...

When the RDRAND bug in Ryzen 3000 first surfaced back in June, Linux users widely reported that their entire Ryzen 3000-powered systems wouldn't boot. The failure to boot was due to systemd's use of RDRAND—and it wasn't systemd's first clash with AMD and a buggy random-number generator, unfortunately.

Do you see that? SYSTEMD CAN FAIL TO BOOT IF RANDOM NUMBERS AREN'T SUFFICIENTLY DIFFERENT!

Systemd being dumb? Whodathunk!

-

@levicki said in WTF Bites:

Did you try first updating BIOS on the mainboard?

Nope. But the test in the article returns something different than 0xFFFFFFFF, so I assume this is not this problem. Or did Ubuntu's initrd kernel patch the microcode on startup? I'll check it later.

-

@sebastian-galczynski said in WTF Bites:

@levicki said in WTF Bites:

Did you try first updating BIOS on the mainboard?

Nope. But the test in the article returns something different than 0xFFFFFFFF, so I assume this is not this problem. Or did Ubuntu's initrd kernel patch the microcode on startup? I'll check it later.

I believe Linux always installs microcode in the CPU if it is available. And that is also about the only difference between clean Debian and clean Ubuntu: Debian does not enable non-free by default, so its installer does not include the microcode, while Ubuntu does not care about free and has always had the microcode.
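To see which microcode revision the running kernel actually reports, something like the following might do. It is a minimal sketch, assuming an x86 Linux system whose `/proc/cpuinfo` carries a `microcode` line per logical CPU; the function name `microcode_revisions` is made up for illustration.

```python
import re


def microcode_revisions(cpuinfo_text):
    """Return the distinct values of the 'microcode' field found in cpuinfo_text."""
    # On x86 Linux each logical CPU block has a line of the form "microcode : 0x...".
    found = re.findall(r"^microcode\s*:\s*(\S+)", cpuinfo_text, re.MULTILINE)
    return sorted(set(found))


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo") as f:
            print(microcode_revisions(f.read()))
    except OSError:
        # Not Linux, or /proc is not available.
        print("no /proc/cpuinfo here")
```

Comparing the value printed under the two distributions on the same machine would show whether one of them loads a newer revision at boot than the BIOS provides.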

-

@sebastian-galczynski said in WTF Bites:

Why is the "TearFree" option always disabled by default in Xorg.conf?

In this case, we have a definitive answer:

Works great, no more tearing.

Wait, what century is this?!?

-

Do you see that? SYSTEMD CAN FAIL TO BOOT IF RANDOM NUMBERS AREN'T SUFFICIENTLY DIFFERENT!

They're assuming they get unique numbers?

I think (if I remember correctly) @Gąska‘s point is that they‘re using random numbers in the first place!

-

@levicki yeah, it seems really dubious that you could patch a defective hardware RNG with microcode.

-

@levicki yeah, it seems really dubious that you could patch a defective hardware RNG with microcode.

Well, maybe yes. Depends on which way it is ‘faulty’.

If the RNG was really faulty in the sense that the actual circuit is not producing noise, there would be no fix in microcode. But if it incorrectly translates the x86 instruction to the corresponding micro-instruction sequence—which probably contains some logic to convert whatever distribution the circuit produces to uniform or collect the desired number of bits or something—that can obviously be fixed by updating that decoding.
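For a feel of what "convert whatever distribution the circuit produces to uniform" can mean, here is the classic von Neumann extractor. It is a textbook technique for independent but biased bits, picked purely as an illustration; nothing here says what conditioning a real CPU uses, and the function name is made up.

```python
import random


def von_neumann_extract(bits):
    """Debias a sequence of independent 0/1 bits using non-overlapping pairs."""
    out = []
    for a, b in zip(bits[0::2], bits[1::2]):
        if a != b:
            # (0, 1) -> 0 and (1, 0) -> 1; equal pairs are thrown away.
            out.append(a)
    return out


if __name__ == "__main__":
    rng = random.Random(1)  # fixed seed so the demo is repeatable
    # Simulated noise source that produces a 1 about 80% of the time.
    biased = [1 if rng.random() < 0.8 else 0 for _ in range(100_000)]
    fair = von_neumann_extract(biased)
    print(sum(biased) / len(biased), sum(fair) / len(fair), len(fair))
```

Note that an input stuck at a constant value gives this extractor nothing to emit: every pair is equal, so the output is empty.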

-

@levicki said in WTF Bites:

@levicki yeah, it seems really dubious that you could patch a defective hardware RNG with microcode.

To be honest, you can patch a lot with microcode. Problem is (if I remember correctly) that decoding instructions that execute from microcode requires microcode assist in decoding so there is a penalty.

Are there even any instructions that don't actually execute from microcode these days? I thought they basically translate everything to micro-instructions now.

-

Wait, what century is this?!?

The Century of the Linux Desktop™, obviously

-

@levicki said in WTF Bites:

- How do you release a CPU with a broken RNG and don't notice it during QA?

The same way you release any other broken piece of software. Or create any other kind of catastrophe. People suck, overworked people suck even more.

- How much of that RNG is really in hardware if you can patch it with microcode?

Considering it's always returning UINT_MAX? It might very well be just a few bytes to pipe the result from HW to register, except the piping doesn't work.

- What is the performance impact of said patch on the RNG bandwidth?

Considering it's always returning UINT_MAX? Probably none as it's clearly an accidental overwrite/lack-of-write and the actual functionality of the generator was likely left unaltered.

- Why are you writing all this if you know he won't read any of it?

Dunno. I'm kinda bored, and I don't have anything better to do.

-

@Gąska Seems we have a severe case of not enough [emoji] over here! Can I get some Apathy™, stat!

-

@Rhywden shitposting is the highest form of [emoji].

-

@Gąska I shall be lenient and grant you that point. But don't make a habit out of it!

-

The same way you release any other broken piece of software. Or create any other kind of catastrophe. People suck, overworked people suck even more.

Sure. But we're not talking about a random piece of software there. CPUs are subjected to a huge number of tests (both before and after manufacturing), mainly because a serious hardware bug that's not fixable with a microcode update could end up being extremely expensive for the manufacturer. Of course, test coverage is never 100%, but not detecting that an instruction is completely and obviously broken is something so huge it should never happen, even if a single person messed up.

The more believable explanation is that they knew, but chose to release anyways. Then the question becomes: how did they think they could get away with it?

-

Are there even any instructions that don't actually execute from microcode these days?

Basic ops like register transfers are very nearly one-to-one. Complex ones (such as double-precision floating point divide) are done in significant numbers of micro-steps. It's either that or use an enormous amount of chip area (and maybe power too) for uncommon cases.

-

Basic ops like register transfers are very nearly one-to-one

Agner Fog maintains tables for a bunch of x86 architectures.

Since I was curious, I looked up `divsd` (double precision division) and it seems to be mostly a single µop according to his tables, albeit with high latency.

-

@Zerosquare said in WTF Bites:

Of course, test coverage is never 100%, but not detecting that an instruction is completely and obviously broken is something so huge it should never happen, even if a single person messed up.

Here's the thing: how do you test whether random number generator is generating random numbers?

-

@Zerosquare said in WTF Bites:

how did they think they could get away with it?

They assumed Windows would never be on long enough to experience the bug?

-

@Zerosquare said in WTF Bites:

Of course, test coverage is never 100%, but not detecting that an instruction is completely and obviously broken is something so huge it should never happen, even if a single person messed up.

Here's the thing: how do you test whether random number generator is generating random numbers?

Usually by initializing with identical values multiple times and comparing the output.
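That check applies to seeded pseudo-random generators; an instruction like RDRAND has no seed to set. A minimal sketch with Python's standard `random.Random`, where the helper name `first_outputs` is made up:

```python
import random


def first_outputs(seed, count=5):
    """Return the first `count` 32-bit outputs of a generator seeded with `seed`."""
    rng = random.Random(seed)
    return [rng.getrandbits(32) for _ in range(count)]


if __name__ == "__main__":
    # Same seed: the two sequences must match exactly.
    print(first_outputs(42) == first_outputs(42))
    # Different seeds: the sequences are expected to differ.
    print(first_outputs(42) == first_outputs(43))
```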

-

Here's the thing: how do you test whether random number generator is generating random numbers?

Using statistical tests, like this one for example.

But you don't even need that to detect a fault as simple as "all bits are stuck at 1".
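As a rough sketch of both ideas: a trivial "is it stuck?" check, plus the frequency (monobit) test described in NIST SP 800-22. The monobit test is my pick for illustration, the function names are made up, and the samples are assumed to have already been read into a list of integers.

```python
import math
import random


def looks_stuck(samples):
    """True if every sample is identical, e.g. all equal to 0xFFFFFFFF."""
    return len(samples) > 1 and len(set(samples)) == 1


def monobit_p_value(samples, width=32):
    """Monobit test: p-value for the hypothesis that ones and zeros are equally likely."""
    n = len(samples) * width
    if n == 0:
        raise ValueError("need at least one sample")
    mask = (1 << width) - 1
    ones = sum(bin(s & mask).count("1") for s in samples)
    # (ones - zeros) / sqrt(n) is roughly standard normal for a fair source.
    s_obs = abs(2 * ones - n) / math.sqrt(n)
    return math.erfc(s_obs / math.sqrt(2))


if __name__ == "__main__":
    broken = [0xFFFFFFFF] * 1000
    rng = random.Random(0)
    healthy = [rng.getrandbits(32) for _ in range(1000)]
    print(looks_stuck(broken), monobit_p_value(broken))
    print(looks_stuck(healthy), monobit_p_value(healthy))
```

A very small p-value means the bit balance is implausible for a fair source. Passing this one test says little on its own, which is why real test batteries run many different tests.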

-

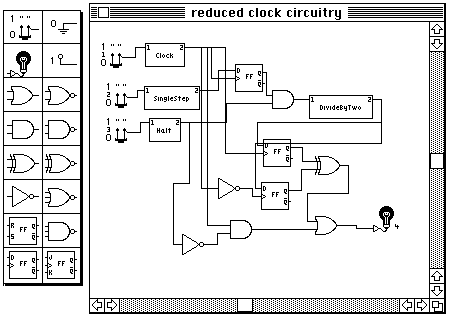

@levicki This is a visualization of Ryzen 3000's `RDRAND` instruction

-

@Carnage Using random numbers is not dumb.

-

Holio fuck! If the rest of the installation is similar then I hope I never have to be anywhere near it...

Seriously, I do hope they have one of those ground return interruptor thingamabobs. Otherwise if there's ever a ground fault which draws enough power to melt the zipties it might disconnect the ground, then roasting the next unsuspecting person who touches whatever was meant to be protected this way...

-

@Zerosquare said in WTF Bites:

But you don't even need that to detect a fault as simple as "all bits are stuck at 1".

Hindsight is 20/20. It's a rarely used instruction, the circuit behaves correctly, the firmware passes unit tests, it's only after assembling everything that the issue shows up, and you can't even write a test against it because every result could be correct (and you don't want random failures in your automated tests, especially literal random failures). Sure, if someone played with the CPU and repeatedly ran that particular instruction, it'd be obvious there's a problem, but the hard part is knowing that this particular instruction needs playing with.
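The "every result could be correct" part can be put in numbers. Assuming an ideal generator that returns independent, uniformly distributed values, the chance of seeing the all-ones value several times in a row is easy to compute; the function name below is made up.

```python
from fractions import Fraction


def false_alarm_probability(width_bits, consecutive):
    """Chance that an ideal generator returns all-ones `consecutive` times in a row."""
    return Fraction(1, 2 ** (width_bits * consecutive))


if __name__ == "__main__":
    for n in (1, 2, 4):
        print(n, float(false_alarm_probability(32, n)))
```

Under that assumption, a test that only fails after four consecutive all-ones 32-bit reads would produce a false alarm with probability 2^-128.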

-

If it was a random manufacturing fluke, I'd agree with you: it isn't feasible to run a complete test suite on every manufactured chip.

But this is a design bug, and test suites for CPU design/verification absolutely do include checking that every single instruction operates correctly. When each manufacturing run costs several hundred of dollars, you don't take chances and test everything to death.

-

@levicki said in WTF Bites:

That's very likely, do let us know.

The BIOS is from July 2019, so it's not patched. So it must be Ubuntu.

-

@Carnage Sadly it's near standard now for any recent Linux distribution. There is of course an alternative from the gentoo people, but good luck running it.

-

@Carnage Sadly it's near standard now for any recent Linux distribution. There is of course an alternative from the gentoo people, but good luck running it.

Do you have to compile a suitable CPU first?

-

it seems to be mostly a single µop according to his tables, albeit with high latency

It's a multistage op (hence the latency), but the stage management might now be well enough understood to be directly in hardware. Looking through those tables, `fprem` seems to be an opcode that requires multiple steps (and in practice you need it in a loop in software anyway, according to what I've read).

-

@levicki said in WTF Bites:

performance hit of the lookup into said microcode

Probably not much of a hit, as that's likely a CAM. Power hit though.

-

compile a suitable CPU

What do you think CPU designers do? Draw out all the gates by hand?

-

compile a suitable CPU

What do you think CPU designers do? Draw out all the gates by hand?

I'm trying to dig up a picture of when Intel did do just that.

But I can't find it.

-

@levicki said in WTF Bites:

@dkf Sorry, what I meant by lookup was not only lookup itself but also copying of uOPs from it to the instruction queue.

Also, there is the penalty of executing this longer uOPs sequence.

I don't think the μOPs are copied as such, and the memory they're held in will be very fast indeed. The real cost is that there are more stages and there are timing constraints between them (linked to pipeline length).

-

I don't think the μOPs are copied as such, and the memory they're held in will be very fast indeed.

Yeah, this. They definitely don't go to standard memory or anything like that. Wikichip's diagrams include some throughput numbers (at least for the Skylake architecture). I guess they could end up in the instruction cache, though, if I parse that diagram correctly. Not sure what the circumstances for that are.

-

@ixvedeusi: the page the picture is from is a pretty good candidate for this topic, too:

-

@Applied-Mediocrity said in WTF Bites:

And what, game companies can't have investment rounds?

FAKE EDIT:

Cloud Imperium is creating Star Citizen, a record-shattering, largely crowdfunded space sim, and Squadron 42, a Hollywood-caliber, story-driven single-player game set in the same universe.

-

I guess they could end up in the instruction cache, though, if I parse that diagram correctly.

They probably use the same basic memory architecture as L1 cache, but aren't in the same address space. The management circuitry might be a little different too.

-

@Atazhaia and @loopback0 discussed in WTF Bites:

NodeBB's amazing emoticon search.

https://www.youtube.com/watch?v=rrWR5DqG4ZI

@Rhywden dunno if you intended for us to see your name...

-

compile a suitable CPU

What do you think CPU designers do? Draw out all the gates by hand?

I'm trying to dig up a picture of when Intel did do just that.

But I can't find it.

Hardware description languages, such as Verilog and VHDL, that can be compiled to gates first appeared in the early 1980s but didn't really gain widespread acceptance until the early 90s. I wouldn't be surprised if Intel was an early adopter, but before the mid 80s, at the earliest, yes, they drew gates by hand.

-

@HardwareGeek It was with the advent of VLSI that people stopped drawing gates by hand; it was just too mammoth a task. (Automatic interconnect routing was another big gain; it's a difficult problem, but tractable as long as you're not insisting on getting the optimal layout but rather just a workable one.)

-

@HardwareGeek said in WTF Bites:

compile a suitable CPU

What do you think CPU designers do? Draw out all the gates by hand?

I'm trying to dig up a picture of when Intel did do just that.

But I can't find it.

Hardware description languages, such as Verilog and VHDL, that can be compiled to gates first appeared in the early 1980s but didn't really gain widespread acceptance until the early 90s. I wouldn't be surprised if Intel was an early adopter, but before the mid 80s, at the earliest, yes, they drew gates by hand.

There is an awesome picture of an engineer (or team) drawing a CPU on a humongous paper, that was then reduced in size for making real hardware out of.

-

@HardwareGeek said in WTF Bites:

compile a suitable CPU

What do you think CPU designers do? Draw out all the gates by hand?

I'm trying to dig up a picture of when Intel did do just that.

But I can't find it.

Hardware description languages, such as Verilog and VHDL, that can be compiled to gates first appeared in the early 1980s but didn't really gain widespread acceptance until the early 90s. I wouldn't be surprised if Intel was an early adopter, but before the mid 80s, at the earliest, yes, they drew gates by hand.

There is an awesome picture of an engineer (or team) drawing a CPU on a humongous paper, that was then reduced in size for making real hardware out of.

Like, the floor of a large room covered with a single sheet of paper with tiny scribblings for a CPU pattern all over.

-

drawing a CPU on a humongous paper, that was then reduced in size for making real hardware out of.

Ah, that aspect of the process (the physical design) was computerized by at least the early 80s, although possibly still hand drawn (on the computer). By the late 80s, the concept of "standard cells" had arisen. You no longer needed to draw each AND and OR gate; you had a library of gates you could place and just make the connections to them.

HDLs vs. schematic diagrams were first, at least in my experience, used for simulating the design to prove its logical correctness, then later for being compiled into gates.

How a months-old AMD microcode bug destroyed my weekend [UPDATED]

![How a months-old AMD microcode bug destroyed my weekend [UPDATED]](https://cdn.arstechnica.net/wp-content/uploads/2019/10/bugs-684x380.jpg)