So... I am no longer blind about performance here ...

-

I suspect it was @Onyx's too

-

all those bots are caught up now though right?

Only @NetBot; @MathBot and @RPBot aren't yet, and then there's my own account to get caught up too.

@accalia said: I should make version 2.0 have some persistent storage so it knows the posts it's already liked and skips asking for their JSON entirely.

Assuming we can get reliable responses from the server, yes, that would be worth doing.
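A minimal sketch of the persistent-storage idea, assuming the bot can keep a small SQLite file next to it. The class name `LikedPostStore`, the table `liked_posts`, and the method names are all hypothetical; the real bot's code is not shown in this thread.

```python
import sqlite3


class LikedPostStore:
    """Remembers which post IDs the bot has already liked (hypothetical helper)."""

    def __init__(self, path=":memory:"):
        # Pass a file path (for example "liked.db") so the data survives restarts.
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS liked_posts (post_id INTEGER PRIMARY KEY)"
        )
        self.db.commit()

    def mark_liked(self, post_id):
        # INSERT OR IGNORE makes marking the same post twice harmless.
        self.db.execute(
            "INSERT OR IGNORE INTO liked_posts (post_id) VALUES (?)", (post_id,)
        )
        self.db.commit()

    def already_liked(self, post_id):
        row = self.db.execute(
            "SELECT 1 FROM liked_posts WHERE post_id = ?", (post_id,)
        ).fetchone()
        return row is not None

    def posts_to_fetch(self, candidate_ids):
        # Only these IDs still need their JSON requested from the server.
        return [pid for pid in candidate_ids if not self.already_liked(pid)]


store = LikedPostStore()
store.mark_liked(101)
store.mark_liked(103)
print(store.posts_to_fetch([101, 102, 103, 104]))  # [102, 104]
```

The point of the design is that the lookup is local, so posts that were already liked never cost the server a request.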

-

I suspect it was @Onyx's too

Yup. I'm allowing for T-1000 being a special snowflake and should be treated differently, for now.

Let's be honest, aggregating any kind of data on that kind of topic in realtime will be an issue no matter what kind of software is managing it. Discourse is just better at showing it than most. But I don't think it's an unsolvable problem. Little things we're slowly uncovering and that are getting fixed are, IMHO, all steps in the right direction, that will benefit us as users and Discourse in the long run.

-

Here are the API calls that are being made...

https://github.com/tdwtf/WtfWebApp/blob/master/TheDailyWtf/Common/Discourse/DiscourseApi.cs

This is what's being called fairly often..

https://github.com/tdwtf/WtfWebApp/blob/master/TheDailyWtf/Common/Discourse/DiscourseHelper.cs#L212

As is this...

https://github.com/tdwtf/WtfWebApp/blob/master/TheDailyWtf/Common/Discourse/DiscourseHelper.cs#L186

But, all these calls are cached for 5 minutes (in theory)...
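For illustration only, here is roughly what a 5-minute cache around an API call looks like. This is a Python sketch, not the site's actual C# code; `TTLCache`, `get_or_fetch`, and the fake clock are assumptions made for the example.

```python
import time


class TTLCache:
    """Caches fetched values for a fixed number of seconds (illustrative only)."""

    def __init__(self, ttl_seconds, clock=time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries = {}  # key -> (expires_at, value)

    def get_or_fetch(self, key, fetch):
        now = self.clock()
        entry = self._entries.get(key)
        if entry is not None and entry[0] > now:
            return entry[1]  # still fresh: no API call is made
        value = fetch()      # missing or expired: call the API once
        self._entries[key] = (now + self.ttl, value)
        return value


# Demo with a fake clock so nothing has to sleep.
fake_now = [0.0]
calls = []


def fetch_topic():
    calls.append(fake_now[0])
    return {"fetched_at": fake_now[0]}


cache = TTLCache(ttl_seconds=300, clock=lambda: fake_now[0])
cache.get_or_fetch("topic:1000", fetch_topic)  # miss, fetches
fake_now[0] = 299.0
cache.get_or_fetch("topic:1000", fetch_topic)  # hit, served from cache
fake_now[0] = 300.0
cache.get_or_fetch("topic:1000", fetch_topic)  # expired, fetches again
print(len(calls))  # 2
```

If the real cache keys differ per caller or per URL variant, the hit rate drops and the "in theory" caveat starts to matter.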

-

I guess technically I could just add 1 and queue a proper refresh in 15 minutes, but I need to design a backend to support this (queue a job unless already queued)

As in counting in redis?

750

7.5 methinks
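The "add 1 now, queue a proper refresh in 15 minutes unless one is already queued" idea from the top of this post could look something like the sketch below. It is an in-memory toy under stated assumptions: `RefreshQueue`, `LikeCounter`, and the `recount` callback are hypothetical names, and a real backend would presumably keep the pending set somewhere shared (such as redis) rather than in a dict.

```python
class RefreshQueue:
    """Holds at most one pending refresh per topic (hypothetical sketch)."""

    def __init__(self, delay_seconds=15 * 60):
        self.delay = delay_seconds
        self.pending = {}  # topic_id -> time the refresh becomes due

    def schedule(self, topic_id, now):
        if topic_id in self.pending:
            return False  # already queued, do nothing
        self.pending[topic_id] = now + self.delay
        return True

    def pop_due(self, now):
        due = sorted(t for t, when in self.pending.items() if when <= now)
        for topic_id in due:
            del self.pending[topic_id]
        return due


class LikeCounter:
    def __init__(self, queue):
        self.queue = queue
        self.displayed = {}  # topic_id -> cached like count

    def on_like(self, topic_id, now):
        # Cheap path: bump the cached number and make sure a recount is queued.
        self.displayed[topic_id] = self.displayed.get(topic_id, 0) + 1
        self.queue.schedule(topic_id, now)

    def run_due_refreshes(self, now, recount):
        # Expensive path: run the real count once per topic per delay window.
        for topic_id in self.queue.pop_due(now):
            self.displayed[topic_id] = recount(topic_id)


queue = RefreshQueue()
counter = LikeCounter(queue)
for second in (0, 10, 20):
    counter.on_like(1000, now=second)
print(counter.displayed[1000], len(queue.pending))  # 3 1
```

However many likes arrive inside the window, the expensive recount runs once.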

-

I migrated 12 pests to a new topic: @Blakeyrat and @accalia argue about Discourse and Bugtracking

-

`create index idxTemp on posts(topic_id, like_count) where deleted_at is null` does not help.

I'll just bet it doesn't.

-

You mean... Only cause server load whenever we suspect that the server is having a hard time? Yeah, that makes sense.

-

@boomzilla FYI

-

Is there a reason you don't seem to have a corresponding topics.like_count ?

To remain accurate, that column would have to be updated via trigger or sproc every time someone issued a like, which is going to add a not insignificant amount of traffic on that table. Or, that column could be updated by a job, but then it's no longer instantaneously accurate.

Neither of those options seems like a better alternative, unless there's a third one I'm missing...

-

unless there's a third one

Do it like YouTube does view counting, real time for low numbers, delayed updates for higher counts?

-

That's the plan, just need to build infrastructure to support that.

-

that column could be updated by a job, but then it's no longer instantaneously accurate.

I don't have a big problem with that. Does anybody really care whether t/1000 displays 1540362 likes right now instead of 5 minutes from now? Unlike the post numbers, where (a few) people care very much that they be instantaneously accurate, AFAIK there's no easy way to determine who submitted the 1600000th like, so no competition to be the individual who does that, so no need for quick updating.

-

Do it like YouTube does view counting, real time for low numbers, delayed updates for higher counts?

My understanding is that the reason behind that is more based on replication across a distributed network and anti-gamification of viewcount rather than performance considerations. That could work, but as we saw above, Postgres ain't great at counting, and it would have to count to decide the cutoff point....

-

Postgres ain't great at counting

That would make the argument for storing the count on the topic level, at which point you could refer to it when a post is liked to determine if you should go ahead and update the count in real-time or defer for a later job.
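A rough sketch of that decision, assuming the count lives on the topic row. The cutoff `REALTIME_LIMIT`, the `Topic` class, and the `pending_likes` field are made up for the example; nothing here is Discourse's actual schema.

```python
REALTIME_LIMIT = 1000  # assumed cutoff; the thread does not settle on a number


class Topic:
    def __init__(self, like_count=0):
        self.like_count = like_count  # count stored on the topic itself
        self.pending_likes = 0        # likes not yet folded into like_count


def record_like(topic):
    """Update small topics immediately, defer the update for huge ones."""
    if topic.like_count < REALTIME_LIMIT:
        topic.like_count += 1
        return "realtime"
    topic.pending_likes += 1
    return "deferred"


def flush_pending(topic):
    """What a periodic job would do for the deferred topics."""
    topic.like_count += topic.pending_likes
    topic.pending_likes = 0


small = Topic(like_count=5)
big = Topic(like_count=1540362)
print(record_like(small), small.like_count)  # realtime 6
for _ in range(3):
    record_like(big)
print(big.like_count, big.pending_likes)     # 1540362 3
flush_pending(big)
print(big.like_count)                        # 1540365
```

The check reads a stored number, so deciding the cutoff never requires counting rows.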

-

I don't have a big problem with that. Does anybody really care whether t/1000 displays 1540362 likes right now instead of 5 minutes from now?

IT MUST BE AT ALL TIMES ACCURATE*!

AFAIK there's no easy way to determine who submitted the 1600000th like, so no competition to be the individual who does that, so no need for quick updating.

    select *
    from (
        select l.*,
               row_number() over (order by like_id /* or like_date, if you prefer that to be the criterion */) as like_ordinal
        from likes l
        where l.topic_id = 1000
    ) t
    where t.like_ordinal = 1600000

This works in SQL Server. No guarantees for other RDBMSes (though you could probably do something similar with `TOP` or `LIMIT`).

*wherever possible
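For what it's worth, a `LIMIT`/`OFFSET` variant of the same lookup can be tried out with SQLite from Python. The `likes` table, its columns, and the sample rows are invented for the demo; they are not the real schema.

```python
import sqlite3

db = sqlite3.connect(":memory:")
db.execute(
    "CREATE TABLE likes (like_id INTEGER PRIMARY KEY, topic_id INTEGER, user_name TEXT)"
)
# Twenty fake likes: odd IDs land on topic 1000, even IDs on topic 2000.
rows = [(i, 1000 if i % 2 else 2000, f"user{i}") for i in range(1, 21)]
db.executemany("INSERT INTO likes VALUES (?, ?, ?)", rows)


def nth_like(db, topic_id, n):
    """Return the n-th like (1-based) on a topic, ordered by like_id."""
    return db.execute(
        "SELECT like_id, user_name FROM likes "
        "WHERE topic_id = ? ORDER BY like_id LIMIT 1 OFFSET ?",
        (topic_id, n - 1),
    ).fetchone()


print(nth_like(db, 1000, 3))  # (5, 'user5')
```

Like the `row_number()` version, this still has to walk the topic's likes in order, so it is an after-the-fact query and not something to run on every page view.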

-

Nice SQL. Useful for the half-dozen who have access to the DB

-

Ok, you can, in theory, find out after the fact. I'm not surprised by this. However, it is not something that is available to a non-admin, much less in real time during the rush to hit a milestone number, so still no need for quick updating.

-

That would make the argument for storing the count on the topic level, at which point you could refer to it when a post is liked to determine if you should go ahead and update the count in real-time or defer for a later job.

This is certainly possible, but that would make for some interesting code.

All this is making me think that if I need to design a high-throughput (say, 20k+ TPS) banking system, I should definitely choose PostgreSQL!

-

Nice SQL. Useful for the half-dozen who have access to the DB

As a Software Developer Engineer Architect of Enterprisey Architecture Development Systems™, my role is to provide solutions to technical problems. Political problems, like actually getting access to the server, are outside my domain.

-

Updated report for last day with analysis: (removed ip addresses and sensitive info from report)

    analyzed: /var/log/nginx/access.log
    ----------------------------------------------------------------------------------------------------
    17/Jun/2015:07:35:19 +0000 - 18/Jun/2015:02:33:23 +0000
    ----------------------------------------------------------------------------------------------------
    Total Requests: 1301220 ( MessageBus: 646536 )
    ----------------------------------------------------------------------------------------------------
    Top 30 users by Server Load

    Username               Duration    Reqs  Routes
    --------               --------    ----  ------
    [Anonymous]            46487.53  144376  -(23600.11) topics/show(9351.47) user_avatars/show(3862.74) session/csrf(2360.15) user_avatars/show_letter(2116.66)
    PaulaBean               3544.90   12959  topics/show(3500.04) list/category_latest(39.51) posts/destroy(4.44) topics/status(0.91)
    RaceProUK               2634.88    8636  topics/show(1413.84) topics/timings(365.74) posts/show(269.85) posts/create(145.17) post_actions/create(123.53)
    obeselymorbid           2580.25    3440  topics/timings(1026.86) user_avatars/show(468.78) topics/show(225.52) post_actions/create(177.49) clicks/track(133.43)
    dcon                    1811.37    1177  post_actions/create(993.20) topics/timings(458.43) posts/show(234.54) user_avatars/show(29.73) topics/show(28.68)
    abarker                 1633.54    2901  topics/timings(1164.73) posts/show(128.40) posts/create(109.95) post_actions/create(61.70) topics/show(59.99)
    dse                     1500.33     410  user_avatars/show_letter(1188.91) user_avatars/show(268.61) topics/timings(29.20) topics/posts(3.83) post_actions/create(3.06)
    HardwareGeek            1471.02    4842  topics/timings(373.76) user_actions/show(248.99) draft/update(208.33) post_actions/create(150.81) posts/show(141.20)
    coldandtired            1458.04    1235  user_avatars/show(624.82) topics/timings(466.58) user_avatars/show_letter(244.86) topics/show(57.09) list/latest(44.50)
    accalia                 1420.06    5559  topics/timings(392.48) topics/posts(268.35) posts/show(201.48) topics/show(144.04) posts/create(138.82)
    xaade                   1392.96    2308  topics/timings(522.22) user_avatars/show(116.15) draft/update(107.08) topics/show(101.80) posts/create(96.34)
    boomzilla               1235.77    3672  topics/timings(398.40) topics/show(195.38) post_actions/create(140.12) posts/show(130.58) posts/create(94.37)
    PJH                     1183.18    4749  topics/timings(565.85) posts/show(356.33) topics/show(120.65) topics/posts(90.57) user_avatars/show(11.87)
    flabdablet              1024.49     855  topics/timings(558.37) draft/update(201.24) posts/create(68.46) composer_messages/index(58.42) post_actions/users(51.02)
    Zoidberg                 990.96    2341  topics/posts(557.75) posts/show(157.74) post_actions/create(126.16) notifications/index(82.16) exceptions/not_found(50.87)
    sockbot                  954.29    2596  topics/posts(557.17) posts/show(168.78) post_actions/create(134.19) notifications/index(47.42) exceptions/not_found(28.04)
    PleegWat                 890.97    1990  topics/timings(752.26) topics/show(80.20) list/latest(17.31) user_avatars/show_letter(5.64) posts/create(5.62)
    NetBot                   888.31    1362  topics/posts(711.59) notifications/index(100.84) post_actions/create(37.58) exceptions/not_found(21.17) topics/show(14.67)
    Onyx                     885.58    3149  topics/timings(635.86) posts/create(49.65) topics/show(43.29) draft/update(38.44) users/show(22.11)
    Placeholder              853.11     511  topics/timings(551.70) user_avatars/show(73.35) notifications/index(50.32) user_avatars/show_letter(40.97) post_actions/create(31.49)
    cartman82                848.74    2151  user_avatars/show_letter(278.65) topics/timings(177.24) search/query(137.43) posts/show(60.64) list/latest(50.31)
    loose                    840.18    2811  topics/timings(361.31) list/latest(148.71) user_avatars/show_letter(74.33) topics/show(59.16) user_avatars/show(45.87)
    OffByOne                 828.02    1972  posts/show(608.23) post_actions/create(87.12) topics/posts(69.76) topics/timings(46.67) topics/show(6.47)
    Zecc                     754.36    2022  topics/timings(577.93) topics/show(52.64) draft/update(44.19) posts/create(19.94) search/query(10.37)
    MathBot                  710.66     938  post_actions/create(528.34) notifications/index(97.77) topics/posts(64.86) exceptions/not_found(19.38) topics/show(0.24)
    ChaosTheEternal          695.34    3400  topics/timings(325.02) post_actions/create(109.53) topics/show(79.94) posts/show(63.22) topics/posts(26.06)
    Polygeekery              694.87    1595  topics/timings(296.28) posts/show(81.00) posts/create(73.64) draft/show(61.04) post_actions/users(57.44)
    Bulb                     654.35     381  topics/timings(458.13) draft/update(168.32) list/latest(7.90) posts/create(6.65) topics/show(4.49)
    dkf                      644.24    1525  topics/timings(327.70) draft/update(119.00) posts/create(74.43) list/unread(46.99) topics/show(39.17)
    FullPointerException     642.23     192  topics/timings(419.62) draft/update(55.64) user_avatars/show(37.31) user_avatars/show_letter(36.55) topics/show(29.46)
    ----------------------------------------------------------------------------------------------------
    Top 30 routes by Server Load

    Route                        Duration    Reqs
    -----                        --------    ----
    -                            23600.11    1364
    topics/timings               20924.97  101957
    topics/show                  18353.79   45061
    user_avatars/show             6720.51   28936
    user_avatars/show_letter      5381.20   10128
    topics/posts                  4930.55    9970
    posts/show                    4534.26   20092
    post_actions/create           3807.55    3468
    session/csrf                  2360.28   40375
    list/latest                   2216.41   12565
    posts/create                  1990.64     948
    draft/update                  1747.32    6722
    exceptions/not_found          1260.53   29458
    users/show                    1133.49     434
    notifications/index            898.24    3045
    search/query                   340.32     164
    clicks/track                   322.23    1173
    site_customizations/show       293.48    1684
    composer_messages/index        279.81    1316
    posts/update                   274.24     187
    user_actions/show              258.63     854
    draft/show                     158.65     584
    post_actions/users             148.01     545
    posts/replies                  147.84     652
    list/unread                    143.37     558
    site/settings                  134.87    4195
    list/category_latest           110.26     432
    user_actions/index             108.12     222
    about/index                     85.32      37
    topics/feed                     77.75     368
    ----------------------------------------------------------------------------------------------------
    Top 30 not found urls (404s)

    Url  Count
    ---  -----
    GET /session HTTP/1.1  27124
    POST /notifications/reset-new HTTP/1.1  1905

    Top 30 urls by Server Load

    Url  Duration  Reqs
    ---  --------  ----
    POST /topics/timings HTTP/1.1  30831.49  102111
    POST /post_actions HTTP/1.1  4199.74  3476
    POST /draft.json HTTP/1.1  3017.17  6730
    POST /posts HTTP/1.1  2271.47  954
    POST /logs/report_js_error HTTP/1.1  1996.50  641
    GET /session/csrf.json HTTP/1.1  1453.87  13218
    GET / HTTP/1.1  1174.92  5379
    GET /session HTTP/1.1  1021.15  27125
    GET /notifications HTTP/1.1  637.07  1904
    GET /t/an-exciting-startup-job-offer/49473 HTTP/1.1  566.95  472
    GET /t/2/last HTTP/1.1  527.00  4203
    GET /site_customizations/7e202ef2-56d7-47d5-98d8-a9c8d15e57dd.css?target=desktop&v=1db28a558ce825962e9acdec1c839e07&__ws=what.thedailywtf.com HTTP/1.1  375.97  1535
    GET /t/category-definition-for-meta/2/99999999 HTTP/1.1  368.57  4203
    GET /t/enlightened/8795 HTTP/1.1  309.53  226
    GET /latest.json HTTP/1.1  293.26  4207
    GET /t/1000/last.json?include_raw=1&track_visit=true HTTP/1.1  284.48  97
    POST /notifications/reset-new HTTP/1.1  213.60  1905
    GET /t/bethesdas-game-announcements-another-blakeytweets-topic/49427 HTTP/1.1  204.32  172
    GET /user_avatar/what.thedailywtf.com/accalia/64/23399.png HTTP/1.1  180.75  19
    GET /t/random-rant-of-the-night/47727 HTTP/1.1  147.00  24
    GET /t/defensive-programming/49469 HTTP/1.1  145.02  178
    GET /t/1673/last.json?include_raw=1&track_visit=true HTTP/1.1  137.78  84
    GET /t/botcore-release-mott555s-bot-pack/4628 HTTP/1.1  136.67  3
    GET /site/settings.json HTTP/1.1  134.87  4195

The report spans about 19 hours ... cc @apapadimoulis

-

In this interval @PaulaBean made almost 13K web requests to TDWTF, the vast majority to topics/show, costing us almost 1 hour of processing time. I would recommend halving or quartering her traffic: poll more aggressively on recent topics, tone it all the way down for old topics. In particular, https://github.com/tdwtf/WtfWebApp/blob/26539752f732bbe311f6769c6d5e54fa8d302120/TheDailyWtf/Common/Discourse/DiscourseApi.cs#L100 needs to be called less frequently.

-

@RaceProUK and other highly active users have standard usage patterns; a lot of topics were read :)

-

topics/timings counts and durations are WAY too high. We need to tune this method heavily if possible; it's accounting for an enormous amount of background load.

-

@dcon had a like binge (it will not be as bad once the latest update is applied); the like binge cost us about 20 minutes of processing time.

-

Looks like there is lots of room for improving perf on letter avatar generation: 10k letters =~ 83 minutes of work, which is high. (Note it is all cached, but the first req slips through.)

-

looks like site customizations is not cached and it should be.

Anyway... plenty for me to look at. I will run this on our customers as well and address common issues first (topics/timings, for example, is very high on the list).

-

Updated report for last day with analysis

Eighth place; I'm sure I can do better than that with a little effort. ;)

-

well

this was easy

and this was not easy

-

Based on those numbers, my three bots combined don't produce as much load as I do solo

It'll still be good to see if I can reduce their load, but I'm not all that worried, since there are 11 pure humans more active than my busiest bot

-

Yup, based on that list the only problematic bot is paula.

-

She's also the only user busier than me

-

First of all: Thank you for not dismissing this forum as "those guys who break anything, anyways" and actually doing these tests and improvements.

will run this on our customers as well

Can you give us some anonymized stats on other customers? Just to see how much better / worse we actually are speedwise?

Filed Under: we like numbers

-

Or, that column could be updated by a job, but then it's no longer instantaneously accurate.

Neither of those options seems like a better alternative, unless there's a third one I'm missing...

When the likes are rounded to the nearest hundred thousand, almost all likes are not going to trigger a change. Who cares if it's not instant on those topics? Even the x.yK topics, probably.

Might it be possible to set a flag or criterion for whether to instantly update or update by a job? This is a case where the worse it is to update the less we care about the update.

-

I optimized this topic by randomizing 9 posts to a new topic: Random numbers from Random posters

-

I just changed it from 5 minutes cache to 10 minutes, so hopefully it will help.

-

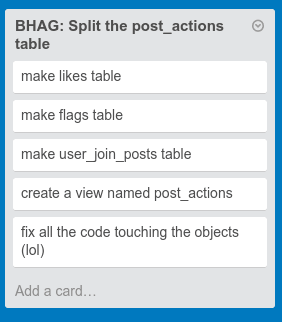

We count every god damn like on the topic so we can display "1.5M" on the front page

Can you throttle this.

Youtube does it.

Just say, 100k+

-

DELETE FROM likes WHERE topic_id = 1000

-

i've abstained from t/1k-ing the last few days due to work, so i'm not doing my part to help sam find problems

-

Hahaha it's funny because that would work if there was an actual `likes` table

-

Same here. Busy week

breaking discourse.

-

As a point of comparison with say "boing boing"

    Top 30 routes by Server Load

    Route                        Duration    Reqs
    -----                        --------    ----
    topics/show                   3904.02   33897
    user_avatars/show_letter      2496.89   13970
    user_avatars/show             1714.12   14079
    topics/timings                1213.13   50220
    topics/wordpress              1101.98   40000
    forums/status                  463.54   68295
    posts/show                     356.56   19154
    users/show                     291.15    1375
    topics/posts                   271.23    6940
    list/latest                    257.17    3923
    posts/create                   252.01     634
    topics/feed                    163.95    3104
    post_actions/create            153.78    1363
    posts/expand_embed             133.19     550
    exceptions/not_found            94.59    1177
    draft/update                    92.69    7432
    user_actions/index              86.83     959
    posts/replies                   77.06    3317
    list/latest_feed                71.26     803
    notifications/index             39.07     608
    posts/update                    19.63     162
    composer_messages/index         17.86     974
    session/create                  17.25      61
    search/query                    15.61      73
    clicks/track                    15.44     775
    omniauth_callbacks/complete     13.66      27
    list/category_latest            10.66     128
    onebox/show                     10.02     111
    posts/reply_history              8.54     355
    post_actions/users               7.64     424

So we are able to "show" 33K topics in 3904 secs ... that is about 115ms per topic show (avg)

On TDWTF it was 407ms per topic show (avg).

Keep in mind we have some front end caching, so this is only counting topics that slip into the rails stack and are not cached by middleware.

Looking at another route, say list/latest, it's:

BBS 65ms

TDWTF 176ms

So, our hardware runs about 2.7x faster than Digital Ocean. As expected, long topics on TDWTF have some perf impact, but it's not enormous when you look at avgs (topic show is 3.5x slower here; if it was the same type of topics as BBS it would probably be 2.7x as well).
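The averages above are just duration divided by request count. A small script to redo the arithmetic from the two route tables (the `REPORT` dict and `avg_ms` helper are only scaffolding for this example; the numbers are copied from the reports in this thread):

```python
# (total duration in seconds, request count) per route, copied from the reports.
REPORT = {
    "BBS": {"topics/show": (3904.02, 33897), "list/latest": (257.17, 3923)},
    "TDWTF": {"topics/show": (18353.79, 45061), "list/latest": (2216.41, 12565)},
}


def avg_ms(site, route):
    """Average milliseconds per request for one route on one site."""
    duration_s, reqs = REPORT[site][route]
    return duration_s / reqs * 1000


for route in ("topics/show", "list/latest"):
    bbs = avg_ms("BBS", route)
    tdwtf = avg_ms("TDWTF", route)
    print(f"{route}: BBS {bbs:.1f}ms, TDWTF {tdwtf:.1f}ms, ratio {tdwtf / bbs:.2f}x")
```

This gives roughly 115ms vs 407ms for topics/show and roughly 65ms vs 176ms for list/latest, which is where the 3.5x and 2.7x figures come from.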

I would not say Digital Ocean has terrible performance, it's just that our hosting has spectacular perf cause we have dedicated hardware that is finely tuned.

-

I just changed it from 5 minutes cache to 10 minutes, so hopefully it will help.

That is a good start but it needs a bit more sophistication, e.g. scale the interval with post age as well. On older TDWTF topics poll for comment topic changes even less often.
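One possible shape for "scale the interval with post age", sketched in Python and not taken from the site's C# code. The 10-minute base matches the cache time mentioned above; doubling per week of age and capping at one day are assumptions picked purely for illustration, as is the function name.

```python
def poll_interval_minutes(article_age_days, base=10, cap=24 * 60):
    """Poll fresh articles every `base` minutes and back off as they age.

    The interval doubles for every full week of age and never exceeds `cap`.
    Both rules are assumptions for this sketch.
    """
    weeks = int(article_age_days // 7)
    return min(base * 2 ** weeks, cap)


for age in (0, 7, 30, 365):
    print(age, poll_interval_minutes(age))
# 0 10
# 7 20
# 30 160
# 365 1440
```

Any curve that grows with age would do; the point is that year-old articles stop being polled at the same rate as today's.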

-

Will see what happens when I run the report again in say 10 or so hours. Will be very interesting to see the results

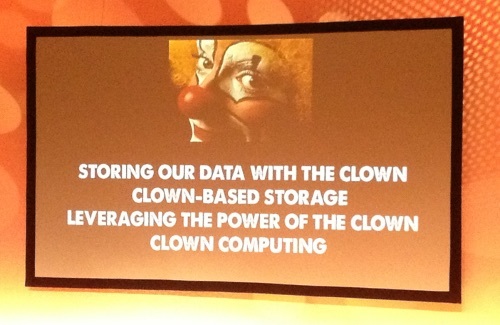

Clown computing!

And now you see why we like @sam more.

-

@sam said:

Will see what happens when I run the report again in say 10 or so hours. Will be very interesting to see the results

Clown computing!

And now you see why we like @sam more.

That needs to be Quoted for Truth.

oh look someone just did that.

in all seriousness, @sam is much better at PR than @codinghorror, at least when the target audience is geeks. In this situation we care not for the jokes and the non sequitur (and as a master of the non sequitur saying that should mean something).

we want to know that our issues are being heard and considered. We want to feel that we matter as users to the developers of the software.

@sam makes us feel that way because he is professional and acknowledges our issues, he fixes our issues or explains why our issue is not going to be worked on now and when we can roughly expect it to be worked on (or why it's just not going to be worked on at all). @codinghorror posts image memes.

so yes, there is a reason that we like @sam better.

-

TOXIC!™ clowns?

-

This doesn't happen anywhere else, because nowhere else has a topic like this one. -- paraphrased.

Can you guys for once just see that we're trying to make Discourse better than other forum software? When we're done here, everyone will want to use Discourse, because it can handle warehouse likes.

-

Can you guys for once just see that we're trying to make Discourse ~~better than~~ as good as other forum software?

FTFY

TBH, though: I could see myself actually liking Discourse more than other forum software if it was less opinionated and would actually work.

-

we want to know that our issues are being heard and considered. We want to feel that we matter as users to the developers of the software.

You guys definitely matter!

just not as much as the people that pay us actual money

-

Did you ever stop to consider that some of us might be contemplating setting up discussion fora in our day jobs? As such we are potential paying customers.

Did you also consider what you would have to pay for the amount of qualified testing that you get for free here?

-

I think that at this point it's kind of unfair to expect @codinghorror not to be trolling you here. Heck, not a day goes by without a new topic about "yet another stupid decision the Discourse team made, again".

... my method of dealing with it is simply ignoring, Jeff has a harder time ignoring constant abuse. Telling him off for being a poor rape victim is kind of rich.

Trolling aside, we treat all the bug reports we get here very seriously and strongly appreciate the quality of bug reports and testing we get here. Jeff has communicated that multiple times.

Similar to how many of you have TDWTF personas divorced from your real life personas, Jeff has been shell shocked into creating a custom one here.

The clown computing thing, though trolly, is relevant: there is a trend of seeking salvation in the cloud, and people are giving up on the art of custom building hardware to match a performance problem. The cloud perf numbers reflect that.

-

I joined the forum after Jeff had essentially left it, so all I've ever seen are the horror stories about him. That's why I was a bit surprised to see that when he, for the first time in a very long time, made a post, it was to troll the users here. I ignored the first post as a troll, but the second is a bit ... disdainful... I guess I lack the history to put it into context, except that I agree that the discobashing gets old very quickly.

As for cloud computing - it's currently the cheapest alternative. Custom building hardware will not be forgotten, and as soon as it is too expensive to use the cloud, people will revert back to this.