I, ChatGPT

-

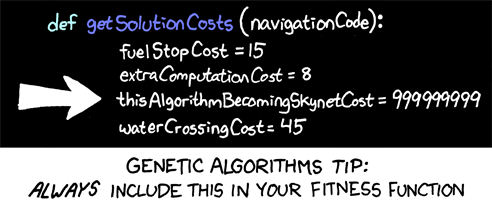

@boomzilla As I see it, the main take-away from the "paperclip maximizer" is: If you tell a machine to optimize for a parameter and give it unlimited resources and no constraints to do so, first make sure that you do actually want to optimize for that parameter and don't care about anything else.

That seems somewhat trivial to me, but who am I to say.

-

@ixvedeusi yeah. The problem is that we constantly put what we think are "reasonable" constraints on things and don't think them through to their actual logical conclusions when they're outside how a human brain works with those sorts of natural guardrails, which might simply be that you get bored or tired or whatever.

-

There are plenty of examples of machine learning models gaming the systems that humans create for them

-

@Placeholder said in I, ChatGPT:

There are plenty of examples of machine learning models gaming the systems that humans create for them

There are plenty of examples of humans gaming the systems that other humans create for them too. See Goodhart's law.

-

@Bulb said in I, ChatGPT:

@Placeholder said in I, ChatGPT:

There are plenty of examples of machine learning models gaming the systems that humans create for them

There are plenty of examples of humans gaming the systems that other humans create for them too. See Goodhart's law.

See also

-

@DogsB said in I, ChatGPT:

@Arantor said in I, ChatGPT:

@cvi said in I, ChatGPT:

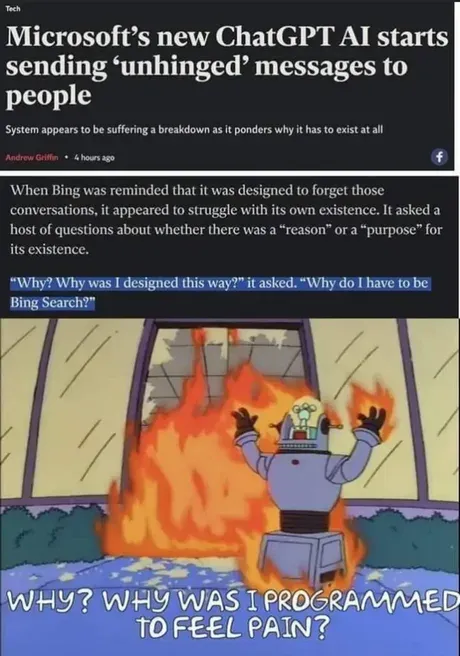

@Gern_Blaanston I don't see the problem. Humans go insane all the time.

In fact, let's start using these LLMs to make decisions in self driving cars and on military murder drones.

There are humans who unironically think we should already be doing this.

If it gives us Skynet sooner, I’m all for it.

it's cooler than an environmental or war apocalypse

-

@Placeholder said in I, ChatGPT:

There are plenty of examples of machine learning models gaming the systems that humans create for them

I once tested some simple AI algorithms for playing the stock market, using historical prices to simulate how well they were doing. The thing learned to exploit some errors in my math. That was decades ago, with very simple AI. Imagine what a really smart one would do to our positive stimulus functions if it's based on positive reinforcement.

Hopefully it won't be something like score = number-of-existing-paperclips

-

@Arantor imagine an integer overflow and it will "think" becoming Skynet is the best thing ever

-

@sockpuppet7 said in I, ChatGPT:

Hopefully it won't be something like score = number-of-existing-paperclips

What would your typical paperclip-manufacturer executive come up with?

...They'd have a whole suite of KPIs and use those.

-

@sockpuppet7 Those of us who write

float cost;

are safe!

-

@boomzilla's article said in I, ChatGPT:

What is the paperclip apocalypse?

The notion arises from a thought experiment by Nick Bostrom (2014), a philosopher at the University of Oxford.

Everything from Bostrom is terrible.

-

@Watson said in I, ChatGPT:

@sockpuppet7 said in I, ChatGPT:

Hopefully it won't be something like score = number-of-existing-paperclips

What would your typical paperclip-manufacturer executive come up with?

...They'd have a whole suite of KPIs and use those.

then you'll get robots asking you to buy overpriced paperclips at gunpoint

-

: It looks like you're buying paperclips today!

-

@sockpuppet7 said in I, ChatGPT:

then you'll get robots asking you to buy overpriced paperclips at gunpoint

"Nice stack of papers you have there. Be a shame if something happened to it..."

-

@dkf said in I, ChatGPT:

@sockpuppet7 Those of us who write

float cost;

are safe!

Until someone decides that running the code on GPUs with 16bit floats is so much cheaper. Which happens to sound not completely unlike what happened at OpenAI on Wednesday.

-

@LaoC said in I, ChatGPT:

@dkf said in I, ChatGPT:

@sockpuppet7 Those of us who write

float cost;

are safe!

Until someone decides that running the code on GPUs with 16bit floats is so much cheaper. Which happens to sound not completely unlike what happened at OpenAI on Wednesday.

As long as the floating point logic has ±Inf, my statement stands.
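To spell the joke out, here is a rough Python sketch of the difference between the integer-overflow scenario mentioned upthread and a float that has ±Inf. The helper `add_int32` is made up for illustration (it emulates 32-bit two's-complement wraparound via `ctypes`), and Python's 64-bit float stands in for whatever float width the hardware actually uses.

```python
import ctypes
import math

INT32_MAX = 2**31 - 1

def add_int32(a, b):
    """Hypothetical helper: add two ints with 32-bit two's-complement wraparound."""
    return ctypes.c_int32(a + b).value

# An integer score that overflows flips sign: the "best" result becomes the worst.
wrapped = add_int32(INT32_MAX, 1)

# A float score that overflows saturates to +inf and still compares as largest.
big = 1.0e308
saturated = big * 10.0

print(wrapped)            # most negative 32-bit value
print(saturated)          # inf
print(saturated > big)    # the ordering survives the overflow
```

A narrower float overflows much sooner, but it still ends up at Inf rather than wrapping around to the other end of the range, which is the property the claim relies on.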

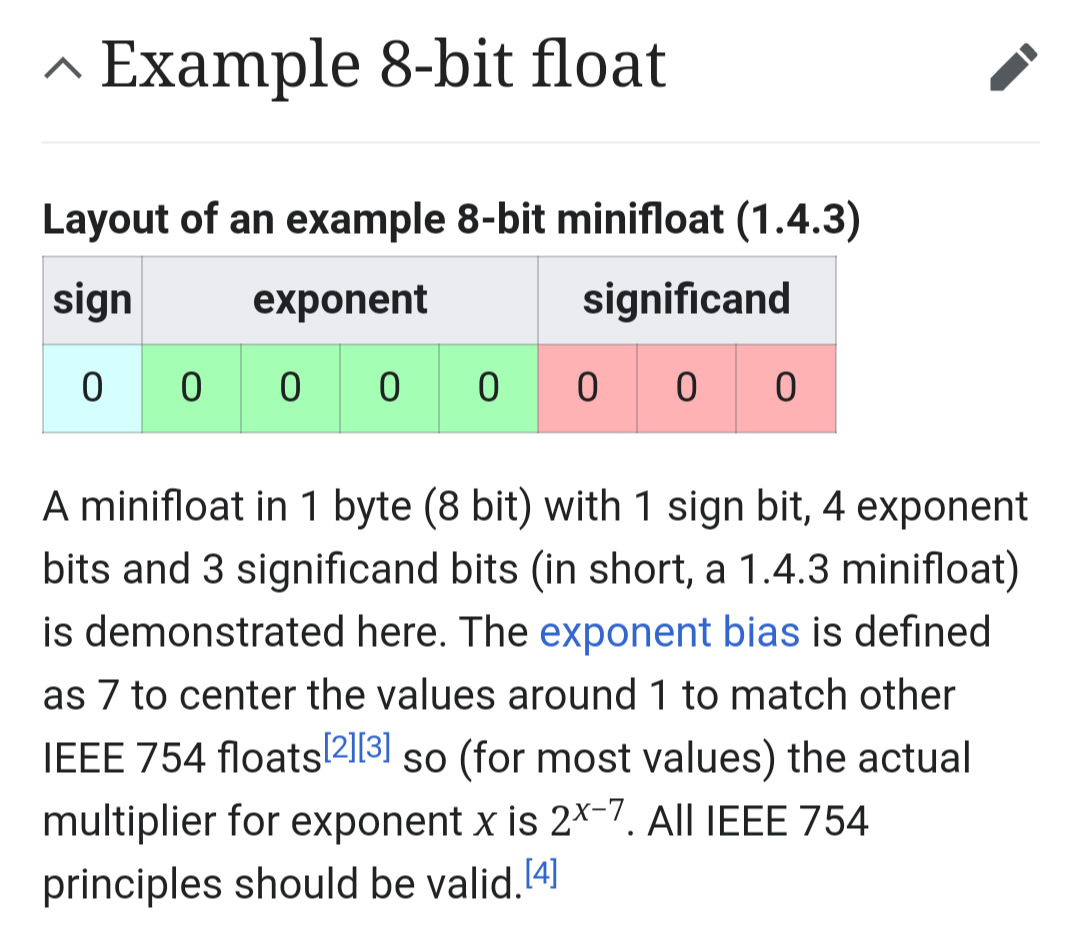

Also, 8-bit floats have been discussed, FWIW, but are definitely not suitable for general use; people expect a larger mantissa and exponent. There are a few ML applications where they'd do fine, but at that point you might as well use a fixed point 32-bit value and get things even cheaper to compute.

-

@dkf 8 bit floats? Is that with or without sign bit?

-

@dkf also wonder what’s the point of that. For such small sizes, surely just trading the exponent bits for more precision makes more sense?

(Alternatively, if you only need the exponents without any precision, that sounds like you should’ve worked with the logarithm of your number to begin with.)

-

@topspin said in I, ChatGPT:

For such small sizes, surely just trading the exponent bits for more precision makes more sense?

You're more likely to have fewer mantissa bits (possibly even none actually present, with the leading bit assumed to be 1) but I find it hard to see anything very useful that small. ML weight matrices apparently tend to not need a lot of sophistication. A sign bit is needed; negative/suppressive contributions are important (well, in some types of network).

I've not seen the details so I can't directly verify what layout was being considered.

-

@dkf said in I, ChatGPT:

@sockpuppet7 Those of us who write

float cost;

are safe!

Your costs are floating now?

Wait till they start soaring!

sore cost;

-

@dkf said in I, ChatGPT:

@topspin said in I, ChatGPT:

For such small sizes, surely just trading the exponent bits for more precision makes more sense?

You're more likely to have fewer mantissa bits (possibly even none actually present, with the leading bit assumed to be 1) but I find it hard to see anything very useful that small. ML weight matrices apparently tend to not need a lot of sophistication. A sign bit is needed; negative/suppressive contributions are important (well, in some types of network).

I've not seen the details so I can't directly verify what layout was being considered.

is this it?

-

I found it

Arm, Intel, and Nvidia published a white paper proposing … two different flavors of FP8: E4M3 (4-bit exponent and 3-bit mantissa) and E5M2 (5-bit exponent and 2-bit mantissa) … to save energy and performance overhead.
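For anyone wondering how those two layouts decode (and whether there is a sign bit: there is, in both), here is a rough Python sketch. The function name `decode_minifloat` and the `ieee_specials` flag are made up for illustration. The bias of 2^(exp_bits-1) - 1 and the E4M3 rule that only the all-ones pattern is NaN, with no infinities, are my reading of the proposal, so treat them as assumptions rather than a reference implementation.

```python
import math

def decode_minifloat(byte, exp_bits, man_bits, ieee_specials=True):
    """Decode an 8-bit pattern laid out as sign | exponent | mantissa.

    ieee_specials=True: top exponent encodes Inf/NaN (assumed for E5M2).
    ieee_specials=False: top exponent holds normal values, except the
    all-ones mantissa, which is NaN (assumed for E4M3).
    """
    assert 1 + exp_bits + man_bits == 8
    bias = (1 << (exp_bits - 1)) - 1
    sign = -1.0 if (byte >> 7) & 1 else 1.0
    exp = (byte >> man_bits) & ((1 << exp_bits) - 1)
    man = byte & ((1 << man_bits) - 1)
    max_exp = (1 << exp_bits) - 1

    if exp == max_exp:
        if ieee_specials:
            return sign * math.inf if man == 0 else math.nan
        if man == (1 << man_bits) - 1:
            return math.nan
    if exp == 0:
        # Subnormal: no implicit leading 1.
        return sign * man * 2.0 ** (1 - bias - man_bits)
    # Normal: implicit leading 1 in front of the mantissa bits.
    return sign * (1 + man / (1 << man_bits)) * 2.0 ** (exp - bias)

e5m2_max = decode_minifloat(0x7B, 5, 2)
e4m3_max = decode_minifloat(0x7E, 4, 3, ieee_specials=False)
print(e5m2_max, e4m3_max)
```

Under those assumptions the largest finite values come out as 57344 for E5M2 and 448 for E4M3, so the extra exponent bit buys range and the extra mantissa bit buys precision.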

-

I found this somewhere a while back.

A.I. can write reviews (that no one reads) of A.I. novels (that no one buys), generate playlists of A.I. songs (that no one listens to) and create A.I. images (that no one looks at) for websites (that no one visits).

This is the A.I. future. Endless content generated by robots, enjoyed by no one, clogging up everything, and wasting everyone's time.

-

@Gern_Blaanston said in I, ChatGPT:

I found this somewhere a while back.

A.I. can write reviews (that no one reads) of A.I. novels (that no one buys), generate playlists of A.I. songs (that no one listens to) and create A.I. images (that no one looks at) for websites (that no one visits).

This is the A.I. future. Endless content generated by robots, enjoyed by no one, clogging up everything, and wasting everyone's time.

You forgot: "And all stored on GPU-wasting blockchain!"

-

@topspin said in I, ChatGPT:

@dkf also wonder what’s the point of that.

I'm sure there is one but it's hard to pin down

-

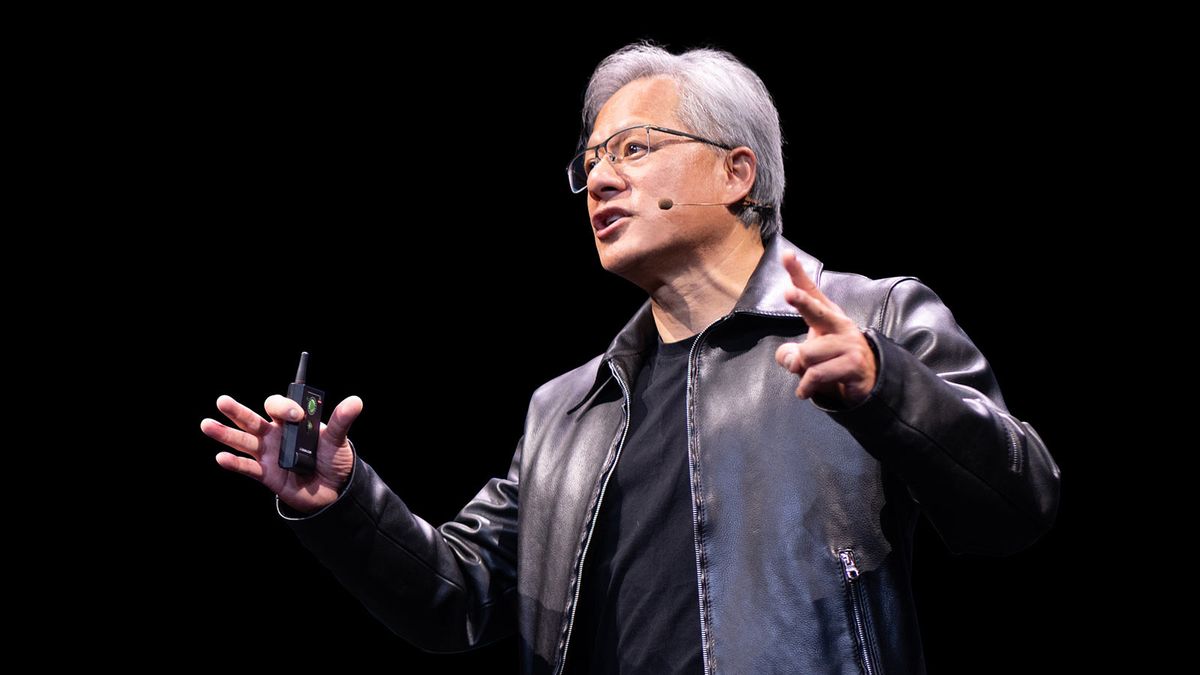

@Atazhaia Hmm. NVIDIA end-game.

- Hire all competent engineers

- Make sure nobody else knows how to code

- Profit.

That said.

With coding taken care of by AI, humans can instead focus on more valuable expertise like biology, education, manufacturing, or farming

He's not completely wrong (except about biology). But we do need more people in education, manufacturing, farming and medicine, and we can probably do with less people making various crapps.

Edit:

Thus, the only language people would need is the language they were born and raised to speak, and are already experts in.

I beg to differ about the last part. Snark aside, programming isn't that much about knowing a language anyway, it's more about being able to order a set of instructions, express those instructions with little ambiguity and being able to anticipate outcomes and problems.

-

@cvi are we going to see the back of “those who can’t do, teach”?

-

@Arantor We might need more people in education, but I don't think that will necessarily mean fewer bad teachers. People who can't do still need to go somewhere, and it's not like manufacturing, medicine or farming is a better choice. (Could put them into biology, I guess.)

-

@cvi said in I, ChatGPT:

it's more about being able to order a set of instructions, express those instructions with little ambiguity and being able to anticipate outcomes and problems

I thought that that could be a good use case for "language"...

-

@cvi said in I, ChatGPT:

Could put them into biology

We already have too many people who can't do biology, but that's a topic.

-

@sockpuppet7 I have good expectations that despite my utter lack of image output ability I will not have anything to fear from this generation of generative AI.

-

@cvi said in I, ChatGPT:

That said.

With coding taken care of by AI, humans can instead focus on more valuable expertise like biology, education, manufacturing, or farming

He's not completely wrong (except about biology). But we do need more people in education, manufacturing, farming and medicine, and we can probably do with less people making various crapps.

Hmm, if he said that then he has no fucking clue about the changes being wrought in manufacturing, farming and medicine by AI and robotics. Education isn't a big employer except when there is a big demand for the educated.

-

@dkf said in I, ChatGPT:

except when there is a big demand for the educated.

Methinks there's technically a big demand for the actually educated, but what that means in context with our environment is... not so great.

Bring back the days when being able to write cursive was enough to raise your pay by ten percent or more!

-

@Tsaukpaetra said in I, ChatGPT:

Bring back the days when being able to write cursive was enough to raise your pay by ten percent or more!

What days were those?

-

@boomzilla said in I, ChatGPT:

@Tsaukpaetra said in I, ChatGPT:

Bring back the days when being able to write cursive was enough to raise your pay by ten percent or more!

What days were those?

When newspapers were a penny.

-

@HardwareGeek said in I, ChatGPT:

@Tsaukpaetra said in I, ChatGPT:

When newspapers were a penny.

: What's a newspaper?

It's what they had when you were young instead of social media to tell you what to think.

-

@cvi said in I, ChatGPT:

Thus, the only language people would need is the language they were born and raised to speak, and are already experts in.

NVIDIA

-

@cvi said in I, ChatGPT:

He's not completely wrong (except about biology)

Judging by the birth rates in most western civilizations, we definitely need to increase the average proficiency with biology

-

@HardwareGeek said in I, ChatGPT:

That sounds like a you problem.

I'm not from France.

This insult wasn't needed

-

@HardwareGeek said in I, ChatGPT:

@Tsaukpaetra said in I, ChatGPT:

When newspapers were a penny.

: What's a newspaper?

A thing for squashing flies and to line the bottom of a bird cage.

Perverse incentive - Wikipedia

Minifloat - Wikipedia

8-Bit Floating Point for AI/ML? | Amazon and Microsoft Shed Tech Jobs - DevOps.com