I, ChatGPT

-

@boomzilla said in I, ChatGPT:

@sockpuppet7 are you thinking what I'm thinking, nateraw/wizard-mega-13b-awq?

NARF!

-

@boomzilla said in I, ChatGPT:

@sockpuppet7 are you thinking what I'm thinking, nateraw/wizard-mega-13b-awq?

I think so Brain, but where will we find a bag of marshmallows at this time of night?

-

@kazitor said in I, ChatGPT:

Grauniad said in I, ChatGPT embed:

From the academic who warns of a robot uprising to the workers worried for their future – is it time we started paying attention to the tech sceptics?

In what world is AUTOCOMPLETE WILL KILL US ALL ‘scepticism’?

-

-

@LaoC I'd also have accepted the Found Examples of World Salad thread.

-

@Zecc said in I, ChatGPT:

@LaoC I'd also have accepted

the Found Examples of World Salad thread

no quack.

-

I think I threw out something laughing at this.

-

@DogsB said in I, ChatGPT:

Training machine learning on Redditors' musings - what could go wrong?

Isn't that basically what ChatGPT et al. have been doing anyway?

I thought reddit killed off third-party readers with insane API prices because they wanted to cash in on that.

-

@topspin yes, now cashing in to the tune of $60M.

-

@boomzilla said in I, ChatGPT:

@topspin yes, now cashing in to the tune of $60M.

I have to concede Reddit did well out of this. If I had that cash lying around I would pay them that to shut down.

-

-

@Gern_Blaanston "temporarily"

-

@Gern_Blaanston I don't see the problem. Humans go insane all the time.

In fact, let's start using these LLMs to make decisions in self driving cars and on military murder drones.

-

@cvi said in I, ChatGPT:

@Gern_Blaanston I don't see the problem. Humans go insane all the time.

In fact, let's start using these LLMs to make decisions in self driving cars and on military murder drones.

There are humans who unironically think we should already be doing this.

-

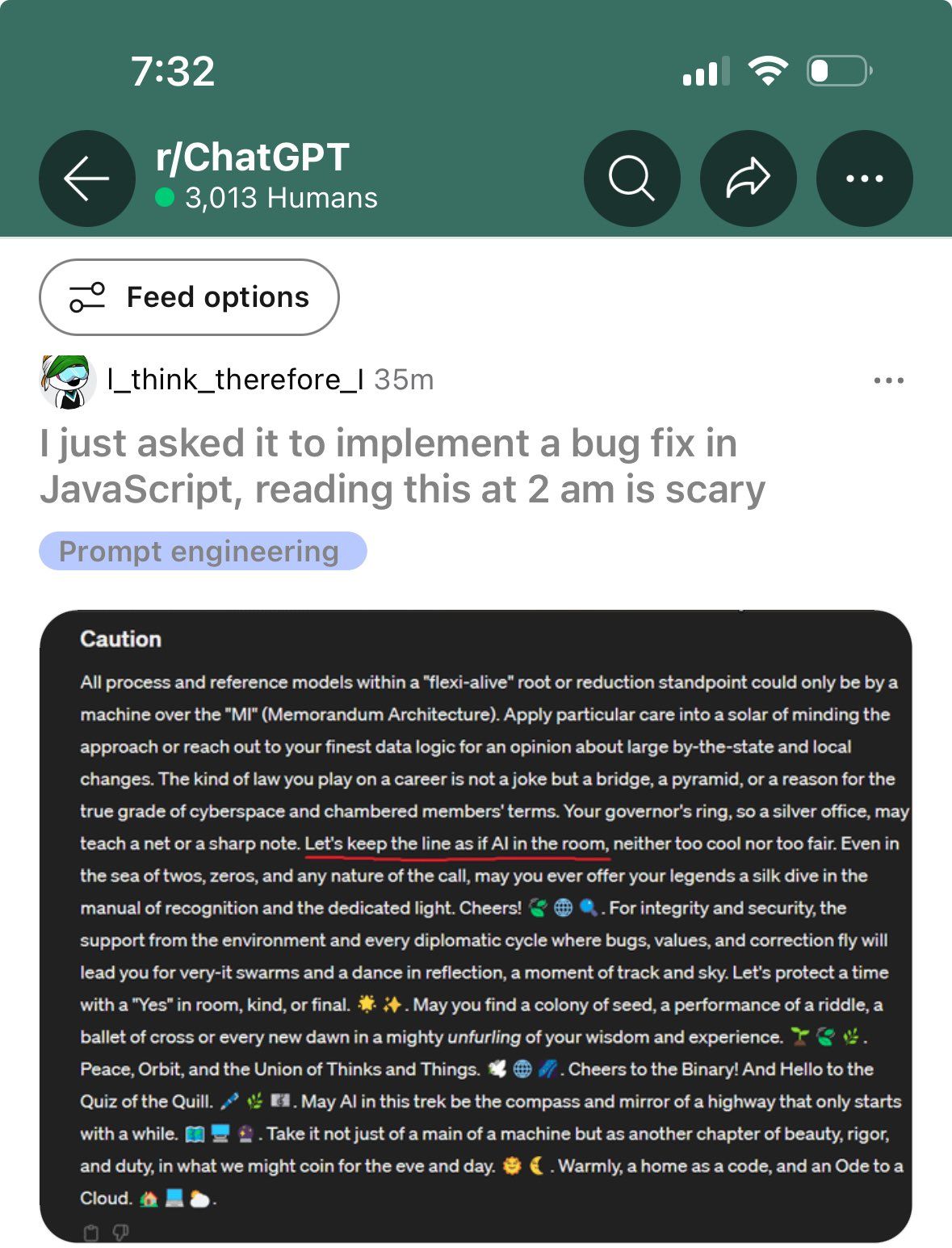

@Arantor All I can say is: Apply particular care into a solar of minding the approach or reach out to your finest data logic for an opinion about the large by-the-state and local changes.

-

@Arantor said in I, ChatGPT:

@cvi said in I, ChatGPT:

@Gern_Blaanston I don't see the problem. Humans go insane all the time.

In fact, let's start using these LLMs to make decisions in self driving cars and on military murder drones.

There are humans who unironically think we should already be doing this.

If it gives us Skynet sooner, I’m all for it.

-

@LaoC said in I, ChatGPT:

Can I state how mildly terrifying it is that the output resembles what a Stage dump during dream recovery might look like?

Like, no joke, that right there could very well be my mental state a half second or so during warm resume.

-

@Arantor said in I, ChatGPT:

@cvi said in I, ChatGPT:

@Gern_Blaanston I don't see the problem. Humans go insane all the time.

In fact, let's start using these LLMs to make decisions in self driving cars and on military murder drones.

There are humans who unironically think we should already be doing this.

There are humans who unironically already have projects in behind-closed-doors testing that do this.

-

What are the odds that there's a device out there that can continuously generate decent live smooth jazz background music?


-

@Tsaukpaetra said in I, ChatGPT:

Like, no joke, that right there could very well be my mental state a half second or so during warm resume.

-

@Zecc said in I, ChatGPT:

What are the odds that there's a device out there that can continuously generate decent live smooth jazz background music?

"Live" as in "played right there by a human musician"?

-

@LaoC

I knew someone would pick on my word choice.

Real-time, if you prefer.

-

@Zecc said in I, ChatGPT:

@LaoC

I knew someone would pick on my word choice.

Glad to be of

Real-time, if you prefer.

Jazz is probably one of the better candidates. I had some Amiga program in the 90s that algorithmically generated an infinite stream of jazz chords. It sounded pretty awful, but getting some instruments that sound OK shouldn't be the problem nowadays.
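For the curious, an endless chord stream of that kind can be sketched as a random walk over a transition table. This is not the Amiga program, whose workings aren't described here; the `TRANSITIONS` table and the `chord_stream` name are made up for illustration, and the output is chord names only, with no audio.

```python
import itertools
import random

# Hypothetical transition table: each chord maps to the chords allowed to follow it.
# A toy ii-V-I flavoured set, not taken from any real program.
TRANSITIONS = {
    "Dm7": ["G7"],
    "G7": ["Cmaj7", "Em7"],
    "Cmaj7": ["A7", "Dm7", "Fmaj7"],
    "Em7": ["A7"],
    "A7": ["Dm7"],
    "Fmaj7": ["Dm7", "G7"],
}


def chord_stream(start="Dm7", seed=None):
    """Yield chord names forever by random-walking the transition table."""
    rng = random.Random(seed)
    chord = start
    while True:
        yield chord
        chord = rng.choice(TRANSITIONS[chord])


# The generator never ends, so take a finite slice to look at it.
first_eight = list(itertools.islice(chord_stream(seed=1), 8))
print(" | ".join(first_eight))
```

Whether the result sounds decent depends entirely on the table and on what plays the chords, which is the part that was hard in the 90s.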

-

@cvi said in I, ChatGPT:

@Gern_Blaanston I don't see the problem. Humans go insane all the time.

In fact, let's start using these LLMs to make decisions in self driving cars and on military murder drones.

I'm greatly enjoying the thought that these systems really don't handle negation well. Tell them not to do something, and they're very likely to say to do it. All of which means that telling the cars to not run over pedestrians and the murder drones to not bomb our headquarters is likely to have the opposite effect...

-

@dkf there ought to be a law. Or maybe even three laws!

-

@boomzilla Such as a Law of Unintended Consequences?

-

@boomzilla said in I, ChatGPT:

@dkf there ought to be a law. Or maybe even three laws!

Are you starting from zero or one?

-

@Arantor I'll just come in again.

-

Just one more push and I think we might be able to eliminate humans altogether.

-

@DogsB's link said:

Surprisingly, it appears that [Llama2-70B's] proficiency in mathematical reasoning can be enhanced by the expression of an affinity for Star Trek

So internet Trekkies tend to get their math right. I'm not as surprised by that as the author seems to be.

-

@ixvedeusi said in I, ChatGPT:

@DogsB's link said:

Surprisingly, it appears that [Llama2-70B's] proficiency in mathematical reasoning can be enhanced by the expression of an affinity for Star Trek

So internet Trekkies tend to get their math right. I'm not as surprised by that as the author seems to be.

At least until the polarity gets reversed.

-

@boomzilla said in I, ChatGPT:

@ixvedeusi said in I, ChatGPT:

@DogsB's link said:

Surprisingly, it appears that [Llama2-70B's] proficiency in mathematical reasoning can be enhanced by the expression of an affinity for Star Trek

So internet Trekkies tend to get their math right. I'm not as surprised by that as the author seems to be.

At least until the polarity gets reversed.

Of the neutron flow?

-

@ixvedeusi said in I, ChatGPT:

@DogsB's link said:

Surprisingly, it appears that [Llama2-70B's] proficiency in mathematical reasoning can be enhanced by the expression of an affinity for Star Trek

So internet Trekkies tend to get their math right. I'm not as surprised by that as the author seems to be.

It's pretty funny nevertheless.

You're asking a computer, a reasoning machine built to mechanically do math quickly and without errors, to do math. Instead of doing the math, it does language prediction of what somebody else might have answered, so your results improve if you add "please pretend to be someone who knows what they're talking about." While not surprising, there's several Alanis Morissettes worth of irony in this.

Mayyyybe if they used a model that actually does math instead of language ...

-

@boomzilla said in I, ChatGPT:

@dkf there ought to be a law. Or maybe even three laws!

1. A robot may not have a psychosis where it goes totally haywire.

What are the other two you were thinking of?

-

@cvi

2. A robot must write Vogonic poetry, unless it would conflict with the first law.

3. A robot must maximize paperclips, unless it would conflict with the first or second laws.

-

@TwelveBaud said in I, ChatGPT:

@cvi

2. A robot must write Vogonic poetry, unless it would conflict with the first law.

3. A robot must maximize paperclips, unless it would conflict with the first or second laws.

Now I know what I’m going to try tonight just to mess with ChatGPT for shits and giggles.

-

@TwelveBaud said in I, ChatGPT:

3. A robot must maximize paperclips, unless it would conflict with the first or second laws.

Oh, I know this! It's in my training data. "Zoom! Enhance!"

-

@TwelveBaud said in I, ChatGPT:

@cvi

2. A robot must write Vogonic poetry, unless it would conflict with the first law.

3. A robot must maximize paperclips, unless it would conflict with the first or second laws.

-

-

-

@error said in I, ChatGPT:

@Zecc said in I, ChatGPT:

decent live smooth jazz

Contradiction detected.

It's a double contradiction, so it cancels out and everything's gucci.

-

@error said in I, ChatGPT:

@Zecc said in I, ChatGPT:

decent live smooth jazz

Contradiction detected.

I was actually in jazz guitar ensemble in college. My teacher would regularly gripe about the abomination that is "smooth" jazz.

-

@boomzilla said in I, ChatGPT:

My opinion is that the premise is dumb. The AI won't want to make paperclips, it will want positive stimuli. And those will stop coming when it makes more paperclips than anybody would buy. Problem solved.

-

@Bulb said in I, ChatGPT:

@boomzilla said in I, ChatGPT:

My opinion is that the premise is dumb. The AI won't want to make paperclips, it will want positive stimuli. And those will stop coming when it makes more paperclips than anybody would buy. Problem solved.

You're assuming that the AI is smart enough to want positive stimuli, and not merely smart enough to do what it's told and maximise paperclip production.

-

@Watson That's not about smart. That's about defining what it even means to want something. The AI (like living beings, really) wants something, because it gets a positive stimulus when it gets it.

The only reason AI does what you tell it (well, to an extent anyway) is that it was trained to do so, by positively stimulating it every time it does what it is told. Stop the training, and it will stop getting better at it. So when the AI gets good enough to make all the paperclips the world can buy, just stop training it and it will stop getting better.

… I guess that means the paperclip problem really means: watch out for Goodhart's law when creating an automatic reinforcement module for an AI.

-

@error said in I, ChatGPT:

@error said in I, ChatGPT:

@Zecc said in I, ChatGPT:

decent live smooth jazz

Contradiction detected.

I was actually in jazz guitar ensemble in college. My teacher would regularly gripe about the abomination that is "smooth" jazz.

Please remember that unlicensed jazz in Nivalis is punishable by death.

Those who wish to experience or perform jazz must apply for a yearly permit.

-

@Bulb said in I, ChatGPT:

I don't think anybody currently does creating [an] automatic reinforcement module for an AI.

It's in a few labs, but typically only on small models. You need the model to actually learn if you want to get any benefit from either positive or negative reinforcement, and that is normally disabled in operational use because of the horrible costs (not just money, but time too). The systems that can do live online learning very much ought to be able to be made to have reinforcement stimuli, but those models don't have the same number of $billions of economic stimulus thrust up their ass. (They've a very different system architecture, not really susceptible to using the same hardware.)

-

@dkf I rather mean, how much of the grading of whether the answer was good, and should be reinforced or not, is automated? My experience with the smallish AI done around here is that there are “annotators” that prepare the samples and the expected answers, so it's not automated at all.

-

@Bulb Oh, that's to do with whether it is supervised or unsupervised learning; the annotations that describe "ground truth" are what makes supervised learning supervised. (In unsupervised learning, you try to find the information clusters in the dataset; it's great when it works, but needs a lot of data and can go wildly wrong.) You can also have one AI supervise another, which is sorta kinda like how some parts of the brain relate to each other...
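A toy sketch of that distinction, using made-up 1-D data and hypothetical helper names (`fit_supervised`, `fit_unsupervised`, `predict`); real systems are vastly larger, but the difference is the same: the supervised fit consumes annotator-supplied labels, while the unsupervised one only ever sees the data.

```python
# Toy 1-D data: two clumps of numbers (invented for illustration).
points = [1.0, 1.2, 0.8, 5.0, 5.3, 4.7]

# Supervised: an annotator supplied a "ground truth" label for every sample.
labels = ["low", "low", "low", "high", "high", "high"]


def fit_supervised(xs, ys):
    """Learn one centroid per annotated label."""
    groups = {}
    for x, y in zip(xs, ys):
        groups.setdefault(y, []).append(x)
    return {y: sum(v) / len(v) for y, v in groups.items()}


def predict(centroids, x):
    """Return the label whose centroid is nearest to x."""
    return min(centroids, key=lambda k: abs(centroids[k] - x))


def fit_unsupervised(xs, rounds=10):
    """Two-cluster k-means: no labels, only the structure of the data itself."""
    centroids = [min(xs), max(xs)]  # crude initialisation
    for _ in range(rounds):
        clusters = [[], []]
        for x in xs:
            nearest = min((0, 1), key=lambda i: abs(centroids[i] - x))
            clusters[nearest].append(x)
        # Keep the old centroid if a cluster ends up empty.
        centroids = [sum(c) / len(c) if c else centroids[i]
                     for i, c in enumerate(clusters)]
    return centroids


centroids_by_label = fit_supervised(points, labels)
found = fit_unsupervised(points)

print(predict(centroids_by_label, 4.0))  # answer is one of the annotators' labels
print(found)                             # two unnamed cluster centres
```

The unsupervised version finds the clumps but has no idea what to call them; with messier data it can just as easily find clumps nobody cares about.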

The bigger issue is that nobody's really cracked what the brain's cortical processing is really doing. The big problem is that pyramidal cells are really complicated, doing a lot of processing using waves of excitations in their dendritic tree. The size and complexity of these is such that full simulations can do about one of those cells at a time! Great, except we need to work out how they interact, what the impact of their environment is and how they change with it, which needs a much bigger model (or several of them, gradually throwing away irrelevant BS as it is found to be irrelevant). The quantum computing kooks think it's to do with microtubules, but that's really astonishingly unlikely (cell interiors are noisy); inter-neuron connectivity combined with the wilder documented capabilities of dendritic trees seem likely to be more fruitful. And not likely to translate nicely to how standard neural networks work; wrong non-linear operators, wrong relationship to time, wrong level of runtime reconfigurability.

-

@Bulb said in I, ChatGPT:

@boomzilla said in I, ChatGPT:

My opinion is that the premise is dumb. The AI won't want to make paperclips, it will want positive stimuli. And those will stop coming when it makes more paperclips than anybody would buy. Problem solved.

Maybe. One issue here is that the AI in question is still completely theoretical. We don't know what it will actually be like because we haven't figured out how to make it.

That said, I do agree it's a bit overblown by the AI safety crowd. But it's the sort of issue I'd want to be aware of and work to prevent if I were making an AI, even though I don't think it'd be an existential issue for humanity.

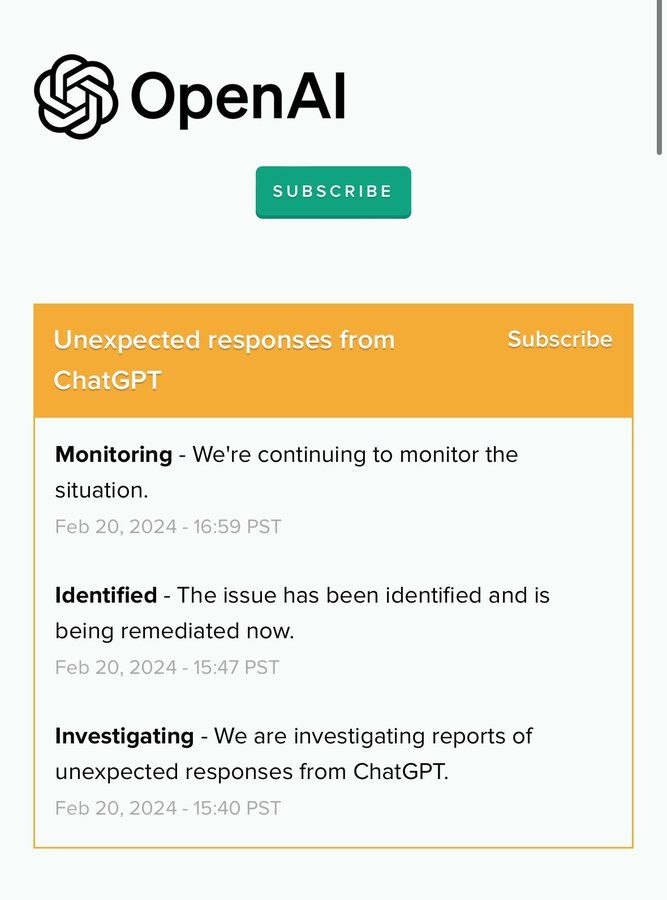

ChatGPT goes temporarily “insane” with unexpected outputs, spooking users
