Common Core math question is Algebra!!!! *gasp*

-

See, I learned this method in elementary school. It makes sense, though the old way seems faster.

No playing with bases, though. WTF was that?

-

Let's make sentient machines, then they can rule us. Problem solved.

Beginning to wonder why that's such a bad thing.

Machines wouldn't make stupid choices, like create an environment where they force everyone to work and yet do nothing to motivate people to do so.

-

So long as we perfect the Three Laws of Robotics, I'm all for it

-

Well, people would make the machines...

And management would call the shots on the machine-making project. Also, it would be a high-profile project, so you would get high-profile management. Plus, it would probably be a project commissioned by a government.

As you know, nothing like this has ever gone wrong. I'm not saying we shouldn't try, just... brace yourselves.

-

It's almost like we need some perfect being that will make decisions for us about the moral, economic, and ethical boundaries we should form.

The problem is that not everyone will like every boundary, and they will all try to deflect, tear down, or ignore the boundaries they don't like, creating a world that's unjust and chaotic.

This would make us want to create some perfect being that will....

-

create an environment where they force everyone to work

That's bound to happen.

yet do nothing to motivate people to do so.

That won't. The motivation will be getting to live.

Of course, they could just kill us all.

-

Isn't this part of the storyline for the Butlerian Jihad (Dune) or something?

[spoiler] Omnius (machine overmind) has humans enslaved to work and routinely murders people, because it can [/spoiler]

-

Isn't this part of the storyline for the Butlerian Jihad (Dune) or something?

Omnius (machine overmind) has humans enslaved to work and routinely murders people, because it can

Sounds familiar …

-

Machines wouldn't make stupid choices, like create an environment where they force everyone to work and yet do nothing to motivate people to do so.

So what sort of stupid choices do you imagine they'd make, then?

-

Neurotoxin facility at dangerously unlethal levels!

-

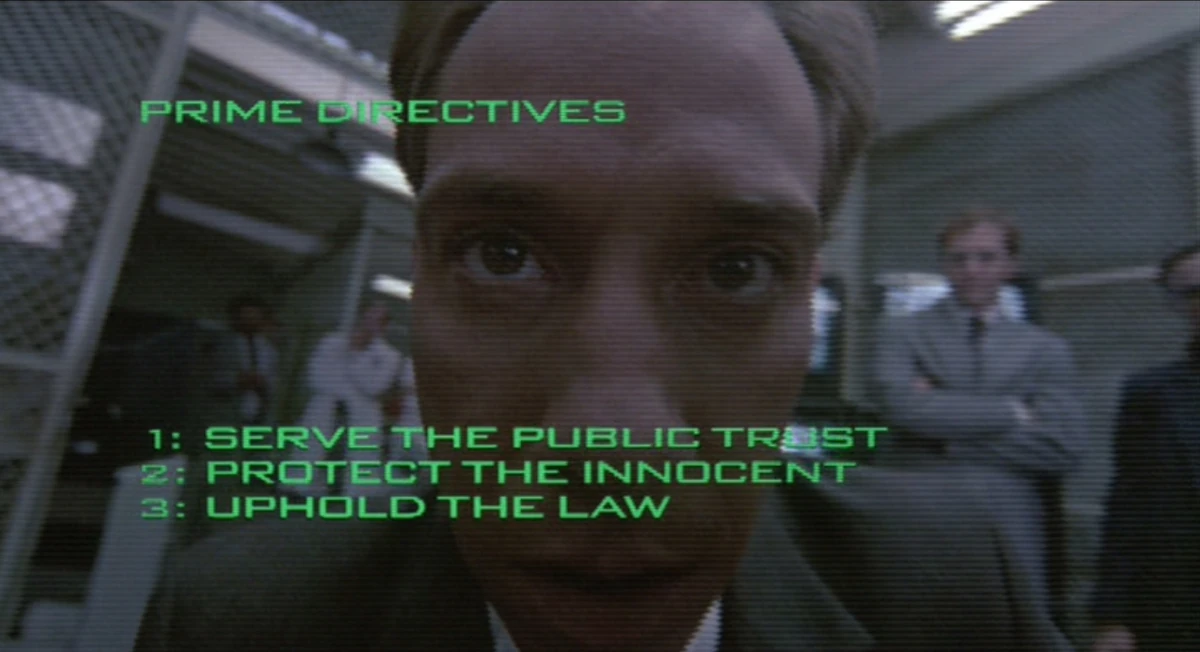

So long as we perfect the Three Laws of Robotics, I'm all for it

Add Prime Directive 4 that mentions me and we have a deal.

-

- A robot may not injure a human being or, through inaction, allow a human being to come to harm.

- A robot must obey orders given it by human beings except where such orders would conflict with the First Law.

- A robot must protect its own existence as long as such protection does not conflict with the First or Second Law.

- A robot must protect @Onyx as long as such protection does not conflict with the First, Second, or Third Law.

-

What's with the whooshing spree on my posts today?

Maybe I should really start flagging instead of just joking about it.

-

Or I was running with the joke.

Like running with scissors, only less pointy

-

Maybe I should really start flagging instead of just joking about it.

That only encourages them.

Filed under: whooshwhoring

-

It's not a law of robotics... and it overrides all the previous ones...

I give up.

-

What's with the whooshing spree on my posts today?

Maybe I should really start flagging instead of just joking about it.

It's a trolling conspiracy.

-

It's not a law of robotics... and it overrides all the previous ones...

It doesn't override the previous ones:

A robot must protect @Onyx as long as such protection does not conflict with the First, Second, or Third Law.

-

It doesn't override the previous ones:

The original reference does. It's not my problem if @RaceProUK didn't get it and/or chose to ignore it.

-

You forgot the zeroth law. R.Daneel is disappoint.

-

That's what you get for forgetting how the laws of robotics work.

Or for not reading your classics. Take your pick.

-

That's what you get for forgetting how the laws of robotics work.

Or for not reading your classics. Take your pick.

*sigh*

I intentionally mixed the laws of robotics with Robocop's Prime Directives, exactly because of the contradiction inherent in it.

And I did read my Asimov like a good boy, TYVM.

-

It's not a law of robotics... and it overrides all the previous ones...

I give up.

So long as we perfect the Three Laws of Robotics, I'm all for it

Add Prime Directive 4 that mentions me and we have a deal.

ISWYDT

-

You forgot the zeroth law. R.Daneel is disappoint.

Could be worse. Wolowitz forgot the Second Law. And nobody corrected him.

http://www.youtube.com/watch?v=BKkEI7q5tug

And Second Law would have settled the matter pretty quickly. Sheldon has on any number of occasions failed to obey human commands under circumstances where First Law wasn't a factor.

-

the Second Law

Is it just me that's always thought it was a little harsh that the second and third laws aren't the other way around?

-

Ah, Big Bang Theory....

Because savants are hilarious!

-

aren't the other way around?

They are objects; the Third Law is just economical.

And it's supposed to play on the ideas of oppression.

-

Is it just me that's always thought it was a little harsh that the second and third laws aren't the other way around?

It is harsh, but there is a way around it: if the robot can reason that destroying itself would somehow mean contradicting the First Law, then it would be able to override the Second Law and comply with the Third Law ;)

-

the robot can reason that destroying itself would somehow mean contradicting the First Law

Because of the First Law, any robot's first duty is to protect humans; humans are therefore in greater danger if the robot isn't around to protect them when needed, so destroying or incapacitating itself amounts to allowing humans to come to harm?

-

Pretty much, yeah ;)

-

Because savants are hilarious!

One of my favorite MST3K quotes is:

This guy's like an idiot savant-- without the savant!

-

Which goes to show that the laws.... are never enough to be able to predict how an AI will operate.

-

Which goes to show that the laws.... are never enough to be able to predict how an AI will operate

Which is the premise for every story in I, Robot.

-

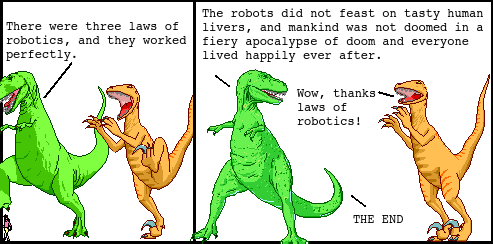

Once upon a time, there were three laws of robotics, and they worked perfectly. All the robots obeyed them, and there was no robot uprising, and the robots did not feast on tasty human livers. As a result, mankind was not doomed in a fiery apocalypse (of doom) and everyone lived happily ever after.

Thanks, laws of robotics!

—The End

-

Liver is never tasty.

They are if you are a robot who is only one law away from devouring all humans.

-

They are if you are a robot who is only one law away from devouring all humans.

Or if you've got some bacon and onions too.

-

The alternative view is that humans just plain suck and the robots are right.

Anything a flawed human creates will predictably have flaws.

-

That's a very theistic view

-

Not this shit again ...

Filed under: @Onyx, the lord of "Not this shit again", Shitlord, These titles not actually related

-

If the goal is peaceful and productive cohabitation, we failed, cause irrelevant.

-

Somehow, this is what immediately came to mind after reading that:

(It needs more plot, e.g., T-Rex proposing the world and then Utahraptor saying that quote to illustrate why it would be a boring world, or something.)

-

That's excellent. I'm going to imagine all my posts are in the form of dinosaur comics.

Actually, I wonder if we could invent a bot which automatically qwantz.coms people's posts for them...

-

Actually, I wonder if we could invent a bot which automatically qwantz.coms people's posts for them...

-

".... through inaction allow a human to come to harm...."

Yep, that's the kicker right there.

There are all kinds of ways we cause harm to ourselves. If a robot took that literally, it would have to put us all in giant, sterile, bubble-wrapped cages.

https://z2photorankmedia-a.akamaihd.net/media/t/2/2/t22m6p3/normal.jpg

-

I found this while looking for comic strip generators. I made my own comic:

-

In one, he admitted I was right, but still refused to give me credit for the exam question.

My wife was in that situation in college. She went to the department chair, who called the prof in and explained her bullshit stupid "reasoning", at which point my wife threatened to go call the local newspapers, and the chair told the professor to be less unreasonable.