I, ChatGPT

-

@Arantor said in I, ChatGPT:

@DogsB In 1975 when Bohemian Rhapsody was written, Freddie still had long hair and no ‘tache. The tache doesn’t appear until 1980’s Play The Game.

I feel the world is a little darker now.

-

@DogsB the world darkened in November 1991 for my money.

-

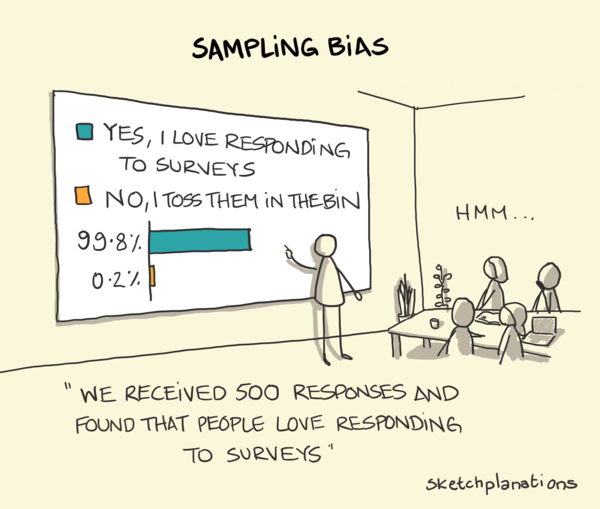

@topspin translation: 92% of people who are the kind of people who’d fill in a GitHub survey are using AI. Specifically, already strong overlaps with the techbro circle in the Venn diagram.

-

@Arantor said in I, ChatGPT:

@topspin translation: 92% of people who are the kind of people who’d fill in a GitHub survey are using AI. Specifically, already strong overlaps with the techbro circle in the Venn diagram.

These are (probably) the same people who just copy/paste code they googled from StackOverflow.

-

OFFS!

-

I heard on a podcast that Google has ordered their employees not to put anything Google related into Bard. They didn’t provide a link and

. Pretty funny if true.

. Pretty funny if true.

-

@izzion not surprised really. It's absorbed a lot of marketing bullshit. It should be good at regurgitating it.

*edit

Christ that spelling was worse than usual.

-

@izzion Because it gets "analyzed" by ~~Natural Stupidity~~ Artificial Intelligence.

-

@DogsB said in I, ChatGPT:

*edit

Christ that spelling was worse than usual.

We understand... in Ireland, it's always 5 o'clock...

-

@Polygeekery I don't have much practical knowledge about fixing cars. So from my perspective, the suggestions were pretty good. Generic, which he also mentions, but still. I figure it'd give me a starting point.

In the end he mentions that GPT is pretty good at programming. That's something I do know, and I kinda disagree. This guy on CACM summarizes one of the problems pretty well:

Here is my experience so far. As a programmer, I know where to go to solve a problem. But I am fallible; I would love to have an assistant who keeps me in check, alerting me to pitfalls and correcting me when I err. An effective pair-programmer. But that is not what I get. Instead, I have the equivalent of a cocky graduate student, smart and widely read, also polite and quick to apologize, but thoroughly, invariably, sloppy and unreliable. I have little use for such supposed help.

If I want it to help solve a problem, I basically have to lead it to the solution every step of the way, staying hyper-vigilant for it messing up and catching and correcting every small mistake.

People suggest that it's still useful for generating boilerplate or as a smarter code completion. I'm on the fence. Scanning through code is as taxing as writing it in the first place and only really faster if I don't have to intervene. If I write the code in the first place, I have a reasonable sense of what it does, and can quickly connect back to that (e.g., when observing a bug or similar).

tl;dr: GPT is at best a generalist know-it-all and at worst a straight-up liar. I wouldn't be surprised if the same happens in other fields. The CACM article suggests that it might do well with translations -- I wouldn't be surprised if experts in that field also take a somewhat dim view of the quality there. (I guess the real question then is... how often are we happy with the lower quality from GPT, especially if it comes very, very cheap?)

-

@cvi said in I, ChatGPT:

I don't have much practical knowledge about fixing cars. So from my perspective, the suggestions were pretty good. Generic, which he also mentions, but still. I figure it'd give me a starting point.

They were not incorrect, which is a start, but they were basically exactly what you'd get from the electronic version of the maintenance manual equipped with a decent search. Which is much simpler.

@cvi said in I, ChatGPT:

In the end he mentions that GPT is pretty good at programming. That's something I do know, and I kinda disagree.

There seems to be something similar to Gell-Mann amnesia at play here: People say that in their field of expertise it's crap, but that it seems better in that other field they have only passing knowledge of. Which is just an artefact of not knowing that field well and therefore not seeing the little mistakes and the many important bits of information it failed to mention.

Speaking of Gell-Mann amnesia, I think the one profession ChatGPT might usefully replace is some spokespeople and technical writers. The kind of people who take the description from someone actually in charge (technically or managerially) and forge it into a press release or some document. They don't understand the matter very well, so the fact ChatGPT doesn't either isn't much of an issue, and ChatGPT is quite good at simply making things stylistically pleasant.

I suppose even there the good writers will still be better—I'm not a good writer myself, so I'm probably also biased towards seeing it as more capable than it really is—but it can replace the worse ones and help in all those cases where the technical (in the broad sense of masters of whatever the main job is) people don't have a competent copywriter at hand to help.

-

@Bulb said in I, ChatGPT:

Speaking of Gell-Mann amnesia, I think the one profession ChatGPT might usefully replace is some spokespeople and technical writers. The kind of people who take the description from someone actually in charge (technically or managerially) and forge it into a press release or some document. They don't understand the matter very well, so the fact ChatGPT doesn't either isn't much of an issue, and ChatGPT is quite good at simply making things stylistically pleasant.

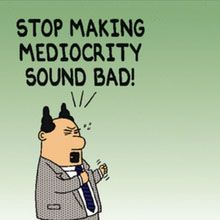

I suppose even there the good writers will still be better—I'm not a good writer myself, so I'm probably also biased towards seeing it as more capable than it really is—but it can replace the worse ones and help in all those cases where the technical (in the broad sense of masters of whatever the main job is) people don't have a competent copywriter at hand to help.

I'm not sure automating semi-incompetence is something we want. We know that managers will choose something that's cheap and mediocre over something that's actually good but more expensive. Making the mediocre option cheaper is just going to make mediocrity even more dominant.

Of course, whether we want it or not is not going to matter in reality, so...

-

@Zerosquare Well, I don't want semi-incompetence. I've long thought that what we need is people who give a shit about doing their job properly. But where semi-incompetence is what we have anyway, I'm not against automating it.

-

@Bulb said in I, ChatGPT:

Which is just an artefact of not knowing that field well and therefore not seeing the little mistakes and the many important bits of information it failed to mention.

https://www.youtube.com/clip/Ugkx4izreqdAjkwTguCydf2yIv2eh2iJ7ZGw

And the reason for linking that clip is below the fold.

*Every* choice made (the type of apple, lard instead of butter, etc.) is wrong for pie-making, and what the lawyer pulls the witness up on is perhaps the *least* of them.

-

@Bulb said in I, ChatGPT:

But where semi-incompetence is what we have anyway, I'm not against automating it.

Forcing the mediocre to drive other mediocrity elsewhere? Interesting idea. Is there a floor limit for that?

-

@Zerosquare said in I, ChatGPT:

Making the mediocre option cheaper is just going to make mediocrity even more dominant.

-

@izzion said in I, ChatGPT:

Okay, all we need now is to train AI to evaluate pitches and put the whole system on a closed loop.

-

And it's entirely possible that the end result would be more rational than the process we have today.

-

@Zerosquare I mean, it's probably safe to say, at this point.

-

@GOG said in I, ChatGPT:

Okay, all we need now is to train AI to evaluate pitches and put the whole system on a closed loop.

So basically a GAN (generative adversarial network)?

Would probably work, but what are we trying to achieve here? The end result would be that everybody has access to the same-quality sales pitch regardless of whether the pitched thing is good or bad...

-

@topspin I think you failed to appreciate the full implication of "closed loop". Nothing gets in, and - crucially - nothing gets out.

-

@Bulb said in I, ChatGPT:

@cvi said in I, ChatGPT:

In the end he mentions that GPT is pretty good at programming. That's something I do know, and I kinda disagree.

There seems to be something similar to Gell-Mann amnesia at play here: People say that in their field of expertise it's crap, but that it seems better in that other field they have only passing knowledge of. Which is just an artefact of not knowing that field well and therefore not seeing the little mistakes and the many important bits of information it failed to mention.

That's not quite the same, though. Well, technically he overestimates its competence in the field he doesn't know about, which is exactly what Gell-Mann amnesia is supposed to be.

But in practical terms, there's a difference: it gives you something like a Level 1 semi-incompetent support drone, but personalized and with immediate access. If you're competent in the field, you know it's not that bright. But if you're not, as I would be in the case of diagnosing a car, the suggestions would still be helpful. It's not so much that you forget how good or not it is when you're asking about other fields, but that the value of a mediocre answer to you depends on your own level of knowledge.

-

India suffered the most compromised accounts (12,632), a tidbit that resonates with previous findings that the subcontinent is a prime target for data theft, thanks to its size and heavy use of infotech.

Could only improve their output, to be honest.

-

To measure whether a machine has achieved ACI, he describes a “modern Turing test” — a new north star for researchers — in which you give an AI $100,000 and see if it can turn the seed investment into $1 million. To do so, the bot must research an e-commerce business opportunity, generate blueprints for a product, find a manufacturer on a site like Alibaba and then sell the item (complete with a written listing description) on Amazon or Walmart.com.

It's of course dumb. If you can create a program (which essentially AI is) that can do this reproducibly, you could reliably turn $100k into $1M. Repeat the process. Infinite monies & goodbye economy. So, given that you can't reproduce this more than a handful of times (at best), it doesn't seem to make a great test.
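Spelling out the "repeat the process" step, purely for illustration and assuming every run reliably multiplies the stake tenfold (the $100k to $1M ratio the test asks for), the pot after n rounds would be:

$$
V_n = \$100{,}000 \times 10^{n}, \qquad V_4 = \$10^{9}\ (\text{one billion}), \qquad V_7 = \$10^{12}\ (\text{one trillion})
$$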

-

@cvi said in I, ChatGPT:

To measure whether a machine has achieved ACI, he describes a “modern Turing test” — a new north star for researchers — in which you give an AI $100,000 and see if it can turn the seed investment into $1 million. To do so, the bot must research an e-commerce business opportunity, generate blueprints for a product, find a manufacturer on a site like Alibaba and then sell the item (complete with a written listing description) on Amazon or Walmart.com.

It's of course dumb. If you can create a program (which essentially AI is) that can do this reproducibly, you could reliably turn $100k into $1M. Repeat the process. Infinite monies & goodbye economy. So, given that you can't reproduce this more than a handful of times (at best), it doesn't seem to make a great test.

Just in case it shouldn't be obvious to someone that such a definition of "intelligence" is idiotic to begin with.

-

@LaoC stronger guardrails for everyone (except us, we’re not a problem, honest)!

-

Saw a phrase in passing that sums up this whole thing rather neatly.

GIGO: Garbage In, Gospel Out

And this is why we can’t have nice things, I guess.

-

I'm shocked, I tell you, SHOCKED!!!1

-

@Arantor said in I, ChatGPT:

GIGO: Garbage In, Gospel Out

Not to be confused with GIGO (Gospel In, Garbage Out), which describes what happens when a church uses a bad sound amplification system.

-

@Arantor Isn't there the somewhat famous quote by Babbage? *google*

On two occasions I have been asked [by members of Parliament], 'Pray, Mr. Babbage, if you put into the machine wrong figures, will the right answers come out?' I am not able rightly to apprehend the kind of confusion of ideas that could provoke such a question.

Well, turns out Babbage didn't anticipate LLMs. Or our slightly more modern definition of "right answers", I suppose.

-

@Zerosquare said in I, ChatGPT:

@Arantor said in I, ChatGPT:

GIGO: Garbage In, Gospel Out

Not to be confused with GIGO (Gospel In, Garbage Out), which describes what happens when a church uses a bad sound amplification system.

for posting bait in an open category

-

@izzion Yeah, disrespecting audiophiles with unnecessarily expensive sound systems is very borderline.

-

@cvi said in I, ChatGPT:

disrespecting audiophiles

Could we sufficiently disrespect them by calling them .mp3s?

-

@topspin said in I, ChatGPT:

Could we sufficiently disrespect them by calling them .mp3s?

Nah. The people who invented MP3 spent years tweaking their algorithms and doing objective measurements to make it sound as good as they could.

Comparing them to audiophiles is an insult to them.

-

there is nothing inherently improper about using a reliable artificial intelligence tool for assistance

Yeah, if a reliable artificial intelligence tool existed.

-

@HardwareGeek you can rely on the current tools for plagiarism and confidently spouted bollocks. Very reliable on this front.

-

@cvi said in I, ChatGPT:

@Arantor Isn't there the somewhat famous quote by Babbage? *google*

On two occasions I have been asked [by members of Parliament], 'Pray, Mr. Babbage, if you put into the machine wrong figures, will the right answers come out?' I am not able rightly to apprehend the kind of confusion of ideas that could provoke such a question.

Well, turns out Babbage didn't anticipate LLMs. Or our slightly more modern definition of "right answers", I suppose.

-

@topspin said in I, ChatGPT:

@cvi said in I, ChatGPT:

disrespecting audiophiles

Could we sufficiently disrespect them by calling them .mp3s?

FLACs.

No real audiophile would ever accept any loss - and mp3 is a lossy codec.

-

@BernieTheBernie said in I, ChatGPT:

@topspin said in I, ChatGPT:

@cvi said in I, ChatGPT:

disrespecting audiophiles

Could we sufficiently disrespect them by calling them .mp3s?

FLACs.

No real audiophile would ever accept any loss - and mp3 is a lossy codec.

Then it’s got to be .wav (with corresponding codec). It’s got a more vibrant, analog sound and FLACs are still compressed.

92% of programmers are using AI tools, says GitHub developer survey

92% of programmers are using AI tools, says GitHub developer survey