I, ChatGPT

-

@Gustav said in I, ChatGPT:

@Bulb said in I, ChatGPT:

@Gustav said in I, ChatGPT:

Alternative headline: pro-obesity activist mad that a robot told her the way to fix her eating disorder is to eat less.

Simple overeating does not qualify as an eating disorder. The most common eating disorders are bulimia and anorexia. And those are literally triggered by telling people they look fat and should eat less. The quotes in the title shouldn't be there. Such a hotline is less than useless. It is indeed literally dangerous.

I mean, yes. But also, that doesn't apply to the particular person the article is about.

Also, TIL NodeBB supports the other link syntax.

As far as I read it, she used to have an eating disorder and is now simply fat, which may not be the healthiest but is way better than before.

You don't tell an ex-alcoholic how red wine is good for your heart and drinking is fine and safe in moderation either.

-

@Gustav said in I, ChatGPT:

WTF Bites thread?

Thank you, GustavGPT, but our post is in another thread.

-

@Applied-Mediocrity, @Gustav But also see

Which basically says that the reporter got it completely wrong. <sarc>Which never has ever happened, so I still trust the reporter. </sarc>

-

@Benjamin-Hall But also, the official misspoke

-

@Benjamin-Hall said in I, ChatGPT:

@Applied-Mediocrity, @Gustav But also see

Which basically says that the reporter got it completely wrong. <sarc>Which never has ever happened, so I still trust the reporter. </sarc>

Specifically, it seems that it was just humans being humans (it was a thought experiment, probably motivated by the same chucklefucks who are losing sleep every night over the thought of Clippy turning them into fellow-travellers - given that this was allegedly "outside the military").

-

@GOG thought experiment is such a misnomer.

-

@Gustav Unfortunately, "lack-of-thought experiment" didn't stick.

-

@GOG said in I, ChatGPT:

@Gustav Unfortunately, "lack-of-thought experiment" didn't stick.

Thought failure is succinct and could possibly catch on.

-

@Carnage said in I, ChatGPT:

@GOG said in I, ChatGPT:

@Gustav Unfortunately, "lack-of-thought experiment" didn't stick.

Thought failure is succinct and could possibly catch on.

It already has; in fact, it's very common.

Oh, you meant the phrase, not actual failure to think?

-

The engine is plumbed directly into the chips' memory subsystem, so it shares a pool of coherent memory with the CPU and integrated GPU, thus eliminating costly data transfers to, again, boost power efficiency and performance.

Translation: This chip has several side-channel vulnerabilities

AMD's demo did have a button to test its onboard AI engine against the online Azure ONNX EP service, but the demo team told us they had encountered issues with the software, so it wasn't working.

-

@GOG said in I, ChatGPT:

"lack-of-thought experiment" didn't stick.

There's the fact that we don't need to experiment with this: we already have too many real-world results to get data from.

-

There is a story that half of the Scots Wikipedia was written by someone who doesn't know Scots, and it was all wrong. I wonder whether GPT speaks correct Scots or Wikipedia Scots. It said:

As an AI language model, it can be tricky for me to generate authentic Scots due to the reasons explained in my previous response. However, I'll attempt a light Scots accent for you:

"As an AI language model, I've been trained on a big range o' internet text. But while I strive tae understand an' generate text based on a wide array o' languages an' dialects, the quality o' ma understanding relies heavy on the data I was trained on. If a significant portion of that data isnae accurate or is misrepresented, it could affect the output I produce.

Regarding yer question aboot Scots language on Wikipedia, ye're referring tae a real event that happened in 2020. A bairn fae America, wha did nae speak Scots, but was enthusiastic aboot the language, made a large number o' edits tae the Scots-language Wikipedia. Unfortunately, much of this effort was misguided, wi' the edits largely consisting of English text altered tae superficially resemble Scots rather than authentic Scots language.

In terms o' the Scots language data in ma training, it's important tae note that the vast majority of ma training data comes fae English sources. However, I hae been trained on a mixture o' languages, including Scots, though this data is significantly smaller in comparison. OpenAI hasnae publicly specified the individual datasets used for training, so I dinnae hae a detailed visibility into which specific documents were part o' ma training set. If a significant portion o' the Scots data I was trained on includes inaccurately represented Scots fae sources like Wikipedia, it could affect ma understanding an' generation o' the Scots language.

Despite this, I can attempt tae generate text in Scots or interpret Scots text tae the best o' ma ability based on the training I received. However, it's important tae mind that as an AI, I dinnae "speak" any language in the way humans do. I generate text based on patterns in the data I was trained on. If the data was flawed, the text I generate micht also contain flaws. If ye hae any specific questions or tasks related tae Scots, I'd be glad tae try an' help."

Please bear in mind that while this text uses some elements of Scots vocabulary and syntax, it may not be fully accurate or representative of authentic Scots language due to the limitations I previously explained.

-

https://www.nytimes.com/2023/06/02/opinion/ai-coding.html

Confused Betteridge: “but it’s not even a question”

-

@topspin said in I, ChatGPT:

I'm torn. On the one hand, I want to say "Yes, please don't bother learning to code. I like the market to work in my favour." On the other hand, I'm not gonna want to do this forever.

-

That’s the thing that a lot of people are failing to factor in: if you use shitty automated versions that drive all the juniors out of roles, you don’t have the next generation of seniors coming in.

-

@Arantor said in I, ChatGPT:

That’s the thing that a lot of people are failing to factor in: if you use shitty automated versions that drive all the juniors out of roles, you don’t have the next generation of seniors coming in.

Hey, working 2 days a month for full salary in my retirement age seems like a good deal, they can keep fucking up.

-

@Carnage in the next 20+ years I have the awful feeling it’s going to be a lot more fucked than that, to the point where I’m not sure about retiring just because there’s going to have been no one capable of looking after the stuff being built this generation.

Not that this generation is particularly equipped either but we at least have a fighting chance…

-

@Arantor said in I, ChatGPT:

I have the awful feeling it’s going to be a lot more fucked than that, to the point where I’m not sure about retiring just because there’s going to have been no one capable of looking after the stuff being built this generation.

That's easy.

Not my problem.

Also:

-

@dcon you know that we’re still going to be on the receiving end of that shit when the pension companies fuck us over because none of the hipsterscripters can make sure we get paid…

-

@Arantor said in I, ChatGPT:

because there’s going to have been no one capable of looking after the stuff being built this generation.

That’s not the fault of ChatGPT or the future generation. It’s the current generation building fragile crap in hipsterscript that breaks when you look at it funny.

-

@Arantor said in I, ChatGPT:

the pension companies

As long as their mainframes haven't keeled over yet... oh, we're fucked.

-

@topspin said in I, ChatGPT:

https://www.nytimes.com/2023/06/02/opinion/ai-coding.html

Confused Betteridge: “but it’s not even a question”

Journolists still don't want to lern2code.

FilmAI generated listicle at 11.

-

StackOverflow ... do you remember what it is (supposed to be)? Anyway, they allowed AI-generated content, and some moderators decided to go on strike.

-

And another funny article I read about in the news section of CodeProject:

It’s amazing how the confident tone lends credibility to all of that made-up nonsense

-

@BernieTheBernie said in I, ChatGPT:

StackOverflow ... do you remember what it is (supposed to be)? Anyway, they allowed AI-generated content, and some moderators decided to go on strike.

Making the world a better place:

Striking community members will refrain from moderating and curating content,

-

@BernieTheBernie said in I, ChatGPT:

And another funny article I read about in the news section of CodeProject:

It’s amazing how the confident tone lends credibility to all of that made-up nonsense

Knuth called the whole experience “interesting indeed,” while expressing surprise that no science fiction novelist ever envisioned a pre-Singularity world in which people interacted with an AI that wasn’t all-knowing, but instead generated plausible but inaccurate results.

I am certain I read a sci fi novel like that a long time ago. And the broken AI resulted in the downfall of the group that listened to it.

-

@Carnage said in I, ChatGPT:

And the broken AI resulted in the downfall of the group that listened to it.

"Psst! I've got a new AI: WokeAI!"

-

@Applied-Mediocrity said in I, ChatGPT:

The engine is plumbed directly into the chips' memory subsystem, so it shares a pool of coherent memory with the CPU and integrated GPU, thus eliminating costly data transfers to, again, boost power efficiency and performance.

Which is pretty much what Apple is doing with the Mx chips and unified memory. CPU, GPU and Neural Engine all share the same memory pool.

-

@boomzilla there is literally nothing that could go wrong.

-

@topspin eh, seems like a pretty good use of the technology to me. Probably at least as accurate as a person doing this sort of thing. Especially after a few hours.

-

@topspin

Uh? Pretty damn sure that Nuance/Dictaphone did already transcribe doctors' notes into medical applications way back in dino times. So I don't see that much difference, because those things were packed full of abbreviations and shorthands: 'sign', for example, would conclude with the doctor's full name, title and golf average.

-

@boomzilla said in I, ChatGPT:

@topspin eh, seems like a pretty good use of the technology to me. Probably at least as accurate as a person doing this sort of thing. Especially after a few hours.

Just like for anyone writing a summary, one of the main functions of the writing process is to structure your thoughts and think about the subject again. That's the part they're "optimizing" away now. If the doctor later went over the text with attention and carefully checked that it matches the facts of the case, that might be fine—with a polished and convincing writing style being the strongest point of the AI and factual accuracy the weakest, maybe not even then. But in any case, if that happened, there would be hardly any time saved in comparison to a simple dictation, so you can bet your ass the approval process will be just as thorough as the "review" done by insurers:

^A-rightclick-approve-logout.

-

Yes, let's feed confidential medical data into a remote system whose internal workings are pretty much inscrutable. Sounds like a great idea to me!

-

@Luhmann said in I, ChatGPT:

Nuance/Dictaphone did already transcribe doctors' notes into medical applications

Speech recognition with specialized medical vocabulary always had a really high correct recognition rate, totally in contrast to everyday speech recognition.

And ChatGPT is shit when it comes to facts, so...

-

@BernieTheBernie Also plain old speech recognition can be done locally on any decent phone or tablet just fine so then the doctor won't be blocked by mobile network and/or cloud outages.

-

@Bulb and - for legal reasons - inside the hospital.

-

@BernieTheBernie Look, it's not like those services will disable certificate validation, share data with advertisers and store everything in unsecured S3 buck... you know what?

-

@Applied-Mediocrity Since those systems have access to everything already, they do not need to share the data with advertisers. They know how to write a convincing ad to the doc, to the patient, and his friends and relatives.

Internet pharmacy offers at best price!

-

Summary: ChatGPT makes fun of ElectroBoom for being bald for 15 minutes. Or having hairy arms. Maybe both.

-

@Arantor said in I, ChatGPT:

So if this shit is already possible, where is Johnny Five, goddamit?

Need more data.

-

Slashdot take:

schwit1 writes: "Garbage in, garbage out -- and if this paper is correct, generative AI is turning into the self-licking ice cream cone of garbage generation."

On the upside, all the machine learning data mining models that everybody uses to analyze everybody's behaviour might choke on machine generated garbage as well. Maybe I'll just hook up ChatGPT to my browser for a few hours a day and see how Googlebing deals with that. (Cue ads for weaponized Roombas for the rest of my Google-account's life.)

-

@cvi said in I, ChatGPT:

And it’s not just text. If you train a music model on Mozart, you can expect output that’s a bit like Mozart but without the sparkle – let’s call it ‘Salieri’. And if Salieri now trains the next generation, and so on, what will the fifth or sixth generation sound like?

-

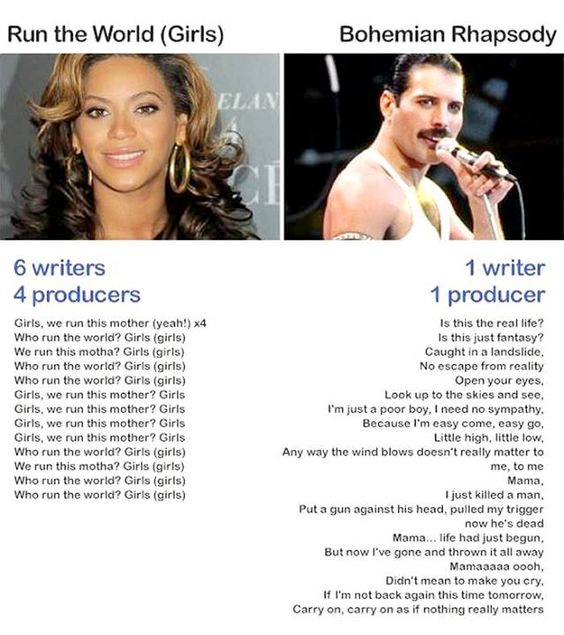

@topspin … should also compare the music scores (but it wouldn't change anything because Bohemian Rhapsody is richer in music too).

-

@topspin said in I, ChatGPT:

@cvi said in I, ChatGPT:

And it’s not just text. If you train a music model on Mozart, you can expect output that’s a bit like Mozart but without the sparkle – let’s call it ‘Salieri’. And if Salieri now trains the next generation, and so on, what will the fifth or sixth generation sound like?

To be fair the bouncy song could be down to: “change a word, take a third” rules. Or more likely, the lack of a magnificent moustache.

-

@DogsB In 1975 when Bohemian Rhapsody was written, Freddie still had long hair and no ‘tache. The tache doesn’t appear until 1980’s Play The Game.

-

@DogsB said in I, ChatGPT:

To be fair the bouncy song could be down to: “change a word, take a third” rules.

Could be?