UI Bites

-

A convention isn't an axiom or a hard rule. It may, and often does, change with time (and other things).

If you go and find the "UI hall of shame" (I think that's what it was called?), you'll see plenty of UIs that were deemed bad and breaking convention 15-20 years ago (I think that's about when it stopped being updated?). Many of those are still pretty bad, some of them in ways that probably wouldn't even be feasible nowadays as toolkits do impose some rules. But some of those have actually become if not good UI, at least accepted UI.

-

There are also plenty of people who use faulty grammar. That doesn't make them right.

-

@Zerosquare

<insert reference to French Académie and prescriptivist vs. descriptivist debate>

-

But some of those have actually become if not good UI, at least accepted UI.

Every design trend from the past 10 years

-

A lot of UI is just conventions. A "link" isn't a link, it's text written in a styling that by convention we use mostly for links. Same for a "button."

There was a time when buttons looked and behaved like physical buttons. Then metrosexuals took over.

-

@Gąska there was a time we used applications to do things and “document object models” to mark up and traverse documents but some people decided we could have the worst of both worlds together.

-

@Arantor It's not for nothing that the “DOM” has BDSM associations.

-

@dkf I think @error can confirm that the relationship between DOM and SUB(contractor/developers) is the same in both cases.

-

@Atazhaia Except that in one case, the relationship is consensual by all involved; the other, not so much.

-

Reading an old article from November 2011 on a news site, there's an addendum at the end:

-

@remi Interestingly, 1036 is the ID of the French language in Windows. Could be related, if someone used the wrong parameter in the wrong place...
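For reference, 1036 is 0x040C, and a Windows language ID packs a primary language in the low 10 bits and a sublanguage in the bits above. A minimal sketch of that split, with a made-up helper name (`split_langid`) and no claim about what the news site actually does with the number:

```python
FRENCH_FRANCE_LCID = 1036  # 0x040C, the value mentioned in the post above


def split_langid(langid: int) -> tuple[int, int]:
    """Split a 16-bit Windows language ID into (primary language, sublanguage).

    Hypothetical helper: it mirrors the bit layout used by the
    PRIMARYLANGID / SUBLANGID macros (low 10 bits, then the next 6 bits).
    """
    primary = langid & 0x3FF
    sublanguage = (langid >> 10) & 0x3F
    return primary, sublanguage


primary, sublanguage = split_langid(FRENCH_FRANCE_LCID)
print(hex(FRENCH_FRANCE_LCID), hex(primary), sublanguage)
```

The primary part comes out as 0x0C (French) with sublanguage 1, which is why 1036 reads as "French (France)".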

-

@Medinoc Probably a coincidence since it's an article in English on a British website and my browser isn't configured to request French pages.

They could still get "France" from cookies or my IP, though my employer's VPN uses exit points about anywhere in Europe so I have no idea where I am today. But it seems a bit unlikely.

-

Reading an old article from November 2011 on a news site, there's an addendum at the end:

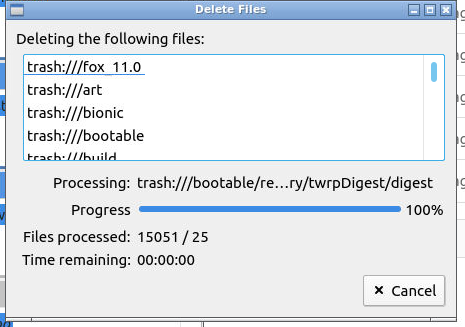

E_GODDAMMIT_FBMAC

-

Status: Linux is ready for Desktop.

-

@Tsaukpaetra Linux is. The desktop environment, not so much.

For some reason Canonical and some other companies chose to prefer GNOME though KDE works, and has worked, better. Even despite the money being thrown at GNOME.

-

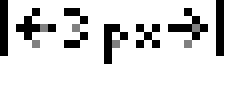

A tiny thing but it threw me for a loop for a while...

On Windows when you (programmatically) change the cursor e.g. using Qt's `setCursor(Qt::CrossCursor)`, the cursor changes to a cross-hair, like this:

Now I never noticed before, but Windows is actually smart enough to adjust the colour of the cross-hairs according to the background colour. So on a black background it's white, on a red one it's blue and if it's halfway between two colours it's of two (or more) different colours:

(high-resolution graphics courtesy of Windows' built-in "Steps Recorder" which is the first way I found to record the cursor on a snapshot and to search for more -- though I didn't know of that tool which I'm glad to have discovered!)

But what happens if the background is grey? Say, a random shade of grey that's... just perfectly midway between white and black?

Duh.

Not really a surprise when you think about how it's (probably) implemented, which is confirmed by using various other shades of grey, but still unexpected when you're not really focused on the cursor itself. It's apparently a known issue (see e.g. this SO post), but as I witnessed it in my own application, which draws different stuff in different stacked layers, I got confused for a while, thinking that somehow I had managed to create a layer that was in front of the cursor (which is impossible!), because when I moved the mouse around it seemed exactly as if the cursor was diving underneath that grey layer.

-

@remi it's implemented as an XOR as far as I remember which means you get a 7f7f7f cursor on the 808080 background which is for most people utterly indistinguishable... :(
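The arithmetic is easy to check. A minimal Python sketch, assuming the cursor simply XORs each pixel with white (`0xFFFFFF`); the helper names are made up for illustration and this says nothing about how Windows really composes the cursor:

```python
def xor_invert(rgb: int) -> int:
    """Invert a 24-bit RGB colour by XOR-ing it with white (all bits set)."""
    return rgb ^ 0xFFFFFF


def max_channel_diff(a: int, b: int) -> int:
    """Largest per-channel difference between two 24-bit RGB colours."""
    return max(abs(((a >> s) & 0xFF) - ((b >> s) & 0xFF)) for s in (16, 8, 0))


# Black, red and mid-grey backgrounds, as in the screenshots discussed above.
for background in (0x000000, 0xFF0000, 0x808080):
    cursor = xor_invert(background)
    diff = max_channel_diff(background, cursor)
    print(f"{background:06x} -> {cursor:06x} (max channel diff {diff})")
```

Black and red end up 255 levels away from the cursor colour on at least one channel, while mid-grey moves by a single level per channel.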

-

@remi My knowledge may be out of date, as the last time I messed around with Windows icons and cursors was in the 90s, but the format supported transparent and inverted palette entries, which would either pass through or invert whatever was underneath. I saw some neat examples of e.g. a mouse cursor where the inside was transparent and the outline inverted, creating a glass-like effect. But yes, it has the downside that middle grey inverts to an indistinguishable middle grey.

-

I just read Raymond Chen's blog post about a similar topic.

TLDR: It made sense 40 years ago, and "draw twice to undo drawing" side effect of XOR was just too convenient to pass on. And now they're stuck with it forever for backward compatibility.
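The "draw twice to undo" property is just `x ^ m ^ m == x`. A small sketch with a one-dimensional "framebuffer"; `xor_blit` and the pixel values are invented for the example:

```python
def xor_blit(pixels: list[int], sprite: list[int], offset: int) -> None:
    """XOR a sprite into a row of 24-bit pixels, in place."""
    for i, value in enumerate(sprite):
        pixels[offset + i] ^= value


background = [0x000000, 0xFF0000, 0x808080, 0xFFFFFF]
pixels = list(background)
cursor = [0xFFFFFF, 0xFFFFFF]  # two white "cursor" pixels

xor_blit(pixels, cursor, 1)    # first pass draws the cursor
drawn = list(pixels)

xor_blit(pixels, cursor, 1)    # second, identical pass erases it
restored = list(pixels)

print([f"{p:06x}" for p in drawn])
print(restored == background)
```

No copy of what was under the cursor is ever saved, which is what made the trick attractive on memory-starved hardware.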

-

it's implemented as an XOR as far as I remember

I don't know how it's done nowadays, but it was XOR back a couple of, um, three (this is getting heavy) decades ago when I worked on hardware for that.

-

@HardwareGeek said in UI Bites:

I don't know how it's done nowadays

The main problem with using XOR directly in the display buffer is that it depends on you understanding exactly what is in there beforehand. Most of the time the trick worked well, but when it didn't it failed really horribly (with weird graphical corruption that just refused to go away because all the code thought it didn't exist).

It might still be XOR, but it'll be applied to the version of the window in backing store rather than directly on the screen. More expensive (as there's still a blit to the main display to do) but no surprises as there's never anything layered on top there that isn't under application control.
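A toy illustration of both paragraphs, under the assumption that "backing store" means a clean copy of the window that the cursor is never XOR-ed into permanently. All names here are hypothetical and the one-row "screen" is only a stand-in for a real display buffer:

```python
def xor_blit(pixels: list[int], sprite: list[int], offset: int) -> None:
    """XOR a sprite into a row of 24-bit pixels, in place."""
    for i, value in enumerate(sprite):
        pixels[offset + i] ^= value


CURSOR = [0xFFFFFF, 0xFFFFFF]
BLUE = 0x0000FF

# Direct approach: XOR the cursor straight into the screen buffer.
screen = [0x000000] * 4
xor_blit(screen, CURSOR, 1)   # draw the cursor
screen[1:3] = [BLUE, BLUE]    # something repaints underneath, unaware of the cursor
xor_blit(screen, CURSOR, 1)   # "erase" the cursor
ghost = list(screen)          # pixels 1 and 2 are now yellow instead of blue


def compose(backing: list[int], sprite: list[int], offset: int) -> list[int]:
    """Copy the clean backing store, then XOR the cursor into the copy only."""
    frame = list(backing)
    xor_blit(frame, sprite, offset)
    return frame


# Backing-store approach: the application only ever touches the clean copy.
backing = [0x000000] * 4
frame_with_cursor = compose(backing, CURSOR, 1)
backing[1:3] = [BLUE, BLUE]   # repaint; nothing needs to be erased
frame_without_cursor = list(backing)

print([f"{p:06x}" for p in ghost])
print([f"{p:06x}" for p in frame_without_cursor])
```

In the direct version the erase pass inverts the fresh blue pixels and leaves a yellow ghost that no code knows about; in the backing-store version there is no erase pass to go wrong, at the cost of an extra copy per frame.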

-

A tiny thing but it threw me for a loop for a while...

On Windows when you (programmatically) change the cursor e.g. using Qt's `setCursor(Qt::CrossCursor)`, the cursor changes to a cross-hair, like this:

I used a blue one up until Windows 7:

Didn't see it all that often, though; a lot of fancy programs use their own cursors instead of the system ones. I still miss Tardis "hourglass" and Pac-Man "pointer with hourglass" sometimes. :)

-

I still miss Tardis "hourglass" and Pac-Man "pointer with hourglass" sometimes. :)

I use my Mac cursors.

-

@Tsaukpaetra said in UI Bites:

I still miss Tardis "hourglass" and Pac-Man "pointer with hourglass" sometimes. :)

I use my Mac cursors.

Do you use the Mighty Finder?

-

@Tsaukpaetra said in UI Bites:

I still miss Tardis "hourglass" and Pac-Man "pointer with hourglass" sometimes. :)

I use my Mac cursors.

Do you use the Mighty Finder?

No.

-

And now they're stuck with it forever for backward compatibility.

Everything in Microsoft ever. They should make it their motto or something.

-

@Vault_Dweller say what you want, but Microsoft's almost religious devotion to backward compatibility is the only reason we were able to run programs more than 2 years old throughout the 90s and 2000s.

-

@Gąska All operating systems, really. In Linux (meaning the kernel) anything that would break existing software is an absolute no-go and Linus is totally mad at the libc people whenever they fail to do the same, but they also are trying quite hard not to break anything.

Users don't want to update random things just because something was changed in the core, even if it was a genuine bug fix, so any operating system that does not maintain backward compatibility is limiting its own user base.

-

@Bulb Linux is breaking compatibility so hard they had to incorporate all 3rd party drivers into main kernel code so they could be kept in sync with ever changing APIs.

Used to at least. Back in 2.6 days.

-

@Gąska Linux never ever supported drivers outside of the main tree, so there is no compatibility to break there.

-

@Bulb that's a total cop-out though. "Let us take over all your source code or else all devices you sell will stop working next Tuesday". Can you imagine what would happen if Microsoft tried that?

Seriously. There's backward compatibility, there's real backward compatibility, and then there's 90s Microsoft. They weren't just on another level, it wasn't even in the same galaxy. They truly went above and beyond what could be reasonably expected from them. They didn't just keep APIs and ABIs perfectly stable, they also researched all the undocumented bits of random hackery that others relied on and stabilized them as well. They had whole teams dedicated to finding out why random pieces of software kept crashing on new Windows builds even though all ABIs stayed the same.

Linus Torvalds doesn't even come close with his "we never break user space so let's move everything we'd like to break to kernel space" philosophy.

-

@Gąska There are two approaches that are highly professional. You've described one, but the other is that where there's a variation from the docs, the code is changed to follow the docs more precisely. This second approach discourages using weird hacks, and at the same time prevents needing so much effort on discovering what weird hacks exist out there.

I wonder what actually are the main weird hacks in Windows to support bad programs? How many of them are tweaks to memory management?

-

I wonder what actually are the main weird hacks in Windows to support bad programs? How many of them are tweaks to memory management?

Windows 7 contains an exact 1:1 copy of Windows 95 memory manager that runs instead of the default one if you run a program in compatibility mode, to make sure the memory addresses are always exactly the same as on actual Windows 95. And that's just the tip of the iceberg.

-

I wonder what actually are the main weird hacks in Windows to support bad programs? How many of them are tweaks to memory management?

Read Raymond Chen's blog. It describes lots of them. Including many for which you wonder "how could the program possibly have worked in the first place?!".

-

@Zerosquare said in UI Bites:

Including many for which you wonder "how could the program possibly have worked in the first place?!".

Hard-coded addresses for where the allocations end up? That's that level of hack (and genuinely

tier). I was instead thinking about stuff like detailed use-after-free behaviour, which is something which is wrong but where it often worked with old allocators so many people got used to doing it. (In particular, it lets you avoid a temporary variable when writing a loop to free a linked list, so it was quite an attractive blunder to make.)
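Python has no manual `free`, so the following is only a toy model of that linked-list blunder, not real allocator behaviour. `ToyHeap` and its `scribble_on_free` flag are invented: with the flag off, freed nodes keep their contents (the "old allocator" case where the bug goes unnoticed); with it on, the freed node's link is cleared as a tame stand-in for the garbage a C program would read.

```python
class Node:
    def __init__(self, value, next_node=None):
        self.value = value
        self.next = next_node


class ToyHeap:
    """Toy stand-in for an allocator; not a model of any real one."""

    def __init__(self, scribble_on_free: bool):
        self.scribble_on_free = scribble_on_free
        self.freed = 0

    def free(self, node: Node) -> None:
        self.freed += 1
        if self.scribble_on_free:
            # Assumption: the block is overwritten as soon as it is freed.
            node.next = None


def make_list(length: int):
    head = None
    for value in range(length, 0, -1):
        head = Node(value, head)
    return head


def free_list_buggy(heap: ToyHeap, head) -> int:
    node = head
    while node is not None:
        heap.free(node)
        node = node.next       # use-after-free: reads a field of a freed node
    return heap.freed


def free_list_correct(heap: ToyHeap, head) -> int:
    node = head
    while node is not None:
        following = node.next  # the temporary the buggy version avoids
        heap.free(node)
        node = following
    return heap.freed


print(free_list_buggy(ToyHeap(scribble_on_free=False), make_list(3)))
print(free_list_buggy(ToyHeap(scribble_on_free=True), make_list(3)))
print(free_list_correct(ToyHeap(scribble_on_free=True), make_list(3)))
```

In this model the buggy loop frees all three nodes on the lenient heap but stops after the first one on the scribbling heap; in real C the second case would more likely be a crash or silent corruption than a clean early exit.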

-

I was instead thinking about stuff like detailed use-after-free behaviour, which is something which is wrong but where it often worked with old allocators so many people got used to doing it.

There's at least one article about this, but

searching for it.

-

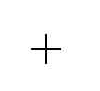

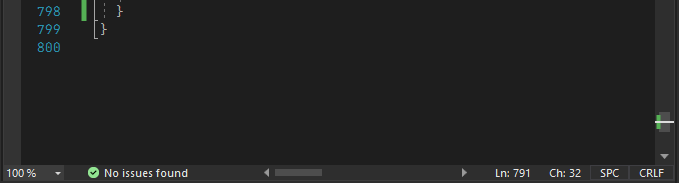

Visual Studio has a horizontal scrollbar:

It's quite useful for viewing the ends of long lines. And it seems like it would be even more useful if you resize the pane to be narrower.

Apparently Visual Studio thinks that the Everything's OK alarm is more important than being able to see the right side of my localized text

-

The played time wraps to 2 lines.

-

@hungrier

is that the scroll bar doesn’t span the whole width to begin with.

-

@topspin But but but then they'd need to put the status bar elements somewhere else

-

I still miss Tardis "hourglass" and Pac-Man "pointer with hourglass" sometimes

I still have an ANI file I used to hourglasses that has a little timer in the corner. Too

to change it anymore - all I can handle is the "make the cursors bigger" option)

-

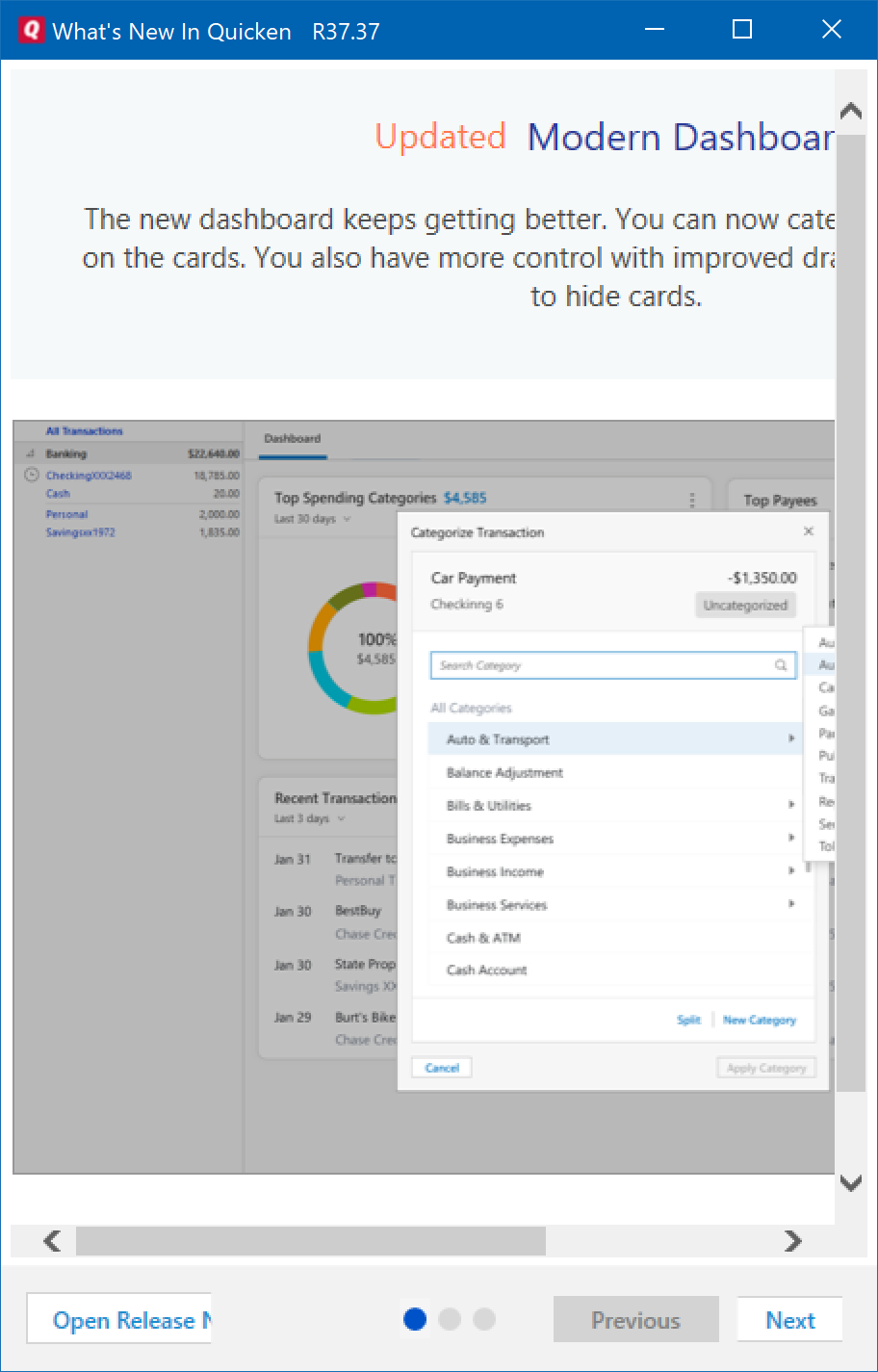

Oh great. Quicken is now going Modern

This, of course, means dialogs aren't sized properly and buttons are cut off.

-

I thought modern stuff was supposed to be all about being "responsive" or something?

-

@Zerosquare They are responsive to the developer's machine. Anyone else are

.

-

@Zerosquare It is responsive

I want it to look right

Response:

-

This shit drives me up the wall.

-

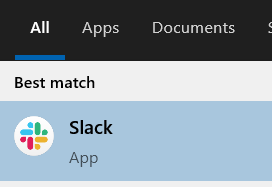

@Zecc Almost any search-as-you-type I've seen tends to do that. Also the even more curious thing of having the correct guess in the list after two letters and then forgetting it when you type the third one.