Moar Cooties

-

@cheong said in Moar Cooties:

@acrow said in Moar Cooties:

@cheong They will start to care soon. I hear the Chinese government will start deducting the "citizen points" score for playing too much. (Then again, young people may not care enough anyway.)

That's something I have trouble understanding.

In China there are gamers who make their living by competing in computer game contests, and there are also people who stream their gameplay to platforms such as Twitch to collect advertising revenue. If I happened to make my living this way, would I be working or playing when playing these games? Would my points be deducted?

As I understand it, it's because of "public morals". Therefore, I assume that professional players could be counted in the same way as professional porn actors; although they are meaningfully employed, they're still bad for the public morals.

Of course, this will greatly depend on whether there is a famous Chinese team playing internationally by the time the points system is fully operational. Then it might be considered a sport, maybe.

Think about whether Poker and Billiards will be considered sports or vice. Both have international tournaments. But they also started from bars. Will they deduct points?

-

@acrow Considering the skill required and the amount of training they put in people like Ding Junhui to play at near perfection, it’s unlikely they’ll deduct pointzzz from figureheads like that.

-

Just some related news.

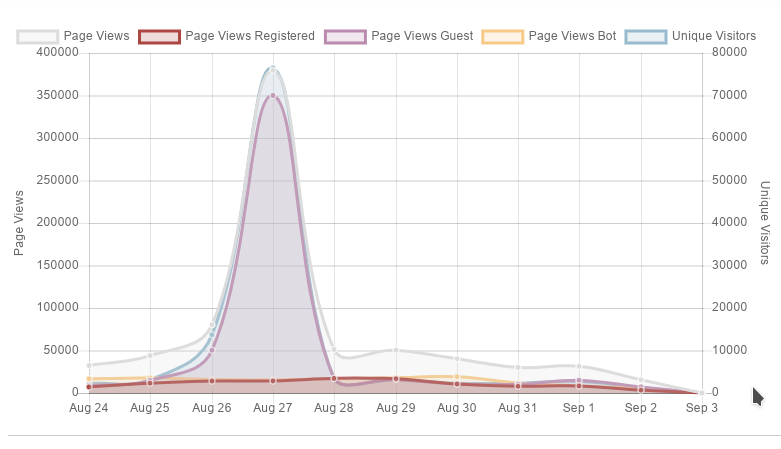

Yesterday, the LIHKG forum was under a DDoS attack. Upon investigation, various forum members found that certain China-based CDNs such as quhucdn have injected JavaScript into sites that use them. Whenever a visitor browses a page that uses JavaScript libraries hosted on those CDNs, the visitor's browser also sends requests to the LIHKG forum, thereby contributing to the DDoS attack.

[quote]

LIHKG has been under precedented DDoS attacks in the past 24 hours. We have reasons to believe that there is a power, or even a national level power behind to organise such attacks as botnet from all over the world were manipulated in launching this attack.

Here are the figures on the attack during the period 0800 - 2359 on 31 August 2019:

- Total request exceeded 1.5 billion;

- Highest record on unique visitors exceeded 6.5 million/hr;

- Highest record on the Total Request frequency was 260k/sec in which then lasted for 30 minutes before it is banned.

The enormous amount of network requests have caused internet congestion and overload on the server which have occasionally affected the access to LIHKG. The website data and members' information are unaffected.

With the fact that we have been encountering different levels of internet attacks, the server security has also been constantly upgrading in response to each attack. Although there is no perfect solution on preventing DDoS attacks, efforts have been put into monitoring and taking measures by LIHKG administrators in order to resume the service as soon as possible and the first wave of attack was successfully blocked. Not to mention that the LIHKG Admin team has been monitoring the situation over the past hours and proactively responded to continuous attacks.

Special thanks to CloudFlare for providing great support in blocking out huge numbers of attacks.

In viewing that there may still be continuous large scale attacks on LIHKG and may affect the browsing through LIHKG app, please switch to the website version if LIHKG App are malfunctioned. We deeply apologise for the unstableness of the service.

Great thanks to our user on sharing with us the fact that some of the attacks were from websites in China. The website will automatically and constantly send request to LIHKG at the background when the user browse on such websites to launch the attack. We have already confirmed the situation happened relates to this. Although IP addresses from relevant regions are blocked earlier, owners of those websites locates all over the world and simply blocking a specific region or country would not be an efficient solution to recent attacks.

We would hereby suggest users to:

- Avoid browsing unknown websites to prevent being used to attack other services

- Refrain from installing unknown applications or extensions

- Reset your password if you are using the same password for other websites (details here, https://lih.kg/1459855)

- Upgrade to the latest LIHKG App

- Spread the listed points to your friends

LIHKG administrators will made their best effort to monitor the service status continuously to ensure user to express in a safe and stable platform with freedom.

[/quote]

-

@cheong said in Moar Cooties:

Avoid browsing unknown websites to prevent being used to attack other services

-

@cheong Called it:

@acrow said in Moar Cooties:

they also unwittingly take part in the DDoS network

...Though I forgot about CDNs.

-

The thing is doing the thing again.

-

@pie_flavor

top notch issue report!

-

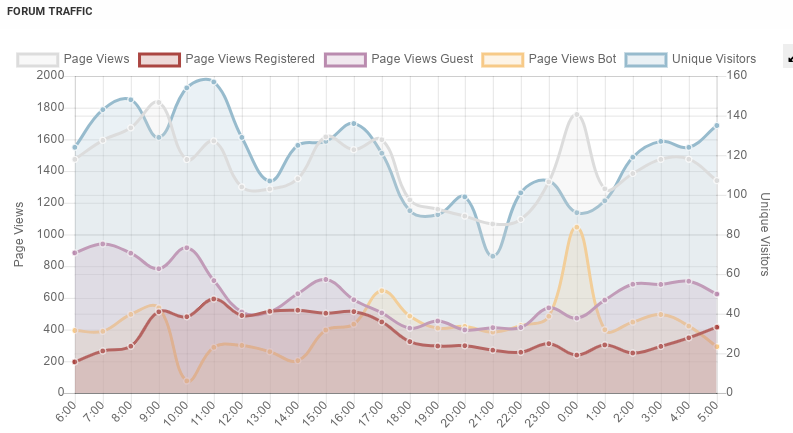

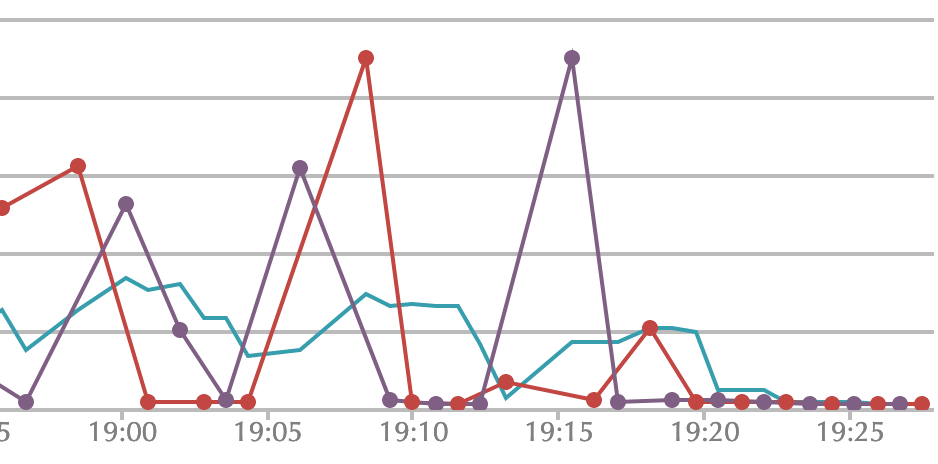

There was a minor bot spike a few hours ago but I don't see anything particularly worrying here:

-

It comes and goes for me this morning. Sometimes Recent loads in less than a second, sometimes it takes ten seconds. Funnily enough, when I visited Settings to double-check my Homepage setting, it took five seconds or so.

-

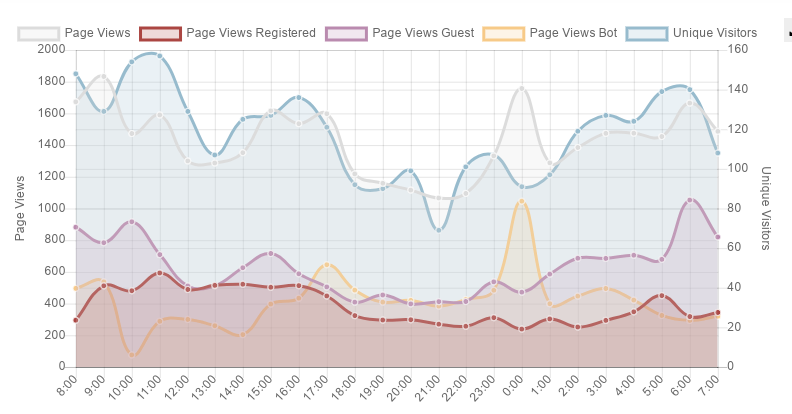

@lolwhat hmm...I checked the load average earlier and it was around 3. Now it's up to 10. I see a few questionable patterns in the access data but nothing like last week's DDoS.

For reference:

-

@boomzilla load average now back down under 5.

-

Obviously not directly related to what we experienced, but possibly similar in some respects:

Prosecutors say the accused were able to spam from the purloined IP address blocks after tricking the owner of Hostwinds, an Oklahoma-based Internet hosting firm, into routing the fraudulently obtained IP addresses on their behalf.

-

Load is very high right now (~48) but I still don't see any obvious patterns in the access logs.

-

@boomzilla Well, whatever it is, it's making `unread` take approximately a minute to load, for me at least.

-

Vacuumed again. Let's see if that helps.

-

Load average is way down:

1.54, 19.44, 36.47

-

Hoovering the database seems to have helped.

-

@loopback0 yeah, navigation seems much peppier to me.

-

Proposed mitigation: Have a job that delouses the indexes whenever a significant performance issue is detected.

Proposed resolution: Figure out why the fuck this needs to be done so often now (well, every week or two), then change shit around to eliminate that need.
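
For what it's worth, the mitigation proposed above could be sketched roughly like this. It is only a sketch under assumptions: the load cutoff of 10 is made up, the function names are hypothetical, and the database and table names (`nodebb`, `legacy_zset`) are just the ones that show up in the psql output elsewhere in this thread. It only decides whether to act and builds a `psql` command line; it does not run anything.

```python
import re

# Hypothetical cutoff: the thread treats ~5 as tolerable and ~48 as bad.
LOAD_THRESHOLD = 10.0


def needs_vacuum(load_1min, threshold=LOAD_THRESHOLD):
    """Return True when the 1-minute load average is at or above the cutoff."""
    return load_1min >= threshold


def vacuum_command(database, table):
    """Build a psql argument list that vacuums and analyzes one table.

    The table name is checked against a conservative identifier pattern
    so that nothing unexpected is spliced into the SQL text.
    """
    if not re.fullmatch(r"[A-Za-z_][A-Za-z0-9_]*", table):
        raise ValueError(f"unexpected table name: {table!r}")
    return ["psql", "-d", database, "-c", f"VACUUM (VERBOSE, ANALYZE) {table};"]
```

A cron job could feed `os.getloadavg()[0]` into `needs_vacuum` and pass the resulting list to `subprocess.run`. Load average is a crude trigger, though, so this treats the symptom rather than the cause.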

-

I thought Postgres had an autovacuum job. Maybe it just needs a tweak.

-

@lolwhat said in Moar Cooties:

Have a job that delouses the indexes whenever a significant performance issue is detected.

If this site was PHP, we could put that in the PHP header for every page.

-

@mott555 said in Moar Cooties:

If this site was PHP

I'm sure the NodeBB devs would have still screwed up by not using a real database

-

@loopback0 said in Moar Cooties:

I thought Postgres had an autovacuum job. Maybe it just needs a tweak.

    # select name, setting from pg_settings where name = 'autovacuum' ;
        name    | setting
    ------------+---------
     autovacuum | on
    (1 row)

-

@boomzilla Maybe we need to buy a Roomba

-

@TimeBandit said in Moar Cooties:

@boomzilla Maybe we need to buy a Roomba

I think we have one. It's just going around the tables...

-

@boomzilla Somewhere there are settings that tell the robo-hoover to trigger when a certain proportion of the records are out of date (obsolete? dead? standing there shooting aliens? whatever the term). Possibly the thresholds can be set on individual tables.
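
PostgreSQL does allow autovacuum thresholds to be overridden per table through storage parameters. A minimal sketch of building such a statement is below; the helper name, the table name `big_table`, and the values 0.01 and 1000 are all illustrative assumptions, not recommendations for this forum's database.

```python
import re


def per_table_autovacuum_sql(table, scale_factor, threshold):
    """Build an ALTER TABLE statement that overrides autovacuum settings
    for a single table (hypothetical helper, values chosen by the caller)."""
    if not re.fullmatch(r"[A-Za-z_][A-Za-z0-9_]*", table):
        raise ValueError(f"unexpected table name: {table!r}")
    return (
        f"ALTER TABLE {table} SET ("
        f"autovacuum_vacuum_scale_factor = {scale_factor}, "
        f"autovacuum_vacuum_threshold = {threshold});"
    )


# Illustrative values only: vacuum once roughly 1% of rows (plus 1000) are dead.
print(per_table_autovacuum_sql("big_table", 0.01, 1000))
```

A lower scale factor on one large, frequently updated table makes autovacuum visit it more often without changing the behaviour for every other table.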

-

@loopback0 said in Moar Cooties:

@boomzilla Somewhere there are settings that tell the robo-hoover to trigger when a certain proportion of the records are out of date (obsolete? dead? standing there shooting aliens? whatever the term). Possibly the thresholds can be set on individual tables.

Hmm...

    autovacuum                          | on
    autovacuum_analyze_scale_factor     | 0.1
    autovacuum_analyze_threshold        | 50
    autovacuum_freeze_max_age           | 200000000
    autovacuum_max_workers              | 3
    autovacuum_multixact_freeze_max_age | 400000000
    autovacuum_naptime                  | 60
    autovacuum_vacuum_cost_delay        | 20
    autovacuum_vacuum_cost_limit        | -1
    autovacuum_vacuum_scale_factor      | 0.2
    autovacuum_vacuum_threshold         | 50
    autovacuum_work_mem                 | -1
    log_autovacuum_min_duration         | -1
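
To put those numbers in context: autovacuum vacuums a table once its dead tuples exceed `autovacuum_vacuum_threshold + autovacuum_vacuum_scale_factor * (rows in the table)`. A small sketch with the settings listed above follows; the 30 million row count is an assumed example size, not a measured figure.

```python
def autovacuum_trigger(row_count, base_threshold=50, scale_factor=0.2):
    """Dead tuples needed before autovacuum vacuums a table, using
    threshold + scale_factor * rows with the settings listed above."""
    return base_threshold + scale_factor * row_count


# Assumed example: a table with about 30 million rows.
rows = 30_000_000
print(f"autovacuum waits for roughly {autovacuum_trigger(rows):,.0f} dead rows")
```

With a 0.2 scale factor the trigger point grows with the table, so a very large table can accumulate millions of dead rows before autovacuum touches it.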

-

@Luhmann I could scroll up to check but

-

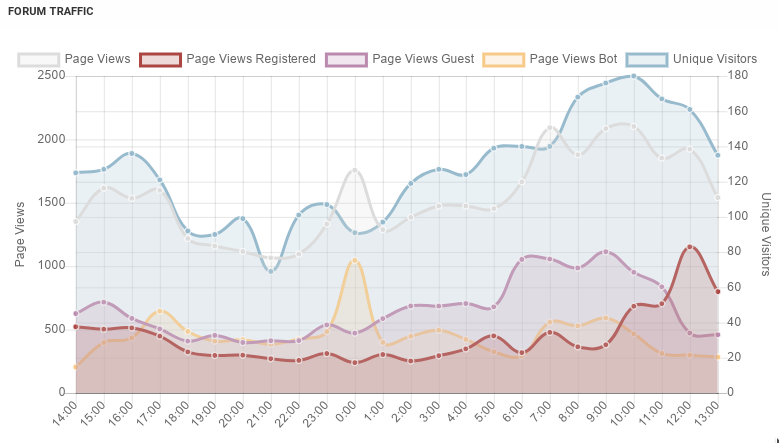

Is the forum having cooties again? Seeing some spikes in the response time graph and having some intermittent slowness when loading stuff. And as of the time of typing this Server Cooties changed status to "BAD" for the forum.

-

Same here. Plus my back/forward gets fucked with overwriting thread positions with Recent or Unread, which usually only happens if you spam-click.

-

@Atazhaia probably. Load average was about 5 when I checked this morning, but most people probably aren't on yet. Did some more vacuuming, so we'll see.

-

I'm not sure if it's just me or the Hangman thread or what, but infiniscroll isn't chooching

-

@hungrier said in Moar Cooties:

I'm not sure if it's just me or the Hangman thread or what, but infiniscroll isn't chooching

Not just you! But did you try a refresh?

-

@Tsaukpaetra Not until after I had already got close enough to the end of the topic that it loaded the whole thing at once

-

Paging @ben_lubar or @boomzilla to let loose the Roomba of WTF

-

why does it break every week now

-

Also, why the fuck is it like it is? The recents list takes a fucking year to load, but infiniscrolling the list downwards is as instant as ever. Wat.

-

@pie_flavor said in Moar Cooties:

why does it break every week now

Apparently PostgreSQL isn't webscale.

-

Load average is ~ 5 / 4 / 3 currently. Not great but not terrible.

-

@boomzilla

Can't really complain about load times, it's been much worse before.

-

    nodebb=# vacuum VERBOSE legacy_zset ;
    INFO:  vacuuming "public.legacy_zset"
    INFO:  scanned index "legacy_zset_pkey" to remove 2467443 row versions
    DETAIL:  CPU: user: 3.66 s, system: 0.83 s, elapsed: 28.38 s
    INFO:  scanned index "idx__legacy_zset__key__score" to remove 2467443 row versions
    DETAIL:  CPU: user: 5.40 s, system: 1.48 s, elapsed: 69.96 s
    INFO:  "legacy_zset": removed 2467443 row versions in 35314 pages
    DETAIL:  CPU: user: 1.08 s, system: 1.08 s, elapsed: 44.54 s
    INFO:  index "legacy_zset_pkey" now contains 30726514 row versions in 188181 pages
    DETAIL:  256281 index row versions were removed.
    2736 index pages have been deleted, 1263 are currently reusable.
    CPU: user: 0.00 s, system: 0.00 s, elapsed: 0.01 s.
    INFO:  index "idx__legacy_zset__key__score" now contains 30726704 row versions in 220608 pages
    DETAIL:  2381844 index row versions were removed.
    29930 index pages have been deleted, 14732 are currently reusable.
    CPU: user: 0.00 s, system: 0.00 s, elapsed: 0.04 s.
    INFO:  "legacy_zset": found 33172 removable, 7071088 nonremovable row versions in 77985 out of 277673 pages
    DETAIL:  52 dead row versions cannot be removed yet, oldest xmin: 165140508
    There were 571891 unused item pointers.
    Skipped 0 pages due to buffer pins, 198845 frozen pages.
    0 pages are entirely empty.
    CPU: user: 11.12 s, system: 3.69 s, elapsed: 154.02 s.
    INFO:  vacuuming "pg_toast.pg_toast_199137"
    INFO:  index "pg_toast_199137_index" now contains 0 row versions in 1 pages
    DETAIL:  0 index row versions were removed.
    0 index pages have been deleted, 0 are currently reusable.
    CPU: user: 0.00 s, system: 0.00 s, elapsed: 0.00 s.
    INFO:  "pg_toast_199137": found 0 removable, 0 nonremovable row versions in 0 out of 0 pages
    DETAIL:  0 dead row versions cannot be removed yet, oldest xmin: 165141485
    There were 0 unused item pointers.
    Skipped 0 pages due to buffer pins, 0 frozen pages.
    0 pages are entirely empty.
    CPU: user: 0.00 s, system: 0.00 s, elapsed: 0.00 s.
    VACUUM
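
A rough way to read that output: pull out how many row versions were removed and compare that with the size of the table. The sketch below copies two lines from the output above and, as an assumption, uses the primary-key index's row-version count as a stand-in for the table's row count. The 0.2 scale factor in the comparison is the autovacuum setting reported earlier in the thread.

```python
import re

# Two lines copied from the VACUUM VERBOSE output above.
LOG = '''
INFO:  "legacy_zset": removed 2467443 row versions in 35314 pages
INFO:  index "legacy_zset_pkey" now contains 30726514 row versions in 188181 pages
'''

removed = int(re.search(r'"legacy_zset": removed (\d+) row versions', LOG).group(1))
# Assumption: the primary-key index size approximates the table's row count.
total = int(re.search(r'index "legacy_zset_pkey" now contains (\d+) row versions', LOG).group(1))

dead_fraction = removed / total
print(f"removed {removed:,} of ~{total:,} rows ({dead_fraction:.1%})")
print("below the 0.2 scale factor" if dead_fraction < 0.2 else "above the 0.2 scale factor")
```

If that proxy is anywhere near right, the dead rows cleaned up here were well under the fraction autovacuum waits for with a 0.2 scale factor, which would be consistent with autovacuum being on and still not getting to this table in time.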

-

load average: 1.78, 3.79, 3.84

Going the right way.

-

load average: 0.71, 2.36, 3.26

-

There appears to be a bad post in the Lounge Status thread, mobile safari is getting into a redirect loop and dying with “there is a problem with the page”

-

@izzion

It's definitely not this post, which contains a lot of nested `<pre>` tags.

That post certainly isn't a reply to a post discussing mobile Safari's inability to handle lots of nested scrollable elements.
