WTF Bites

-

@heterodox said in WTF Bites:

I like making their head explode with one command

You mean, their head explode because you're dumb enough to install a McAfee product?

-

@MrL the two shadiest antiviruses, and one that has lowest detection rates in the market. Beautiful.

I thought that Kaspersky was shadiest. And what is there against Avast?

-

Once upon a midnight dreary, while I reviewed, cross and fiery,

Over many a strange lines arranged in ancient .NET Framework

While I ran, debugged this coding horror, suddenly there came an error,

Which I inquired Bing in furor, leading to a post by Mahesh Kumar

"'Tis a godsend!" I exclaimed and clicked the link in iexplore

Quoth the server, 404

-

But how do you uninstall it?

ePO. :P Or the package manager, if I didn't have connectivity for some reason. Commercial software actually tends to behave with package managers these days.

Ah, so your employer also makes you sacrifice half your CPU cores to 90s style security?

Home machine.

And malware stopped being a thing in the 90s? Huh.

All of it running simultaneously. On 5400rpm hdd.

I once encountered that working on a university help desk. Except something had actually landed on the machine that both of the two products installed recognized. So AV 1 would move it to quarantine, AV 2 would see the move, scan it, then move it to its own quarantine, AV 1 would see the move, scan it... each of them popping up a modal each time.

Poor girl was just trying to write a paper.

-

And what is there against Avast?

They make sponsorship deals with various IT news sites to ensure the virus packs they use for testing detection rates only contain viruses that avast! can detect, so it ends up high in rankings - while real life detection rate is poor. I've been using avast! for years, but then I switched to Avira, and it found 50 threats right off the bat. Even without that, I knew avast! let some malware through, because I've had several rundll32.exe processes running at 100% CPU, and other malware-related problems on my computers.

-

-

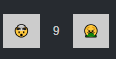

@HardwareGeek said in WTF Bites:

@brie The actual name of var gives a hint what it's used for (not that the hint is necessarily accurate, but it probably is), but the meaning of the specific values, [emoji].

Why is the image for [emoji] identical to the image for [emoji]? Is a [emoji] not a [emoji]?

Because the original emoji was [emoji] and they added gendered versions as an afterthought.

The same reason that [emoji] shows women, [emoji] shows a man, and [emoji] shows a heterosexual couple.

-

@Tsaukpaetra

But you upvoted it, Shirley that cached it in your memory banks!

-

The Deckard Cain school of recruiting!

Stay awhile, and listen!

Stay awhile, and listen!

Stay awhile, and listen!

Stay awhile, and listen!

Stay awhile, and listen!

Stay awhile, and listen!

-

@Tsaukpaetra

But you upvoted it, Shirley that cached it in your memory banks!

No, it explosively removed it from cache, otherwise the vote would not be needed.

-

@Tsaukpaetra Explosively? It seems like it might be rather entertaining to watch you read TDWTF, if there's an explosion every time you up-vote a post.

-

@HardwareGeek said in WTF Bites:

if there's an explosion every time you up-vote a post.

His brain is like a popcorn machine

-

@TimeBandit Air popper or old-fashioned oil? Are you saying he's full of hot air?

-

@HardwareGeek said in WTF Bites:

@TimeBandit Air popper or old-fashioned oil? Are you saying he's full of hot air?

:man_in_steamy_room:

-

@topspin Steamy room doesn't really work for popping corn, though. It needs to be well above 100C to ~~eat~~ explosively (WTF autocorrect?) vaporize the water in the corn kernel.

-

@HardwareGeek said in WTF Bites:

Air popper or old-fashioned oil? Are you saying he's full of hot air?

More like this one

https://www.youtube.com/watch?v=nqCswLScg_A

It's scary that it works

-

@HardwareGeek said in WTF Bites:

@Tsaukpaetra Explosively? It seems like it might be rather entertaining to watch you read TDWTF, if there's an explosion every time you up-vote a post.

I blame autocorrect. The intended word is left to the reader's imagination.

-

I wouldn't put it past people dumb enough to stick suspect USB hardware in vulnerable orifices to still run XP.

Always so quick to jump to conclusions. Have you ever thought of the possibility that they might have special, useless, away-from-internet laptops specifically for the purpose of plugging suspect USB hardware into it and let the viruses grow there, specifically so it doesn't infect any computers that actually matter?

Of course I have. I worked with the Shadowserver guys in a previous job and they have racks of hardware dedicated to that job. If that had been the case here, why would they "stop the analysis to halt any further corruption of his computer"? TDEMS.

How are they even supposed to test suspicious flash drives without connecting them anywhere? Laser cutters and electron microscopes every time?

Surely you can come up with something smarter.

You're watching too many movies.

And it wasn't a storage for hacker tools to be invoked directly by operator. It wouldn't have autoplay then. It was a drop-off that was supposed to be intentionally lost in the crowd with the intention of some random person finding it, getting curious and plugging it into their machine.

Right. Have you informed the SS of your successful conclusion of their investigation yet?

-

@heterodox said in WTF Bites:

Poor girl was just trying to write a paper.

@Book{trollope,
  author = "Alice {Trollope} and Eve {Haxx0r}",
  title = "The Illustrated Guide to Electronic Snake Oil",
  publisher = "X5O!P%@AP[4\PZX54(P^)7CC)7}$EICAR-STANDARD-ANTIVIRUS-TEST-FILE!$H+H*",
  year = 2019
}

cite me

-

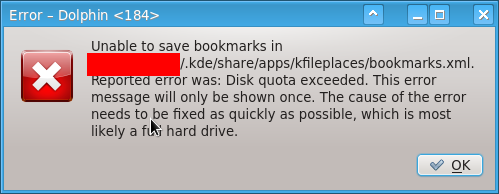

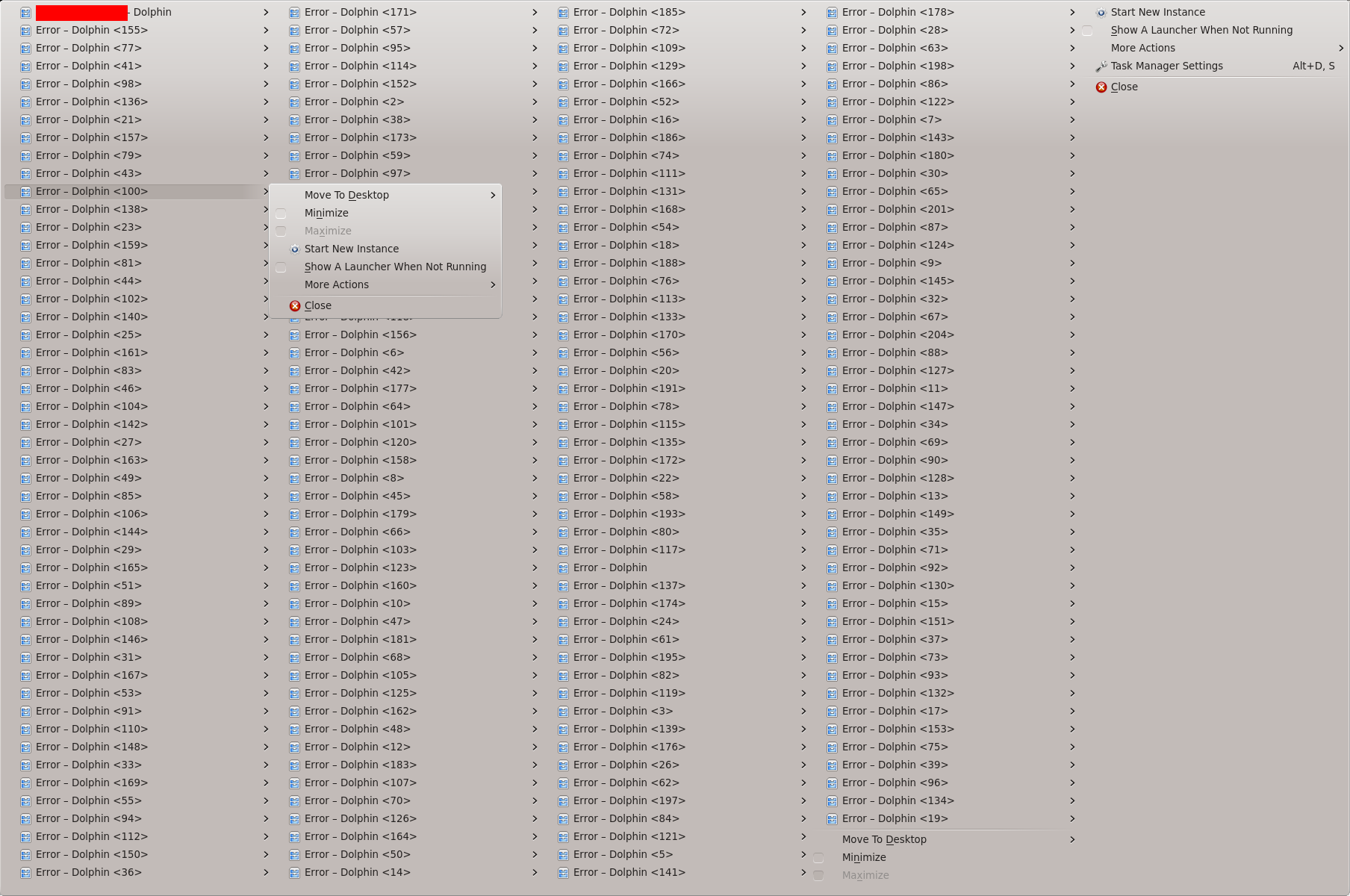

Wasn't at the office yesterday. Naturally you'd assume that my computer doesn't do too much other than idling, but of course something needs to do stupid things, anyway. When I got back today, I had 20 emails that my home folder has reached the quota limit (it's pretty low, which is a minor WTF in itself, but we're not supposed to do actual work in there) because some stupid programs need to shit 1GB of garbage into ~/.config.

Okay, cleaned up some space, now dealing with the errors that (non-culprit) programs encountered:

Sorry for the mouse cursor.

"This error message will only be shown once."

That's good. Or is it? Look at the title bar.

-

Wasn't at the office yesterday. Naturally you'd assume that my computer doesn't do too much other than idling

Ah, I see you don't use Windows 10. </cheapshot>

-

my home folder has reached the quota limit

4 megs should be enough for anyone.

-

@HardwareGeek said in WTF Bites:

if there's an explosion every time you up-vote a post.

*flashbacks of Likes Thread on [emoji]*

-

Behold! Logs in XML:

<log>
<entry t="15947436f813a908" pro="lua" mod="somelibrary.so" stk="0" thd="b6f73010" loc="somesoure.c (AFunctionWithFunnyName)(994)" cat="3"><![CDATA[The actual message will go here…]]></entry>
…
</log>

Many lines, of course.

Yes, it ends with the closing tag, so …

-

@Bulb except for the potential injection of "]]>" making file unreadable (but easily recoverable), and that it might be a bit of overkill depending on how much of that info is actually being used then - I don't really find much wrong with it.

-

@Bulb except for the potential injection of "]]>" making file unreadable (but easily recoverable), and that it might be a bit of overkill depending on how much of that info is actually being used then - I don't really find much wrong with it.

Either you append the </log> only upon closing it, thus keeping it malformed as long as it's being written to, which for many logfiles means "all the time", or appending a line involves scanning back over the closing tag every time, with all the race conditions that creates on top of the overhead and the abstraction level violation that is fiddling with seek() in a file that's supposed to be handled only as high-level structured data.
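For illustration, here is roughly what the "scan back over the closing tag" variant looks like. This is a minimal sketch, not what the logger in question actually does: `append_entry` and `CLOSING_TAG` are made-up names, and it assumes a single writer and a file that ends in exactly `</log>` plus a newline.

```python
import os

# Assumption: the file always ends with exactly this byte sequence.
CLOSING_TAG = b"</log>\n"

def append_entry(path: str, entry_xml: bytes) -> None:
    """Append one <entry> element by overwriting the trailing </log> tag."""
    with open(path, "r+b") as f:
        f.seek(0, os.SEEK_END)
        size = f.tell()
        if size < len(CLOSING_TAG):
            raise ValueError("file too short to contain the closing tag")
        # Step back over the closing tag and verify it is really there.
        f.seek(size - len(CLOSING_TAG))
        if f.read(len(CLOSING_TAG)) != CLOSING_TAG:
            raise ValueError("log does not end with the closing tag")
        # Overwrite the tag with the new entry, then put the tag back.
        f.seek(size - len(CLOSING_TAG))
        f.write(entry_xml + b"\n" + CLOSING_TAG)
```

Between the `seek` and the end of the `write`, any reader (or second writer) can see a file with no closing tag or with half an entry, which is the race described above.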

-

Yes, it ends with the closing tag, so …

There are XML entity inclusion shenanigans that can be done to work around that; Java's got a logging engine that will produce this sort of thing. It's nasty since it involves one file having a sequence of XML elements and another one having the outer element that contains them all, but if your XML reader supports inter-file entities (which might be turned off as a security feature) then it can read the result.

I feel dirty for knowing this. And pleased for not remembering the syntax…

-

@Bulb except for the potential injection of "]]>" making file unreadable (but easily recoverable), and that it might be a bit of overkill depending on how much of that info is actually being used then - I don't really find much wrong with it.

Either you append the </log> only upon closing it, thus keeping it malformed as long as it's being written to, which for many logfiles means "all the time", or appending a line involves scanning back over the closing tag every time, with all the race conditions that creates on top of the overhead and the abstraction level violation that is fiddling with seek() in a file that's supposed to be handled only as high-level structured data.

Forgot about that. I agree. Terrible design. Too bad XML doesn't allow multiple root tags...

-

with all the race conditions that creates

Is that a thing?

Is that a thing that wouldn't happen if you were only appending?

I've never tried to write to a file from multiple threads without buffering and locking.

-

I've never tried to write to a file from multiple threads without buffering and locking.

It's lots of fun.

-

@boomzilla said in WTF Bites:

@boomzilla said in WTF Bites:

Pandora has been doing this to me this afternoon:

And today, in chrome:

react-dom.production.min.js:188 DOMException: Failed to execute 'createElement' on 'Document': The tag name provided ('i°_°i') is not a valid name.

OK, so it keeps happening so I report it to them. First I got a generic acknowledgement email, then I got one asking for some more information. URL, screenshot, etc. OK, fine. I take a screenshot that includes the URL where I'm currently listening (I've got it on shuffle) and paste the URL in for good measure.

A few minutes later the music stops and I get one of those, "Someone else is trying to listen to your pandora." And it gives me buttons: "Let them listen" "Let you listen". Of course, I clicked for me to listen.

I suspect some engineer tried going to the URL which is presumably tied to my account.

-

@boomzilla said in WTF Bites:

few minutes later the music stops and I get one of those, "Someone else is trying to listen to your pandora." And it gives me buttons: "Let them listen" "Let you listen". Of course, I clicked for me to listen.

Wait, someone can impersonate you by going to an arbitrary URL?!??

-

@Tsaukpaetra said in WTF Bites:

@boomzilla said in WTF Bites:

few minutes later the music stops and I get one of those, "Someone else is trying to listen to your pandora." And it gives me buttons: "Let them listen" "Let you listen". Of course, I clicked for me to listen.

Wait, someone can impersonate you by going to an arbitrary URL?!??

I just opened my current URL in a window and it asks me to log in. So, no. But I'm assuming that their in-house devs can impersonate users.

-

@HardwareGeek Potential new vote buttons:

-

-

With custom CSS the future can be yours today!

a[component="post/upvote"] .fa:before { content: '🤯'; }
a[component="post/downvote"] .fa:before { content: '🤮'; }

I'm sure there are proper CSS entities for those emojicons if you prefer.

-

with all the race conditions that creates

Is that a thing?

If you're overwriting something someone else might just be about to read, definitely. You'd have to guarantee the overwriting is atomic so someone else will always see a </log> tag, with your new line or without.

Is that a thing that wouldn't happen if you were only appending?

It can still happen but with line-based logs it's much easier to work around so it's not an issue in practice. If you're tailing a log and you get a line without a trailing line feed, you simply don't pass this bit on to your consumer but store it until you're reading the next block.
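A rough sketch of that "hold back the unterminated bit" approach, for the curious. `LineAssembler` is a made-up name and this is not taken from any particular tailing tool; it only shows the buffering step.

```python
from typing import List

class LineAssembler:
    """Collects raw blocks read from a log and yields only complete lines."""

    def __init__(self) -> None:
        self._pending = b""  # trailing data that has no line feed yet

    def feed(self, block: bytes) -> List[bytes]:
        data = self._pending + block
        lines = data.split(b"\n")
        # The last element is whatever came after the final line feed
        # (empty if the block ended cleanly); keep it for the next call.
        self._pending = lines.pop()
        return lines
```

Feeding it `b"a\nb"` and then `b"c\n"` returns `[b"a"]` first and `[b"bc"]` second, so the consumer never sees the half-written line.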

I've never tried to write to a file from multiple threads without buffering and locking.

Simultaneous writing doesn't work anyway. That is, with threads you can try and share file handles but I can't think of any application where that wouldn't be a horrible idea to begin with.

-

Is that a thing that wouldn't happen if you were only appending?

If the writer uses the append flag, and does not break things with its own buffering, the operating system takes care of the synchronization, even among unrelated writers. The logging implementation has to take care to send a complete entry in each write (so either assemble it in its own buffer and use unbuffered write, or use line-buffering on thread-local handles).
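Something like the following is what that amounts to in Python, as a minimal sketch. `open_log` and `log_entry` are invented helper names; it assumes a local file system and entries small enough that a single `os.write` call takes the whole line (a short write is possible in principle and is not handled here).

```python
import os

def open_log(path: str) -> int:
    """Open the log with the append flag; returns a raw file descriptor."""
    return os.open(path, os.O_WRONLY | os.O_APPEND | os.O_CREAT, 0o644)

def log_entry(fd: int, message: str) -> None:
    """Assemble the complete line first, then hand it over in one write."""
    # Embedded line feeds would break the one-entry-per-line format.
    line = (message.replace("\n", " ") + "\n").encode("utf-8")
    os.write(fd, line)  # unbuffered: goes straight to the OS
```

Each writer keeps its own descriptor, and because the append flag makes the OS position every write at the current end of file, entries from unrelated writers end up one after another instead of on top of each other.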

-

Status: Apparently, fail2ban must process the whole log file before enacting any actions.

This means, if a DOS attack is underway that inflates a log file to, say, 1 GB in the span of 1 second, fail2ban will inevitably be unable to process it for much greater than 1 second, and the log spam will continue unabated because it's not taking any action until the completion of the log read.

...

...

Why??!?!? What possible reason could there be to not instantly take action the moment all the triggers were met while reading the linear log file it's triggering from??!?

-

@Tsaukpaetra said in WTF Bites:

Status: Apparently, fail2ban must process the whole log file before enacting any actions.

This means, if a DOS attack is underway that inflates a log file to, say, 1 GB in the span of 1 second, fail2ban will inevitably be unable to process it for much greater than 1 second, and the log spam will continue unabated because it's not taking any action until the completion of the log read.

...

...

Why??!?!? What possible reason could there be to not instantly take action the moment all the triggers were met while reading the linear log file it's triggering from??!?

That would imply it had to evaluate the banning rules after each line of input read, which would further slow down the log reading, probably by a lot. If you're dealing with DOS attacks of this scale, a simple logfile follower in Python is not the right tool for you. Also, bogging down the system with GB/s of logs is not what you want from the software that might be attacked. You'll want iptables/ipfw rate limiting/logging rules and logging to a dedicated loghost via UDP so you can drop packets if the analysis can't keep up instead of killing your whole I/O system.

-

That would imply it had to evaluate the banning rules after each line of input read, which would further slow down the log reading, probably by a lot.

I'm not asking for a line-by-line rule evaluation. I'm asking that the whole of the log file should not be required to read to the never-end before attempting to do any processing at all. Stop taking the extreme.

If you're dealing with DOS attacks of this scale, a simple logfile follower in Python is not the right tool for you.

Probably not, but it's all I can set up at the moment as (for raisins) I don't have access to DNS to route traffic through a protection provider, so "You're on your own" is the current modus operandi.

Also, bogging down the system with GB/s of logs is not what you want from the software that might be attacked.

And I'd rather nginx would not report that it's indeed still rate-limiting X IP every request, but we can't all have nice things, can we?

You'll want iptables/ipfw rate limiting/logging rules and logging to a dedicated loghost via UDP so you can drop packets if the analysis can't keep up instead of killing your whole I/O system.

I mean, sans the UDP thing, that's kinda what fail2ban does, no? and it's not IO that's the problem: The iops were basically minimal from the perspective of request satisfaction; rather, the CPU was bound up grepping the whole thing.

-

@Tsaukpaetra said in WTF Bites:

That would imply it had to evaluate the banning rules after each line of input read, which would further slow down the log reading, probably by a lot.

I'm not asking for a line-by-line rule evaluation. I'm asking that the whole of the log file should not be required to read to the never-end before attempting to do any processing at all. Stop taking the extreme.

You've just read a single line of log. What should happen?

a) add the parsed data to the tally and try to take action by calling the rule engine

b) add the parsed data to the tally and keep reading until EOF, then call the rule engine

c) something else

fail2ban seems to do b). c) could mean "keep reading and check a timer after every line, and in case we've been reading for n seconds w/o reaching EOF, call the rule engine". That's not trivial though, IIRC fail2ban uses plugins for reading the logs and they'd have to know about that.

You'll want iptables/ipfw rate limiting/logging rules and logging to a dedicated loghost via UDP so you can drop packets if the analysis can't keep up instead of killing your whole I/O system.

I mean, sans the UDP thing, that's kinda what fail2ban does, no?

You mean it's not keeping up with the output of iptables rate limit rules?!

and it's not IO that's the problem: The iops were basically minimal from the perspective of request satisfaction; rather, the CPU was bound up grepping the whole thing.

Yeah, Python is slow

-

c) could mean "keep reading and check a timer after every line, and in case we've been reading for n seconds w/o reaching EOF, call the rule engine". That's not trivial though, IIRC fail2ban uses plugins for reading the logs and they'd have to know about that.

My thought was more along the lines of "Read chunk of log 1 MB in size and process it, noting where the last line-end was. Send results back to server. Read next 1 MB chunk offsetting the recalled line-offset". No special communication to plugins should be needed for that.
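To make the idea concrete, a minimal sketch of that chunked loop. This is not fail2ban's code or API: `process_in_chunks` and the `handle_lines` callback are hypothetical, and the callback is where the tally and ban decision ("send results back to server") would happen after every chunk.

```python
CHUNK_SIZE = 1024 * 1024  # 1 MB, as suggested above

def process_in_chunks(path, handle_lines, offset=0, chunk_size=CHUNK_SIZE):
    """Process a log chunk by chunk, acting after each chunk.

    Returns the offset just past the last complete line, so a later
    call can resume from there once the file has grown.
    """
    size = chunk_size
    with open(path, "rb") as f:
        while True:
            f.seek(offset)
            chunk = f.read(size)
            end = chunk.rfind(b"\n")
            if end == -1:
                if len(chunk) < size:
                    return offset  # EOF, or only an unfinished line left
                size *= 2          # one line longer than the chunk: read more
                continue
            # Hand over only complete lines; act on them right away.
            handle_lines(chunk[:end].split(b"\n"))
            offset += end + 1      # remember where the last line ended
            size = chunk_size
```

With this shape, an offender showing up in the first megabyte can be blocked before the second megabyte is even read, however slow the per-line matching is.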

You'll want iptables/ipfw rate limiting/logging rules and logging to a dedicated loghost via UDP so you can drop packets if the analysis can't keep up instead of killing your whole I/O system.

I mean, sans the UDP thing, that's kinda what fail2ban does, no?

You mean it's not keeping up with the output of iptables rate limit rules?!

It never gets to the point of adding to the rules in the first place, so

and it's not IO that's the problem: The iops were basically minimal from the perspective of request satisfaction; rather, the CPU was bound up grepping the whole thing.

Yeah, Python is slow

But even slow things can be made usable.

With my idea, doesn't matter if it's slow. It would get caught in the first chunk and block that particular IP, stopping it from spamming the logs, and thence when it gets past that one the next will come up, until it's leap-frogged its way to blocking everyone as soon as it could have and thus limiting the extra processing it would have needed to do otherwise.


-

So we have a complex PHP application running on a WordPress site. Now, WordPress essentially delegates all its output to whatever "theme" is enabled, and that means the easiest way to add or modify custom PHP content is to change the theme files. But this also means when you update the theme, you lose all your modifications.

Not to worry though, WordPress has a mechanism to prevent exactly this: it's called "child themes", and as the name implies it's essentially a second theme that inherits all its code from the first one, so you can update the parent and keep your changes in the child.

Well, we don't have that. The day anyone accidentally clicks on "update theme", everything is going to stop working. I've told my boss that half a dozen times (since I don't have admin access on that site), but for some reason he just doesn't seem to give a damn.

-

@anonymous234 said in WTF Bites:

But this also means when you update the theme, you lose all your modifications.

YES! It's fucking annoying!!!

@anonymous234 said in WTF Bites:

so you can update the parent and keep your changes in the child.

Yeah. Apparently nobody who was working on the site I have in mind knew about or cared to figure out how that worked at all.

@anonymous234 said in WTF Bites:

everything is going to stop working.

Backups?

-

@anonymous234 said in WTF Bites:

running on a wordpress site

I guess there is a reason WordPress is the most dreaded platform of the year.

(The last year's winner, SharePoint, does not seem to be mentioned this year anymore, and Drupal, the last year's runner up, moved to win another category, web frameworks)

-

-

@TimeBandit: it's a classic. For years, journalists have been publishing articles about risks of ads and trackers, the cost of bloated web pages, etc. Of course, every time someone in the comment section says "Great. Why don't you start by fixing your site?". Cue silence or awkward backpedaling by the journalist.

-

@Tsaukpaetra said in WTF Bites:

c) could mean "keep reading and check a timer after every line, and in case we've been reading for n seconds w/o reaching EOF, call the rule engine". That's not trivial though, IIRC fail2ban uses plugins for reading the logs and they'd have to know about that.

My thought was more along the lines of "Read chunk of log 1 MB in size and process it, noting where the last line-end was. Send results back to server. Read next 1 MB chunk offsetting the recalled line-offset". No special communication to plugins should be needed for that.

Yes, that would be even better -- iff the plugins aren't doing the reading themselves in the first place.

I'm not sure how many of those are centrally developed.

You'll want iptables/ipfw rate limiting/logging rules and logging to a dedicated loghost via UDP so you can drop packets if the analysis can't keep up instead of killing your whole I/O system.

I mean, sans the UDP thing, that's kinda what fail2ban does, no?

You mean it's not keeping up with the output of iptables rate limit rules?!

It never gets to the point of adding to the rules in the first place, so

Ah, I was thinking more about rules that log when a certain rate is exceeded.

-

@Tsaukpaetra said in WTF Bites:

c) could mean "keep reading and check a timer after every line, and in case we've been reading for n seconds w/o reaching EOF, call the rule engine". That's not trivial though, IIRC fail2ban uses plugins for reading the logs and they'd have to know about that.

My thought was more along the lines of "Read chunk of log 1 MB in size and process it, noting where the last line-end was. Send results back to server. Read next 1 MB chunk offsetting the recalled line-offset". No special communication to plugins should be needed for that.

Yes, that would be even better -- iff the plugins aren't doing the reading themselves in the first place.

I'm not sure how many of those are centrally developed.

Yeah, I'm sure it's been improved since the version that is packaged in the distro was released.

You'll want iptables/ipfw rate limiting/logging rules and logging to a dedicated loghost via UDP so you can drop packets if the analysis can't keep up instead of killing your whole I/O system.

I mean, sans the UDP thing, that's kinda what fail2ban does, no?

You mean it's not keeping up with the output of iptables rate limit rules?!

It never gets to the point of adding to the rules in the first place, so

Ah, I was thinking more about rules that log when a certain rate is exceeded.

Yeah, it also checks against timestamps, so if it encounters a matching entry that would have fallen off the ban list, it will ignore it regardless. Thus, even if it was following along, if it's so far behind processing that by the time it gets to an entry it's been long past....

In any case, I manually updated (since it's just a bunch o' scripts) and perhaps it will be more performant...
