Intel making us slow down

-

@heterodox Comments on this news say that this requires physical access to the machine, and has an easy fix.

-

@sockpuppet7 said in Intel making us slow down:

@heterodox Comments on this news say that this requires physical access to the machine, and has an easy fix.

Did I say that wasn't the case? Same thing for the IME vulnerabilities; just update your firmware. (N.B.: Doesn't mean everyone's going to get to it, as the updates are delivered through channels unfamiliar to most users.)

-

@scholrlea said in Intel making us slow down:

@boomzilla said in Intel making us slow down:

@masonwheeler said in Intel making us slow down:

@weng said in Intel making us slow down:

@lolwhat Nah, I'll roll with incompetence as the root cause here.

I'll be switching back to AMD at home for the foreseeable future.

Does this even affect home systems? (i.e. do you and I have any real reason to be concerned about our home PCs?) From what I've read, it appears that this is an issue that's mostly a headache for cloud servers and other shared systems.

When your OS gets updated your Intel machine will run more slowly.

So, business as usual for Windows?

Yeah... Apple isn't guilty of updates slowing their hardware at all. Nope. Completely a Windows problem...

-

@scholrlea said in Intel making us slow down:

@cursorkeys said in Intel making us slow down:

@hardwaregeek said in Intel making us slow down:

Protip: Use SystemVerilog; it's a strict superset of Verilog

Or just use VHDL. Trying to fix Verilog is like trying to fix JavaScript.

Hmmn. I mean, I was joking, but I am sort of curious about the topic, so maybe.

While I'm on the topic, though:

- I was wondering what you think of the Parallella, and their claimed performance. I have a distinct sense that something isn't adding up there. Not as bad as what Geri thought he could accomplish with his dazzling ignorance of real-world chip design, but they seem to be involved in some wishful thinking in their press releases, and some of the reports about it seem to back that up. I mean, it sounds like a good idea, but it is the same good idea that failed for the Connection Machine and the Transputer back in the day, and actually selling the FPGA implementation as something other than an engineering prototype seems self-defeating. Any thoughts?

- Similarly, any comments about the Mill, and the upcoming tape-out of its FPGA test implementation (or did that get done already, I'm not sure)? Those following it seem split between "It's gonna be epic!" and "It's gonna flop worse than EPIC!", plus a handful who are convinced it's a scam and are looking to see what the real angle is. I get the impression that Godard et al. are really convinced they are on to something big, but at the same time realize how big a risk they are taking trying to come up with a new design on barely any budget and only a handful of full-time employees, and are actually trying to avoid hyping it too much to lessen the impact of a potential face-plant.

NodeBB ate my reply. Which is weird because that's something it's usually perfect about. I'll make this one shorter anyway...

My experience is all with low-end FPGAs. The most complicated thing I've done is to make a TFT 2D graphics accelerator on a little Spartan, so I don't feel qualified to comment on those. They do look very neat though.

I have used two micros that both maybe took a little inspiration from the Transputer idea, the Parallax Propeller and the XMOS xCore. Both are interrupt-less.

They were both nice although XMOS's 'XC' version of C was a lot nicer to use than the 'SPIN' language you have to use for the Propeller. Apart from messing around I couldn't really find a use I had for them. The xCore is a bit of a beast and they seem to be really pushing Audio/DSP stuff which I'm sure it excels at.

Interrupt-less was something that sounded really exciting, but I've only once had a serious issue with interrupt latency, and that was an SDR-like thing I designed. That was cured by hand-crafted assembly to ensure execution paths matched. The Propeller might have been a very good choice instead if it had existed back then.

-

-

@pie_flavor said in Intel making us slow down:

@anonymous234 The major difference between a thing that might go wrong and a thing that cannot possibly go wrong is that when a thing that cannot possibly go wrong goes wrong it usually turns out to be impossible to get at and repair.

As Jenkins puts it, "What we're suggesting is that something that doesn't really interact with anything is changing something that can't be changed."

-

Snippets from the Veeam (backup software company) weekly digest e-mail they send to their customers, from the "The Word from Gostev" section...

At this point, you already know enough to understand Meltdown. This vulnerability is basically a bug in MMU logic, and is caused by skipping address checks during the speculative execution (rumors are, there's the source code comment saying this was done "not to break optimizations"). So, how can this vulnerability be exploited? Pretty easily, in fact. First, the malicious code should trick a processor into the speculative execution path, and from there, perform an unrestricted read of another process' memory.

OK, so let's switch to Spectre next. This vulnerability is known to affect all modern CPUs, albeit to a different extent. It is not based on a bug per say, but rather on a design peculiarity of the execution path prediction logic, which is implemented by so-called Branch Prediction Unit (BPU). Essentially, what BPU does is accumulating statistics to estimate the probability of IF clause results. For example, if certain IF clause that compares some variable to zero returned FALSE 100 times in a row, you can predict with high probability that the clause will return FALSE when called for the 101st time, and speculatively move along the corresponding code execution branch even without having to load the actual variable.

But here comes the major difference between Meltdown and Spectre, which significantly complicates Spectre-based exploits implementation. While Meltdown can "scan" CPU cache directly (since the sought-after value was put there from within the scope of process running the Meltdown exploit), in case of Spectre it is the victim process itself that puts this value into the CPU cache. Thus, only the victim process itself is able to perform that timing-based CPU cache "scan". Luckily for hackers, we live in the API-first world, where every decent app has API you can call to make it do the things you need, again measuring how long the execution of each API call took. Although getting the actual value requires deep analysis of the specific application, so this approach is only worth pursuing with the open-source apps. But the "beauty" of Spectre is that apparently, there are many ways to make the victim process leak its data to the CPU cache through speculative execution in the way that allows the attacking process to "pick it up". Google engineers found and documented a few, but unfortunately many more are expected to exist. Who will find them first?

Of course, all of that only sounds easy at a conceptual level - while implementations with the real-world apps are extremely complex, and when I say "extremely" I really mean that. For example, Google engineers created a Spectre exploit POC that, running inside a KVM guest, can read host kernel memory at a rate of over 1500 bytes/second. However, before the attack can be performed, the exploit requires initialization that takes 30 minutes!

Last but not least, do know that while those patches fully address Meltdown, they only address a few currently known attacks vector that Spectre enables. Most security specialists agree that Spectre vulnerability opens a whole slew of "opportunities" for hackers, and that the solid fix can only be delivered in CPU hardware. Which in turn probably means at least two years until first such processor appears – and then a few more years until you replace the last impacted CPU. But until that happens, it sounds like we should all be looking forward to many fun years of jumping on yet another critical patch against some newly discovered Spectre-based attack.

An interesting read overall, and I kind of wish they published The Word within his normal blog posts so I could link it in full instead of blockquoting large chunks of it. Looks like it's one of those cases where "Meltdown is worse right now, but Spectre is going to be more persistent and might be worse in the long term."

Edit: extended 3rd paragraph blockquote, since I lopped off too much of the context the first time.
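
To make the branch-prediction paragraph of the quoted digest a bit more concrete, here is a toy model in Python. It assumes a two-bit saturating counter, which is a common textbook scheme; the digest doesn't say what any real BPU actually does, and the class and function names are made up for illustration.

```python
class TwoBitPredictor:
    """Toy branch predictor: a 2-bit saturating counter (textbook model)."""

    def __init__(self, state: int = 0) -> None:
        # States 0 and 1 predict "not taken" (FALSE); 2 and 3 predict "taken" (TRUE).
        self.state = state

    def predict(self) -> bool:
        return self.state >= 2

    def update(self, taken: bool) -> None:
        # Move one step toward the observed outcome, saturating at 0 and 3.
        if taken:
            self.state = min(3, self.state + 1)
        else:
            self.state = max(0, self.state - 1)


def count_mispredictions(outcomes, predictor=None) -> int:
    """Feed a sequence of real branch outcomes through the predictor."""
    if predictor is None:
        predictor = TwoBitPredictor()
    misses = 0
    for taken in outcomes:
        if predictor.predict() != taken:
            misses += 1
        predictor.update(taken)
    return misses


# The digest's scenario: the IF clause is FALSE 100 times in a row, then TRUE once.
history = [False] * 100 + [True]
print(count_mispredictions(history))
```

In this model only the final, surprising outcome is mispredicted. On a real CPU that mispredicted stretch is where instructions get executed speculatively and later thrown away, which is the window the Spectre attacks lean on.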

-

@izzion said in Intel making us slow down:

Which in turn probably means at least two years until first such processor appears – and then ~~a few~~ many more years until you replace the last impacted CPU.

You know some of them are never going to be replaced.

@izzion said in Intel making us slow down:

Edit: extended 3rd paragraph blockquote

Hey, I was reading that!

-

@hardwaregeek said in Intel making us slow down:

Hey, I was reading that!

Sorry. I just started reading it too and then I realized I trimmed too aggressively and left out the thought that connected to paragraph 4, and really most of the really meaty part of paragraph 3 altogether.

So I thought to myself "should I leave it, or edit it and risk degrading @HardwareGeek's reading experience", and then was like "#YOLO" and edited it.

-

-

-

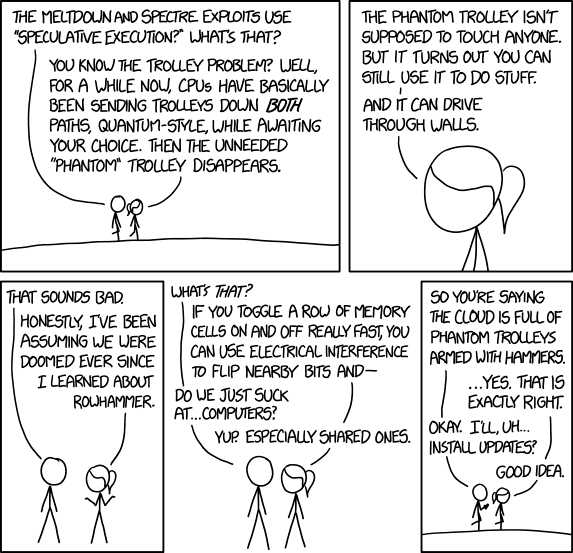

@twelvebaud looks like XKCD to me. Ninja edit?

-

@lb_ said in Intel making us slow down:

@twelvebaud looks like XKCD to me. Ninja edit?

Probably a melted spectre.

-

@lb_ said in Intel making us slow down:

@twelvebaud looks like XKCD to me. Ninja edit?

Troll response. As you can see from the lack of the pencil icon on my post, I didn't make any edits.

-

@lb_ said in Intel making us slow down:

@twelvebaud looks like XKCD to me. Ninja edit?

@TwelveBaud must have been all outta Rosie.

Filed under: Obscure WTDWTF meme is obscure, Can't be arsed to link bonus pun to YouTube

-

-

@boomzilla said in Intel making us slow down:

Top comment:

In all fairness to Microsoft, if Windows can’t start, the computer is technically safe from these vulnerabilities.

-

-

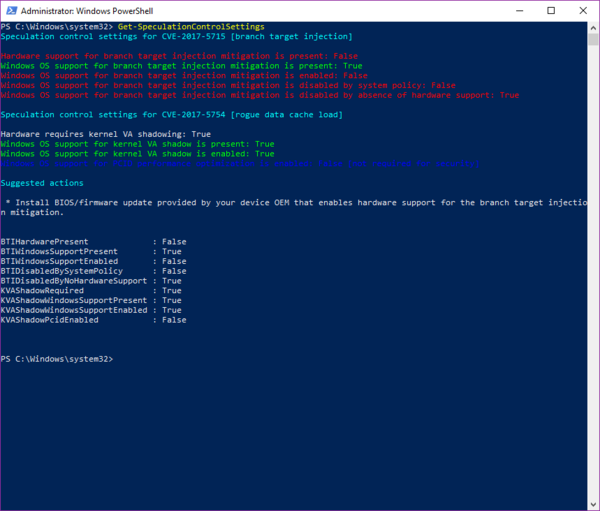

My computer is affected. Unsure if Intel will bother actually releasing a patch for it, though, as so far they have only announced that CPUs from the past 5 years will get it. So Windows has the patch installed on my computer but hasn't activated it, because the hardware support it needs is missing. And I still don't want to replace my computer quite yet.

-

The thread on this topic over at OSDev is mildly :wtf:-worthy, if anyone is interested. Here's one gem from it, and far from the weirdest thing said so far:

I don't use memory management in my OS for security (because my plan is for all the code running in the machine to be written by AI in the OS, thereby ensuring that there is never any malicious code present in the first place [...])

Just thought I'd mention that.

-

@atazhaia said in Intel making us slow down:

My computer is affected. Unsure if Intel will bother actually releasing a patch for it, though, as so far they have only announced that CPUs from the past 5 years will get it. So Windows has the patch installed on my computer but hasn't activated it, because the hardware support it needs is missing. And I still don't want to replace my computer quite yet.

One of my machines is in the same boat, and I doubt there will be patches for it. Even if Intel decides to make them available, the motherboard manufacturer has already said they're only doing updates for boards currently in warranty.

-

@parody If Intel does release a patch I should be able to install it through Linux, as I have got microcode updates there before.

Either way, I am still hoping I can hold out to PCIe 4.0 release before needing to replace my computer. It also gives me time to figure if i9 or Threadripper is the better choice.

-

@scholrlea There's a lot of really stupid people on that site.

EDIT: oh I just noticed this particular stupid person was that Tilde character you've talked about in other threads:

Now that I think about it, I think that this problem could also be greatly mitigated if most of the data of a program was put on disk and then only a portion of it loaded at a time. The memory usage would be much better, much lower, and storing all data structures mainly on disk for most applications would make hitting enough of very private data (enough to be usable or recognizable) too difficult and infrequent, so storing all user data on disk could also be an option.

-

@blakeyrat Yeah, he is not only ignorant, but stupid and stubborn. About half of the thread is either him saying something ridiculous, or someone trying to explain to him that something he said earlier was ridiculous, followed by him doubling down on it. Most of the threads he posts in go this way, actually.

-

@scholrlea No that makes perfect sense. The patch could reduce performance by up to 30% on some rare workloads, therefore the obvious solution is to store all data structures on disk all the time with zero caching whatsoever, because that'll obviously be a lot faster than the OS with the patch installed.

EDIT: seriously you've made me into a fan of this guy. A gem in his next post:

Or why not provide a full API so that programs can manage the usage of their cache so that critical programs can disable cache for themselves and thus prevent this? I think that the real solution would be as simple as this so it's the fault of the OS for not providing these APIs but instead dummily hiding all the modern details, and OSes should provide APIs for the most advanced and lowest level functions so that each program can fine-tune how to use the machine.

Has he ever tried to use a computer with caches disabled? Like the disk is set to PIO mode instead of any of the DMA modes (you can try that one out yourself without really breaking anything; it's a toggle on the driver in Windows). It's like 3 orders of magnitude slower than the machine running normally, and I'm not even exaggerating.

Not even to mention that the caching that causes this exploit is in the L1 cache of the CPU which, AFAIK, can not be disabled since it's a hardware feature and (in theory at least) invisible to all higher abstraction layers. (I mean, this exact bug is that code at higher levels of abstraction can peep inside the L1 cache when they shouldn't be able to.)

-

@boomzilla said in Intel making us slow down:

I always thought that it was amusing that some products' method of preventing rootkits was to become a rootkit itself....

-

@atazhaia said in Intel making us slow down:

@parody If Intel does release a patch I should be able to install it through Linux, as I have got microcode updates there before.

I read a bit more and people are saying Windows could do the same sort of thing. It's too early to tell what they'll choose to do, I suppose.

-

@atazhaia said in Intel making us slow down:

@parody If Intel does release a patch I should be able to install it through Linux, as I have got microcode updates there before.

Either way, I am still hoping I can hold out to PCIe 4.0 release before needing to replace my computer. It also gives me time to figure if i9 or Threadripper is the better choice.

@atazhaia said in Intel making us slow down:

@parody If Intel does release a patch I should be able to install it through Linux, as I have got microcode updates there before.

Intel already said Meltdown isn't fixable with microcode. The only solution is modifying all OS kernels, or buy a new CPU.

-

-

@sockpuppet7 said in Intel making us slow down:

The only solution is modifying all OS kernels, or buy a new CPU.

I'm not sure if CPUs hardened against these sorts of things are available yet. I'm not surprised that microcode changes won't fix it though; the flaw is in one of the parts that won't be done with reprogrammable hardware because it's rather large and timing critical (these caches are done with a CAM) even when done without that overhead of using an FPGA.

Oh well, at least our (deeply weird) supercomputer at work is entirely unaffected by this. It's got no internal security partitions or L1 caches, so that's that. ;) Supercomputers are very different from normal computers in terms of security though.

-

@sockpuppet7 Yeah, but Windows refuses to activate the OS patch unless the CPU has the patched microcode installed too. And I know it won't fix the underlying hardware flaw, but for the workarounds to work I need both patches, it seems. I also see HP has not bothered releasing a patch for my work laptop yet either, even though it has a recent (Skylake) CPU.

-

@boomzilla said in Intel making us slow down:

Windows users who do not use an antivirus or who use Windows Defender can update right now, as they are not subject to the registry key requirement

I did wonder at the start of the article if Microsoft would manage to pull a Microsoft and not update Defender to be compatible

-

@scholrlea said in Intel making us slow down:

At this point, the ~~ARM~~ MOS6502 is looking better and better...

/me starting looking up prices for FPGAs and textbooks on Verilog

I still prefer the RCA-1802 over the 6502 :) :)

After all, how else can you have a Cosmic Elf?

-

@pjh said in Intel making us slow down:

@izzion quoted in Intel making us slow down:

It is not based on a bug per say

:twitch:

A twitch is insufficient.

-

@dkf said in Intel making us slow down:

I'm not sure if CPUs hardened against these sorts of things are available yet.

They're not, and as I understand it, it'll be a while.

-

@pjh

I'm not the one that writes the blog email, I just read it and share the interesting stuff with y'all...


-

@atazhaia

Branch Target Injection == Spectre
Rogue Data Cache Load == Meltdown.

So what you're checking is whether Spectre is fixed, which does require a microcode update. What @sockpuppet7 said couldn't be fixed with microcode is a different vulnerability.
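
For anyone wanting to check the same thing from Linux: kernels that carry the patches expose a per-vulnerability status under `/sys/devices/system/cpu/vulnerabilities/`. Here's a minimal Python sketch that reads it. The directory and the `meltdown`, `spectre_v1` and `spectre_v2` file names are the kernel's; the function names and the coarse labels are just made up for this example, and older kernels or other OSes simply won't have the directory.

```python
from pathlib import Path

# Linux sysfs directory; absent on unpatched kernels and on other operating systems.
SYSFS_DIR = Path("/sys/devices/system/cpu/vulnerabilities")

# sysfs file name -> the names used in this thread.
NAMES = {
    "meltdown": "Rogue Data Cache Load (Meltdown)",
    "spectre_v1": "Bounds Check Bypass (Spectre variant 1)",
    "spectre_v2": "Branch Target Injection (Spectre variant 2)",
}


def classify(status: str) -> str:
    """Reduce a sysfs status line to a coarse label (labels are this sketch's own)."""
    text = status.strip()
    if text.startswith("Not affected"):
        return "not affected"
    if text.startswith("Mitigation"):
        return "mitigated"
    if text.startswith("Vulnerable"):
        return "vulnerable"
    return "unknown"


def read_status(directory: Path = SYSFS_DIR) -> dict:
    """Return {name: (coarse label, raw line)} for each status file that exists."""
    report = {}
    for filename, label in NAMES.items():
        path = Path(directory) / filename
        if path.is_file():
            raw = path.read_text().strip()
            report[label] = (classify(raw), raw)
    return report


if __name__ == "__main__":
    for label, (coarse, raw) in read_status().items():
        print(f"{label}: {coarse} ({raw})")
```

The raw line is kept next to the coarse label because the kernel's text says which mitigation is in use, which is the part that tells you whether the microcode side is in place.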

-

@izzion said in Intel making us slow down:

@pjh

I'm not the one that writes the blog email, I just read it and share the interesting stuff with y'all...

Did you not see the s/said/quoted/ in my post?

-

@sockpuppet7 said in Intel making us slow down:

Intel already said Meltdown isn't fixable with microcode. The only solution is modifying all OS kernels, or buy a new CPU.

How convenient. I think I'll buy my next one from AMD.

-

@thecpuwizard said in Intel making us slow down:

After all, how else can you have a Cosmic Elf?

I hear having a Cosmic Shelf helps a lot with that.

-

@masonwheeler said in Intel making us slow down:

@thecpuwizard said in Intel making us slow down:

After all, how else can you have a Cosmic Elf?

I hear having a Cosmic Shelf helps a lot with that.

Well, my Cosmic Elf lives on a table [and yes, it is Wooden]

-

@izzion Oh, yeah. Reading comprehension fail, I missed that meltdown was specified.

Although that just adds to reasons to wait for a future CPU generation where both these flaws have been fixed in the hardware rather than switching now.

-

@atazhaia Meltdown is the big one, and only affects Intel. They were lucky that Spectre was found about the same time, and they love to lump the issues together to bring AMD down with them.

-

@sockpuppet7

Of the two attacks, it seems to me that reading cache that you intentionally populated via API calls to the victim process is more "valuable" to an attacker, since he's getting the data he specifically wants. Reading everything that happens to be in cache is not good, but it's not necessarily valuable, either, depending on what the other processes on the system are doing.

And while it's possible that people just didn't release a POC or I didn't read widely enough to find one, I haven't seen any POCs for using Meltdown to cross the guest -> hypervisor barrier. Whereas there are POCs out for using Spectre to read 12 kbps (1500 bytes/second) of hypervisor host cache from within a guest, and the contents of that memory are at least somewhat controlled by the attacker, since the attacker is using API calls to the victim process to dictate what gets read into that cache.
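
For scale, the figures from the quoted digest (over 1500 bytes/second, after a 30 minute initialization) can be turned into rough dump times. This is only a back-of-the-envelope Python sketch: the read sizes are arbitrary examples, the function names are made up, and the rate is treated as exactly 1500 bytes/second.

```python
def bits_per_second(bytes_per_second: float) -> float:
    """Convert a byte rate to a bit rate."""
    return bytes_per_second * 8


def total_seconds(num_bytes: int,
                  bytes_per_second: float = 1500,
                  setup_seconds: float = 30 * 60) -> float:
    """Setup time plus transfer time for reading num_bytes at the given rate."""
    return setup_seconds + num_bytes / bytes_per_second


print(bits_per_second(1500) / 1000, "kbit/s")
for label, size in (("4 KiB", 4 * 1024), ("1 MiB", 1024 ** 2)):
    print(label, round(total_seconds(size) / 60, 1), "minutes")
```

For small reads the 30 minute setup dominates, so the attack favors going after a few specific secrets rather than dumping memory wholesale.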

-

This shitstorm just keeps on giving. Now people are claiming Red Hat is having trouble with updates as well (buried in the middle of the article).

And, of course, IBM is reportedly being a dinosaur.

-

@sockpuppet7 And yet Intel's hate for Nvidia makes them able to cooperate with AMD to get a GPU from them, despite being bitter rivals on the CPU side. It's fascinating.

-

@blek

Almost like El Reg breaking the embargo caused the patching efforts to be forced to the forefront without full testing.

Granted, it's looking more and more likely that if El Reg hadn't caused the shitstorm, the vulnerability would have been an unpublic 0-day for quite a while more, since vendors almost certainly wouldn't have been ready to go by January 9th and would have probably pushed the coordinated disclosure date back further (after all, if 6 months and 3 weeks isn't enough to fix things cleanly, 7 months isn't going to be either...)

-

@blek Suddenly, not getting that job at IBM seems like it was even more good luck for me. Although the IBM hiring process was a bit :wtf:-y and really not adapted for the Swedish job market. Even my sister thought the questions asked (that I failed on) were completely retarded.

-

@thecpuwizard said in Intel making us slow down:

@scholrlea said in Intel making us slow down:

At this point, the ~~ARM~~ MOS6502 is looking better and better...

/me starting looking up prices for FPGAs and textbooks on Verilog

I still prefer the RCA-1802 over the 6502 :) :)

After all, how else can you have a Cosmic Elf?

Side note: one of my friends in Berkeley was a semi-retired engineer whose screen name is 'Cmdr COSMAC'. Despite this, I am pretty sure that it has been a long time since he's worked on one. I did email him to mention the Membership Card and ask him what he thought of it, but I didn't hear back from him.

-

@scholrlea - Glad at least one person caught the Cosmic/Cosmac alliteration!

The architecture of the 1802 was quite unique [though the TI 9900 did adopt some of the features]. Still fun to play with the old toys - remember how much we actually did with so little....

-

@thecpuwizard said in Intel making us slow down:

@pjh said in Intel making us slow down:

@izzion quoted in Intel making us slow down:

It is not based on a bug per say

:twitch:

A twitch is insufficient.

Which is why they created ~~Beam.pro~~ Mixer??

Mysteriously, Solar Activity Found to Influence Behavior of Radioactive Materials On Earth


Warning: Microsoft's Meltdown and Spectre patch is bricking some AMD PCs
