Unit testing

-

So... I'll admit it, I've never done unit testing before.

Now I'm managing an app we've inherited that has a Flex frontend and a Java backend. The existing unit tests (for the frontend, at least) use RIATest, which until recently you could download a trial of. You could not, however, purchase a license, and now the website says it's EOL.

I assume nobody here uses Flex, but I thought I'd ask anyway whether anyone knows what we could migrate to. We're supposedly planning a full frontend rewrite, so maybe we should look into buying someone else's RIATest license until we can do the rewrite.

For the backend we use JUnit, but again I'm not too familiar with it. The last time I tried to run the tests, they failed, so I'm not sure how much coverage there is.

Can someone point me in the right direction?

-

What's your question, just general information?

I'd only be familiar with the backend side of things, wherein unit testing ought to be rather simple, regardless of the test library. You just try to exercise all the code paths you can, with expectations of what those paths do, in the smallest possible segments.
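To make that concrete, here's a minimal sketch in Python (only because your services use it; a JUnit test has the same shape). The function `percent_change` is hypothetical, invented for illustration: it has two code paths, and there's one small test per path, each stating the expected result. The `test_` functions can be collected by pytest (assuming it's installed) or simply called directly.

```python
from typing import Optional


def percent_change(old: float, new: float) -> Optional[float]:
    """Hypothetical reporting helper: percentage change from old to new.

    Two code paths: a zero baseline (undefined, returns None) and a
    non-zero baseline (normal calculation).
    """
    if old == 0:
        return None  # avoid dividing by zero
    return (new - old) / old * 100.0


# One small test per code path, each with an explicit expectation.
def test_increase():
    assert percent_change(50, 75) == 50.0


def test_decrease():
    assert percent_change(200, 150) == -25.0


def test_zero_baseline_returns_none():
    assert percent_change(0, 10) is None
```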

-

@Magus said in Unit testing:

What's your question, just general information?

I'd only be familiar with the backend side of things, wherein unit testing ought to be rather simple, regardless of the test library. You just try to exercise all the code paths you can, with expectations of what those paths do, in the smallest possible segments.

I guess I'm just not sure how any of this works. This is mainly a reporting application. Users upload their data, we process it and show a bunch of graphs and data grids. How do you test something like this? A lot of the logic happens in stored procedures, so I guess the backend should be pretty lightweight, but how do you test things without calling the database? How do you test data uploads?

We have some Python services too that I would love to get unit tests for. One of the problems with this is that there's some randomness to the output, so you can't just feed it some data and expect the same result every time.

-

@dangeRuss If you're looking for general advice, I refer you to @Yamikuronue, the Dominatrix of Debugging who's whipped us SockDevs into shape when it comes to testing stuff.

-

@dangeRuss In your first case, you may well have very little worth testing. You may need to mock out the database somehow, redirecting calls to it to some code in your test, so you can test the non-database code in isolation. I don't know how that works in Java, but there has to be something like it, and presumably your database context is some kind of object you can replace with a fake.

As for the Python stuff: usually you'd want to try to redirect calls to the random bit to something consistent, since presumably the thing creating the randomness is something you trust, just like the database. Then your actual logic ought to be testable.

Ideally you'd write code so that things like that are easy to redirect, but that's often not the case, so people have created all sorts of libraries to try to help you with that, but my knowledge of those particular libraries is limited to C#.
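A minimal Python sketch of that redirection, assuming the randomness comes from an object with a `random()` method (which `random.Random` instances have). `pick_sample` and `FixedRandom` are hypothetical names: production code would pass a real generator, while the test passes a fake that replays predetermined values, so the logic around the randomness becomes deterministic.

```python
from typing import Protocol, Sequence


class RandomSource(Protocol):
    """Anything with a random() method returning a float in [0, 1)."""

    def random(self) -> float: ...


def pick_sample(rows: Sequence[str], rng: RandomSource) -> str:
    """Pick one row 'at random' using the injected randomness source."""
    index = int(rng.random() * len(rows))
    return rows[index]


class FixedRandom:
    """Test double: replays predetermined values instead of real randomness."""

    def __init__(self, values):
        self._values = iter(values)

    def random(self) -> float:
        return next(self._values)


def test_pick_sample_uses_injected_randomness():
    rows = ["a", "b", "c", "d"]
    fake = FixedRandom([0.0, 0.5, 0.99])
    assert pick_sample(rows, fake) == "a"  # 0.0 * 4 -> index 0
    assert pick_sample(rows, fake) == "c"  # 0.5 * 4 -> index 2
    assert pick_sample(rows, fake) == "d"  # 0.99 * 4 -> index 3


# In production, pass a real generator instead:
#     pick_sample(rows, random.Random())
```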

-

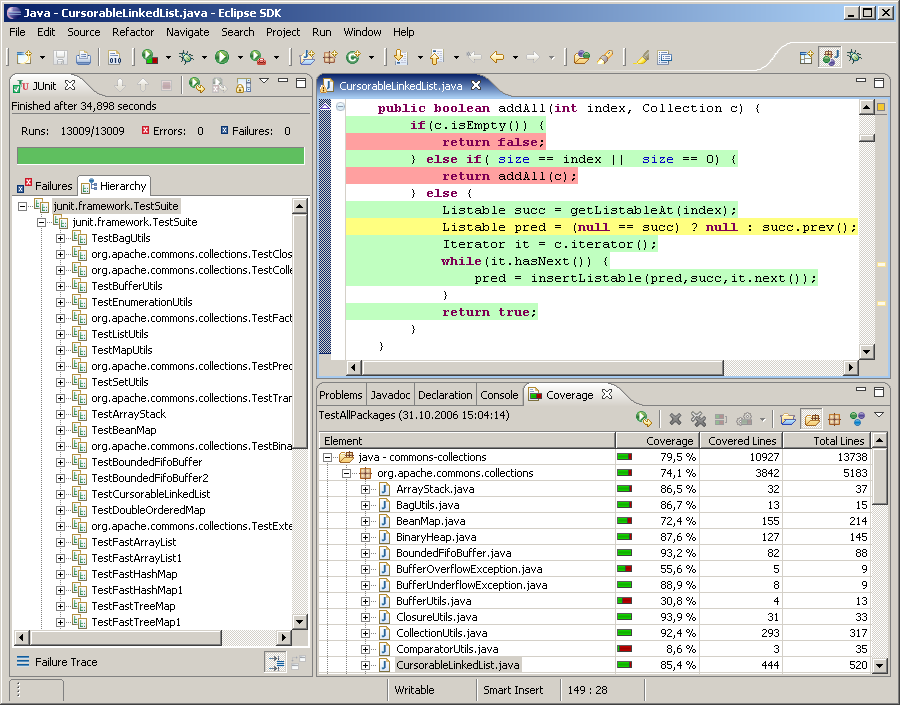

@Magus I saw some really cool stuff in VS 2017 in regards to unit testing (showing which lines were covered by tests). That looked pretty cool.

I think there's some want to move to C#, but I'm not sure how to do that piecemeal since the existing backend is java. I was thinking redoing some of the backend in ColdFusion since I'm more familiar with that and it integrates better with java, but there's not a lot of excitement about CF here.

-

@dangeRuss said in Unit testing:

The existing unit tests (for the front end at least) use RIATest

This looks like GUI automation, doesn't it? Not quite unit testing.

@dangeRuss said in Unit testing:

I guess I'm just not sure how any of this works. This is mainly a reporting application. Users upload their data, we process it and show a bunch of graphs and data grids. How do you test something like this? A lot of the logic happens in stored procedures, so I guess the backend should be pretty lightweight, but how do you test things without calling the database?

The general idea is that you take a unit of code and rip out anything above and below it - the test code replaces the caller, and the mocks replace the dependencies.

So you could unit test whether the backend services call the right stored procedures in the right order, and if there's some logic in them you can test that too by mocking the stored procedures.
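A minimal Python sketch of that idea using the standard library's `unittest.mock`. `ReportService`, `call_procedure`, and the `sp_*` procedure names are all hypothetical; the point is that the test hands the service a `Mock` in place of the database, then checks which procedures were called and in what order, with no database running. In Java the same pattern is usually done with a mocking library such as Mockito.

```python
from unittest.mock import Mock, call


class ReportService:
    """Hypothetical backend service that delegates work to stored procedures."""

    def __init__(self, db):
        self.db = db  # any object with call_procedure(name, *args)

    def process_upload(self, upload_id):
        self.db.call_procedure("sp_validate_upload", upload_id)
        rows = self.db.call_procedure("sp_load_upload", upload_id)
        if not rows:
            return "empty"  # logic worth testing: skip the report step
        self.db.call_procedure("sp_build_report", upload_id)
        return "ok"


def test_calls_procedures_in_order():
    db = Mock()
    db.call_procedure.return_value = [("row",)]  # every fake sproc returns a row
    assert ReportService(db).process_upload(42) == "ok"
    assert db.call_procedure.call_args_list == [
        call("sp_validate_upload", 42),
        call("sp_load_upload", 42),
        call("sp_build_report", 42),
    ]


def test_skips_report_for_empty_upload():
    db = Mock()
    # The fake load procedure returns no rows; the others return None.
    db.call_procedure.side_effect = (
        lambda name, *args: [] if name == "sp_load_upload" else None
    )
    assert ReportService(db).process_upload(7) == "empty"
    names = [c.args[0] for c in db.call_procedure.call_args_list]
    assert "sp_build_report" not in names
```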

You can also unit test the stored procedures (although that might be a little difficult depending on your tooling) - you'd put the fake data in the tables, then call each sproc and verify that whatever is returned matches your expectations.

@dangeRuss said in Unit testing:

We have some Python services too that I would love to get unit tests for. One of the problems with this is that there's some randomness to the output, so you can't just feed it some data and expect the same result every time.

This usually calls for a refactor in which you pass a randomness source to the methods as a dependency. So the production code will give the method a `new Random()` (or however you do it in Python), but the unit test will give it a fake randomizer that actually produces predetermined values.

@dangeRuss said in Unit testing:

I saw some really cool stuff in VS 2017 in regards to unit testing (showing which lines were covered by tests).

I'm pretty sure you can do coverage analysis in Java as well.

@dangeRuss said in Unit testing:

I was thinking redoing some of the backend in ColdFusion since I'm more familiar with that and it integrates better with java, but there's not a lot of excitement about CF here.

From Java to ColdFusion? I cannot recommend that and still sleep well at night.

-

@Maciejasjmj The coverage thing in VS2017 Enterprise is similar to NCrunch, where it actively runs the tests and shows success or failure on every covered line, while you work.

But I definitely don't want to sidetrack this, you can test well without that for sure.

-

@Magus said in Unit testing:

But I definitely don't want to sidetrack this, you can test well without that for sure.

Yeah, the live tests are a nice-to-have, but it's not something you particularly miss unless you're doing straight TDD.

-

@dangeRuss said in Unit testing:

So... I'll admit it, I've never done unit testing before.

Good, then your mind isn't poisoned by preconceived notions.

Unit testing does have some benefits, but it also has costs, and in most situations the costs far outweigh the benefits.

Can someone point me in the right direction?

Before asking "how should I do unit testing?" you really ought to ask "should I do unit testing?" And if you're not in a very clear-cut technical situation where the intended functionality of your code can be easily and unambiguously specified in code that's not likely to change in the foreseeable future, the answer is probably "no".

-

If you're not a test absolutist, you don't HAVE to test everything.

I usually cover my most functional "core" code and money-related stuff first, then expand from there.

If you have a lot of functionality in the DB, maybe you should focus more on integration tests. Those would involve calling your Java code and the test database together.

-

@masonwheeler said in Unit testing:

Before asking "how should I do unit testing?" you really ought to ask "should I do unit testing?"

To clarify what @masonwheeler probably meant: You should always test each functionality (and cover the edge cases), but in many cases mocking one layer is too much work, especially if the interface between the two layers isn't stable (yet). Sometimes, integration tests / functional tests are more appropriate than unit tests.

-

@asdf said in Unit testing:

@masonwheeler said in Unit testing:

Before asking "how should I do unit testing?" you really ought to ask "should I do unit testing?"

To clarify what @masonwheeler probably meant: You should always test each functionality (and cover the edge cases), but in many cases mocking one layer is too much work, especially if the interface between the two layers isn't stable (yet). Sometimes, integration tests / functional tests are more appropriate than unit tests.

Judging by the further explanation (that unit tests are only sensible when your requirements are clear cut), that's a problem that all kinds of tests have, whether it's unit tests, integration tests, or full end to end tests.

You might not need to decouple every single pair of classes (especially when dealing with legacy cruft), but you probably should make an effort to decouple layers of code - while integration tests are nice to write, they take longer to run and require a full deployment, limiting their uses. UI automation in particular can take several hours to run a regression suite even with relatively few tests.

-

@Maciejasjmj said in Unit testing:

Judging by the further explanation (that unit tests are only sensible when your requirements are clear cut), that's a problem that all kinds of tests have, whether it's unit tests, integration tests, or full end to end tests.

It's also not a valid reason for not writing tests, because those tests at least document what you've implemented, and you can immediately tell whether you need to change something when the spec becomes more strict in the future or when the customer starts having issues. Sometimes, the process of writing tests for legacy code also helps you figure out what exactly you've implemented in the past (including all quirks), which is definitely a good thing.
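Tests written this way are often called characterization tests: you run the existing code, record what it actually does (quirks included), and pin that down before changing anything. A minimal Python sketch, where `legacy_format_amount` is a hypothetical stand-in for inherited code; the expected values are simply what this sketch produces, not a spec.

```python
def legacy_format_amount(cents):
    """Hypothetical inherited, undocumented formatter for money amounts."""
    if cents < 0:
        return "(" + legacy_format_amount(-cents) + ")"
    return "$" + str(cents // 100) + "." + str(cents % 100).zfill(2)


def test_characterize_legacy_format_amount():
    # Expected values recorded by running the existing code, quirks and all.
    assert legacy_format_amount(0) == "$0.00"
    assert legacy_format_amount(5) == "$0.05"
    assert legacy_format_amount(1234) == "$12.34"
    # Quirk: no thousands separator.
    assert legacy_format_amount(123456) == "$1234.56"
    # Quirk: negative amounts use accounting-style parentheses.
    assert legacy_format_amount(-250) == "($2.50)"
```

If a later change alters one of these outputs, the failing test tells you exactly which existing behavior you're about to change, and you can decide whether that's intended.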

-

@Magus said in Unit testing:

redirect calls to the random bit to something consistent

Could just seed the RNG with a known value for the purposes of testing (and that sort of testing is probably properly an integration test; unit tests ought to be just checking that basic functions do vaguely sane things). Doesn't matter too much what the results are so long as they're predictable.
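A minimal Python sketch of the seeding approach, assuming the service calls the module-level `random` functions directly (`add_noise` is hypothetical). Re-seeding before each run makes the output repeatable, and the test asserts properties of the result (same output for the same seed, noise within bounds) rather than hard-coding specific numbers, which would tie the test to the RNG implementation.

```python
import random


def add_noise(values):
    """Hypothetical service step that uses the module-level RNG directly."""
    return [v + random.uniform(-0.5, 0.5) for v in values]


def test_seeded_runs_are_reproducible():
    data = [10.0, 20.0, 30.0]
    random.seed(1234)
    first = add_noise(data)
    random.seed(1234)
    second = add_noise(data)
    assert first == second  # same seed, same "random" output
    # Check properties rather than exact values.
    assert all(abs(n - v) <= 0.5 for n, v in zip(first, data))
```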

-

@Maciejasjmj said in Unit testing:

[integration tests] require a full deployment

Not necessarily. Sometimes you can mock key external parts like a database while still keeping all the internal interactions in the application itself, and so still get a sane integration test without everything being present. You'll still need to check that the interaction with a real database works as well, but that can be done in a separate integration test.

Also, one man's integration test is often another's unit test. It depends on your viewpoint…

-

@asdf said in Unit testing:

To clarify what @masonwheeler probably meant

I don't think that's what he meant....

-

@Maciejasjmj said in Unit testing:

This looks like GUI automation, doesn't it? Not quite unit testing.

GUIs are insanely difficult to unit test; GUI unit tests end up incredibly sensitive to their environment (e.g., exact behaviour of the OS's window manager, what exact fonts you have installed, what size of monitor you've got plugged in). Ideally, you use a GUI toolkit library that's already been heavily tested (i.e., most common ones) and focus instead mostly on the API of service calls that the GUI uses to populate and update its models.

As a bonus, that API is usually also the same one that you need if you want to port to running with a different front end (such as going from a desktop app to a web app, or switching to a new platform).

-

@dkf said in Unit testing:

GUIs are insanely difficult to unit test

To the point of it usually not being worth it. The UI is going to be the piece that manual testers, UA testers, and customers hammer the most; it's usually worth focusing your programmer-facing testing on looking for subtler bugs (like mafia commands not working in chat) and trusting the process to catch higher-level ones (like typos in the command names).

-

@asdf said in Unit testing:

To clarify what @masonwheeler probably meant: You should always test each functionality (and cover the edge cases), but in many cases mocking one layer is too much work, especially if the interface between the two layers isn't stable (yet). Sometimes, integration tests / functional tests are more appropriate than unit tests.

To clarify what I actually meant: in most cases, it's better to have a person familiar with the software manually test your code. Automating something and getting it right is many, many times harder than doing it right manually (a principle I'm sure we're all familiar with, as that's exactly what writing code is!), and incorrect automated tests are worse than no automated tests at all, because there's a psychological tendency to see tests as authoritative, for example when you said:

@asdf said in Unit testing:

It's also not a valid reason for not writing tests, because those tests at least document what you've implemented, and you can immediately tell whether you need to change something when the spec becomes more strict in the future or when the customer starts having issues.

You've made those tests canonical, even though they're just fallible code written by fallible coders, the same as the entire rest of your codebase. And that's dangerous.

Also, not only are automated tests as fallible as any other code you write, they're also inflexible and lacking any human sense of intuition that a tester would have. And that's also dangerous:

Joel Spolsky said in Talk at Yale, Part 1:

This seems like a kind of brutal example, but nonetheless, this search for the holy grail of program quality is leading a lot of people to a lot of dead ends. The Windows Vista team at Microsoft is a case in point. Apparently—and this is all based on blog rumors and innuendo—Microsoft has had a long term policy of eliminating all software testers who don’t know how to write code, replacing them with what they call SDETs, Software Development Engineers in Test, programmers who write automated testing scripts.

The old testers at Microsoft checked lots of things: they checked if fonts were consistent and legible, they checked that the location of controls on dialog boxes was reasonable and neatly aligned, they checked whether the screen flickered when you did things, they looked at how the UI flowed, they considered how easy the software was to use, how consistent the wording was, they worried about performance, they checked the spelling and grammar of all the error messages, and they spent a lot of time making sure that the user interface was consistent from one part of the product to another, because a consistent user interface is easier to use than an inconsistent one.

None of those things could be checked by automated scripts. And so one result of the new emphasis on automated testing was that the Vista release of Windows was extremely inconsistent and unpolished. Lots of obvious problems got through in the final product… none of which was a “bug” by the definition of the automated scripts, but every one of which contributed to the general feeling that Vista was a downgrade from XP. The geeky definition of quality won out over the suit’s definition; I’m sure the automated scripts for Windows Vista are running at 100% success right now at Microsoft, but it doesn’t help when just about every tech reviewer is advising people to stick with XP for as long as humanly possible. It turns out that nobody wrote the automated test to check if Vista provided users with a compelling reason to upgrade from XP.

There are places where automated tests are helpful. I use them extensively in compiler work, where you can unambiguously say "per the language rules, code X should produce output Y, and if it doesn't then something is broken." But overall, such cases tend to be the exception, not the rule.

-

@dangeRuss

I'm only familiar with Selenium for browser UI testing. It can fill out and submit forms, upload files, and check that the proper elements are displayed. Five minutes of googling didn't turn up any recent info about testing Flex with it, just an old project on Google Code.

If most of your logic is in the stored procedures, then that's where you should focus your tests. Set up a test DB, fill it with data, and run the procedures on that.

Testing that the graphs correspond to the actual data is something I'd leave for manual testing.

@dangeRuss said in Unit testing:

One of the problems with this is that there's some randomness to the output, so you can't just feed it some data and expect the same result every time.

Do you know where the randomness comes from? Can you seed it with a predetermined value so that you get consistent values in the output?

-

@Yamikuronue said in Unit testing:

@dkf said in Unit testing:

GUIs are insanely difficult to unit test

To the point of it usually not being worth it. The UI is going to be the piece that manual testers, UA testers, and customers hammer the most; it's usually worth focusing your programmer-facing testing on looking for subtler bugs (like mafia commands not working in chat) and trusting the process to catch higher-level ones (like typos in the command names).

This may work great when you actually have a QA department. When you have two devs for the whole project, and no QA, it's useful to know that whatever you just changed didn't break something else. And if it does, and is not covered by a test, then you add the test.

I think that's what I want to do. Get some kind of framework going and whenever shit breaks, just add some kind of test to cover that for next time.
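That workflow is usually called regression testing. A minimal Python sketch, assuming a hypothetical `parse_upload` function and a hypothetical bug where blank lines in an upload caused a crash: the fix goes into the code, and a test that reproduces the original failing input goes in alongside it, so the bug can't quietly come back.

```python
def parse_upload(text):
    """Hypothetical upload parser: 'name,value' lines -> list of (name, float)."""
    rows = []
    for line in text.splitlines():
        if not line.strip():
            continue  # the fix: blank lines (e.g. trailing newlines) used to crash
        name, value = line.split(",", 1)
        rows.append((name.strip(), float(value)))
    return rows


def test_regression_blank_lines_are_ignored():
    # Added after the (hypothetical) bug report: uploads with blank lines failed.
    assert parse_upload("a,1\n\nb,2.5\n\n") == [("a", 1.0), ("b", 2.5)]
```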

-

@homoBalkanus said in Unit testing:

@dangeRuss said in Unit testing:

One of the problems with this is that there's some randomness to the output, so you can't just feed it some data and expect the same result every time.

Do you know where the randomness comes from? Can you seed it with a predetermined value so that you get consistent values in the output?

This is actually a great idea, one that's already been mentioned a few times in this thread. I think I will look into a way to seed the randomizer when we unit test. It will probably help for development as well, so you can see how your changes affect the data without being muddled by the randomizer.

-

@cartman82 said in Unit testing:

If you have a lot of functionality in the DB, maybe you should focus more on integration tests. Those would involve calling your Java code and the test database together.

I think we already have something like that. I guess I should upload some test data, let it process and then code the tests around that.

How are integration tests different than unit tests? Do you still use the same tools for both?

-

@dangeRuss Yeah, but the thing about UI tests is, they're going to break. Often. And weirdly. You spend a lot of time trying to keep those in good repair if they're your primary diagnostic tool. Lower-level tests may not cover everything, but they're much more reliable, and you can hit that 80% mark.

-

@dangeRuss said in Unit testing:

I think we already have something like that. I guess I should upload some test data, let it process and then code the tests around that.

How are integration tests different than unit tests? Do you still use the same tools for both?

Unit tests are easier, since they have a simpler toolchain: a mocking library and an assertion library, that's it.

With integration tests, on top of those two, you also need a way to manipulate the database. Some DBs support an in-memory mode, which is useful for testing. If not, you need a way to quickly seed and reset the tables you'll be using.

We are talking about DML here. DDL changes are done through migrations, the usual way.
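A minimal Python sketch of the in-memory approach, using the standard library's `sqlite3` with a `:memory:` database. The schema, `total_by_customer`, and `fresh_db` are hypothetical. Each test builds and seeds its own throwaway database, so there's nothing to reset afterwards. One caveat: SQLite is not your production database (different SQL dialect, no stored procedures), so this exercises your own SQL-calling code, and you'd still want some tests against a real test instance.

```python
import sqlite3

SCHEMA = "CREATE TABLE uploads (customer TEXT, amount REAL)"


def total_by_customer(conn):
    """Hypothetical data-access code under test: runs real SQL."""
    cur = conn.execute(
        "SELECT customer, SUM(amount) FROM uploads "
        "GROUP BY customer ORDER BY customer"
    )
    return cur.fetchall()


def fresh_db(rows):
    """Create a throwaway in-memory database seeded with test rows."""
    conn = sqlite3.connect(":memory:")
    conn.execute(SCHEMA)
    conn.executemany("INSERT INTO uploads VALUES (?, ?)", rows)
    conn.commit()
    return conn


def test_totals_are_grouped_per_customer():
    conn = fresh_db([("acme", 10.0), ("acme", 5.5), ("globex", 2.0)])
    try:
        assert total_by_customer(conn) == [("acme", 15.5), ("globex", 2.0)]
    finally:
        conn.close()  # the in-memory DB disappears: that's the "reset"
```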

This guy is a bit of a testing taliban, but makes a good video about the differences.

-

This is a simpler demonstration:

https://twitter.com/thepracticaldev/status/687672086152753152

-

@dangeRuss said in Unit testing:

How are integration tests different than unit tests? Do you still use the same tools for both?

It isn't entirely a firm barrier. Generally, unit tests test very isolated pieces of code, while integration tests run multiple parts of the system together. Unit tests are supposed to have as few dependencies as possible so that they always run fast. Database connections, network connections, anything that goes outside the box or might take time to run should be mocked out. Integration tests usually allow actual DB calls to go to databases and network calls to go out to their servers and be responded to normally.

Usually the trick with unit testing is to mock out as many things as possible. Often half the battle is getting your code to a state where that's practical to do. You may want to start out writing integration tests just to keep things sane while you refactor to make it possible to write more narrow tests.

-

If only twitter and youtube weren't blocked at work....

-

And +1 to automated tests of UIs being dicey. At best, you can identify buttons to click and fields to type into and such by specific identifiers you created, like HTML element id. That helps you verify the basic functionality, but you can't really verify that things look right and are in the right places and also have tests that don't break all the time.

-

@UndergroundCode said in Unit testing:

And +1 to automated tests of UIs being dicey. At best, you can identify buttons to click and fields to type into and such by specific identifiers you created, like HTML element id. That helps you verify the basic functionality, but you can't really verify that things look right and are in the right places and also have tests that don't break all the time.

I worked at one place that took screen shots and compared them to existing ones. If they were too different (using some fuzzy factor), we got a bug. Which we promptly kicked back with 'update your tests, UI changed per bug####'. :grrrr:
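A rough Python sketch of that kind of fuzzy comparison, assuming the screenshots have already been decoded into equally sized 2-D lists of grayscale values (0 to 255). The function names and both thresholds are hypothetical: a pixel counts as changed only if it differs by more than `pixel_tolerance`, and two images match if at most `max_fraction` of their pixels changed. Real tools typically work on image files through an imaging library, but the thresholding idea is the same.

```python
def fraction_different(a, b, pixel_tolerance=10):
    """Fraction of pixels whose grayscale values differ by more than pixel_tolerance."""
    if len(a) != len(b) or any(len(ra) != len(rb) for ra, rb in zip(a, b)):
        raise ValueError("screenshots must have the same dimensions")
    total = sum(len(row) for row in a)
    if total == 0:
        return 0.0  # two empty images are trivially identical
    changed = sum(
        1
        for ra, rb in zip(a, b)
        for pa, pb in zip(ra, rb)
        if abs(pa - pb) > pixel_tolerance
    )
    return changed / total


def screenshots_match(a, b, max_fraction=0.01, pixel_tolerance=10):
    """True if the new screenshot is 'close enough' to the baseline."""
    return fraction_different(a, b, pixel_tolerance) <= max_fraction
```

The design trade-off is exactly the one described above: loose thresholds miss real regressions, while tight ones flag every intentional UI change as a bug.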

-

@masonwheeler said in Unit testing:

But overall, such cases tend to be the exception, not the rule.

This is the one point where I'd disagree. It's more that there are many aspects to ensuring that systems work as desired. Automated testing can handle some of those, not all. But getting people to do testing isn't a replacement either.

Things that are not immediately user-facing need as much automated testing as possible (whether unit tests, integration tests, or whatever) since that needs to be a strong foundation on which the user-facing parts can be built. User-facing parts need testing by people since that's literally their purpose. The fly in the ointment is the UI toolkits that are used to make user-facing programs; those need both kinds of testing and are PITAs because of it. But most people don't write them (understandably, as they're really difficult to do a good job of).

I've seen the attitude from GUI programmers that their type of programming is the only kind that matters (@blakeyrat was a proponent of this) and it's dead wrong. The back end stuff also matters, as it is what makes the GUI into more than just a Hollywood Western shop frontage. Users want to walk into the livery store, the barber's and the saloon and do business there.

-

@dcon said in Unit testing:

I worked at one place that took screen shots and compared them to existing ones. If they were too different (using some fuzzy factor), we got a bug. Which we promptly kicked back with 'update your tests, UI changed per bug####'. :grrrr:

An automated test breaking isn't necessarily a problem. It just flags up that something changed. It's up to you to decide what to do about it.

-

@dkf said in Unit testing:

I've seen the attitude from GUI programmers that their type of programming is the only kind that matters (@blakeyrat was a proponent of this)

I don't think that's what he used to argue. He was more a proponent of the back end being important as a foundation for a good UI. Something with a solid core but an unusable front end is terrible software, as is something with a brilliant front end on a shitty engine.

-

@dkf said in Unit testing:

@dcon said in Unit testing:

I worked at one place that took screen shots and compared them to existing ones. If they were too different (using some fuzzy factor), we got a bug. Which we promptly kicked back with 'update your tests, UI changed per bug####'. :grrrr:

An automated test breaking isn't necessarily a problem. It just flags up that something changed. It's up to you to decide what to do about it.

I am quite glad that nowadays we version and branch tests with the product. If your project/bugfix causes test failures, the required test updates are reviewed and committed along with the fix.

In the past we had no separation between build infrastructure code (which could not be versioned/branched because there was only one box) and test code (which could apply to different versions). This was considered a feature at the time because a test that passed on one version should pass unmodified on another newer version. This did not work out in practice.

-

@Jaloopa said in Unit testing:

@dkf said in Unit testing:

I've seen the attitude from GUI programmers that their type of programming is the only kind that matters (@blakeyrat was a proponent of this)

I don't think that's what he used to argue. He was more a proponent of the back end being important as a foundation for a good UI. Something with a solid core but an unusable front end is terrible software, as is something with a brilliant front end on a shitty engine.

No, I'm with @dkf on this one. I think he would secretly agree with what you're saying, but he always went way overboard the other direction about this because he perceived that people were too far on the other side (unit testing, etc, instead of testing the interface, etc).