Discussion of NodeBB Updates

-

@sockpuppet7 said in Discussion of NodeBB Updates:

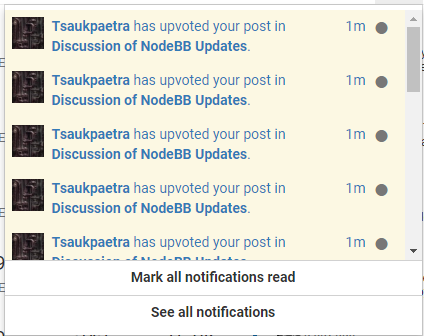

it would be cool if the first upvote didn't trigger a notification

You can set your settings to fix that. Or convince @Tsaukpaetra to stop liking every goddamned thing.

-

@sockpuppet7 said in Discussion of NodeBB Updates:

or maybe, just create a special rule for people that use upvote for marking shit as read

I'm sure you could modify @anotherusername's userscript that ignores likes from the likes topic so it ignores them from specific users instead

-

@boomzilla The 1st, 5th, 10th, ... option is almost perfect, but perfect would be to start at 5th.
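To make the request concrete, here is a minimal Python sketch of the two rules being compared. It is not NodeBB's code: `should_notify`, `thresholds` and `start_at` are hypothetical names, and the threshold tuple lists only the three counts named above, since the post does not say how the sequence continues past the 10th upvote.

```python
from typing import Iterable


def should_notify(upvote_count: int, thresholds: Iterable[int], start_at: int = 1) -> bool:
    """Return True if reaching `upvote_count` upvotes should raise a notification.

    `thresholds` are the counts that trigger a notification; `start_at`
    suppresses every threshold below it (a hypothetical knob for the request above).
    """
    return upvote_count >= start_at and upvote_count in set(thresholds)


# Only the counts named in the post; the real option continues past 10.
NAMED_THRESHOLDS = (1, 5, 10)

existing_rule = [n for n in range(1, 11) if should_notify(n, NAMED_THRESHOLDS)]
requested_rule = [n for n in range(1, 11) if should_notify(n, NAMED_THRESHOLDS, start_at=5)]
print(existing_rule)
print(requested_rule)
```

With `start_at=5` the first upvote stays silent while the later thresholds behave as before.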

-

@sockpuppet7 PRs not explicitly discouraged.

-

@boomzilla but that would be work

-

@sockpuppet7 maybe just turn your upvote notifications off?

-

Speaking of annoying vote related things, can we get rid of the Votes column on the topic list?

-

@polygeekery but I want my notifications, I just don't want it for all my posts

-

@sockpuppet7 said in Discussion of NodeBB Updates:

@boomzilla but that would be work

Yes, plus it just feels wrong to actively discourage people from trying to improve software.

-

@boomzilla I found there are 2 places for setting the upvote notification, but one of them is buggy. I posted it in the bug category on this forum.

-

@hungrier said in Discussion of NodeBB Updates:

Speaking of annoying vote related things, can we get rid of the Votes column on the topic list?

Add this to your custom CSS setting

.stats { display: none; }

-

@jaloopa But that will also remove the posts and views columns, which are marginally useful.

-

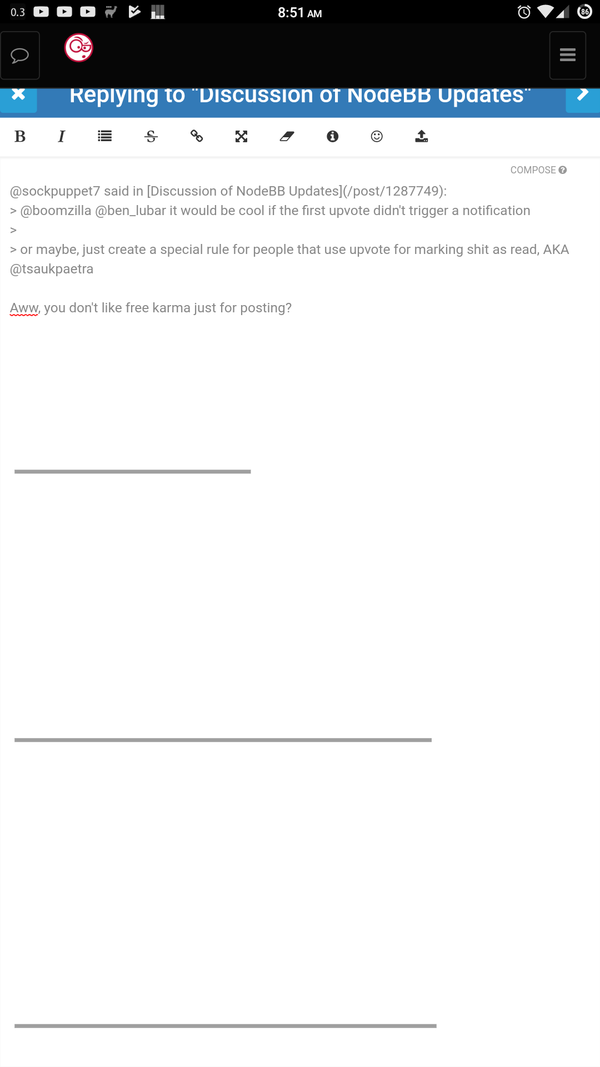

@sockpuppet7 said in Discussion of NodeBB Updates:

@boomzilla @ben_lubar it would be cool if the first upvote didn't trigger a notification

or maybe, just create a special rule for people that use upvote for marking shit as read, AKA @tsaukpaetra

Aww, you don't like free karma just for posting?

-

@sockpuppet7 said in Discussion of NodeBB Updates:

Feel free to ignore me.

Side note: Chrome is getting really weird...

-

@sockpuppet7 said in Discussion of NodeBB Updates:

He uses upvotes as post-read reminders. It can get annoying.

-

@pleegwat said in Discussion of NodeBB Updates:

@sockpuppet7 said in Discussion of NodeBB Updates:

He uses upvotes as post-read reminders. It can get annoying.

Until I have the unread bookmark thing not getting updated when I accidentally click a mention further in the topic than I've read, the upvotes will continue.

-

@polygeekery said in Discussion of NodeBB Updates:

@tsaukpaetra said in Discussion of NodeBB Updates:

Feel free to ignore me.

I usually do.

Isn't it liberating?

-

@tsaukpaetra

insert standard open source software comment regarding inquiries to merge code to an upstream location

-

@tsaukpaetra said in Discussion of NodeBB Updates:

@pleegwat said in Discussion of NodeBB Updates:

@sockpuppet7 said in Discussion of NodeBB Updates:

He uses upvotes as post-read reminders. It can get annoying.

Until I have the unread bookmark thing not getting updated when I accidentally click a mention further in the topic than I've read, the upvotes will continue.

can't you do that with downvotes?

-

@sockpuppet7 said in Discussion of NodeBB Updates:

@tsaukpaetra said in Discussion of NodeBB Updates:

@pleegwat said in Discussion of NodeBB Updates:

@sockpuppet7 said in Discussion of NodeBB Updates:

He uses upvotes as post-read reminders. It can get annoying.

Until I have the unread bookmark thing not getting updated when I accidentally click a mention further in the topic than I've read, the upvotes will continue.

can't you do that with downvotes?

I like to be a source of positivity most of the time. There's just not a lot of that in places like these....

-

@tsaukpaetra said in Discussion of NodeBB Updates:

I like to be a source of positivity most of the time.

I don't think it's working.

-

@tsaukpaetra said in Discussion of NodeBB Updates:

I like to be a source of positivity most of the time. There's just not a lot of that in places like these....

We are the wretched hive of scum and villainy. You are looking for the Tony Robbins conference. That is down the hall and to the left.

-

@polygeekery said in Discussion of NodeBB Updates:

We are the wretched hive of scum and villainy.

Who is "we"?

-

@boomzilla said in Discussion of NodeBB Updates:

@tsaukpaetra said in Discussion of NodeBB Updates:

I like to be a source of positivity most of the time.

I don't think it's working.

I never made claims on the efficacy of said source. ;)

-

@sockpuppet7 said in Discussion of NodeBB Updates:

@polygeekery said in Discussion of NodeBB Updates:

We are the wretched hive of scum and villainy.

Who is "we"?

I know, right? Hard to tell! Is it his employer? The voices in his head? Himself in the Royal We sense? So confusing!

-

Ok, here's the full log: https://what.thedailywtf.com/assets/uploads/nodebb-postgres-converter.txt

Here's my commentary:

22 minutes to copy MongoDB's `objects` collection to PostgreSQL:

    [2018-01-05 01:06:40.022] [WARN] There were 32587750 objects, but 32587528 were expected.
    [2018-01-05 01:06:40.055] [LOG] Copy objects: 1324717.592ms
    [2018-01-05 01:06:40.055] [LOG] Copy: 1324940.208ms

A little under 5 minutes to index the `objects` collection:

    [2018-01-05 01:07:38.477] [LOG] Create index on expireAt: 58412.510ms
    [2018-01-05 01:08:19.422] [LOG] Create unique index on key: 99360.930ms
    [2018-01-05 01:11:03.148] [LOG] Create index on key__score: 263090.055ms
    [2018-01-05 01:11:29.765] [LOG] Create unique index on key__value: 289703.448ms
    [2018-01-05 01:11:29.766] [LOG] Index: 289710.111ms

30 minutes to cluster `objects`, which I'm now wondering if I can skip entirely:

    [2018-01-05 01:11:29.809] [LOG] [DB] clustering "public.objects" using sequential scan and sort
    [2018-01-05 01:36:11.587] [LOG] [DB] "objects": found 0 removable, 32539545 nonremovable row versions in 918095 pages 0 dead row versions cannot be removed yet. CPU: user: 1203.01 s, system: 28.40 s, elapsed: 1481.51 s.
    [2018-01-05 01:41:14.110] [LOG] Cluster objects: 1784322.718ms
    [2018-01-05 01:41:14.528] [LOG] Cluster: 1784762.525ms

Analyze goes super fast as usual:

    [2018-01-05 01:41:14.551] [LOG] [DB] analyzing "public.objects"
    [2018-01-05 01:41:14.779] [LOG] [DB] "objects": scanned 30000 of 946485 pages, containing 1021425 live rows and 0 dead rows; 30000 rows in sample, 32529588 estimated total rows
    [2018-01-05 01:41:15.140] [LOG] Analyze objects: 589.077ms
    [2018-01-05 01:41:15.140] [LOG] Analyze: 611.336ms

A little over 6 minutes to split objects into the five different types:

    [2018-01-05 01:41:15.256] [LOG] Create type legacy_object_type: 46.543ms
    [2018-01-05 01:41:15.414] [LOG] Create table legacy_object: 157.497ms
    [2018-01-05 01:42:45.214] [LOG] Insert into legacy_object (zset): 89799.984ms
    [2018-01-05 01:47:24.526] [LOG] Insert into legacy_object (string, set, list, hash): 279312.032ms
    [2018-01-05 01:47:31.895] [LOG] Create index on expireAt: 7368.907ms
    [2018-01-05 01:47:31.933] [LOG] Objects: 376793.087ms

Creating empty tables is fast, as is to be expected:

    [2018-01-05 01:47:32.160] [LOG] Create table legacy_hash: 225.693ms
    [2018-01-05 01:47:32.251] [LOG] Create table legacy_zset: 91.401ms
    [2018-01-05 01:47:32.443] [LOG] Create table legacy_set: 191.377ms
    [2018-01-05 01:47:32.527] [LOG] Create table legacy_list: 82.687ms
    [2018-01-05 01:47:32.660] [LOG] Create table legacy_string: 132.988ms

Just under 28 minutes to convert the data into 5 tables:

I'm wondering if it would be faster to go from the `legacy_objects` table to find what type each value should be instead.

    [2018-01-05 01:47:46.084] [LOG] Insert into legacy_list: 13308.859ms
    [2018-01-05 01:48:56.377] [LOG] Insert into legacy_string: 83600.984ms
    [2018-01-05 01:52:03.929] [LOG] Insert into legacy_set: 271154.142ms
    [2018-01-05 02:04:32.947] [LOG] Insert into legacy_hash: 1020231.203ms
    [2018-01-05 02:07:55.165] [LOG] Insert into legacy_zset: 1222388.762ms
    [2018-01-05 02:09:08.129] [LOG] Create index on key__score: 72942.669ms
    [2018-01-05 02:09:08.129] [LOG] Convert: 1672988.663ms

A few simple things take just over 1 second:

    [2018-01-05 02:09:08.605] [LOG] Create view legacy_object_live: 372.743ms
    [2018-01-05 02:09:08.764] [LOG] Create table session: 531.513ms
    [2018-01-05 02:09:09.292] [LOG] Drop table objects: 1121.139ms
    [2018-01-05 02:09:09.292] [LOG] Cleanup: 1162.848ms

A little under 20 minutes to cluster the split tables:

    [2018-01-05 02:09:09.330] [LOG] Cluster legacy_hash: 36.327ms
    [2018-01-05 02:09:09.338] [LOG] Cluster legacy_set: 40.090ms
    [2018-01-05 02:09:09.339] [LOG] Cluster legacy_string: 37.262ms
    [2018-01-05 02:09:09.339] [LOG] Cluster legacy_list: 38.419ms
    [2018-01-05 02:09:09.339] [LOG] Cluster legacy_zset: 45.472ms
    [2018-01-05 02:09:09.340] [LOG] Cluster legacy_object: 46.165ms
    [2018-01-05 02:09:09.608] [LOG] [DB] clustering "public.legacy_list" using index scan on "legacy_list_pkey"
    [2018-01-05 02:09:09.614] [LOG] [DB] "legacy_list": found 0 removable, 0 nonremovable row versions in 0 pages 0 dead row versions cannot be removed yet. CPU: user: 0.00 s, system: 0.00 s, elapsed: 0.01 s.
    [2018-01-05 02:09:09.697] [LOG] [DB] clustering "public.legacy_string" using index scan on "legacy_string_pkey"
    [2018-01-05 02:09:09.747] [LOG] [DB] "legacy_string": found 0 removable, 1 nonremovable row versions in 1 pages 0 dead row versions cannot be removed yet. CPU: user: 0.00 s, system: 0.00 s, elapsed: 0.05 s.
    [2018-01-05 02:09:09.831] [LOG] [DB] clustering "public.legacy_set" using index scan on "legacy_set_pkey"
    [2018-01-05 02:09:26.808] [LOG] [DB] "legacy_set": found 0 removable, 4322085 nonremovable row versions in 31800 pages 0 dead row versions cannot be removed yet. CPU: user: 3.59 s, system: 0.82 s, elapsed: 16.97 s.
    [2018-01-05 02:09:33.215] [LOG] [DB] clustering "public.legacy_zset" using index scan on "legacy_zset_pkey"
    [2018-01-05 02:20:45.588] [LOG] [DB] "legacy_zset": found 0 removable, 24516452 nonremovable row versions in 207547 pages 0 dead row versions cannot be removed yet. CPU: user: 26.37 s, system: 8.10 s, elapsed: 672.38 s.
    [2018-01-05 02:22:56.980] [LOG] [DB] clustering "public.legacy_object" using index scan on "legacy_object_pkey"
    [2018-01-05 02:23:26.529] [LOG] [DB] "legacy_object": found 0 removable, 8785777 nonremovable row versions in 60510 pages 0 dead row versions cannot be removed yet. CPU: user: 6.59 s, system: 1.83 s, elapsed: 29.56 s.
    [2018-01-05 02:24:04.959] [LOG] [DB] clustering "public.legacy_hash" using index scan on "legacy_hash_pkey"
    [2018-01-05 02:28:38.623] [LOG] [DB] "legacy_hash": found 0 removable, 7259623 nonremovable row versions in 575622 pages 0 dead row versions cannot be removed yet. CPU: user: 17.24 s, system: 17.82 s, elapsed: 273.66 s.
    [2018-01-05 02:28:52.534] [LOG] Cluster all tables: 1182975.182ms

Analysis is fast (again):

    [2018-01-05 02:28:52.538] [LOG] [DB] analyzing "public.legacy_object"
    [2018-01-05 02:28:52.561] [LOG] [DB] analyzing "public.legacy_set"
    [2018-01-05 02:28:52.562] [LOG] [DB] analyzing "public.legacy_zset"
    [2018-01-05 02:28:52.802] [LOG] [DB] analyzing "public.legacy_list"
    [2018-01-05 02:28:52.803] [LOG] [DB] "legacy_list": scanned 0 of 0 pages, containing 0 live rows and 0 dead rows; 0 rows in sample, 0 estimated total rows
    [2018-01-05 02:28:52.803] [LOG] Analyze legacy_list: 251.689ms
    [2018-01-05 02:28:52.803] [LOG] [DB] analyzing "public.legacy_hash"
    [2018-01-05 02:28:52.803] [LOG] [DB] "legacy_hash": scanned 30000 of 594086 pages, containing 367818 live rows and 0 dead rows; 30000 rows in sample, 7260846 estimated total rows
    [2018-01-05 02:28:52.803] [LOG] [DB] analyzing "public.legacy_string"
    [2018-01-05 02:28:52.804] [LOG] [DB] "legacy_string": scanned 1 of 1 pages, containing 1 live rows and 0 dead rows; 1 rows in sample, 1 estimated total rows
    [2018-01-05 02:28:52.804] [LOG] Analyze legacy_string: 251.339ms
    [2018-01-05 02:28:52.804] [LOG] [DB] "legacy_set": scanned 30000 of 31800 pages, containing 4077432 live rows and 0 dead rows; 30000 rows in sample, 4322078 estimated total rows
    [2018-01-05 02:28:52.804] [LOG] [DB] "legacy_object": scanned 30000 of 60522 pages, containing 4356242 live rows and 0 dead rows; 30000 rows in sample, 8787019 estimated total rows
    [2018-01-05 02:28:52.824] [LOG] Analyze legacy_object: 287.360ms
    [2018-01-05 02:28:52.833] [LOG] [DB] "legacy_zset": scanned 30000 of 207585 pages, containing 3544314 live rows and 0 dead rows; 30000 rows in sample, 24517477 estimated total rows
    [2018-01-05 02:28:52.833] [LOG] Analyze legacy_set: 280.963ms
    [2018-01-05 02:28:52.908] [LOG] Analyze legacy_hash: 356.352ms
    [2018-01-05 02:28:52.941] [LOG] Analyze legacy_zset: 389.235ms
    [2018-01-05 02:28:52.942] [LOG] Analyze: 406.927ms

I'm going to edit the script to omit the first cluster operation and do the inserts into the five different `legacy_` tables using an inner join from `legacy_objects` and see if that's any faster.
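The minute figures quoted in the commentary can be rechecked from the `[LOG] ...: ...ms` timing lines. Here is a small Python sketch, assuming every timing line has the shape seen in this post; `step_timings` and the regular expression are illustrative and not part of the converter.

```python
import re
from typing import List, Tuple

# Matches lines such as "[...] [LOG] Copy: 1324940.208ms". "[LOG] [DB] ..." notices
# are skipped because a step name may not contain square brackets.
TIMING_LINE = re.compile(r"\[LOG\] (?P<step>[^\[\]]+?): (?P<ms>\d+(?:\.\d+)?)ms")


def step_timings(log_text: str) -> List[Tuple[str, float]]:
    """Return (step name, duration in minutes) for every timing line, in log order."""
    return [
        (match["step"], float(match["ms"]) / 60_000)
        for match in TIMING_LINE.finditer(log_text)
    ]


# Three lines copied from the log above.
SAMPLE = """\
[2018-01-05 01:06:40.055] [LOG] Copy: 1324940.208ms
[2018-01-05 01:11:29.809] [LOG] [DB] clustering "public.objects" using sequential scan and sort
[2018-01-05 02:09:08.129] [LOG] Convert: 1672988.663ms
"""

for step, minutes in step_timings(SAMPLE):
    print(f"{step}: {minutes:.1f} min")
```

A list of pairs is used instead of a dict because some step names, such as "Create index on expireAt", appear more than once in the full log.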

-

@sockpuppet7 said in Discussion of NodeBB Updates:

@boomzilla @ben_lubar it would be cool if the first upvote didn't trigger a notification

or maybe, just create a special rule for people that use upvote for marking shit as read, AKA @tsaukpaetra

I knew who the single upvote to this comment was even before checking.

-

@jaloopa said in Discussion of NodeBB Updates:

@sockpuppet7 said in Discussion of NodeBB Updates:

or maybe, just create a special rule for people that use upvote for marking shit as read

I'm sure you could modify @anotherusername's userscript that ignores likes from the likes topic so it ignores them from specific users instead

I'm sure I could do that, and did...

Unfortunately, it muddles things when upvote notifications blend... "Tsaukpaetra and 3 others have upvoted your post!" Ok, so was that supposed to be a read or unread notification? It's unread now, but I didn't keep track of whether it was a read or unread notification immediately before he upvoted it, so I don't know if I can ignore the whole notification or not...

-

@anotherusername said in Discussion of NodeBB Updates:

@jaloopa said in Discussion of NodeBB Updates:

@sockpuppet7 said in Discussion of NodeBB Updates:

or maybe, just create a special rule for people that use upvote for marking shit as read

I'm sure you could modify @anotherusername's userscript that ignores likes from the likes topic so it ignores them from specific users instead

I'm sure I could do that, and did...

Unfortunately, it muddles things when upvote notifications blend... "Tsaukpaetra and 3 others have upvoted your post!" Ok, so was that supposed to be a read or unread notification? It's unread now, but I didn't keep track of whether it was a read or unread notification immediately before he upvoted it, so I don't know if I can ignore the whole notification or not...

Does the notification only have the last person to upvote? So if it has my name and it doesn't say "and X others", it's safe to assume I was first (unless one downvoted and another upvoted before I did).

Someone test this theory before someone else posts a link to the source where this notification logic is!

-

@tsaukpaetra said in Discussion of NodeBB Updates:

Does the notification only have the last person to upvote?

It has the name of the person who triggered that notification. One that's "..and X other people" should show the (X+1)th person.

-

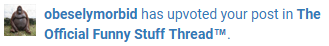

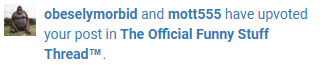

@tsaukpaetra they're different types of notifications.

Single:

    {
      nid: "upvote:post:1287788:uid:805",
      bodyShort: "[[notifications:upvoted_your_post_in, obeselymorbid, The Official Funny Stuff Thread™]]<i class=\"notification-cid\" data-ncid=\"14\"></i>"
    }

Double:

    {
      nid: "upvote:post:1287808:uid:805",
      bodyShort: "[[notifications:upvoted_your_post_in_dual, obeselymorbid, mott555, The Official Funny Stuff Thread™]]<i class=\"notification-cid\" data-ncid=\"14\"></i>"
    }

Multiple:

    {
      nid: "upvote:post:1287870:uid:897",
      bodyShort: "[[notifications:upvoted_your_post_in_multiple, Tsaukpaetra, 2, The Official Funny Stuff Thread™]]<i class=\"notification-cid\" data-ncid=\"14\"></i>"
    }

Single is easy enough to deal with. The others, the main question is: right before you upvoted it, was the notification read? If so, it should ignore the upvote, but if not, then it can't. And it has no way to know, so it can't ignore the upvote; doing so might clear upvotes that hadn't been read yet.
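The decision described above can be sketched roughly as follows. This is Python purely for illustration (a real userscript would be JavaScript), and it is not the actual userscript: `parse_upvote`, `can_auto_dismiss` and `muted_users` are hypothetical names, and the parsing assumes that `bodyShort` always looks like the three samples and that usernames never contain ", ".

```python
import re
from typing import NamedTuple, Optional, Set, Tuple


class Upvote(NamedTuple):
    kind: str                     # "single", "dual" or "multiple"
    named_users: Tuple[str, ...]  # usernames spelled out in the notification


_UPVOTE = re.compile(r"\[\[notifications:upvoted_your_post_in(_dual|_multiple)?, (.*?)\]\]")


def parse_upvote(body_short: str) -> Optional[Upvote]:
    """Classify an upvote notification; return None for anything else."""
    match = _UPVOTE.match(body_short)
    if match is None:
        return None
    suffix, args = match.group(1), match.group(2)
    try:
        if suffix == "_dual":
            first, second, _title = args.split(", ", 2)
            return Upvote("dual", (first, second))
        if suffix == "_multiple":
            first, _other_count, _title = args.split(", ", 2)
            return Upvote("multiple", (first,))
        first, _title = args.split(", ", 1)
        return Upvote("single", (first,))
    except ValueError:  # fewer arguments than the samples have
        return None


def can_auto_dismiss(body_short: str, muted_users: Set[str]) -> bool:
    """Only a single-voter notification from a muted user is safe to clear.

    Merged notifications may hide upvotes that were never read, so they are kept.
    """
    parsed = parse_upvote(body_short)
    return parsed is not None and parsed.kind == "single" and parsed.named_users[0] in muted_users


SINGLE = ('[[notifications:upvoted_your_post_in, obeselymorbid, The Official Funny Stuff Thread™]]'
          '<i class="notification-cid" data-ncid="14"></i>')
MULTIPLE = ('[[notifications:upvoted_your_post_in_multiple, Tsaukpaetra, 2, The Official Funny Stuff Thread™]]'
            '<i class="notification-cid" data-ncid="14"></i>')

print(can_auto_dismiss(SINGLE, {"obeselymorbid"}))
print(can_auto_dismiss(MULTIPLE, {"Tsaukpaetra"}))
```

Splitting from the left with a fixed limit keeps commas in the topic title from being mistaken for argument separators.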

-

@hungrier Here's a version that only hits the votes column:

/* Get rid of "votes" on topic lists */ .mobile-stat+div { visibility: hidden; }

-

@chaostheeternal said in Discussion of NodeBB Updates:

@hungrier Here's a version that only hits the votes column:

/* Get rid of "votes" on topic lists */ .mobile-stat+div { visibility: hidden; }

why not `visibility: collapse;` or `display: none;`?

-

@ben_lubar `display:none;` shifts the rest of the stuff over. Not sure about collapse; didn't try it.

-

@ben_lubar said in Discussion of NodeBB Updates:

why not visibility: collapse;

Because it's not a table element.

@ben_lubar said in Discussion of NodeBB Updates:

or display: none;

Because the columns in the topic list don't resize to fill the gap that leaves.

-

@hungrier said in Discussion of NodeBB Updates:

Not sure about collapse; didn't try it.

`display: none;` and `visibility: collapse;` are pretty much identical in meaning.

-

@ben_lubar said in Discussion of NodeBB Updates:

@hungrier said in Discussion of NodeBB Updates:

Not sure about collapse; didn't try it.

`display: none;` and `visibility: collapse;` are pretty much identical in meaning.

Which is exactly why none of the words are the same.

-

@tsaukpaetra said in Discussion of NodeBB Updates:

@ben_lubar said in Discussion of NodeBB Updates:

@hungrier said in Discussion of NodeBB Updates:

Not sure about collapse; didn't try it.

`display: none;` and `visibility: collapse;` are pretty much identical in meaning.

Which is exactly why none of the words are the same.

Every technology is terrible because it was made by humans.

-

    [2018-01-05 20:54:32.239] [LOG] [DB] analyzing "public.objects"
    [2018-01-05 20:54:32.404] [LOG] [DB] "objects": scanned 30000 of 918567 pages, containing 1054637 live rows and 0 dead rows; 30000 rows in sample, 32545258 estimated total rows
    [2018-01-05 20:54:32.904] [LOG] Analyze objects: 666.446ms
    [2018-01-05 20:54:32.905] [LOG] Analyze: 666.794ms
    [2018-01-05 20:54:32.907] [LOG] Create type legacy_object_type: 1.344ms
    [2018-01-05 20:54:32.985] [LOG] Create table legacy_object: 78.010ms
    [2018-01-05 20:56:43.856] [LOG] Insert into legacy_object (zset): 130870.231ms
    [2018-01-05 21:02:31.406] [LOG] Insert into legacy_object (string, set, list, hash): 347550.196ms
    [2018-01-05 21:02:40.915] [LOG] Create index on expireAt: 9508.366ms
    [2018-01-05 21:02:52.037] [LOG] Create temporary index on type: 11121.736ms
    [2018-01-05 21:02:52.067] [LOG] Objects: 499162.538ms
    [2018-01-05 21:02:52.260] [LOG] Create table legacy_hash: 192.152ms
    [2018-01-05 21:02:52.352] [LOG] Create table legacy_zset: 91.518ms
    [2018-01-05 21:02:52.427] [LOG] Create table legacy_set: 74.582ms
    [2018-01-05 21:02:52.502] [LOG] Create table legacy_list: 74.744ms
    [2018-01-05 21:02:52.577] [LOG] Create table legacy_string: 74.646ms
    [2018-01-05 21:02:52.594] [LOG] Insert into legacy_list: 8.173ms
    [2018-01-05 21:03:24.711] [LOG] Insert into legacy_string: 32122.234ms

Inserting zero lists: 0.008 seconds.

Inserting one string: 32.122 seconds.

-

@ben_lubar said in NodeBB Updates:

Is the header better now?

Mobile composer should be fixed.

Yes, inasmuch as Chrome is working well, it looks better.

Still NFC what the extra scrollbars are though...

-

@tsaukpaetra said in Discussion of NodeBB Updates:

Still NFC what the extra scrollbars are though...

I don't have those on my mobile Chrome so I blame you. :P

-

I don't know why, but clicking a toast that someone liked your post does not in fact remove the corresponding notification. Good old Discourse, of course, marks a like notification as read as soon as you read the post, instead of having to click every single like notification you get to remove it.

-

@heterodox said in Discussion of NodeBB Updates:

@tsaukpaetra said in Discussion of NodeBB Updates:

Still NFC what the extra scrollbars are though...

I don't have those on my mobile Chrome so I blame you. :P

Yeah, my phone is starting to get weirder and weirder. Like, randomly freezing just because I closed a tab weird. Sometimes it's bad enough that the device soft-reboots.

-

@pie_flavor said in Discussion of NodeBB Updates:

clicking a toast

How do you manage to do that? I can't even read it by the time it disappears...

-

@pie_flavor said in Discussion of NodeBB Updates:

instead of having to click every single like notification you get to remove it.

Your Discourse had working notifications? Like, ones that didn't show up multiple days later when the unicorns were restarted? Lucky...

-

@twelvebaud Yup. Fucking amazing, isn't it? The thought that there is functional forum software out there? Oh well.

-

@pie_flavor You're not alone. I miss Discourse's notifications dropdown and `/notifications` page.

In NodeBB:

- who knows how notifications are grouped and ordered. Clicking any notification does not guarantee that you'll go to the first unread post in that thread. The `/notifications` page is a mess with cleared notifications between uncleared notifications, and broken paging.
- the popup works well enough, but it's highly unlikely that it will come up at a convenient time. It suffers from the same problem: does not send me to the earliest unread post;
- the dropdown mostly works, but it can sometimes update under my mouse cursor and make me click the wrong entry. It has also happened a few times that I click one of the entries, but not right on the text of a link (but not on the circle that clears the notification either), and that marks the notification as read without navigating (but only sometimes).

I also miss Discourse's suggested threads at the end of a thread too (if only because the notifications page sucks so much).

-

@zecc said in Discussion of NodeBB Updates:

- who knows how notifications are grouped and ordered. Clicking any notification does not guarantee that you'll go to the first unread post in that thread. The `/notifications` page is a mess with cleared notifications between uncleared notifications, and broken paging.
- the popup works well enough, but it's highly unlikely that it will come up at a convenient time. It suffers from the same problem: does not send me to the earliest unread post;

Why would a notification about someone liking your post near the beginning of the topic take you to the latest unread?

- the dropdown mostly works, but it can sometimes update under my mouse cursor and make me click the wrong entry. It has also happened a few times that I click one of the entries, but not right on the text of a link (but not on the circle that clears the notification either), and that marks the notification as read without navigating (but only sometimes).

This is mostly because the damn thing is only updated after you click it open. Apparently toasting the data and automatically adding it to the notifications on the same call is too hard....

I also miss Discourse's suggested threads at the end of a thread too (if only because the notifications page sucks so much).

I personally never understood suggested threads...
