@lorne-kates First of all, please find me a usability study for any text entry format. I'll wait.

Ok, now that we've gotten that out of our system (I didn't actually check, tbh), there are essentially two paths forward:

- An experiment

- An archival study

An experiment would mean that we recruit a sample of users and have each of them write a text in one of several entry formats. We would then run a post-study questionnaire to measure each subject's satisfaction with their assigned entry format. We can also measure the "correctness" of their input, the time it took to complete the text, and their satisfaction with the end result.
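To make the assignment step concrete, here is a minimal sketch of how subjects could be spread over the entry formats. The format names, the `assign_formats` helper, and the fixed seed are all assumptions for illustration; the actual conditions would be decided when the study is designed.

```python
import random

# Hypothetical conditions; the real study would pick its own list.
FORMATS = ["markdown", "wysiwyg", "bbcode"]

def assign_formats(participants, formats=FORMATS, seed=0):
    """Randomly assign each participant one format, keeping groups balanced."""
    rng = random.Random(seed)  # fixed seed so the assignment can be reproduced
    shuffled = list(participants)
    rng.shuffle(shuffled)
    # Deal participants round-robin so group sizes differ by at most one.
    return {p: formats[i % len(formats)] for i, p in enumerate(shuffled)}
```

Shuffling first and then dealing round-robin keeps the groups the same size, which matters with the small samples a thesis project is likely to get.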

We can do this. In fact, I am logging this as a potential bachelor's thesis.

We could also instrument a discussion forum such that different users get different means of text entry, and then use runtime logging to collect equivalent data. We would probably want this type of runtime logging even with the controlled experiment.
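Roughly, the forum instrumentation could look like the sketch below. Everything in it is an assumption: the format list, the `format_for_user` and `log_entry_event` helpers, and the logged fields are made up for illustration and are not taken from any existing forum software.

```python
import hashlib
import json

# Hypothetical conditions; not taken from any real forum configuration.
FORMATS = ["markdown", "wysiwyg", "bbcode"]

def format_for_user(user_id: str, formats=FORMATS) -> str:
    """Map a user to one entry format, stable across sessions."""
    digest = hashlib.sha256(user_id.encode("utf-8")).digest()
    return formats[int.from_bytes(digest[:8], "big") % len(formats)]

def log_entry_event(user_id: str, started_at: float, submitted_at: float,
                    preview_count: int) -> str:
    """Build one JSON log line for a submitted post (assumed fields)."""
    return json.dumps({
        "user": user_id,
        "format": format_for_user(user_id),
        "seconds_to_submit": submitted_at - started_at,
        "preview_count": preview_count,
    })
```

Hashing the user id, rather than drawing a random format per visit, means a user always sees the same editor, so their log lines all belong to one condition.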

An archival study would mean that we look at the evidence already produced. We may, for example, find a couple of different fora with similar topics and user bases, and measure the number of entries in each. The problem would be to define and argue for why the fora are indeed equivalent, and why we would thus expect a similar level of activity. The alternative is to collect data from so many fora that we can just do a factor analysis and see if the activity correlates with the entry format.
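As a first descriptive pass over such archival data, before any proper statistics, we could simply compare average activity per entry format. The sketch below assumes each forum has been reduced to a hypothetical `(entry_format, posts_per_month)` pair; it is a plain group mean, not the factor analysis itself, and it does nothing to address whether the fora are equivalent.

```python
from collections import defaultdict
from statistics import mean

def mean_activity_by_format(fora):
    """Average activity per entry format.

    `fora` is an iterable of (entry_format, posts_per_month) pairs,
    an assumed summary of each forum for illustration.
    """
    groups = defaultdict(list)
    for entry_format, posts in fora:
        groups[entry_format].append(posts)
    return {fmt: mean(values) for fmt, values in groups.items()}
```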

We may also count the number of invectives near texts about markdown, compared to other entry formats.

Or we may take an easier route, and just look at how many of the formatting codes in markdown are also supported in word processors, and how many feature requests there are in the issue trackers of word processors where the equivalent shortcuts aren't supported. Mind you, this route will tell us neither the technical expertise of the users of those features nor how often they use them in the word processors.

And don't get me started on the special tool my dad made to screw in the piston.


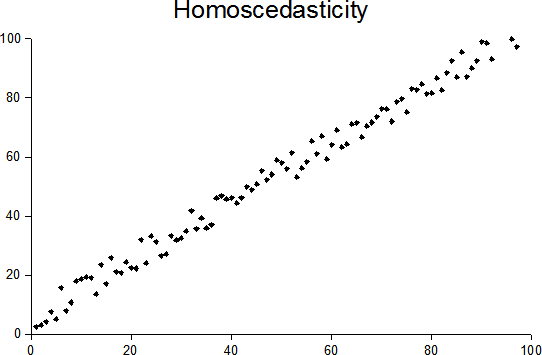

Homoscedasticity and heteroscedasticity - Wikipedia

