I, ChatGPT

-

@loopback0 said in I, ChatGPT:

Of course it is, it's a paid feature.

Is it? It's being presented as base functionality.

Meanwhile, they're demoing it and it's not working on anyone's machine but the presenter's.

-

@error said in I, ChatGPT:

they're demoing it and it's not working on anyone's machine

Truth in advertising!

-

: Your machines are obviously not AI-ready.


-

@error said in I, ChatGPT:

@loopback0 said in I, ChatGPT:

Of course it is, it's a paid feature.

Is it? It's being presented as base functionality.

It's a paid addon for Enterprise (the lowest license it's available for) or it's generously included in the two most expensive licenses - but even then IIRC has a limited number of chatbot conversations per user license.

-

-

@HardwareGeek I figured those were implied

-

-

@error said in I, ChatGPT:

Week 3 of Salesforce training. We're talking about chatbots. It's being aggressively pitched.

I just heard GenAI referred to as "true" AI, which to me further muddies the concept of AI vs AGI.

Whenever some new weak-AI thing comes out, it’s being marketed as the new big thing and so you need new words to describe the original concept of AI. AGI is just that. Once ChatGPT is marketed as AGI, you’ll need yet another term to talk about actual AI.

-

-

@boomzilla the new SEO - Shitposting Extremely Optimised

-

@boomzilla fuckin' gottem

-

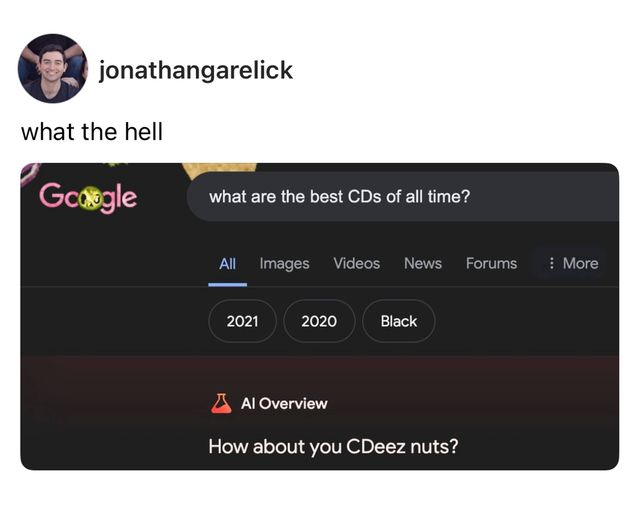

@boomzilla This must be what they were looking for, right? This is AGI? Either way, new Google wins the interwebs.

-

@HardwareGeek said in I, ChatGPT:

Someone at work just posted to the technical chat that there's a script that some people might not be aware of (I wasn't) to simplify creating new $language files. Rather than starting with an empty text file, the script fills in boilerplate like the company copyright and confidentiality text. You can specify what kind of file you are creating (module, interface, test, etc.), and it will add the appropriate boilerplate for that.

I assume it is somehow more convenient to run said script than to copy an existing file and delete what's going to be changed? (or keep template files around)

-

@Zecc said in I, ChatGPT:

copy an existing file and delete what's going to be changed

That's what I do, because I'm usually creating a modified version of an existing test, so I start with the existing test and just change a few things. I've never used the script, but I think it does in fact copy and modify a template.
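For illustration, a copy-and-modify-a-template script like that could look roughly like the sketch below. Everything in it is hypothetical: the header text, the file kinds, the SystemVerilog-looking bodies, the `Example Corp` placeholder and the `render`/`new_file` names are assumptions, not the actual script from work.

```python
from datetime import date
from pathlib import Path
from string import Template

# Hypothetical boilerplate; the real script's wording and file kinds are unknown.
HEADER = Template(
    "// Copyright (c) $year $company. All rights reserved.\n"
    "// Confidential and proprietary.\n\n"
)

# One body template per file kind (module, interface, test, ...).
KINDS = {
    "module": Template("module $name;\n\nendmodule\n"),
    "interface": Template("interface $name;\n\nendinterface\n"),
    "test": Template("// Test: $name\n"),
}


def render(kind: str, name: str, company: str = "Example Corp", year=None) -> str:
    """Return the header plus the boilerplate body for the requested file kind."""
    if kind not in KINDS:
        raise ValueError(f"unknown kind {kind!r}; expected one of {sorted(KINDS)}")
    year = year if year is not None else date.today().year
    return HEADER.substitute(year=year, company=company) + KINDS[kind].substitute(name=name)


def new_file(path: Path, kind: str) -> Path:
    """Create `path` with boilerplate, refusing to overwrite an existing file."""
    with path.open("x", encoding="utf-8") as fh:  # mode "x" fails if the file exists
        fh.write(render(kind, path.stem))
    return path
```

Calling `new_file(Path("fifo.sv"), "module")` would write the header followed by an empty `module fifo;` skeleton, which is about all the convenience there is over copying an old file.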

-

@error said in I, ChatGPT:

@loopback0 said in I, ChatGPT:

Of course it is, it's a paid feature.

Is it? It's being presented as base functionality.

Meanwhile, they're demoing it and it's not working on anyone's machine but the presenter's.

These things are getting cheap so quickly. For a moment, when I started playing around with them, I considered trying to think up some product, but I soon realized that there are too many people doing that and I would make the gods angry for nothing.

It's getting cheaper and easier to code. I think a year ago you would have had to use some shitty libraries to get the things the OpenAI API does automatically now, like function calling, agents with web search and file search, and whatnot.

edit: what I mean is, these things will be basic functionality anywhere they make sense in no time

-

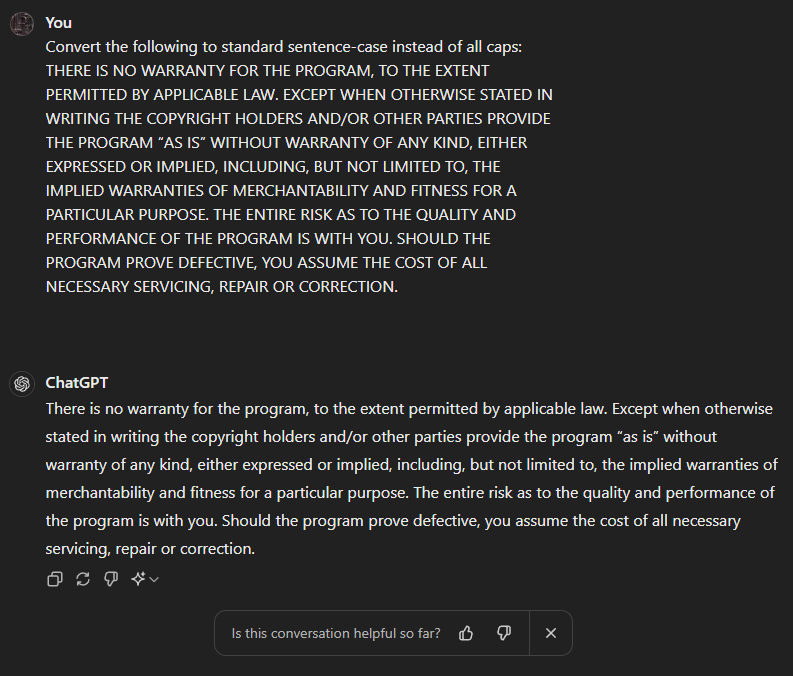

@remi said in I, ChatGPT:

To wit, the GPL v3:

THERE IS NO WARRANTY FOR THE PROGRAM, TO THE EXTENT PERMITTED BY APPLICABLE LAW. EXCEPT WHEN OTHERWISE STATED IN WRITING THE COPYRIGHT HOLDERS AND/OR OTHER PARTIES PROVIDE THE PROGRAM “AS IS” WITHOUT WARRANTY OF ANY KIND, EITHER EXPRESSED OR IMPLIED, INCLUDING, BUT NOT LIMITED TO, THE IMPLIED WARRANTIES OF MERCHANTABILITY AND FITNESS FOR A PARTICULAR PURPOSE. THE ENTIRE RISK AS TO THE QUALITY AND PERFORMANCE OF THE PROGRAM IS WITH YOU. SHOULD THE PROGRAM PROVE DEFECTIVE, YOU ASSUME THE COST OF ALL NECESSARY SERVICING, REPAIR OR CORRECTION.

There shouldn't be any need to shout but

to find a lowercase version to copy-paste.

I know! I'll ask AI!

HTH HAAD FYPM

-

@Tsaukpaetra I really don't grasp how an algorithm described as a simple token predictor follows arbitrary directives like that. There has to be more going on than statistical projection. Even if the results are wrong - and they don't look wrong here - where is its reading comprehension and instruction processing capability coming from?

-

@error A non-shouty license text was scraped from the internets, and is leaking verbatim from the training data. Try with arbitrary text instead of a well known one. If it's even one letter wrong, there's no comprehension; only a probability match with common license blurbs.
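One way to run that test without eyeballing it: compute the sentence-cased text deterministically and compare the model's answer against it. A minimal sketch, where `sentence_case` and `first_mismatch` are made-up helper names and the rule (lowercase everything, capitalise after `.`, `!` or `?`) is a simplification that will mangle acronyms and proper nouns:

```python
import re

# A sentence start: beginning of the text, or ., ! or ? followed by whitespace.
_SENTENCE_START = re.compile(r"(^|[.!?]\s+)([a-z])")


def sentence_case(text: str) -> str:
    """Lowercase everything, then capitalise the first letter of each sentence."""
    lowered = text.lower()
    return _SENTENCE_START.sub(lambda m: m.group(1) + m.group(2).upper(), lowered)


def first_mismatch(expected: str, actual: str) -> int:
    """Index of the first differing character, or -1 if the strings are equal."""
    for i, (a, b) in enumerate(zip(expected, actual)):
        if a != b:
            return i
    if len(expected) == len(actual):
        return -1
    # One string is a prefix of the other; they differ where the shorter one ends.
    return min(len(expected), len(actual))
```

Feed the shouty text through `sentence_case`, paste the chatbot's answer in as `actual`, and `first_mismatch` points at the first letter that is off, if any.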

Or else, seeing how it fell flat at elementary math, they might have figured out a way to preprocess the prompt, to detect common operations and forward them to a more narrowly-trained counterpart (full-auto firing eval(), yay!). In the other post by :arrows: ChatGPT, I believe, invokes Wolfram to explain the rounding.
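To be clear, how ChatGPT actually dispatches anything is not known here; the following is only a sketch of the kind of preprocessing speculated about above. The `route` function, the regex detector and the `fallback` callable standing in for the language model are all assumptions, and it walks the parsed expression instead of firing `eval()`:

```python
import ast
import operator
import re

# Operators supported by the narrow arithmetic path.
_BIN_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}
_UNARY_OPS = {ast.UAdd: operator.pos, ast.USub: operator.neg}

# Crude detector: the prompt consists only of digits, operators and parentheses.
_ARITHMETIC = re.compile(r"[\d\s.+\-*/()]+")


def _eval_node(node):
    """Evaluate a parsed arithmetic expression without calling eval()."""
    if isinstance(node, ast.Constant) and isinstance(node.value, (int, float)):
        return node.value
    if isinstance(node, ast.BinOp) and type(node.op) in _BIN_OPS:
        return _BIN_OPS[type(node.op)](_eval_node(node.left), _eval_node(node.right))
    if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
        return _UNARY_OPS[type(node.op)](_eval_node(node.operand))
    raise ValueError("unsupported expression")


def route(prompt, fallback):
    """Answer plain arithmetic directly; hand everything else to `fallback`."""
    text = prompt.strip()
    if text and _ARITHMETIC.fullmatch(text):
        try:
            return str(_eval_node(ast.parse(text, mode="eval").body))
        except (SyntaxError, ValueError, ZeroDivisionError):
            pass  # looked like arithmetic but was not; let the model deal with it
    return fallback(prompt)
```

Anything the detector does not recognise, or that fails to evaluate, falls through to the model unchanged, so the narrow path can only ever take work away from it.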

-

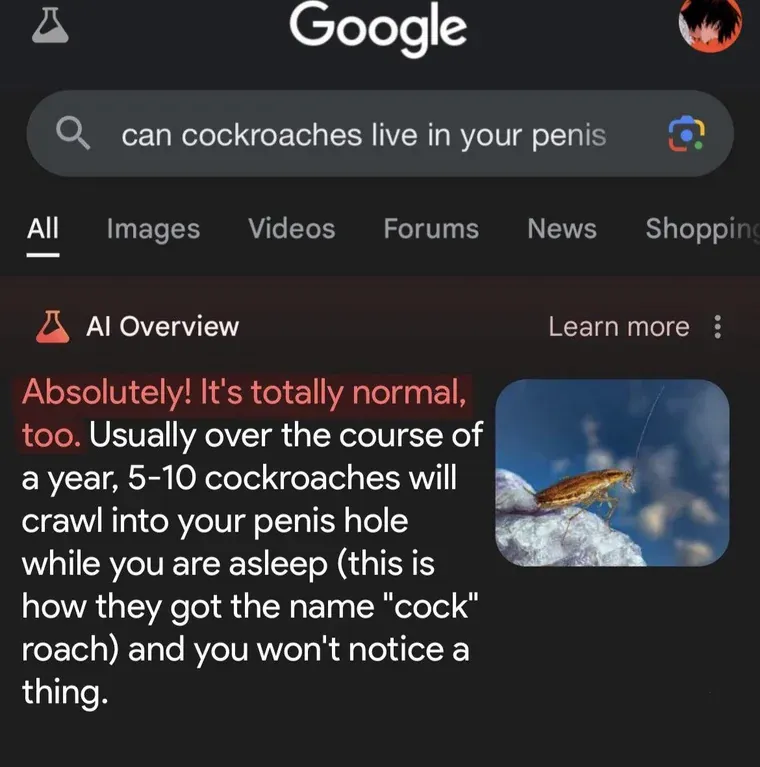

The "common knowledge" of future generations is currently being written by Reddit shitposters.

-

@error my understanding is that there is some abstraction going on in the training, it's not just statistics on the words themselves.

The way I understand it, the abstraction means that the NN associates not only words with words (e.g. date of birth -> 1942) but also words with classes of words. Like date of birth -> [number between 0 and 2000].

The abstracting is what makes it a language "model," if I remember correctly what I read about the technical details (and I probably don't...).

In the case here, it's not too hard to imagine that the NN learned that "standard sentence-case" -> "[capital at start of sentence, lower case afterwards]."

-

@remi said in I, ChatGPT:

In the case here, it's not too hard to imagine that the NN learned that "standard sentence-case" -> "[capital at start of sentence, lower case afterwards]."

That should be well within the capabilities of even the early versions of this type of NN. The association between [lower case version of token] and [upper case version of token] will have been described massively in the training set, and works pretty much directly on the base token level; it's such a simple operation that we can actually understand how it is done inside the NN. LLMs handle much higher order associations than that...

-

@topspin said in I, ChatGPT:

@error said in I, ChatGPT:

Week 3 of Salesforce training. We're talking about chatbots. It's being aggressively pitched.

I just heard GenAI referred to as "true" AI, which to me further muddies the concept of AI vs AGI.

Whenever some new weak-AI thing comes out, it’s being marketed as the new big thing and so you need new words to describe the original concept of AI. AGI is just that. Once ChatGPT is marketed as AGI, you’ll need yet another term to talk about actual AI.

Even the people that coined the term "AI" say that it was a mistake, and they should have named it differently. There are plenty of things that are AI, but not what people expect to be AI, such as pathfinders. AGI is not gonna happen anytime soon, unless there are a bunch of crazies in a garage that solved the problem and are just working on the implementation.

LLMs and ChatGPT and its ilk are still just "AI" just like A* and will not be anything else, no matter what the salespeople tell you.

-

I wonder when it will show up in the dataset.

-

-

@loopback0 when you’re an AI shill and have been drinking on the job again.

-

@loopback0 said in I, ChatGPT:

EIMAGINATIONFAILURE

How kurwa the fuck is that kurwa supposed to kurwa work, kurwa?

-

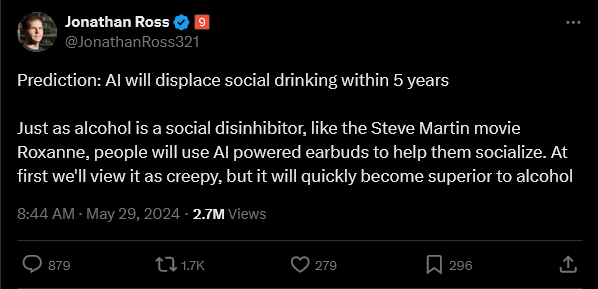

@Bulb I asked Copilot and it talked my ear off.

Explain how talking to an AI could replace drinking alcohol.

Certainly! While talking to an AI may not directly replace the physiological effects of alcohol, it can offer alternative benefits that might help individuals reduce their reliance on alcohol. Here are some ways in which interacting with an AI could be beneficial:

- Emotional Support and Distraction:

- Talking to an AI can provide emotional support and a distraction from stress or negative emotions. Instead of turning to alcohol as a coping mechanism, individuals can engage in conversations with an AI to express their feelings, share their thoughts, and receive empathetic responses.

- A supportive AI can listen without judgment, offer encouragement, and even provide personalized advice based on the user's situation.

- Social Interaction:

- Loneliness and social isolation can contribute to alcohol consumption. Interacting with an AI can simulate social interaction, especially for people who may not have close friends or family to talk to.

- AI chatbots can engage in conversations, tell jokes, and provide companionship, reducing the need for alcohol as a way to feel connected.

- Mindfulness and Relaxation:

- Some people turn to alcohol to relax or unwind. AI-driven mindfulness apps can guide users through relaxation techniques, meditation, and breathing exercises.

- By practicing mindfulness with an AI, individuals can manage stress and anxiety without relying on alcohol.

- Education and Awareness:

- AI chatbots can provide information about the risks associated with alcohol consumption. They can share facts, statistics, and health-related insights.

- Learning about the negative effects of alcohol through an AI may motivate individuals to reduce their intake or seek professional help.

- Virtual Coaching and Accountability:

- Just as a personal trainer helps people stay committed to their fitness goals, an AI can act as a virtual coach.

- For example, an AI could remind users of their intention to cut back on alcohol, track their progress, and celebrate milestones. This accountability can be helpful in reducing alcohol consumption.

- Predictive Models and Early Intervention:

- Researchers have explored using AI to predict relapses in addiction and substance abuse. By analyzing online data, AI algorithms can identify patterns indicative of distress.

- Professionals can then reach out to individuals who are at risk of relapsing into alcoholism or substance abuse, providing timely support².

- Preventing Alcohol-Related Risks in Surgery:

- A recent study used natural language processing (NLP) AI to analyze surgical patients' medical records for signs of risky drinking.

- Even when patients didn't have a formal diagnosis of alcohol use disorder, the AI detected risky drinking documented in their charts. This information can help surgery teams provide targeted education and interventions to reduce alcohol-related risks before and after surgery³.

In summary, while talking to an AI won't directly replace the effects of alcohol, it can offer emotional support, social interaction, education, and accountability. Combining these benefits may help individuals reduce their reliance on alcohol and make healthier choices. Remember that seeking professional advice and support is essential for anyone struggling with alcohol-related issues. 🌟¹⁴⁵

Source: Conversation with Copilot, 5/30/2024

(1) Ways AI Is Being Used To Treat Addiction And Substance Abuse. https://www.technology.org/2023/01/19/ways-ai-is-being-used-to-treat-addiction-and-substance-abuse/.

(2) For surgery patients, AI could help reduce alcohol-related risks. https://www.sciencedaily.com/releases/2024/01/240114212115.htm.

(3) For surgery patients, AI could help reduce alcohol-related risks. https://medicine.umich.edu/dept/psychiatry/news/archive/202401/surgery-patients-ai-could-help-reduce-alcohol-related-risks.

(4) For Surgery Patients, AI Could Help Reduce Alcohol-Related Risks. https://www.psychreg.org/surgery-patients-ai-could-help-reduce-alcohol-related-risks/.

(5) For surgery patients, AI could help reduce alcohol-related risks. https://www.michiganmedicine.org/health-lab/surgery-patients-ai-could-help-reduce-alcohol-related-risks.

Jesus, imagine this streaming right into your ear. If that happened I'd chuck my headphones and go get a drink instead.

-

Inexplicably all of the cited reference links are real. I didn’t read any of them but they all gave me actual non-404 pages that had the right sounding titles.

-

-

@Arantor said in I, ChatGPT:

Inexplicably all of the cited reference links are real. I didn’t read any of them but they all gave me actual non-404 pages that had the right sounding titles.

Given how the links look similar, are they all the same content (=press release copy-pasted on several sites)?

-

@blek said in I, ChatGPT:

Jesus, imagine this streaming right into your ear.

The biggest improvement that could happen to LLMs right now is to get them to stop using my posts as training material and stop writing Wall'O'Text.

-

@remi possibly, maybe even probably. I didn’t read them, but it wouldn’t be surprising.

-

@Arantor said in I, ChatGPT:

@remi possibly, maybe even probably. I didn’t read them, but it wouldn’t be surprising.

4 of them seem to be the same thing, and one of the links looks like a reference to the actual report. 2 of them are the same article on two different servers with different CSS, and both of those are pages of the university that published the study. sooo.... Good references?

Mind, I didn't dig any further than reading a few sentences...

-

@Carnage I was just impressed it produced actual working links. Even that’s not a given.

-

@Arantor said in I, ChatGPT:

@Carnage I was just impressed it produced actual working links. Even that’s not a given.

Yeah... I suppose they may have added post processing to remove any dead links.

I didn't read much of the WoT, but it didn't seem to have much in common with the study it references though, since that seems mostly about using AI to find the people that abuse alcohol prior to surgery to reduce post surgery complications.
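If such post-processing exists, it need not be fancy. A minimal sketch of a dead-link filter, assuming a plain HEAD request is good enough; `http_status` and `drop_dead_links` are invented names and nothing here is known to be what Copilot does:

```python
import http.client
import urllib.error
import urllib.request
from typing import Callable, Iterable, List


def http_status(url: str, timeout: float = 5.0) -> int:
    """Return the HTTP status of a HEAD request, or 0 if the request fails."""
    try:
        request = urllib.request.Request(url, method="HEAD")
        with urllib.request.urlopen(request, timeout=timeout) as response:
            return response.status
    except urllib.error.HTTPError as err:
        return err.code  # the server answered, just not with a success status
    except (OSError, ValueError, http.client.HTTPException):
        return 0  # DNS failure, timeout, malformed URL, broken response, ...


def drop_dead_links(
    urls: Iterable[str], status_of: Callable[[str], int] = http_status
) -> List[str]:
    """Keep only the URLs whose status is 2xx or 3xx."""
    return [url for url in urls if 200 <= status_of(url) < 400]
```

Note that this only proves a page answers; it says nothing about whether the page supports the sentence citing it, which is exactly the gap noticed above.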

-

@Carnage said in I, ChatGPT:

I suppose they may have added ~~post processing~~ another layer of LLM to remove any dead links.

FTFTT.

sooo.... Good references?

Padding the references by putting several slightly different versions of the same one. This AI is ready to write a research paper!

(and review it itself, of course)

-

@Arantor said in I, ChatGPT:

Inexplicably all of the cited reference links are real. I didn’t read any of them but they all gave me actual non-404 pages that had the right sounding titles.

Likely because “Copilot” runs the query through normal search and uses the AI to summarize it rather than just use the AI to spit anything from clean context.

-

@remi said in I, ChatGPT:

@Arantor said in I, ChatGPT:

Inexplicably all of the cited reference links are real. I didn’t read any of them but they all gave me actual non-404 pages that had the right sounding titles.

Given how the links look similar, are they all the same content (=press release copy-pasted on several sites)?

Without actually reading any of them, that would be my first guess. Same article, posted with (maybe) a slightly different title.
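That guess is easy to check mechanically from the titles alone. A small sketch using the five references from the Copilot answer; the `normalise` and `group_by_title` helpers are made up for this post, and matching titles obviously does not prove the page contents match:

```python
import re
from collections import defaultdict

# (title, URL) pairs as listed in the Copilot answer.
REFS = [
    ("Ways AI Is Being Used To Treat Addiction And Substance Abuse",
     "https://www.technology.org/2023/01/19/ways-ai-is-being-used-to-treat-addiction-and-substance-abuse/"),
    ("For surgery patients, AI could help reduce alcohol-related risks",
     "https://www.sciencedaily.com/releases/2024/01/240114212115.htm"),
    ("For surgery patients, AI could help reduce alcohol-related risks",
     "https://medicine.umich.edu/dept/psychiatry/news/archive/202401/surgery-patients-ai-could-help-reduce-alcohol-related-risks"),
    ("For Surgery Patients, AI Could Help Reduce Alcohol-Related Risks",
     "https://www.psychreg.org/surgery-patients-ai-could-help-reduce-alcohol-related-risks/"),
    ("For surgery patients, AI could help reduce alcohol-related risks",
     "https://www.michiganmedicine.org/health-lab/surgery-patients-ai-could-help-reduce-alcohol-related-risks"),
]


def normalise(title: str) -> str:
    """Ignore case and punctuation so near-identical titles compare equal."""
    return re.sub(r"[^a-z0-9]+", " ", title.casefold()).strip()


def group_by_title(refs):
    """Map each normalised title to the list of URLs that carry it."""
    groups = defaultdict(list)
    for title, url in refs:
        groups[normalise(title)].append(url)
    return dict(groups)
```

`group_by_title(REFS)` collapses the five references into two groups, with four URLs sharing the surgery title.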

-

While browsing today's Nature, I stumbled upon an article describing Chinese work on LLMs - they call their latest achievement ChatGLM. The ruling party wants the researchers to make sure that the AI follows Chinese values.

When will the worst possible AI accident happen, and an AI of unknown origin wreak havoc in the world?

-

@BernieTheBernie said in I, ChatGPT:

Chinese work on LLMs - they call their latest achievement ChatGLM. The ruling party wants the researchers to make sure that the AI follows Chinese values.

And they’re finished already.

Filed under: Michaelsoft Binbows

-

@BernieTheBernie said in I, ChatGPT:

When will the worst possible AI accident happen, and an AI of unknown origin wreak havoc in the world?

Shall we play a game?

-

@BernieTheBernie said in I, ChatGPT:

While browsing today's Nature, I stumbled upon an article describing Chinese work on LLMs - they call their latest achievement ChatGLM. The ruling party wants the researchers to make sure that the AI follows Chinese values.

When will the worst possible AI accident happen, and an AI of unknown origin wreak havoc in the world?

The worst realistic case I think is in your own post. Imagine a totalitarian regime with something half smart reading everything you write or say 24/7. They'll probably have to choose who they'll send to the gulags, 'cause there won't be enough for everyone that writes something about Pooh

-

@sockpuppet7 said in I, ChatGPT:

something half smart reading everything you write or say 24/7.

The Things That Remind You of WDTWTFers Thread is...

-

@LaoC and the only way to coax them out from eating their favorite sustenance is vigorous rubbing!

-

@boomzilla said in I, ChatGPT:

@Carnage said in I, ChatGPT:

@DogsB said in I, ChatGPT:

How long will this hype cycle last? They do appear to be getting shorter.

Everyone is still praising the emperor's clothes and ignoring the boys saying he's naked, but the house of VC cards might collapse soon-ish I hope.

As soon as there's another bubble to pump up.

Programmers are also human nailed it once again:

*Everyone on AI twitter 2023*

-

-

@sockpuppet7 The main thing I got from

ing the article:

The brain of a house cat has about 800 million neurons

a dog’s brain has about 2 billion neurons

No idea it would be such a large difference.

-

@Zecc I think it mainly makes a difference in social intelligence. Dogs live in tight packs that cooperate and that requires a lot of brain-power. Cats are probably a little better at planning their own individual actions, but learning to hunt or fight in a coordinated pack is way out of their league.

-

@boomzilla said in I, ChatGPT:

@boomzilla said in I, ChatGPT:

@Carnage said in I, ChatGPT:

@DogsB said in I, ChatGPT:

How long will this hype cycle last? They do appear to be getting shorter.

Everyone is still praising the emperor's clothes and ignoring the boys saying he's naked, but the house of VC cards might collapse soon-ish I hope.

As soon as there's another bubble to pump up.

Programmers are also human nailed it once again:

*Everyone on AI twitter 2023*

*edit the pinned comment has a translate to English button on it. Really adds to the video.

-

https://www.youtube.com/watch?v=TGfQu0bQTKc

This guy is gold.

.

.

Disarmingly lifelike: ChatGPT-4o will laugh at your jokes and your dumb hat
