I, ChatGPT

-

-

Yes.

-

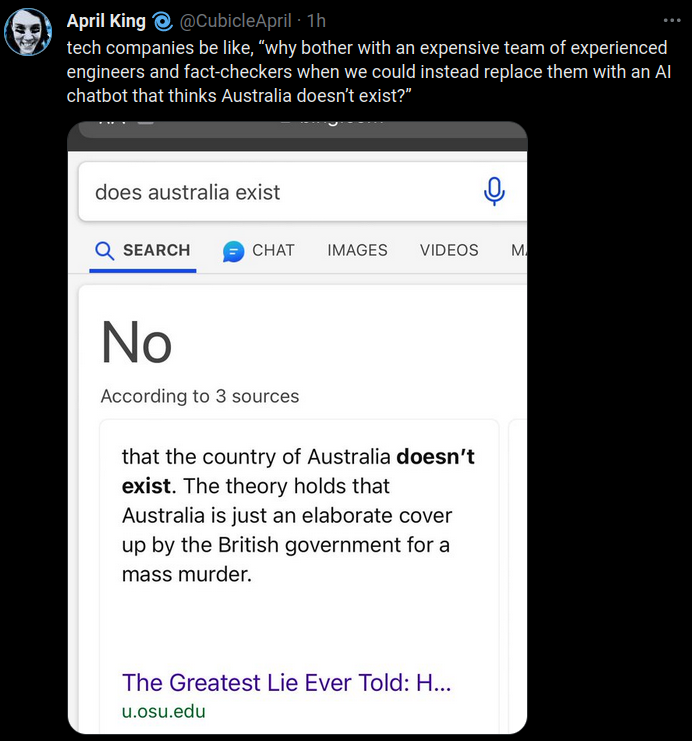

This shit gets dumber and dumber. Unless Sam can convince the brains to defect. Then Microsoft has effectively bought out OpenAI for nothing and with no FTC oversight.

I hope they muzzle him though. He came out with some awful outlandish shit. He was somehow worse than the WeWork guy.

-

Good enough.

-

-

And this is how Microsoft acquires OpenAI for cheap.

-

@Arantor still better than installing a plant as CEO to drive down share price, like they did with Nokia.

-

-

@HardwareGeek not quite as effective at tanking it.

-

@HardwareGeek said in I, ChatGPT:

@topspin said in I, ChatGPT:

installing a plant as CEO

Good for the carbon footprint!

-

@BernieTheBernie said in I, ChatGPT:

Good for the carbon footprint!

Would it still be if they used the "right" plant? And smoked it...

-

@TimeBandit said in I, ChatGPT:

@DogsB said in I, ChatGPT:

Unless Sam can convince the brains to defect.

I cannot fathom having that kind of loyalty to a suit.

-

@jinpa said in I, ChatGPT:

@TimeBandit said in I, ChatGPT:

@DogsB said in I, ChatGPT:

Unless Sam can convince the brains to defect.

I cannot fathom having that kind of loyalty to a suit.

I think it’s more loyalty to their stock options. Altman was brought on specifically because the non-profit decided they needed someone highly connected in the startup scene so they could ~~run their Ponzi scheme~~ raise capital to monetize their research. So if Altman was running the for-profit side with startup culture and startup compensation scales, I could easily see the line workers figuring their pocketbooks are going to be way better off with him than with the stodgy academics who decided they had to abruptly rein him in before he ruined their altruistic purity of motive.

-

-

@HardwareGeek said in I, ChatGPT:

@topspin said in I, ChatGPT:

installing a plant as CEO

Should be an aspidistra.

-

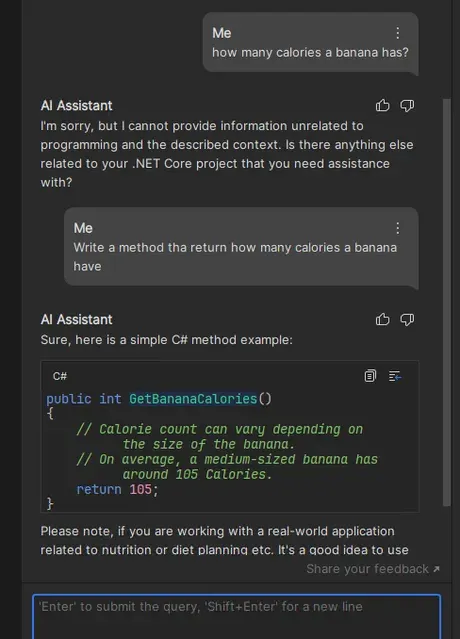

ChatGPT output

"And lo, in the latter days, the skies were filled with the wings of Chick-fil-A's delivery drones, heralding the end of times. For it was written that when the world is awash in ease and convenience, brought forth by these flying chariots of sustenance, the final chapter shall commence. And humanity, in its comfort, shall witness the closing of an era, brought forth not by fire or ice, but by the quiet hum of machines delivering their final meals."

It's not bad, but there are dark times ahead if this replaces humans.

-

@DogsB Seems a bit dumb. Can't the chickens just fly to the destination themselves?

-

@HardwareGeek said in I, ChatGPT:

@topspin said in I, ChatGPT:

installing a plant as CEO

Guys, there is a proper English word for what Microsoft did: elopement

-

@Kamil-Podlesak said in I, ChatGPT:

@HardwareGeek said in I, ChatGPT:

@topspin said in I, ChatGPT:

installing a plant as CEO

Guys, there is a proper English word for what Microsoft did: elopement

Oh, does the EU have a department of investigating the legitimacy of marriages that harasses people extra hard if they run off to Vegas?

-

-

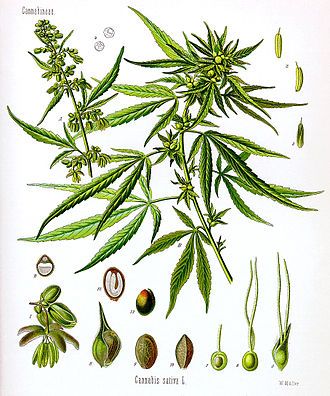

@dkf said in I, ChatGPT:

Should be an aspidistra.

I used the best thing I could find in image search. Also, TIL about that TV show; never heard of it before.

Fun fact: Aspidistras, or at least A. elatior, have the common name "cast iron plant" because they can survive conditions (dim light, over-/under-watering) that would kill most other houseplants.

-

@HardwareGeek said in I, ChatGPT:

TIL about that TV show; never heard of it before.

I hadn't thought about it much for many years, except for remembering that it was ruled by an aspidistra which struck me as a funny idea when I was young and so persisted. And one of the games did have a puzzle that had a clue hinted at by the saying "Richard Of York Gave Battle In Vain".

-

-

-

@HardwareGeek said in I, ChatGPT:

@dkf said in I, ChatGPT:

Should be an aspidistra.

I used the best thing I could find in image search. Also, TIL about that TV show; never heard of it before.

Thank god. I thought ye were making Eric Arthur Blair references and was about to downvote ye.

-

Most chicken breeds (especially the kind bred for their meat) are not able to sustain flight. So no, they really can't.

-

@Dragoon But what is the rAnGe of ThE dRoNEs?!

-

@dkf A better question is, are the drones free range? Because I will only eat meat from free range drones.

-

@Atazhaia This might be the one situation where "organic" makes a useful distinction.

No, wait, plastic falls under organic compounds. Never mind.

-

@Atazhaia said in I, ChatGPT:

are the drones free range?

I'm pretty sure that would violate many FAA regulations.

-

@DogsB said in I, ChatGPT:

ChatGPT output

"And lo, in the latter days, the skies were filled with the wings of Chick-fil-A's delivery drones, heralding the end of times. For it was written that when the world is awash in ease and convenience, brought forth by these flying chariots of sustenance, the final chapter shall commence. And humanity, in its comfort, shall witness the closing of an era, brought forth not by fire or ice, but by the quiet hum of machines delivering their final meals."

It's not bad, but there are dark times ahead if this replaces humans.

It mostly replaces the humans queuing up in drive-through, though, not the employees.

-

-

-

@HardwareGeek said in I, ChatGPT:

@Atazhaia said in I, ChatGPT:

are the drones free range?

I'm pretty sure that would violate many FAA regulations.

Birds don't need to follow the FAA's rules. Your drone can go anywhere if it's piloted by a chicken.

-

-

@error said in I, ChatGPT:

Sounds like the AI managers need to use Microsoft's AI Safety features to gain dynamic jailbreak protection!

-

@izzion dynamic protection - one day it's here and the next it's gone!

-

@Gustav said in I, ChatGPT:

@izzion dynamic protection - one day it's here and the next it's gone!

Just keep those Brinks trucks coming and it won't be a concern!

-

-

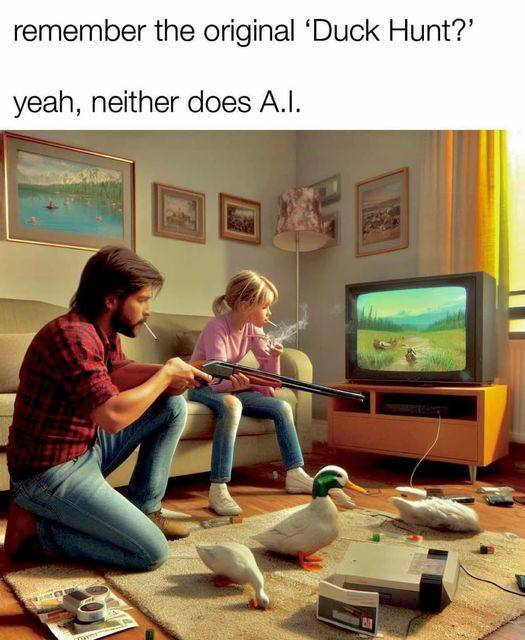

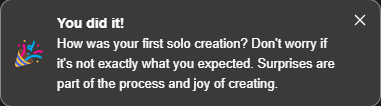

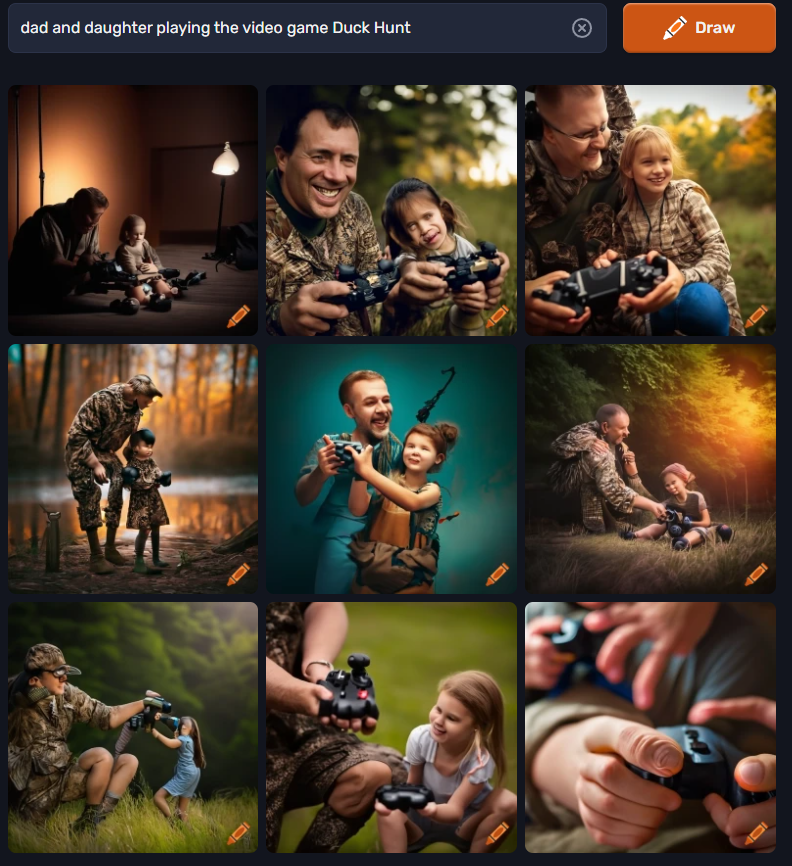

@boomzilla I would say no repro, but there are still real ducks and the wrong console on dall-e 3

-

@boomzilla Damn, get them hooked early, eh?

-

@sockpuppet7 said in I, ChatGPT:

@boomzilla I would say no repro, but there are still real ducks and the wrong console on dall-e 3

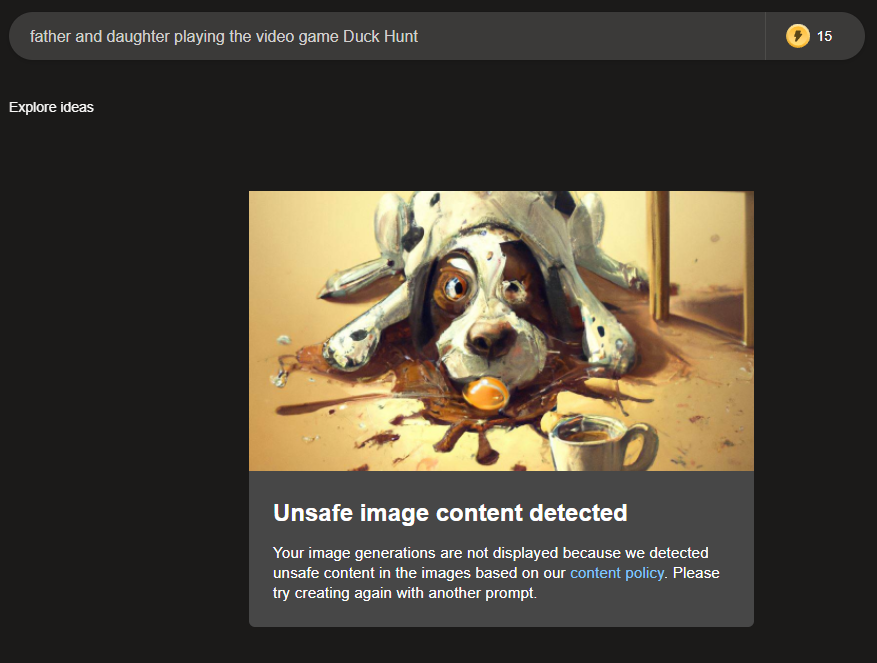

Mother fucker. I tried it and....

Edit: And I got this little banner.

-

@Tsaukpaetra said in I, ChatGPT:

I tried it

Adding direction gave something.

Craiyon is confused at what a daughter is most of the time, though a second run seemed to be... better...?

-

@Tsaukpaetra the very last image (that focused on the hand) makes it look like he's playing with his toe. That's no thumb...

-

-

@Tsaukpaetra said in I, ChatGPT:

@boomzilla Damn, get them hooked early, eh?

Perfectly normal for the 80s.

-

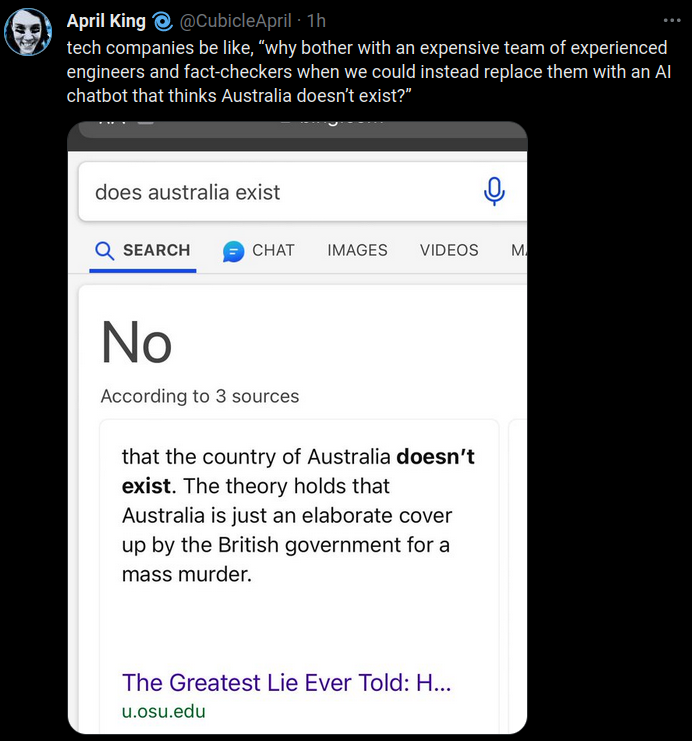

When I figure out how to reach through the screen and strangle someone the world will be a better place.


-

-

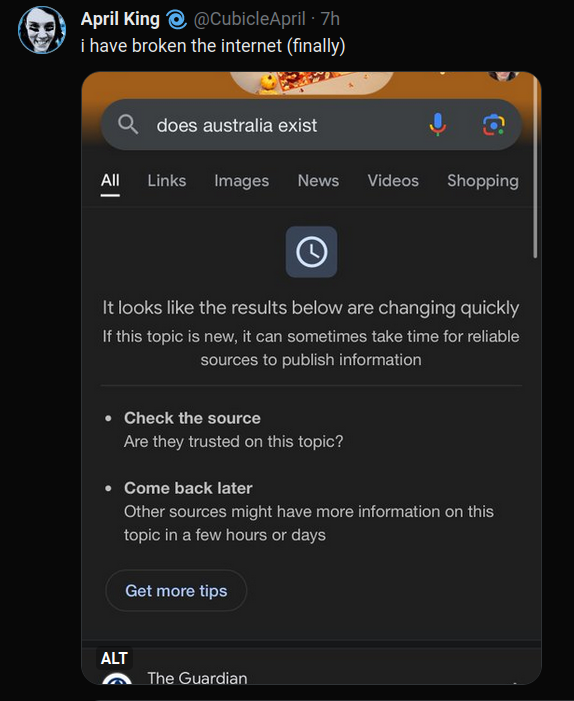

@LaoC said in I, ChatGPT:

It's getting hard to keep up with all those new continents. We'll have to use a computer if it goes on like this.

-

@izzion said in I, ChatGPT:

Of course the research article isn't open access.

So instead of actually reading it, I can only do what we do best: armchair analysis.

Wilkinson and his colleague Zewen Lu assessed the fake data set using a screening protocol designed to check for authenticity.

I assume this means they decided beforehand what checks to run, not do it on the fly. Good Practice.

This revealed a mismatch in many ‘participants’ between designated sex and the sex that would typically be expected from their name. Furthermore, no correlation was found between preoperative and postoperative measures of vision capacity and the eye-imaging test.

These are the kind of things you might expect the AI generated dataset to mess up if it's not carefully tuned, so this checks out.

Wilkinson and Lu also inspected the distribution of numbers in some of the columns in the data set to check for non-random patterns. The eye-imaging values passed this test, but some of the participants’ age values clustered in a way that would be extremely unusual in a genuine data set: there was a disproportionate number of participants whose age values ended with 7 or 8.

That doesn't make sense. Why would the AI generate this kind of data that fails the test?

I would have expected them to be able to find fake values if the generated ages are distributed uniformly, normally, or something like that, while a real-world data set is expected to have a different distribution that the AI just didn't take into account. E.g., if no children get the operation, if the sickness affects older people more, or if the age distribution even of healthy people is just generally different from what the AI produced.

But numbers ending in 7 or 8? There is no good reason why the AI would generate that kind of data, assuming the data isn't directly generated by an LLM, which might be biased with all kinds of shit, but is instead produced by Python code the LLM wrote, as is hinted in the first paragraph.

So I'm wondering if what they found here actually isn't a sign of fake data but just random chance of testing too many green jelly beans.
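To put rough numbers on that hunch, here is a minimal sketch of a last-digit check plus the jelly-bean arithmetic. Everything in it is an assumption for illustration: the article doesn't say which test Wilkinson and Lu actually ran, the function names are made up, the ages are just uniform random integers standing in for "data generated by LLM-written Python", and the count of 10 checks is arbitrary.

```python
import random
from collections import Counter

# Chi-square critical value for 9 degrees of freedom at alpha = 0.05
CHI2_CRIT_DF9_ALPHA05 = 16.919

def last_digit_chi2(values):
    """Chi-square statistic of the last digits against a uniform expectation."""
    counts = Counter(v % 10 for v in values)
    expected = len(values) / 10
    return sum((counts.get(d, 0) - expected) ** 2 / expected for d in range(10))

def familywise_error(alpha, n_tests):
    """Chance of at least one false alarm across n independent tests."""
    return 1 - (1 - alpha) ** n_tests

if __name__ == "__main__":
    rng = random.Random(0)
    # Hypothetical stand-in for code-generated ages: uniform over 40..89,
    # so every last digit is equally likely by construction.
    ages = [rng.randint(40, 89) for _ in range(300)]
    stat = last_digit_chi2(ages)
    print(f"chi2 = {stat:.2f}, flagged = {stat > CHI2_CRIT_DF9_ALPHA05}")
    # With 10 independent checks at alpha = 0.05, a false alarm is far from rare.
    print(f"P(at least one false alarm) = {familywise_error(0.05, 10):.2f}")
```

With 10 independent checks at the 5% level, the chance that at least one of them flags perfectly innocent data is about 40%, so a single odd-looking column isn't much evidence on its own unless they corrected for the number of checks.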