WTF Bites

-

@blakeyrat said in WTF Bites:

@tsaukpaetra said in WTF Bites:

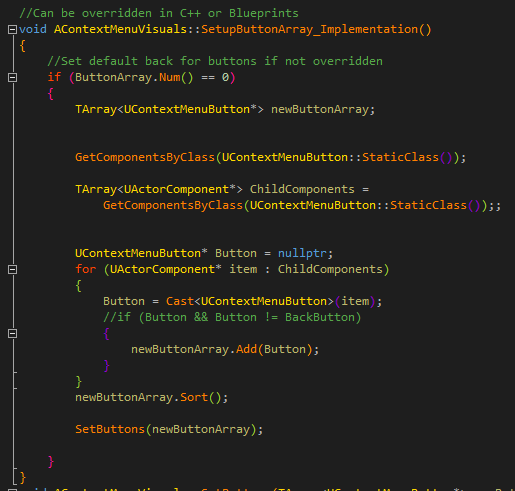

Made a loop to move to the next delectable button in an array:

Do you have a loop for the buttons that don't taste so good?

Yes.

-

@tsaukpaetra The color scheme--it hurts my eyes. And the goggles...the goggles do nothing.

-

@benjamin-hall said in WTF Bites:

@tsaukpaetra The color scheme--it hurts my eyes. And the goggles...the goggles do nothing.

You can thank Visual Assist for that.

-

@benjamin-hall said in WTF Bites:

@tsaukpaetra The color scheme--it hurts my eyes. And the goggles...the goggles do nothing.

I kinda dig the rainbowy parentheses.

-

I can't even fucking reproduce this. It just happens when I'm not paying attention. Fuck you, Hangouts.

-

Well, at least they aren't screwing up their marketing this way anym... oh.

https://www.youtube.com/watch?v=Mh3FN3YCwYk

Filed Under: Six years in which to learn all the wrong lessons. Good job, EA.

-

@benjamin-hall said in WTF Bites:

@tsaukpaetra The color scheme--it hurts my eyes. And the goggles...the goggles do nothing.

I kinda dig the rainbowy parentheses.

You can thank Viasfora for that one. ;)

-

@pie_flavor said in WTF Bites:

I can't even fucking reproduce this. It just happens when I'm not paying attention. Fuck you, Hangouts.

I blame Chrome. It's rather easy to do this when using the touch screen for me.

Then again, my laptop is special that way I think.

-

mbstowcs. Did the person who came up with that method just mash their hand over the keyboard?

The first few times I saw the name Stroustrup, I legitimately thought it was an obscure PHP string function.

-

@pie_flavor said in WTF Bites:

I can't even fucking reproduce this. It just happens when I'm not paying attention. Fuck you, Hangouts.

I can reproduce it 100% of the time with Microsoft's Skype and Teams instant messaging programs by minimizing them before the tooltip for the minimize button appears.

-

mbstowcs. Did the person who came up with that method just mash their hand over the keyboard?

After working for some time with WinAPI, these names start to make perfect sense to you. Or rather, you stop complaining about terrible names and just cry that a function that converts from multi-byte character string to wide-character string is needed at all.

-

mbstowcs. Did the person who came up with that method just mash their hand over the keyboard?

After working for some time with WinAPI, these names start to make perfect sense to you. Or rather, you stop complaining about terrible names and just cry that a function that converts from multi-byte character string to wide-character string is needed at all.

Why not just use UTF-8? What possible simplification does WTF-16 give you? You're still going to have multiple-array-element characters, even if you go to UCS-4. For example: 👨🏼👩🏻👧🏾👦🏻 is one character (although only Windows 10 currently renders it correctly).

-

mbstowcs. Did the person who came up with that method just mash their hand over the keyboard?

I sincerely hope it was their hand.

-

@ben_lubar said in WTF Bites:

For example: 👨🏼👩🏻👧🏾👦🏻 is one character (although only Windows 10 currently renders it correctly).

How many codepoints were harmed in the making of that character?

-

@ben_lubar said in WTF Bites:

For example: 👨🏼👩🏻👧🏾👦🏻 is one character (although only Windows 10 currently renders it correctly).

How many codepoints were harmed in the making of that character?

Here it is in UTF-16:

d83d dc68 d83c dffc 200d d83d dc69 d83c dffb 200d d83d dc67 d83c dffe 200d d83d dc66 d83c dffb
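For anyone who wants to count for themselves, here is a rough Python sketch that decodes the dump above. It assumes the units are listed in big-endian order; the names `UNITS_HEX` and `decode_utf16_units` are made up for this illustration.

```python
# UTF-16 code units copied from the dump above (hex, assumed big-endian).
UNITS_HEX = (
    "d83d dc68 d83c dffc 200d d83d dc69 d83c dffb 200d "
    "d83d dc67 d83c dffe 200d d83d dc66 d83c dffb"
)


def decode_utf16_units(hex_dump: str) -> str:
    """Turn a whitespace-separated dump of 16-bit code units into a str."""
    raw = b"".join(int(unit, 16).to_bytes(2, "big") for unit in hex_dump.split())
    return raw.decode("utf-16-be")


text = decode_utf16_units(UNITS_HEX)

# A Python str is a sequence of code points, so len() counts code points.
code_points = [f"U+{ord(ch):04X}" for ch in text]
utf16_units = len(text.encode("utf-16-le")) // 2
utf8_bytes = len(text.encode("utf-8"))

print(code_points)
print(len(text), "code points,", utf16_units, "UTF-16 units,", utf8_bytes, "UTF-8 bytes")
```

So the one "character" is several array elements in every encoding, which is the point being made.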

-

@ben_lubar said in WTF Bites:

Why not just use UTF-8?

Because Win32 API doesn't do UTF-8.

That's what I was asking - what does Windows gain from using wider-than-a-byte-per-character encodings internally?

-

@ben_lubar said in WTF Bites:

what does Windows gain from using wider-than-a-byte-per-character encodings internally?

Now? Nothing worthwhile. At the time when they took the decision, they thought it would let them get away without needing to handle variable-width characters in the OS (which was more of a pressing problem for Windows than normal Unix due to the support for things like case insensitivity). Unicode changed underneath their feet and after the fact.

-

@ben_lubar said in WTF Bites:

That's what I was asking - what does Windows gain from using wider-than-a-byte-per-character encodings internally?

Sorry, misunderstood. I guess the answer these days is "nothing". AFAIK it's a legacy decision that they've never revisited. As much as I'd like Win32 to offer UTF-8 versions of their API, it's probably not going to happen (and if it does, it'll probably only be available on the newest Windows versions, so it'll be quite some time before it's reasonable to rely on its availability).

Edit:

'd

-

@ben_lubar said in WTF Bites:

Why not just use UTF-8? What possible simplification does WTF-16 give you?

Histerical raisins. As I understand the history, it went approximately like this:

-

The first version of Unicode was designed with the idea that 16 bits should be enough to fit all the characters and thus UCS2 was used.

-

Various software vendors realized the character set mess is dragging them down and jumped on the bandwagon. This included things like:

- The `wchar_t` being defined for C and C++ and all the string functions getting their ‘wide’ counterparts.
- Microsoft similarly duplicating each and every function in Windows API to have a `char` and `wchar_t` variant, and, to ‘simplify’ writing code that could be compiled in both ‘narrow’ and ‘wide’ version, adding all that `TCHAR` mess.
- Other languages like Java and JavaScript starting to use UCS2 for their internal representation.

-

Meanwhile other software engineers with less free time on their hands thought how to avoid following Microsoft down the path of schizophrenia and came up with UTF-8, a variable-length encoding backward compatible with ASCII.

-

And then some time after Unicode 2.0 was released came a big uh-oh moment: more characters were requested than could fit in 16 bits. Another round of schizophrenia ensued:

- Those who didn't bake `wchar_t` into their system interfaces followed the specification (which simply says it can hold any supported character) and extended it to 32 bits.
- But this time Microsoft and others who did bake it in didn't want to create yet another version of everything, so this time they did what they should have done the first time around: looked for a backward-compatible solution. UTF-16 was born. And because there were already assigned code-points in the 0xF000–0xFFFF block, very importantly the 0xFEFF zero-width no-break space used as byte-order mark, it got encoded the insane way it was.

So now UTF-16 is also a variable-length encoding, providing absolutely zero benefit over UTF-8, and a handful of disadvantages, but it's baked into the API of Windows and some other systems. And they are not alone: Java and JavaScript also actually use ‘WTF-16’, the version where correct surrogate pairs are interpreted as per Unicode, but lone surrogates may appear.
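To make the surrogate-pair business concrete, here is a minimal Python sketch of the arithmetic. The function names are hypothetical helpers for this post, not anything from a real API, and the lone-surrogate check only shows how Python's strict UTF-8 encoder behaves.

```python
from typing import Tuple


def to_surrogate_pair(code_point: int) -> Tuple[int, int]:
    """Split a code point above U+FFFF into a UTF-16 (high, low) pair."""
    if not 0x10000 <= code_point <= 0x10FFFF:
        raise ValueError("only U+10000..U+10FFFF are encoded as a pair")
    offset = code_point - 0x10000        # 20 bits are left after the subtraction
    high = 0xD800 + (offset >> 10)       # top 10 bits go into the high surrogate
    low = 0xDC00 + (offset & 0x3FF)      # bottom 10 bits go into the low surrogate
    return high, low


def from_surrogate_pair(high: int, low: int) -> int:
    """Recombine a (high, low) surrogate pair into one code point."""
    if not (0xD800 <= high <= 0xDBFF and 0xDC00 <= low <= 0xDFFF):
        raise ValueError("not a valid surrogate pair")
    return 0x10000 + ((high - 0xD800) << 10) + (low - 0xDC00)


high, low = to_surrogate_pair(0x1F468)   # U+1F468 MAN
print(f"{high:04x} {low:04x}")

# A lone surrogate can sit in a 16-bit string, but it is not valid Unicode
# text, so a strict UTF-8 encoder refuses it.
try:
    "\ud83d".encode("utf-8")
    lone_ok = True
except UnicodeEncodeError:
    lone_ok = False
print("lone surrogate encodable as UTF-8:", lone_ok)
```

The pair for U+1F468 matches the first two units of the UTF-16 dump quoted earlier in the thread.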

-

Oh, great, NVIDIA has a localized page for Sweden these days. And, it's of course totally useless. (Easy to find links to driver downloads? Nah, who needs those?)

-

Minor -- I was applying for homestead exemption on my new house, and it asked me to verify my email address. Case sensitively. Is that a thing?

Oh, and the electronic forms for dates/other formatted numbers didn't respect backspace. You had to move the cursor back manually and type over the previously-entered data.

-

@benjamin-hall said in WTF Bites:

Is that a thing?

Hostnames are case insensitive when using ASCII, and who knows when using one of the non-ASCII domain names? Case sensitivity of mailbox names is entirely up to the receiving mail server — it doesn't even need to be consistent with itself, and could be one thing for one user and another for another — and there's no sane way for anything to query which is the case this time. Given all that, assuming case insensitive email addresses would be really dodgy.

Exact match is easy for computers. Give the poor machine a break!
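If a form really wanted to be lenient without guessing, a cautious comparison might look something like this Python sketch. It assumes plain ASCII hostnames and a single `local@domain` shape; `same_mailbox` is a made-up name for the illustration.

```python
def same_mailbox(a: str, b: str) -> bool:
    """Compare two addresses: domain case-insensitively, local part exactly.

    Assumption: ASCII hostnames only. Non-ASCII domains are out of scope.
    """
    local_a, at_a, domain_a = a.rpartition("@")
    local_b, at_b, domain_b = b.rpartition("@")
    if not (at_a and at_b):
        raise ValueError("expected addresses of the form local@domain")
    # The mailbox name is the receiving server's business, so match it exactly.
    return local_a == local_b and domain_a.lower() == domain_b.lower()


print(same_mailbox("Bob@Example.COM", "Bob@example.com"))   # True
print(same_mailbox("bob@example.com", "Bob@example.com"))   # False
```

Folding only the hostname is the part that is safe for ASCII; anything more is a guess about the receiving server.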

-

@ben_lubar said in WTF Bites:

That's what I was asking - what does Windows gain from using wider-than-a-byte-per-character encodings internally?

Nothing; they built it before UTF-8 existed. (And it was way better than the old system of "code pages", or "scripts" as Mac Classic called them.)

Same reason Java for example doesn't use UTF-8.

The only reason all those Linuxes support UTF-8 is because they didn't fucking solve the problem of character encoding at all for so many years that by the time they got around to think, "hm, I wonder if Chinese people want to use computers..." better character encodings were available. So yes, open source people slacked off, didn't solve a problem that literally billions of potential computer users had, and as a side-effect managed to luck into a better solution than the companies that actually gave a fuck about their users.

That's not a reason to praise Linux.

-

@blakeyrat said in WTF Bites:

Same reason Java for example doesn't use UTF-8.

It does use UTF-8 for many serialisation formats, but not for normal strings inside the program itself. The format in memory is not important IMO, so long as the semantics are right and performance isn't hammered. (Yeah, that's actually difficult to get right…)

-

How the hell is this happening?!?!?!

-

As much as I'd like Win32 to offer UTF-8

Get ready for `CreateWindowExUtf8`!!!

-

@boomzilla said in WTF Bites:

As much as I'd like Win32 to offer UTF-8

Get ready for `CreateWindowExUtf8`!!!

Yeah, but you can also just define `_UTF8` or something before including `windows.h`, and it'll set up a number of convenient `#define`s like `#define CreateWindowEx CreateWindowExUtf8` for you...

-

@cvi Meh...The way I use UTF8 is identical to ASCII.

-

@tsaukpaetra said in WTF Bites:

How the hell is this happening?!?!?!

Have you been using Rust nightly?

-

@tsaukpaetra said in WTF Bites:

How the hell is this happening?!?!?!

Have you been using Rust nighly?

I... don't know what that means, TBH.

-

@tsaukpaetra it means a typo.

-

@tsaukpaetra it means a typo.

Even without the typo I still don't comprehend...

-

@Tsaukpaetra https://en.wikipedia.org/wiki/Rust_(programming_language) and https://en.wikipedia.org/wiki/Daily_build and https://web.archive.org/web/20150514152717/http://www.rust-lang.org:80/

-

And

I was referring to the disclaimer that used to appear at the bottom of Rust home page.

Ah. Obscure is as obscure does.

-

@gąska Oh hell I thought you were talking about the video game Rust.

You suck at jokes.

-

@boomzilla said in WTF Bites:

@cvi Meh...The way I use UTF8 is identical to ASCII.

𝕋𝕙𝕒𝕥 🇸🇴🇺🇳🇩🇸 ⓙⓤⓢⓣ 𝖘𝖔𝖔𝖔𝖔𝖔𝖔𝖔𝖔𝖔 🄱🄾🅁🄸🄽🄶⊡

-

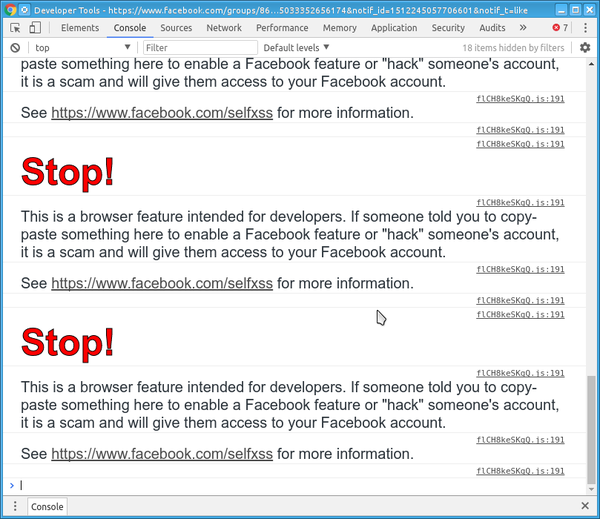

Ugh...Facebook wasn't taking me to some posts when I clicked on notifications, so I popped open the console to see if there was an error or something.

OTOH, selfxss sounds kinda kinky and probably against their ToS.

-

@tsaukpaetra

mama sock has a side thing with the milk man's socks?

-

@boomzilla said in WTF Bites:

@blakeyrat said in WTF Bites:

That's not a reason to praise Linux.

Laziness is always praiseworthy.

Breaking: Ubuntu 18.04 to be named kneeling warthog

-

@boomzilla said in WTF Bites:

Ugh...Facebook wasn't taking me to some posts when I clicked on notifications, so I popped open the console to see if there was an error or something.

OTOH, selfxss sounds kinda kinky and probably against their ToS.

Discord does the same thing. I like that they've cottoned on like that.

-

*sigh*

-

@blakeyrat said in WTF Bites:

@ben_lubar said in WTF Bites:

That's what I was asking - what does Windows gain from using wider-than-a-byte-per-character encodings internally?

Nothing; they built it before UTF-8 existed. (And it was way better than the old system of "code pages", or "scripts" as Mac Classic called them.)

Same reason Java for example doesn't use UTF-8.

The only reason all those Linuxes support UTF-8 is because

~~they didn't fucking solve the problem of character encoding at all for so many years that by the time they got around to think, "hm, I wonder if Chinese people want to use computers..."~~ they were desperately and somewhat foolishly trying to maintain compatibility with a system that was introduced at a time when even ASCII support was wishful thinking for most other OSes, and were so focused on doing that, that they got trapped in a timepod for decades which they never entirely got out of.

FTFY, and in a way which, depending on how you look at it, might be considered even more Word Of Blakey Compliant than Blakey's was.

-

@blakeyrat said in WTF Bites:

The only reason all those Linuxes support UTF-8 is because they didn't fucking solve the problem of character encoding at all for so many years that by the time they got around to think, "hm, I wonder if Chinese people want to use computers..." better character encodings were available. So yes, open source people slacked off, didn't solve a problem that literally billions of potential computer users had, and as a side-effect managed to luck into a better solution than the companies that actually gave a fuck about their users.

That's not a reason to praise Linux.

We know you hate Linux, but it can't be blamed for anything here, because these events for the most part happened before it even existed (or while it was being written, but before anybody really used it). Also, back then there were many commercial vendors of Unix systems, so it had nothing to do with open-source either.

-

@blakeyrat I vaguely recall you also ranting about "the fact that a software project innovated in the past doesn't mean it deserves praise or users, what matters is what it can do now".

-

@blakeyrat said in WTF Bites:

The only reason all those Linuxes support UTF-8 is because they didn't fucking solve the problem of character encoding at all for so many years that by the time they got around to think, "hm, I wonder if Chinese people want to use computers..." better character encodings were available. So yes, open source people slacked off, didn't solve a problem that literally billions of potential computer users had, and as a side-effect managed to luck into a better solution than the companies that actually gave a fuck about their users.

That's not a reason to praise Linux.

We know you hate Linux, but it can't be blamed for anything here, because these events for the most part happened before it even existed (or while it was being written, but before anybody really used it). Also, back then there were many commercial vendors of Unix systems, so it had nothing to do with open-source either.

Doesn't have to be "bad" per se, but the message is that they shouldn't be praised for their wonderful Unicode support either, as that wasn't their fault either.

-

Previously, we determined that the slide show TVs in my college's cafeteria were attempting to read `Thumbs.db`, `.`, and `..` as image files. Now, observe the majesty of the video TVs.

https://i.imgur.com/NlIkYvN.jpg

No, it doesn't stop doing this.

-

@pie_flavor said in WTF Bites:

Now, observe the majesty of the video TVs.

Have they been network enabled to allow them to install their bonus spyware yet?

-

@pie_flavor said in WTF Bites:

Now, observe the majesty of the video TVs.

Have they been network enabled to allow them to install their bonus spyware yet?

The streaming video alone probably takes up the entire access point's bandwidth. No room for spyware here.
