WTF Bites

-

The proposed solution? If you're done for the day then you're required to switch off the mouse.

is using a mouse with a laptop. I currently have a mouse and a keyboard plugged into mine. And a 2nd monitor. Oh, I guess that just makes it a desktop now... (when traveling, the mouse stays home)

-

@dcon I have two monitors and don't use the built-in one, so it's even more desktopy. And I don't carry the mouse around when using its notebookiness either.

-

I currently have a mouse and a keyboard plugged into mine. And a 2nd monitor. Oh, I guess that just makes it a desktop now... (when traveling, the mouse stays home)

My work laptop exists so it can travel between the docking station at home and the docking station at work. Saves hassle now that I'm working at home since we're not allowed to leave it at work overnight.

-

since we're not allowed to leave it at work overnight.

We weren't allowed to leave it out. Having a lock cable on it was ok. Or being locked in the desk drawer. It didn't come home until KungFlu.

-

It didn't come home until KungFlu.

Mine would travel back and forth every day (the “benefits” of a long commute by train include being able to get something done on the move). When we locked down, it (and I) just stayed home; no disruption at all.

-

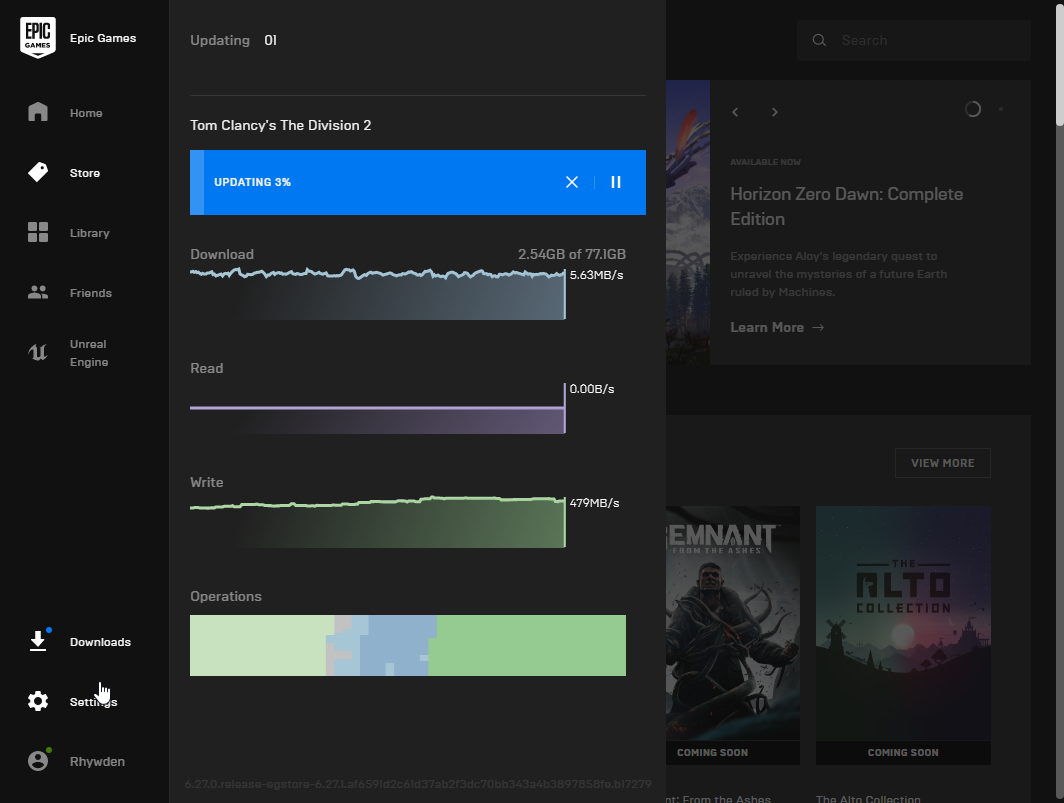

WTF of my day: Someone obviously never heard of "delta patching". This is a patch for an actually installed game.

-

WTF of my day: Someone obviously never heard of "delta patching".

Game ~~developers~~managers: " "

-

Git

You didn't misremember, but the whole thing was a decent WTF itself. What happened, from what I remember, was the first full SHA-1 collision was published so work on fixing git to handle SHA-1 collisions became more urgent. Now if you are going to try to fix git to handle collisions, of course you'll want to test it. What you probably shouldn't do is check in the two colliding files from the research paper in plaintext in your unfixed git repo...
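For anyone wondering why a SHA-1 collision matters to git at all: git names every stored object by a SHA-1 digest taken over a short header plus the content. Here's a minimal sketch of the blob case. `git_blob_id` is a made-up helper name for illustration, not part of any git API, and it only covers repositories using the SHA-1 object format.

```python
import hashlib


def git_blob_id(content: bytes) -> str:
    """Return the object ID a SHA-1 git repository assigns to a blob."""
    # The hashed data is "blob <size in bytes>\0" followed by the raw content.
    header = b"blob " + str(len(content)).encode("ascii") + b"\0"
    return hashlib.sha1(header + content).hexdigest()


if __name__ == "__main__":
    # Same answer `git hash-object` would give for a file with these bytes.
    print(git_blob_id(b"hello world\n"))
```

Since the ID is the only name an object has, two different objects with the same ID are exactly the case the storage layer was never built to tell apart.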

-

It's probably been posted somewhere else already™:

-

The laptops are locked down in a way that you cannot even set the wallpaper.

This may or may not be the biggest

, but it's certainly high on the list.

-

WTF of my day: Someone obviously never heard of "delta patching". This is a patch for an actually installed game.

If it's an Unreal Engine game (which, being in Epic Games launcher, basically makes it a prerequisite) then delta patching might easily be broken if the dev's packing system gets off-by-one'd by a file early in the package.

Last I remember they were supposedly doing 2 mbit chunks, but who knows...

-

@Zerosquare awesome, I can probably get 4 spare of those at Ikea for 20 bucks.

-

Maybe, but they wouldn't come with the Special Apple Screws™ needed to attach them

-

@Tsaukpaetra said in WTF Bites:

If it's a Unreal Engine game (which, being in Epic Games launcher, basically makes it a prerequisite) then delta patching might easily be broken if the dev's packing system gets off-by-one'd by a file early in the package.

Any decent delta-patching tool can handle insertions and deletions.
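To make that concrete, here's a rough sketch of a delta that survives an insertion near the start of a file. It uses Python's `difflib.SequenceMatcher` purely as a stand-in (real patchers use dedicated binary formats such as bsdiff or xdelta), and the op format and the names `make_delta`, `apply_delta` and `delta_payload_size` are invented for this example.

```python
from difflib import SequenceMatcher


def make_delta(old: bytes, new: bytes):
    """Build a list of copy/insert ops that rebuilds `new` from `old`."""
    ops = []
    matcher = SequenceMatcher(None, old, new, autojunk=False)
    for tag, i1, i2, j1, j2 in matcher.get_opcodes():
        if tag == "equal":
            # Unchanged run: just reference the byte range in the old file.
            ops.append(("copy", i1, i2))
        elif tag in ("replace", "insert"):
            # New bytes have to be shipped in the patch itself.
            ops.append(("insert", new[j1:j2]))
        # "delete" needs no op: the old bytes are simply never copied.
    return ops


def apply_delta(old: bytes, ops) -> bytes:
    """Rebuild the new file from the old file plus the delta ops."""
    out = bytearray()
    for op in ops:
        if op[0] == "copy":
            out += old[op[1]:op[2]]
        else:
            out += op[1]
    return bytes(out)


def delta_payload_size(ops) -> int:
    """Number of literal bytes the patch has to carry."""
    return sum(len(op[1]) for op in ops if op[0] == "insert")


if __name__ == "__main__":
    old = b"".join(i.to_bytes(2, "big") for i in range(1000))
    new = old[:10] + b"\xff" + old[10:]  # one byte inserted near the start
    ops = make_delta(old, new)
    assert apply_delta(old, ops) == new
    print(len(new), "byte file, patch carries", delta_payload_size(ops), "literal byte(s)")
```

Everything after the inserted byte is still found as one long copy, just at a different offset, which is the whole point.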

-

@Zerosquare said in WTF Bites:

Maybe, but they wouldn't come with the Special Apple Screws™ needed to attach them

-

@Zerosquare said in WTF Bites:

@Tsaukpaetra said in WTF Bites:

If it's a Unreal Engine game (which, being in Epic Games launcher, basically makes it a prerequisite) then delta patching might easily be broken if the dev's packing system gets off-by-one'd by a file early in the package.

Any decent delta-patching tool can handle insertions and deletions.

But you repeat yourself.

-

@Zerosquare said in WTF Bites:

It's probably been posted somewhere else already™:

Uhhh...what?

There's nothing particularly special about Cupertino's version. They let you move your computer without having to carry it. That's it.

How heavy are Apple's computers these days?

-

@boomzilla said in WTF Bites:

Uhhh...what?

I tried to come up with a snarky comment yesterday when I first saw the post. But the whole thing is just too ridiculous. Even by Apple standards. Eventually, I figured I'd just move on and try to forget about it.

Either way, if somebody were to try to sell $700 (or $200) computer box wheels on Amazon, I'd wonder if they weren't part of a money laundering operation.

-

@Tsaukpaetra Alternatively, game data is encrypted. I believe Division 2 doesn't exactly need any DRM as it's online-only anyway, but it may be there to prevent data mining.

-

But the whole thing is just too ridiculous. Even by Apple standards.

No, it's not. Apple is an ongoing experiment on how much it's possible to brainwash people for money. They try to wrap it up for years and years, but they just push ahead with no barrier in sight.

-

But the whole thing is just too ridiculous. Even by Apple standards.

You seem to have forgotten about this one:

EDIT: Hmm, I googled for an English article, yet what I found was a .de site? Whatever

-

From the

:

:Anonymous') OR 1=1; DROP TABLE wtf; -- (unregistered) in reply to Applied Mediocrity

Fun fact: many of the early PHP functions have such weird names because, I kid you not, the hash function used by the parser was strlen().

Assert.AreEqual(TRWTF.GetUnderlyingWTF(), "PHP") never fails, but... bloody hell.
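If that anecdote is accurate, the consequence is easy to picture: with the name length as the hash, every function of the same length lands in the same bucket, so the only way to spread lookups out is to vary the lengths of the names. A toy sketch of that, where `strlen_hash` and `bucket_by` are made-up names and the table layout is an assumption, not PHP's actual implementation:

```python
from collections import defaultdict


def strlen_hash(name: str) -> int:
    """The 'hash' from the anecdote: just the length of the name."""
    return len(name)


def bucket_by(hash_fn, names):
    """Group names by hash value, preserving insertion order within a bucket."""
    buckets = defaultdict(list)
    for name in names:
        buckets[hash_fn(name)].append(name)
    return dict(buckets)


if __name__ == "__main__":
    names = ["strlen", "strpos", "substr", "explode", "implode", "htmlspecialchars"]
    for length, bucket in sorted(bucket_by(strlen_hash, names).items()):
        print(length, bucket)
```

Three six-letter names pile into one bucket while `htmlspecialchars` gets one all to itself, which would be a decent incentive to pick odd-length names.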

-

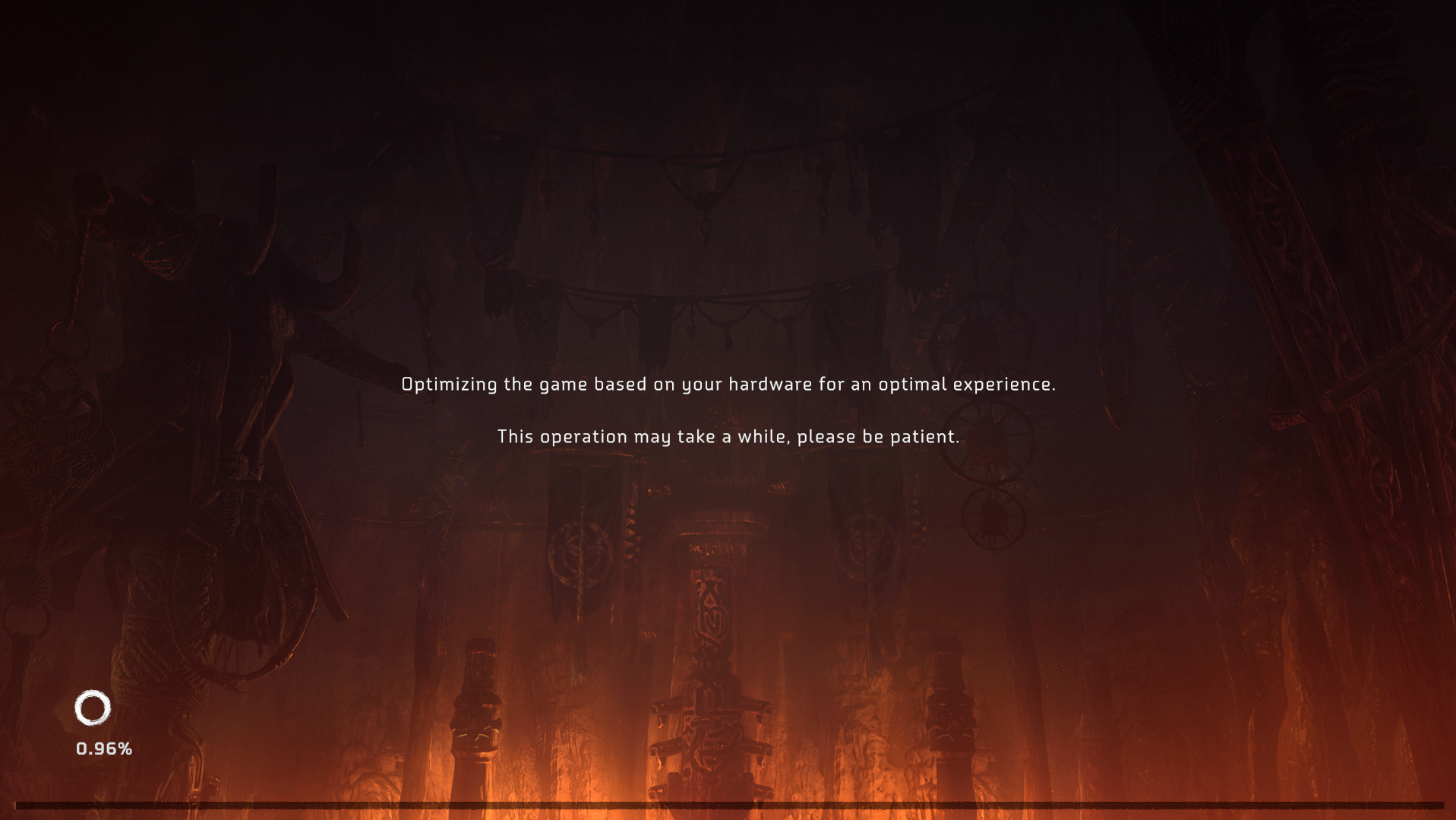

Horizon Zero Dawn (PC):

Yes, 0.96% was indeed just shy of 1%. And they were not kidding about it taking a while.

Earlier today I read that it uses about 18 GB of RAM while building its shader cache, hitting virtual memory pretty hard on many machines. (My gaming machine only has 16, for example.)

Shaders are code. They're tiny text files; the really big ones are a few kilobytes in size. What in the world does any program need even 1 GB for, let alone 18, to build its set of shaders?

-

@dkf Well, some people don't like the trackpad, so...

This statement implies the existence of some people who do

-

@Mason_Wheeler Windows 10 made multi-touch touchpads quite useful with its semi-configurable 3-finger and 4-finger swipes. I use 3-finger swipes to switch between virtual desktops. The smooth scrolling between them looks awesome.

-

@Zerosquare awesome, I can probably get 4 spare of those at Ikea for 20 bucks.

As I have recently learned, be prepared to wait about half an hour just to get in the door. Christ. First line I've had to deal with in the pandemic.

-

Status: Why are passwords being stored in error logs?

-

@Applied-Mediocrity said in WTF Bites:

@Tsaukpaetra Alternatively, game data is encrypted. I believe Division 2 doesn't exactly need any DRM as it's online-only anyway, but it may be there to prevent data mining.

Yeah, but the encryption keys don't often change, and since the blocks are (supposed to be) the same size as the chunks, a shift in one block shouldn't affect the rest unless it shifts into another block, which cascades down regardless if it's encrypted or not (until the shifting becomes small enough to not push into the next block, naturally).
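A quick way to see the cascade described here: hash a file in fixed-size chunks, then compare an in-place change against an insertion. This is only a sketch under assumptions: the 4 KiB chunk size, SHA-256 and the helper names are picked for illustration and say nothing about what any real launcher does.

```python
import hashlib


def chunk_hashes(data: bytes, chunk_size: int):
    """Hash each fixed-size chunk of the data (last chunk may be short)."""
    return [
        hashlib.sha256(data[i:i + chunk_size]).hexdigest()
        for i in range(0, len(data), chunk_size)
    ]


def changed_chunks(old: bytes, new: bytes, chunk_size: int) -> int:
    """Count chunks that would need re-downloading, position by position."""
    old_h = chunk_hashes(old, chunk_size)
    new_h = chunk_hashes(new, chunk_size)
    differing = sum(1 for a, b in zip(old_h, new_h) if a != b)
    # Chunks that exist on only one side count as changed too.
    return differing + abs(len(old_h) - len(new_h))


if __name__ == "__main__":
    chunk = 4096
    # Deterministic filler in which neighbouring bytes always differ.
    old = bytes((i * 31 + 7) % 251 for i in range(64 * 1024))
    in_place = old[:100] + bytes([old[100] ^ 0xFF]) + old[101:]
    inserted = b"\x00" + old
    print("in-place edit:", changed_chunks(old, in_place, chunk), "chunk(s) changed")
    print("one-byte insert:", changed_chunks(old, inserted, chunk), "chunk(s) changed")
```

The in-place edit touches a single chunk, while the one-byte insertion at the front shifts every chunk boundary after it, so a purely positional comparison sees the whole file as new.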

-

@Tsaukpaetra said in WTF Bites:

Status: Why are passwords being stored in error logs?

That's not a password. That's a rejected new password. Since nobody would even consider trying to use a password a second time, logging it is safe.

-

@Tsaukpaetra said in WTF Bites:

Yeah, but the encryption keys ~~don't often change~~ are stored alongside the game, otherwise you couldn't play it

-

@Tsaukpaetra said in WTF Bites:

Yeah, but the encryption keys ~~don't often change~~ are stored alongside the game, otherwise you couldn't play it

Same difference. I don't really know why UE4 bothers except maybe as file verification?

-

@Mason_Wheeler said in WTF Bites:

Horizon Zero Dawn (PC):

Yes, 0.96% was indeed just shy of 1%. And they were not kidding about it taking a while.

Earlier today I read that it uses about 18 GB of RAM while building its shader cache, hitting virtual memory pretty hard on many machines. (My gaming machine only has 16, for example.)

Shaders are code. They're tiny text files; the really big ones are a few kilobytes in size. What in the world does any program need even 1 GB for, let alone 18, to build its set of shaders?

I could easily be the

here, but if I find the thread again I'll let everyone know. (I don't even know what all shaders do, outside of what you can infer from the name.)

-

I don't even know what all shaders do

They're basically programs that run on the GPU. You tell the GPU "draw this geometry with this shader and these inputs" and it produces a rendered view of a 3D model on your screen.

A shader cache is a GPU trick that precompiles your shaders from the high-level shader language to your specific GPU's machine code and saves them to your hard drive so you won't have to do that while the game is running. It should require a few MB tops of memory to operate.

-

@Mason_Wheeler said in WTF Bites:

They're basically programs that run on the GPU. You tell the GPU "draw this geometry with this shader and these inputs" and it produces a rendered view of a 3D model on your screen.

A shader cache is a GPU trick that precompiles your shaders from the high-level shader language to your specific GPU's machine code and saves them to your hard drive so you won't have to do that while the game is running. It should require a few MB tops of memory to operate.

To elaborate a bit... There are several different kinds of shaders. A standard graphics pipeline typically has a vertex shader and a pixel/fragment shader, plus optionally geometry and tessellation (hull/control + domain/evaluation). Additionally, there are general-purpose compute shaders. (Newer things like ray tracing introduce an additional few types, e.g., ray generation, hit, miss and intersection shaders. Additionally, NVIDIA has a few types relating to their mesh shader stuff.)

Shaders are written in a lobotomized C-like language, and either handed over to the graphics API as straight source code or in some intermediate representation (e.g., DXIL or SPIR-V). There, the intermediate representation is recompiled by the drivers to whatever the target hardware runs natively. Quite a lot of optimization still takes place at that step, since hardware capabilities change quite a bit (even between different generations of GPUs from the same vendor), so this isn't necessarily super cheap, even if precompiled to the intermediate representation.

A pixel/fragment shader in particular is a small program that runs for each drawn pixel. The output can be a color (if you're computing an image to be shown on screen) or anything else really (intermediate results). Pixel shaders have a tendency to run quite a few times. For example, a full-screen pass at 1920x1080 resolution results in ~2M invocations of the pixel shader program. Rendering a 3D view may be even worse, due to overdraw. Optimizing such shaders is therefore somewhat important, as even a few instructions per invocation (or per loop inside the shaders) can make a measurable difference in end performance. Some of the optimizations (or even just parameters) will depend on the hardware and its peculiarities that the shader ends up running on (and, yes, those can change performance by several factors quite easily).
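The ~2M figure is just width times height. A back-of-the-envelope sketch, where the 60 fps frame rate and the 2.5x overdraw factor are assumptions picked for illustration rather than measurements from any game:

```python
def pixel_shader_invocations(width: int, height: int, overdraw: float = 1.0) -> int:
    """Invocations for one pass: one per covered pixel, scaled by overdraw."""
    return round(width * height * overdraw)


if __name__ == "__main__":
    full_screen = pixel_shader_invocations(1920, 1080)
    print("one full-screen pass:", full_screen)            # 2073600
    print("per second at 60 fps:", full_screen * 60)       # 124416000
    print("one pass with 2.5x overdraw:",
          pixel_shader_invocations(1920, 1080, overdraw=2.5))  # 5184000
```

At those counts, shaving even a handful of instructions per invocation adds up quickly.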

I don't know what the Decima engine is doing, but as a completely wild-ass guess, it's compiling and benchmarking a lot of different permutations of shaders (different parameters/settings, different optimizations, perhaps even alternative implementations of certain passes -- something like SSAO springs to mind here). That would explain the massive memory consumption as well: it would have to load significant chunks of assets to have good test coverage for the benchmarks, such that it can select a good combination of settings that fits the whole game (and not just one particular level/area). But, for once, it doesn't matter if those assets are fully resident on the GPU all the time (so, swapping in and out of the GPU or even paging is OK).

(The other option that I was considering was that it was transcoding textures to whatever format the GPU prefers. But that doesn't fit on two accounts: PC GPUs / DirectX all support the BC formats, and it wouldn't explain the massive memory consumption mentioned upthread.)

-

but as a completely wild-ass guess, I would perhaps guess that it's compiling and benchmarking a lot of different permutations of shaders (different parameters/settings, different optimizations, perhaps even alternative implementations of certain passes -- something like SSAO springs into mind here). That would explain the massive memory consumption as well

Sounds like something a gentoo programmer would release.

-

@topspin Gentoo doesn't benchmark different configurations automatically, though (you need to specify --omg-optimize yourself).

Autotuning during builds (or even at runtime) isn't completely unheard of, though. ATLAS springs to mind, for example.
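For anyone who hasn't met autotuning before, the core loop is tiny: run each candidate implementation on a representative workload, time it, keep the fastest. A minimal sketch of that idea follows. The candidate functions and the `autotune` helper are invented for illustration, and this is not how ATLAS or any game engine actually does it.

```python
import time


def autotune(candidates, workload, repeats=5):
    """Time every candidate on the workload; return (best_name, timings)."""
    timings = {}
    for name, fn in candidates.items():
        best = float("inf")
        for _ in range(repeats):
            start = time.perf_counter()
            fn(workload)
            # Keep the best of several runs to reduce timing noise.
            best = min(best, time.perf_counter() - start)
        timings[name] = best
    return min(timings, key=timings.get), timings


def sum_squares_loop(xs):
    total = 0
    for x in xs:
        total += x * x
    return total


def sum_squares_builtin(xs):
    return sum(x * x for x in xs)


if __name__ == "__main__":
    candidates = {"loop": sum_squares_loop, "builtin": sum_squares_builtin}
    best, timings = autotune(candidates, list(range(100_000)))
    print("picked:", best)
    for name, seconds in timings.items():
        print(f"  {name}: {seconds * 1000:.2f} ms")
```

Which candidate wins depends on the machine it runs on, which is exactly why the result can't simply be shipped with the binary.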

-

Autotuning during builds (or even at runtime) isn't completely unheard of, though. ATLAS springs into mind, for example.

I had that exact thought, too, actually. But doing something once every time you install a multi-million dollar machine isn't quite comparable to doing it for every desktop user.

-

But doing something once every time you install a multi-million dollar machine isn't quite comparable to doing it for every desktop user.

True. (Although, last time I installed ATLAS on a Gentoo desktop, it did the autotuning stuff for my machine.)

Might be related to the fact that Decima/Horizon Zero Dawn mainly targeted the PS4. There you'd essentially run the autotuning phase once for each release, and then just rely on the fact that all PS4s have essentially the same GPU/HW. Or it's something they added after the fact to deal with the diversity in PC hardware when doing the PC release. (At the moment, it's all just conjecture anyway.)

-

@cvi The real question is why isn't that stuff something that runs once per configuration? Most people don't change graphics hardware all that often, and hopefully CPU-side driver updates don't make much difference to the shaders themselves.

-

The real question is why isn't that stuff something that runs once per configuration?

It's run once per configuration (i.e., once on first launch of the game) and then never again.

Edit: Not sure if it will rerun if the driver updates. I think the driver can indicate whether or not cached shaders/pipelines are still valid.
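One way to picture "once per configuration" with invalidation on driver updates is a cache whose key covers the shader source, the GPU and the driver version, so changing any of them forces a recompile. The sketch below is hypothetical throughout: `cache_key`, `ShaderCache` and the in-memory dict standing in for the on-disk store are assumptions for illustration, not how any particular driver or engine implements it.

```python
import hashlib


def cache_key(shader_source: str, gpu_model: str, driver_version: str) -> str:
    """Derive a key that changes when the source, GPU or driver changes."""
    h = hashlib.sha256()
    for part in (shader_source, gpu_model, driver_version):
        data = part.encode("utf-8")
        # Length prefix so ("ab", "c") and ("a", "bc") cannot produce the same key.
        h.update(len(data).to_bytes(8, "big"))
        h.update(data)
    return h.hexdigest()


class ShaderCache:
    """Toy cache: a dict stands in for compiled binaries saved to disk."""

    def __init__(self, compile_fn):
        self._compile = compile_fn
        self._store = {}
        self.compile_count = 0

    def get(self, source: str, gpu: str, driver: str):
        key = cache_key(source, gpu, driver)
        if key not in self._store:
            # Cache miss: pay the compile cost once for this configuration.
            self._store[key] = self._compile(source, gpu, driver)
            self.compile_count += 1
        return self._store[key]


if __name__ == "__main__":
    cache = ShaderCache(lambda src, gpu, drv: f"binary for {gpu} / {drv}")
    cache.get("void main() {}", "GPU-A", "1.0")
    cache.get("void main() {}", "GPU-A", "1.0")  # hit, no recompile
    cache.get("void main() {}", "GPU-A", "1.1")  # driver changed, recompile
    print("compiles:", cache.compile_count)
```

A key like this costs nothing to compute at launch, so the expensive step only reruns when something it depends on has actually changed.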

-

The real question is why isn't that stuff something that runs once per configuration?

It's run once per configuration (i.e., once on first launch of the game) and then never again.

That's once per installation. It should be run once per configuration, with results stored globally.

-

@MrL Fair.

I think it's tricky to pull off, though it depends on the exact settings that they tune. CPU driver versions will make a difference, especially considering that drivers occasionally introduce new application-specific optimizations/fixes (or just new optimizations generally). So, the choices essentially end up depending on GPU+driver at the minimum. Minor variations in the GPU could end up mattering, so you might be looking at not just GPU-model, but at the various variations that the manufacturers have.

Also, somebody has to run the initial tuning pass for a new configuration. Unless you have access to the necessary combinations of hardware/software yourself, you would need to trust the results of whatever user first runs into that (and that user would still have to suffer through the configuration process). Users might not agree to share that data either.

Tuning per-installation has a chance to catch weird stuff, like variations in PCIe bandwidth (if you do a lot of round-trips) or weak CPUs suffering more with certain techniques than others.

Now, not every game is doing lengthy auto-tuning (if that's what's happening in the first place), so clearly there are ways around that.

-

clearly there are ways around that

The easiest way around it is to use less optimised shaders. Making things a little more generic means you can use a pre-computed set of shaders for common configurations, and that will speed things up a lot. You won't get quite as much performance out of the system, but maybe you don't care about that too much.

-

Status: This fucking idiot developer took a list, split the items out according to a property on the items (into separate holding variables), then passed the individual variables as parameters to another object, which simply puts them back into a list.

What the fuck.

-

@Tsaukpaetra said in WTF Bites:

What the fuck.

Status: Thanks Jeremy. Your necessity has apparently turned into permanent crutch.

-

@Tsaukpaetra said in WTF Bites:

which simply puts them back into a list.

I reconstructed the intent into a 7-line LINQ statement.

And this was definitely possible at the time of original writing, because it's used later in the class.
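The actual code isn't shown, so the following is only a guess at the shape of the fix, written in Python rather than LINQ. It assumes the net effect of the split-and-reassemble dance was "keep the items whose property is one of a few known values, grouped in a fixed order". `Item`, `KIND_ORDER` and `regroup` are invented names.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    name: str
    kind: str


# Hypothetical fixed order in which the groups were glued back together.
KIND_ORDER = ["header", "body", "footer"]


def regroup(items):
    """One expression instead of split-into-variables-then-rejoin."""
    rank = {kind: position for position, kind in enumerate(KIND_ORDER)}
    # sorted() is stable, so items of the same kind keep their original order.
    # Items with an unknown kind are dropped, as they would be by the split.
    return sorted(
        (item for item in items if item.kind in rank),
        key=lambda item: rank[item.kind],
    )


if __name__ == "__main__":
    items = [Item("b1", "body"), Item("h1", "header"),
             Item("f1", "footer"), Item("b2", "body")]
    print([item.name for item in regroup(items)])
```

No holding variables, no extra object whose only job is to put the list back together.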

-

@Tsaukpaetra said in WTF Bites:

Status: This fucking idiot developer took a list, split the items out according to a property on the items (into separate holding variables), then passed the individual variables as parameters to another object, which simply puts them back into a list.

What the fuck.

Insufficient

. Programmer started churning the code before thinking what is actually needed and how to do it with least typing.

-

@Tsaukpaetra said in WTF Bites:

Status: This fucking idiot developer took a list, split the items out according to a property on the items (into separate holding variables), then passed the individual variables as parameters to another object, which simply puts them back into a list.

What the fuck.

Insufficient

. Programmer started churning the code before thinking what is actually needed and how to do it with least typing.

Have I mentioned they program like it's plain old C? For example, all variables are declared at the top of the class, with prefixes. There are almost no local variables. This means a variable used in only one function still dangles in the class.

Amazing.

-

@Tsaukpaetra said in WTF Bites:

Have I mentioned they program like it's plain old C? For example, all variables are declared at the top of the class, with prefixes. There are almost no local variables. This means functions where only once is used still have dangling variables in the class.

FWIW, you don't need to do that in C and haven't for quite a long time now.