The Official Status Thread

-

@Zecc said in The Official Status Thread:

@Tsaukpaetra said in The Official Status Thread:

@Zecc said in The Official Status Thread:

using the phone for calls

Calls? What's that?

Hawaiian noises.

Banging on the bongos like a chimpanzee.

Did I just catch whatever Gribnit's got?

Jahones, his arms clenched firmly. D'joaki, her hips full but hidden.

-

Status: Easy to sum up:

Security framework debugging, as different bits jump in and interfere with each other while conspiring to say “lol nope” and the whole lot is so huge and complex that debugging into it to find where things are actually blowing up is a major pain. There's just so many damn stack frames…

Bison grass vodka. The name sounds weird, but the drink tastes great!

-

@Zecc it's a necessary, but not a sufficient, indication.

-

Status: So, we want to run a test which requires an up-to-date installable. Which means running a build. But if we only touched business logic we don't want to run a build, meaning we don't have up-to-date installables, meaning we can't meaningfully run the test.

People seem to have trouble grasping this.

-

@PleegWat said in The Official Status Thread:

But if we only touched business logic we don't want to run a build

What?

-

@Gąska said in The Official Status Thread:

@PleegWat said in The Official Status Thread:

But if we only touched business logic we don't want to run a build

What?

Could be rules-engine based: there are no code changes in the repo, so no executable change is likely, but the business logic still changed. If the installable includes both rules and executable, resume

-

@Gąska said in The Official Status Thread:

@PleegWat said in The Official Status Thread:

But if we only touched business logic we don't want to run a build

What?

I imagine something like "quality of life" update, where the actual core functionality is not changed, but some things that aren't business-rules related are.

-

@Tsaukpaetra yeah but why TF wouldn't they want to build afterwards?

-

@Gąska said in The Official Status Thread:

@Tsaukpaetra yeah but why TF wouldn't they want to build afterwards?

Are you asking me to perceive the minds of managers?

-

Status: Oh, that's one reason why I keep running out of room on C:. The slicer software for my 3D printer leaves tens of thousands of temporary files in AppData\Local\Temp and never cleans up after itself. It appears to be one file per layer per model. The individual files aren't very big, maybe 50kB each, but multiply that by 40000 or 50000 files, and it takes up some room.
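If the slicer never cleans up after itself, a periodic sweep is the obvious workaround. A minimal sketch, assuming a POSIX-like shell on the Windows box (e.g. Git Bash or WSL) and that stale files can be identified by age alone; the function name `sweep_stale_files` and the cutoff are hypothetical choices, not anything the slicer provides:

```shell
#!/usr/bin/env bash
# Hypothetical cleanup helper: delete regular files directly inside DIR that
# were last modified more than DAYS days ago, then print how many were removed.
# Assumes a find with -mindepth/-maxdepth/-delete (GNU and BSD find both have them).
sweep_stale_files() {
  local dir=$1 days=$2 count
  # -print0 emits each matching name NUL-terminated just before -delete
  # removes it; counting the NULs gives the number of files deleted.
  count=$(find "$dir" -mindepth 1 -maxdepth 1 -type f -mtime +"$days" \
            -print0 -delete | tr -cd '\000' | wc -c)
  echo $((count))   # arithmetic expansion strips the padding BSD wc adds
}
```

Note that it removes every stale file in that directory, not only the slicer's; narrowing it with `-name` would need the slicer's naming scheme, which isn't known here.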

-

@HardwareGeek said in The Official Status Thread:

It appears to be one file per layer per model.

Front page material.

(insert usual joke about front page, etc.)

-

@HardwareGeek said in The Official Status Thread:

AppData\Local\Temp

At one point I had a startup script that symlinked that to a RAM disk. Some parts of Windows does not like that at all...

-

@Tsaukpaetra said in The Official Status Thread:

Windows does not like that at all...

We can always count on you to discover things that don't work.

-

Status: Watched Jumanji: Welcome to the Jungle. Very positively surprised. Of all the "people trapped in a video game" movies I've seen, this one has by far the most accurate portrayal of the video game part; I can actually see it being a real game with basically no major changes. And it's a decent movie overall, much better than the 1995 one.

-

Status: there's a song in my playlist called Patience.

There's an initial moment of silence in the version I have downloaded, so I skipped to the next song.

-

@Zecc said in The Official Status Thread:

Status: there's a song in my playlist called Patience.

There's an initial moment of silence in the version I have downloaded, so I skipped to the next song.

I’m in this post and I don’t like it.

-

@error said in The Official Status Thread:

I had too much work on my plate, so my boss reassigned one of my tasks to another developer. Who delegated it to another developer. Who is now bugging me for help.

Yeah, I had to tell our scrum master to stop reassigning my tasks to the junior developers for exactly this reason. I've also started enforcing a no-calls-before-two policy and a sure-as-shit-not-after-4:30 rule. I'm getting my work done, and the juniors have stopped biting off more than they can chew.

-

Annoyance level: 11

Issue at customer X! Application doesn't start! Oh and I can't make a ticket because I lost my credentials

Oh how informative ... Let's have a look if it starts server side

hey dofus ... first connect to the client vpn

oh yeah due to that domain migration I totally forgot your vpn accounts

... to connect to customer X use linked credentials

click

error connecting to url

hey dofus ... first connect to the company vpn to access the credential store

enters AD account in vpn thingy

ding authenticate!

ok

....

ding confirm!

....

Proceed!

hey ... the page you requested can now load

Authenticate!

enters AD account

enters AD account and checks the box 'use windows authentication'

here you go: username and ****

disconnect company vpn

enter username and password

username and UnknownError. Http failure retrieving password

connect to company vpn

ding authenticate!

ok

....

ding confirm!

....

Proceed!

enters AD account and checks the box 'use windows authentication'

here you go: username and ****

ctrl+c

disconnect company vpn

enter username and password

ctrl+v

Welcome to Company's X Authentication Store!

Authenticate!

ctrl+v

... to access the Authentication Store enter the password Hunter2

Welcome! ... would you like to connect to a server?

Hey, it's probably something at our end ... we seem to have issues with other applications as well ...

-

@Gąska said in The Official Status Thread:

@PleegWat said in The Official Status Thread:

But if we only touched business logic we don't want to run a build

What?

This regards running tests on a developer's in-progress work. The business logic does not need to be compiled before it can be used by the rules engine. It does need to be packaged into an installable for distribution, but so far we haven't used installables in the test phase.

Not doing a build saves ~20 minutes on a full test run.
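The trade-off can be written down as a rule: skip the build only when nothing but business rules changed and the test doesn't need an installable. A minimal sketch, assuming (hypothetically) that the rules live under a `rules/` directory and that changed paths arrive one per line on stdin, e.g. from `git diff --name-only`; `needs_build` and its argument are illustrative names, not anything from the actual pipeline:

```shell
#!/usr/bin/env bash
# Hypothetical decision helper. Reads changed paths (one per line) on stdin.
# $1: "yes" if the test about to run needs an up-to-date installable.
# Exit status 0 means "run the build", 1 means "skip it".
needs_build() {
  local needs_installable=${1:-no} path
  # The installable packages the rules too, so a test that installs the
  # product needs a fresh build no matter what changed.
  [ "$needs_installable" = yes ] && return 0
  while IFS= read -r path; do
    case $path in
      rules/*) ;;       # business logic: read directly by the rules engine
      '') ;;            # ignore blank lines
      *) return 0 ;;    # anything else may affect the executable
    esac
  done
  return 1
}
```

Typical use would be something like `git diff --name-only main | needs_build no && run_build`, where `main` and `run_build` are placeholders.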

-

@PleegWat said in The Official Status Thread:

Not doing a build saves ~20 minutes on a full test run.

So, if arguing that you don't need a build takes you more than 20 minutes, you know what to do.

-

@HardwareGeek said in The Official Status Thread:

The individual files aren't very big, maybe 50kB each, but multiply that by 40000 or 50000 files, and it takes up some room.

We used to have a problem like that with files coming out of our simulations. It's a pain on Unixes where you discover that yes, you really are running out of file handles when trying to access that many, which causes failures in your code that are really quite rare under other circumstances. But it's worse on Windows, where it can subtly and permanently break NTFS as well, as there's a few things where it simply can't recover the space after you do them (such as massively increasing the size of directories, which causes it to flip a config into something that's much more wasteful). Such as you only see when computing at scale!

We now stuff all that in a single SQLite database (it's a fuckoff long table of BLOBs plus basic metadata), which has basically no performance penalty and is a great deal easier to manage.
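When a job touching that many files starts failing with "Too many open files" (`EMFILE`), the first thing to check is the per-process descriptor limit. A minimal sketch using bash's `ulimit` builtin; the function name is just for illustration:

```shell
#!/usr/bin/env bash
# Print the per-process open-file limits (RLIMIT_NOFILE) of the current shell.
# The soft limit is the one a process actually hits; it can be raised up to
# the hard limit, e.g. with `ulimit -Sn <n>` before starting the job.
show_fd_limits() {
  printf 'soft: %s\n' "$(ulimit -Sn)"
  printf 'hard: %s\n' "$(ulimit -Hn)"
}

show_fd_limits
```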

-

@dkf said in The Official Status Thread:

Security framework debugging

The goddamn login page now works.

-

@dkf said in The Official Status Thread:

We used to have a problem like that with files coming out of our simulations. It's a pain on Unixes where you discover that yes, you really are running out of file handles when trying to access that many, which causes failures in your code that are really quite rare under other circumstances

It's a bit ironic that the Unix guys keep telling you about their "everything is a file" approach and the corollary that the filesystem is a DB, but then when you run `ls` in a directory with 10k files you get yelled at that you can't do that and the file server grinds to a halt for 5 minutes.

-

@topspin said in The Official Status Thread:

It's a bit ironic that the Unix guys keep telling you about their "everything is a file" approach and the corollary that the filesystem is a DB, but then when you run `ls` in a directory with 10k files you get yelled at that you can't do that and the file server grinds to a halt for 5 minutes.

It depends critically on the filesystem in use. Many use a linked list for directories, which is space-efficient but gets very slow as the number of files increases. (If you can't go the DB route, you have to split files into a hierarchical structure so that you don't have more than a thousand entries in a particular directory, which does scale.) Other filesystems cope far better (using this new technology called a “hash table”!) and on those you can do pretty much anything you like; 100k entries in a single dir won't bring the filesystem or OS to its knees (but `ls` will still take a long time; sorting the entries has non-zero cost).

NTFS has other problems: it has stupendous difficulty shrinking anything other than a regular file. Big directories stay massive forever, and I think the problems can infect directories that you can't easily delete.
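dkf's "split files into a hierarchical structure" workaround is often done by hashing each file name to a subdirectory. A minimal sketch, assuming 256 two-hex-digit buckets keyed on the POSIX `cksum` CRC of the name; the bucket count and the function names are illustrative choices, not something from the thread. With 100k files, 256 buckets averages about 390 entries per directory, under the thousand mentioned:

```shell
#!/usr/bin/env bash
# Map a file name to a bucket directory "xx" (two hex digits, 256 buckets)
# using the POSIX cksum CRC of the name, so the same name always lands in
# the same bucket and names spread roughly evenly across buckets.
bucket_for() {
  local crc
  crc=$(printf '%s' "$1" | cksum | cut -d ' ' -f 1)
  printf '%02x\n' $((crc % 256))
}

# Move every regular file directly inside DIR into DIR/<bucket>/.
# NUL-separated names keep spaces and newlines in file names safe.
spread_into_buckets() {
  local dir=$1
  find "$dir" -mindepth 1 -maxdepth 1 -type f -print0 |
    while IFS= read -r -d '' path; do
      name=${path##*/}
      b=$(bucket_for "$name")
      mkdir -p "$dir/$b"
      mv -- "$path" "$dir/$b/$name"
    done
}
```

Lookups then go straight to `DIR/$(bucket_for NAME)/NAME` without scanning anything.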

-

@Gąska said in The Official Status Thread:

And it's a decent movie overall, much better than the 1995 one.

I don't know if I'd go that far, but it's a great modern sequel among a sea of garbage

-

@topspin said in The Official Status Thread:

@dkf said in The Official Status Thread:

We used to have a problem like that with files coming out of our simulations. It's a pain on Unixes where you discover that yes, you really are running out of file handles when trying to access that many, which causes failures in your code that are really quite rare under other circumstances

It's a bit ironic that the Unix guys keep telling you about their "everything is a file" approach and the corollary that the filesystem is a DB, but then when you run `ls` in a directory with 10k files you get yelled at that you can't do that and the file server grinds to a halt for 5 minutes.

Plain `ls` does a fair bit of sorting and layouting. Try `ls -1U` next time.

-

@PleegWat said in The Official Status Thread:

@topspin said in The Official Status Thread:

@dkf said in The Official Status Thread:

We used to have a problem like that with files coming out of our simulations. It's a pain on Unixes where you discover that yes, you really are running out of file handles when trying to access that many, which causes failures in your code that are really quite rare under other circumstances

It's a bit ironic that the Unix guys keep telling you about their "everything is a file" approach and the corollary that the filesystem is a DB, but then when you run `ls` in a directory with 10k files you get yelled at that you can't do that and the file server grinds to a halt for 5 minutes.

Plain `ls` does a fair bit of sorting and layouting. Try `ls -1U` next time.

-

Karen Gillan

I still don't fully associate her as the same person as the blue chick in Guardians of the Galaxy.

-

@Zecc said in The Official Status Thread:

Karen Gillan

I still don't fully associate her as the same person as the blue chick in Guardians of the Galaxy.

That's one of the downsides of a role with a lot of facial prosthetics.

-

@PleegWat said in The Official Status Thread:

Plain `ls` does a fair bit of sorting and layouting. Try `ls -1U` next time.

@dkf said in The Official Status Thread:

but `ls` will still take a long time; sorting the entries has non-zero cost

Unless `ls` is doing something really idiotic (possible, but I doubt it), "sorting takes time" is a tautology but still a huge overstatement. Sorting is pretty fast. It's getting the dir entries that takes forever.

-

@topspin Not at 100k files, but a quick test with 1 million files shows a clear difference:

```
$ seq 1000000 | sed 's/^/test/' | xargs touch
$ time ls > /dev/null
real    0m1.570s
user    0m1.160s
sys     0m0.409s
$ time ls -1U > /dev/null
real    0m0.529s
user    0m0.181s
sys     0m0.348s
```

-

@PleegWat Yes, but both 0.5s and 1.5s are already much faster than what I was complaining about:

@topspin said in The Official Status Thread:

but then when you run `ls` in a directory with 10k files you get yelled at that you can't do that and the file server grinds to a halt for 5 minutes.

Of course it's not your fault that it doesn't run stupidly slowly on your system, but at those numbers, saving 1 s isn't significant.

-

@topspin But then there's also memory mapping to get around the open file limit: if you open a file, memory-map its contents, then close the handle, it no longer counts toward your open file limit, but writes to the memory still get reflected in the file on disk.

I don't know whether such files still count toward the system-wide open file limit, though I suspect they do.

-

@topspin said in The Official Status Thread:

in a directory with 10k files

Why do you have to remind me of that f*cking Linux experience from 20+ years ago?

The machine refused to start because it failed to mount a partition that had a folder with that many files...

-

@Zecc said in The Official Status Thread:

Status: there's a song in my playlist called Patience.

There's an initial moment of silence in the version I have downloaded, so I skipped to the next song.

Give 4:33 a listen sometime if you like that form.

-

Status: listening to

-

@Zecc +1337 I fell down

-

@Zecc Best dubstep I've ever listened to.

-

Career lesson #214432: never show off that you can do something no one else can, unless you want to be The Guy who does that thing forever in the future

-

@error said in The Official Status Thread:

Career lesson #214432: never show off that you can do something no one else can, unless you want to be The Guy who does that thing forever in the future

That’s why you go into consulting. You can flex on people, get paid HPC buxx for it, and then vanish into the night, never to have to do that menial task again (until the next client)

-

@izzion said in The Official Status Thread:

@error said in The Official Status Thread:

Career lesson #214432: never show off that you can do something no one else can, unless you want to be The Guy who does that thing forever in the future

That’s why you go into consulting. You can flex on people, get paid HPC buxx for it, and then vanish into the night, never to have to do that menial task again (until the next client)

I did that for a while. I didn't like having to always be searching for the next ~~sucker~~ client sucker.

-

@PleegWat said in The Official Status Thread:

if you open a file, memory-map its contents, then close the handle, it no longer counts toward your open file limit, but writes to the memory still get reflected in the file on disk.

Historically we haven't really allowed "visitors" into the alliance all that often, especially if we're already full. I think we've done it maybe twice total? But I'm fine with whatever.

Edit: sorry, this packet appears to have made it to the wrong destination...

-

@error said in The Official Status Thread:

@izzion said in The Official Status Thread:

@error said in The Official Status Thread:

Career lesson #214432: never show off that you can do something no one else can, unless you want to be The Guy who does that thing forever in the future

That’s why you go into consulting. You can flex on people, get paid HPC buxx for it, and then vanish into the night, never to have to do that menial task again (until the next client)

I did that for a while. I didn't like having to always be searching for the next ~~sucker~~ client sucker.

That’s why I like working for a consultancy. I give up the HPC buxx (I still make a bit more than local captive IT does), but it’s not my problem to find future gigs. And I still get the frequent changes of scenery, the push to grow my tech stack, etc., while actually having job stability.

-

Status: Medium article title, "Don't Write Code For A Startup". Further support for contention "Don't Get A Medium Subscription".

To be fair, hot things are hot, and fire is a hot thing, and almost nobody should stick live lizards up their nose.

-

@hungrier said in The Official Status Thread:

@Gąska said in The Official Status Thread:

And it's a decent movie overall, much better than the 1995 one.

I don't know if I'd go that far, but it's a great modern sequel among a sea of garbage

Just for the record, original Jumanji sucks. This one is actually enjoyable.

-

@Gąska said in The Official Status Thread:

@hungrier said in The Official Status Thread:

@Gąska said in The Official Status Thread:

And it's a decent movie overall, much better than the 1995 one.

I don't know if I'd go that far, but it's a great modern sequel among a sea of garbage

Just for the record, original Jumanji sucks. This one is actually enjoyable.

This points to a promising strategy: sequels of terrible movies. It shouldn't, but point it do.

-

@PleegWat said in The Official Status Thread:

@topspin said in The Official Status Thread:

@dkf said in The Official Status Thread:

We used to have a problem like that with files coming out of our simulations. It's a pain on Unixes where you discover that yes, you really are running out of file handles when trying to access that many, which causes failures in your code that are really quite rare under other circumstances

It's a bit ironic that the Unix guys keep telling you about their "everything is a file" approach and the corollary that the filesystem is a DB, but then when you run `ls` in a directory with 10k files you get yelled at that you can't do that and the file server grinds to a halt for 5 minutes.

Plain `ls` does a fair bit of sorting and layouting. Try `ls -1U` next time.

Consider also `find . -mindepth 1 -maxdepth 1 -print0 | xargs -0` if you need to do anything with them and the sysadmins are already yelling.
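A find/xargs pipeline like that streams a huge directory's entries unsorted and in batches, and NUL separators keep odd file names intact. A minimal sketch, assuming a find that supports `-mindepth`/`-maxdepth` (GNU and BSD both do); the `test*` pattern mirrors the files from PleegWat's timing run, and the function name is hypothetical:

```shell
#!/usr/bin/env bash
# Delete the "test*" files directly inside DIR without listing or sorting
# the whole directory first. find emits entries in directory order,
# -print0 / xargs -0 use NUL separators so spaces or newlines in names
# are safe, and xargs packs the names into as few rm invocations as the
# command-line length limit allows.
remove_test_files() {
  find "$1" -mindepth 1 -maxdepth 1 -type f -name 'test*' -print0 |
    xargs -0 rm -f --
}
```

Swapping `rm -f --` for another command gives the same batching for any bulk operation.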

-

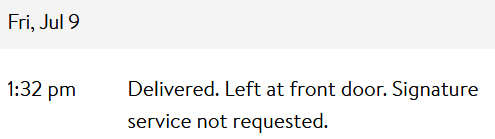

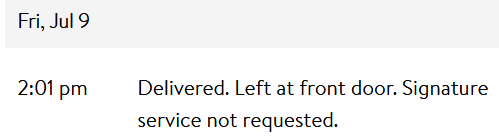

@HardwareGeek said in The Official Status Thread:

Meanwhile, there are still plenty of opportunities for the delivery distortion field (DDF) to work its magic. I have several packages in transit from Walmart

I got two of them today. Supposedly.

Package #1:

I saw the FedEx truck that delivered this. I have this package. I have opened the package, removed the contents, and put (some of) them to use.

Package #2:

I did not see another truck in the neighborhood 1/2 hour after the first one. There is not another package at my front door. Or elsewhere on my front porch. Or anywhere around the front of my house. I don't know where they delivered it, but it wasn't to my front door. I don't even know if they delivered it at all, or if this is another instance of a FedEx driver faking a delivery because he/she's too lazy to make the actual delivery.

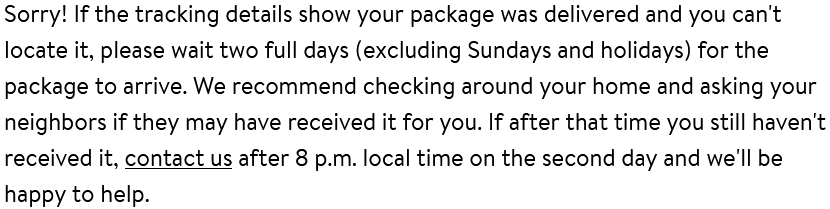

And Walmart is ever so helpful:

So FedEx lied about delivering it, but I have to wait until the package is thoroughly disappeared before I can attempt to complain about their lie.

-

@HardwareGeek said in The Official Status Thread:

And Walmart is ever so helpful:

I once had Amazon tell me my package was lost. *clicked please send a replacement*

A week after the replacement arrived, the original magically appeared.

edit: Tho to give UPS credit, they didn't try claiming it had been delivered.