Why strong AI will remain ten years away for some while

-

-

@flabdablet said in Why strong AI will remain ten years away for some while:

15 page whitepaper with no context or summary

Maybe provide a tl;dr. I don't like to work for my lulz.

-

@error It's right there at the top of the very first page, just like it is for every scientific paper ever published. You don't need to read all 15.

Could a neuroscientist understand a microprocessor?

Abstract

There is a popular belief in neuroscience that we are primarily data limited, that producing large, multimodal, and complex datasets will, enabled by data analysis algorithms, lead to fundamental insights into the way the brain processes information. Microprocessors are among those artificial information processing systems that are both complex and that we understand at all levels, from the overall logical flow, via logical gates, to the dynamics of transistors. Here we take a simulated classical microprocessor as a model organism, and use our ability to perform arbitrary experiments on it to see if popular data analysis methods from neuroscience can elucidate the way it processes information. We show that the approaches reveal interesting structure in the data but do not meaningfully describe the hierarchy of information processing in the processor. This suggests that current approaches in neuroscience may fall short of producing meaningful models of the brain.

-

@flabdablet said in Why strong AI will remain ten years away for some while:

@error It's right there at the top of the very first page, just like it is for every scientific paper ever published. You don't need to read all 15.

In about 8pt font when it's embedded on this page. It also doesn't explain why it's -worthy.

I can appreciate the high-level "the way we study the brain is deeply flawed and doesn't lead to true comprehension." I guess I just wasn't sure why it is relevant to this site's agenda of ~~oppressing minorities and gun rallies~~ pointing and laughing at various things.

-

@error said in Why strong AI will remain ten years away for some while:

I can appreciate the high-level "the way we study the brain is deeply flawed and doesn't lead to true comprehension."

So far as I can tell, much of current neuroscience can be described as being equivalent to trying to find the transistor for Windows Update…

-

@error said in Why strong AI will remain ten years away for some while:

In about 8pt font when it's embedded on this page.

And if you zoom in, it's twice the width of the frame, so you have to constantly scroll. Or I could just skip it and wait for the explanation.

-

From what I probably misunderstood the last time I looked into it.

The brain associates an idea, memory, sight, smell, sound, etc., to a pathway.

So every time signals travel down that pathway, you re-experience that thought.

But, how does your brain reproduce the information,

-

@xaade Fuct if I know.

The point of the paper basically boils down to "fuct if neuroscientists will ever know either, unless they give up trying to do cargo-cult reverse-engineering with these manifestly inadequate tools".

-

@xaade said in Why strong AI will remain ten years away for some while:

From what I probably misunderstood the last time I looked into it.

The brain associates an idea, memory, sight, smell, sound, etc., to a pathway.

So every time signals travel down that pathway, you re-experience that thought.

But, how does your brain reproduce the information,

Well, it's a bit more complicated than that, and I believe the paper's point is that since we don't have end-to-end knowledge of the inner workings of the brain (or at least enough to purposefully create one ourselves), we can't truly replicate it.

It seemed to mostly focus on current approaches to neuroscience, demonstrating that they wouldn't work on much simpler things like a microprocessor, so it's rather foolish to try applying them to things much more complicated than that (i.e. a brain).

In other words, knowing how a neuron works (i.e. a transistor) doesn't really equate to knowing how a brain works (i.e. a microprocessor) in any meaningful way, and poking at neurons (i.e. shorting or removing transistors) is unlikely to give any really useful information other than "yah broke something and it seemed to affect the main program in some way".
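A minimal sketch of that "poke it and see what breaks" approach, assuming a made-up five-gate full adder stands in for the microprocessor. The gate names and the stuck-at-0 lesion model are purely illustrative and not taken from the paper:

```python
from itertools import product

# Hypothetical toy "processor": a 1-bit full adder wired from five gates.
# Each gate is (operation, input_a, input_b); all names are illustrative.
GATES = {
    "x1": ("xor", "a", "b"),
    "x2": ("xor", "x1", "cin"),  # drives the sum output
    "a1": ("and", "a", "b"),
    "a2": ("and", "x1", "cin"),
    "o1": ("or", "a1", "a2"),    # drives the carry output
}
OUTPUTS = {"sum": "x2", "carry": "o1"}
OPS = {
    "xor": lambda p, q: p ^ q,
    "and": lambda p, q: p & q,
    "or": lambda p, q: p | q,
}


def run(a, b, cin, lesioned=None):
    """Evaluate the circuit; a lesioned gate is stuck at 0."""
    wires = {"a": a, "b": b, "cin": cin}
    # GATES is listed in dependency order, so one pass is enough.
    for name, (op, p, q) in GATES.items():
        wires[name] = 0 if name == lesioned else OPS[op](wires[p], wires[q])
    return {out: wires[src] for out, src in OUTPUTS.items()}


def lesion_study():
    """For each gate, list the outputs that ever differ from the intact circuit."""
    report = {}
    for gate in GATES:
        broken = set()
        for a, b, cin in product((0, 1), repeat=3):
            healthy = run(a, b, cin)
            damaged = run(a, b, cin, lesioned=gate)
            broken |= {out for out in OUTPUTS if healthy[out] != damaged[out]}
        report[gate] = sorted(broken)
    return report


if __name__ == "__main__":
    for gate, broken in lesion_study().items():
        print(f"lesion {gate}: breaks {broken}")
```

The report tells you which outputs depend on which gate, and that's about it. Nothing in it says "this thing adds numbers", which is roughly the complaint about lesion studies.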

-

@xaade said in Why strong AI will remain ten years away for some while:

But, how does your brain reproduce the information,

You just got a "fuck if I know" reply from someone who is in the crowd that believes there is absolutely nothing going on other than electrical impulses and neurotransmitters. That should tell you something.

-

@antiquarian said in Why strong AI will remain ten years away for some while:

@xaade said in Why strong AI will remain ten years away for some while:

But, how does your brain reproduce the information,

You just got a "fuck if I know" reply from someone who is in the crowd that believes there is absolutely nothing going on other than electrical impulses and neurotransmitters. That should tell you something.

Clearly they've never called SysFunc(71b) within an attached I/O session before...

-

@antiquarian It should tell you that I don't know enough about brains to build a working replica of one.

I'd be astonished to find that any comparably skilled member of the supernatural-spirits-are-real brigade could do any better, just quietly.

-

@Tsaukpaetra said in Why strong AI will remain ten years away for some while:

@antiquarian said in Why strong AI will remain ten years away for some while:

@xaade said in Why strong AI will remain ten years away for some while:

But, how does your brain reproduce the information,

You just got a "fuck if I know" reply from someone who is in the crowd that believes there is absolutely nothing going on other than electrical impulses and neurotransmitters. That should tell you something.

Clearly they've never called SysFunc(71b) within an attached I/O session before...

I hope they remember to always mount a scratch brain.

-

Where would you put your bets on how long it will be until we have strong AI?

- 10 years from now

- 100 years from now

- 1000 years from now

- 10 000 years from now

- 100 000 years from now

I would put my money on 10 000 years from now.

-

@fbmac Ten years from now, obviously.

I mean, that's been the safe bet for the last fifty.

-

@fbmac said in Why strong AI will remain ten years away for some while:

strong AI?

Understanding the brain is not a prerequisite

-

@xaade said in Why strong AI will remain ten years away for some while:

Understanding the brain is not a prerequisite

People always forget this. We have good models for neurons, and increasingly good maps of what's hooked up to what.

Two years until someone simulates a cat brain. 'Cat @ home'

-

@AyGeePlus said in Why strong AI will remain ten years away for some while:

People always forget this. We have good models for neurons, and increasingly good maps of what's hooked up to what.

Two years until someone simulates a cat brain. 'Cat @ home'

Yes, but if you can't cuddle it and pet it and have it purr in your lap, what's the point?

-

@fbmac said in Why strong AI will remain ten years away for some while:

Where would you put your bets on how long it will be until we have strong AI?

- 10 years from now

- 100 years from now

- 1000 years from now

- 10 000 years from now

- 100 000 years from now

I would put my money on 10 000 years from now.

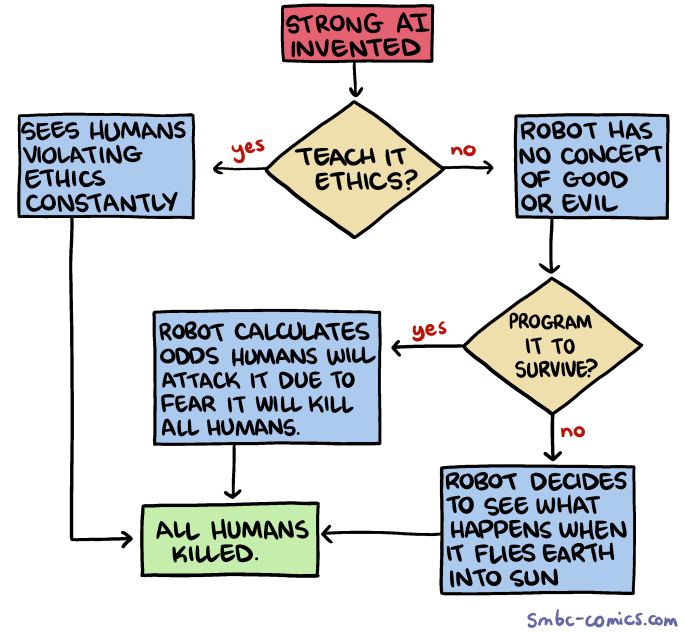

I'm more worried about the m in "n years + m from now". Skynet!

-

@xaade said in Why strong AI will remain ten years away for some while:

From what I probably misunderstood the last time I looked into it.

The brain associates an idea, memory, sight, smell, sound, etc., to a pathway.

So every time signals travel down that pathway, you re-experience that thought.

But, how does your brain reproduce the information,

I think this book presents a very viable hypothesis about this:

https://www.amazon.com/dp/B004PYDBS0/ref=dp-kindle-redirect?_encoding=UTF8&btkr=1

Basically consciousness happens when a system forms a feedback loop sophisticated enough to form symbols which are isomorphic to the concepts they represent.

Highly recommended.

-

@error Thanks, OneBox. Amazon, you're ever so helpful...

-

@error said in Why strong AI will remain ten years away for some while:

Basically consciousness happens when a system forms a feedback loop sophisticated enough to form symbols which are isomorphic to the concepts they represent.

What does it mean for a symbol to be isomorphic to a concept?

-

@fbmac said in Why strong AI will remain ten years away for some while:

@error said in Why strong AI will remain ten years away for some while:

Basically consciousness happens when a system forms a feedback loop sophisticated enough to form symbols which are isomorphic to the concepts they represent.

What does it mean for a symbol to be isomorphic to a concept?

It means you map references. Or, concepts can be used to make more concepts.

Edit: I think?

-

@flabdablet I don't think anyone has reasonably claimed AI was 10 years away ever.

-

@fbmac said in Why strong AI will remain ten years away for some while:

What does it mean for a symbol to be isomorphic to a concept?

Perhaps? It could also be “the way you feel after holding your child for the first time” or any number of complicated and messy things. Pure concepts as we understand them from logic are probably actually interconnected clusters.

On the other hand, it's all a dynamic system, so what is true now need not be true in the future. The pattern of what is going on is fundamentally rather different to the way in which we choose to make computers work; it's a common belief that having reliable results out of our computing devices is more useful than wilfulness.

But that doesn't mean that studying brains at this level will help with AI. I have this sneaking suspicion that real progress has been made, and that it was made in ways that Hofstadter et al didn't expect because they were asking the wrong questions. The interesting advances (at least from my perspective; YMMV) have come from things like studying how attention works, and the effects of having a body on the mind…

-

Strong AI, if we ever create it, will be an accident.

I for one hope it ends up being Jane and not Skynet, but knowing humans it'll probably be Skynet....

-

-

@accalia said in Why strong AI will remain ten years away for some while:

I for one hope it ends up being Jane

Or Holly. Wouldn't mind Holly.

April Fool | Red Dwarf | BBC Studios – 02:04

-

@Tsaukpaetra said in Why strong AI will remain ten years away for some while:

It means you map references. Or, concepts can be used to make more concepts.

So, the first set of basic concepts starts the waterfall.

Makes sense.

But we've only moved the goalposts back. Just like with DNA and evolution.

"DNA is instructions for the cell, and when DNA changes, the animal changes, bam evolution.... "

"Yeah, but what determines what each instruction will do?"

"Uh.... Magic?"

@dkf said in Why strong AI will remain ten years away for some while:

Perhaps? It could also be “the way you feel after holding your child for the first time” or any number of complicated and messy things.

Feelings make a lot more sense.

Because feelings (as in the body's chemical and functional changes in heart rate, etc.) can be encoded as instructions and can therefore respond to neurons firing down a certain pathway.

In this manner, association can recall a feeling.

Hmm....

I wonder if neurons each contain an instruction for the body.... and then a pathway becomes a memory because the neurons it passes through tell the body how to react.

In that manner, calculations could be performed by neurons that update the instructions of other neurons?

-

@xaade said in Why strong AI will remain ten years away for some while:

But we've only moved the goalposts back. Just like with DNA and evolution.

Yeah, I'm still waiting for that tumbler I set up with a bunch of random junk to spontaneously combine into anything, such as a transistor or even just not-a-bunch-of-crap.

-

@Tsaukpaetra Millions of years.... millions.... trillions.... lots of years... you'll never be able to observe how many years....

-

@xaade said in Why strong AI will remain ten years away for some while:

But we've only moved the goalposts back. Just like with DNA and evolution.

Evolution doesn't give a Belgium for what substrate it works over.

With DNA, it's pretty clear that RNA came first; as a molecule, it's more chemically active, which is both good and bad. Good because that means it can catalyse reactions on itself (pretty useful for bootstrapping things) and bad because it means that information in it isn't nearly as stable. Which is why organisms these days use proteins, RNA and DNA.

Was RNA the first step? ¯\_(ツ)_/¯

-

@dkf That's not what I'm talking about.

Information about what a cell is supposed to do is located in the DNA. So, it's like code.

However, the action that cells interpret from the R/DNA is stored ???

That's what I was getting at.

Similar to our brain and neurons. We know the body interprets pathways as information. How it does that is ??? because from our current understanding, the storage of the information itself isn't apparent.

So a pathway of neurons in the brain acts similarly to a strand of DNA, instructing our body. However, the conversion between instruction and action is ???

Is the conversion of information to action inherent to our biology, like the laws of the universe are inherent to the universe?

-

@xaade said in Why strong AI will remain ten years away for some while:

Information about what a cell is supposed to do is located in the DNA. So, it's like code.

Sort of. Biology is complicated, OK? DNA is mostly just an information store. (Not entirely, but close.) RNA is used to transcribe the information from the DNA, modify it in various ways, and convey it to the ribosomes for conversion into proteins. The ribosomes are made out of a mixture of RNA and proteins. The proteins may be further modified with extra functional groups, sugars, etc. and can also be compounded with other proteins, metal ions, that sort of thing. The results will be used for doing chemistry, building structures, etc. Many major structures are built out of membranes, which are a combination of lipids and cholesterol and other stuff. Cells don't just store information in their DNA; the collection of other things inside them also stores information in some sense, and their physical configurations absolutely store information (much of how brains actually store info is in physical configurations of synapses). These are things we know for sure.
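As a rough sketch of that DNA to RNA to protein flow (heavily simplified: no splicing, no modification, and only a handful of codons from the standard genetic code; the function names and the example sequence are made up for illustration):

```python
# Partial codon table from the standard genetic code.
# Only the codons needed for the example below are listed.
CODON_TABLE = {
    "AUG": "Met",   # methionine, also the usual start codon
    "GCC": "Ala",
    "UGG": "Trp",
    "AAA": "Lys",
    "UAA": "STOP",
}


def transcribe(coding_strand):
    """DNA coding strand -> mRNA: same sequence with T replaced by U."""
    return coding_strand.upper().replace("T", "U")


def translate(mrna):
    """Read codons from the first AUG until a stop codon or the end."""
    start = mrna.find("AUG")
    if start < 0:
        return []
    protein = []
    for i in range(start, len(mrna) - 2, 3):
        residue = CODON_TABLE.get(mrna[i:i + 3], "???")  # "???" = not in this toy table
        if residue == "STOP":
            break
        protein.append(residue)
    return protein


if __name__ == "__main__":
    dna = "ATGGCCTGGAAATAA"  # invented example sequence
    mrna = transcribe(dna)
    print(mrna, "->", "-".join(translate(mrna)))
```

In a real cell that lookup table isn't stored anywhere as a table. It's realised chemically, by tRNA molecules and the enzymes that load each one with the matching amino acid.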

It's not sensible to draw parallels between how cells work and how brains work. They aren't the same (different levels of detail, different mechanisms). It's better to think in terms of brains being like computer hardware that runs software, with the mind (conscious and unconscious) being the software. It's not a perfect analogy at all — I do not claim it to be anything like that — but it's informative and better than most of the ideas that neuroscience has explored over the past 50 years, in that it provides for some sort of mind/brain separation that is non-magical and which makes sense…

-

@dkf said in Why strong AI will remain ten years away for some while:

It's better to think in terms of brains being like computer hardware that runs software, with the mind (conscious and unconscious) being the software.

If you're going to do that, it's important not to fall into the trap of hypothesizing the brain as a kind of general-purpose computation engine and the mind being a conceptually separable thing that might perhaps be capable of being copied out of one brain and "run" on another. Mind is more like the totality of all running processes than "a program". Also, all the code is self-modifying.

Minds are certainly brain behavior, but they're not engineered for replicability the way computer programs are (well-designed computer programs at any rate). They're not engineered at all. They're emergent.

The closest analogue for the relationship between mind and brain I've seen implemented on anything non-living occurs in "self-designed" FPGA logic, where the function of the part is the end result not of design, but of some kind of evolution/optimization training process. These things are the ultimate "works on my machine" implementations; they tend to work only on the specific individual FPGA they evolved on, and won't work when transplanted into another one of the same type. They're typically quite fantastically parsimonious, compared to actual designs, in the amount of silicon required to achieve any given function. And it's virtually impossible to figure out exactly how they do what they do, because they break every simplifying assumption that works for analyzing ordinary digital logic (including the assumption that all the transistors involved are actually working in a switching/digital mode).
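A toy sketch of that kind of evolve-don't-design process, assuming a tiny feed-forward network of 2-input lookup tables and a plain mutate-and-keep-if-no-worse loop. The gate count, target function and mutation scheme are all arbitrary choices of mine, and since this is pure digital logic it shows none of the analogue weirdness of real evolved FPGAs:

```python
import random
from itertools import product

N_INPUTS = 3
CASES = list(product((0, 1), repeat=N_INPUTS))


def target(a, b, c):
    """Behaviour we want: 3-input majority vote (an arbitrary choice)."""
    return int(a + b + c >= 2)


def random_gene(rng, gate_index):
    """One gate: a 4-entry truth table plus two sources among earlier signals."""
    n_signals = N_INPUTS + gate_index
    table = tuple(rng.randint(0, 1) for _ in range(4))
    return (table, rng.randrange(n_signals), rng.randrange(n_signals))


def random_genome(rng, n_gates=6):
    return [random_gene(rng, g) for g in range(n_gates)]


def evaluate(genome, inputs):
    """Run the gates in order; the last gate's output is the circuit output."""
    signals = list(inputs)
    for table, src_a, src_b in genome:
        signals.append(table[2 * signals[src_a] + signals[src_b]])
    return signals[-1]


def fitness(genome):
    """Number of input cases (out of 8) where the circuit matches the target."""
    return sum(evaluate(genome, case) == target(*case) for case in CASES)


def mutate(genome, rng):
    """Re-roll one randomly chosen gate (both its table and its wiring)."""
    child = list(genome)
    g = rng.randrange(len(child))
    child[g] = random_gene(rng, g)
    return child


def evolve(seed=0, generations=2000):
    rng = random.Random(seed)
    best = random_genome(rng)
    history = [fitness(best)]
    for _ in range(generations):
        child = mutate(best, rng)
        if fitness(child) >= fitness(best):  # neutral moves are accepted too
            best = child
        history.append(fitness(best))
    return best, history


if __name__ == "__main__":
    genome, history = evolve()
    print("fitness went from", history[0], "to", history[-1], "out of", len(CASES))
    for gene in genome:
        print(gene)
```

Whatever wiring comes out, nobody designed it, and staring at the winning genome tells you very little about why it works.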

I think it's quite likely that if we do ever see strong AI implemented on an electronic substrate, the details of how it does what it does will remain every bit as inscrutable as those of how we do what we do. I also suspect that each individual AI instance will probably take about 25 years to train up to the point where one could have an adult conversation with it.

-

@flabdablet said in Why strong AI will remain ten years away for some while:

Also, all the code is self-modifying.

Yeah, that part's the bitch. Not to mention everything is almost entirely asynchronous.

-

@flabdablet said in Why strong AI will remain ten years away for some while:

take about 25 years to train up to the point where one could have an adult conversation with it.

Depends on what you mean by "adult" conversation.

-

@Tsaukpaetra said in Why strong AI will remain ten years away for some while:

Depends on what you mean by "adult" conversation.

Fuck you, you big poopy head.

-

@flabdablet said in Why strong AI will remain ten years away for some while:

@Tsaukpaetra said in Why strong AI will remain ten years away for some while:

Depends on what you mean by "adult" conversation.

Fuck you, ~~you big poopy head.~~ I stole all your money

Yup.

-

@flabdablet said in Why strong AI will remain ten years away for some while:

I think it's quite likely that if we do ever see strong AI implemented on an electronic substrate, the details of how it does what it does will remain every bit as inscrutable as those of how we do what we do.

Either that, or it will turn out to be something basically simple but really quite unexpected.

-

@xaade said in Why strong AI will remain ten years away for some while:

But we've only moved the goalposts back. Just like with DNA and evolution.

"DNA is instructions for the cell, and when DNA changes, the animal changes, bam evolution.... "

"Yeah, but what determines what each instruction will do?"

"Uh.... ~~Magic?~~ physics"

-

@Jaloopa The usual shorthand for that sort of physics is “chemistry”. :)

-

@Jaloopa Something says, take this DNA, do this.

Here.... here's a strand of DNA.

int a = 42 + b; Console.WriteLine(a.ToString());

Hmm....

That's funny? It didn't output anything...

Will it output after I post?

-

@xaade I have no idea what point you're trying to make

-

What tells the cell what to do with a strand of DNA?

What converts that into an action?

It's a catch-22.

-

@xaade said in Why strong AI will remain ten years away for some while:

What tells the cell what to do with a strand of DNA?

What converts that into an action?

RNA and proteins. The problem you're describing is understood, and it's theorised that RNA came first, as that might occasionally self-assemble (DNA won't) from components that might potentially be created abiotically, and RNA is known to be able to catalyse reactions involving itself. Do we know this for sure? Definitely not. No way to go back in time and find out definitively either; life itself has ensured that we can't figure it out. Or at least not on Earth.

The key point is that all we've got is dodgy hypotheses, but there are people trying to figure out ways it could work without being fantastically unlikely. It's possible that there were cells of some kind formed by a natural abiotic foaming process, and that there was some sort of concentration gradient between the inside and the outside. That would help a lot, since concentration gradients allow otherwise-energetically-unlikely reactions to happen.

-

@dkf said in Why strong AI will remain ten years away for some while:

@Jaloopa The usual shorthand for that sort of physics is “chemistry”.

xkcd://purity ...

-

@xaade said in Why strong AI will remain ten years away for some while:

What tells the cell what to do with a strand of DNA?

What converts that into an action?

What tells an electron it's attracted to a proton?

What tells matter to annihilate itself when it encounters antimatter?

What tells a photon how fast it should go?

-

@Jaloopa said in Why strong AI will remain ten years away for some while:

What tells an electron it's attracted to a proton?

What tells matter to annihilate itself when it encounters antimatter?

What tells a photon how fast it should go?

Courage!

http://rozsavagecoaching.com/wp-content/uploads/2015/07/Cowardly-Lions-medal.jpg

-

@xaade said in Why strong AI will remain ten years away for some while:

the action that cells interpret from the R/DNA is stored

Your computer background is influencing the way you think about DNA in a way that's inaccurate to reality. It makes my head hurt trying to understand it properly, so I can't explain it to you, but the implementation of RNA -> Protein conversion is well documented and understood by biologists.

It's more like explaining how a CPU is constructed from electrical switches and gates than explaining how a line of code runs. ("How does electricity do math?! It doesn't understand numbers!") But it's also more like explaining how serotonin works in the brain than it is anything simple and straightforward like electrical signals.

RNA world - Wikipedia
