Cooties

-

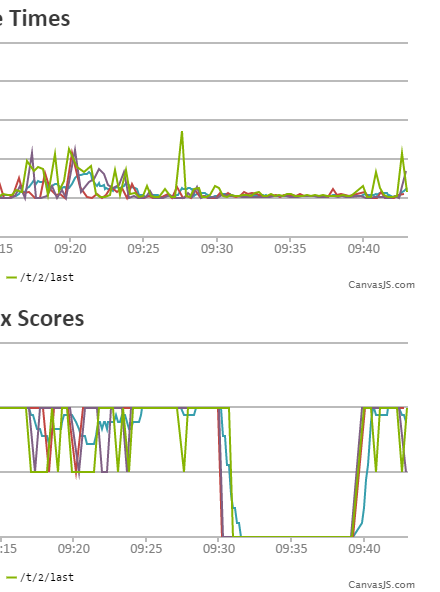

I just deleted a 10iGB tar (no, not compressed tar, why would we compress our backups?) from the backups folder that was apparently causing 100% of requests to be dropped. Now we have about 11GiB of free disk space. Backups are going to be difficult with the 8GiB uploads folder and the 22GB postgres_data folder. Not sure how that's supposed to fit into 11GB of disk, especially in an uncompressed tar that gets compressed after it's completely written to disk.

/cc @PJH
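A rough way to check the space problem before a backup run even starts. This is only a sketch: `free_kb_on` and `backup_fits` are made-up helper names, the paths in the usage comment are assumptions, and it treats the raw directory sizes as a stand-in for the size of the uncompressed tar.

```shell
#!/bin/sh
# free_kb_on PATH: free space, in KiB, on the filesystem that holds PATH.
free_kb_on() {
    # -P forces the one-line-per-filesystem POSIX format; column 4 is "Available".
    df -Pk "$1" | awk 'NR == 2 { print $4 }'
}

# backup_fits FREE_KB DIR...: succeed only if DIR... together are smaller than FREE_KB.
backup_fits() {
    free_kb=$1
    shift
    # du -sk prints "size<TAB>path" once per argument, sizes in KiB.
    need_kb=$(du -sk "$@" | awk '{ total += $1 } END { print total + 0 }')
    echo "need ${need_kb} KiB, have ${free_kb} KiB"
    [ "$need_kb" -lt "$free_kb" ]
}

# Hypothetical usage (paths are assumptions, adjust to the real layout):
# base=/var/discourse/shared/standalone
# backup_fits "$(free_kb_on "$base/backups")" "$base/uploads" "$base/postgres_data"
```

With roughly 30GB of data and 11GB free, a check like this would fail long before the tar fills the disk.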

-

-

I'm scared to edit my post because Discourse might try to back up the old version.

-

I seem to remember the pg_log was 5+GB based on one of the migration threads. Did Postgres logging get turned up for Discodevs to debug perf and never get turned back down? I suppose we could shit-can logging altogether since we are migrating.

-

app.yml has:

    db_logging_collector: on

and also:

    DISCOURSE_DEVELOPER_EMAILS: 'sam.saffron@[redacted because fuck spam].com'

which means @sam is a super-admin.

and also:

    RBTRACE: 1

which I assume makes ruby use more memory because what else would it do

-

Yeah shit I'm not going to do is figure out the convoluted fuck mess that is Discourse's YAML to postgres.conf mapper-fuck-time-CDN-circle-jerk.

-

Discourse is the closest thing the open source community has to a black box.

-

-

-

I would have thought there is space enough in that O to fit 'this'.

-

I would have thought there is space enough in that O to fit 'this'.

Depends. Is it a 32bit or 64bit pointer?

-

@Luhmann said:

I would have thought there is space enough in that O to fit 'this'.

Depends. Is it a 32bit or 64bit pointer?

Point remains that you are an airhead with a festive bonnet

-

@Onyx said:

@Luhmann said:

I would have thought there is space enough in that O to fit 'this'.

Depends. Is it a 32bit or 64bit pointer?

Point remains that you are an airhead with a festive bonnet

Fuck you.

It is not a bonnet.

-

Why don't we make/move backups to a remote server? That is the point of a backup, after all.

-

Why don't we make/move backups to a remote server?

They are. We're simply lacking the space to create the backup before it's moved there.

    postgres@sofa:~$ aws s3 ls s3://wtdwtf-back | tail
    2015-10-15 04:12:29  805042288 what-the-daily-wtf-2015-10-15-040000.tar.gz
    2015-10-28 04:11:56  823319487 what-the-daily-wtf-2015-10-28-040000.tar.gz
    2015-10-30 17:58:35  827685982 what-the-daily-wtf-2015-10-30-174149.tar.gz
    2015-11-13 09:26:33  850780734 what-the-daily-wtf-2015-11-13-091551.tar.gz
    2015-11-14 04:09:53  852532002 what-the-daily-wtf-2015-11-14-040000.tar.gz
    2015-11-17 10:40:09  856267253 what-the-daily-wtf-2015-11-17-102622.tar.gz
    2015-11-24 04:10:38  865032341 what-the-daily-wtf-2015-11-24-040000.tar.gz
    2015-12-01 04:12:35  876817179 what-the-daily-wtf-2015-12-01-040000.tar.gz
    2015-12-04 04:12:22  882940812 what-the-daily-wtf-2015-12-04-040000.tar.gz
    2015-12-08 04:09:36  889307881 what-the-daily-wtf-2015-12-08-040000.tar.gz
    postgres@sofa:~$

(And those are the SQL only ones that @shadowmod created - the auto full-backups simply aren't happening.)

-

I guess Discourse doesn't offer the option to back up over the network (skipping disk)?
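Outside of Discourse's own backup job, skipping the disk is just a pipe. A minimal sketch, assuming a plain tar of a directory is acceptable: `stream_backup` is a made-up helper, and the object key in the usage comment is invented. `aws s3 cp` does accept `-` as a source to read from stdin.

```shell
#!/bin/sh
# stream_backup SRC_DIR SINK_COMMAND...: tar and gzip SRC_DIR straight into
# SINK_COMMAND's stdin, so no archive is ever written to the local disk.
stream_backup() {
    src=$1
    shift
    # Caveat: in plain sh the pipeline's exit status is the sink's,
    # so a failing tar can go unnoticed.
    tar -C "$src" -cf - . | gzip -c | "$@"
}

# Hypothetical usage (bucket name from the listing above, key invented):
# stream_backup /var/discourse/shared/standalone/uploads \
#     aws s3 cp - s3://wtdwtf-back/uploads.tar.gz
```

A live postgres_data directory should not be tarred like this; a database dump piped through the same sink would be the equivalent for the SQL side.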

-

-

Is iGB apple's incompatible version of GB?

I like how he forgot to measure backups in gigglebytes halfway through the post!

Preserved for posterity:

Backups are going to be difficult with the 8GiB uploads folder and the 22GB postgres_data folder.

-

-

You would like that wouldn't you

Not particularly, I don't swing that way myself.

pervert

Yes, but not for the reasons you think.

-

-

This is scary, can you mount the backup folder elsewhere using nfs or something?

-

@PJH can you disable the automatic backup so I don't have to delete it every night to keep the forum online?

It was about 7GiB today, so we either used up 3GiB in the last 24 hours or something is Disconsistent.

-

@PJH how much of these can we get rid of?

    root@what:~# du -h /var/discourse/shared/standalone/{log,postgres_data/pg_*log}
    289M    /var/discourse/shared/standalone/log/rails
    12K     /var/discourse/shared/standalone/log/var-log/apt
    1.1G    /var/discourse/shared/standalone/log/var-log/nginx
    1.1G    /var/discourse/shared/standalone/log/var-log
    1.3G    /var/discourse/shared/standalone/log
    48M     /var/discourse/shared/standalone/postgres_data/pg_clog
    6.4G    /var/discourse/shared/standalone/postgres_data/pg_log
    4.0K    /var/discourse/shared/standalone/postgres_data/pg_xlog/archive_status
    225M    /var/discourse/shared/standalone/postgres_data/pg_xlog

I'm assuming we don't need a gigabyte of nginx access logs or three hundred megabytes of this:

    Sent mail to redacted@gmail.com (145.6ms)
    Rendered email/_post.html.erb (10.0ms)
    Rendered email/notification.html.erb (21.0ms)
    Sent mail to redacted@gmail.com (171.0ms)
    Rendered email/_post.html.erb (17.8ms)
    Rendered email/notification.html.erb (64.9ms)
    Rendered email/_post.html.erb (4.1ms)
    Rendered email/notification.html.erb (116.6ms)
    Rendered email/_post.html.erb (8.0ms)
    Rendered email/notification.html.erb (142.3ms)
    Sent mail to redacted@gmail.com (879.0ms)
    Sent mail to redacted@gmail.com (551.5ms)
    Sent mail to redacted@gmail.com (137.6ms)

or database logs going back to July that consist of this kind of stuff:

    WHERE cu.user_id = 671 AND cu.category_id = topics.category_id AND cu.notification_level = 0 AND cu.category_id <> -1 )) AND (NOT ( pinned_globally AND pinned_at IS NOT NULL AND (topics.pinned_at > tu.cleared_pinned_at OR tu.cleared_pinned_at IS NULL) )) ORDER BY topics.bumped_at DESC LIMIT 30 OFFSET 6149
    2015-12-20 05:14:49 UTC [134-643] discourse@discourse DETAIL: parameters: $1 = 't'
    2015-12-20 05:14:49 UTC [353-526] discourse@discourse LOG: duration: 135.302 ms execute a1037: SELECT COUNT(*) AS count_all, user_id AS user_id FROM "posts" WHERE ("posts"."deleted_at" IS NULL) AND "posts"."topic_id" = $1 AND (user_id IS NOT NULL) GROUP BY "posts"."user_id" ORDER BY count_all DESC LIMIT 24
    2015-12-20 05:14:49 UTC [353-527] discourse@discourse DETAIL: parameters: $1 = '1000'
    2015-12-20 05:14:50 UTC [1957-639] discourse@discourse LOG: duration: 177.776 ms execute a1026: SELECT COUNT(*) AS count_all, user_id AS user_id FROM "posts" WHERE ("posts"."deleted_at" IS NULL) AND "posts"."topic_id" = $1 AND (user_id IS NOT NULL) GROUP BY "posts"."user_id" ORDER BY count_all DESC LIMIT 24
    2015-12-20 05:14:50 UTC [1957-640] discourse@discourse DETAIL: parameters: $1 = '1000'

-

Ok, I changed the thing that says "log any query that takes more than 100ms" to "log any query that takes more than 10000ms". If it's okay with @PJH I can delete the 6½ gigglebytes of SQL query logs (or he can).

-

-

I'll look into some of that when I can get to a laptop...

-

I'm assuming we don't need a gigabyte of nginx access logs

yeah. the nginx log can be deleted safely, though you'll want to cycle nginx to make sure it starts logging to a new file instead of still writing to the deleted file.
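The reason for cycling nginx: removing a file only removes its name, and a process that still holds it open keeps writing to it (and keeps the disk blocks allocated). A small stand-alone demonstration, with the current shell standing in for the daemon; `deleted_log_demo` is a made-up name and fd 9 is an arbitrary choice.

```shell
#!/bin/sh
# Hold a log open on fd 9, then delete it from under the writer.
deleted_log_demo() {
    log=$(mktemp)
    exec 9>>"$log"
    rm "$log"
    if echo "still logging" >&9; then
        echo "write after rm: ok"
    else
        echo "write after rm: failed"
    fi
    if [ -e "$log" ]; then
        echo "name: still there"
    else
        echo "name: gone"
    fi
    # Closing the descriptor is what finally releases the blocks;
    # restarting the daemon or having it reopen its logs has the same effect.
    exec 9>&-
}

deleted_log_demo
# prints:
#   write after rm: ok
#   name: gone
```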

setting up logrotate would be a good idea too. how far back do those logs go?

three hundred megabytes of this:

why is discourse even logging that?!

or database logs going back to July

don't think we want those either

-

-

So if the actual database is only 15GB, how are we filling up nearly 60GB of space?

[poll type="multiple"]

- None of the above

- All of the above

- Discourse

- Someone uploaded a 45GB screenshot of Dwarf Fortress

- Dark matter

- @darkmatter

- Secret Jeff photo album

- The ~~NSA~~ CDCK is spying on us

- Somewhere deep in the bowels of the filesystem is all of the previous versions of Discourse.

- FILE_NOT_FOUND

[/poll]

-

to make sure it starts logging to a new file instead of still writing to the deleted file.

-

I'd actually prefer if it wrote to the deleted file, because at least then it would disappear when Discourse's horrible mangled nginx inevitably crashed.

-

-

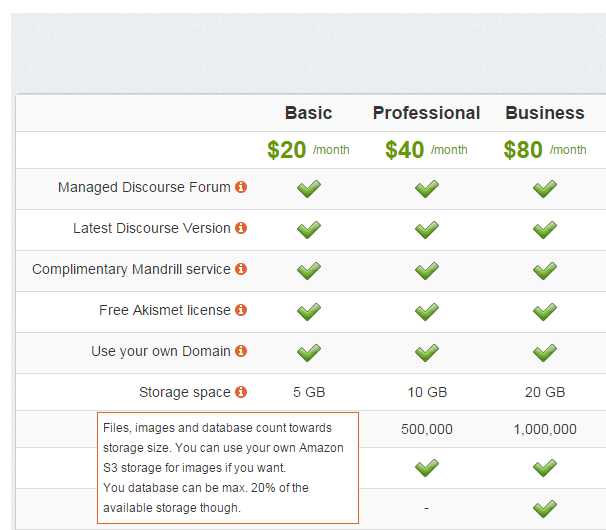

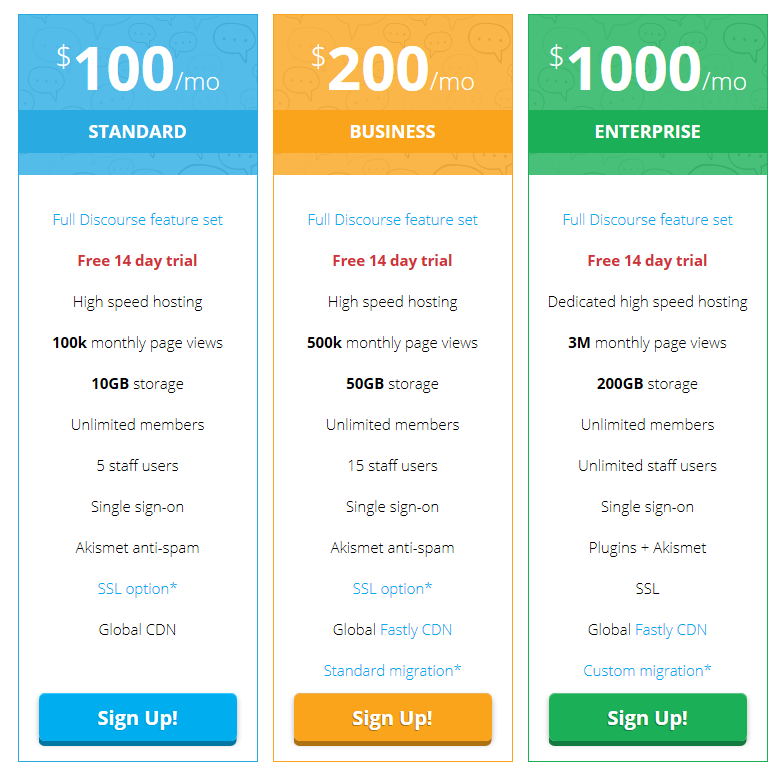

Presumably no-one using these guys has very many posts...

Your database can be max. 20% of the available storage

-

-

Meanwhile, at http://discourse.org

-

That's what they'll pay the users to test it, right?

-

That's what they'll pay the users to test it, right?

nope. that's what you have to pay to be QA for Discourse.

well i say QA, what i mean is you get to find the bugs, but if you dare to report them you will be told you are doing it wrong and then your bug report will be deleted.

-

Ah! And if you pay more the lesser percentage of your bugs will be deleted? Maybe?

-

Ah! And if you pay more the lesser percentage of your bugs will be deleted? Maybe?

nope!, but you will be less likely to be banned for reporting your "bugs"

-

Ah! And if you pay more the lesser percentage of your bugs will be deleted? Maybe?

Sure, if you can find a way to call them features. :P

-

though you'll want to cycle nginx to make sure it starts logging to a new file instead of still writing to the deleted file.

Little trick

    echo "" > access.log

No problems there.

-

that works too.

-

Well, you'll have a gigantic access log of nulls, but if your filesystem supports sparse files, that shouldn't be a problem until you try to open it with something that doesn't.

-

Little trick

    echo "" > access.log

No problems there.

that works too.

Ah, but does it?

Well, you'll have a gigantic access log of nulls

I'm not so sure, maybe someone should test this?

IIRC nginx will still have the same file link open, shouldn't echo-ing over it unlink the entry and create a new one? This still results in nginx writing to a "deleted" file instead of the (new) empty file?

Maybe my Linux isn't strong here, I would think

    killall -s USR1 nginx

would be more appropriate...
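For reference, nginx reopens its log files when the master process receives USR1, and `nginx -s reopen` sends that signal for you. A minimal sketch that signals the master by PID instead; `reopen_logs` is a made-up helper, and the PID file and log paths in the usage comment are assumptions that vary by build and by how the container is set up.

```shell
#!/bin/sh
# reopen_logs PIDFILE: send USR1 to the process whose PID is stored in PIDFILE.
reopen_logs() {
    pidfile=$1
    if [ ! -r "$pidfile" ]; then
        echo "cannot read PID file: $pidfile" >&2
        return 1
    fi
    kill -s USR1 "$(cat "$pidfile")"
}

# Hypothetical usage (both paths are assumptions):
# rm /var/log/nginx/access.log
# reopen_logs /var/run/nginx.pid
```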

-

shouldn't echo-ing over it unlink the entry and create a new one?

Why on earth would it do that?

It just opens the file and replaces its contents. It doesn't delete all the metadata.

-

Maybe my Linux isn't strong here,

For some reason I thought the Linux FS model worked differently.

-

I'm not so sure, maybe someone should test this?

No need to. I've done this lots of times to save servers from crashing on low space. Although this was with Apache I expect nginx to work the same way.

And yes, space is freed and all works normally, not sure what @ben_lubar means with that "nulls" comment.

-

Ah, but does it?

yeah, it does actually. it truncates access.log to 0 bytes, altering the metadata to do so, then echoes an empty string (followed by a newline) to the beginning of the file.

of course, depending on exactly how any existing program is accessing the file, that other program may not be aware of the new length and will continue writing where it thinks the end is, creating a sparse file with a lot of virtual nulls at the beginning.

that being said, if your program does this to log files you are doing it wrong, because you are keeping that data cached in your write buffer for longer than absolutely necessary, and in the case of log files you shouldn't do that.
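Both outcomes are easy to reproduce, and which one you get comes down to whether the writer opened the file in append mode. A sketch with the shell standing in for the logging daemon; `size_after_truncate` is a made-up name and fd 9 is arbitrary.

```shell
#!/bin/sh
# size_after_truncate MODE: write 10 bytes through a long-lived descriptor,
# truncate the file from outside, write 1 more byte, print the final size.
size_after_truncate() {
    mode=$1
    log=$(mktemp)
    case $mode in
        append) exec 9>>"$log" ;;  # O_APPEND: every write lands at the current end of file
        plain)  exec 9>"$log" ;;   # no O_APPEND: writes continue at the descriptor's own offset
        *) echo "usage: size_after_truncate append|plain" >&2; return 2 ;;
    esac
    printf '0123456789' >&9        # the writer's offset is now 10
    echo "" > "$log"               # the "little trick": file is now just a newline
    printf 'x' >&9                 # the writer logs one more byte
    exec 9>&-
    wc -c < "$log" | tr -d ' '
    rm -f "$log"
}

size_after_truncate plain     # prints 11: the 'x' lands at offset 10, leaving a hole of NULs before it
size_after_truncate append    # prints 2: the newline plus the 'x'
```

Append mode is the usual choice for log files, which would explain why the trick has worked fine in practice; a writer that behaves like the `plain` case is where the file of nulls comes from.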

-