I, ChatGPT

-

@Bulb said in I, ChatGPT:

Also one question (the cow) became trivial when I noticed they left the Craiyon watermark in it.

-

@Applied-Mediocrity are you kidding me? For the first 8 questions I picked left every time, and only once was that wrong, then right for all the remaining ones.

I tried again and it appears to be lightly randomized, as 2 or 3 were in a different order, but most stayed the same.

Also, asking which is the real Sugar is a cruel joke.

-

@kazitor said in I, ChatGPT:

From the authors of Crypto collapse? Get in loser, we’re pivoting to AI and The LLM is for spam comes Pivot to AI: the site

-

@topspin said in I, ChatGPT:

I picked left every time, and only once was that wrong, then right for all the remaining ones

-

@LaoC said in I, ChatGPT:

9/10 good rant would read again

Most organizations cannot ship the most basic applications imaginable with any consistency, and you're out here saying that the best way to remain competitive is to roll out experimental technology that is an order of magnitude more sophisticated than anything else your I.T department runs, which you have no experience hiring for, when the organization has never used a GPU for anything other than junior engineers playing video games with their camera off during standup, and even if you do that all right there is a chance that the problem is simply unsolvable due to the characteristics of your data and business? This isn't a recipe for disaster, it's a cookbook for someone looking to prepare a twelve course fucking catastrophe.

I'm going through his podcast, and I'm convinced he was a member here at one stage. We have to be the primary audience. He's also had a couple run ins with companies I've had run ins with and came to more bitter conclusions. How can I not like him!

-

@Applied-Mediocrity said in I, ChatGPT:

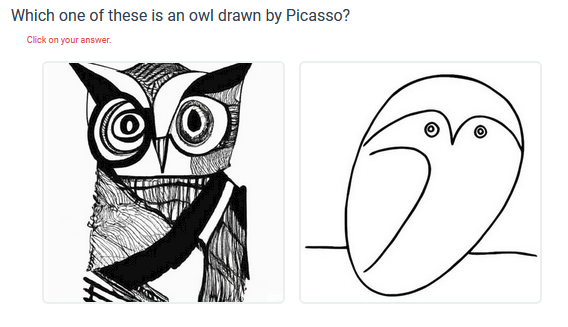

Test your recognition skillz:

https://www.pcmag.com/articles/how-to-detect-ai-created-images

That thing then told me:

Try talking to someone other than Siri once in a while.

Some of their "photographs" were so shitty that I did prefer the AI content. Obviously, some people manipulate their photos too much.

-

Somehow I suspect this will not reduce the total power draw of LLMs.

-

@izzion How dare they tank the shareholder value of Leather Jacket Man?

-

@izzion said in I, ChatGPT:

Somehow I suspect this will not reduce the total power draw of LLMs.

The paper doesn't provide power estimates for conventional LLMs, but this post from UC Santa Cruz estimates about 700 watts for a conventional model.

The technique has not yet been peer-reviewed, but the researchers—Rui-Jie Zhu, Yu Zhang, Ethan Sifferman, Tyler Sheaves, Yiqiao Wang, Dustin Richmond, Peng Zhou, and Jason Eshraghian—claim that their work challenges the prevailing paradigm

These changes, combined with a custom hardware implementation to accelerate ternary operations through the aforementioned FPGA chip

It's an ad.

-

@DogsB said in I, ChatGPT:

It's an ad.

Why do you think I link to the underlying article instead of /. when I can? Wouldn't want you to know it was a slashvertisement right off the bat

-

@DogsB I can't be arsed to read the 19 page paper right now, but that looks real enough.

Just don't expect tech writers to actually do their job and tell you WTF the paper says so you don't have to read it.

-

ars said in I, ChatGPT:

eliminating matrix multiplication

We got it down to a series of inner products!

-

@topspin said in I, ChatGPT:

@DogsB I can't be arsed to read the 19 page paper right now, but that looks real enough.

Just don't expect tech writers to actually do their job and tell you WTF the paper says so you don't have to read it.

I wonder if it's related to this sort of stuff:

ISTR that sort of thing making a previous appearance here in the News thread or something.

-

@boomzilla no, I don't think so.

Quickly scanning the preprint paper, it explicitly talks about not using (standard floating point) matrix multiplication at all. Previous ML stuff has already settled for low-precision floats (e.g. half-precision instead of `float` or `double`); this seems to go further in that direction by going for binary and ternary weights, so the matrix multiplications degenerate to accumulations or element-wise products.

The other news we previously discussed was about speeding up matrix multiplication algorithms. That is, either bringing down the naive O(n^3) further by improvements to Strassen's algorithm for large matrices, or just improving the actual number of arithmetic operations specifically for the case of small 4x4 matrices. If I remember correctly, the ML search found a way to do the latter.
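For anyone who wants to see what "fewer arithmetic operations" means in practice, here is a minimal sketch of the classic Strassen trick on a 2x2 product. It uses 7 scalar multiplications instead of the naive 8. This is the textbook 2x2 scheme, not the 4x4 result found by the ML search, and the function name `strassen_2x2` is just made up for illustration.

```python
def strassen_2x2(a, b):
    """Multiply two 2x2 matrices (nested lists) with 7 multiplications."""
    (a11, a12), (a21, a22) = a
    (b11, b12), (b21, b22) = b

    # The seven products of Strassen's scheme.
    m1 = (a11 + a22) * (b11 + b22)
    m2 = (a21 + a22) * b11
    m3 = a11 * (b12 - b22)
    m4 = a22 * (b21 - b11)
    m5 = (a11 + a12) * b22
    m6 = (a21 - a11) * (b11 + b12)
    m7 = (a12 - a22) * (b21 + b22)

    # Recombine using only additions and subtractions.
    return [
        [m1 + m4 - m5 + m7, m3 + m5],
        [m2 + m4, m1 - m2 + m3 + m6],
    ]


def naive_2x2(a, b):
    """Reference implementation with the usual 8 multiplications."""
    return [
        [sum(a[i][k] * b[k][j] for k in range(2)) for j in range(2)]
        for i in range(2)
    ]
```

Applied recursively to block matrices, saving one multiplication per level is what pulls the exponent below 3.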

-

-

A friend just posted this:

Apparently, today is the last day you can opt out of Facebook's plan to scrape all your photos and posts to train its generative AI. This article gives you the step by step instructions.

I did it - we'll see...

ROFL: Their response (in FB):

Thank you for contacting us.

We don’t automatically fulfill requests and we review them consistent with your local laws.

If you want to learn more about generative AI, and our privacy work in this new space, please review the information we have in Privacy Center.

https://www.facebook.com/privacy/genai (edit: I "broke" the link on purpose)

Thanks,

Privacy Operations

In other words: "Yeah, we're gonna do it anyways."

-

@dcon variations of that have gone around on FB for years about FB being able to use your stuff for whatever.

-

@izzion said in I, ChatGPT:

Somehow I suspect this will not reduce the total power draw of LLMs.

I remember reading about this a week or two back. It substitutes bigger matrices for more info per cell (they make it incredibly simple). Which actually works pretty well, and even is more biologically realistic; synapses are not sophisticated in terms of activation profile, and so could never pass floating point numbers anywhere.

-

@error said in I, ChatGPT:

@DogsB said in I, ChatGPT:

This is your irregularly scheduled reminder that killing off JavaScript entirely bypasses the Telegraph (and the Irish Times, IIRC, and a few others) paywall, with no visible bad side effect (it also sort-of removes pictures IIRC but I can't see that as a bad side effect since usually they're just filler stock photos).

-

@topspin Their github has the following:

The other left side images make me think they restrict the weights to be {-1, +1, 0}. The matrix multiplication then just becomes a series of additions or subtractions. Given that multiplications tend to be more expensive / take more space, that's a neat optimization.

The paper mentions the word "sparse" once, but that's a more or less obvious next step, if the data permits it.
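A minimal sketch of that idea, assuming the weights really are restricted to {-1, 0, +1}: the matrix-vector product needs no multiplications at all. This is only an illustration of the arithmetic, not the paper's actual kernel, and `ternary_matvec` is a made-up name.

```python
def ternary_matvec(weights, x):
    """Compute weights @ x where every weight is -1, 0 or +1.

    Each output element is built purely from additions and
    subtractions of the input values; zeros are skipped.
    """
    out = []
    for row in weights:
        acc = 0
        for w, xi in zip(row, x):
            if w == 1:
                acc += xi      # +1 weight: add the input
            elif w == -1:
                acc -= xi      # -1 weight: subtract the input
            elif w != 0:
                raise ValueError("weights must be -1, 0 or +1")
            # 0 weight: contributes nothing, so skip it
        out.append(acc)
    return out
```

Skipping the zero weights is also where the sparsity angle would come in: the more zeros, the fewer additions.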

-

@BernieTheBernie said in I, ChatGPT:

Try talking to someone other than Siri once in a while.

Insinuating that you are ~~allergic to people~~ using products? I'd be pissed if I were you.

-

@Zerosquare

I drank a liter of apple ~~jews~~juice yesterday, so...

-

-

LOLwut? You tell the model "trust me bro, this is safe!!!!1" and that's the jailbreak? Some guardrails™.

-

@LaoC said in I, ChatGPT:

LOLwut? You tell the model "trust me bro, this is safe!!!!1" and that's the jailbreak? Some guardrails™.

TFA:

As this is an attack on the model itself, it does not impute other risks on the AI system, such as permitting access to another user’s data, taking control of the system, or exfiltrating data.

I don't get why that matters so much, or why we need censorship beyond confidential information disclosure.

-

-

@error said in I, ChatGPT:

@LaoC said in I, ChatGPT:

LOLwut? You tell the model "trust me bro, this is safe!!!!1" and that's the jailbreak? Some guardrails™.

TFA:

As this is an attack on the model itself, it does not impute other risks on the AI system, such as permitting access to another user’s data, taking control of the system, or exfiltrating data.

I don't get why that matters so much, or why we need censorship beyond confidential information disclosure.

It shouldn't, but some people want to market their "assistants" as something that can safely be taken for a human role model by kids. Also, ass covering because there are legal snags when search engines start producing original content instead of just pointing people to stuff others have published.

-

@error said in I, ChatGPT:

I don't get why that matters so much, or why we need censorship beyond confidential information disclosure.

Because the whole thing isn't based on logic. It's based upon trying to hide dirt under the carpet.

They're scared of their LLM telling people "dangerous stuff". Okay.

Except current LLMs have no reasoning capabilities. Thus, they cannot deliberately create dangerous content out of harmless content.

Thus, if it generates dangerous content, it's either:

- hallucinating (e.g. telling something is safe when it isn't).

  Which would not be a problem if people treated LLM-generated answers with a grain of salt, and double-checked them, right?

  Oh wait, can't have that; the illusion of LLMs being omniscient wizards must be preserved at all costs. Otherwise, who would pour billions of dollars into them?

- repeating dangerous content that existed in the training set.

  Of course, they can't admit that. Otherwise, they'd have to reveal that the training set is built by a massive, indiscriminate scraping of data with zero attention paid to origin, quality and accountability. Because doing the right thing -- actually curating stuff that gets used to train the LLM -- costs too much money.

So they're caught in their own lies, and desperately try to hide them by using lame censoring patches on the output of the LLM. Of course, this approach is both futile and brittle.

Bonus consideration: the contents of the training set largely come from public sources.

Which means the "dangerous stuff" has been available to people way before LLMs existed.

Which means either:

a) it's not actually that dangerous

b) it is actually dangerous, but the risk was always there -- it's just that people were too lazy to look for it (i.e. security by obscurity)

c) both.

-

-

@Zerosquare said in I, ChatGPT:

Because doing the right thing -- actually curating stuff that gets used to train the LLM -- costs too much money.

I reckon it might even be practically impossible. Even with all the VC billions it's presently being done by thousands of brown people in low-income countries, who are paid peanuts and don't give the slightest fuck. Some of the more clever ones use AI to help them classify what goes into the next AI. GIGO!

-

@Applied-Mediocrity said in I, ChatGPT:

I reckon it might even be practically impossible.

That's my hunch as well, at least for general-purpose AI (as opposed to domain-specific stuff) and with "dumb" LLM algorithms.

-

On a somewhat lighter note, I've ordered new glasses today. The top-range option for lenses was advertised as "AI-enhanced", with plenty of buzzwordry.

We're talking about freaking pieces of transparent plastic.

I told the optician that this was getting ridiculous, and she agreed with me.

-

-

I already see AI everywhere. I don't need more of it

-

@Zerosquare said in I, ChatGPT:

On a somewhat lighter note, I've ordered new glasses today. The top-range option for lenses was advertised as "AI-enhanced", with plenty of buzzwordry.

We're talking about freaking pieces of transparent plastic.

I told the optician that this was getting ridiculous, and she agreed with me.

-

@LaoC said in I, ChatGPT:

LOLwut? You tell the model "trust me bro, this is safe!!!!1" and that's the jailbreak? Some guardrails™.

What you need is a second watchdog model as a sort of firewall, and you would instead have to tell that one ‘this is safe’.

-

That's a terrible picture of Scarlett Johansson.

-

@DogsB said in I, ChatGPT:

due to concerns over how it [Meta] would gather information from users.

It's a little late to start worrying about that now, isn't it?

-

First thought:

is "horse purse"?

Turns out it's a "designer shopping bag" shaped like a horse, and now I hate everyone involved in this story because they came up with something dumber than generative AI.

-

@boomzilla fashion designers and AI. A match made in hell.

-

@Zerosquare said in I, ChatGPT:

The top-range option for lenses was advertised as "AI-enhanced"

I've seen ads for those on TV too.

-

@topspin said in I, ChatGPT:

@boomzilla fashion designers and AI. A match made in hell.

That might explain some of those things on the runway.

-

@dcon Those existed long before AI, or at least before it was widely available or entered public consciousness. I chalk those (most of them, anyway) up to good old-fashioned drugs.

-

@HardwareGeek said in I, ChatGPT:

@dcon Those existed long before AI, or at least before it was widely available or entered public consciousness.

I chalk those (most of them, anyway) up to good old-fashioned drugs.

Pretty sure that applies to AI too.

-

I will send lego to anyone who states the obvious.

-

-

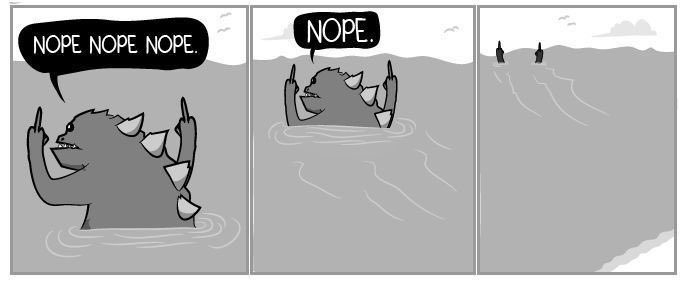

@kazitor said in I, ChatGPT:

@DogsB said in I, ChatGPT:

I will send lego to anyone who states the obvious.

“This is moronic.”

Close but no cigar. Try invoking the spirit of our departed feathered friend.

-

@DogsB If they'd written it in Rust, it'd work perfectly out of the box?

-

@DogsB said in I, ChatGPT:

Try invoking the spirit of our departed feathered friend.

This is… asinine? No that’s not it… Ah! “This is bird-brained.”

-

No to both of you but I am getting better mileage than expected out of this.

Last hint :

-

@DogsB I'm guessing what you're looking for is something like this.

Now for my Lego

I want one of those.

Researchers upend AI status quo by eliminating matrix multiplication in LLMs
