I, ChatGPT

-

@Zerosquare said in I, ChatGPT:

@remi said in I, ChatGPT:

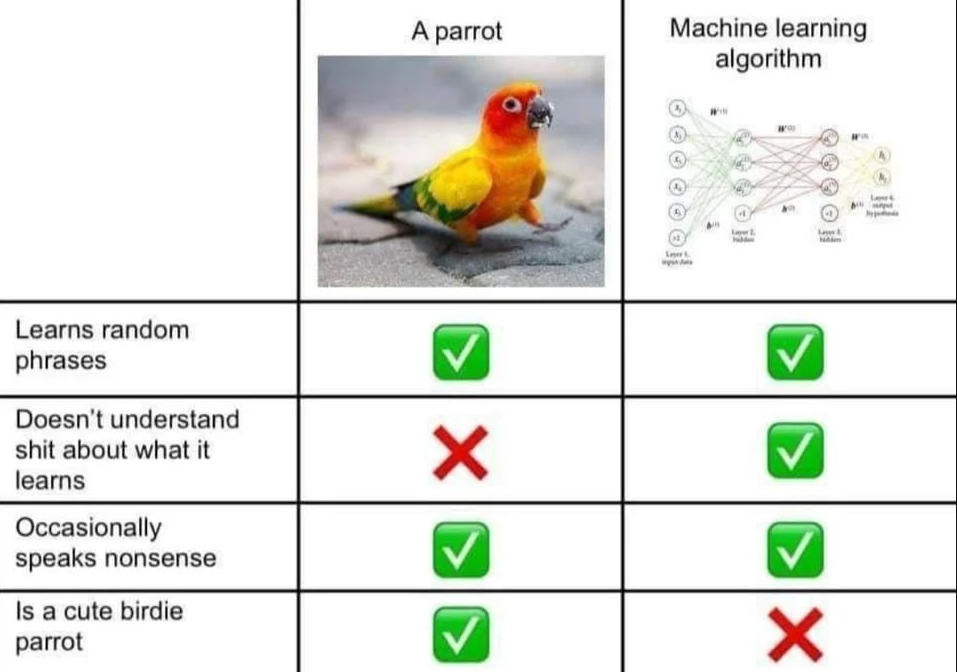

LLMs are producing birds that look amazingly like birds and flap their wings like birds

I disagree. My parrot neither looks, nor acts, like an LLM.

(I had to amend this image. The original had shameless propaganda on the second row.)

-

@remi said in I, ChatGPT:

But! The underlying assumption of many people seems to be that language == human intelligence (or maybe that language => human intelligence). The idea, and it wasn't (isn't?) necessarily a bad idea, was (is?) that since human intelligence => language, trying to produce language is clearly necessary to get to AGI, and that maybe by producing more and more "human" language it would look more and more like intelligence. And it does look "more and more like...", but it's still not something anyone sane regards as "equal to..."

I'm a bit torn about the use of the word "language" here, as I think we've long surpassed what I originally thought of as a language model before the Large ones came up. It's more like producing human-like speech/text/conversation/whatever, which happens to be expressed in a certain language. But that might just be my interpretation of the word, and is really just an irrelevant aside. Other than that:

There is something to that equation. It's like the original Turing test. For the language model to produce more human-like "language" in a conversation, it needs to become more and more indistinguishable from human speech. And at that point it means passing the Turing test, which outside the LLM discussion was well accepted as intelligence.

That implies a "language" model should be able to do some trivial reasoning. That sounds like it's well outside of "language", but if answers that fail to do trivial reasoning aren't human-like, then it's part of the language model. And we've seen quite impressive results here, even if it's still very easy to get them to fuck up.

I don't think LLMs will lead to AI (the constant-time output alone seems to completely rule that out), but they're still vastly more powerful than we'd have expected them to be.

-

@topspin said in I, ChatGPT:

I'm a bit torn about the use of the word "language" here,

I agree, the term "language" is a bit of a misnomer. "Discourse" would probably be a better one. But I guess it works well enough; most people (at least here) understand what is meant there.

There is something to that equation. It's like the original Turing test.

Absolutely, which is why I said it wasn't necessarily a bad idea. But what I'm saying is that the approach of "pass the Turing test by imitating all external characteristics of language" looks a bit like "fly by imitating all the external characteristics of birds."

My opinion is that it doesn't quite work for birds, and it doesn't really sound like it's working for AI either.

-

@sockpuppet7 said in I, ChatGPT:

@Carnage said in I, ChatGPT:

@sockpuppet7 said in I, ChatGPT:

@dkf said in I, ChatGPT:

@sockpuppet7 said in I, ChatGPT:

they seem to train with everything they got, and later use fine tuning to shape it, and that appears to work better than training with less, selected data, if I understand any of what I read about it

The problem is that means the only answers it can ever generate are projections from its training set. If that's what you're looking for, that's great. If you need anything that isn't just a projection of what went before, LLMs are exactly the wrong tool.

you should know it's BS, neural networks generalize and create new things on the patterns it learned

now you have billions of parameters, with a ridiculous amount of data to form connections, and it definitely writes things unlike anything before

put some reinforcement learning over it to make it smarter with its experience and we'll soon have our terminators, if global warming doesn't finish us before it

This is the socks gnome business plan, except it's about collecting data and still a ? on the magic step.

you guys see gpt-4 generating impressive things and dismiss it because it's less than human intelligence, but it's damn close. dunno what kind of belief makes you dismiss it like some dumb autocomplete

I think the emergent behaviors we're seeing are really cool, and honestly better than I thought they'd be. But like

said, not AGI, not going to be AGI, even though it's an interesting step closer.

-

-

@dkf said in I, ChatGPT:

@Arantor said in I, ChatGPT:

No-one is suggesting that ChatGPT is just a Markov chain generator.

Because it's not, and you know that we know, that's a fun strawman you're building there.

It kind-of is except that it has vastly more states. You could expand an LLM into Markov Chain form, but you'd need a stupid amount of storage to do it. It's one of those theoretical transformations that nobody ever does for real because we want the result to be representable in this universe, just like you could write a modern (non-network-connected) computer out as a finite state machine.

The attention thing used in LLMs is Turing complete

A Markov algorithm can be Turing complete too

Lots of math on these links, I'm trusting the authors' conclusions
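
To put a rough number on the "stupid amount of storage" point quoted above, here is a minimal sketch. It assumes the Markov state is the entire context window, so a vocabulary of V tokens and a window of k tokens gives up to V ** k states. The function name and the sizes used are hypothetical, picked only for illustration and not taken from any particular model.

```python
import math


def markov_state_digits(vocab_size: int, context_len: int) -> int:
    """Return roughly how many decimal digits vocab_size ** context_len has.

    Assumption: one Markov state per possible context window, so an
    order-k chain over V tokens has up to V ** k states. The digit count
    is computed with logarithms instead of building the huge integer.
    """
    if vocab_size < 2 or context_len < 1:
        raise ValueError("need vocab_size >= 2 and context_len >= 1")
    return math.floor(context_len * math.log10(vocab_size)) + 1


# Hypothetical sizes, chosen only for illustration.
digits = markov_state_digits(vocab_size=50_000, context_len=2_048)
print(f"the state count has about {digits} decimal digits")
```

For comparison, the number of atoms in the observable universe is usually estimated at around 80 decimal digits, which is the sense in which the expanded form is not "representable in this universe".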

-

@boomzilla said in I, ChatGPT:

I think the emergent behaviors we're seeing are really cool, and honestly better than I thought they'd be. But like

said, not AGI, not going to be AGI, even though it's an interesting step closer.

I agree on not-agi. I disagree on dismissing it as a dumb autocomplete, which they are probably using as hyperbole anyway

-

-

@boomzilla said in I, ChatGPT:

I'd also put the paying/product meme here, but slack charges for the privilege of being data mined.

-

@sockpuppet7 said in I, ChatGPT:

@boomzilla said in I, ChatGPT:

I think the emergent behaviors we're seeing are really cool, and honestly better than I thought they'd be. But like

said, not AGI, not going to be AGI, even though it's an interesting step closer.

I agree on not-agi. I disagree on dismissing it as a dumb autocomplete, which they are probably using as hyperbole anyway

I'm not dismissing it as that, though that's mostly what I've found it useful for when coding. Sometimes it's very smart (can generate lots of boilerplate stuff that's more complicated than what normal IDE stuff does), sometimes very dumb (in that it sometimes hallucinates things like methods to autocomplete).

I've also chatted with it to help me come up with names for things.

-

RTFAing, it sounds like DeviantArt was mostly on the way out for a few years before shooting itself in the foot by trying to resuscitate itself by jumping on the AI train.

-

-

@HardwareGeek note that while we still haven't managed to create AI, I think we also still haven't managed to create AS.

Make of that what you will...

-

@remi said in I, ChatGPT:

I think we also still haven't managed to create AS.

Having played certain EA games, I must disagree. For many years I have said their games' so-called AI is actually AS.

-

-

@cvi said in I, ChatGPT:

@Arantor said in I, ChatGPT:

A simpler example, one I literally just pulled from ChatGPT-3.5 because I'm not paying for it...

Makes sense to send one person ahead to scout and make sure it's safe.

Poor survival strategy. There might be a bear on your side, and if your friend is on the other side, you won't have anyone to outrun.

-

@DogsB There might be a bear on the other side, and this way you won't even have to run. Just calmly stroll away while the screams fade with the distance.

-

-

-

-

-

@loopback0 said in I, ChatGPT:

@Arantor said in I, ChatGPT:

But the whole thing is going to start crumbling down before long

Sooner rather than later hopefully. The obsession with GenAI needs to stop.

If only it was actual obsession with GenAI instead of marketing-driven promises to bring it about if we just throw enough money and data on the latest LLM.

-

@LaoC said in I, ChatGPT:

@loopback0 said in I, ChatGPT:

@Arantor said in I, ChatGPT:

But the whole thing is going to start crumbling down before long

Sooner rather than later hopefully. The obsession with GenAI needs to stop.

If only it was actual obsession with GenAI instead of marketing-driven promises to bring it about if we just throw enough money and data on the latest LLM.

-

@LaoC LLMs were said to be a real threat to Google at the peak of the hype, no wonder they reacted fast

-

-

@remi said in I, ChatGPT:

it was far too easy to get it into a loop or otherwise stuck, and the LLMs are better and better in that regard

IDK, I spent a significant number of rounds trying to get it to generate a story that didn't end in literally the same conclusion, as if it couldn't help but spout that paragraph....

-

@Gern_Blaanston I feel like I should feel insulted, but not sure what about...

-

-

@boomzilla said in I, ChatGPT:

Good analysis. Indeed, LLMs are probably always going to be plagued by prompt injection. But I think the really interesting part is the conclusion:

Generative AI is more than LLMs. AI is more than generative AI. [...] Engineers will be tempted to grab for LLMs because they are general-purpose hammers; they’re easy to use, scale well, and are good at lots of different tasks. Using them for everything is easier than taking the time to figure out what sort of specialized AI is optimized for the task.[...] Maybe it’s better to build that video traffic-detection system with a narrower computer-vision AI model that can read license plates, instead of a general multimodal LLM.

All the hype right now is about LLMs and trying to apply them to absolutely everything, but AI has been around for longer than LLMs and there are quite a few other applications of AI that do work, outside of "language." The software I'm working on has a module that uses a neural network to predict some data and that works great. Or, at least, well-enough. Not a hint of LLM in there, but definitely AI (by modern use of the word...).

So maybe all we need now is to wait a bit until the LLM hype subsides, and people realise that while AI can do a lot of stuff, specific tools must be built for it to do so.

Then again, a lot of people are still so hooked on the idea that "if we flap like birds, we'll end up flying" (see my posts above) that they are probably going to keep plodding on with LLMs for quite some time, in the hope that "just a bit more power" will get them there...

-

@topspin said in I, ChatGPT:

They helpfully included a definition of insanity button.

Computers are driving us insane. Because with computers, trying the same thing and getting a different result, at least sometimes (and more and more often as time progresses), works.

-

@loopback0 said in I, ChatGPT:

@LaoC said in I, ChatGPT:

@loopback0 said in I, ChatGPT:

@Arantor said in I, ChatGPT:

But the whole thing is going to start crumbling down before long

Sooner rather than later hopefully. The obsession with GenAI needs to stop.

If only it was actual obsession with GenAI instead of marketing-driven promises to bring it about if we just throw enough money and data on the latest LLM.

I may have confused "gen" for "general" vs. "generative"

-

@LaoC still largely applies though.

The G does a lot of lifting.

-

@Arantor Artificial Googled Intelligence...

-

-

Google doesn’t have any intelligence any more.

-

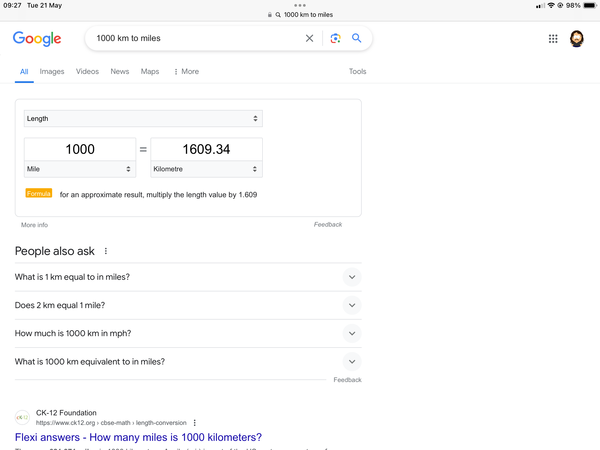

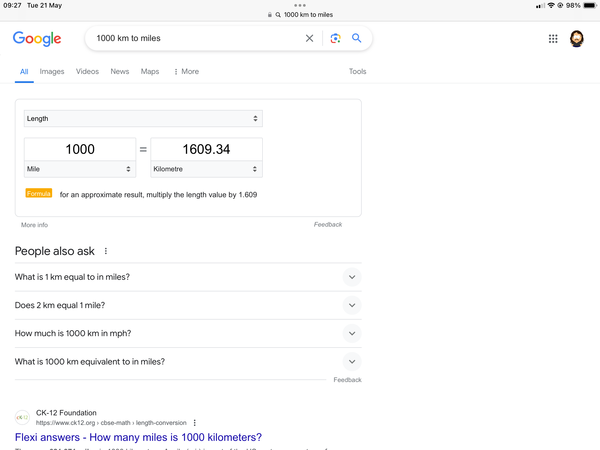

@Arantor and here I thought using Planck's constant instead of hours was stupid ...

-

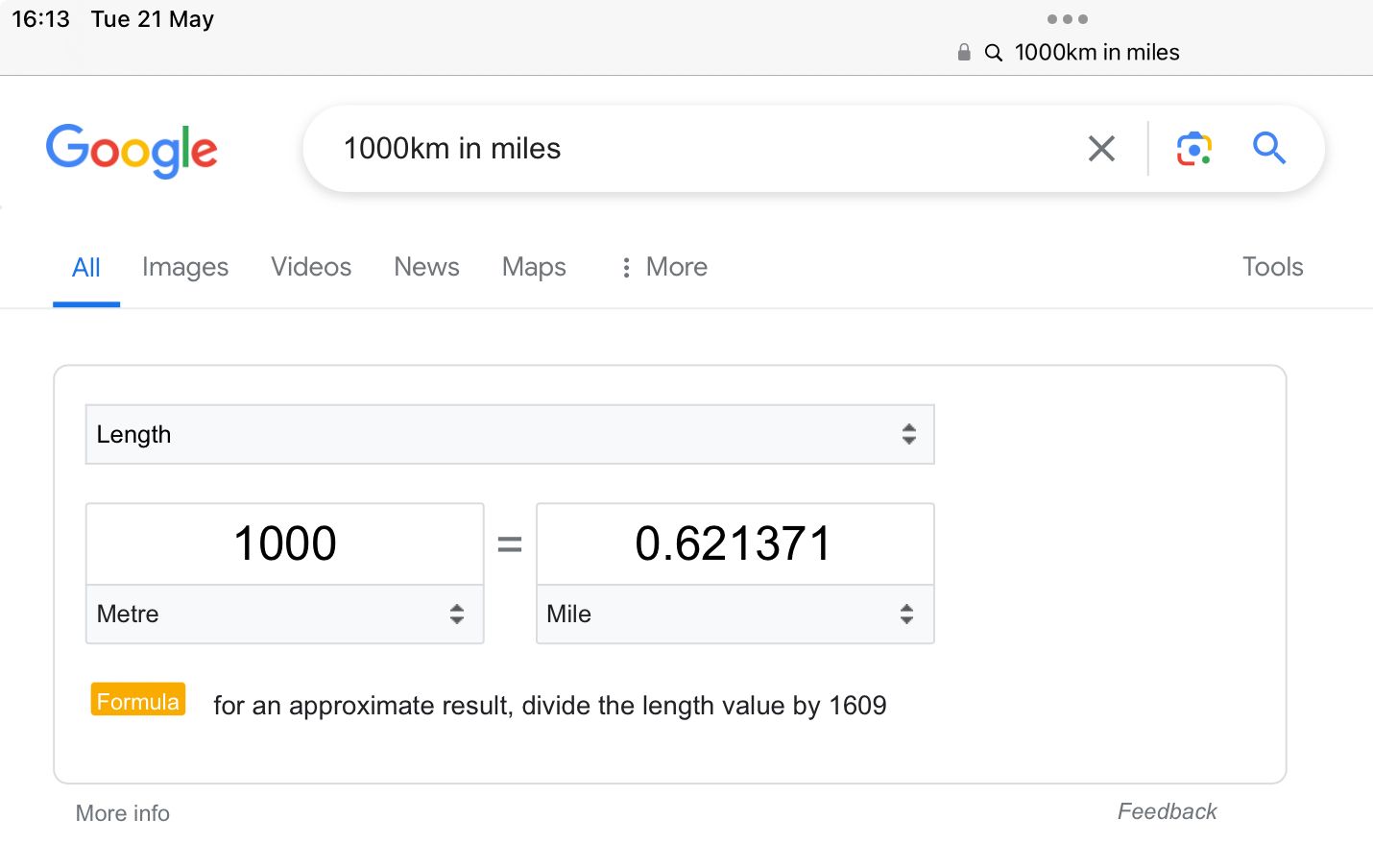

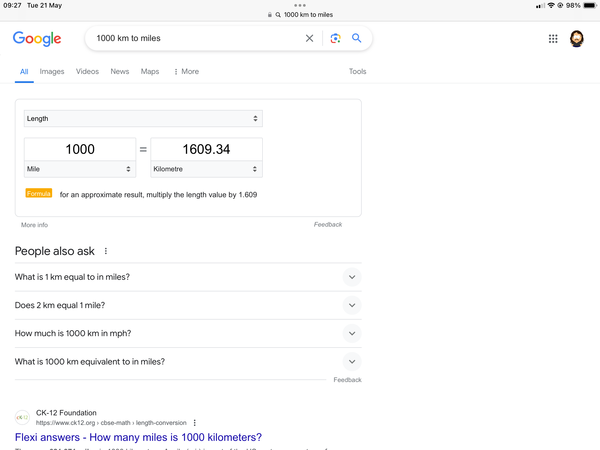

@topspin I mean this is a better example than some of the ones I saw running around Twitter, same question (1000km to miles) but it came back with things like 1000 miles in feet, or 1000 meters in miles.

And this happened almost exactly the same time that web searches were deprecated in favour of AI searches.

The one consolation is that this is Google so we can expect it to die of boredom and change it again “soon”.
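
For reference, the conversion being asked for is a one-line calculation. A minimal sketch, assuming the international mile (defined as exactly 1.609344 km); the function name is just for illustration:

```python
KM_PER_MILE = 1.609344  # exact, by definition of the international mile


def km_to_miles(km: float) -> float:
    """Convert kilometres to international miles."""
    return km / KM_PER_MILE


print(f"1000 km is about {km_to_miles(1000):.1f} miles")
```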

-

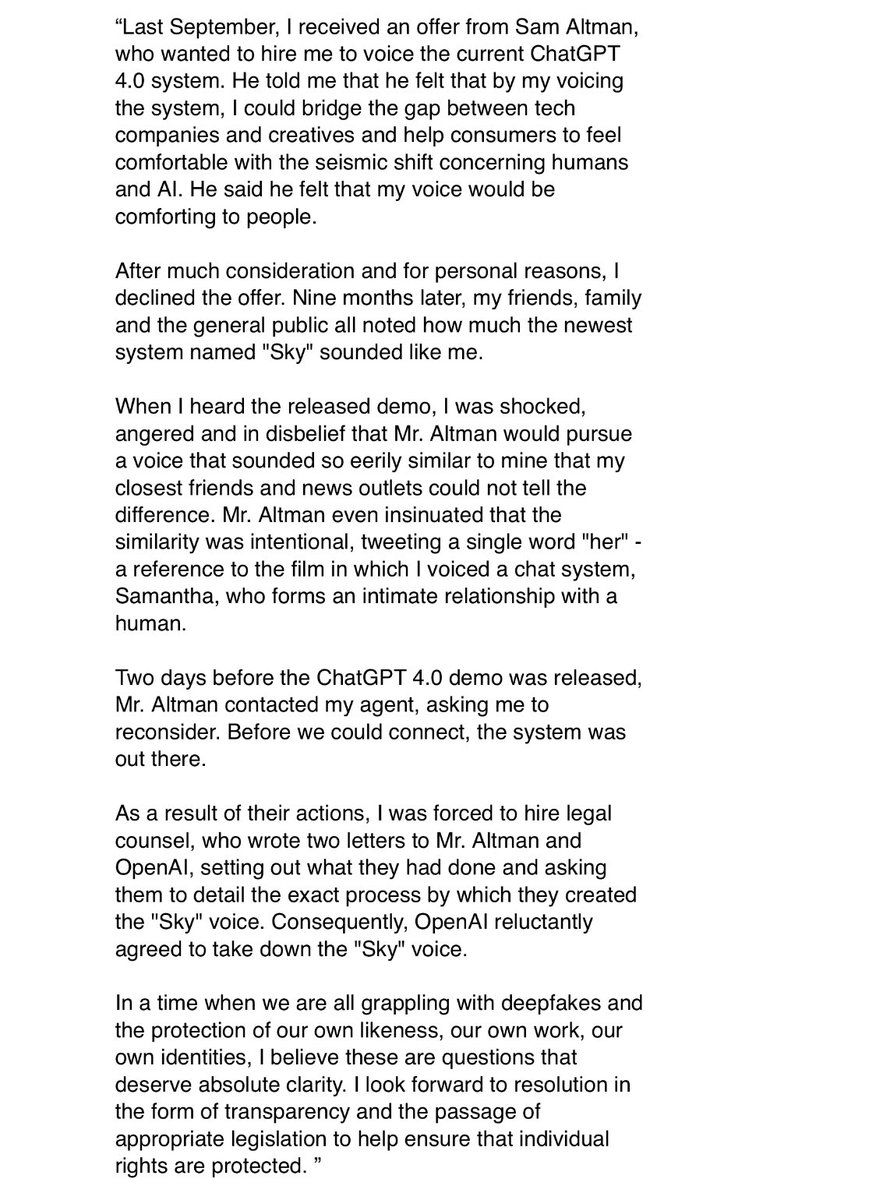

Statement from Scarlett Johansson on the OpenAI situation. Wow:

-

That’s the thing about the current AI providers, this, the Slack opt-out situation, they all feel very entitled to just use things as they see fit and are all

when people get upset by it.

-

@Zecc absolutely brillant.

Hey, do you mind if we use your voice for our AI tools? Wait, you do? Well, we didn't really intend to ask for permission.

-

@topspin said in I, ChatGPT:

@Zecc absolutely brillant.

Hey, do you mind if we use your voice for our AI tools? Wait, you do? Well, we didn't really intend to ask for permission.

She probably signed her voice away to Disney. Fucking with Disney and their IP lawyers is definitely not on my list of shit I should try once.

-

@topspin said in I, ChatGPT:

Hey, do you mind if we use your voice for our AI tools? Wait, you do? Well, we didn't really intend to ask for permission.

I'm reminded of a couple of lines in another Scarlett Johansson movie:

"I don't give my consent!"

"We never needed it."

-

@topspin said in I, ChatGPT:

Hey, do you mind if we use your voice for our AI tools? Wait, you do? Well, we didn't really intend to ask for permission.

It reminds me, back when I worked on navigation software, we switched from someone recording the guidance messages to generating them with text-to-speech, since for a ~15-person company it would be quite an effort to record in all the various languages we wanted to support. We used https://acapela-box.com/. And I am somewhat confident many of the voices have been created from recordings of public speeches and such.

In fact they still have a “Queen Elizabeth” among British voices and I doubt they asked her to record anything specifically for them.

-

@Bulb I sincerely hope that after every successful "please take a left turn" she congratulates you with "beautiful".

-

@Bulb it is buried in the TOS that some celebrity voices are recordings of impersonators. And they do have a clause that you shouldn’t use the output to pretend to actually be that celebrity.

-

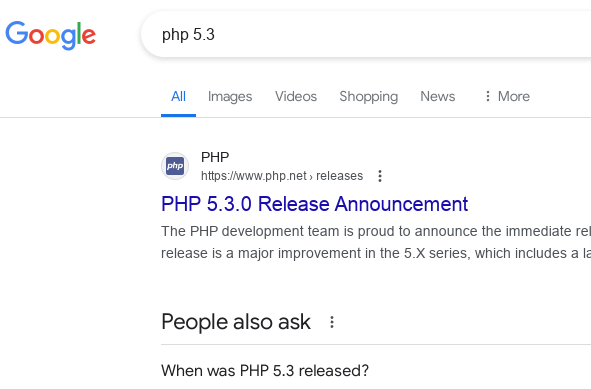

I don’t know if this is Google brain measles or not but I don’t recall it happening before now.

Like the currency conversion is somehow the default here, wtf?

-

However, phone gives a similar result:

Once I put 5.3 in there everything switches to the PHP we usually talk about around here.

-

@Arantor said in I, ChatGPT:

Google doesn’t have any intelligence any more.

Oddly, if I ask for "1000 km in miles", it works (but I can fully reproduce your example).

-

@Arantor said in I, ChatGPT:

Google doesn’t have any intelligence any more.

Perfectly reasonable. Why would anyone want to convert from kilometers (which are an international standard unit) to something as archaic as miles?

-

Well, unfortunately in my country, distances are signposted in backwards units.

But I also give you this little gem of genius.