V8's implementation of Math.random() was fucked

-

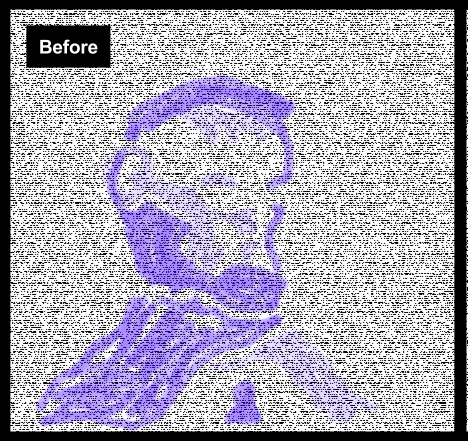

TL;DR: Google's JavaScript engine used an old, not-particularly-good PRNG to implement Math.random(), and they used an old version of it that was known-buggy when they integrated it. The result was random output that looks like this:

http://1.bp.blogspot.com/-92e22bUMwwk/VnKQn2VT3EI/AAAAAAAAB-E/8XD12xuGTXc/s1600/Untitled%2Bdrawing.png

-

I remember bolting the Mersenne Twister into a roguelike about 15 years ago for basically that reason.

-

Man that explains why that WebGL shader I wrote ages ago couldn't produce any decent noise.

-

With the Mersenne Twister being so good, well tested and widely available, why would anyone bother using anything else?

Heck, V8 is written in C++, why not just call

rand()and let the standard library do it for you?

(edit: yes, yes, I get it, rand() sucks)Well, at least it's not as bad as Java's "SecureRandom".

-

why not just call

rand()and let the standard library do it for you?Because

rand()is notoriously bad (far worse than the broken algorithm that was in use) and is going to be deprecated soon if it isn't already. Instead, we now use the random number generators which were introduced in C++11:

-

Mersenne Twister is relatively slow compared to other PRNG implementations, and it has a relatively large amount of state (~2 kilobytes).

But yes, it would have been a way better decision.

(in addition to rand() often being famously terrible like LB_ pointed out, IIRC the algorithm isn't specified, and the V8 developers probably don't want to rely on the standard library they end up linking with having a sane implementation)

-

V8 developers probably don't want to rely on the standard library they end up linking with having a sane implementation

So they decided to be sure and wrote their own insane implementation.

-

Oh, also RAND_MAX is only guaranteed to be >= 32767, whereas JS rand() is required to return values up to 2**32. Expanding the former to the latter could result in some distribution and periodicity issues.

-

With the Mersenne Twister being so good, well tested and widely available, why would anyone bother using anything else?

Generally because you're talking about software written before MT existed, or that was written by people who didn't know about it, or because it wasn't necessarily a good fit for the application.

Heck, V8 is written in C++, why not just call rand() and let the standard library do it for you?

It's well-known that lots of C and C++ implementations historically have had bad PRNGs behind rand() et all.

-

RAND_MAX is only guaranteed to be >= 32767

Galactic Bloodshed was written with the expectation of a 48-bit RNG being available and (for example) universe generation failed horribly with a 16-bit RNG.

-

Because it's slow. I would recommend an LFSR or xorshift.

-

Heck, V8 is written in C++

standard library

-

Hanzo'd by LB_, it got only introduced in C++11.

-

C++0x is ancient. It's like a quarter of my age. That's pretty old for software.

-

Hanzo'd by LB_, it got only introduced in C++11.

The RNGs were already included in the TR1.

C++0xTR1 is ancient. It's like aquarterhalf of my age.FTFY.

-

Galactic Bloodshed

Yes and obviously this site (game?) nobody's ever heard of should be the driving force behind ALL technology decisions everywhere.

-

Yes and obviously this site (game?) nobody's ever heard of should be the driving force behind ALL technology decisions everywhere.

In a way similar to the way everyone's heard of "retsupurae", right?

You don't need to be familiar with it for the purpose of the reason I mentioned it.

-

In a way similar to the way everyone's heard of "retsupurae", right?

That always sounds like some kind of gross medical condition.

-

why not just call rand() and let the standard library do it for you?

As far as the C/C++ standard is concerned that would be fine. It doesn't specify any algorithm. Unfortunately, POSIX specifies an algorithm, and it's not a very good one. So people on POSIX-compliant systems are SOL if they use

rand().POSIX long ago introduced

random()which is a much better algorithm. Why they didn't simply change the algorithm used byrand()(as many non-POSIX platforms did) instead is a good question. I assume they were concerned that some apps might be dependent on the specific algorithm used and didn't want to risk breaking them.The C++11 approach is an interesting solution to this problem. Instead of providing a single implementation-defined algorithm, there is a basket full of different well-defined algorithms to choose from. Great if you're doing something where it's important, but a bit confusing if you don't care and just want to get a reasonably-random sequence of numbers.

-

you don't care and just want to get a reasonably-random sequence of numbers.

That's what

std::default_random_engineis for. There's no excuse to callrand()in modern C++.

-

Just call it 2-3 times in a loop, what's the big deal, eh?

-

Heck, V8 is written in C++, why not just call rand() and let the standard library do it for you?

Because rand() is not a C++ way to generate random numbers. rand() is C and it sucks balls.

-

I assume they were concerned that some apps might be dependent on the specific algorithm used and didn't want to risk breaking them.

Historically, that seems to be the reason for a lot of seemingly strange decisions by both the C and C++ committees.

-

@David_C said:

I assume they were concerned that some apps might be dependent on the specific algorithm used and didn't want to risk breaking them.

Historically, that seems to be the reason for a lot of seemingly strange decisions by both the C and C++ committees.

Looking a bit further, this situation (

rand) appears to not be the fault of any standards body but a de-facto standard by C library implementers who decided for some reason (probably backward compatibility) that it would be better to leave the broken alorithm in-place and tell developers to use a new function for new applications.ISO-9899:2011 (C language) doesn't mandate any particular algorithm for

rand()- it only says (section 7.22.2.1):- There is no requirement to avoid race conditions with other pseudo-random functions

- The implementation shall behave as if no library function calls

rand RAND_MAXshall be at least 32767

C++'s ISO standard doesn't mention

rand()at all. Its support is only implied by the fact that<cstdlib>is meant to wrap the entire contents of C's<stdlib.h>.I had thought POSIX specified an implementation, but when I looked up [URL=http://pubs.opengroup.org/onlinepubs/9699919799/functions/rand.html]that standard[/URL], the definition is a clone of the C language definition, and explicitly states that any differences are unintentional.

So there really is no excuse for a compiler/library to ship with the well-known-broken version of

rand()that is commonly (but not universally) shipped. Fortunately, it appears that those implementations that use the broken algorithm also include the set of POSIX random number functions as well, so portable code written in C (or that needs to be portable to a pre-11 C++ compiler) can work around the problem without providing a private implementation. (For example, write a wrapper function that either callsrandom()orrand()depending on what's available) But there is no good reason why developers should have to do this.Looking a bit further, it appears that the Linux community did decide to fix this at long last. The [URL=http://linux.die.net/man/3/rand]man page[/URL] states that

rand()andrandom()use the same generator these days and that only older implementations use the broken algorithm.Of course, neither of these algorithms is cryptographically secure. But that's not a problem if the number are not being used for security purposes.

-

That “before” image looks a lot better to replicate snow on a TV screen than pure random noise does.

-

Looking a bit further, this situation (rand) appears to not be the fault of any standards body but a de-facto standard by C library implementers who decided for some reason (probably backward compatibility) that it would be better to leave the broken alorithm in-place and tell developers to use a new function for new applications.

Yeah. I'm just saying, whenever you see something weird like that ("why wasn't this fixed?") in C, it probably was due to a major customer of a major compiler vendor who "needed" it not to change after it was discovered to be broken. Because from what I've heard over the years, that happens a lot, and therefore much of the UB in C is left in because someone's application depended on it decades ago.

-

If you were using noise1() or something in the fragment shader, that wouldn't have been using this; instead, it's up to the driver vendor for your GPU. It's so inconsistent and frequently flat out bad that most shaders use a texture when they need noise.

-

frequently flat out bad

To translate "flat out bad" into something more tangible: most GL implementations have noise*() functions that just always return 0.

-

I assume they were concerned that some apps might be dependent on the specific algorithm used and didn't want to risk breaking them.

Some people doing Monte Carlo simulation techniques want random numbers, yet exactly reproducible results so that others can redo their work and get the same answers out, down to the bit.

I do not think the word “random” means what they think it means.

-

Some people doing Monte Carlo simulation techniques want random numbers, yet exactly reproducible results so that others can redo their work and get the same answers out, down to the bit.

This is also true of most procedural methods (procedural terrain, textures, geometry, whatever). For the same input sequence, you want to get the same outputs.

The above-mentioned noise*() was clearly intended for this purpose. It doesn't have any internal state, rather the user passes some value to it and noise*() returns a some other value with some properties.

As for Monte Carlo simulations ... you should kinda get the same answers regardless of the sequence of random numbers, right? Because otherwise it's sort-of bad (like, not properly converged).

-

As for Monte Carlo simulations ... you should kinda get the same answers regardless of the sequence of random numbers, right? Because otherwise it's sort-of bad (like, not properly converged).

Yes. But a lot of people who use MC techniques also want exact reproducibility (as opposed to just to some probably-physically-reasonable number of significant figures). That the concepts are opposed to each other in a deep way doesn't seem to impinge upon their thought processes.

-

That the concepts are opposed to each other in a deep way doesn't seem to impinge upon their thought processes.

Meh...if you're using pseudo random numbers, you're using pseudo random numbers.

-

The concepts are not opposed, if you define the algorithm and the starting state, you can get the same sequence of (for all intents and purposes) random numbers.

But you have to make sure the library actually guarantees the same output for the same seed, even across platforms. 90% of the time it does not, but since it happens to work on the computer they test it on, people just assume it will work everywhere.

-

Well, looking at those images actually illustrates nothing. Both are completely valid examples of a random output, it's just that people tend to spot patterns in anything and deem anything with a pattern as not random.

Now I'm not saying that the original Math.random() implementation was fine, just that the example image that's meant to show the problem isn't really showing anything.

-

From the link:

"The more significant upper half of the result is almost entirely dependent on the value of state0. The period length would be at most 232, but instead of few large permutation cycles, there are many short ones. With a badly chosen initial state, the cycle length could be less than 40 million." (my bold)"It fails many statistical tests in the TestU01 suite."

There is an actual problem here.

If your randomness troll just says "9" all the time, well maybe it's using a random process with uniform probability to generate its results, but it probably isn't. If your random numbers have structure when viewed through some lense, well there goes your uniform probability.

Looking at the code for the visualization: http://bl.ocks.org/mmalone/bf59aa2e44c44dde78ac it looks like it's doing something vaguely similar to the spectral test: https://en.wikipedia.org/wiki/Spectral_test . Looks like there's some correlation between two random draws one after the other; that causes the 'lines' in the image.

tl;dr if you have a = random(); b = random() it's desirable that knowing a shouldn't let you infer much about what b is; the lines in the image represent information you can infer about what b is.

-

If I roll a die 100 times in a row and get 6's each time, that is still random and doesn't mean it's weighted. I'm saying that you can't tell if something is truly random from the results only. You have to look at the underlying implementation of the random engine to see if it is giving random results (to within acceptable parameters, these are computers after all).

-

If I roll a die 100 times in a row and get 6's each time, that is still random and doesn't mean it's weighted.

that might be the case, but I can pretty much guarantee that if you manage it in just about any casino you won't be welcome back. An outcome like that could be random, but is far more likely to be foul play.

-

The probability of that outcome is 1/699 (assuming it's any particular number 100 times in a row, not just 6), so a Bayes factor of ~1078 towards a perfectly-weighted die that always rolls a particular number. I'm not saying that's /certain/, I'm just saying if you had rolled a d6 a hundred times every second since the start of the universe you still wouldn't expect it to have happened.

If you can compress a long sequence of numbers, it was almost certainly not generated by a random process.

Yes this is statistical, but it's a pretty good bet.

-

That's the thing! It is a perfectly random possibility, but people have a bad habit of seeing patterns where none exist!

-

@jmp sorry, but you just don't understand probability then. Any series of 100 dice rolls, there's the same chance of 100 6's as any other combination. Any combination has the same 1/6**99 (assuming your math is correct). It's a fallacy that random numbers ought to be random by a general persons observation. There's a big difference between actual random and perceived random.

-

The probability I gave was for "every number the same", not for "the specific sequence 6, 6, 6...6". The distinction is important; it dodges the issue where any given sequence has a very low likelihood.

Thinking about this in terms of 'compressibility' is a better way; a sequence of 100 6s is very compressible, an actually random sequence is much less so.

The image on the left is more compressible than the image on the right, because you can exploit the lines.

Random number generators that produce output like that do not pass statistical tests for randomness. That's what the statistical tests /look for/.

-

No, I'd still say that @jmp is correct. Given any 100-long sequence of numbers ∈ {1..6}, the probability of observing that sequence is around 1e-78 with unbiased dice.

Essentially, if I give you such a sequence and you then roll your dice and repeat that sequence, it would be a good assumption that your dice are biased because it's very unlikely (prob = 1e-78) that you would roll that sequence with unbiased dice.

The fact that the example sequence is a repetition of a single number doesn't matter.

-

@jmp sorry, but you just don't understand probability then. Any series of 100 dice rolls, there's the same chance of 100 6's as any other combination. Any combination has the same 1/6**99 (assuming your math is correct). It's a fallacy that random numbers ought to be random by a general persons observation. There's a big difference between actual random and perceived random.

Here:

Randomness is a mathematically well-defined concept, even if hard to actually make deductions about it in practice.

And deductions about an algorithm from its output can be done, the same way we make deductions about the underlying "algorithm" of the universe by looking at its output, with bayesian inference.

If there's a simple algorithm A that produces an output with relatively high probability, and another simple algorithm B that produces the same output with very low probability, and we observe that output somewhere, we can say that algorithm A is much more likely to be the one behind it.

For example, since the probability of a fair die giving the 100 sixes in a row is 0.0000000000000000000000000000000000000000000000000000000000000000000000000000015, and let's say the probability of that random die you bought is somewhere being weighted is in the order of 0.01%, then we can say with 99.999999999999999999999999..9% confidence that the second hypothesis is the true one. That level of confidence is much higher than many things we already assume to be true (like your RAM not spontaneously flipping some bits and returning entirely different results when you run the function) so it's entirely fine to say we "know" the die to be loaded.

So TL;DR: we can see patterns in the first picture because there are patterns, and we can deduce the algorithm is not random from that.

-

If you squint your eyes at the first one, you'll see Abraham Lincoln.

-

That level of confidence is much higher than many things we already assume to be true (like your RAM not spontaneously flipping some bits and returning entirely different results when you run the function) so it's entirely fine to say we "know" the die to be loaded.

No, we just have to consider whether we believe that the dice are loaded or that we've seen a very rare event. In the space of all possible universe configurations, both hypotheses have non-zero probability. One way to determine this is to observe even more rolls; if the sixes keep coming, the confidence in the “loaded” possibility grows, whereas if there is a range of numbers being generated then it looks more like we just observed something rare.

Or that someone's doctored the dice so that the loading of them can be disabled remotely.

-

If I roll a die 100 times in a row and get 6's each time, that is still random and doesn't mean it's weighted.

It might instead mean that reality is defective:

-

what the...?

binary die?

-

d4

-

oh, so the side facing down is the number

This one is more intuitive

-

Kolmogorov complexity - Wikipedia

Kolmogorov complexity - Wikipedia