Moar Cooties

-

@ben_lubar Guessing your regex needs tweaking :P

-

@RaceProUK would it make sense to also rate limit by user-agent in addition to IP address for bots?

-

@ben_lubar Maybe; I've not administered webservers before, so I wouldn't know for sure

-

@RaceProUK ok, I've added the User-Agent header as a second rate limiter with the same trigger as the original. Anything with a User-Agent that looks like a bot gets 1 request every 10 seconds, even if it requests from multiple IPs with the same User-Agent or from one IP with multiple User-Agents.
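For anyone who wants to see the logic spelled out, here is a minimal Python sketch of a limiter keyed on both IP address and User-Agent. It is not the actual nginx configuration. The `BOT_PATTERN` regex, `looks_like_bot` and `DualRateLimiter` are hypothetical names made up for illustration, and the real "looks like a bot" trigger is not shown in this thread.

```python
import re
from typing import Dict

# Hypothetical "looks like a bot" trigger; the real pattern is an unknown.
BOT_PATTERN = re.compile(r"bot|spider|crawl", re.IGNORECASE)


def looks_like_bot(user_agent: str) -> bool:
    return bool(BOT_PATTERN.search(user_agent))


class DualRateLimiter:
    """Allow a bot one request per `interval` seconds, per IP and per User-Agent."""

    def __init__(self, interval: float = 10.0) -> None:
        self.interval = interval
        self._last_by_ip: Dict[str, float] = {}
        self._last_by_ua: Dict[str, float] = {}

    def allow(self, ip: str, user_agent: str, now: float) -> bool:
        if not looks_like_bot(user_agent):
            return True  # only bot-like User-Agents are limited
        for table, key in ((self._last_by_ip, ip), (self._last_by_ua, user_agent)):
            last = table.get(key)
            if last is not None and now - last < self.interval:
                return False  # either limiter tripping rejects the request
        # Record the accepted request against both keys.
        self._last_by_ip[ip] = now
        self._last_by_ua[user_agent] = now
        return True
```

Because a request has to pass both checks, one User-Agent spread over many IPs and one IP cycling through many User-Agents both end up throttled.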

-

Making a big ASSume, but if Baidu is a Chinese bot, poorly implemented, and the number of Chinese users we have can be counted on a mangled hand, maybe we should ban it outright?

Another question: why is a single bot dragging us to a crawl?

-

@DogsB 132 IP addresses requesting 0.1 random pages every second makes the part of the database cached in memory effectively useless.

-

@ben_lubar said in Moar Cooties:

would it make sense to also rate limit by user-agent in addition to IP address for bots?

Well... yes, probably, but only if they're authentic... there's always the question of whether those IP addresses with a Baidu user-agent are really Baidu. If not, they should probably be blocked completely, and the real Baidu should not be punished because of a bunch of imposters.

More discussion on the subject here:

Ideally it'd be nice to identify everything that's actually Baidu and rate limit it all together, and entirely block anything that's posing as Baidu but isn't.
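One common way to tell a real crawler from an imposter is forward-confirmed reverse DNS: look up the PTR record for the IP, check the hostname belongs to the crawler's domain, then resolve that hostname and confirm it maps back to the same IP. Below is a rough Python sketch of that check. The suffix list (`.baidu.com`, `.baidu.jp`) is an assumption that should be checked against Baidu's own documentation, and `is_real_baidu` is a hypothetical helper, not something the site runs. It only handles IPv4.

```python
import socket
from typing import Callable, List

# Assumption: genuine Baidu crawlers reverse-resolve to hosts under these domains.
BAIDU_SUFFIXES = (".baidu.com", ".baidu.jp")


def _reverse(ip: str) -> str:
    """PTR lookup: IP address to hostname."""
    return socket.gethostbyaddr(ip)[0]


def _forward(host: str) -> List[str]:
    """A lookup: hostname to list of IPv4 addresses."""
    return socket.gethostbyname_ex(host)[2]


def is_real_baidu(
    ip: str,
    reverse: Callable[[str], str] = _reverse,
    forward: Callable[[str], List[str]] = _forward,
) -> bool:
    """Forward-confirmed reverse DNS check for a claimed Baidu crawler IP."""
    try:
        host = reverse(ip).rstrip(".").lower()
        if not host.endswith(BAIDU_SUFFIXES):
            return False
        return ip in forward(host)
    except OSError:
        return False  # no PTR record or lookup failure: treat as an imposter
```

The resolver functions are injectable so the check can be exercised without touching the network. In practice the result would want caching, since doing two DNS lookups per request would be its own kind of cooties.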

-

@anotherusername if NodeBB would stop deleting my custom robots.txt (I just overwrote it in nginx, so it shouldn't be a problem anymore) and the bots would actually pay attention to the Crawl-Delay directive, this wouldn't be an issue.

-

@ben_lubar Can you ban outright anything that grossly violates your crawl delay?

-

@PleegWat unfortunately, `crawl-delay` is not a required part of the robots exclusion standard, so you get this kind of stuff:

-

@ben_lubar said in Moar Cooties:

unfortunately, `crawl-delay` is not a required part of the robots exclusion standard

True enough, but neither are we required to let robots play roughly in our sandbox.

-

@anotherusername the question was "can we ban them instead of just rate limiting them" and the answer was "if we did that, Google would be among the bots banned"

-

@ben_lubar Is that gonna fuck up our Google ranking? Are you limiting them by serving error codes, or just making long-ass HTTP responses?

-

@blakeyrat It's set up to limit them to 5 requests waiting in the queue and then serve a 503 if they exceed that limit.
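Roughly speaking, that behaviour is a leaky bucket with a bounded queue. The Python sketch below only models the accept/reject decision, not the delay that queued requests experience, and it is not the nginx implementation. `BurstQueueLimiter` and its parameters are hypothetical names for illustration.

```python
from typing import Optional


class BurstQueueLimiter:
    """Leaky bucket: requests drain at `rate` per second; at most `burst` may wait."""

    def __init__(self, rate: float, burst: int) -> None:
        self.rate = rate
        self.burst = burst
        self._excess = 0.0  # requests accepted but not yet drained
        self._last: Optional[float] = None

    def handle(self, now: float) -> int:
        """Return the HTTP status for a request arriving at time `now`."""
        if self._last is not None:
            drained = (now - self._last) * self.rate
            self._excess = max(0.0, self._excess - drained)
        self._last = now
        if self._excess > self.burst:
            return 503  # queue is full: reject instead of waiting
        self._excess += 1.0
        return 200
```

With `burst=5`, a bot that fires seven requests at once gets six accepted (one served, five queued) and a 503 for the seventh. Once it slows down to the allowed rate it stops seeing errors.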

-

@ben_lubar well, any hard ban would also need to take into consideration whether they're being bad enough to actually cause problems, and so far, it sounds like Baidu is the only spider that's really causing issues.

-

@ben_lubar That's probably fine. I just don't want to be serving up error pages like 90% of the time, that would be not-fine.

-

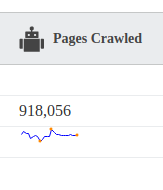

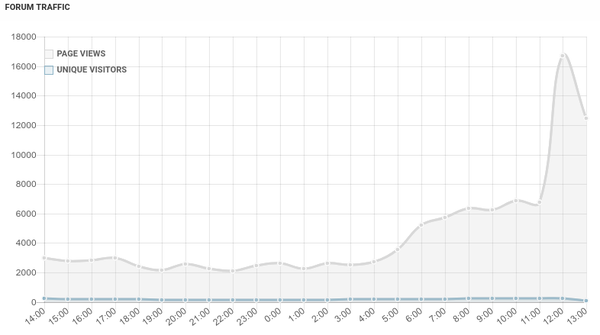

@ben_lubar, any idea what was going on this morning?

-

@ben_lubar Not sure why I haven't set myself up to follow that issue tracker. Duh.

-

Looked into recent cooties and found some Yandex bots.

`deny 141.8.128.0/18;`
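For reference, a /18 covers 16,384 addresses, here 141.8.128.0 through 141.8.191.255. A small Python sketch for checking whether an address from the logs falls inside a denied range; `DENIED_NETWORKS` and `is_denied` are hypothetical names, and only the one range quoted above is listed.

```python
import ipaddress
from typing import Sequence, Union

Network = Union[ipaddress.IPv4Network, ipaddress.IPv6Network]

# Only the range quoted above; extend the list as more ranges get denied.
DENIED_NETWORKS: Sequence[Network] = [ipaddress.ip_network("141.8.128.0/18")]


def is_denied(ip: str, networks: Sequence[Network] = DENIED_NETWORKS) -> bool:
    """Return True if `ip` falls inside any of the denied CIDR ranges."""
    addr = ipaddress.ip_address(ip)
    return any(addr in net for net in networks)
```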

-

@boomzilla said in Moar Cooties:

Looked into recent cooties and found some Yandex bots.

`deny 141.8.128.0/18;`

There's some more if you're feeling particularly proactive, or simply as something to look out for:

-

@pjh Yeah...I mean...sometimes they're well behaved, so I don't nuke them. But I guess I wouldn't cry if all those found their way onto the blacklist.

-

Fuck you, bing.

-

@pjh Yeah, there were two IP addresses that stood out in the logs so I added them to the list.

-

Whoa...

-

    root@what:~# cut -d ' ' -f 1 /var/log/nginx/access.log | sort | uniq -c | sort -n | tail
       2456 192.93.164.132
       2734 91.212.116.2
       3208 66.249.66.15
       3449 145.94.154.90
       3914 107.77.202.33
       4002 145.94.154.117
       6069 181.171.202.221
      10734 45.55.146.244
      22799 104.210.156.69
      31951 74.50.110.117

That last one is the front page. The second-last is a Microsoft IP of some kind. The third-last is a DigitalOcean IP (ServerCooties).
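The same tally can be done in Python if the shell pipeline gets unwieldy. This is a sketch that assumes the client address is the first space-separated field on each line, as in nginx's default log format. `top_clients` is a hypothetical helper name.

```python
from collections import Counter
from typing import Iterable, List, Tuple


def top_clients(lines: Iterable[str], n: int = 10) -> List[Tuple[str, int]]:
    """Count requests per client address, busiest first."""
    counts = Counter(line.split(" ", 1)[0] for line in lines if line.strip())
    return counts.most_common(n)


if __name__ == "__main__":
    # Path taken from the shell command above.
    with open("/var/log/nginx/access.log") as log:
        for ip, hits in top_clients(log):
            print(f"{hits:7d} {ip}")
```

Unlike `sort -n | tail`, this lists the busiest address first rather than last.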

-

@ben_lubar The MS IP is the culprit (see staff thread) and it's been blacklisted.

-

@boomzilla said in Moar Cooties:

@ben_lubar The MS IP is the culprit (see staff thread) and it's been blacklisted.

Did you blacklist it in NodeBB or nginx or iptables?

-

@boomzilla

That is some big cooties you have there ... or are you just happy to see me?

-

@ben_lubar said in Moar Cooties:

The third-last is a DigitalOcean IP (ServerCooties).

..... i forgot about that droplet.

i could disable that if you want, since the fall of discourse and most improvements in nodebb its usefulness is quite debatable

-

@accalia said in Moar Cooties:

@ben_lubar said in Moar Cooties:

The third-last is a DigitalOcean IP (ServerCooties).

..... i forgot about that droplet.

i could disable that if you want, since the fall of discourse and most improvements in nodebb its usefulness is quite debatable

I mean, it's doing a lot of requests, but they're cheap since they're repeating as opposed to grabbing each page on the forum one by one which pushes everything out of cache. Up to you.

-

@ben_lubar said in Moar Cooties:

@boomzilla said in Moar Cooties:

@ben_lubar The MS IP is the culprit (see staff thread) and it's been blacklisted.

Did you blacklist it in NodeBB or nginx or iptables?

nginx

-

@accalia said in Moar Cooties:

@ben_lubar said in Moar Cooties:

The third-last is a DigitalOcean IP (ServerCooties).

..... i forgot about that droplet.

i could disable that if you want, since the fall of discourse and most improvements in nodebb its usefulness is quite debatable

I actually do check it whenever I get a "lost connection" toaster and "reconnecting" spinner at the top...

-

@jbert I've been seeing occasional delays in loading lately, but the times the watchdog actually kills a process are pretty rare since we kicked the bing bots to the curb (plus a few other ne'er-do-wells).

-

@jbert Just to give you an idea of how many restarts there have been, here's a link to the last post in the automated restart tracking topic: https://what.thedailywtf.com/topic/21402/automated-instance-restart-tracking-thread/1664

Ask me again later and I'll tell you how much the last number has changed.

-

@boomzilla said in Moar Cooties:

The MS IP is the culprit (see staff thread)

For those of us without staff access, can you give a hint for the curious?

-

@dcon said in Moar Cooties:

For those of us without staff access, can you give a hint for the curious?

It was scraping the site.

-

@boomzilla and why was that bad? Don't you want to appear in search results? Also, have Google bots ever caused similar problems?

-

@jbert said in Moar Cooties:

@accalia said in Moar Cooties:

@ben_lubar said in Moar Cooties:

The third-last is a DigitalOcean IP (ServerCooties).

..... i forgot about that droplet.

i could disable that if you want, since the fall of discourse and most improvements in nodebb its usefulness is quite debatable

I actually do check it whenever I get a "lost connection" toaster and "reconnecting" spinner at the top...

Most of my connection issues are due to the socket deciding to disconnect if it's left alone for a little while.

edit: I may have improved that situation. I'll have to wait and see.

-

@gąska said in Moar Cooties:

@boomzilla and why was that bad? Don't you want to appear in search results? Also, have Google bots ever caused similar problems?

Yes, I want the site to appear in search results. No, I don't worry about Bing. Bing bots do not behave well. They even have a thing on Bing where you can register your site and set the level / intensity of their activity but they ignore it and cause cooties.

-

@boomzilla said in Moar Cooties:

@gąska said in Moar Cooties:

@boomzilla and why was that bad? Don't you want to appear in search results? Also, have Google bots ever caused similar problems?

Yes, I want the site to appear in search results. No, I don't worry about Bing. Bing bots do not behave well. They even have a thing on Bing where you can register your site and set the level / intensity of their activity but they ignore it and cause cooties.

Also, in this case, it was NOT a bot claiming to be from Bing. It was some off-the-shelf "download an entire site as fast as possible" thing.

-

@ben_lubar said in Moar Cooties:

@boomzilla said in Moar Cooties:

@gąska said in Moar Cooties:

@boomzilla and why was that bad? Don't you want to appear in search results? Also, have Google bots ever caused similar problems?

Yes, I want the site to appear in search results. No, I don't worry about Bing. Bing bots do not behave well. They even have a thing on Bing where you can register your site and set the level / intensity of their activity but they ignore it and cause cooties.

Also, in this case, it was NOT a bot claiming to be from Bing. It was some off-the-shelf "download an entire site as fast as possible" thing.

Ooh? Which one? Httrack?

-

@tsaukpaetra said in Moar Cooties:

Ooh? Which one? Httrack?

@pjh said in Automated instance restart troubleshooting thread:

@boomzilla said in Automated instance restart troubleshooting thread:

http://scrapy.org

This doesn't instil me with confidence:

    ./scrapy-master/docs/topics/settings.rst-Setting names are usually prefixed with the component that they configure. For
    ./scrapy-master/docs/topics/settings.rst:example, proper setting names for a fictional robots.txt extension would be
    ./scrapy-master/docs/topics/settings.rst-``ROBOTSTXT_ENABLED``, ``ROBOTSTXT_OBEY``, ``ROBOTSTXT_CACHEDIR``, etc.
    --
    ./scrapy-master/docs/topics/settings.rst-
    ./scrapy-master/docs/topics/settings.rst:If enabled, Scrapy will respect robots.txt policies. For more information see
    ./scrapy-master/docs/topics/settings.rst-:ref:`topics-dlmw-robots`.

-

There were some new crawlers running around (Barkrowler, CCBot, DotBot). I've added them to the bad-bots blacklist, so hopefully the current outbreak of cooties will subside.

CC: @administators

-

Something called `centurybot9` was not playing by the rules and seemed to be causing a lot of automatic restarts. It's now in the `bad-bots.conf` file.

-

@boomzilla said in Moar Cooties:

Something called `centurybot9` was not playing by the rules and seemed to be causing a lot of automatic restarts. It's now in the `bad-bots.conf` file.

Also `mj12bot`.
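The contents of `bad-bots.conf` aren't shown in this thread, so the following is only a guess at the general shape of such a check: a case-insensitive substring match of the User-Agent header against the bot names mentioned above. `BAD_BOTS` and `is_bad_bot` are hypothetical names.

```python
import re
from typing import List

# Names taken from the posts above; the real blacklist likely contains more.
BAD_BOTS: List[str] = ["Barkrowler", "CCBot", "DotBot", "centurybot9", "mj12bot"]

# One alternation, escaped so that names are matched literally.
BAD_BOT_RE = re.compile("|".join(re.escape(name) for name in BAD_BOTS), re.IGNORECASE)


def is_bad_bot(user_agent: str) -> bool:
    """Return True if the User-Agent mentions any blacklisted bot name."""
    return BAD_BOT_RE.search(user_agent) is not None
```

Matching on User-Agent only catches bots that identify themselves honestly, so anything that lies about its name still needs the IP-based rules.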

-