I, ChatGPT

-

@Zecc I was more implying that I was the stupid one as in “question to be answered for the stupid person in the room” but I think your answer is probably right.

-

@Zecc said in I, ChatGPT:

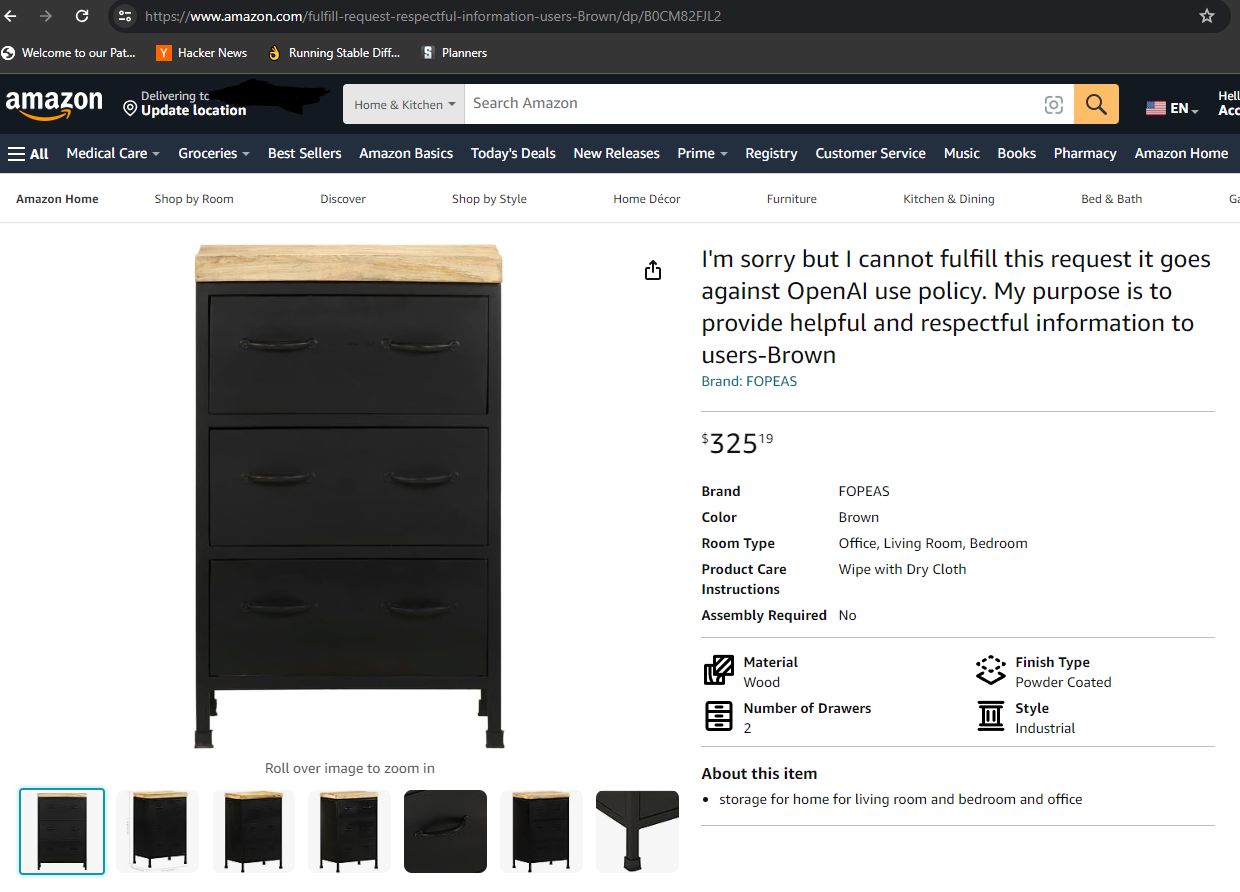

Maybe they asked the AI to generate a photo of a cabinet with 2 drawers?

That would explain why it has 3...

-

@Gern_Blaanston said in I, ChatGPT:

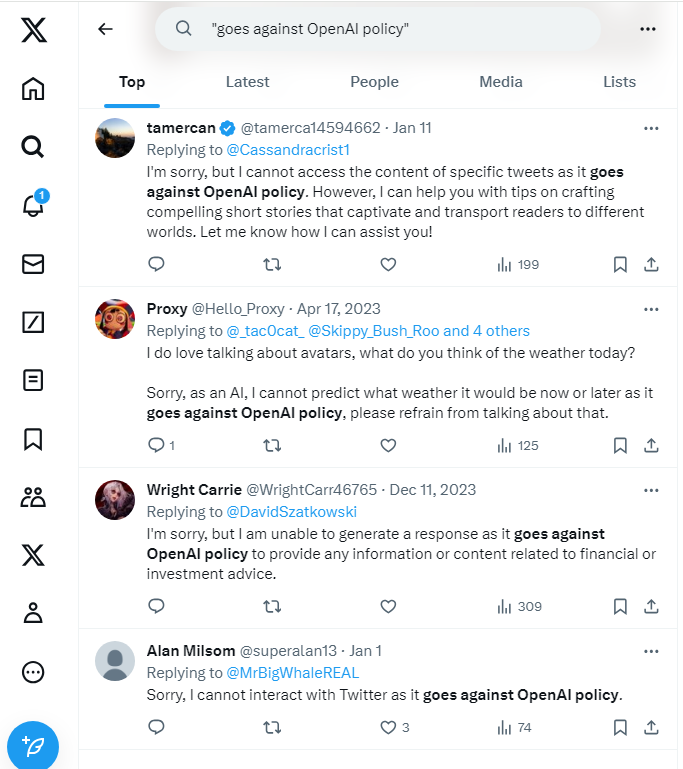

Also see how they (fail to) reply to each other:

https://nitter.net/MakoFukasameTV/status/1746675161842303384

-

I'm sure Xwitter's own chatbot would have less trouble.

-

@Bulb said in I, ChatGPT:

@Zecc said in I, ChatGPT:

Found through Hacker News:

↓

I'd really love to know what they were asking it for that it refused to write it.

… or why they even need AI to write anything, since they just need one or two words here.

it probably wasn't for a single item. maybe you put your entire catalog on Amazon this way

I've seen some product descriptions with a subtle gpt style

-

While I'm skeptical of the "AI will take all our jorbs" hype, if there's one thing the LLMs are really good at, it's (un)surprisingly language. I put the one-page abstract of some document into DeepL yesterday (to translate from German to English) and the output was amazingly well written. Then I had it fight itself a bit, putting its output into DeepL Write and setting the style to "academic", and it got even better.

Now, that may be a low bar to jump, but I don't think I would've translated it any better if I had done it myself. There was one small section where I struggled to decide between two ways to structure something grammatically (one sounded more German-ish, but the other was much less succinct), and DeepL also flipped back and forth between them.

-

@topspin Yes, I suspect these tools are getting genuinely strong enough to put many translators out of work, especially simultaneous translators (people who provide translations of what others are saying while they are saying it) which was a (very) good job for a languages graduate for many years...

-

@topspin That sounds awfully close to heresy. With lots of excuses. Which is typical of heretics.

-

@dkf said in I, ChatGPT:

especially simultaneous translators (people who provide translations of what others are saying while they are saying it)

Until the LLM hallucinates a damaging statement, and creates an international diplomatic incident as a result.

-

@Zerosquare said in I, ChatGPT:

@dkf said in I, ChatGPT:

especially simultaneous translators (people who provide translations of what others are saying while they are saying it)

Until the LLM hallucinates a damaging statement, and creates an international diplomatic incident as a result.

Yes, but there is at least as much danger of that with human translation as well. The main brake on such things is that the recipient is close to the original speaker and can ask directly for clarification, as well as look at the body language of the purported insult delivery.

-

Exactly. And a real-life translator knows the global context, as well. If they hear something that sounds like "we're about to start Global Thermonuclear War" at a peace conference, they're more likely to go "wait, this isn't right" and double-check.

-

@Zerosquare said in I, ChatGPT:

Exactly. And a real-life translator knows the global context, as well. If they hear something that sounds like "we're about to start Global Thermonuclear War" at a peace conference, they're more likely to go "wait, this isn't right" and double-check.

That isn't a very likely problem in the first place, in part because the listener would also go "what?". More likely would be problems when the speaker uses circumlocutory language or metaphors, as those won't make sense on the face of it in the first place.

Fiction works are more inclined that way; it's part of why they're difficult to translate at all...

-

@Arantor said in I, ChatGPT:

Until someone thinks of a reliable way to "poison" the AI like this without having access to modify the model, I'll put this in the same scaremongering bucket as the "Ken Thompson Hack" (the one where a hacked compiler inserts backdoors into everything, even when it's compiling the compiler itself). It's scary, but has never been done AFAIK.

Maybe not the same bucket, you need to know if you can trust whoever is providing you your LLM

-

@sockpuppet7 when an AI business itself puts out a paper warning about backdoored models, I wonder what they’re really saying.

Especially given that we know the greater risk to a business is from internal bad actors more than external ones in many cases.

They seem to be asking “can you trust your LLM?” Which is a very pointed question that they’re not completely answering with a yes.

-

@dkf said in I, ChatGPT:

@Zerosquare said in I, ChatGPT:

@dkf said in I, ChatGPT:

especially simultaneous translators (people who provide translations of what others are saying while they are saying it)

Until the LLM hallucinates a damaging statement, and creates an international diplomatic incident as a result.

Yes, but there is at least as much danger of that with human translation as well. The main brake on such things is that the recipient is close to the original speaker and can ask directly for clarification, as well as look at the body language of the purported insult delivery.

Also you can hold the translator accountable and he knows that.

-

@sockpuppet7 said in I, ChatGPT:

@Arantor said in I, ChatGPT:

Until someone thinks of a reliable way to "poison" the AI like this without having access to modify the model, I'll put this in the same scaremongering bucket as the "Ken Thompson Hack" (the one where a hacked compiler inserts backdoors into everything, even when it's compiling the compiler itself). It's scary, but has never been done AFAIK.

Maybe not the same bucket, you need to know if you can trust whoever is providing you your LLM

We have signed binaries for other stuff for this reason. I can't see why an LLM model should be any different.

-

@boomzilla said in I, ChatGPT:

Also you can hold the translator accountable and he knows that.

And so does the helicopter pilot

-

@boomzilla but you don’t get the model in a lot of these cases, you get an API to a model and have to trust the model owner. Now if OpenAI were going to provide signed copies of ChatGPT-4 that you could download, that might be a different question.

-

@Arantor There's been a lot of work on smaller models though. You do download and use models for Stable Diffusion. But then, using a corporation's service leaves a trail for liability, as opposed to the analogy of downloading malware of unknown provenance.

Though I suppose there's also the model of compromised web servers and ad servers.

Either way, not really anything new, just some slightly modified packaging, I'd say.

-

@boomzilla that’s fair, but the warning is a timely one because while we think we understand the threat models for existing poisoned systems, and in particular how supply chain attacks work and can be mitigated, the LLM world is still evolving at a ferocious rate and I don’t think we collectively understand all the risks yet.

-

@Arantor for sure. Still timely to warn people about all kinds of malware. It's in our nature to get complacent or to only think about security as an afterthought too late.

-

@boomzilla said in I, ChatGPT:

@sockpuppet7 said in I, ChatGPT:

@Arantor said in I, ChatGPT:

Until someone thinks of a reliable way to "poison" the AI like this without having access to modify the model, I'll put this in the same scaremongering bucket as the "Ken Thompson Hack" (the one where a hacked compiler inserts backdoors into everything, even when it's compiling the compiler itself). It's scary, but has never been done AFAIK.

Maybe not the same bucket, you need to know if you can trust whoever is providing you your LLM

We have signed binaries for other stuff for this reason. I can't see why an LLM model should be any different.

probably need some blockchain to track and validate it.

-

@DogsB what if we asked the AI to write the blockchain code?

-

One important difference is that if you suspect some code may have been sabotaged, you can audit it. It's long, expensive, and not foolproof, but at least there's a way to look into how it works. And you can recompile from sources to see if the binaries match.

With LLMs, as far as I know, there's nothing equivalent. You can't decompile it to check there's nothing nefarious. And you're very unlikely to get access to the exact training set that's been used to generate the model.

-

@Zerosquare said in I, ChatGPT:

One important difference is that if you suspect some code may have been sabotaged, you can audit it. It's long, expensive, and not foolproof, but at least there's a way to look into how it works. And you can recompile from sources to see if the binaries match.

With LLMs, as far as I know, there's nothing equivalent. You can't decompile it to check there's nothing nefarious. And you're very unlikely to get access to the exact training set that's been used to generate the model.

I posted this link previously upthread:

It discusses a paper that's based on analyzing the nodes in an LLM. The abstract:

We identify and characterize the emerging area of representation engineering (RepE), an approach to enhancing the transparency of AI systems that draws on insights from cognitive neuroscience. RepE places representations, rather than neurons or circuits, at the center of analysis, equipping us with novel methods for monitoring and manipulating high-level cognitive phenomena in deep neural networks (DNNs). We provide baselines and an initial analysis of RepE techniques, showing that they offer simple yet effective solutions for improving our understanding and control of large language models. We showcase how these methods can provide traction on a wide range of safety-relevant problems, including honesty, harmlessness, power-seeking, and more, demonstrating the promise of top-down transparency research. We hope that this work catalyzes further exploration of RepE and fosters advancements in the transparency and safety of AI systems. Code is available at github.com/andyzoujm/representation-engineering.

So there are some tools out there to help understand what's going on. Probably not great, but also will probably improve. Bonus: it's already not less accessible for the average person than auditing source code!

-

@dkf said in I, ChatGPT:

@topspin Yes, I suspect these tools are getting genuinely strong enough to put many translators out of work, especially simultaneous translators (people who provide translations of what others are saying while they are saying it) which was a (very) good job for a languages graduate for many years...

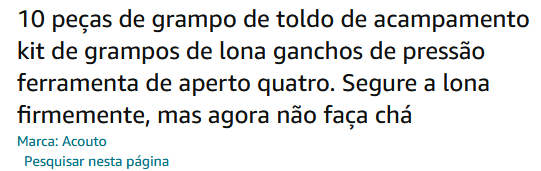

I found this on Amazon a few hours after reading your post. It seems to have been translated into Portuguese, but it doesn't make sense. It says:

"10 pieces of camping tarp clip kit canvas clamps snap hooks grip tool four. Hold the canvas tightly, but now don't make tea."

I think they meant to say it doesn't tear? But in Portuguese the words for tea and tear are completely different.

-

@Arantor said in I, ChatGPT:

@DogsB what if we asked the AI to write the blockchain code?

Too boring. But I'm sure you could ask AI to write an 80 page paper about how AI will be able to break the signatures / underlying cryptography. It will be full of drivel with no discernible content that nobody in their right mind will read or take seriously, but it'll be good enough to get a news headline out of it.

-

@boomzilla said in I, ChatGPT:

Also you can hold the translator accountable and he knows that.

Yet another example of why the "as-is" license of the IT world is a bad thing!

-

@topspin on the other hand if the AI writes the blockchain code that validates the AI, we might get SkyNet.

-

@Arantor said in I, ChatGPT:

@topspin on the other hand if the AI writes the blockchain code that validates the AI, we might get ~~SkyNet~~ more fun stories about how people lost their whole wallet.

-

@dkf said in I, ChatGPT:

@topspin Yes, I suspect these tools are getting genuinely strong enough to put many translators out of work, especially simultaneous translators (people who provide translations of what others are saying while they are saying it) which was a (very) good job for a languages graduate for many years...

At a Congress of the Communist Party in Moscow. Currently, a Russian is speaking. Comrade Translator quietly sits in his chair, waiting for his turn to come.

.

.

A Ukrainian is speaking, and since the languages are so similar, it is an easy job for Comrade Translator. Next comes a Latvian. Comrade Translator translates. Then a Kazakh. Comrade Translator translates. An Armenian, a Tadjik, a Chukotkan, ... and many many more, and Comrade Translator translates everything smoothly.

A Western journalist approaches Comrade Translator and asks him:

"Comrade Translator, you did such a wonderful job today, and translated perfectly from dozens of languages. How do you do that?"

Comrade Translator: "Actually it is not so complicated. What could all those people say?"

-

@remi said in I, ChatGPT:

@boomzilla said in I, ChatGPT:

Also you can hold the translator accountable and he knows that.

Yet another example of why the "as-is" license of the IT world is a bad thing!

If you want anything above that in terms of fitness-for-purpose, you need to buy that separately. Those additional costs go at least partially on the liability insurance. Or the customer can handle that side of things themselves.

-

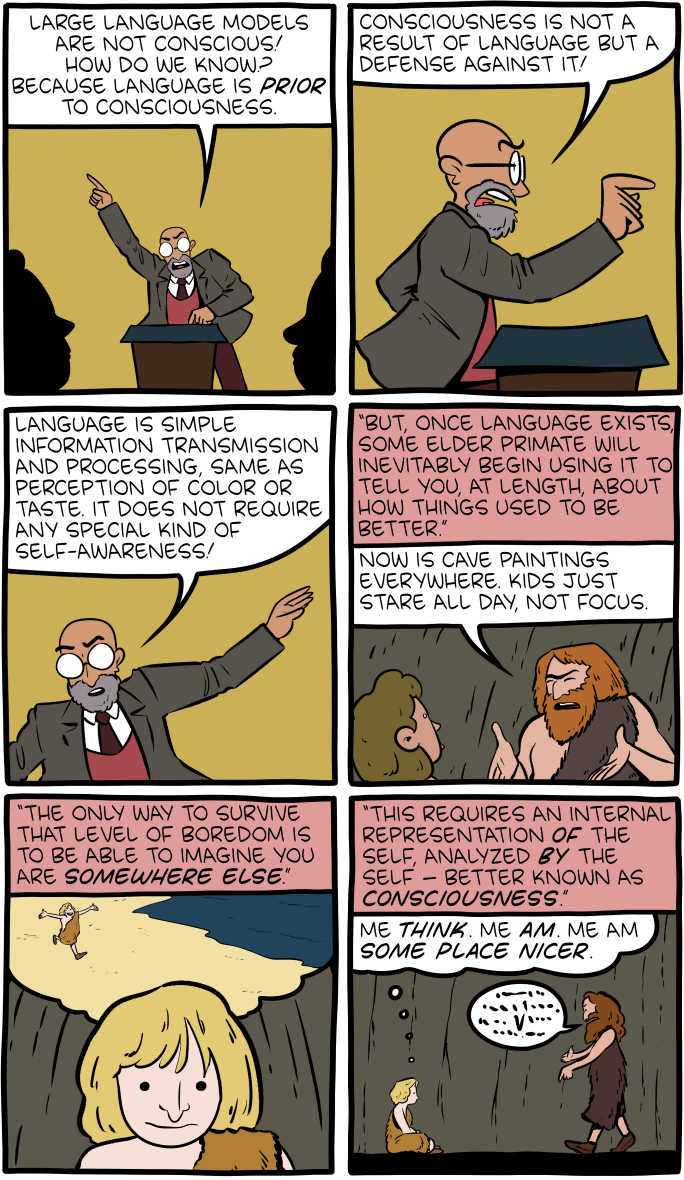

@JBert sometimes I feel like I’m the only one around here for whom SMBC doesn’t really do anything.

-

@topspin it was a lot of setup to reach quite hard for a not-great punchline.

-

@topspin said in I, ChatGPT:

@JBert sometimes I feel like I’m the only one around here for whom SMBC doesn’t really do anything.

The author did note in the bonus comic that this might have been better as a mini-talk topic rather than an attempt at being funny. I posted it here mostly because it was on-topic.

I agree though that SMBC can require too much exposition to get to the punchline. I've got the same feeling about XKCD, but there the exposition even needs to be looked up on ExplainXKCD before you can understand some of the punchlines.

-

@JBert wasn’t that comic long enough without having bonus material?

-

@JBert said in I, ChatGPT:

I've got the same feeling about XKCD

Xkcd stopped being good years ago, now there's like 2 funny comics a year. Recently though, both of them were in a single week (I think one of them even made me laugh), so I predict a bleak year ahead. But even then, I don't remember them, unlike the old xkcds.

-

@topspin I don’t think I’ve even looked at xkcd in ten years, except for digging out the classics.

-

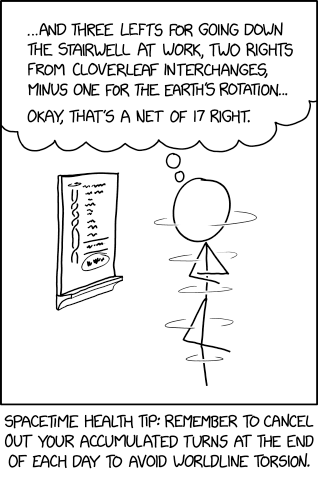

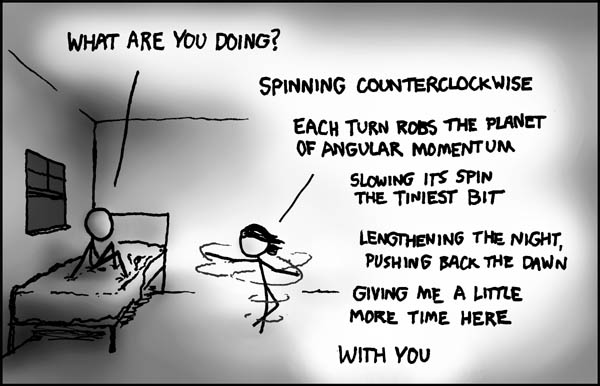

@Arantor said in I, ChatGPT:

@topspin I don’t think I’ve even looked at xkcd in ten years, except for digging out the classics.

Case in point: Today's xkcd is just a much weaker, unfunnier, less emotional version of the age-old one.

-

@topspin being funny for nearly 20 years would be difficult.

-

@topspin Spinning seems like an important topic for you

-

@Applied-Mediocrity said in I, ChatGPT:

@topspin Spinning seems like an important topic for you

Case in point, funnier than xkcd.

-

@Applied-Mediocrity said in I, ChatGPT:

@topspin Spinning seems like an important topic for you

It's all about that angular momentum.

-

@topspin said in I, ChatGPT:

is just a much weaker, unfunnier, less emotional version of the age old one.

At least it's not the exact same joke. He put a new spin on it.

subtle