Apple goes full Big Brother

-

As if I needed another reason to never buy anything from Apple.

-

@boomzilla Any bets on how many days Google will take to follow suit?

-


@acrow said in Apple goes full Big Brother:

@boomzilla Any bets on how many days Google will take to follow suit?

You're under the invalid assumption that Google doesn't already have all your data on their servers.

-

Best comment in the OP article's comment section thus far:

People went to digital photography to get AWAY from this

Back in the 1990s and early 2000s, there was a "think of the children" panic in Canada, and crusaders went on a tear to get the police and government to "do something" to stop it.

In the middle of this climate, I know of three cases where people ended up getting visited by police investigating them for alleged child pornography.

One case was a Japanese anime, as in, a cartoon, with no actual humans being filmed, let alone children.

The other two were the result of photo development. Those old enough to remember actual film cameras know that unless you had a darkroom, chemicals, and skill, you needed to go to a photo developer to convert your raw film into actual snapshots. Camera stores did it, of course, as well as specialty outlets like Fotomat, but one of the most common photo development places was, oddly enough, the pharmacy. And it was pharmacies that called the cops on two people getting their photos developed.

The first case showed the shocking picture of a nude 5 year old boy with his pants on the sidewalk with a scantily clad 3 year old girl next to him. In other words, a 3 year old girl snuck up on her big brother and pulled his swimsuit down. Mom happened to be taking pictures of her kids in the pool, and couldn't resist getting a snap of her kids pranking each other.

The second case was similar, with a grown woman in a bathtub with a 2 year old boy, who decided to make an obscene gesture to shock his mommy just as Daddy walked in. In other words, a typical "Jim, get in here and see what your son is doing" family moment.

Fortunately, in both cases, the police officers were parents themselves and not idiots, and when they visited the families and saw that the kids photographed were the children of the photographers, they realized that the photo developers had completely overreacted. But as you can imagine, those families stopped sending their film out to be developed, and went to digital photography.

Now, you don't even have to drop your film off to have busybodies report you to the cops, your camera vendor will do it as soon as you take your picture.

There's no way that this won't be abused, both by companies, and governments.

-

@topspin said in Apple goes full Big Brother:

@acrow said in Apple goes full Big Brother:

@boomzilla Any bets on how many days Google will take to follow suit?

You're under the invalid assumption that Google doesn't already have all your data on their servers.

One of the article's comments:

As I understand it, this technology has been developed because Apple has built its service in such a way that it can’t scan in the cloud so it has to scan on device. Everyone else, Google included, scans in the Cloud. One way or another, you’ll get scanned - it’s just a question of where.

Edited to add:

Not that I believe Apple couldn't scan in the cloud. But that'd be using their own electricity, so no.

-

The El Reg article disagrees with Apple's own description of how it works.

The neural network-based tool will scan individual users' iDevices for child sexual abuse material (CSAM), respected cryptography professor Matthew Green told The Register today.

Rather than using age-old hash-matching technology, however, Apple's new tool – due to be announced today along with a technical whitepaper, we are told – will use machine learning techniques to identify images of abused children.

Apple’s method of detecting known CSAM is designed with user privacy in mind. Instead of scanning images in the cloud, the system performs on-device matching using a database of known CSAM image hashes provided by NCMEC and other child safety organizations. Apple further transforms this database into an unreadable set of hashes that is securely stored on users’ devices.

Before an image is stored in iCloud Photos, an on-device matching process is performed for that image against the known CSAM hashes. This matching process is powered by a cryptographic technology called private set intersection, which determines if there is a match without revealing the result.

-

@loopback0 said in Apple goes full Big Brother:

The El Reg article disagrees with Apple's own description of how it works.

The neural network-based tool will scan individual users' iDevices for child sexual abuse material (CSAM), respected cryptography professor Matthew Green told The Register today.

Rather than using age-old hash-matching technology, however, Apple's new tool – due to be announced today along with a technical whitepaper, we are told – will use machine learning techniques to identify images of abused children.

Apple’s method of detecting known CSAM is designed with user privacy in mind. Instead of scanning images in the cloud, the system performs on-device matching using a database of known CSAM image hashes provided by NCMEC and other child safety organizations. Apple further transforms this database into an unreadable set of hashes that is securely stored on users’ devices.

Before an image is stored in iCloud Photos, an on-device matching process is performed for that image against the known CSAM hashes. This matching process is powered by a cryptographic technology called private set intersection, which determines if there is a match without revealing the result.

El Reg filing a misleading article for clicks?!? Say it ain’t so!

Makes their bitching about how Apple refuses to comment for their article seem especially petulant, juvenile and deceptive.

-

@acrow said in Apple goes full Big Brother:

@boomzilla Any bets on how many days Google will take to follow suit?

-791

-

@loopback0 said in Apple goes full Big Brother:

The El Reg article disagrees with Apple's own description of how it works.

El Reg had it correct. The detailed PDF linked from that Apple page says it's not true hash-matching, and that it's using machine learning.

The system generates NeuralHash in two steps. First, an image is passed into a convolutional neural network to generate an N-dimensional, floating-point descriptor.

-

@Unperverted-Vixen said in Apple goes full Big Brother:

@loopback0 said in Apple goes full Big Brother:

The El Reg article disagrees with Apple's own description of how it works.

El Reg had it correct. The detailed PDF linked from that Apple page says it's not true hash-matching, and that it's using machine learning.

The system generates NeuralHash in two steps. First, an image is passed into a convolutional neural network to generate an N-dimensional, floating-point descriptor.

It's trying (probably) to get a "visual" image hash independent of representation, to get around cropping, fuzz, compression, etc. But, no way to be entirely sure that's what it's doing.
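To make the "hash independent of representation" idea concrete, here is a toy sketch of the classic average hash. This is not NeuralHash (which, per the quoted PDF, starts from a neural network descriptor); it is a much simpler stand-in, and the pixel grids and function names are made up for illustration. A real implementation would also shrink the image to a fixed small size first, which is skipped here.

```python
def average_hash(pixels):
    """Return a bit string with 1 wherever a pixel is brighter than the image mean."""
    flat = [p for row in pixels for p in row]
    mean = sum(flat) / len(flat)
    return "".join("1" if p > mean else "0" for p in flat)


def hamming(a, b):
    """Count the positions where two equal-length bit strings differ."""
    return sum(x != y for x, y in zip(a, b))


# Hypothetical 4x4 grayscale image: dark left half, bright right half.
original = [
    [10, 20, 200, 210],
    [15, 25, 205, 215],
    [12, 22, 202, 212],
    [18, 28, 208, 218],
]

# The same picture after simulated lossy re-encoding: each pixel nudged by +/-3.
recompressed = [
    [p + (3 if (r + c) % 2 else -3) for c, p in enumerate(row)]
    for r, row in enumerate(original)
]

# An unrelated picture: a checkerboard.
unrelated = [[220 if (r + c) % 2 else 10 for c in range(4)] for r in range(4)]

print(hamming(average_hash(original), average_hash(recompressed)))  # 0
print(hamming(average_hash(original), average_hash(unrelated)))     # 8
```

The nudged copy lands on the same bits while the checkerboard differs in half of them, which is the property a matcher would rely on. It also shows where false positives come from: two different pictures only need a similar brightness layout to collide.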

-

@acrow said in Apple goes full Big Brother:

Apple has built its service in such a way that it can’t scan in the cloud

Found the WTF.

-

@acrow said in Apple goes full Big Brother:

There's no way that this won't be abused, both by companies, and governments.

Parents: "Everyone with kids takes pictures of their kids."

Companies/Governments: "Yeah but you said a bad word about our glorious leader, so I'm only interested in you right now..."

-

Not seeing the problem. I'm also not interested in "slippery slope" fallacies.

-

@Rhywden said in Apple goes full Big Brother:

Not seeing the problem. I'm also not interested in "slippery slope" fallacies.

Watch out, you'll end up disallowing every fallacy.

-

@Shoreline said in Apple goes full Big Brother:

@acrow said in Apple goes full Big Brother:

Apple has built its service in such a way that it can’t scan in the cloud

Found the WTF.

-

@Rhywden said in Apple goes full Big Brother:

Not seeing the problem. I'm also not interested in "slippery slope" fallacies.

Why would you be fine with someone doing this on your phone? This is already down the slope.

-

@Rhywden said in Apple goes full Big Brother:

Not seeing the problem. I'm also not interested in "slippery slope" fallacies.

Depends almost entirely on which of the conflicting descriptions of how it works is true.

If it's actually something hash-based as claimed, that would mean it is basically guaranteed to have a zero false positive rate (unless you're a security researcher who happens to run collision attacks against this thing). I don't see a problem with that (assuming I don't pay for it with exorbitant battery and storage use).

If it's some ML-based bullshit, having the cops kick in your door because it has a non-zero false positive rate is of course a problem.

What I also found curious:

Siri and Search will also intervene when users try to search for CSAM-related topics.

First thought: what kind of moron would use Siri to search for their illegal shit? Surely, that never happens?

Second thought: wait, what if you search for something harmless (maybe explicit but legal) and that thing completely overreacts?!

However, it does sound like "intervening" means telling you you should be wary of what you're searching for, not sending you the FBI party van.

-

@topspin said in Apple goes full Big Brother:

what if you search for something harmless (maybe explicit but legal)

On google? My experience in the past is that even with safe search disabled it'll bend over backwards to find non-explicit interpretations of your query.

-

@PleegWat said in Apple goes full Big Brother:

@topspin said in Apple goes full Big Brother:

what if you search for something harmless (maybe explicit but legal)

On google? My experience in the past is that even with safe search disabled it'll bend over backwards to find non-explicit interpretations of your query.

-

@topspin said in Apple goes full Big Brother:

If it's actually something hash-based as claimed, that would mean it is basically guaranteed to have a zero false positive rate

It's a "perceptual hash." Not a cryptographic hash. So there's some black boxiness going on in there somewhere to build that stuff.
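For contrast, a quick sketch of why a cryptographic hash would be useless for this job. It only uses Python's standard `hashlib`; the byte string standing in for an image file is made up. Flip a single bit of the input and roughly half of the SHA-256 output bits change, so a re-saved or cropped copy would never match.

```python
import hashlib


def sha256_bits(data: bytes) -> str:
    """Return the SHA-256 digest of data as a 256-character bit string."""
    digest = hashlib.sha256(data).digest()
    return "".join(f"{byte:08b}" for byte in digest)


def hamming(a: str, b: str) -> int:
    """Count the positions where two equal-length bit strings differ."""
    return sum(x != y for x, y in zip(a, b))


# Stand-in for the bytes of an image file (hypothetical data).
image_bytes = bytes(range(256)) * 4

# The "same" file with one single bit flipped.
tweaked = bytearray(image_bytes)
tweaked[0] ^= 1

bits_a = sha256_bits(image_bytes)
bits_b = sha256_bits(bytes(tweaked))
print(hamming(bits_a, bits_b), "of", len(bits_a), "bits differ")
```

A perceptual hash is built to do the opposite: small changes in, small changes out. That is exactly why it cannot promise the near-zero collision rate people associate with the word "hash".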

-

@Unperverted-Vixen said in Apple goes full Big Brother:

@loopback0 said in Apple goes full Big Brother:

The El Reg article disagrees with Apple's own description of how it works.

El Reg had it correct. The detailed PDF linked from that Apple page says it's not true hash-matching, and that it's using machine learning.

The system generates NeuralHash in two steps. First, an image is passed into a convolutional neural network to generate an N-dimensional, floating-point descriptor.

OK fair enough, but it's identifying different versions of the same image to give them the same hash before matching it to known images. It's just replacing the fuzzy matching used in PhotoDNA or whatever Google use and have done for years, and not scanning for stuff that looks like it might be CSAM as the article suggests.

-

@boomzilla said in Apple goes full Big Brother:

It's a "perceptual hash." Not a cryptographic hash. So there's some black boxiness going on in there somewhere to build that stuff.

It's got a lot in common with the early stages of how machine vision works, with basically layers of artificial neurons doing feature extraction in what's a lot like running a whole bunch of convolution kernels in parallel.
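A rough sketch of what "a whole bunch of convolution kernels in parallel" means, in plain Python. The image and the two hand-written edge kernels are made up for illustration; a trained network learns its kernels instead, and nothing here is taken from Apple's model.

```python
def convolve2d(image, kernel):
    """Valid-mode 2D cross-correlation (what CNN layers call convolution)."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(
                image[r + i][c + j] * kernel[i][j]
                for i in range(kh)
                for j in range(kw)
            )
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Hypothetical 4x4 image: dark on the left, bright on the right.
image = [
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
    [0, 0, 9, 9],
]

# Two hand-picked kernels, each responding to a different feature.
kernels = {
    "vertical_edge": [[-1, 0, 1], [-1, 0, 1], [-1, 0, 1]],
    "horizontal_edge": [[-1, -1, -1], [0, 0, 0], [1, 1, 1]],
}

# One feature map per kernel, all computed from the same input.
feature_maps = {name: convolve2d(image, k) for name, k in kernels.items()}
print(feature_maps["vertical_edge"])    # [[27, 27], [27, 27]]
print(feature_maps["horizontal_edge"])  # [[0, 0], [0, 0]]
```

The vertical-edge kernel lights up because the image has a left-to-right brightness jump, and the horizontal one stays silent. Stack many such maps over several layers and you get the kind of descriptor the quoted PDF talks about.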

-

@Unperverted-Vixen said in Apple goes full Big Brother:

and that it's using machine learning.

That always works out so well... How long till the "bad" guys figure out how to poison that.

-

@boomzilla said in Apple goes full Big Brother:

@Rhywden said in Apple goes full Big Brother:

Not seeing the problem. I'm also not interested in "slippery slope" fallacies.

Why would you be fine with someone doing this on your phone? This is already down the slope.

As opposed to doing it once it's in the cloud like everyone else (including Apple) already does and the world seems to be fine with?

Like the other services which obviously can't scan something you don't put in the cloud, this is disabled if you're not uploading photos to iCloud.

-

@loopback0 said in Apple goes full Big Brother:

@boomzilla said in Apple goes full Big Brother:

@Rhywden said in Apple goes full Big Brother:

Not seeing the problem. I'm also not interested in "slippery slope" fallacies.

Why would you be fine with someone doing this on your phone? This is already down the slope.

As opposed to doing it once it's in the cloud like everyone else (including Apple) already does and the world seems to be fine with?

Like the other services which obviously can't scan something you don't put in the cloud, this is disabled if you're not uploading photos to iCloud.

Thus demonstrating that slippery slopes aren't always fallacies. Though at least the cloud stuff involves moving the data from your device to someone else's. Or maybe this is more of the boiling a frog thing.

-

@dcon said in Apple goes full Big Brother:

@Unperverted-Vixen said in Apple goes full Big Brother:

and that it's using machine learning.

That always works out so well... How long till the "bad" guys figure out how to poison that.

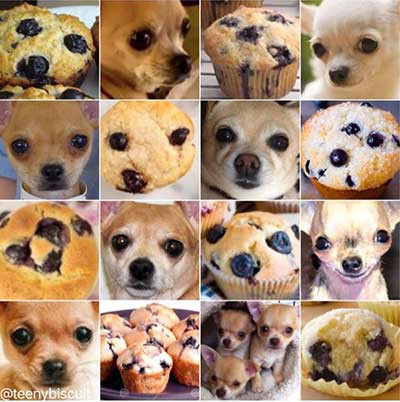

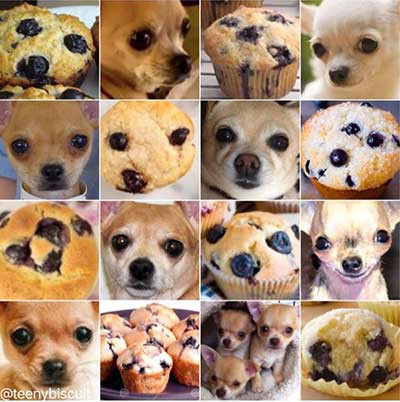

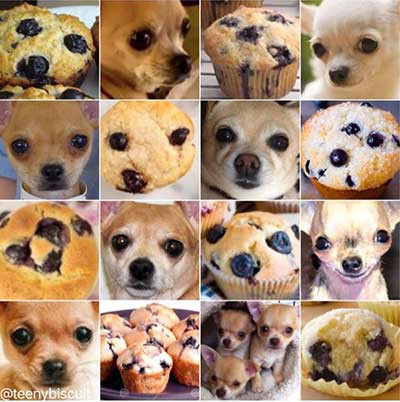

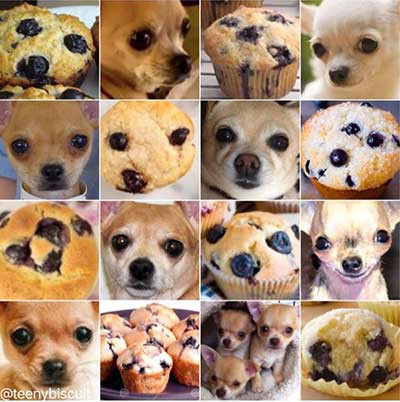

Mmm, dog-eye cupcakes.

ed. muffins

Cupcakes, Ed. Fuck you, is why.

-

@boomzilla said in Apple goes full Big Brother:

the boiling a frog thing.

The "No you eat that" topic is

-

@acrow said in Apple goes full Big Brother:

Everyone else, Google included, scans in the Cloud.

Good thing I save my pictures on my computer and never upload anything to the cloud. Not intentionally, anyway. I've read that one of the apps, I can't remember if it's Photos or Gallery, automagically uploads anything you look at on your own phone, but I don't know if that's true.

-

@HardwareGeek Photos can certainly be configured so that it uploads the photo library on the phone to Photos online. But, like iCloud, it can also be configured not to.

-


@HardwareGeek said in Apple goes full Big Brother:

@boomzilla said in Apple goes full Big Brother:

besides the point

LOLOOPS. That one wasn't even intentional.

-

@boomzilla said in Apple goes full Big Brother:

That one wasn't even intentional.

He's annoyed irregardless.

-

@loopback0 said in Apple goes full Big Brother:

@HardwareGeek Photos can certainly be configured so that it uploads the photo library on the phone to Photos online. But, like iCloud, it can also be configured not to.

At least in my ancient version, they don't make that configuration easy to find. It's set to whatever the default is, because I can't change a configuration I can't find.

-

@dcon said in Apple goes full Big Brother:

@Unperverted-Vixen said in Apple goes full Big Brother:

and that it's using machine learning.

That always works out so well... How long till the "bad" guys figure out how to poison that.

Select all squares with puppies.

-

@loopback0

I'm idly wondering if it's pre-empting future regulation making them liable for material stored on their equipment. The most visible social media platforms have avoided being called "publishers" and hence not responsible for what gets published, and they're now seeing it as a good move to look like they're a bit more choosy about who gets to use their stuff for what.

I haven't thought deeply on this. I'm just doing the usual substitution of interpreting "The Cloud" as "Someone Else's Computer".

-

@Watson said in Apple goes full Big Brother:

@dcon said in Apple goes full Big Brother:

@Unperverted-Vixen said in Apple goes full Big Brother:

and that it's using machine learning.

That always works out so well... How long till the "bad" guys figure out how to poison that.

Select all squares with puppies.

All non-trivial squares contain puppies.

-

@HardwareGeek said in Apple goes full Big Brother:

@loopback0 said in Apple goes full Big Brother:

@HardwareGeek Photos can certainly be configured so that it uploads the photo library on the phone to Photos online. But, like iCloud, it can also be configured not to.

At least in my ancient version, they don't make that configuration easy to find. It's set to whatever the default is, because I can't change a configuration I can't find.

IIRC, Google Photos asks you whether you want to sync each folder with the cloud whenever it finds one with pictures.

-

@Watson said in Apple goes full Big Brother:

I'm idly wondering if it's pre-empting future regulation making them liable for material stored on their equipment. The most visible social media platforms have avoided being called "publishers" and hence not responsible for what gets published, and they're now seeing it as a good move to look like they're a bit more choosy about who gets to use their stuff for what.

This would make them more liable. Before they had the excuse of "it's the user's data, and we have no access to it". Now, if they miss anything, they no longer have that excuse; they explicitly approved all the material uploaded to their servers.

-


@topspin said in Apple goes full Big Brother:

First thought: what kind of moron would use Siri to search for their illegal shit? Surely, that never happens?

"Hey Siri, where to bury the body?"

-

@Tsaukpaetra said in Apple goes full Big Brother:

@topspin said in Apple goes full Big Brother:

First thought: what kind of moron would use Siri to search for their illegal shit? Surely, that never happens?

"Hey Siri, where to bury the body?"

I mean, sure, if Siri is telling you to kill people, fair enough.

-

@Gribnit said in Apple goes full Big Brother:

@Tsaukpaetra said in Apple goes full Big Brother:

@topspin said in Apple goes full Big Brother:

First thought: what kind of moron would use Siri to search for their illegal shit? Surely, that never happens?

"Hey Siri, where to bury the body?"

I mean, sure, if Siri is telling you to kill people, fair enough.

No, just Bixby, but shut up before you give the game away.

-

@acrow cunningly copy-pasta’d in Apple goes full Big Brother:

investigating them for alleged child pornography.

One case was a Japanese anime,

Yes, that qualifies.

-

@Unperverted-Vixen said in Apple goes full Big Brother:

@Watson said in Apple goes full Big Brother:

I'm idly wondering if it's pre-empting future regulation making them liable for material stored on their equipment. The most visible social media platforms have avoided being called "publishers" and hence not responsible for what gets published, and they're now seeing it as a good move to look like they're a bit more choosy about who gets to use their stuff for what.

This would make them more liable. Before they had the excuse of "it's the user's data, and we have no access to it". Now, if they miss anything, they no longer have that excuse; they explicitly approved all the material uploaded to their servers.

They actually haven't had that excuse for a while now:

U.S. law enforcement has access to anything hosted by any company with a U.S. presence. And if they didn't have access for technical reasons before, they will after they serve you the judge's order. Or else.

Also, a company can still claim to be a platform instead of a publisher if they only filter material expressly prohibited by law. In fact, they could be sued if they don't filter, if it is technically possible to do so. And claiming that Apple don't know their users' keys does not constitute a technical obstacle in the eyes of the law.

-

@izzion said in Apple goes full Big Brother:

Makes their bitching about how Apple refuses to comment for their article seem especially petulant, juvenile and deceptive

But isn't that the fundamental basis for El Reg's relationship with Apple?

-


@boomzilla decent. Lyrical even.

Ah shit.

IMMEDIATELY destroy that algorithm; the approach is a dead end.

-

@boomzilla the current methods have false positives too, so will be interesting to see how the actual false positive rate compares to the 1 in 10 billion that PhotoDNA apparently achieves.

-

@loopback0 said in Apple goes full Big Brother:

@boomzilla the current methods have false positives too, so will be interesting to see how the actual false positive rate compares to the 1 in 10 billion that PhotoDNA apparently achieves.

Oh, don't worry, they won't report you to the cops unless there's a whole bunch of positives, so you can't possibly be caught by false positives because nobody would really have that many false positives.
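For what it's worth, here is the back-of-the-envelope math behind the "needs a whole bunch of positives" argument, as a sketch. Every number in it is hypothetical (per-image false match rate, library size, thresholds), and it leans on two assumptions: a Poisson approximation to the binomial, and false matches being independent from photo to photo.

```python
import math


def poisson_tail(lam, threshold, max_terms=500):
    """Approximate P(X >= threshold) for X ~ Poisson(lam), with lam > 0.

    The infinite sum is truncated after max_terms terms, which is plenty
    when lam is small compared to the threshold.
    """
    if threshold <= 0:
        return 1.0
    # First term e^-lam * lam^t / t!, computed in log space to avoid overflow.
    log_term = -lam + threshold * math.log(lam) - math.lgamma(threshold + 1)
    term = math.exp(log_term)
    total = 0.0
    k = threshold
    for _ in range(max_terms):
        total += term
        k += 1
        term *= lam / k  # next Poisson term from the previous one
    return min(total, 1.0)


# Hypothetical numbers, not taken from Apple or PhotoDNA.
per_image_rate = 1e-6   # chance that one innocent photo falsely matches
library_size = 20_000   # photos in one user's library
lam = per_image_rate * library_size  # expected false matches per library

for threshold in (1, 5, 10):
    print(threshold, poisson_tail(lam, threshold))
```

With these made-up inputs the chance of crossing a threshold of 10 is vanishingly small, which is the reassuring version of the story. The catch is the independence assumption: a burst of near-identical shots of the same scene would all match or all not match together, so the real-world tail could be far fatter than this suggests.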

Perceptual hashing - Wikipedia
