About Brain-Computer Interfaces

-

Not the first time I've heard about this, as Elon Musk is also investing in a company with this goal and a colleague has been talking about that one for a while. I didn't know Gabe Newell was looking at it too...

-

This is literal brainwashing.

-

@error said in About Brain-Computer Interfaces:

Black Mirror

This is that show with the fucking pig thing, right?

-

@Tsaukpaetra said in About Brain-Computer Interfaces:

@error said in About Brain-Computer Interfaces:

Black Mirror

This is that show with the fucking pig thing, right?

If it is, I'm never watching it. Ever.

-

@HardwareGeek said in About Brain-Computer Interfaces:

@Tsaukpaetra said in About Brain-Computer Interfaces:

@error said in About Brain-Computer Interfaces:

Black Mirror

This is that show with the fucking pig thing, right?

You do what you gotta do at last call.

If it is, I'm never watching it. Ever.

Skip the first episode then. They're each standalone.

-

@error said in About Brain-Computer Interfaces:

@HardwareGeek said in About Brain-Computer Interfaces:

@Tsaukpaetra said in About Brain-Computer Interfaces:

@error said in About Brain-Computer Interfaces:

Black Mirror

This is that show with the fucking pig thing, right?

You do what you gotta do at last call.

If it is, I'm never watching it. Ever.

Skip the first episode then. They're each standalone.

Yeah, I noped out of that series halfway through the first episode. I should go watch some of the rest, but...

and I don't watch that much TV.

-

Black Mirror has some great concepts, the execution isn't always that good though.

I would absolutely be noping right out of any direct interference with my brain.

-

@bobjanova said in About Brain-Computer Interfaces:

I would absolutely be noping right out of any direct interference with my brain.

Speaking of, are VR headsets finally dead ~~yet~~ again? Paging @Tsaukpaetra...

-

@topspin said in About Brain-Computer Interfaces:

@bobjanova said in About Brain-Computer Interfaces:

I would absolutely be noping right out of any direct interference with my brain.

Speaking of, are VR headsets finally dead ~~yet~~ again? Paging @Tsaukpaetra...

The enthusiasts seem to think no.

-

@topspin said in About Brain-Computer Interfaces:

@bobjanova said in About Brain-Computer Interfaces:

I would absolutely be noping right out of any direct interference with my brain.

Speaking of, are VR headsets finally dead ~~yet~~ again? Paging @Tsaukpaetra...

All tech is dead once it gets into @Tsaukpaetra's hands.

-

Sorry, Gaben. Nope. Do not want.

It's very simple. Take your pick of signal interference, the devil-may-care quality of modern klopftware or just the abuse potential of anything being produced today; the gist is that when the brain cannot possibly tell the difference between reality and illusion, it's a dream or nightmare one may not willingly wake up from. When it's merely arbitrarily close to non-distinction, you can always pull the plug.

Given years, St. Melon could probably make a flashy prototype, at least once, at least until the first "test fire" promptly leaves the over-enthusiastic test subject a drooling vegetable stuck in an endless ad viewing experience while thousands more keep breaking the door down shouting "me next, me next".

As for true mind uploading and transcendence, we're hundreds of years and an entire paradigm of thinking away from anything like that.

This message is sponsored by Google AdBrains, your friendly brain infusion that's fun to live with.

-

@HardwareGeek said in About Brain-Computer Interfaces:

@topspin said in About Brain-Computer Interfaces:

Speaking of, are VR headsets finally dead ~~yet~~ again? Paging @Tsaukpaetra...

All tech is dead once it gets into @Tsaukpaetra's hands.

If it survives,

and BUYBUYBUY!!! (closest thing to :stocks:)

-

@topspin said in About Brain-Computer Interfaces:

@bobjanova said in About Brain-Computer Interfaces:

I would absolutely be noping right out of any direct interference with my brain.

Speaking of, are VR headsets finally dead ~~yet~~ again? Paging @Tsaukpaetra...

They're still the best option for ~~porn~~ gaming.

Filed under: No, wait, I was right the first time.

-

@Applied-Mediocrity said in About Brain-Computer Interfaces:

As for true mind uploading and transcendence, we're hundreds of years and an entire paradigm of thinking away from anything like that.

It's the only form of eternal life I can imagine, but you're right, it's probably not going to get here while I'm still around.

-

As usual, the first practical thing will be porn

-

@Luhmann said in About Brain-Computer Interfaces:

As usual, the first practical thing will be porn

Porn drives the fidelity, games drive the realism?

-

@Applied-Mediocrity start shallow, then as you get used to it, shift progressively deeper, but don't forget to come up for air...

-

@Applied-Mediocrity said in About Brain-Computer Interfaces:

As for true mind uploading and transcendence, we're hundreds of years and an entire paradigm of thinking away from anything like that.

We're quite a long way from having a computing substrate that can host a real mind. We don't even know what parts of neurology are required to make that work, so we can't make any kind of prediction about when we'd be able to think of manufacturing such a computing system. We know that some of the aspects that are required are really awkward to do in standard computing without hitting huge problems with communications overheads, and the need for structural plasticity makes doing hardware accelerators tricky. And for the models that actually describe neurons accurately, the fundamental equations are genuinely difficult to solve.

So… let's just say that we're a few major breakthroughs from solving this. It looks solvable ultimately, but we're absolutely decades away. (I've no idea how we might upload a mind; I guess it would require some kind of scanning mechanism to determine the connectome and synaptic strengths of everything, but that sounds crazy hard to do.)

-

@dkf said in About Brain-Computer Interfaces:

get garbage results

But you're trying to model human thinking, so that's completely accurate.

-

@HardwareGeek said in About Brain-Computer Interfaces:

@dkf said in About Brain-Computer Interfaces:

get garbage results

But you're trying to model human thinking, so that's completely accurate.

The true scientific problem is actually that we don't know what sort of fidelity is required to get the overall effect of cognition. We do know that you need many neurons to do it. We have a pretty good indication that longer-term memory is related to synapse formation, and that short-term memory is about a mixture of synaptic strength and neurotransmitter concentrations. We also know that some neuron types are significantly more complex than others; YIL that pyramidal cells can do an operation equivalent to an XOR in their dendritic tree. We've identified a lot of the true functions of sleep (deep sleep seems to be related to forgetting insignificant detail, and REM sleep is about running what-if scenarios). But how much detail do we actually need? Until we know that, we can't really contemplate building hardware to do it; the models vary in complexity by what's effectively orders of magnitude so just taking a guess isn't practical. Also, there's good reason to believe that things have to be simulated in realtime to be meaningful; external interaction is believed necessary to keep a mind from going insane and that's only really got one speed it can go at.

The ones I've heard of in (experimental!) hardware are probably not sufficient in all cases. And are absolutely not on the scale to simulate the neocortex. Not within many orders of magnitude.
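
A toy sketch of the XOR remark above, for illustration only. A single unit with a plain threshold cannot compute XOR, but a unit whose response is non-monotonic in its summed input (it responds to moderate drive and falls silent under strong drive) can. The function name `dendritic_unit` and the thresholds `low` and `high` are made up for this example and are not taken from any neuron model.

```python
def dendritic_unit(x1, x2, low=0.5, high=1.5):
    """Toy 'dendrite': respond only when the summed input lies inside a band.

    low/high are hypothetical thresholds chosen for the example,
    not measured values and not a biophysical model.
    """
    total = x1 + x2
    return 1 if low < total < high else 0


# Truth table: the unit is active for exactly one active input.
for a in (0, 1):
    for b in (0, 1):
        print(a, b, dendritic_unit(a, b))
```

With both inputs active the summed drive overshoots the band and the unit stays silent, which is what turns an OR-like response into XOR.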

-

@dkf said in About Brain-Computer Interfaces:

So… let's just say that we're a few major breakthroughs from solving this. It looks solvable ultimately, but we're absolutely decades away.

The thing about major breakthroughs is that they don't happen on any sort of schedule.

Consider the case of Leó Szilárd. Scientists had known for quite a while that there was tremendous energy stored in the nucleus of the atom, but there was just no good way to get at it without using significantly more energy than it produced. It was considered an intractable problem that would probably never be solved, until Szilárd heard that it was an intractable problem that would probably never be solved and imagined up how it could be made to work by using neutron bombardment to initiate a chain reaction. And in the course of one day it went from an intractable problem that would probably never be solved to a problem that was solved in theory and just needed to be implemented in practice. Less than 10 years later, he was part of the team that made it real.

-

@Mason_Wheeler In that case, invest now!

-

@Mason_Wheeler said in About Brain-Computer Interfaces:

Consider the case of Leó Szilárd. Scientists had known for quite a while that there was tremendous energy stored in the nucleus of the atom, but there was just no good way to get at it without using significantly more energy than it produced. It was considered an intractable problem that would probably never be solved, until Szilárd heard that it was an intractable problem that would probably never be solved and imagined up how it could be made to work by using neutron bombardment to initiate a chain reaction.

Excuse me, but if I'm reading Wikipedia right, the exact opposite is true: Szilard was inspired by successful nuclear fission, done by Ernest Walton and John Cockcroft, that produced more energy than it took. What Szilard did was figure out how to turn the known-working mechanism into a chain reaction.

Also, the early 20th century was the golden period for atomic physics, with major breakthroughs happening every other year or so. Things have considerably slowed down in later decades.

-

@Gąska It wasn't fission; fission wasn't even something known to be possible yet. But yes, he was inspired by other nuclear experiments, and was able to apply that knowledge to what was believed to be the completely intractable problem of getting more energy out of a nuclear reaction than went into it.

-

@Mason_Wheeler You're right but also you're wrong.

Literally the first sentence:

Nuclear fission was discovered in December 1938 by physicists Lise Meitner and Otto Robert Frisch and chemists Otto Hahn and Fritz Strassmann.

-

@Gąska Yes. And the episode I'm talking about with Leó Szilárd happened 5 years earlier, in 1933.

-

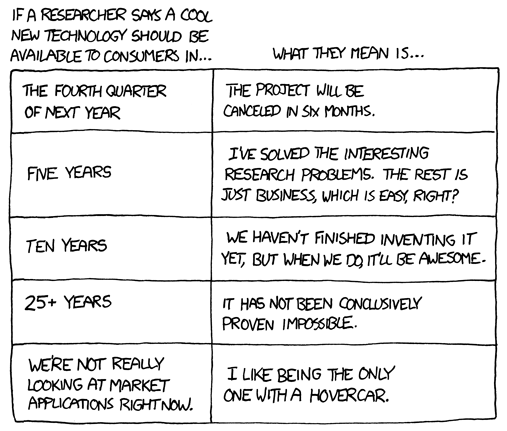

@dkf said in About Brain-Computer Interfaces:

absolutely decades away

@error_bot xkcd Researcher Translation

-

@Mason_Wheeler so in just 5 short years we went from "an intractable problem that would probably never be solved" to "some guys completely unrelated to Szilard did it before him"? Interesting.

Look. In 1931, people didn't even know neutrons existed. In 1934, Fermi used neutron beams to transform uranium into other elements. In 1938, nuclear fission was experimentally shown to be possible, and by 1939, there was a complete theory about how it works (NOT the other way around, like you said earlier). In 1942 the first nuclear reactor was working, and in 1945 the first (and last, thank God) nuclear bombs were used in combat.

Compare to brain science. It's 2021 and we still don't know what sleep is for. It's such a basic phenomenon and yet we don't even have a single coherent theory about this process. The only thing we know for certain is that even if we did know how brains work, it would definitely be too computationally expensive for any silicon-based computer to simulate. That's why I can say with very high confidence that mind uploading won't happen in my lifetime.

-

@Gąska Amplifying, we don't even understand how our general anaesthetics work. We can plot the dose curves, we can see that they do work. We can classify some of them based on the perceived effects (such as the class of amnestics that seem to prevent the brain from remembering the sensations from moment to moment), but as to the actual molecular events going on when "consciousness" ceases (or seems to)?

Heck, we don't even know what it means to be conscious. If (taking off my religious hat here) it means anything at all more than spontaneous epiphenomena related to a dense enough neural net. The statement "I am myself" is a mystery. Even the philosophers can't put their fingers (or words) on a coherent meaning.

-

@Benjamin-Hall said in About Brain-Computer Interfaces:

The statement "I am myself" is a mystery.

Meh, it's just harmless padding between words that actually can mean things.

-

@Gąska said in About Brain-Computer Interfaces:

Compare to brain science. It's 2021 and we still don't know what sleep is for.

We do know that. Deep sleep is for relaxing the state of neurons, for forgetting trivia, for repairing damage. REM sleep seems to be more for memory consolidation and scenario running, which are useful for forming longer-term connections and acting creatively. You absolutely need both.

-

@Mason_Wheeler said in About Brain-Computer Interfaces:

The thing about major breakthroughs is that they don't happen on any sort of schedule.

While that means that a breakthrough can happen tomorrow, as you point out, it also means that a breakthrough may never happen.

I can't think of a good example right now (because that needs thinking about something that is currently thought impossible, but where there is not a strong theory that makes it impossible (i.e. not FTL travel)), but it's important to remember that science managing to do some things that were previously infeasible does not mean that science will ever manage to do all things that are currently infeasible.

-

@remi said in About Brain-Computer Interfaces:

While that means that a breakthrough can happen tomorrow, as you point out, it also means that a breakthrough may never happen.

In this case, the key things that are required are a bit more mundane than solving FTL.

- We need to establish what neural models are needed where in the brain, and how much of full cellular models can be ignored as irrelevant BS. This is the major current research area of bottom-up neural modelling, but is definitely “breakthrough” class stuff. Note that the connectivity of some brain cells is absolutely crazy high.

- We need the connectome so that we can figure out how to wire things up in general. The details will be per-person.

- We need new chips. Current neuromorphic processors aren't able to do it, and you'll never manage it with standard processors or GPUs (they're optimised for the wrong things). We don't yet know how to do these except in the simplest cases. There's absolutely nothing standard about this. We don't yet know if 5nm is dense enough; it's possibly the case that we need several more generations of chip development to get the density required.

- We need to develop a suitable connectivity fabric that supports runtime reconfiguration and very high speed communication with low energy and latency for small packets. This is hard.

- We need a vast engineering effort to build a facility that puts enough neural processors together with the connectivity in a small enough space that communications are quick enough, and yet have enough cooling that the whole thing doesn't roast itself.

- We need to figure out how to program that monstrosity.

- We need to figure out how to minimise the whole thing; we don't want to have the number of encoded minds limited by the number of full datacenters we can build.

- We might want to try to figure out how to move a mind from wetware into silicon substrate. But that requires… mapping an individual's connectome down to the synaptic level (pure science fiction!). And then somehow transcribing it into the silicon equivalent, which is going to be a crazy amount of data and weird hardware reconfiguration. And then who knows what else is needed?

So… breakthroughs needed and a lot of money and work.

-

@dkf said in About Brain-Computer Interfaces:

We might want to try to figure out how to move a mind from wetware into silicon substrate.

Also,

-

@dkf said in About Brain-Computer Interfaces:

@Gąska said in About Brain-Computer Interfaces:

Compare to brain science. It's 2021 and we still don't know what sleep is for.

We do know that. Deep sleep is for relaxing the state of neurons, for forgetting trivia, for repairing damage. REM sleep seems to be more for memory consolidation and scenario running, which are useful for forming longer-term connections and acting creatively. You absolutely need both.

I don't think we're as certain about all of this as you seem to be making it out to be:

To take a small part of that post:

What is the role of REM vs. non-REM sleep? Depressed people have much more REM sleep than non-depressed people. Serotonin seems to decrease REM sleep, so unsurprisingly SSRI antidepressants decrease REM sleep a lot (not just in depressed people, in everybody). This would lend itself very nicely to a theory where REM sleep is involved in decreasing synapse strength, depressed people have too much of it, they end up with overly weak synapses, and that's what depression is. In this model, antidepressants would treat depression by increasing serotonin levels in a way that represses REM. The problem with this is that in Tononi's original paper, he says that the best evidence supports synaptic renormalization in non-REM sleep; he doesn't have a great idea what REM is doing.

-

@boomzilla quoted in About Brain-Computer Interfaces:

The problem with this is that in Tononi's original paper, he says that the best evidence supports synaptic renormalization in non-REM sleep; he doesn't have a great idea what REM is doing.

The synaptic renormalization is definitely in deep sleep; we can run deep sleep in simulation (requires a fairly complex neural model to do) and the result is an across-the-board reduction in synaptic strengths. That has the effect of reducing the order of fit found by neural networks, which is typically a very good thing. It seems that glial cells are also quite active during this phase, doing maintenance of various kinds. The interaction of neurons and glial cells is one of the areas that's still not fully understood, in part because it's extremely difficult to do in isolation.

REM sleep seems to be more about strengthening up the connections that remain afterwards, together with running “what if” scenarios. These scenarios are very much not a connected whole, which is why dreams are so strange when remembered after the fact (and when the neocortex is trying to assemble them into a comprehensive story, because that's one of the main things the neocortex does). Most of the long-range connectivity is still offline during REM sleep, especially the main motor neurons (failures in that lead to sleepwalking).
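
A minimal sketch of what an across-the-board reduction in synaptic strengths does, assuming weights are plain numbers, a hypothetical scaling `factor`, and a hypothetical `floor` below which a connection is treated as gone. This is not the simulation described above, just a toy in the same spirit (loosely comparable to weight decay in machine learning).

```python
def renormalize(weights, factor=0.8):
    """Scale every weight by the same factor (assumed value, for illustration)."""
    return [w * factor for w in weights]


def drop_weak(weights, floor=0.1):
    """Zero out connections that fell below the floor (assumed cut-off)."""
    return [w if abs(w) >= floor else 0.0 for w in weights]


# Two strong and two weak connections; all start above the floor.
before = [0.9, 0.5, 0.12, 0.11]
after = drop_weak(renormalize(before))
surviving = sum(1 for w in after if w != 0.0)
print(f"{surviving} of {len(before)} connections survive")
```

Every connection is weakened by the same proportion, so the strong ones stay well above the cut-off while the marginal ones fall under it; here only the two strong connections survive.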

-

@Benjamin-Hall There's also the issue that quite a bit of our subconscious will probably rely on feedback from the rest of the body. For example, some experiments have shown that a fecal transplant from a depressive mouse to a healthy mouse can result in depressive symptoms in the now formerly healthy mouse...

-

@Rhywden said in About Brain-Computer Interfaces:

that a fecal transplant from a depressive mouse to a healthy mouse can result in depressive symptoms in the now formerly healthy mouse...

I might be depressed if you put someone else's feces in me without my consent.

-

@Rhywden said in About Brain-Computer Interfaces:

@Benjamin-Hall There's also the issue that quite a bit of our subconscious will probably rely on feedback from the rest of the body. For example, some experiments have shown that a fecal transplant from a depressive mouse to a healthy mouse can result in depressive symptoms in the now formerly healthy mouse...

Yeah. The brain/"mind" and the body are intricately interwoven. Toxoplasmosis is another example--it's caused by a protozoan parasite (not a bacterium, and not the same thing as cat scratch fever, which is bacterial) that is reported to cause symptoms that mimic various forms of psychosis. And the connection goes the other way as well: you can cause physiological changes through changes in mental state.

Edit: point being that a "brain in a jar" is going to be in a completely different environment than one in a body, even if we replicate the brain exactly.

-

@HardwareGeek we shouldn't anything so why not.

-

@HardwareGeek said in About Brain-Computer Interfaces:

@Benjamin-Hall said in About Brain-Computer Interfaces:

"brain in a jar"

I need a hunchbacked lab assistant called Igor. Closest I've got is a tall guy called Dave, who's a very nice guy but never going to lisp out “Yeth, Mathter!” without laughing himself to pieces afterwards.

-

@error said in About Brain-Computer Interfaces:

@Rhywden said in About Brain-Computer Interfaces:

that a fecal transplant from a depressive mouse to a healthy mouse can result in depressive symptoms in the now formerly healthy mouse...

I might be depressed if you put someone else's feces in me without my consent.

And here I thought that'd be right up your alley.

-

@topspin said in About Brain-Computer Interfaces:

@error said in About Brain-Computer Interfaces:

@Rhywden said in About Brain-Computer Interfaces:

that a fecal transplant from a depressive mouse to a healthy mouse can result in depressive symptoms in the now formerly healthy mouse...

I might be depressed if you put someone else's feces in me without my consent.

And here I thought that'd be right up your alley.

Not all Germans!

-

@dkf said in About Brain-Computer Interfaces:

The synaptic renormalization is definitely in deep sleep; we can run deep sleep in simulation (requires a fairly complex neural model to do) and the result is an across-the-board reduction in synaptic strengths.

That kind of feels like a tautology: when we run a simulation according to our theory of what's happening during deep sleep, the results support the theory that the simulation was built on.

-

@dkf said in About Brain-Computer Interfaces:

@HardwareGeek said in About Brain-Computer Interfaces:

@Benjamin-Hall said in About Brain-Computer Interfaces:

"brain in a jar"

I need a hunchbacked lab assistant called Igor. Closest I've got is a tall guy called Dave...

You're not blocked. Subtly alter Dave's working, particularly seating, arrangements to encourage hunching. If you have influence on diet, that will be useful. Doubtless certain hormonal or even gross chemical influences could accelerate the process.

A lisp is associated with tongue positioning and other factors. Estrogen or progesterone may be useful, as may certain paralytics (e.g. botox - be careful!).

Valve’s Gabe Newell imagines “editing” personalities with future headsets

Playtest (Black Mirror) - Wikipedia

678: Researcher Translation - explain xkcd