AMD Hype!

-

it also listed 3080 FE for $699, and we all know how that ended up.

Being sold by Nvidia (or whoever the designated partner is) for $699?

-

-

@Gąska

The stock horrors aside, the FE cards that managed to be sold were sold at MSRP. The 3rd party cards are obviously more but that'll be the case for AMD too.

-

@loopback0 said in AMD Hype!:

stock horrors aside

All AMD need to do now is actually ship more cards early on. So many Nvidia preorders will end up cancelled just on that basis.

-

@loopback0 said in AMD Hype!:

@Gąska

The stock horrors aside, the FE cards that managed to be sold were sold at MSRP.

It was a publicity stunt that would be illegal in Europe and was never meant to be an actual offer for the general consumer. Nvidia literally paid their partners $50 for every card sold in the first few weeks just to keep the prices low, and to minimize losses, the supply was artificially throttled to virtually nothing. And now that the prices have "naturally" gone up to 800-900, suddenly there's going to be much more supply, and look, the Founders Edition has been removed from Nvidia's online store altogether!

The MSRP was never intended to be the actual price.

The 3rd party cards are obviously more

Not "obviously". In the previous eight generations of GPUs from both Team Green and Team Red, you could get most 3rd party cards at MSRP very easily. The OC models were a bit higher priced, but the non-OC ones weren't. RTX 3000 is the exception, a huge exception from the general rule that MSRP is the real price. And I see no indication that AMD is going to break this general rule too - especially considering their MSRPs are higher than expected.

-

In the previous eight generations of GPUs from both Team Green and Team Red, you could get most 3rd party cards at MSRP very easily

Based on a quick look, you can't even get 3rd party RTX 2070 SUPER, 2080 Ti or RX 5700 XT cards for MSRP now, at a point when no-one in their right mind would buy one.

The majority of 3rd party cards are always more than MSRP.

-

@loopback0 okay, I admit I may not have followed the GPU market after the Bitcoin boom made everything insanely expensive. Make it "8 generations before the GTX 1000 series". I bought my GTX 760 at MSRP soon after launch, and I remember the 970 being available at MSRP as well. I was also planning to buy a PC a few years before that; I don't remember what GPU generation that was exactly (either GeForce 200 or 400), but IIRC the prices matched MSRP too.

-

-

-

In AMD's promo video for Godfall (so take it with a grain of salt), the dev says the following:

At 4K resolution using Ultra HD textures, Godfall requires tremendous memory bandwidth to run smoothly. In this intricately detailed scene, we are using 4K × 4K texture sizes and 12 GB of VRAM memory to play at 4K resolution.

Well, it is certainly plausible that a new game at ultra quality at 4K with maximum texture resolution would actively use that much VRAM, based on current games edging in on 10GB. So, uh, yeah, if you don't want to upgrade your GPU next year and want to play ultra quality at 4K, then the 3080 is not for you. So staying with team green would mean the 3090 only for future proofing, which is still more expensive than AMD's halo card, the 6900, at purportedly similar performance.

(Also, it's good to see the dev is in camp random access memory memory...)

-

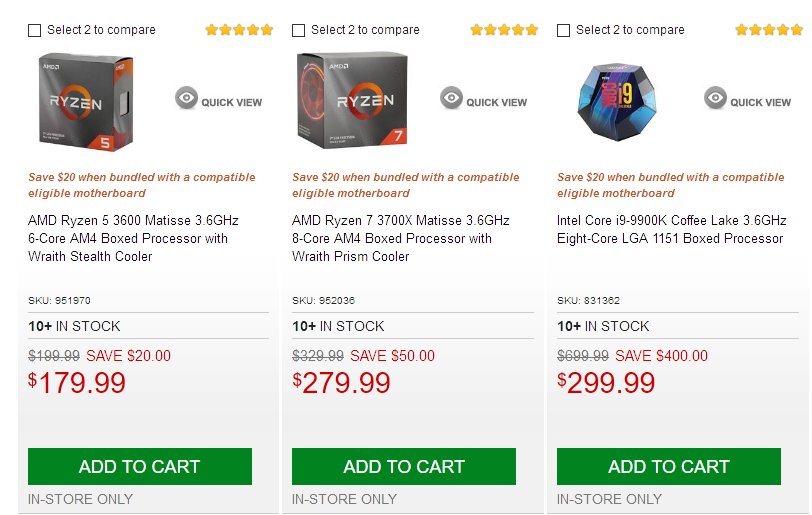

*sad intel noises*

-

-

@Gąska Big oof.

-

-

Watched/read reviews for the Ryzen 9s thus far. RIP Intel.

-

@Atazhaia hell, even Ryzen 5 beats the best i9.

-

Hey, don't count Intel out yet, they've got their discrete Xe GPU lined up!

-

@cvi These CPU neo-pronouns are getting out of hand

-

@hungrier pronounced "axe-ee".

-

CPU neo-pronouns

I wouldn't really consider Intel's graphics hardware proper GPUs either, but calling them a CPU is perhaps taking the criticism on Intel's designs a bit far (although, there's probably a kernel of truth in it).

-

@cvi Yes, that's right, I'm giving them an unfair criticism. I certainly didn't misread the original post, no way.

-

Yes, that's right, I'm giving them an unfair criticism.

I wouldn't go that far. Perhaps harsh, but still insightful, so I wouldn't quite dare to call it unfair.

-

CPU neo-pronouns

I wouldn't really consider Intel's graphics hardware proper GPUs either, but calling them a CPU is perhaps taking the criticism on Intel's designs a bit far (although, there's probably a kernel of truth in it).

Reminds me of the original PlayStation's GPU, which was basically a modified sound chip.

-

-

Reminds me of the original PlayStation's GPU, which was basically a modified sound chip.

Do you have a source for that claim? A quick search doesn't turn up anything, and it sounds fishy to me. I don't really see how you could reuse sound hardware for 3D graphics, not to mention getting first-class performance (at the time) from it.

-

@Zerosquare said in AMD Hype!:

Reminds me of the original PlayStation's GPU, which was basically a modified sound chip.

Do you have a source for that claim?

Modern Vintage Gaming on YouTube. Too lazy to link exact video right now because 1AM and mobile, but shouldn't be too hard to find.

The story goes that when Sony decided to do PSX, they had no people in-house who knew anything about 3D rendering, and had no intention of hiring any, nor license an already available chip, nor outsource the design work. Instead they tasked one of their lead hardware engineers with figuring something out. Needless to say, said lead hardware engineer at Sony in 1992 had a lot of experience designing sound chips, but not much else. The end result was a very unique GPU unlike anything else on the market, with many unique quirks such as not being able to properly render textures in perspective projection. That's why so many PSX games look slightly wrong in a very specific PSX-y way (if you owned PSX, you know what I'm talking about.)

-

Thanks. I knew that the PS1 3D hardware didn't do perspective correction and supported only integer coordinates, causing the "texture warping" effect you mention. But I always assumed that was a compromise, because implementing a more advanced rendering algorithm would have made the hardware too complex/costly for a 1994 games console.

I'll ask a friend who's a PS1 fan and does retro-development on this hardware what he thinks about it.

-

Sounds plausible.

Some uninformed twaddle retracted.

-

@Applied-Mediocrity said in AMD Hype!:

16.16 fixed-point number format

(That's technically the unsigned format; the signed one is usually 16.15 in order to leave a bit for the sign.)

It's a good format, provided you can manage to keep your numbers in a suitable range. It has the advantage that it needs far fewer transistors for most operations than floating point does, and is both much faster and lower power as a consequence. The downside is the scaling; floating point copes far better with code that's not very sure what range of numbers it is working with, and that matters a lot in some application areas. Of course, when the range is right, fixed point numbers give better numeric behaviour than floating point of the same overall width, as there's no stealing of bits for the exponent. My colleagues who develop numerical models know way more about this than I do; I make the code to run their models and ship their data about, but not the models themselves (where really I only know enough to appreciate that it's vital to be careful and not screw things up for them).

-

@Applied-Mediocrity said in AMD Hype!:

Perspective artefacts were because of the limited range of 16.16 fixed-point number format.

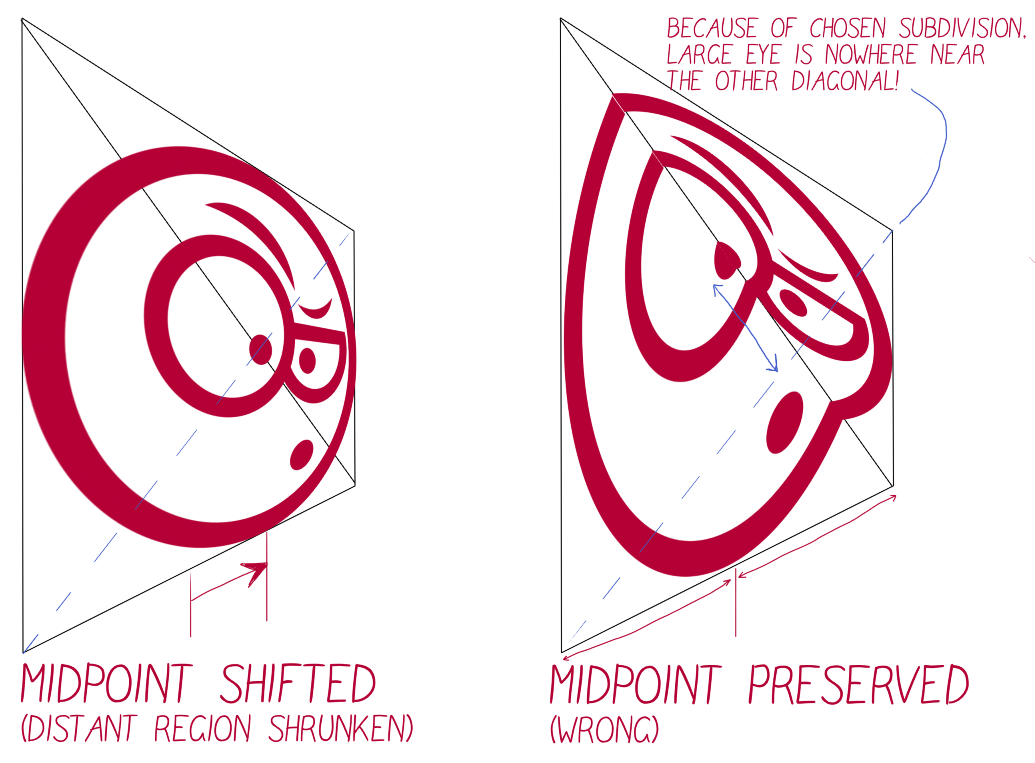

The perspective artefacts were because of not using perspective correct interpolation. The following post on SO explains the differences in more detail than I'm willing to type up, so I'll just link to it:

If you ever play around in OpenGL or so, you can request the "wrong" way via the "noperspective" qualifier for stuff that is passed e.g., between the vertex and fragment shaders.

The PS1 wasn't alone on this, early software engines would do the same to save on some computations. You can hide some of the errors by being clever elsewhere (clipping and retessellating triangles and so on).
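For anyone who wants to see the difference in numbers, here is a minimal sketch of the two interpolation schemes along a single edge. The function names and the example values are made up for illustration; `t` is the position in screen space and `w0`/`w1` are the clip-space w (roughly, depth) of the two endpoints.

```python
def interp_affine(u0, u1, t):
    """Screen-space linear interpolation (the "noperspective" way)."""
    return (1.0 - t) * u0 + t * u1


def interp_perspective(u0, w0, u1, w1, t):
    """Perspective-correct interpolation: interpolate u/w and 1/w
    linearly in screen space, then divide."""
    num = (1.0 - t) * u0 / w0 + t * u1 / w1
    den = (1.0 - t) / w0 + t / w1
    return num / den


# Edge running from a near vertex (w=1) to a far vertex (w=4),
# sampled at the midpoint on screen.
affine_mid = interp_affine(0.0, 1.0, 0.5)                  # 0.5
correct_mid = interp_perspective(0.0, 1.0, 1.0, 4.0, 0.5)  # about 0.2
```

With equal depths at both ends the two functions agree, which is why the warping only shows up on surfaces angled away from the camera.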

-

-

(That's technically the unsigned format; the signed one is usually 16.15 in order to leave a bit for the sign.)

Two's complement? No need to have a separate sign bit...

-

@loopback0 Yep, this is the video in question. The not perspective-correct interpolation of the texture coordinates comes up around 6:30 - 7:50.

-

“Explanation” more befitting of

-

Two's complement? No need to have a separate sign bit...

You still end up with a bit effectively for the sign in both one's and two's complement. It's just that the interpretation of the other bits is different in the negative case.

I was actually thinking of the 16.15 as two's complement. It makes addition and subtraction work just as in integers, and multiplication is like ordinary multiplication but with an extra shift at the end (i.e., super-cheap in hardware). Division… can you multiply by the precomputed reciprocal instead please?
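A rough sketch of what that looks like, assuming 15 fractional bits and using Python integers to stand in for the hardware registers. The helper names are invented for this example, and overflow/wrap-around to 32 bits is deliberately not modelled.

```python
FRAC_BITS = 15          # assumed number of fractional bits
ONE = 1 << FRAC_BITS    # fixed-point representation of 1.0


def to_fixed(x):
    """Convert a float to fixed point (round to nearest)."""
    return int(round(x * ONE))


def to_float(q):
    """Convert a fixed-point value back to a float."""
    return q / ONE


def fx_add(a, b):
    """Addition is plain integer addition."""
    return a + b


def fx_mul(a, b):
    """Integer multiply, then one shift to drop the extra fractional bits.
    Python's >> on negative ints is an arithmetic shift, like two's complement."""
    return (a * b) >> FRAC_BITS


def fx_div_by_reciprocal(a, reciprocal):
    """Division replaced by multiplication with a precomputed reciprocal."""
    return fx_mul(a, reciprocal)


product = to_float(fx_mul(to_fixed(1.5), to_fixed(-2.25)))              # -3.375
quarter = to_fixed(0.25)                                                # precomputed 1/4
quotient = to_float(fx_div_by_reciprocal(to_fixed(3.0), quarter))       # 0.75
```

The values here are chosen to be exactly representable; in general the shift truncates and the reciprocal is only approximate, so results carry a small rounding error.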

-

@dkf Hmm, ok. I don't quite get why you'd split it as 16.15 with two's complement. I would read that as ending up with a ±64k integer range (i.e., a signed 17 bit integer), and 15 fractional bits. Seems a bit of a strange choice compared to just 16.16 (or, if you want to count the sign separately, 15.16).

With a separate sign bit, you end up with distinct +0 and -0, like the IEEE float formats.
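To put numbers on the two readings, here is a small sketch. `fixed_range` is a made-up helper, and it assumes two's complement with the sign bit counted as part of the integer bits.

```python
import struct


def fixed_range(int_bits, frac_bits):
    """Return (min, max, step) for a two's complement fixed-point format.

    int_bits includes the sign bit, so "16.15 plus a sign" is int_bits=17.
    """
    total_bits = int_bits + frac_bits
    scale = 1 << frac_bits
    raw_min = -(1 << (total_bits - 1))
    raw_max = (1 << (total_bits - 1)) - 1
    return raw_min / scale, raw_max / scale, 1 / scale


s16_15 = fixed_range(17, 15)   # range just under ±65536, step 2**-15
s15_16 = fixed_range(16, 16)   # range just under ±32768, step 2**-16

# IEEE floats store the sign separately: +0.0 and -0.0 compare equal
# but have different bit patterns.
pos_zero_bits = struct.pack(">f", 0.0)
neg_zero_bits = struct.pack(">f", -0.0)
```

Both formats fill 32 bits; the split only trades one bit of range for one bit of precision.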

-

@kazitor dude, you’re awesome.

Just in case nobody’s told you today.

-

@Gąska: I watched most of the videos the guy's made about the PS1, and none of them mention what you've said above. Are you sure it's not from a different YouTuber?

-

I don't quite get why you'd split it as 16.15 with two's complement.

I don't know exactly why either, but it's apparently advantageous and encouraged by the spec. In particular, it's what you get from ARM's implementation of the spec and has apparently reasonable hardware support on even fairly low-end CPUs now.

-

@Zerosquare said in AMD Hype!:

@Gąska: I watched most of the videos the guy's made about the PS1, and none of them mention what you've said above. Are you sure it's not from a different YouTuber?

It might be. I did binge watch multiple videos about oldschool consoles one night and this one part stuck in my head. I swear I didn't make this up. Most of it at least.

-

Oh, I'm not accusing you of making this up, I was just interested in tracing the origin of that claim.

The info I did find said the GPU was designed by Ken Kutaragi, and while it's true that he was a sound chip designer, apparently he had been playing with 3D for a few years at that point (which Sony didn't have a use for, before the PS1).

-

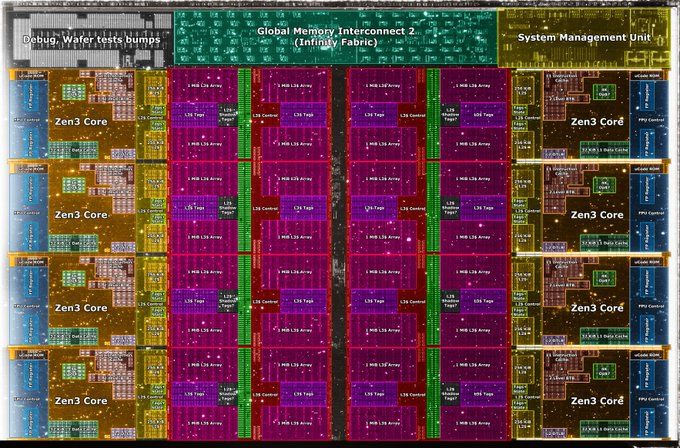

@dkf Oh no! Zen 3 isn't square either. Must be the ryzen for the ryzen costs

-

@Applied-Mediocrity I like the higher detail version on Twitter where you can actually read all the labels. Lovely cache layout, with L1, L2 and L3 in progressively larger fractions of the chip (cache costs so much area). The interconnect at the top looks very interesting too, as it appears to have a lot of very similarly structured subcomponents (I'd bet that bit's designed to be screaming fast!)

Square is still better in some cases (in that it lets you have more ways of doing the chip layout, which is important as you pack more cores on) but that's not too important for relatively low core counts like on the Ryzen. (The L1 and L2 caches are essentially integrated into the core layout directly.)

-

I'd bet that bit's designed to be screaming fast!

I've no reference nor knowledge. It's designed to run at system's memory speed, with four 32-bit transfers per clock. Currently the upper limit for 1:1 ratio is 1800 MHz, with 2000 being in the works. With DDR4-3600 (current price sweet spot) it would mean 57.6 GB/s (twice that of a single DRAM channel).
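The arithmetic behind those figures, as a quick sketch. The 64-bit (8-byte) DRAM channel width is standard for DDR4; the 32 bytes per fabric clock is only an assumption chosen because it reproduces the 57.6 GB/s number above, not something confirmed here.

```python
def bandwidth_gb_s(transfers_per_second, bytes_per_transfer):
    """Peak bandwidth in GB/s (decimal gigabytes)."""
    return transfers_per_second * bytes_per_transfer / 1e9


# DDR4-3600: 3600 million transfers/s on a 64-bit (8-byte) channel.
dram_channel = bandwidth_gb_s(3600e6, 8)

# Fabric at 1800 MHz (1:1 with DDR4-3600's 1800 MHz memory clock),
# ASSUMING 32 bytes moved per fabric clock.
fabric = bandwidth_gb_s(1800e6, 32)

print(f"DRAM channel: {dram_channel:.1f} GB/s")
print(f"Fabric link:  {fabric:.1f} GB/s")
```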

Or read it yourself, in case I got it all wrong. It's an old description, but Zen 3 hasn't seen major changes to the interconnect:

.

My takeaway from Infinity Fabric technical write-up is that if you buy a Zen processor, you get a CAKE

***

That said, the conceptually simple idea of packing more cores per die has indeed led to core-to-core latency improvements:

.

(which I was saying in the post that got downboated and I'm still very cross about)

-

-

I don't know exactly why either, but it's apparently advantageous and encouraged by the spec. In particular, it's what you get from ARM's implementation of the spec and has apparently reasonable hardware support on even fairly low-end CPUs now.

Not exactly the easiest document to just quickly skim through, but I think the following gives a bit of an explanation:

For the purpose of this Technical Report, two groups of fixed-point data types are added to the C language: the fract types and the accum types. The data value of a fract type has no integral part, hence values of a fract type are between -1.0 and +1.0. The value range of an accum type depends on the number of integral bits in the data type.

I guess for [-1 .. 1], you would have s.7 or s.15, or apparently minimally s.23. The other types in the document then seem to extend the integral part, coming up with stuff like s4.15 (= 19 or 20 bits). Didn't read carefully enough to see if s15.16 or s16.16 (depending on how the notation works) would be a valid implementation of the minimal-spec s4.15 (signed _Accum or whatever it'd be called).

-

-

s16.16

That's one thing you won't have, as it would be using 33 bits.

That was essentially me not knowing whether or not the spec counts the 's' as a separate bit, or just uses it to indicate that the following bits act as a signed two's complement number. (I.e., is s.15 == s1.15?). But from your post it sounds like it's counted separately. As mentioned, I didn't read the full 105 pages of the spec.

-

Probably not the news you're expecting but if they show up in consumer channels they might make a nice low powered under TV box for my mother's house. Or a replacement for my pi.

-

Infinity Fabric (IF) - AMD - WikiChip


AMD Zen 3 Ryzen Deep Dive Review: 5950X, 5900X, 5800X and 5600X Tested
