NetBeans vs UTF-8

-

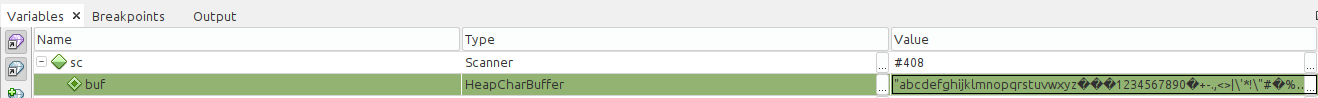

So a colleague came to me with an issue with reading the letters Å Ä Ö from the console. Knowing about Java character encoding fuckery I thought it was using the wrong encoding on Windows, so I tell her how to force the scanner to use UTF-8. No change. So I go to my Linux machine that uses UTF-8 by default. And I get the exact same issue. And then I end up going down the abyss. Use InputStreamReader instead of Scanner? Same thing. Try to override the locale for the scanner? Same thing. Setting a breakpoint so I can see where the fuckup happens and it seems to happen right at the input in both cases based off what's in the buffer.

Do some more research today. Points towards being an encoding issue so I try a few encodings to see what happens. When running ISO-8859-1 it works and it reads the letters correctly, but not if reading the input as UTF-8. As UTF-8 they apparently get converted to U+FFFD (the replacement character) because the characters don't get read for some reason? Then I try running the program directly from the console instead of through the NetBeans console and... it works with UTF-8. So... The NetBeans console runs with a different encoding than the system console? I don't get it. Why would it do that? I don't even...

-

@Atazhaia said in NetBeans vs UTF-8:

Why would it do that?

Er, because...

NetBeans

There's your answer, n'est-ce pas?

-

@Steve_The_Cynic Yes. Yes, it very much is.

Colleague "solved" problem by setting the scanner to use Windows-1252, which read it correctly too.

StackOverflow (accepted answer) "solved" the problem by setting the scanner to use x-ISO2022-CN-GB. Which does work, but why would you pick that encoding when not dealing with Chinese text?

Also, the NetBeans console will correctly output UTF-8. Just not read it.

-

@Atazhaia said in NetBeans vs UTF-8:

Also, the NetBeans console will correctly output UTF-8. Just not read it.

....... that's....

wow. okay..... just wow...... what special kind of english only fuckery was going through their heads when they made that a "feature"?

-

@Steve_The_Cynic said in NetBeans vs UTF-8:

NetBeans

There's your answer, n'est-ce pas?

I didn't even realize NetBeans is still a thing.

-

@Vixen said in NetBeans vs UTF-8:

@Atazhaia said in NetBeans vs UTF-8:

Also, the NetBeans console will correctly output UTF-8. Just not read it.

....... that's....

wow. okay..... just wow...... what special kind of english only fuckery was going through their heads when they made that a "feature"?

I'm going to assume this decision was made back when Netbeans was controlled by Oracle. You'd have thought Apache would fix that by now, though.

-

@Atazhaia

It becomes even funnier when you consider that the original developer, Jaroslav Tulach, who according to GitHub is still contributing to the project, is from the Czech Republic. Latin-1 cannot even represent his native language.

-

@topspin said in NetBeans vs UTF-8:

I didn't even realize NetBeans is still a thing.

My last email from the NetBeans users list was a year ago Wednesday. (I haven't actually used it in far longer than that; I'm not sure how long.)

-

@HardwareGeek said in NetBeans vs UTF-8:

users list

you really wear that with pride don't you, oldtimer?

-

@Vixen said in NetBeans vs UTF-8:

@Atazhaia said in NetBeans vs UTF-8:

Also, the NetBeans console will correctly output UTF-8. Just not read it.

....... that's....

wow. okay..... just wow...... what special kind of english only fuckery was going through their heads when they made that a "feature"?

You know something? I think I'm going to return to English like how she was wrote when I was a wee small laddie, with jolly words like rôle and naïve. Let's see the vaunted ASCII-7 cope with that!

-

@Steve_The_Cynic said in NetBeans vs UTF-8:

Let's see the vaunted ASCII-7 cope with that!

Pfft... Who needs that... just use RAD-50

-

@TheCPUWizard WE DO NOT NEED THIS LOWER CASE STUFF. THAT LETS US USE JUST 6 BITS PER CHARACTER.

-

@Luhmann said in NetBeans vs UTF-8:

@HardwareGeek said in NetBeans vs UTF-8:

users list

you really wear that with pride don't you, oldtimer?

I'm so happy that open source projects finally discovered web forums in the middle 2010s.

-

@anonymous234 I'm so sad most went with the worst solution available.

-

@dkf said in NetBeans vs UTF-8:

@TheCPUWizard WE DO NOT NEED THIS LOWER CASE STUFF. THAT LETS US USE JUST 6 BITS PER CHARACTER.

CDC DISPLAY CODE FTW!!!

(':' == '\000')

-

@dkf said in NetBeans vs UTF-8:

@TheCPUWizard WE DO NOT NEED THIS LOWER CASE STUFF. THAT LETS US USE JUST 6 BITS PER CHARACTER.

EBCDIC WAS GOOD ENOUGH FOR MY GRANDFATHER AND GOSH DARN ITS GOOD ENOUGH FOR ME!

-

@Vixen said in NetBeans vs UTF-8:

@dkf said in NetBeans vs UTF-8:

@TheCPUWizard WE DO NOT NEED THIS LOWER CASE STUFF. THAT LETS US USE JUST 6 BITS PER CHARACTER.

EBCDIC WAS GOOD ENOUGH FOR MY GRANDFATHER AND GOSH DARN ITS GOOD ENOUGH FOR ME!

Don't think, what is

'Z' - 'A'?

-

@PleegWat I'm sure there was a ~~good~~ ~~valid~~ some reason consecutive letters didn't occupy consecutive code points. I have no idea what the reason was, but I'm sure there was one.

-

@HardwareGeek The answer is '25, but only if your CPU is in BCD mode'. Which x86 doesn't have.

-

@PleegWat Actually, ignore that. It's 40 in binary, and junk in BCD mode because the first half of the byte is higher than 10 for letters.
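For anyone who wants to check that arithmetic without an EBCDIC machine at hand, here's a minimal sketch. It hard-codes the EBCDIC code points for the uppercase letters (A to I at 0xC1 to 0xC9, J to R at 0xD1 to 0xD9, S to Z at 0xE2 to 0xE9); the names `EBCDIC_A`, `EBCDIC_Z`, `ebcdic_z_minus_a` and `ebcdic_isupper` are made up for illustration.

```c
/* EBCDIC code points for the two ends of the uppercase alphabet. */
enum { EBCDIC_A = 0xC1, EBCDIC_Z = 0xE9 };

/* 'Z' - 'A' as an EBCDIC compiler would compute it: 40, not 25. */
int ebcdic_z_minus_a(void)
{
    return EBCDIC_Z - EBCDIC_A;
}

/* A single range check is not enough: the letters sit in three runs
   (A-I, J-R, S-Z) with non-letter code points in between. */
int ebcdic_isupper(unsigned char c)
{
    return (c >= 0xC1 && c <= 0xC9)
        || (c >= 0xD1 && c <= 0xD9)
        || (c >= 0xE2 && c <= 0xE9);
}
```

So a naive `c >= 'A' && c <= 'Z'` test accepts 15 code points that aren't letters at all.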

-

@PleegWat said in NetBeans vs UTF-8:

@PleegWat Actually, ignore that. It's 40 in binary, and junk in BCD mode because the first half of the byte is higher than 10 for letters.

see, you answered it yourself! :-)

also why are you doing math on CHARACTERS seriously! that's just asking for trouble!

-

#define tolower(c) ((c)>='A'&&(c)<='Z'?c+0x20:c)

-

@PleegWat said in NetBeans vs UTF-8:

#define tolower(c) ((c)>='A'&&(c)<='Z'?c+0x20:c)

C: Doing It Wrong since 1972.

-

#define tolower(c) (c | ' ')

-

Preprocessor directives are the root of all evil.

And both examples above illustrate it: `c` can be an expression, so you need to bracket it properly (half done in the first example, not at all in the second).
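A minimal sketch of how that bites, assuming ASCII. The two macros from the thread are copied under hypothetical names (`LOWER_HALF`, `LOWER_NONE`) so they don't clash with the real `tolower`, and `LOWER_FULL` is one way to bracket every use of the argument. The argument passed in is a conditional expression, which binds more loosely than `+` and `|`.

```c
/* First example from the thread: comparisons bracketed, results not. */
#define LOWER_HALF(c) ((c)>='A'&&(c)<='Z'?c+0x20:c)

/* Second example from the thread: no brackets around the argument. */
#define LOWER_NONE(c) (c | ' ')

/* Every use of the argument bracketed. */
#define LOWER_FULL(c) ((c) >= 'A' && (c) <= 'Z' ? (c) + 0x20 : (c))

/* With flag != 0 the argument evaluates to 'A'. In the two unbracketed
   macros the "+ 0x20" and "| ' '" attach to 'B' alone, so 'A' comes
   back unchanged. */
int lower_half(int flag) { return LOWER_HALF(flag ? 'A' : 'B'); }
int lower_none(int flag) { return LOWER_NONE(flag ? 'A' : 'B'); }
int lower_full(int flag) { return LOWER_FULL(flag ? 'A' : 'B'); }
```

Even the bracketed version still evaluates its argument more than once, which is one more reason to prefer a function when you can.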

-

@error look. It was ages since I wrote any macros. I don't remember all the fine details. I only know not to use them ever, and if that's not possible, to always define them in pairs where the first is just a dummy that invokes the second (and I already forgot why).

-

@Gąska They're not so important now we have `static inline` functions and compilers that can remove unused functions. But there are still things that you can't do that way, such as writing your own control constructs or using the name of something as well as its value.

The usual rule is that, for ordinary uses of an argument in a macro, put `(parens around it)` to prevent expression misinterpretation. Then, if you're not making an expression, place the whole lot in a `do {...} while (0)` to force it to be a statement and stop a different common class of misinterpretation. But using inline functions is better where possible.
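A rough sketch of the `do {...} while (0)` rule in action. `SWAP_INT`, `swap_int`, `order_pair` and `order_pair_inline` are hypothetical names, not from any real codebase.

```c
/* The wrapper makes the expansion a single statement. With plain braces,
   the ";" after the macro call would end the if, and the "else" in
   order_pair() would be a syntax error. */
#define SWAP_INT(a, b) \
    do { int swap_tmp_ = (a); (a) = (b); (b) = swap_tmp_; } while (0)

/* The inline-function equivalent, preferable when it is an option. */
static inline void swap_int(int *a, int *b)
{
    int tmp = *a;
    *a = *b;
    *b = tmp;
}

/* Put the smaller value first; returns 1 if a swap happened. */
int order_pair(int *lo, int *hi)
{
    int swapped = 1;
    if (*lo > *hi)
        SWAP_INT(*lo, *hi);
    else
        swapped = 0;
    return swapped;
}

/* Same thing with the function instead of the macro. */
int order_pair_inline(int *lo, int *hi)
{
    if (*lo > *hi) {
        swap_int(lo, hi);
        return 1;
    }
    return 0;
}
```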

-

@dkf said in NetBeans vs UTF-8:

now we have static inline functions

We've had these for ages. In fact modern C++ compilers pretty much completely ignore the `inline` keyword (outside of the one definition rule) and rely on their own assessment of whether inlining the function is beneficial.

The new cool kid on the block is `constexpr`, which guarantees zero runtime cost when the result is used in a constant expression.

-

@Atazhaia said in NetBeans vs UTF-8:

Also, the NetBeans console will correctly output UTF-8. Just not read it.

Is it possible that for some dumb reason the input is system-dependent?

-

@PleegWat said in NetBeans vs UTF-8:

@HardwareGeek The answer is '25, but only if your CPU is in BCD mode'. Which x86 doesn't have.

`sub`, `aas`?

-

@Gąska said in NetBeans vs UTF-8:

I don't remember all the fine details.

If that implies you ever knew, hats off.

I only know not to use them ever, and if that's not possible,

If only we had better meta-programming and such.

to always define them in pairs where the first is just a dummy that invokes the second (and I already forgot why).

Never heard of that advice. I assume it refers to the arcane rules that you need when stringizing something, i.e.

#define STRINGIZE_(x) #x
#define STRINGIZE(x) STRINGIZE_(x)

but you don't need to write these all that often.
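To show why the extra level matters, here's a small sketch. `BUFFER_SIZE`, `one_level` and `two_levels` are hypothetical names that exist only for this example.

```c
#define STRINGIZE_(x) #x
#define STRINGIZE(x) STRINGIZE_(x)

#define BUFFER_SIZE 64  /* hypothetical constant, only here to be stringized */

/* One level: # is applied before BUFFER_SIZE gets a chance to expand,
   so this yields "BUFFER_SIZE". */
const char *one_level(void) { return STRINGIZE_(BUFFER_SIZE); }

/* Two levels: the argument is expanded to 64 first, then stringized,
   so this yields "64". */
const char *two_levels(void) { return STRINGIZE(BUFFER_SIZE); }
```

The same trick is what makes `STRINGIZE(__LINE__)` give you the line number rather than the text `__LINE__`.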

-

@Deadfast said in NetBeans vs UTF-8:

@Atazhaia said in NetBeans vs UTF-8:

Also, the NetBeans console will correctly output UTF-8. Just not read it.

Is it possible that for some dumb reason the input is system-dependent?

Considering it was the same input error on Windows and Linux, I would say no.

-

@dkf said in NetBeans vs UTF-8:

@Gąska They're not so important now we have `static inline` functions and compilers that can remove unused functions. But there are still things that you can't do that way, such as writing your own control constructs or using the name of something as well as its value.

The usual rule is that, for ordinary uses of an argument in a macro, put `(parens around it)` to prevent expression misinterpretation. Then, if you're not making an expression, place the whole lot in a `do {...} while (0)` to force it to be a statement and stop a different common class of misinterpretation. But using inline functions is better where possible.

Yeah, I definitely prefer inline functions over function-like macros where possible nowadays. There's a bunch of cases though where you do have to use function-like macros:

- When you want to use `sizeof`, like in `memset(o, 0, sizeof *(o))`
- When you want to generate a compiler directive, like `__attribute__((format(printf,x,y)))`
- When you want to inject the current file name and/or line number, like `trace_malloc(s,__FILE__,__LINE__)`
- In rare cases, when you want to access variables without putting them in the argument list, like `argc > (n) ? argv[n] : NULL`
- When you want to access the raw string argument as well for debugging, like `fprintf(stderr, "%s(%d): %s=%d\n", __FILE__, __LINE__, #i, (i))`
- Probably more uses I'm forgetting right now

I have never had reason to make my own control structures, preferring to use callbacks. This is mostly because I haven't found a way to write a foreach-like macro without having to specify an annoying number of helper variables. I've been using callbacks instead, which have their own disadvantages.
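A sketch of three of the cases from the list above wrapped into macros. The macro names (`ZERO_OBJECT`, `DESCRIBE_INT`, `TRACE_INT`) and the `struct point` are hypothetical; only the macro bodies follow the snippets in the list.

```c
#include <stdio.h>
#include <string.h>

/* sizeof sees the caller's type, so one macro works for any object pointer. */
#define ZERO_OBJECT(o) memset((o), 0, sizeof *(o))

/* #i is the argument's source text; (i) is its value.
   buf must be a char array (not a pointer) for sizeof to give its size. */
#define DESCRIBE_INT(buf, i) \
    snprintf((buf), sizeof (buf), "%s=%d", #i, (i))

/* __FILE__ and __LINE__ expand where the macro is used,
   not where it is defined. */
#define TRACE_INT(i) \
    fprintf(stderr, "%s(%d): %s=%d\n", __FILE__, __LINE__, #i, (i))

struct point { int x; int y; };

/* Returns 0: both int members are cleared by ZERO_OBJECT. */
int zeroed_sum(void)
{
    struct point p = { 3, 4 };
    ZERO_OBJECT(&p);
    TRACE_INT(p.x);
    return p.x + p.y;
}
```

None of these can be turned into a function without losing what makes them useful: a function would see the size of a pointer, the value without its name, and its own line number instead of the caller's.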

-

@Gąska said in NetBeans vs UTF-8:

#define tolower(c) (c | ' ')

Your heart and soul are black, and you will be damned to an eternal hell of forever debugging twisted, badly written recursive macros and deciphering obscure mojibake from exotic character encodings.

-

@Gąska said in NetBeans vs UTF-8:

#define tolower(c) (c | ' ')

printf( "lowercased newline: %c\n", tolower('\n'));
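For the curious, a sketch of what that actually produces, assuming ASCII. The macro is renamed to the hypothetical `OR_SPACE` (and bracketed) so it doesn't collide with the real `tolower`.

```c
/* Sets bit 0x20 unconditionally, whether or not the input is a letter. */
#define OR_SPACE(c) ((c) | ' ')

int or_space(int c)
{
    return OR_SPACE(c);
}
```

In ASCII, `or_space('A')` is `'a'` as intended, but `or_space('\n')` is 0x0A | 0x20 = 0x2A, which is `'*'`, and `or_space('@')` comes out as a backtick.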

-

@PleegWat bad user input isn't my problem

-

Use Java, they said. Java uses Unicode, out of the box, it just works, they said.

-

@PleegWat said in NetBeans vs UTF-8:

- When you want to use `sizeof`, like in `memset(o, 0, sizeof *(o))`

One day, you'll have to write code on an AS/400, today called "IBM i". You will abruptly learn a painful lesson about portability.

On AS/400, you see, a NULL pointer is not the all-zeroes bitpattern(1), so if `o` points to a structure that contains pointers, the `memset` will not set them to NULL.

(1) More to the point, the all-zeroes bitpattern is not a NULL pointer.
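One portable alternative, as a sketch rather than anything AS/400-specific: let the compiler build a correctly initialized object and copy that, instead of writing zero bytes. `struct node`, `NODE_ZERO` and `node_reset` are hypothetical names.

```c
#include <stddef.h>

struct node {
    struct node *next;
    double weight;
    int id;
};

/* The 0 initializes next as a null pointer constant; the members without
   an explicit initializer are initialized as objects with static storage
   would be: pointers to null, doubles to 0.0, ints to 0, whatever bit
   patterns the platform uses for those values. */
static const struct node NODE_ZERO = { 0 };

void node_reset(struct node *n)
{
    *n = NODE_ZERO;  /* structure assignment copies real null pointers */
}
```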

-

@LaoC said in NetBeans vs UTF-8:

Use Java, they said. Java uses Unicode, out of the box, it just works, they said.

No, I'm pretty sure you got that wrong. The saying goes: You have a problem. You decide to solve it using Java. Now you have a `ProblemFactory`.

-

@Steve_The_Cynic That's up there with making `char *` and `void *` different sizes in terms of awful vendor skulduggery. (Thanks a lot, Cray.)

-

@dkf said in NetBeans vs UTF-8:

@Steve_The_Cynic That's up there with making `char *` and `void *` different sizes in terms of awful vendor skulduggery. (Thanks a lot, Cray.)

I assume `sizeof char* <= sizeof void*`? I can't imagine how the other way around could be conforming.

-

@Steve_The_Cynic said in NetBeans vs UTF-8:

so if `o` points to a structure that contains pointers, the `memset` will not set them to NULL.

Is there any architecture where a zero bit pattern for float/double is a signaling NaN?

-

@topspin said in NetBeans vs UTF-8:

@dkf said in NetBeans vs UTF-8:

@Steve_The_Cynic That's up there with making `char *` and `void *` different sizes in terms of awful vendor skulduggery. (Thanks a lot, Cray.)

I assume `sizeof char* <= sizeof void*`? I can't imagine how the other way around could be conforming.

When addresses are at more-than-byte granularity, char pointers need to contain the byte offset in addition to the address itself.

-

@topspin said in NetBeans vs UTF-8:

@Steve_The_Cynic said in NetBeans vs UTF-8:

so if `o` points to a structure that contains pointers, the `memset` will not set them to NULL.

Is there any architecture where a zero bit pattern for float/double is a signaling NaN?

Floating point bit patterns are part of the standard.

@Gąska said in NetBeans vs UTF-8:

@topspin said in NetBeans vs UTF-8:

@dkf said in NetBeans vs UTF-8:

@Steve_The_Cynic That's up there with making `char *` and `void *` different sizes in terms of awful vendor skulduggery. (Thanks a lot, Cray.)

I assume `sizeof char* <= sizeof void*`? I can't imagine how the other way around could be conforming.

When addresses are at more-than-byte granularity, char pointers need to contain the byte offset in addition to the address itself.

The C standard requires (IIRC) that any typed pointer can be cast to a `void` pointer and then back to the original type, and the result will be the original pointer.

-

@Gąska said in NetBeans vs UTF-8:

@topspin said in NetBeans vs UTF-8:

@dkf said in NetBeans vs UTF-8:

@Steve_The_Cynic That's up there with making `char *` and `void *` different sizes in terms of awful vendor skulduggery. (Thanks a lot, Cray.)

I assume `sizeof char* <= sizeof void*`? I can't imagine how the other way around could be conforming.

When addresses are at more-than-byte granularity, char pointers need to contain the byte offset in addition to the address itself.

You need to be able to round-trip through void*.

-

@PleegWat said in NetBeans vs UTF-8:

@topspin said in NetBeans vs UTF-8:

@Steve_The_Cynic said in NetBeans vs UTF-8:

so if `o` points to a structure that contains pointers, the `memset` will not set them to NULL.

Is there any architecture where a zero bit pattern for float/double is a signaling NaN?

Floating point bit patterns are part of the standard.

Are you sure?

I thought not even two's complement integers are part of the standard, much less a requirement for IEEE-754.

-

@topspin said in NetBeans vs UTF-8:

@Gąska said in NetBeans vs UTF-8:

@topspin said in NetBeans vs UTF-8:

@dkf said in NetBeans vs UTF-8:

@Steve_The_Cynic That's up there with making `char *` and `void *` different sizes in terms of awful vendor skulduggery. (Thanks a lot, Cray.)

I assume `sizeof char* <= sizeof void*`? I can't imagine how the other way around could be conforming.

When addresses are at more-than-byte granularity, char pointers need to contain the byte offset in addition to the address itself.

You need to be able to round-trip through void*.

Are you sure? C++ only requires round-trip to char* - is C different?

-

@Gąska said in NetBeans vs UTF-8:

@topspin said in NetBeans vs UTF-8:

@Gąska said in NetBeans vs UTF-8:

@topspin said in NetBeans vs UTF-8:

@dkf said in NetBeans vs UTF-8:

@Steve_The_Cynic That's up there with making `char *` and `void *` different sizes in terms of awful vendor skulduggery. (Thanks a lot, Cray.)

I assume `sizeof char* <= sizeof void*`? I can't imagine how the other way around could be conforming.

When addresses are at more-than-byte granularity, char pointers need to contain the byte offset in addition to the address itself.

You need to be able to round-trip through void*.

Are you sure? C++ only requires round-trip to char* - is C different?

Well, I was before you questioned it, now I'm not. But what's the point of having a void* that doesn't round trip? You can't use it for anything (memcpy etc) if it can't address everything.

-

@topspin I looked up the C18 standard. There are way more guarantees than I thought. All object pointers (pointers to primitives, pointers to structures and unions, and pointers to void, and whatever I forgot about) can round-trip with each other, as long as the intermediate pointer is correctly aligned for its type (going through `void *` always works). All function pointers can round-trip with each other, too. Object pointers aren't guaranteed to round-trip with function pointers, though. But all null pointers, both object and function, are guaranteed to be equal to each other. And 0 cast to pointer is guaranteed to be null pointer, though it doesn't exactly say what the byte representation should be.

Now I'm digging through C90 to see if it was different back then, or if Cray was simply non-compliant.
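A tiny sketch of two of those guarantees, for reference. The function names are made up for illustration.

```c
#include <stddef.h>

/* Object pointer -> void * -> the same object pointer type:
   the result compares equal to the original pointer. */
int roundtrip_int(void)
{
    int value = 42;
    int *before = &value;
    void *generic = before;
    int *after = generic;  /* C converts back from void * implicitly */
    return after == before && *after == 42;
}

/* Null pointers of different object types compare equal once converted
   to a common type, and a null pointer compares equal to constant 0. */
int nulls_equal(void)
{
    char *cp = NULL;
    double *dp = NULL;
    return (void *)cp == (void *)dp && cp == 0;
}
```

Note that neither guarantee says anything about the bytes inside the pointer, which is how a platform can have a non-zero null pointer and still conform.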

-

@topspin said in NetBeans vs UTF-8:

@dkf said in NetBeans vs UTF-8:

That's up there with making `char *` and `void *` different sizes in terms of awful vendor skulduggery. (Thanks a lot, Cray.)

I assume `sizeof char* <= sizeof void*`? I can't imagine how the other way around could be conforming.

It was exactly the other way round, as internally that platform implemented `char *` as a struct containing a pointer (to machine word) and an offset within the word. You get that on platforms where the address space is structured right (uncommon now). It was absolutely ghastly.

The code in question no longer supports that platform. I'm OK with that.
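To make the idea concrete, here's a purely illustrative sketch of a pointer-plus-offset character pointer. It is not Cray's actual layout; the 64-bit word size, the byte order within the word, and all names (`fat_char_ptr`, `fat_read`, `fat_next`) are assumptions.

```c
#include <stdint.h>

/* Hypothetical "fat" character pointer for a word-addressed machine:
   the hardware address names a whole 64-bit word, so selecting a byte
   needs extra state. */
struct fat_char_ptr {
    const uint64_t *word;  /* address of the containing word */
    unsigned offset;       /* byte index within the word, 0..7 */
};

/* Read the byte; offset 0 is the least significant byte here
   (an arbitrary choice for this sketch). */
unsigned char fat_read(struct fat_char_ptr p)
{
    return (unsigned char)(*p.word >> (8 * p.offset));
}

/* Advance by one byte, carrying into the word address when needed. */
struct fat_char_ptr fat_next(struct fat_char_ptr p)
{
    if (++p.offset == 8) {
        p.offset = 0;
        ++p.word;
    }
    return p;
}
```

A pointer to anything word-aligned only needs the `word` member, which is how `char *` ends up bigger than the other pointer types on such a platform.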