Dawn: the SUBLEQ OS by Geri

-

@Kian said in Dawn: the SUBLEQ OS by Geri:

Maybe if you just went with "I made a toy to have fun with" and not "I am David fighting Goliath" you would receive more of a welcome and less of a roasting.

This is the second (at least) time this sentiment was expressed. Perhaps in the future a new marketing campaign can be devised to address these issues.

-

@wger4 said in Dawn: the SUBLEQ OS by Geri:

There are no native hardware capable of running Dawn, but there are experimental SUBLEQ cpu-s exist.

Ah. But...

Oh, you're talking about things like the hardware Mouse Layer thing. Got it.

@wger4 said in Dawn: the SUBLEQ OS by Geri:

Some of them are other OS developers, and experimenting with Dawn as a guest OS or GUI for they (tipically unix-like os).

Other people are modeling their GUI after this???

@wger4 said in Dawn: the SUBLEQ OS by Geri:

Some guy used it to pre-install on computers he sold (quick boot and prooving they work, i guess)

Wait, you literally just said no hardware exists to run DawnOS, but now you claim someone is installing it on consumer hardware???

@wger4 said in Dawn: the SUBLEQ OS by Geri:

There are a few who just like to play the built in chess, and they use to os for this sole purpose.

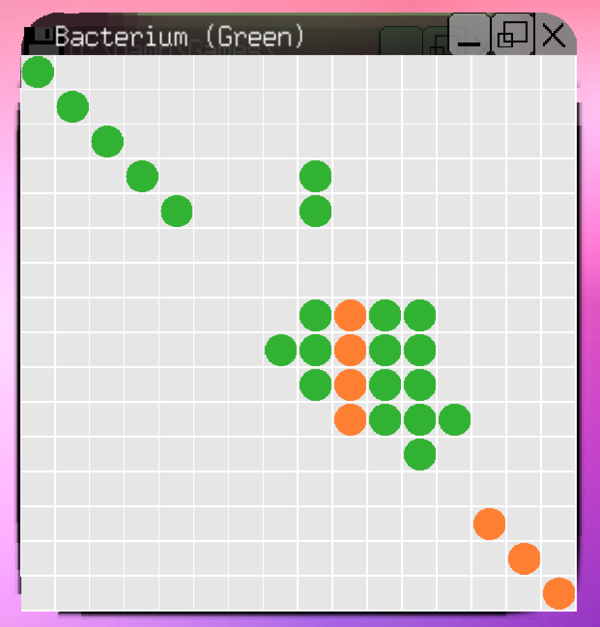

They must be running it on $300k server hardware, I've been playing "Bacterium" since I mentioned I was downloading, and still haven't gotten very far in the game:

@wger4 said in Dawn: the SUBLEQ OS by Geri:

(And this is why Start menu is a wheel, and actually not called Start).

Actually, I believe it's a cog.

-

@topspin said in Dawn: the SUBLEQ OS by Geri:

Don't get me wrong, I too think it's impressive from the "amount of work put into it" angle. And it's a toy OS, so there's no need to justify its existence, or justify lack of features like security.

Which is why I'm still tinkering on my IoT temperature sensor thing still. I didn't see any plug-n-play images that did all the configuring for you in an easy-to-use package, and so I'm writing up my own.

@topspin said in Dawn: the SUBLEQ OS by Geri:

But what I don't see addressed: Why is this tightly coupled to this "SUBLEQ" architecture?

You might as well develop the OS on a normal architecture, and it'd still be a fun toy to tinker with. On the flip side, if you have a C compiler for your SUBLEQ architecture, you only need to port a kernel for it and just compile some existing OS's userland to your architecture.

See: TempleOS

-

@wger4 said in Dawn: the SUBLEQ OS by Geri:

Porting the emulator is magnitudes easyer and less time consuming than porting and releasing the whole os image to other architectures.

So you trade off developer CPU cycles for consumer CPU cycles, got it.

@wger4 said in Dawn: the SUBLEQ OS by Geri:

When i decided to use SUBLEQ, i choosen it because it was the most popular URISC instruction(set), and it was also human-friendly so its relatively easy to work with.

Popular according to who? I as a developer have never heard of this, and I know about things like PowerPC and MIPS.

@wger4 said in Dawn: the SUBLEQ OS by Geri:

ARM have no unified io so every manufacturers every processor would need a different boot image while most of them is undocumented proprietary hardware

But (if I'm understanding this correctly) if you claim to be an ARM processor you necessarily follow a given instruction set. Therefore, the hardware implementation's lack of documentation is virtually

-

@wger4 said in Dawn: the SUBLEQ OS by Geri:

currently i cant even yet put it into context.

But you could do so for SUBLEQ. Got it.

-

@Groaner said in Dawn: the SUBLEQ OS by Geri:

I see this attitude sometimes in response to indie games. The huge amount of free-to-play content has conditioned a fair number of consumers into expecting AAA quality out of everything they see and getting it for free, as if you need a loss-leading $100 million budget to even capture their attention.

Yeah...

We're up a swiftly flowing creek with our "game"...

-

@Zerosquare said in Dawn: the SUBLEQ OS by Geri:

My favorite Microchip errata was "the core randomly skips instructions if temperature is below xx °C" (with probability inversely proportional to temperature). I'm glad I never used that part!

Sounds like a feature! We'll call it... Shivering!

-

@strangeways Oh, welcome back, lurker? Where the fuck are y'all coming from???

-

@Tsaukpaetra said in Dawn: the SUBLEQ OS by Geri:

If all the cores are turn off, who the running does instruction pointer to be getting of?

You can have special hardware for that. It'd be slightly odd, but would work.

-

@Tsaukpaetra said in Dawn: the SUBLEQ OS by Geri:

But (if I'm understanding this correctly) if you claim to be an ARM processor you necessarily follow a given instruction set. Therefore, the hardware implementation's lack of documentation is virtually

Most hardware is under-documented, but the ARM cores are pretty well documented if you're a licensee (and the ISA is well documented). It's all the other bits and pieces that are bolted on the outside (and usually memory-mapped) that are often weird as hell.

-

@Cursorkeys said in Dawn: the SUBLEQ OS by Geri:

Thinking about it a bit, this architecture might be very cool as an FPGA soft-core. Especially for safety-critical stuff.

And then you could JIT¹-compile x86 to SUBLEQ

¹ In keeping with the slightly reduced performance and the Manga theme, I propose to call it the JailbaIT™ compiler

-

@dkf said in Dawn: the SUBLEQ OS by Geri:

Also, you get some… “interesting”… systems if you have CPU cores that have no protection levels, but where you've got plenty of CPU cores that are physically separated from each other and can only communicate via message passing. Those systems are very much not like normal OSs.

GA144, anyone?

(if only I had a fun application...)

-

@Tsaukpaetra said in Dawn: the SUBLEQ OS by Geri:

@wger4 said in Dawn: the SUBLEQ OS by Geri:

@Jaloopa It runs chess and snake.

Sounds kinky, downloading!

Alright, so let's see what this is all about. Holy crap I've been playing a game for an hour and a half and haven't lost yet (but that's mostly because it's been so slow there've been only about forty moves made so far).

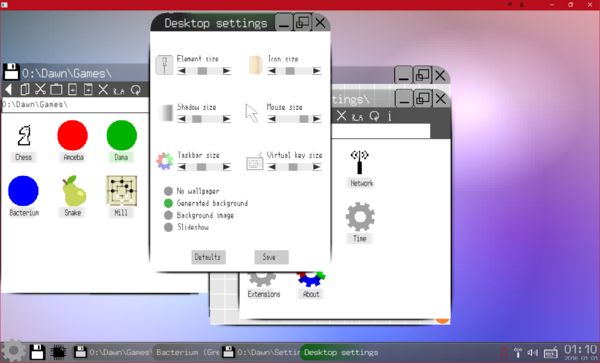

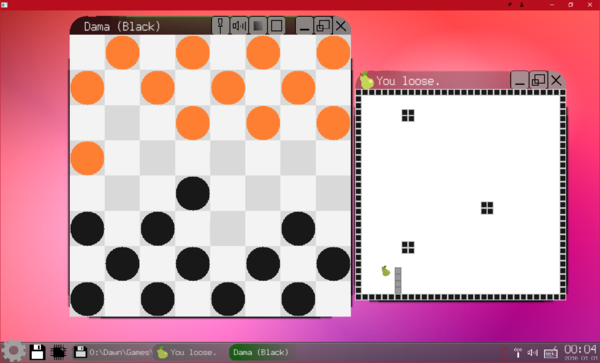

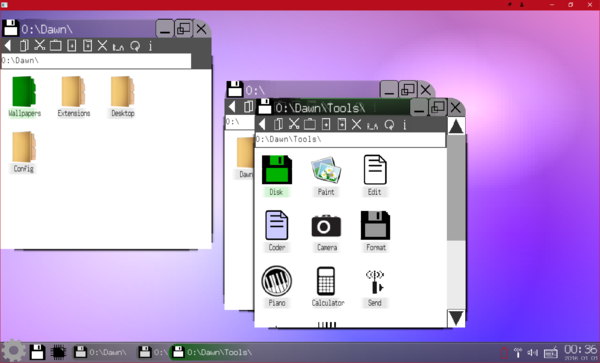

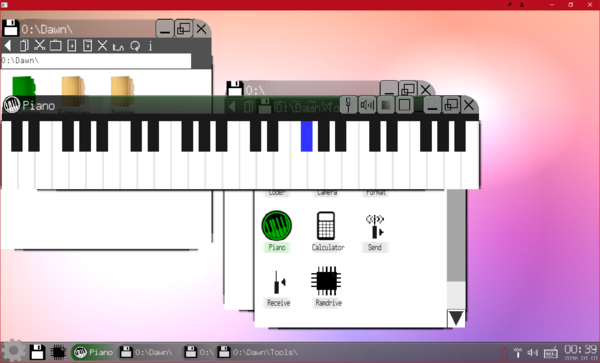

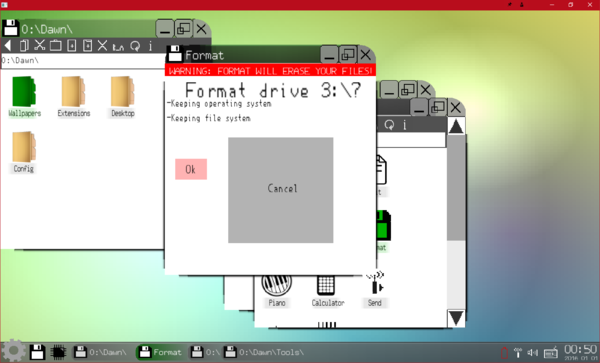

Well, how about some screenshots?

First off, I'm getting extremely strong vibes reminding me of my own implementation of (what I named) Windows TI that I made two decades ago on my TI-83+ in the calculator's BASIC language. The performance is on par, and the rendering of the window chrome is uncannily similar.

This actually extends to how window contents can escape the window, for example application icons (most noticeable) and the bottom corners where the window was obviously supposed to have rounded corners as well, but the application drew as if it expected square.

Discoverability is incredibly low. I'm a developer of shitty UIs and even I couldn't figure out certain functions. For example, take the Checkers clone:

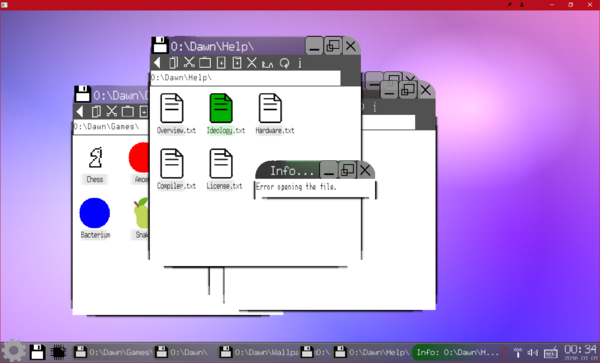

Apparently buttons can be instantiated in an app's titlebar, but the lack of a way to discover their function mandates experimentation, and god help the poor sod who clicked the empty square icon! Tooltips could be super helpful! The few files in the

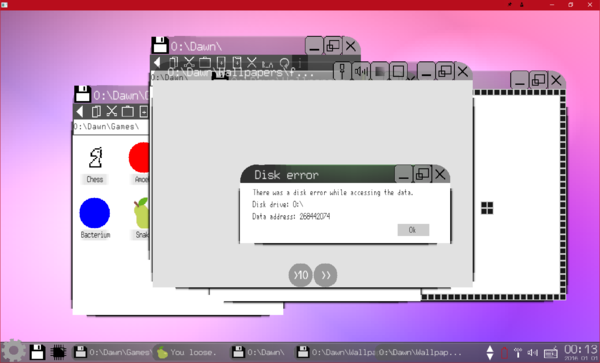

0:\Dawn\Helpfolder are 100 percent unhelpful in unraveling this mystery.Apparently the image file I got from the "Latest Downloads" area is corrupted somehow, I was trying to load up the sample wallpapers and got stumped when the built-in bitmap viewer broke:

Not sure how one encounters a disk error on a virtual disk, but there you go.

Side note, I realize that all the graphics drawing has probably been hand-rolled, but holy crap is this astounding! Still images cannot justify the glorious screen-tearing you will witness on emulated hardware!

At least multitasking does appear to be working.

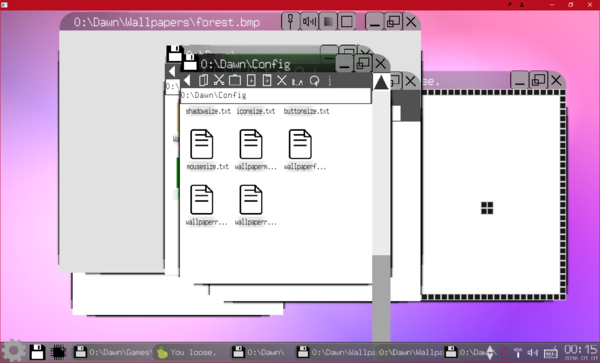

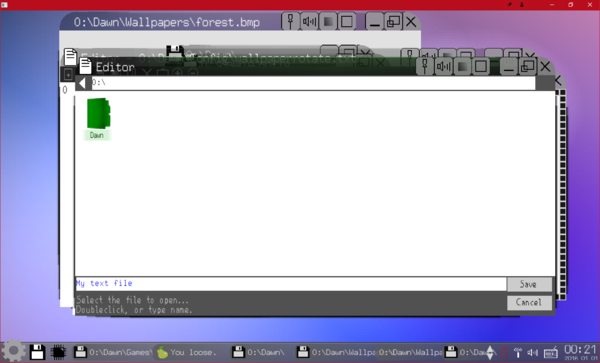

Gotta appreciate simplicity. It doesn't auto-add the .txt to the name, so this file is not openable by default after I clicked Save.

Side note: I find it hilarious that the icon for the storage media is a floppy disk. Gotta love them 640MB floppies!

I'm really questioning the "Battery, and power-saving features" feature. What are they? So far, even at "idle" the emulator is consistently maxed out. I'm assuming there's no such thing as NOP in the instructions, or any other "I'm not doing anything, save power, CPU!" instruction.

I found it intriguing that there are apparently no context menus (or menus at all, apart from the not-named-Start Menu)

Getting info on files is apparently impossible...

I find it impossibly hostile to hide folders from users with no way to show them.

Yes, emulator apparently supports sound, but don't expect anything of it...

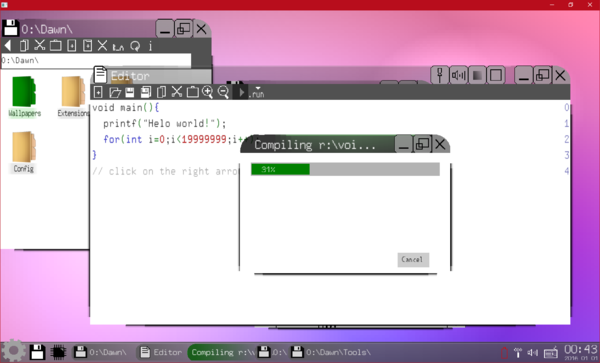

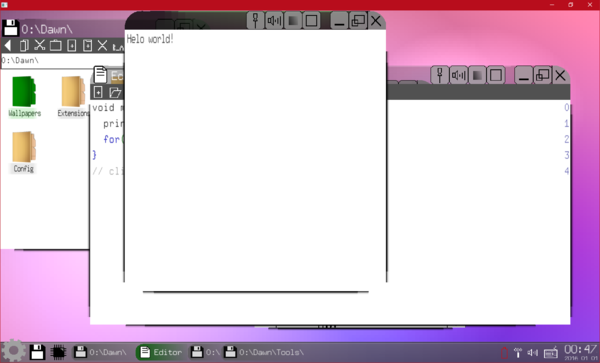

Oh how fascinating! It comes with a Helo world program!

Which is really interesting because thus far I have not found any kind of console-like program, I wonder what printing will do? Now as to why compiling a program as simple as this takes any amount of time... well, apparently I'm not running the emulator on $300k server hardware, so sue me. Anyways, the result:

I'm pleasantly unsurprised. Of course, the window did not stay for long. Counting from 0 to 19999999 still takes only a second or so, even on emulated hardware that can only subtract and jump if the result is less than or equal to zero.
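For anyone wondering what "can only subtract and jump" looks like in practice, here is a minimal sketch of a SUBLEQ interpreter running a counting loop. This is not Dawn's emulator or its compiler output; `run_subleq`, `count_to`, the memory layout, and the "negative jump target means halt" convention are all assumptions made up for illustration.

```python
def run_subleq(mem, pc=0, max_steps=1_000_000):
    """Execute SUBLEQ instructions in place and return the number of steps run.

    Each instruction is three cells (a, b, c): mem[b] -= mem[a], then jump
    to c if the result is <= 0, otherwise fall through to the next triple.
    Assumption: a negative program counter halts the machine.
    """
    steps = 0
    while pc >= 0 and steps < max_steps:
        a, b, c = mem[pc], mem[pc + 1], mem[pc + 2]
        mem[b] -= mem[a]
        pc = c if mem[b] <= 0 else pc + 3
        steps += 1
    return steps


def count_to(n):
    """Count from 0 up to n (n >= 1) using only SUBLEQ; return (counter, steps)."""
    if n < 1:
        raise ValueError("n must be at least 1")
    # Hypothetical data addresses, placed right after the three instructions.
    NEG1, ONE, Z, COUNTER, REMAINING = 9, 10, 11, 12, 13
    mem = [
        NEG1, COUNTER, 3,    # 0: counter -= -1 (i.e. counter += 1), continue at 3
        ONE, REMAINING, -1,  # 3: remaining -= 1, halt once it reaches 0
        Z, Z, 0,             # 6: Z -= Z gives 0, so always jump back to 0
        -1, 1, 0, 0, n,      # 9..13: NEG1, ONE, Z, COUNTER, REMAINING
    ]
    steps = run_subleq(mem, max_steps=3 * n)
    return mem[COUNTER], steps
```

With this layout, `count_to(5)` returns `(5, 14)`: three instructions per pass through the loop, minus the jump that the final pass never takes. Even a trivial increment costs several memory reads and writes per step, which is the developer-cycles-for-consumer-cycles trade mentioned earlier in the thread.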

Let's try to format a disk that doesn't exist...

Surprisingly, no error.

Overall, I'm marginally impressed. Couldn't test out any of the networking functionality (what the fuck with it anyways).

If I were confident they had $300k server hardware to try it on, I might recommend OSFirstTimer try this... But I'm not so I won't.

-

@Tsaukpaetra All those issues pale into insignificance compared to

-

@coldandtired said in Dawn: the SUBLEQ OS by Geri:

@Tsaukpaetra All those issues pale into insignificance compared to

I intentionally did not draw attention to that while ensuring it was there for a while.

-

Goddamnit @Tsaukpaetra

-

@PleegWat said in Dawn: the SUBLEQ OS by Geri:

Goddamnit @Tsaukpaetra

What? Is two weeks necro too short? 😉

-

@LaoC said in Dawn: the SUBLEQ OS by Geri:

GA144, anyone?

Nice. We're possibly going to be putting more cores on our next chip (and they'll be programmed in C) but that's still not taped out. We've got previews of some of it that we can run on FPGA, but those are very much not the same thing as the final product. There are other high-core-count processors out there, but right now there's nothing shipping that's generally-programmable; everything released right now has massive restrictions of one kind or another.

-

@Tsaukpaetra said in Dawn: the SUBLEQ OS by Geri:

@PleegWat said in Dawn: the SUBLEQ OS by Geri:

Goddamnit @Tsaukpaetra

What? Is two weeks necro too short? 😉

-

@dkf: I'm intrigued. What kind of chips is your company making? Internal use only or sold to others? Application-specific or general-purpose?

-

@Zerosquare said in Dawn: the SUBLEQ OS by Geri:

@dkf: I'm intrigued. What kind of chips is your company making? Internal use only or sold to others? Application-specific or general-purpose?

IIRC it was brains.

-

@Applied-Mediocrity said in Dawn: the SUBLEQ OS by Geri:

@Tsaukpaetra said in Dawn: the SUBLEQ OS by Geri:

@PleegWat said in Dawn: the SUBLEQ OS by Geri:

Goddamnit @Tsaukpaetra

What? Is two weeks necro too short? 😉

I'm not sure, but I think it is grass.

-

@Tsaukpaetra

A wall of posts, actually. Once again I find that my comedy genius remains misunderstood by this world, smh.

-

@Applied-Mediocrity said in Dawn: the SUBLEQ OS by Geri:

@Tsaukpaetra

A wall of posts, actually. Once again I find that my comedy genius remains misunderstood by this world, smh.

Oh, ha ha...

Be glad I didn't make it a massive one-post. That really fucks with the post Loading code of NodeBB....

-

@Zerosquare said in Dawn: the SUBLEQ OS by Geri:

What kind of chips is your company making?

WTF-U makes (well, we get a silicon foundry to do the actual manufacturing for us because we're a university, damnit!) processors for doing simulations of neural processing. In particular, we have a system for simulating neurons, lots and lots of neurons. Many millions, with billions of synapses. This is done with these processors which, in the current generation, pack 18 low-power CPU cores onto one chip plus a bunch of memory and some highly funky comms hardware that allow us to send multicast messages across the whole machine very cheaply (those messages correspond to synapses firing).

Our next-gen system will pack around 10 times as many user cores in per chip. (I'm not sure if we've finalized that number yet.) Those cores will be more powerful in many ways (some of which I can't yet talk about until we've patented some bits and published papers about others) and yet the power-budget per chip will be about identical.

We're aware of other competitors in this space. Like Intel. We see the technical restrictions on their hardware and know that what we're doing will be massively more friendly for user code. (There are other academic competitors too, ranging from one group using ordinary supercomputers to another trying to do everything in analog hardware. The latter group are total, awesome madmen. And their approach has the same problem that Intel's Loihi has: they've locked in the computational model of the neurons and synapses to something very simple and unrealistic. We know that the neuroscientists want the complex models as some features of intense interest, e.g., deep sleep, only exhibit themselves in the complex models.)

I have a ton more detail I can go into. But I think I'll leave it at that for now.

-

@Applied-Mediocrity said in Dawn: the SUBLEQ OS by Geri:

A wall of posts, actually.

It looked like a pale, and I wondered if you were beyond it.

-

@dkf said in Dawn: the SUBLEQ OS by Geri:

@Zerosquare said in Dawn: the SUBLEQ OS by Geri:

What kind of chips is your company making?

WTF-U makes (well, we get a silicon foundry to do the actual manufacturing for us because we're a university, damnit!) processors for doing simulations of neural processing. In particular, we have a system for simulating neurons, lots and lots of neurons. Many millions, with billions of synapses. This is done with these processors which, in the current generation, pack 18 low-power CPU cores onto one chip plus a bunch of memory and some highly funky comms hardware that allow us to send multicast messages across the whole machine very cheaply (those messages correspond to synapses firing).

Our next-gen system will pack around 10 times as many user cores in per chip. (I'm not sure if we've finalized that number yet.) Those cores will be more powerful in many ways (some of which I can't yet talk about until we've patented some bits and published papers about others) and yet the power-budget per chip will be about identical.

We're aware of other competitors in this space. Like Intel. We see the technical restrictions on their hardware and know that what we're doing will be massively more friendly for user code. (There are other academic competitors too, ranging from one group using ordinary supercomputers to another trying to do everything in analog hardware. The latter group are total, awesome madmen. And their approach has the same problem that Intel's Loihi has: they've locked in the computational model of the neurons and synapses to something very simple and unrealistic. We know that the neuroscientists want the complex models as some features of intense interest, e.g., deep sleep, only exhibit themselves in the complex models.)

I have a ton more detail I can go into. But I think I'll leave it at that for now.

Analogue computers are extremely fascinating, though. Still totally insane on a large scale.

-

@dkf

There's a 50% chance I might have been beyond it.

-

@admiral_p said in Dawn: the SUBLEQ OS by Geri:

Analogue computers are extremely fascinating, though. Still totally insane on a large scale.

They're pretty crazy on a small scale; reproducibility is super-difficult due to the tolerances on everything never being tight enough. And all that thermal variation too. And that's before thinking about scaling it up.

The loony mofos are also talking about using memristors. Which would be neat if they worked and gave consistent results. As one of my bosses likes to say, we've got the hardware that could simulate all that stuff and give them consistent enough results to decide how they could build a large system with it…

-

Aaaand I forgot to answer these other questions.

@Zerosquare said in Dawn: the SUBLEQ OS by Geri:

Internal use only or sold to others?

We do sell some to others, but not that many (and most of those are in boards; we have both 4-chip and 48-chip boards, and the rules for sales of them both are different). The cost of a chip will depend very much on what ARM licenses you hold, and whether you're an academic (or related) organisation. I don't do anything with that side of things.

Application-specific or general-purpose?

Yes.

Most of the hardware is general-purpose, but the comms hardware is more application-specific (it's been tuned for multicasting very small messages) and our supporting software stack is very application-specific.

-

@dkf said in Dawn: the SUBLEQ OS by Geri:

It looked like a pale, and I wondered if you were beyond it.

See I thought it was going to be something like "moved the goalposts".

Basically that's a shitty image.

-

@dkf: fascinating stuff

-

Well. Without really wanting to necro a four-year-old thread, but equally without really knowing where else to put it, here goes...

I was just looking at today's Discovery Queue on Steam, and this popped up: https://store.steampowered.com/app/2124440/SIC1/

I'll let y'all decide what to make of it.

-

@Steve_The_Cynic said in Dawn: the SUBLEQ OS by Geri:

what to make of it.

A little surprised someone took it seriously enough to make an implementation for Steam.

-

@Tsaukpaetra said in Dawn: the SUBLEQ OS by Geri:

@Steve_The_Cynic said in Dawn: the SUBLEQ OS by Geri:

what to make of it.

A little surprised someone took it seriously enough to make an implementation for Steam.

Uh... Steam has a client for SUBLEQ now?

-

@admiral_p said in Dawn: the SUBLEQ OS by Geri:

@dkf said in Dawn: the SUBLEQ OS by Geri:

@Zerosquare said in Dawn: the SUBLEQ OS by Geri:

What kind of chips is your company making?

WTF-U makes (well, we get a silicon foundry to do the actual manufacturing for us because we're a university, damnit!) processors for doing simulations of neural processing. In particular, we have a system for simulating neurons, lots and lots of neurons. Many millions, with billions of synapses. This is done with these processors which, in the current generation, pack 18 low-power CPU cores onto one chip plus a bunch of memory and some highly funky comms hardware that allow us to send multicast messages across the whole machine very cheaply (those messages correspond to synapses firing).

Our next-gen system will pack around 10 times as many user cores in per chip. (I'm not sure if we've finalized that number yet.) Those cores will be more powerful in many ways (some of which I can't yet talk about until we've patented some bits and published papers about others) and yet the power-budget per chip will be about identical.

We're aware of other competitors in this space. Like Intel. We see the technical restrictions on their hardware and know that what we're doing will be massively more friendly for user code. (There are other academic competitors too, ranging from one group using ordinary supercomputers to another trying to do everything in analog hardware. The latter group are total, awesome madmen. And their approach has the same problem that Intel's Loihi has: they've locked in the computational model of the neurons and synapses to something very simple and unrealistic. We know that the neuroscientists want the complex models as some features of intense interest, e.g., deep sleep, only exhibit themselves in the complex models.)

I have a ton more detail I can go into. But I think I'll leave it at that for now.

Analogue computers are extremely fascinating, though. Still totally insane on a large scale.

They need to be perfect, is a slight problem.

-

@Gribnit No, because it turns out you don't need to care about the exact voltage levels. What matters most are the decay constants, and you can tune those. It's difficult because you have to adjust the tuning as the temperature of the device varies and every instance has a slightly different thermal profile, but it is possible. Horrid to scale up, and energy hungry in practice, but possible.
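To put a number on why the decay constants are the thing to tune, here is a rough sketch. It assumes a plain leaky integrator where a stored value decays as exp(-t/tau); that model is an assumption for illustration only, since the post doesn't say which neuron model the analog hardware uses. The function names and the 10% drift figure are likewise hypothetical.

```python
import math


def remaining_fraction(t_ms, tau_ms):
    """Fraction of an initial value left after t_ms, for decay constant tau_ms.

    Assumes simple exponential decay: exp(-t / tau).
    """
    return math.exp(-t_ms / tau_ms)


def relative_error_from_tau_drift(t_ms, tau_ms, drift):
    """Relative error in the remaining value if tau is off by `drift` (0.1 = +10%)."""
    nominal = remaining_fraction(t_ms, tau_ms)
    drifted = remaining_fraction(t_ms, tau_ms * (1.0 + drift))
    return (drifted - nominal) / nominal


if __name__ == "__main__":
    # Hypothetical numbers: tau = 20 ms, drifting 10% high as the device warms up.
    for t in (20, 60, 100):
        err = relative_error_from_tau_drift(t, 20.0, 0.10)
        print(f"t = {t:3d} ms: value is off by {err:.1%}")
```

Under this assumed model the error from a mistuned tau keeps growing the longer the value is left to decay, which fits the point that the tuning has to follow the temperature rather than being set once.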

There is a reason why we think those guys are a bunch of madmen.

-

@Tsaukpaetra said in Dawn: the SUBLEQ OS by Geri:

A little surprised someone took it seriously enough to make an implementation for Steam.

I'm surprised that you're surprised. It seems to me that much of the stuff on Steam and elsewhere had started with such an idea that then never went anywhere.

-

@Applied-Mediocrity said in Dawn: the SUBLEQ OS by Geri:

@Tsaukpaetra said in Dawn: the SUBLEQ OS by Geri:

A little surprised someone took it seriously enough to make an implementation for Steam.

I'm surprised that you're surprised. It seems to me that much of the stuff on Steam and elsewhere had started with such an idea that then never went anywhere.

Except that most of that stuff(1) wasn't based on inherently misguided ideas in impractical computer non-science.

(1) I've looked at so much junk in the Discovery Queue on Steam that I'm currently scraping the bottom of the barrel. No. The barrel below that, or in fact about 137 barrels deep.

-

@dkf said in Dawn: the SUBLEQ OS by Geri:

@Gribnit No, because it turns out you don't need to care about the exact voltage levels. What matters most are the decay constants, and you can tune those. It's difficult because you have to adjust the tuning as the temperature of the device varies and every instance has a slightly different thermal profile, but it is possible. Horrid to scale up, and energy hungry in practice, but possible.

There is a reason why we think those guys are a bunch of madmen.

Sounds like a problem that goes away with an optics vs electronics approach, then? What about the equivalent of "resonant yaw"?

-

@Gribnit said in Dawn: the SUBLEQ OS by Geri:

Sounds like a problem that goes away with an optics vs electronics approach, then?

No, because combining optics and normal silicon is a complete pain in the ass when it comes to manufacturing. I don't know the details, but I do know my boss pulls a "Nope! Nope! Nope!" face every time it is suggested and says that something else should be tried.

-

@dkf Yeah, silicon doesn't really do optics. Gallium arsenide and similar semiconductors can absorb and emit photons when electrons jump across the energy gap between the valence and conduction bands. Silicon is an indirect band-gap semiconductor, which means that absorbing or emitting photons requires not just an energy change, but also a change in momentum, so it also requires the emission/absorption of a phonon to transfer momentum to/from the crystal lattice, which has a relatively low probability. It's possible to facilitate the absorption/emission of photons in silicon by deliberately introducing defects in the crystal lattice, but that's anathema to making electronics.
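A back-of-the-envelope check of the momentum argument, as a sketch: it uses textbook room-temperature values for silicon (band gap about 1.12 eV, lattice constant about 0.543 nm) and takes 2π/a as the rough scale of crystal momentum an electron has to change in an indirect transition. That scale is a simplification; the exact position of the conduction-band minimum isn't modelled, and the function names are made up for illustration.

```python
import math

HC_EV_NM = 1239.84              # h*c in eV*nm
SI_BAND_GAP_EV = 1.12           # approximate silicon band gap at room temperature
SI_LATTICE_CONSTANT_NM = 0.543  # approximate silicon lattice constant


def photon_wavelength_nm(energy_ev):
    """Wavelength of a photon carrying the given energy."""
    return HC_EV_NM / energy_ev


def photon_wavevector_per_nm(energy_ev):
    """Photon wavevector k = 2*pi / wavelength (momentum is hbar * k)."""
    return 2 * math.pi / photon_wavelength_nm(energy_ev)


def lattice_wavevector_per_nm(lattice_constant_nm):
    """Rough scale of crystal momentum across the Brillouin zone: 2*pi / a."""
    return 2 * math.pi / lattice_constant_nm


def momentum_mismatch_ratio(energy_ev, lattice_constant_nm):
    """How many times larger the lattice-scale wavevector is than the photon's."""
    return lattice_wavevector_per_nm(lattice_constant_nm) / photon_wavevector_per_nm(energy_ev)


if __name__ == "__main__":
    wavelength = photon_wavelength_nm(SI_BAND_GAP_EV)
    ratio = momentum_mismatch_ratio(SI_BAND_GAP_EV, SI_LATTICE_CONSTANT_NM)
    print(f"band-gap photon wavelength: about {wavelength:.0f} nm")
    print(f"lattice-scale momentum is roughly {ratio:.0f}x the photon's")
```

The band-gap photon comes out in the near infrared (around 1100 nm) and carries on the order of a thousandth or less of the momentum involved, so the photon alone cannot balance the books and a phonon has to make up the difference.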

-

@HardwareGeek said in Dawn: the SUBLEQ OS by Geri:

by deliberately introducing defects in the crystal lattice, but that's anathema to making electronics.

Isn't that a good description of doping, though? which is essential to making transistors of all types? (Termed "substitutional defects".)

-

@Steve_The_Cynic said in Dawn: the SUBLEQ OS by Geri:

Isn't that a good description of doping, though?

Those are atomic replacement defects, and the dopants used are ones that don't actually change the crystal lattice shape. (Not disrupting the lattice structure is one of the key design goals of how silicon devices are manufactured; almost all reasons for a manufactured device failing during its initial testing come down to lattice defects.) But it sounds like making light emitting silicon requires interaction with phonons (quantized vibrations) and that requires physical defects in the lattice.

There are lots of types of defects (no, I can't remember them all, as I've not studied this stuff since I was a freshman) but they all make for weird complexity in behaviour. Imperfect crystals are interesting things. I have literally no idea if we can engineer in desired imperfections; the focus of low-level silicon manufacturing is very much about getting rid of them.

-

@dkf said in Dawn: the SUBLEQ OS by Geri:

Those are atomic replacement defects, and the dopants used are ones that don't actually change the crystal lattice shape.

OK, fair enough.

-

@dkf said in Dawn: the SUBLEQ OS by Geri:

I have literally no idea if we can engineer in desired imperfections; the focus of low-level silicon manufacturing is very much about getting rid of them.

This caused me to have a stupid idea: What if the solution to the energy crisis is to un-burn things in such a way that it releases more energy?

I apologise for my current brain-dead-ness.

-

@Tsaukpaetra With the right credentials you could put it in OpenGPT and apply for a government grant.