Nobody shares knowledge better than this

-

@SpectateSwamp said in Nobody shares knowledge better than this:

@HardwareGeek Who didn't know about blocking factors for all hardware? They were assignable per FILE, be it tape, disc, or floppy. It made data transfer faster...

During the '80s, most systems were for billing and such for large utilities.

So very few BIG files... For moving data, especially sequential files, a large blocking factor made it 2 or 3 times quicker. Not so for production files with smaller (indexed) customer records... Smaller was better for performance.

Some here don't know much, that is for sure. They haven't been around since the beginning.

Now who needs data blocking? NOBODY.

Short filenames and file blocking limits must GO. Boo.

This is a reason to change that from 4k to 13 bytes.

Have you considered designing a filesystem?
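For readers who never worked with blocking factors, here is a minimal model of the sequential-file claim quoted above. It assumes every physical block costs a fixed overhead $t_{\text{blk}}$ (seek and rotational latency on disk, start/stop and the inter-record gap on tape) plus transfer time at rate $R$, and that the file holds $N$ records of $r$ bytes packed $B$ to a block, with $N$ a multiple of $B$. The symbols are illustrative, not taken from any particular system.

$$
T(B) = \frac{N}{B}\left(t_{\text{blk}} + \frac{B\,r}{R}\right) = N\left(\frac{t_{\text{blk}}}{B} + \frac{r}{R}\right),
\qquad
S(B) = \frac{T(1)}{T(B)} = \frac{t_{\text{blk}} + r/R}{t_{\text{blk}}/B + r/R}
$$

$S(B)$ grows with $B$ but never exceeds $1 + t_{\text{blk}}R/r$, so a big blocking factor only pays off as far as the per-block overhead outweighs transferring one record. A "2 or 3 times quicker" result means that overhead was at least about one to two record-transfer times. The same model shows why smaller blocks suited indexed production files: fetching one random record reads the whole block, $B\,r$ bytes, instead of just $r$.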

-

@Gribnit said in Nobody shares knowledge better than this:

Have you considered designing a filesystem?

The app seems to have evolved that way.

The standard directory tree stuff has its limits.

You surely don't want to open a folder with thousands of videos....

The system just drags ... determining file play lengths and other display info

With spectate you just look and search that folder's detail list / catalog

-

@SpectateSwamp said in Nobody shares knowledge better than this:

@Gribnit said in Nobody shares knowledge better than this:

Have you considered designing a filesystem?

The app seems to have evolved that way.

The standard directory tree stuff has its limits.

You surely don't want to open a folder with thousands of videos....

The system just drags ... determining file play lengths and other display info

With spectate you just look and search that folder's detail list / catalog

Have you considered the merest rudiments of usability, particularly discoverability?

-

@SpectateSwamp is this actually you on Kiwifarms or is that an impersonator?

-

@blek if it is an impersonator they were spot on.

-

@boomzilla said in Nobody shares knowledge better than this:

@blek if it is an impersonator they were spot on.

Maybe he got a new browser, that can reach more than just this site.

-

@Gribnit said in Nobody shares knowledge better than this:

@boomzilla said in Nobody shares knowledge better than this:

@blek if it is an impersonator they were spot on.

Maybe he got a new browser, that can reach more than just this site.

The browser isn't the problem. It's that most sites ban him because they find him more annoying than funny (and Alex convinced Spectate to stay in his own thread so he'd stay that way).

-

@boomzilla said in Nobody shares knowledge better than this:

The browser isn't the problem.

Oh right. OTT, did we ever set up a cert-federated proxy firewall sufficient to let @tania_v back on the site? Really a missed connection. That could have led to the next Xanadu. Maybe even the next Bob.

-

@Gribnit we did nothing. My interpretation of events is that she got bored talking to you.

-

@boomzilla said in Nobody shares knowledge better than this:

@Gribnit we did nothing. My interpretation of events is that she got bored talking to you.

Impossible! I was the first one blocked. Remember? She's the one that found that feature?

-

@Gribnit said in Nobody shares knowledge better than this:

@boomzilla said in Nobody shares knowledge better than this:

@Gribnit we did nothing. My interpretation of events is that she got bored talking to you.

Impossible! I was the first one blocked. Remember? She's the one that found that feature?

I must have tuned out by that point.

-

@boomzilla said in Nobody shares knowledge better than this:

@Gribnit said in Nobody shares knowledge better than this:

@boomzilla said in Nobody shares knowledge better than this:

@Gribnit we did nothing. My interpretation of events is that she got bored talking to you.

Impossible! I was the first one blocked. Remember? She's the one that found that feature?

I must have tuned out by that point.

If I were you, I'd also tune out while posting.

-

@Gribnit said in Nobody shares knowledge better than this:

@boomzilla said in Nobody shares knowledge better than this:

@Gribnit said in Nobody shares knowledge better than this:

@boomzilla said in Nobody shares knowledge better than this:

@Gribnit we did nothing. My interpretation of events is that she got bored talking to you.

Impossible! I was the first one blocked. Remember? She's the one that found that feature?

I must have tuned out by that point.

If I were you, I'd also tune out while posting.

But you're you and you've already done that.

-

@boomzilla said in Nobody shares knowledge better than this:

@Gribnit said in Nobody shares knowledge better than this:

@boomzilla said in Nobody shares knowledge better than this:

@Gribnit said in Nobody shares knowledge better than this:

@boomzilla said in Nobody shares knowledge better than this:

@Gribnit we did nothing. My interpretation of events is that she got bored talking to you.

Impossible! I was the first one blocked. Remember? She's the one that found that feature?

I must have tuned out by that point.

If I were you, I'd also tune out while posting.

But you're you and you've already done that.

QFT

-


@HardwareGeek said in Nobody shares knowledge better than this:

@boomzilla said in Nobody shares knowledge better than this:

she got bored talking to you.

Hold on while I approximate a sine wave.

-

It's obvious stupid people can't share Knowledge. They just don't know that much.

-

@SpectateSwamp said in Nobody shares knowledge better than this:

It's obvious stupid people can't share Knowledge.

Even if they use SSDS?

-

@Zerosquare said in Nobody shares knowledge better than this:

@SpectateSwamp said in Nobody shares knowledge better than this:

It's obvious stupid people can't share Knowledge.

Even if they use SSDS?

There's a guy that uses SSDS for everyone too stupid to use SSDS for themselves.

-

@Gribnit Just started really using SSDS myself.

On another post I was exploring the 250-character file name limit...

AND

Creating a text file with no data and all name

How much space would these files take?

Is the minimum file size linked to the blocking factor or not?

A couple of lines of code to create a loop from 1 to 100,000

Right where the CP - Copy Picture does the copy rename..

Who needs programming experience when we can just tear into a great app like SSDS?

I don't think any structured program can achieve this. Nope. Never.
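On the minimum-size question: in the FAT family the allocation unit is the cluster (size $c$), file data occupies whole clusters, and an empty file gets no data cluster at all. What an empty file does consume is 32-byte directory entries, and how many depends on the name length $L$ in characters. A sketch under the standard VFAT long-file-name and exFAT layouts, worked for the 45-character names this experiment ends up using (`testing lots of blank data files 00000001.txt`):

$$
\text{data}(s) = \left\lceil \frac{s}{c} \right\rceil c, \qquad \text{data}(0) = 0
$$

$$
E_{\text{FAT}}(L) = 1 + \left\lceil \frac{L}{13} \right\rceil = 1 + 4 = 5,
\qquad
E_{\text{exFAT}}(L) = 2 + \left\lceil \frac{L}{15} \right\rceil = 2 + 3 = 5
$$

$$
5 \times 32 = 160 \text{ bytes per file}, \qquad 100{,}000 \times 160 = 16{,}000{,}000 \text{ bytes}
$$

The directory itself lives in clusters, so that total is rounded up to whole clusters as the folder grows. A FAT32 directory is also capped at 65,536 entries, so at 5 entries per name one FAT32 folder holds only about 13,000 of these files. NTFS works differently: a tiny file's data is kept inside its MFT record (typically 1 KiB), so the volume's cluster size does not set its minimum footprint.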

-

Sometimes BullShit knowledge needs to be shared too

I've heard many times that a "Fed Bear is a Dead Bear"

NOPE

Recently we had an extreme heat wave that burned and stunted a lot of the wilderness greenery.

Then Fires razed the areas.. (A fellow said they could hear the animals crying when the fire overtook them)

We need to feed these animals, otherwise more will die.

Just driving about and tossing out goodies for the carnivores and herbivores alike... They have their regular trails...

Please feed the bears and other hungry critters.

-

To really share knowledge, you need long filenames and an unstructured search like SPECTATE. Yup.

I just tested building 15,000 empty files... It took about 45 seconds.

Anybody that wants billions isn't living in the real world.

As a daily transaction logger / mirror, there would never be this many. EVER.

Is it faster to rename files?

Add a few characters to see what that does.

Here are the hard-coded changes for this one-time Spectate test:

    '17Aug2021 a one time loop to create a million blank files
    ' The number of copies actually comes from Cmd(27), the pause-time setting
    ytemp = Format(Val(Cmd(27)), "00000000") 'for string print 17Aug2021
    xtemp = "c:\search\tempfold\testing lots of blank data files " + ytemp + ".txt" '17Aug2021
    ' Show the last target name as a sanity check; the reply in Test2_str is not used here
    Test2_str = InputBox("testing file 17Aug2021 =" + xtemp, , , 4400, 4500) 'TESTING ONLY
    For II = 1 To Val(Cmd(27)) 'use the pause time element for number of copies
        ytemp = Format(II, "00000000") 'for string print
        xtemp = "c:\search\tempfold\testing lots of blank data files " + ytemp + ".txt" '17Aug2021
        If InStr(UCase(App.EXEName), "BOTH") = 0 Then
            ' FileCopy disp_file, photo_dir + keep_desc 'copy the file create a copy changed 19May2021 copy file copy copy copy file
            FileCopy disp_file, xtemp '17Aug2021 copy multiples of a blank file
        Else
            ' Note: this branch ignores xtemp and writes the same target name on every pass
            FileCopy disp_file, photo_dir + keep_desc + " " + old_pict 'copy the file create a copy changed 19May2021 copy file copy copy copy file
        End If
    Next II '17Aug2021
    ' Stop suspends execution here; in the VB6 IDE it breaks into the debugger
    Stop '17Aug2021

-

@SpectateSwamp I need to catalogue at least 86400 images per day just to have one-second resolution

-

@Gribnit said in Nobody shares knowledge better than this:

@SpectateSwamp I need to catalogue at least 86400 images per day just to have one-second resolution

That seems weirder than most requirements..

It would take a couple hours to create that many EMPTY files.

-

@SpectateSwamp said in Nobody shares knowledge better than this:

@Gribnit said in Nobody shares knowledge better than this:

@SpectateSwamp I need to catalogue at least 86400 images per day just to have one-second resolution

That seems weirder than most requirements..

It would take a couple hours to create that many EMPTY files.

Well those kinda make sense for stubs for when I'm sleeping, but I try to avoid it.

-

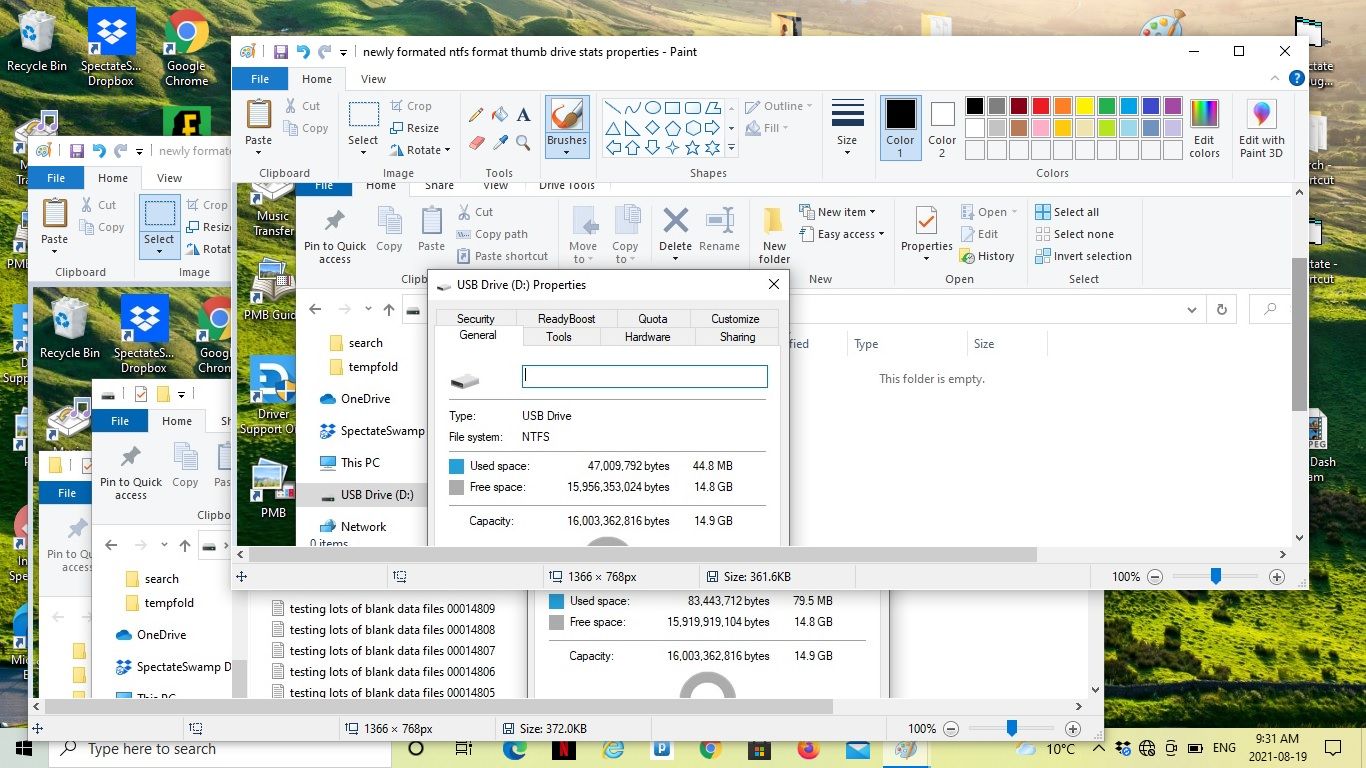

Here are the properties of the thumb drive.

Newly formatted:

Used space: 47,009,752 bytes

Free space: 15,956,353,024 bytes

Capacity: 16,003,362,816 bytes

After 15,000 empty files were copied:

Used space: 83,443,712 bytes

Free space: 15,919,919,104 bytes

Capacity: 16,003,362,816 bytes

What was weird is that initially creating the 15,000 files with the Spectate app took 45 to 50 seconds.

The copy over using Select all and drag and drop took over 35 minutes. Wow

50 times slower... Spaghetti code rules..
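The drive figures above reduce to a per-file cost and a speed ratio. This is plain arithmetic on the reported numbers; nothing else is assumed.

$$
\Delta_{\text{free}} = 15{,}956{,}353{,}024 - 15{,}919{,}919{,}104 = 36{,}433{,}920 \text{ bytes},
\qquad
\Delta_{\text{used}} = 83{,}443{,}712 - 47{,}009{,}752 = 36{,}433{,}960 \text{ bytes}
$$

$$
\frac{36{,}433{,}920}{15{,}000} \approx 2{,}429 \text{ bytes per file},
\qquad
\frac{2{,}100\ \text{s}}{50\ \text{s}} = 42,
\qquad
\frac{2{,}100\ \text{s}}{45\ \text{s}} \approx 46.7
$$

The two deltas differ by 40 bytes because the "newly formatted" used and free figures add up to 40 bytes less than the capacity (the post-copy figures add up exactly), so one of those two numbers is probably off slightly; either delta gives about 2.4 KB per "empty" file. With 35 minutes as a lower bound, the drag-and-drop copy was at least roughly 42 to 47 times slower than the in-app creation. The post does not say which file system the drive uses, so these numbers alone do not show how that 2.4 KB splits between directory entries, data clusters, and other metadata.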

-

@SpectateSwamp said in Nobody shares knowledge better than this:

The copy over using Select all and drag and drop took over 35 minutes. Wow

Have you tried this at the command prompt, or in a real operating system?

-

The copy to the thumb drive took even more time using Spectate. 50 minutes compared to the drag and drop of 35 minutes.

My mistake.

That much time to copy next to nothing. Strange.

-

@SpectateSwamp said in Nobody shares knowledge better than this:

The copy to the thumb drive took even more time using Spectate. 50 minutes compared to the drag and drop of 35 minutes.

My mistake.

That much time to copy next to nothing. Strange.

You're not copying next to nothing. You're copying 15,000 file handles to a not-excessively-optimized file list. Normally, copying the file handle is tiny compared to the file you're actually copying, but if that's the only thing you're copying, you'll notice it.

-

32767 is the max characters for a post...

(/assets/uploads/files/1629571064535-testing-lots-of-blank-data-files-00014376.txt) testing lots of blank data files 00014375.txt testing lots of blank data files 00014374.txt testing lots of blank data files 00014373.txt testing lots of blank data files 00014372.txt testing lots of blank data files 00014371.txt testing lots of blank data files 00014370.txt testing lots of blank data files 00014369.txt testing lots of blank data files 00014368.txt testing lots of blank data files 00014367.txt testing lots of blank data files 00014366.txt testing lots of blank data files 00014365.txt testing lots of blank data files 00014364.txt testing lots of blank data files 00014363.txt testing lots of blank data files 00014362.txt testing lots of blank data files 00014361.txt testing lots of blank data files 00014360.txt testing lots of blank data files 00014359.txt testing lots of blank data files 00014358.txt testing lots of blank data files 00014357.txt testing lots of blank data files 00014356.txt testing lots of blank data files 00014355.txt testing lots of blank data files 00014354.txt testing lots of blank data files 00014353.txt testing lots of blank data files 00014352.txt testing lots of blank data files 00014351.txt testing lots of blank data files 00014350.txt testing lots of blank data files 00014349.txt testing lots of blank data files 00014348.txt testing lots of blank data files 00014347.txt testing lots of blank data files 00014346.txt testing lots of blank data files 00014345.txt testing lots of blank data files 00014344.txt testing lots of blank data files 00014343.txt testing lots of blank data files 00014342.txt testing lots of blank data files 00014341.txt testing lots of blank data files 00014340.txt testing lots of blank data files 00014339.txt testing lots of blank data files 00014338.txt testing lots of blank data files 00014337.txt testing lots of blank data files 00014336.txt testing lots of blank data files 00014335.txt testing lots of blank data 
files 00014334.txt testing lots of blank data files 00014333.txt testing lots of blank data files 00014332.txt testing lots of blank data files 00014331.txt testing lots of blank data files 00014330.txt testing lots of blank data files 00014329.txt testing lots of blank data files 00014328.txt testing lots of blank data files 00014327.txt testing lots of blank data files 00014326.txt testing lots of blank data files 00014325.txt testing lots of blank data files 00014324.txt testing lots of blank data files 00014323.txt testing lots of blank data files 00014322.txt testing lots of blank data files 00014321.txt testing lots of blank data files 00014320.txt testing lots of blank data files 00014319.txt testing lots of blank data files 00014318.txt testing lots of blank data files 00014317.txt testing lots of blank data files 00014316.txt testing lots of blank data files 00014315.txt testing lots of blank data files 00014314.txt testing lots of blank data files 00014313.txt testing lots of blank data files 00014312.txt testing lots of blank data files 00014311.txt testing lots of blank data files 00014310.txt testing lots of blank data files 00014309.txt testing lots of blank data files 00014308.txt testing lots of blank data files 00014307.txt testing lots of blank data files 00014306.txt testing lots of blank data files 00014305.txt testing lots of blank data files 00014304.txt testing lots of blank data files 00014303.txt testing lots of blank data files 00014302.txt testing lots of blank data files 00014301.txt testing lots of blank data files 00014300.txt testing lots of blank data files 00014299.txt testing lots of blank data files 00014298.txt testing lots of blank data files 00014297.txt testing lots of blank data files 00014296.txt testing lots of blank data files 00014295.txt testing lots of blank data files 00014294.txt testing lots of blank data files 00014293.txt testing lots of blank data files 00014292.txt testing lots of blank data files 00014291.txt 
testing lots of blank data files 00014290.txt testing lots of blank data files 00014289.txt testing lots of blank data files 00014288.txt testing lots of blank data files 00014287.txt testing lots of blank data files 00014286.txt testing lots of blank data files 00014285.txt testing lots of blank data files 00014284.txt testing lots of blank data files 00014283.txt testing lots of blank data files 00014282.txt testing lots of blank data files 00014281.txt testing lots of blank data files 00014280.txt testing lots of blank data files 00014279.txt testing lots of blank data files 00014278.txt testing lots of blank data files 00014277.txt testing lots of blank data files 00014276.txt testing lots of blank data files 00014275.txt testing lots of blank data files 00014274.txt testing lots of blank data files 00014273.txt testing lots of blank data files 00014272.txt testing lots of blank data files 00014271.txt testing lots of blank data files 00014270.txt testing lots of blank data files 00014269.txt testing lots of blank data files 00014268.txt testing lots of blank data files 00014267.txt testing lots of blank data files 00014266.txt testing lots of blank data files 00014265.txt testing lots of blank data files 00014264.txt testing lots of blank data files 00014263.txt testing lots of blank data files 00014262.txt testing lots of blank data files 00014261.txt testing lots of blank data files 00014260.txt testing lots of blank data files 00014259.txt testing lots of blank data files 00014258.txt testing lots of blank data files 00014257.txt testing lots of blank data files 00014256.txt testing lots of blank data files 00014255.txt testing lots of blank data files 00014254.txt testing lots of blank data files 00014253.txt testing lots of blank data files 00014252.txt testing lots of blank data files 00014251.txt testing lots of blank data files 00014250.txt testing lots of blank data files 00014249.txt testing lots of blank data files 00014248.txt testing lots of blank 
data files 00014247.txt testing lots of blank data files 00014246.txt testing lots of blank data files 00014245.txt testing lots of blank data files 00014244.txt testing lots of blank data files 00014243.txt testing lots of blank data files 00014242.txt testing lots of blank data files 00014241.txt testing lots of blank data files 00014240.txt testing lots of blank data files 00014239.txt testing lots of blank data files 00014238.txt testing lots of blank data files 00014237.txt testing lots of blank data files 00014236.txt testing lots of blank data files 00014235.txt testing lots of blank data files 00014234.txt testing lots of blank data files 00014233.txt testing lots of blank data files 00014232.txt testing lots of blank data files 00014231.txt testing lots of blank data files 00014230.txt testing lots of blank data files 00014229.txt testing lots of blank data files 00014228.txt testing lots of blank data files 00014227.txt testing lots of blank data files 00014226.txt testing lots of blank data files 00014225.txt testing lots of blank data files 00014224.txt testing lots of blank data files 00014223.txt testing lots of blank data files 00014222.txt testing lots of blank data files 00014221.txt testing lots of blank data files 00014220.txt testing lots of blank data files 00014219.txt testing lots of blank data files 00014218.txt testing lots of blank data files 00014217.txt testing lots of blank data files 00014216.txt testing lots of blank data files 00014215.txt testing lots of blank data files 00014214.txt testing lots of blank data files 00014213.txt testing lots of blank data files 00014212.txt testing lots of blank data files 00014211.txt testing lots of blank data files 00014210.txt testing lots of blank data files 00014209.txt testing lots of blank data files 00014208.txt testing lots of blank data files 00014207.txt testing lots of blank data files 00014206.txt testing lots of blank data files 00014205.txt testing lots of blank data files 
00014204.txt testing lots of blank data files 00014203.txt testing lots of blank data files 00014202.txt testing lots of blank data files 00014201.txt testing lots of blank data files 00014200.txt testing lots of blank data files 00014199.txt testing lots of blank data files 00014198.txt testing lots of blank data files 00014197.txt testing lots of blank data files 00014196.txt testing lots of blank data files 00014195.txt testing lots of blank data files 00014194.txt testing lots of blank data files 00014193.txt testing lots of blank data files 00014192.txt testing lots of blank data files 00014191.txt testing lots of blank data files 00014190.txt testing lots of blank data files 00014189.txt testing lots of blank data files 00014188.txt testing lots of blank data files 00014187.txt testing lots of blank data files 00014186.txt testing lots of blank data files 00014185.txt testing lots of blank data files 00014184.txt testing lots of blank data files 00014183.txt testing lots of blank data files 00014182.txt testing lots of blank data files 00014181.txt testing lots of blank data files 00014180.txt testing lots of blank data files 00014179.txt testing lots of blank data files 00014178.txt testing lots of blank data files 00014177.txt testing lots of blank data files 00014176.txt testing lots of blank data files 00014175.txt testing lots of blank data files 00014174.txt testing lots of blank data files 00014173.txt testing lots of blank data files 00014172.txt testing lots of blank data files 00014171.txt testing lots of blank data files 00014170.txt testing lots of blank data files 00014169.txt testing lots of blank data files 00014168.txt testing lots of blank data files 00014167.txt testing lots of blank data files 00014166.txt testing lots of blank data files 00014165.txt testing lots of blank data files 00014164.txt testing lots of blank data files 00014163.txt testing lots of blank data files 00014162.txt testing lots of blank data files 00014161.txt testing 
lots of blank data files 00014160.txt testing lots of blank data files 00014159.txt testing lots of blank data files 00014158.txt testing lots of blank data files 00014157.txt testing lots of blank data files 00014156.txt testing lots of blank data files 00014155.txt testing lots of blank data files 00014154.txt testing lots of blank data files 00014153.txt testing lots of blank data files 00014152.txt testing lots of blank data files 00014151.txt testing lots of blank data files 00014150.txt testing lots of blank data files 00014149.txt testing lots of blank data files 00014148.txt testing lots of blank data files 00014147.txt testing lots of blank data files 00014146.txt testing lots of blank data files 00014145.txt testing lots of blank data files 00014144.txt testing lots of blank data files 00014143.txt testing lots of blank data files 00014142.txt testing lots of blank data files 00014141.txt testing lots of blank data files 00014140.txt testing lots of blank data files 00014139.txt testing lots of blank data files 00014138.txt testing lots of blank data files 00014137.txt testing lots of blank data files 00014136.txt testing lots of blank data files 00014135.txt testing lots of blank data files 00014134.txt testing lots of blank data files 00014133.txt testing lots of blank data files 00014132.txt testing lots of blank data files 00014131.txt

-

@SpectateSwamp ... ?

-

@Gribnit I'm gonna code up messages in those long filenames and attach those together as messages... Nobody but a human will be able to understand it.

-

@SpectateSwamp said in Nobody shares knowledge better than this:

a human will be able to understand it.

[citation needed]

-

@SpectateSwamp said in Nobody shares knowledge better than this:

@Gribnit I'm gonna code up messages in those long filenames and attach those together as messages... Nobody but a human will be able to understand it.

Um. A machine can read the FAT and see change order. Humans have trouble with magnetic media and solid state, only one guy claims to even be able to read optical.

I really need to write that symmetric image raster steg again. If I do, expect a compressible picture of a cat, followed by a series of incompressible "identical" images.

Only then can I speak freely.

-

@sloosecannon said in Nobody shares knowledge better than this:

@SpectateSwamp said in Nobody shares knowledge better than this:

The copy to the thumb drive took even more time using Spectate. 50 minutes compared to the drag and drop of 35 minutes.

My mistake.

That much time to copy next to nothing. Strange.

You're not copying next to nothing. You're copying 15,000 file handles to a not-excessively-optimized file list. Normally, copying the file handle is tiny compared to the file you're actually copying, but if that's the only thing you're copying, you'll notice it.

Surely this shows a serious flaw in FAT and other file formats.

Needless to say few will have that many pics in their life.

I'd like to see a format like the old tape drives... It would be much faster than the TAPE, that's for sure. Anything is better than these restrictions.

One never wants to open a folder with lots of images.

Why do operating systems always refresh the directory info? Dumb.

It seldom changes... AND takes forever. It should be optional....

Spectate does a GF (get files) option to build its directory list that is searched and displayed from... Much much smarter.....

-

@SpectateSwamp said in Nobody shares knowledge better than this:

Surely this shows a serious flaw in FAT and other file formats.

You should develop a SpectateSwamp File System.

-

@Zerosquare said in Nobody shares knowledge better than this:

@SpectateSwamp said in Nobody shares knowledge better than this:

Surely this shows a serious flaw in FAT and other file formats.

You should develop a SpectateSwamp File System.

That should keep TDWTF supplied with

for years.


-

Share what you are really good at. AND it has to be fun.

For Me...

1 Sky creature video

2 Spaghetti code master

3 Curling phenom

4 Medicine man

.....

I haven't done much SC stuff because way back I didn't have the tools to archive the location of each fly-by.

I'd list them and the system wouldn't start at the same point ....

Adding a point where play started before the slow mo fixed that.

I had a sheet with all the locations and a little drawing of the flight.

Realizing that long filenames could be used to pin point these locations

made it possible to view and detail those segments once and for all.

Sharing the sky creature video is fun.. I like hunting them.

AND I know where to look and how to lure them in. YUP

Last time I was hunting them.... I was at it for months....

-

@SpectateSwamp said in Nobody shares knowledge better than this:

Last time I was hunting them.... I was at it for months....

If you have the right equipment you can catch a lot of them. You want a sodium or other very bright light source, and a camera with the right shutter speed obliquely from the light. You may need to play with the angle and speed, detection is a matter of lighting and frequency matching during swarming hours. Since they occur in conjunction with flying insects, you'll want to start from wingbeat frequencies in terms of shutter speeds.

-

@Zerosquare said in Nobody shares knowledge better than this:

@SpectateSwamp said in Nobody shares knowledge better than this:

Surely this shows a serious flaw in FAT and other file formats.

You should develop a SpectateSwamp File System.

Just grab a copy of an old tape drive program..

To make it work; you need a sequential search that doesn't need the data to be ordered. That's the problem with non-sequential file organizations... Adding and removing and examining the files is piss poor. Yup

Once they get over 50 or 60 thousand files... They collapse. Poof

-

@SpectateSwamp said in Nobody shares knowledge better than this:

To make it work; you need a sequential search that doesn't need the data to be ordered.

So why's it need to be sequential?

-

@Gribnit said in Nobody shares knowledge better than this:

@SpectateSwamp said in Nobody shares knowledge better than this:

To make it work; you need a sequential search that doesn't need the data to be ordered.

So why's it need to be sequential?

That's the only way without all the restrictions of indexed files.

Unlimited VS 60,000 max for files in a folder.

What's the count limit for folders on a drive?

Maybe I should check it out.

If they were organized sequentially, there wouldn't be any limit.

Let's go with Loooong folder names and NO data because it's the data.

-

@SpectateSwamp said in Nobody shares knowledge better than this:

@Gribnit said in Nobody shares knowledge better than this:

@SpectateSwamp said in Nobody shares knowledge better than this:

To make it work; you need a sequential search that doesn't need the data to be ordered.

So why's it need to be sequential?

That's the only way without all the restrictions of indexed files.

Unlimited VS 60,000 max for files in a folder.

What's the count limit for folders on a drive?

Maybe I should check it out.

If they were organized sequentially, there wouldn't be any limit.

Let's go with Loooong folder names and NO data because it's the data.

Oh christ, you want blockless storage. So, you want to optimize storage areal density and a small set of operations while absolutely fucking everything else. Why?

And at the end, you will have built an index. You know, that thing you hate.

-

Gribnit used Logic!

...It's not very effective.

-

@error said in Nobody shares knowledge better than this:

Gribnit used Logic!

...It's not very effective.

You see why I don't use it very often then.

-

@Gribnit said in Nobody shares knowledge better than this:

@SpectateSwamp said in Nobody shares knowledge better than this:

To make it work; you need a sequential search that doesn't need the data to be ordered.

So why's it need to be sequential?

You can't index your way to finding all the results... Only only sequential.

-

@SpectateSwamp said in Nobody shares knowledge better than this:

@Gribnit said in Nobody shares knowledge better than this:

@SpectateSwamp said in Nobody shares knowledge better than this:

To make it work; you need a sequential search that doesn't need the data to be ordered.

So why's it need to be sequential?

You can't index your way to finding all the results... Only only sequential.

Dude. You could exhaust a space with a transfinite amount of patterns. Not only only sequential. These contentless name lists you're jizzing about? are a form of index.

-

I'm still waiting to hear where all these long filenames are going to be stored if they don't take any disk space.

-

The cloud?

-

@Gribnit said in Nobody shares knowledge better than this:

And at the end, you will have built an index. You know, that thing you hate.

It's part of the plan: with all the file data in the filename, those filenames are the files, only now they're anonymous. That means one less piece of metadata and one more step towards the ultimate goal.