Graphics card upgrade

-

@error said in Graphics card upgrade:

@boomzilla said in Graphics card upgrade:

EDIT: but after a quick search, I can see what you meant and it seems obvious.

After Googling, I went from "I didn't know that was a thing" to "I didn't know not having that was a thing."

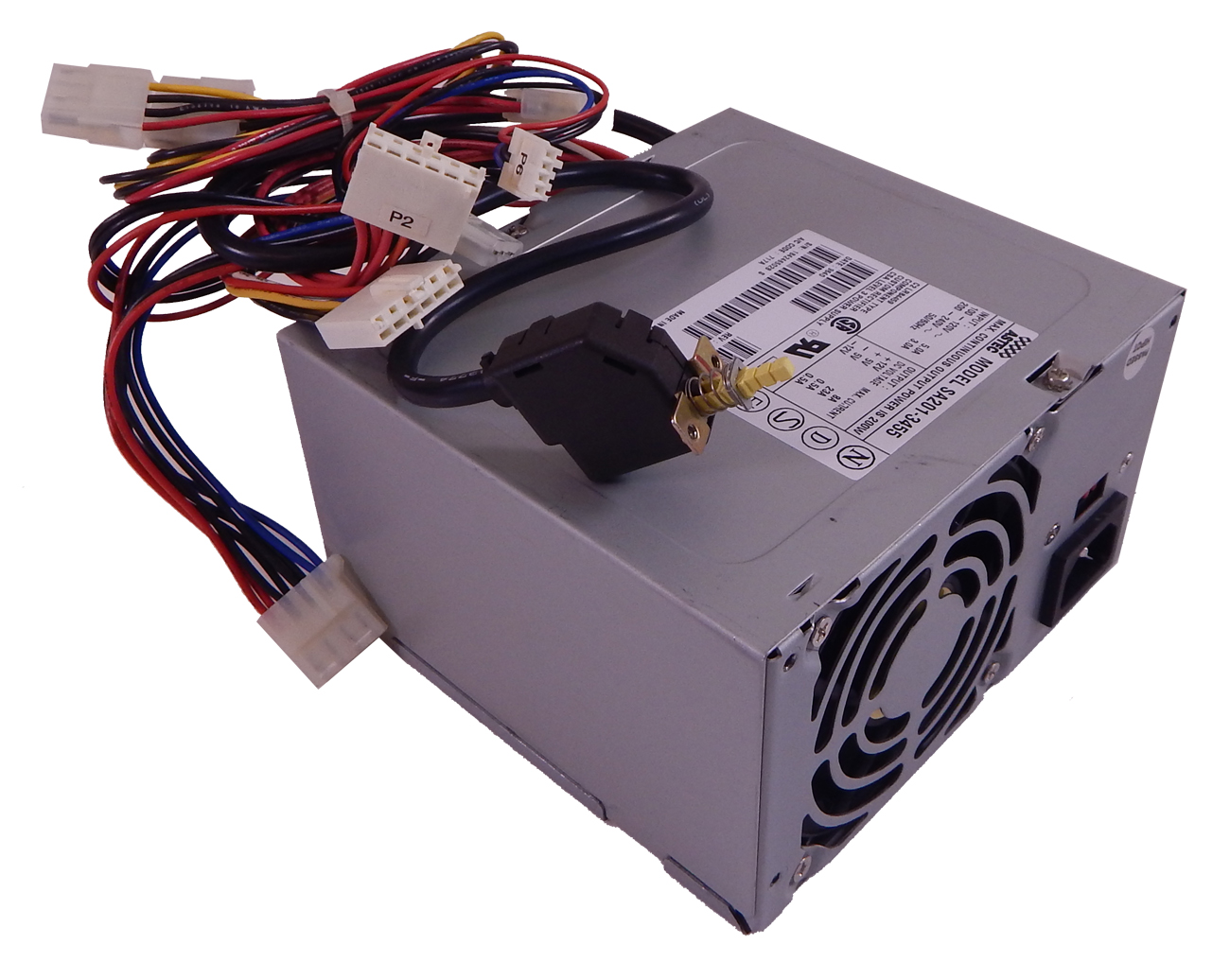

Have you never seen a power supply from before 2015 or whenever modular ones became available/popular?

-

@hungrier said in Graphics card upgrade:

@error said in Graphics card upgrade:

@boomzilla said in Graphics card upgrade:

EDIT: but after a quick search, I can see what you meant and it seems obvious.

After Googling, I went from "I didn't know that was a thing" to "I didn't know not having that was a thing."

Have you never seen a power supply from before 2015 or whenever modular ones became available/popular?

I've seen it when I've blown the dust out of my computer but I wasn't really inspecting it or trying to do anything with it.

-

@error said in Graphics card upgrade:

@boomzilla said in Graphics card upgrade:

EDIT: but after a quick search, I can see what you meant and it seems obvious.

After Googling, I went from "I didn't know that was a thing" to "I didn't know not having that was a thing."

Think I still have one of these:

in my closet.

That power switch went to the case and it was your power button.

-

@Deadfast said in Graphics card upgrade:

If you mean that it only has a PCI-E 2.0 then you technically wouldn't have to, they're backwards compatible. Hell, you could even go as far back as 1.0.

Of course your graphics card would then have its throughput limited. When 3.0 was originally introduced the difference was immeasurable, nowadays I imagine that would no longer be the case.

Now that PCIe 4.0 is here and all new graphics cards are using it, it's still not required to run them at full performance, as PCIe 3.0 still has enough bandwidth to handle it. It will be a few years before bandwidth starts bottlenecking performance; even 2.0 worked perfectly well up to the 10xx series at least, where you would start losing single-digit FPS in games, iirc. The main advantage of 4.0 right now is enabling faster storage than 3.0, although we're still not up to the max possible speed with current hard drives.
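For a rough sense of the headroom per generation, here is a minimal sketch that computes theoretical one-direction bandwidth from the published per-lane transfer rates and line encodings. The table and function names are made up for illustration, and real-world throughput is lower because of protocol overhead.

```python
# Approximate one-direction PCIe bandwidth per generation.
# Transfer rates and encodings are the published PCIe figures;
# PCIE_GENERATIONS and pcie_bandwidth_gb_s are hypothetical names.
PCIE_GENERATIONS = {
    "1.0": (2.5, 8 / 10),      # GT/s per lane, 8b/10b encoding
    "2.0": (5.0, 8 / 10),      # 8b/10b encoding
    "3.0": (8.0, 128 / 130),   # 128b/130b encoding
    "4.0": (16.0, 128 / 130),  # 128b/130b encoding
}


def pcie_bandwidth_gb_s(generation: str, lanes: int = 16) -> float:
    """Theoretical bandwidth in GB/s, one direction, ignoring protocol overhead."""
    rate_gt_s, efficiency = PCIE_GENERATIONS[generation]
    return rate_gt_s * efficiency * lanes / 8  # 8 bits per byte


if __name__ == "__main__":
    for gen in PCIE_GENERATIONS:
        print(f"PCIe {gen} x16: {pcie_bandwidth_gb_s(gen):.1f} GB/s")
```

Each generation roughly doubles the previous one, so a 4.0 card in a 3.0 x16 slot still gets about half of its theoretical link bandwidth.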

-

Tried to play a LAN game of Civ VI with my family and my computer kept restarting. I'm assuming my (GeForce GT 730) graphics card is dying. The machine itself is a little over 7 years old, though I replaced the graphics card and hard drive a few years ago. Being so old, it has similar issues as my son's computer so I figured it was time to refresh the whole thing.

Ordered a scratch and dent from the Dell Outlet with an RTX 2060, so that'll be a big upgrade for me.

-

@boomzilla said in Graphics card upgrade:

so that'll be a big upgrade for me.

I assume that means that your new machine is sufficiently pimped out with RGB and other blinkenlights.

-

@cvi said in Graphics card upgrade:

@boomzilla said in Graphics card upgrade:

so that'll be a big upgrade for me.

I assume that means that your new machine is sufficiently pimped out with RGB and other blinkenlights.

No, but I'll still have an RGB keyboard. Went for the XPS, not Alienware.

-

@boomzilla Well. Despite trying to avoid RGB keyboards, I eventually ended up with one anyway. I hate it less than initially estimated. (It being marginally useful to indicate which keyboard layer is currently active may have contributed to that.)

-

My (somewhat aging) machine sometimes spontaneously reboots, with the ~~BIOS~~UEFI varyingly saying it's an overheat event or a low voltage event. Wondering whether I need to look at my cooling, my power supply, or both.

-

@PleegWat said in Graphics card upgrade:

My (somewhat aging) machine sometimes spontaneously reboots, with the ~~BIOS~~UEFI varyingly saying it's an overheat event or a low voltage event. Wondering whether I need to look at my cooling, my power supply, or both.

Low voltage can lead to overheat, I believe

-

@sloosecannon said in Graphics card upgrade:

Low voltage can lead to overheat, I believe

Low voltage to the fans would spin them slower, causing more heat. Low voltage to other chips would decrease the heat output, though...

I’d probably still start with power supply though. Especially if you have a spare you can temporarily cannibalize to test with.

-

@Unperverted-Vixen said in Graphics card upgrade:

Low voltage to the fans would spin them slower, causing more heat. Low voltage to other chips would decrease the heat output, though...

- Air flow vs. fan supply voltage is a linear relationship (I think?). But many fans used in computers use open-loop or closed-loop regulation.
- Heat dissipation in semiconductors vs. supply voltage is a quadratic relationship: https://semiengineering.com/knowledge_centers/low-power/low-power-design/power-consumption/

So, I guess the answer is... it depends.
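To put rough numbers on it, here is a small sketch comparing the two scalings. It assumes the linear fan relationship guessed above with no fan regulation at all, and the usual dynamic power model P = C·V²·f at fixed capacitance and frequency. The function names are made up, and applying the same voltage sag to both is a simplification.

```python
def relative_fan_airflow(voltage_ratio: float) -> float:
    """Airflow relative to nominal, ASSUMING a linear, unregulated fan."""
    return voltage_ratio


def relative_dynamic_power(voltage_ratio: float) -> float:
    """Dynamic power relative to nominal, from P = C * V^2 * f
    with capacitance C and frequency f held constant."""
    return voltage_ratio ** 2


if __name__ == "__main__":
    for ratio in (1.0, 0.95, 0.9, 0.8):
        airflow = relative_fan_airflow(ratio)
        power = relative_dynamic_power(ratio)
        print(f"V at {ratio:.0%}: airflow {airflow:.0%}, chip power {power:.0%}")
```

Under these assumptions, heat output falls faster than airflow as voltage sags, so a pure undervoltage would not obviously cause overheating by itself.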

-

@Zerosquare The CPU fan typically has proper control (and a 4-pin cable). The rest may be voltage-controlled by the motherboard, but have no other regulation. So the 12V bus dropping would mean the fans slowing down.

However, fans are supplied by 12V, and board logic is 3.3V. The only common thing between the buses is group regulation in the PSU.

-

@Gąska said in Graphics card upgrade:

Wait a month or two, and you'll save at least $100.

Anniversary update: