Any program that can open a 1.87 GB XML document?

-

We recently got this ~~hacky~~wonderful auditing software (to the tune of 5-digit figures per month), and have been trying to get our data into it. Unfortunately, the only method to import data into it is via XML, so we've been working on getting our data out of the databases into the XML schema they want. This wouldn't be so bad if we were "like their other customers who only import a max 200,000 records into the system", but we're not. We need 4,000,000 records.

Nominally a flat database push (heck, even export to CSV) of the data needed would only take a few hundred MB, but due to the XML format it blows up to (latest export test) 1,910,563 KB.

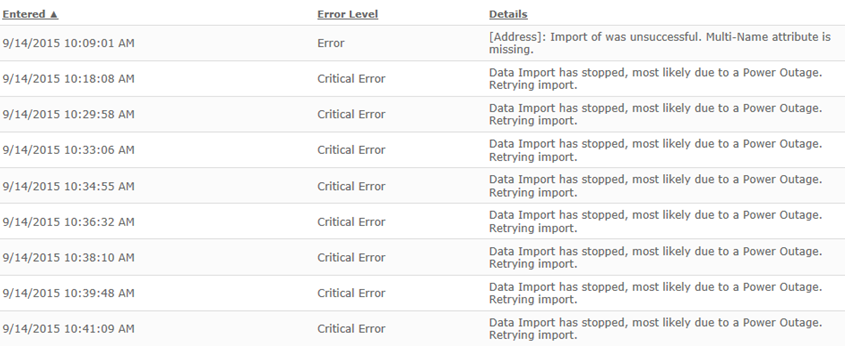

Apparently this blows the software's little head, because it (probably) crashes during processing of the file, and the logging functions just aren't any more descriptive than:

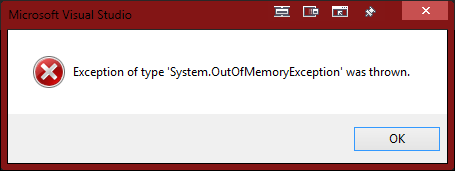

Import of was unsuccessful. Multi-Name attribute is missing.

(Yes, there's a missing thing there. There's an identifier number between "of" and "was", but it's NULL.)

We do get an indicator of where the import failed (approximately 35k records in), but we don't have an editor that can handle such a file well enough. We have only MS tools at the moment: Internet Explorer just freezes, Visual Studio valiantly loads up to 3 GB of RAM before OOM happens, and Notepad... no comment.

Can anyone recommend a (hopefully) lightweight editor capable of handling this monstrosity we're forced to create?

Filed under: No, we can't just split the export into multiple files because Bruce Said So

-

Write a trivial extractor using a streaming parser. Half a day.
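For what it's worth, here is a minimal sketch of what such an extractor could look like in Python, using the standard library's `xml.etree.ElementTree.iterparse`. The record element name (`Record`) and the assumption that records are direct children of the root element are guesses, since the vendor's schema isn't shown in this thread.

```python
import xml.etree.ElementTree as ET


def extract_record(source, record_tag, index):
    """Return the index-th (0-based) <record_tag> element as an XML string.

    `source` is a file path or a binary file object. Assumes records are
    direct children of the root element (an assumption, not the real schema).
    With namespaces, record_tag must be given as "{namespace-uri}Name".
    """
    seen = 0
    # Ask for "start" events as well so the first event hands us the root;
    # clearing the root is what keeps memory use roughly flat.
    context = ET.iterparse(source, events=("start", "end"))
    _, root = next(context)
    for event, elem in context:
        if event == "end" and elem.tag == record_tag:
            if seen == index:
                elem.tail = None  # drop whitespace between records
                return ET.tostring(elem, encoding="unicode")
            seen += 1
            root.clear()  # forget records we have already passed
    return None  # fewer than index + 1 records in the file
```

Calling something like `extract_record("export.xml", "Record", 35000)` (file name made up) would print the record near the reported failure point without ever holding the whole document in memory.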

-

Ultra Edit is rather good at editing large files.

-

re @Tsaukpaetra

Oh right, editor. Have you got less around? Doesn't even sound like you want to edit.. but, if so, have you got vim around? Works a treat with real-sized files.

-

Specifically on a real OS (aka NotWindows)

-

Or use a simple script in a language of your choice to truncate a copy of the file to a size your preferred editor can handle. That should allow you to at least find the failing record.
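A rough sketch of such a script in Python, assuming nothing about the file beyond it being readable as bytes. The function name and the paths in the usage note are made up for illustration.

```python
def copy_head(src_path, dst_path, max_bytes, chunk_size=1024 * 1024):
    """Copy at most max_bytes from the start of src_path into dst_path.

    Returns the number of bytes actually written.
    """
    remaining = max_bytes
    with open(src_path, "rb") as src, open(dst_path, "wb") as dst:
        while remaining > 0:
            # Read in modest chunks so the copy never needs much memory.
            chunk = src.read(min(chunk_size, remaining))
            if not chunk:
                break  # source file was shorter than max_bytes
            dst.write(chunk)
            remaining -= len(chunk)
    return max_bytes - remaining
```

Something like `copy_head("export.xml", "head.xml", 50 * 1024 * 1024)` would give you the first 50 MB. Note that the copy will almost certainly be cut off mid-record, so it is fine for reading in an editor but won't parse as well-formed XML.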

-

EmEditor lets you open just part of a file. It's my go-to improved text editor.

-

trivial extractor using a streaming parser

Would that it would take only half a day, but unauthorized projects are... frowned upon.

got less

Would that I could install Cygwin at least. Haven't been able to get around the firewall to download a portable version either.

Specifically on a real OS

Would that they would allow me a personal VM to play with.

truncate a copy of the file

Our current approach in a nutshell. Problem is that due to the way the XML file was specified it's moderately difficult to patch end tags for, and records aren't necessarily a consistent number of rows...

Ultra Edit is rather good at editing large files.

open just part of a file

Will try (assuming it's pokeable through the firewall).

-

Can you use something (first thing that comes to mind, something like MSXML) to parse the document and split it into more manageable chunks? If the problem is that there's a busted tag somewhere, and MSXML doesn't barf, it'll probably tell you where the problem was.

-

parse the document and split it into more manageable chunks

Trying to figure that out, as well as streaming.

The problem is that this XML file was generated using some crafty SQL on SQL Server, so in theory it is indeed valid XML.

My guess is that the audit program's import process is attempting to load the entire thing in memory, and it's very likely that it's not a 64-bit program, so when they smash whatever XML module they're using with a command to put it in memory, the import program is core dumping.

We don't have visibility into the server in order to figure out if this is actually the case (Apparently the service that watches the import process just kicks it off again), and the most verbose log we can get complains of things like a "Power Outage":

-

This wouldn't be so bad if we were "like their other customers who only import a max 200,000 records into the system", but we're not. We need 4,000,000 records.

@CodingHorrorBot if you please.

According to some people on the internet, WordPad can open very large files, I don't know if it's true. I've opened large files in Notepad++, but not 1.87GB-large, might be worth a try.

Otherwise, the Linux CLI tools can handle arbitrarily large files easily (including advanced search and replace stuff with sed/awk, if you need it). Or, heck, you could whip out a nice graphical Python program to do so in less than 10 lines: one textbox, two pageup and pagedown buttons, seek to position, read 10MB chunk, display on textbox.
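To make the seek-and-read part concrete, here is a small sketch of just that piece, leaving the textbox and buttons out. The function name, the page numbering and the UTF-8 guess are my own assumptions.

```python
def read_page(path, page, page_size=10 * 1024 * 1024):
    """Return page number `page` (0-based) of the file as text.

    Seeks straight to the page, so the rest of the file is never read.
    """
    with open(path, "rb") as f:
        f.seek(page * page_size)
        data = f.read(page_size)
    # A page boundary can split a multi-byte character, so replace
    # undecodable bytes instead of raising.
    return data.decode("utf-8", errors="replace")
```

A page-up/page-down viewer would then only need to call `read_page(path, n)` with `n` going up or down and put the result in the textbox. Reading past the end of the file simply returns an empty string.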

-

@anonymous234 Is Doing It Wrong™

-

Not me you idiot

-

/me falls out of chair

-

My guess is that the audit program's import process is attempting to load the entire thing in memory, and it's very likely that it's not a 64-bit program, so when they smash whatever XML module they're using with a command to put it in memory, the import program is core dumping.

We don't have visibility into the server in order to figure out if this is actually the case (Apparently the service that watches the import process just kicks it off again), and the most verbose log we can get complains of things like a "Power Outage":

So it's their program that is core dumping on their server, and YOU are tasked to debug it?

I hope you are billing them generously!

-

Heh, I wish I could spend a few minutes debugging the server. No, they won't give access to the backend.

I'm halfway to looking into SQL Server injection with the front end so I could get a console into it (the server is running in our network but we can't log in to it directly, only via FTP of the expected XML file, or through the ASP 2.0 web interface).

-

This wouldn't be so bad if we were "like their other customers who only import a max 200,000 records into the system", but we're not. We need 4,000,000 records.

Is there any way you could break up the import into multiple pieces?

-

We're also looking into an artificial split on Primary Key to reduce the size of the export file, yes. Not sure why my fellow lackey (I'm not the developer on this, merely an advisor) can't do it like that.
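The arithmetic of such a split is simple enough. A sketch in Python, assuming an integer primary key; the function name is made up, and gaps in the key sequence would just make some batches smaller than the batch size.

```python
def key_ranges(min_id, max_id, batch_size):
    """Yield inclusive (low, high) primary-key ranges covering min_id..max_id."""
    low = min_id
    while low <= max_id:
        high = min(low + batch_size - 1, max_id)
        yield low, high
        low = high + 1
```

Each `(low, high)` pair would feed one export restricted to keys between `low` and `high`. With keys 1 to 4,000,000 and batches of 200,000 that comes out to 20 export files.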

-

I still don't understand WHY you are trying to debug it.

They provide the software that you pay ...

5-digit figures per month

At that price, it doesn't come with support?

Or is it the same kind of helpful support as MS?

-

Yes. The support is along the lines of "Did you use our < insert in-house tool that does it wrong > tool to make the file in the right format? If not we can't help you if you're not using the right format." kind of replies, the same kind you get from support bots that run your question through the FAQ wikis and email the result back to you.

-

I handle fatassed XML files all the time. Either UltraEdit or Notepad++. One of those works, the other falls on its face, I can never remember which one.

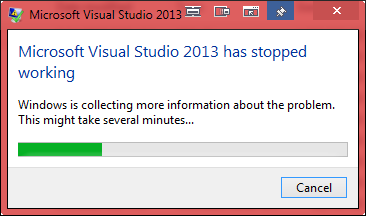

Visual Studio usually also manages it, but takes approximately forever.

That said, I'm yet to meet an XML parsing library that plays nice with files beyond about 4 GB, even on 64-bit systems.

-

Why can't the export be broken into chunks? You have no control over the export process?

It's not a good workaround, but if it only has to happen once, could you possibly make a clone of the entire database, drop all but rows 0-200k, do the export on the remaining 200k rows and import them into the new system, and repeat until all rows have been migrated?

-

Tried it in VS 2013. Got either this:

Or this:

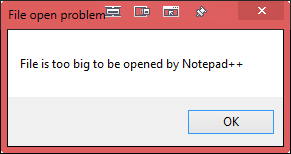

Tried Notepad++, got this:

EmEditor works, but it seems to be a trial (hopefully long enough to satisfy my needs)

Seems Ultra Edit is blocked by group policy (Who TF set that up?!).

You have no control over the export process?

Yes and no. Yes, I can probably get it to break into chunks but they don't export correctly from a single SQLCMD call, and nobody has given me anything else to break it up.

drop all but rows 0-200k, do the export on the remaining 200k rows

Current efforts include trying to update the top 200k records with a flag column=null with some @counter, increment and cursor through until no records remain without a flag, then use another cursor to export those batches.

It's... Maybe working? Hard to tell.

-

My guess is that the audit program's import process is attempting to load the entire thing in memory, and it's very likely that it's not a 64-bit program, so when they smash whatever XML module they're using with a command to put it in memory, the import program is core dumping.

That was another reason I was asking--if you can load the entire thing into (say) MSXML or something you may be able to write a program to break the thing into multiple manageable chunks. You'll probably have to figure out what "manageable" is empirically

and, of course, this assumes the destination can accept multiple pieces instead of one big dump.

-

I've opened large files in Notepad++, but not 1.87GB-large, might be worth a try.

FWIW I just opened a 700MB file in regular Notepad. It took a while, but it eventually opened and was reasonably responsive. I can't find a ~2GB file at hand or I'd test that.

-

Various customers and vendors that don't understand the point of XML. 300 character element names. Empty optional elements. General idiocy. Usually the overhead ratio is like 99.9 percent.

-

nobody has given me anything else to break it up.

If you can figure out the schema from the full document you should be able to write your own program to split it without too much trouble.
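As a starting point, a streaming splitter might look roughly like the sketch below. It assumes the records are direct children of the root element and that the importer is happy with each chunk wrapped in a copy of the original root tag and attributes; the `Record` tag and the output naming pattern are invented. Anything in the root that isn't a record (headers and so on) is dropped here, so it would need extra handling if the schema has such elements.

```python
import xml.etree.ElementTree as ET


def split_records(source, record_tag, per_file, out_pattern):
    """Split one big XML export into files of at most per_file records.

    out_pattern is a format string such as "part_{:04d}.xml".
    Returns the list of file names written.
    """
    written = []
    batch = []

    context = ET.iterparse(source, events=("start", "end"))
    _, root = next(context)
    # Remember the root's tag and attributes now; root.clear() wipes them.
    root_tag, root_attrib = root.tag, dict(root.attrib)

    def flush():
        # Wrap the current batch in a fresh copy of the root element.
        wrapper = ET.Element(root_tag, root_attrib)
        wrapper.extend(batch)
        name = out_pattern.format(len(written))
        ET.ElementTree(wrapper).write(name, encoding="utf-8", xml_declaration=True)
        written.append(name)
        batch.clear()

    for event, elem in context:
        if event == "end" and elem.tag == record_tag:
            batch.append(elem)
            root.clear()  # detach from the big tree; the batch keeps elem alive
            if len(batch) == per_file:
                flush()
    if batch:
        flush()  # last, possibly short, chunk
    return written
```

Only one batch of records is held in memory at a time, so what counts as "manageable" is mostly a question of what the importer can swallow.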

-

UltraEdit is very commonly pirated by mistake.

Your licensing guys probably caught a group sharing 1 key and freaked.

-

be a trial (hopefully long enough to satisfy my needs)

Yeah, it's a trial but the full version isn't too bad (~$20 IIRC). And it's not bad on licensing (Buy once, use anywhere)

-

uses XML that large?!

If our scientific data off our instruments is converted to XML (yes, there's a standard document schema for it) then it becomes documents in the range 50–150 GB each. I'm hoping that we can keep it binary so that it doesn't blow our storage server in a few days…

-

Here, for you, a C# one-liner:

Enumerable.Range(0, 40000).ToList().ForEach(x => System.IO.File.AppendAllText(Path.GetTempPath() + Path.DirectorySeparatorChar + "2GB.csv", String.Join(",", Enumerable.Range(0,10000).Select(e => e.ToString()))));

Should be somewhere around 2GB in size ...

-

$> dd if=/dev/urandom of=2GB.txt bs=1048576 count=2048

-

The only program I know which really can open real big files is the Large Text File Viewer.

Available here for example

There is a "newer" project called Large Text File Reader (check it out on SourceForge) which claims to be able to open up to 10GB files.

TRWTF: "new users can only input two URLs in a post" and I can't even input two. :-(

-

"new users can only input two URLs in a post" and I can't even input two.

That's Discourse for you.

Don't worry, you'll get more user abilities in an hour or so? Or was it just 15 minutes?

-

```
$> dd if=/dev/urandom of=2GB.txt bs=1048576 count=2048
```

But then I'd have Linux on me. :smile:

ETA: Also, nice quote-mangling, Discurse. Great software you wrote, @end. https://www.youtube.com/watch?v=ajsNJtnUb7c

-

But then I'd have Linux on me.

you already do. what OS do you think we host this forum on?

also FTYTCFY

-

@FrostCat said:

But then I'd have Linux on me.

you already do. what OS do you think we host this forum on?

Well, I can't log in to the forum server and run that, so I meant I'd have to install Linux on my own computer, and I don't have a spare one to do that on; not to mention the time it would take to download and install it just to test that seems a bit out of proportion.

also FTYTCFY

Yeah, that was more effort than I wanted to make, but thanks.

-

not to mention the time it would take to download and install it just to test that seems a bit out of proportion.

Huh? Modern day netinstalls are around 50 megs and install in about 10 minutes on a semi-decent connection unless you install all the bells and whistles.

-

Modern day netinstalls are around 50 megs and install in about 10 minutes

Ah, well, I didn't know that. It'd still be about 20 minutes, which seems overkill, especially since I just remembered you can use copy /b from a command prompt in Windows, so I could've just concatenated ~3 copies of the 700MB file I used for testing.

-

@FrostCat said:

I'd have to install Linux on my own computer

The

is not having a Linux live usb key handy

-

@FrostCat said:

I'd have to install Linux on my own computer

The

is not having a Linux live usb key handy

I'll tell you how many times I've had an issue and I wished I had one of those handy.

Zero.

-

We get it, you're one of those KOOL LINUX KIDZ who used Enlightenment when the 21st Century began.

-

I'll tell you how many times I've had an issue and I wished I had one of those handy.

Zero.

You prefer OpenBSD?! :Wtf: