Bad Gateway *SPANK SPANK*

-

20137/4568 timed out 2016-07-18T11:05:34,278046629+0000
... usual waiting threads... ...
Thread 1 (Thread 0x7f3b17ed8740 (LWP 20137)):
#0 0x0000000000c02d37 in void v8::internal::StringHasher::AddCharacters<unsigned char>(unsigned char const*, int) ()
#1 0x0000000000c04a76 in v8::internal::IteratingStringHasher::VisitConsString(v8::internal::ConsString*) ()
#2 0x0000000000c04e79 in v8::internal::String::ComputeAndSetHash() ()
#3 0x0000000000c06125 in v8::internal::RegExpKey::Hash() ()
#4 0x0000000000c12ca9 in v8::internal::HashTable<v8::internal::CompilationCacheTable, v8::internal::CompilationCacheShape, v8::internal::HashTableKey*>::FindEntry(v8::internal::Isolate*, v8::internal::HashTableKey*) ()
#5 0x0000000000c12e81 in v8::internal::CompilationCacheTable::LookupRegExp(v8::internal::Handle<v8::internal::String>, v8::internal::JSRegExp::Flags) ()
#6 0x00000000009546a9 in v8::internal::CompilationCacheRegExp::Lookup(v8::internal::Handle<v8::internal::String>, v8::internal::JSRegExp::Flags) ()
#7 0x0000000000954b7f in v8::internal::CompilationCache::LookupRegExp(v8::internal::Handle<v8::internal::String>, v8::internal::JSRegExp::Flags) ()
#8 0x0000000000bb28ca in v8::internal::RegExpImpl::Compile(v8::internal::Handle<v8::internal::JSRegExp>, v8::internal::Handle<v8::internal::String>, v8::internal::JSRegExp::Flags) ()
#9 0x0000000000cbb1b2 in v8::internal::Runtime_RegExpInitializeAndCompile(int, v8::internal::Object**, v8::internal::Isolate*) ()
#10 0x000008c12950963b in ?? ()
#11 0x000008c129509581 in ?? ()
#12 ... goes on for a while like this...

-

@boomzilla Do the backtraces from other lockups look the same? Because if the problem is in the JS-code (i.e., what corresponds to frame #10 and above), the useful part of the stack trace wouldn't really show the problem, it's just part of what the JS code ends up calling repeatedly while doing its infinite loopy-loops.

-

@cvi said in Bad Gateway *SPANK SPANK*:

Do the backtraces from other lockups look the same?

This was the first one I've found that had something interesting going on in Thread 1. All the others seemed to be composed entirely of unknown frames, like the ones that start at #10 here. I guess we'll have to wait for our custom compiled node to see what's going on there.

-

@boomzilla said in Bad Gateway *SPANK SPANK*:

This was the first one I've found that had something interesting going on in Thread 1.

That doesn't actually look all that interesting. It's just looking up a RE in a cache. There are some vile problems in the RE engine that node uses, but you are probably not compiling those sorts of regular expressions over and over. (I really hope that's not the problem!)

However, the behaviour described reminds me a bit of what Eclipse looks like when it genuinely runs out of memory. Could there be a thrashing GC involved? Those look really bad and are indicative of problems elsewhere. (I don't know the architecture of the engine well enough to comment knowledgeably.)

-

@boomzilla Makes sense, and supports the hypothesis that the problem lies in whatever makes up the unknown frames.

-

@dkf said in Bad Gateway *SPANK SPANK*:

However, the behaviour described reminds me a bit of what Eclipse looks like when it genuinely runs out of memory.

That's possible. Does node limit its memory like java does? Hmm...some searching says that it does.

EDIT: ...but...I think we should have been seeing evidence of that in the flamegraphs.
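For reference, a minimal sketch of how one might check the heap ceiling from inside a running process, assuming a Node version that ships the built-in `v8` module. `heapReport` is a made-up helper name, not anything from NodeBB:

```javascript
// Sketch only: report V8's heap ceiling and current usage.
// heapReport is a hypothetical helper, not part of NodeBB or Node itself.
const v8 = require('v8');

function heapReport() {
  const stats = v8.getHeapStatistics();
  const toMiB = (bytes) => Math.round(bytes / (1024 * 1024));
  return {
    limitMiB: toMiB(stats.heap_size_limit), // ceiling V8 will grow the heap to
    usedMiB: toMiB(stats.used_heap_size),   // memory held by live objects right now
    usedFraction: stats.used_heap_size / stats.heap_size_limit,
  };
}

console.log(heapReport());
```

If `usedFraction` sits close to 1 while the process is unresponsive, a thrashing GC becomes a more plausible suspect. The ceiling can be raised with the `--max-old-space-size` flag (value in megabytes).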

-

@boomzilla said in Bad Gateway *SPANK SPANK*:

the flamegraphs

I don't think the service problems correlate with postings in that thread…

-

@dkf said in Bad Gateway *SPANK SPANK*:

There are some vile problems in the RE engine that node uses

An early flamegraph had me investigating that, but the regex it was running wasn't that bad, and the next flamegraph was stuck somewhere totally different.

-

Thread 1 (Thread 0x7f8badfe2740 (LWP 21781)):
#0 sem_post () at ../nptl/sysdeps/unix/sysv/linux/x86_64/sem_post.S:57
#1 0x0000000000e6571d in v8::platform::TaskQueue::Append(v8::Task*) ()
#2 0x0000000000a22e69 in v8::internal::Compiler::GetOptimizedCode(v8::internal::Handle<v8::internal::JSFunction>, v8::internal::Handle<v8::internal::Code>, v8::internal::Compiler::ConcurrencyMode, v8::internal::BailoutId, v8::internal::JavaScriptFrame*) ()
#3 0x0000000000c8675d in v8::internal::Runtime_CompileOptimized(int, v8::internal::Object**, v8::internal::Isolate*) ()
#4 0x00000acdb4a0963b in ?? ()

-

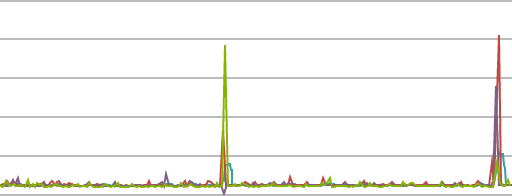

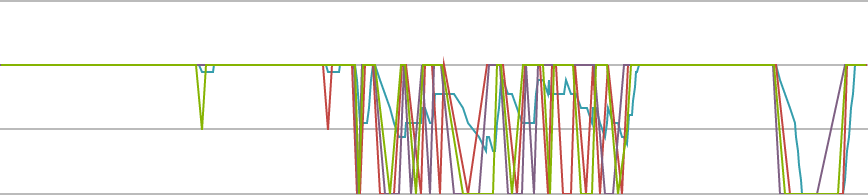

I must say, I like this...

A lot better than I liked this...

-

@ben_lubar Looks like a null pointer at the bottom of that first stack dump? (The few above it with lots of zeros in the addresses look a bit suspicious too)

-

@FrostCat the first 16 bits of any address that code is located at should be the same, so I'm pretty sure every frame except the first one is garbage.
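That heuristic is easy to sketch. The helper below is hypothetical (`suspiciousFrames` is not from any tool), and the third address in the usage example is made up for illustration; the first two are taken from the first backtrace in this thread:

```javascript
// Sketch only: flag frames whose top 16 address bits differ from frame #0.
// suspiciousFrames is a hypothetical helper; addresses are hex strings.
function suspiciousFrames(addresses) {
  const top16 = (hex) => BigInt(hex) >> 48n; // keep only the highest 16 of 64 bits
  const expected = top16(addresses[0]);
  return addresses
    .map((hex, index) => ({ index, hex }))
    .filter(({ hex }) => top16(hex) !== expected);
}

const flagged = suspiciousFrames([
  '0x0000000000c02d37', // frame #0 from the first backtrace
  '0x000008c12950963b', // frame #10 from the first backtrace
  '0xdead000000000010', // made-up garbage address
]);
console.log(flagged);
```

Only the made-up third address gets flagged here, since the two real ones share the same (all-zero) top 16 bits.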

-

Ok, so this segfaults: https://gist.github.com/BenLubar/064b65170ae1f01ea00328b537eaefba

-

@ben_lubar How are we running a program in an interpreted language, and it still segfaults? It's like a miracle of awful software.

-

@ben_lubar All those waiting threads look like they're worker threads idling on a presumably empty work queue. It doesn't look like a deadlock.

-

Got a raid now, but I'll try to figure out what version the bug appeared in.

-

@boomzilla Be wary assuming that web giants like Netflix would notice something like this.

With sufficiently large AWS deployments, restarting nonresponsive machines is just SOP and nearly transparent.

-

@ben_lubar said in Bad Gateway *SPANK SPANK*:

Got a raid now

We killed Vale Guardian and got within 5% of killing Gorseval the Multifarious. Probably not going to raid again for a while since this time was just so I could unlock some content that requires killing any raid boss.

Anyway, back to business.

-

@blakeyrat said in Bad Gateway *SPANK SPANK*:

@ben_lubar How are we running a program in an interpreted language, and it still segfaults? It's like a miracle of awful software.

The interpreted language is shit.

-

@Deadfast Regardless, it shouldn't be able to escape from its cave.

-

@blakeyrat Exactly, the fact that it can makes it shit. Reminds me of another shitty web language...

-

Hmm, so the commit that may have caused the crash is this one:

-

@Deadfast I expected WTFs from the language being shit, but the interpreter surprised me being shit too.

-

Turns out it's a buffer overflow in v8's debug symbol generator: https://github.com/nodejs/node/issues/7785#issuecomment-233501500

And where's the big piece of data coming from?

It's a 464 byte generated variable name for a regular expression.

-

@ben_lubar said in Bad Gateway *SPANK SPANK*:

It's a 464 byte generated variable name for a regular expression.

-

@ben_lubar said in Bad Gateway *SPANK SPANK*:

Only 40 minutes to build nodejs. Nice.

40 minutes seems like a long time. Some of our automated builds include building node.js from source (for FIPS compliance, and for the fact that node.js uses a HARD CODED LIST OF DEFAULT ROOT CERTIFICATE AUTHORITIES ARE YOU KIDDING ME RIGHT NOW) and it only takes 20 minutes. That's an RPM build, so it's building both for debug and release, theoretically 2x the time.

-

@ben_lubar said in Bad Gateway *SPANK SPANK*:

buffer overflow

464 byte generated variable name

A variable name that long is dumb, even when generated. Overflowing a buffer because of it…

-

@ben_lubar said in Bad Gateway *SPANK SPANK*:

Turns out it's a buffer overflow in v8's debug symbol generator: https://github.com/nodejs/node/issues/7785#issuecomment-233501500

And where's the big piece of data coming from?

It's a 464 byte generated variable name for a regular expression.

So is it something fixable this end or are we stuck waiting for an upstream fix?

-

@Jaloopa Note that this isn't the cause behind the cooties. Ben's just trying to compile a debuggable version of NodeJS so we can figure out what the problem is.

-

@Deadfast oh. I thought he'd managed to figure out something from the dumps we have

-

@Jaloopa Sadly not. The dumps we have don't show what the main thread is doing, which Ben is trying to rectify.

-

@Deadfast said in Bad Gateway *SPANK SPANK*:

Ben is trying to rectify.

There should probably be a joke in there about Node.js, a rectum, and a purple dildo — possibly including a high voltage rectifier connected to the dildo — but my brain does not yet have sufficient caffeine to put the disparate elements together into something that's actually funny.

-

@heterodox said in Bad Gateway *SPANK SPANK*:

HARD CODED LIST OF DEFAULT ROOT CERTIFICATE AUTHORITIES ARE YOU KIDDING ME RIGHT NOW

You know I hear the OS keeps one of those, maybe they could just look the certs up?

Seriously that's busted as shit. Being an open source project, I'm sure it'll be fixed in roughly 47 years.

-

@heterodox said in Bad Gateway *SPANK SPANK*:

40 minutes seems like a long time. Some of our automated builds include building node.js from source (for FIPS compliance, and for the fact that node.js uses a HARD CODED LIST OF DEFAULT ROOT CERTIFICATE AUTHORITIES ARE YOU KIDDING ME RIGHT NOW) and it only takes 20 minutes. That's an RPM build, so it's building both for debug and release, theoretically 2x the time.

But notice that the time reported by @ben_lubar was the time it took docker.com to build it. Which is certainly not a major priority for them. Who knows what the build machine even looks like?

-

@blakeyrat said in Bad Gateway *SPANK SPANK*:

You know I hear the OS keeps one of those, maybe they could just look the certs up?

Seriously that's busted as shit. Being an open source project, I'm sure it'll be fixed in roughly 47 years.

Indeed. There was an issue opened last year, I think, and their response was basically that it was the distribution's job to add a patch to their package to check against their distribution's trust store instead. Which is a relatively reasonable position, but the RPM in THEIR REPOSITORY does not do this for Red Hat.

-

@boomzilla said in Bad Gateway *SPANK SPANK*:

But notice that the time reported by @ben_lubar was the time it took docker.com to build it. Which is certainly not a major priority for them. Who knows what the build machine even looks like?

Fair point; I didn't know one of their servers was doing the build. Our build is also in a Docker container but I think the host is a 4x16 (load depends on what else is running at the same time).

-

@heterodox said in Bad Gateway *SPANK SPANK*:

Indeed. There was an issue opened last year, I think, and their response was basically that it was the distribution's job to add a patch to their package to check against their distribution's trust store instead.

And if it's Windows, where that's clearly wrong and there is no "distribution" anyway? Let me guess: the answer is, "FUCK WINDOWS ONLY 95% of the world uses that OS so we should SHIT ALL OVER THEM! Woo open sources!!!"

There's no way to justify hard-coding values you can easily (and goddamned SHOULD) simply ask the OS for.

-

@blakeyrat said in Bad Gateway *SPANK SPANK*:

And if it's Windows, where that's clearly wrong and there is no "distribution" anyway? Let me guess: the answer is, "FUCK WINDOWS ONLY 95% of the world uses that OS so we should SHIT ALL OVER THEM! Woo open sources!!!"

There's no way to justify hard-coding values you can easily (and goddamned SHOULD) simply ask the OS for.

Yeah, I'm pretty sure it's the same story with Windows; I think I built a node.exe for our developers to use a year or so ago. Need to build them a new one.

On that front, to be fair they're using OpenSSL as their SSL library on Windows instead of hooking into Schannel (would double the QA) so I believe getting the system's root certificates would be significantly harder than on Linux distributions wherein it's just a file.
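To illustrate the "it's just a file" part, here is a rough sketch of reading a distribution's CA bundle from Node. The two paths are the usual locations on Red Hat and Debian family systems respectively, but treat them as assumptions for your own machine; `splitPemBundle` and `loadSystemCas` are hypothetical helper names:

```javascript
// Sketch only: load the distribution's CA bundle instead of relying on
// Node's built-in list. Paths and helper names are assumptions.
const fs = require('fs');

const CANDIDATE_BUNDLES = [
  '/etc/pki/tls/certs/ca-bundle.crt',   // Red Hat family
  '/etc/ssl/certs/ca-certificates.crt', // Debian family
];

// Split one concatenated PEM file into individual certificate strings.
function splitPemBundle(text) {
  const pattern = /-----BEGIN CERTIFICATE-----[\s\S]*?-----END CERTIFICATE-----/g;
  return text.match(pattern) || [];
}

// Return the certificates from the first bundle that exists, or [] if none do.
function loadSystemCas(paths = CANDIDATE_BUNDLES) {
  for (const path of paths) {
    if (fs.existsSync(path)) {
      return splitPemBundle(fs.readFileSync(path, 'utf8'));
    }
  }
  return [];
}

console.log(`loaded ${loadSystemCas().length} CA certificates`);
```

The resulting array can be passed as the `ca` option to `https.request` or `tls.connect`; note that supplying `ca` replaces the built-in list rather than adding to it.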

-

@heterodox said in Bad Gateway *SPANK SPANK*:

On that front, to be fair they're using OpenSSL as their SSL library on Windows instead of hooking into Schannel (would double the QA) so I believe getting the system's root certificates would be significantly harder than on Linux distributions wherein it's just a file.

Waaah! Doing the right thing is hard! I'm going to do it the wrong stupid way instead!

Seriously, they made their shit-bed, now they have to lie in it and roll around all over the shit.

-

@blakeyrat said in Bad Gateway *SPANK SPANK*:

FUCK WINDOWS ONLY 95% of the world

Not on servers. Much less servers running nodejs.

-

@fbmac said in Bad Gateway *SPANK SPANK*:

Not on servers.

Or on phones, where I really don't think it is as much as 5%.

However, it's the embedded space that is interesting; that's all over the place, and includes a gigantic amount of diversity (yadda yadda guacamole thread). There's lots of Windows, especially running things with user-facing displays like animated advertising hoardings, but lots of other things too. For example, I have no idea what's running the dash displays in my car.

-

@fbmac said in Bad Gateway *SPANK SPANK*:

Not on servers.

WEB servers.

Windows dominates, say, printer servers.

-

@blakeyrat when you find a significant number of printer servers that are being used to host Node.js applications, you can include them in the discussion.

-

@blakeyrat said in Bad Gateway *SPANK SPANK*:

@fbmac said in Bad Gateway *SPANK SPANK*:

Not on servers.

WEB servers.

Windows dominates, say, printer servers.

Yeah, I'm sure all of these run Windows:

-

@ben_lubar Judging by my recent experiences with TP-LINK, I can assure you that those don't run, period.

-

@blakeyrat said in Bad Gateway *SPANK SPANK*:

Windows dominates, say, printer servers.

Not necessarily, though this is also a problem where you've got to work out whether you just count the number of computers hosting a printer server or whether you scale by the amount of traffic or number of clients.

-

So basically we're screwed unless I can figure out a way to work around that bug. Or maybe install a version of gdb 4 minor releases past what's on Debian stable.