Forum is hard to Google

-

- Google for something inside the forum

- Results have the first line of the posts showing the words you searched

- Click one of the results

- You're now in a completely unrelated post

-

@wharrgarbl Can you give an example?

-

@wharrgarbl Google's bug tracker is . The forum search actually works pretty well.

-

@masonwheeler said in Forum is hard to Google:

@wharrgarbl Can you give an example?

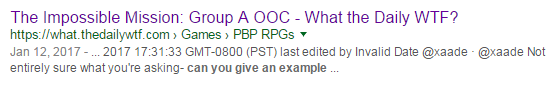

This result from a search for "can you give an example":

Links to https://what.thedailywtf.com/topic/20854/the-impossible-mission-group-a-ooc/1356

That is:

@boomzilla said in Forum is hard to Google:

@wharrgarbl Google's bug tracker is . The forum search actually works pretty well.

Not always. There were times I couldn't find something there but found it with Google. This is especially true when the forum search hasn't had time to index yet (something I just saw but don't remember in what topic), or when I don't have an exact match. (Google does aliases and stuff.)

-

@wharrgarbl Unless I'm mistaken, Google indexes the paginated version of the forum, so the URLs will always lead to post indexes that are one greater than a multiple of (probably) 20.
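To make that arithmetic concrete, here is a minimal sketch. It assumes 1-based post indexes and 20 posts per page (the guess above); `page_start_index` is a made-up helper name, not anything from NodeBB.

```python
def page_start_index(post_index: int, page_size: int = 20) -> int:
    """Return the 1-based index of the first post on the page holding post_index."""
    if post_index < 1 or page_size < 1:
        raise ValueError("post_index and page_size must be positive")
    # Integer division finds how many full pages come before this post.
    return ((post_index - 1) // page_size) * page_size + 1


# A post at index 45 sits on the page that starts at index 41,
# so a crawler that only saw paginated URLs would land on 41, not 45.
print(page_start_index(45))  # 41
```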

-

@RaceProUK Using inspect to take the real URL in my example (because NodeBB does a redirect), it is: https://what.thedailywtf.com/post/1075832

-

@wharrgarbl So, you expect GoogleBot to understand how NodeBB works well enough to capture the post links while it indexes?

-

@RaceProUK Some of the links I see on Google use the global post ID and others point to some topic index. Weird.

-

@boomzilla Not really: it depends on what URL GoogleBot used to get to those posts

-

@RaceProUK said in Forum is hard to Google:

@wharrgarbl So, you expect GoogleBot to understand how NodeBB works well enough to capture the post links while it indexes?

No, I expect web developers to understand how Google works and fix their stuff.

-

@wharrgarbl You are aware that Google's search algorithm is constantly changing, right?

-

@wharrgarbl said in Forum is hard to Google:

@RaceProUK said in Forum is hard to Google:

@wharrgarbl So, you expect GoogleBot to understand how NodeBB works well enough to capture the post links while it indexes?

No, I expect web developers to understand how Google works and fix their stuff.

What if it works in a way inherent, such that the fix would be having one post per page?

-

@RaceProUK The guidelines for web developers, as published by Google, don't change that often.

Instead of page=5, working websites with variable page size have something like start=20

You could even have a pagesize url parameter.
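A rough sketch of why an offset is more stable than a page number, assuming zero-based offsets. The parameter names `start` and `pagesize` follow the suggestion above; the helper names and the `/topic/1` path are hypothetical.

```python
from typing import Optional
from urllib.parse import urlencode


def offset_url(base: str, start: int, pagesize: Optional[int] = None) -> str:
    """Build a URL that names the first post to show, not a page number."""
    params = {"start": start}
    if pagesize is not None:
        params["pagesize"] = pagesize
    return f"{base}?{urlencode(params)}"


def posts_on_page(page: int, page_size: int) -> range:
    """Zero-based post offsets shown for page=N (pages counted from 1)."""
    return range((page - 1) * page_size, page * page_size)


def posts_from(start: int, page_size: int) -> range:
    """Zero-based post offsets shown for start=N."""
    return range(start, start + page_size)


# page=5 means different posts depending on the reader's page size...
print(posts_on_page(5, 20)[0], posts_on_page(5, 50)[0])  # 80 200
# ...while start=80 always begins at the same post.
print(posts_from(80, 20)[0], posts_from(80, 50)[0])  # 80 80
print(offset_url("/topic/1", 80, pagesize=50))  # /topic/1?start=80&pagesize=50
```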

-

@wharrgarbl or just make the crawl version give only one post per page... then you don't go to the top of a page of 20 posts, and go to the exact post no matter what your settings are.

-

@xaade Giving the googlebot a page different than you give users was against the rules, last time I read something about it

-

@wharrgarbl Yeah, dynamic content is a bitch.

-

@xaade Changing the meta description of the page changes the snippet that Google shows in its results too.

-

Y'all know that forum SEO was largely figured out years ago, right? (And that it's largely sucky because user content is always dinged.)

-

@xaade said in Forum is hard to Google:

What if it works in a way inherent, such that the fix would be having one post per page?

Why are you talking like Nagesh all of a sudden?

-

@wharrgarbl said in Forum is hard to Google:

- Google for something inside the forum

- Results have the first line of the posts showing the words you searched

- Click one of the results

- You're now in a completely unrelated post

You should Google with bing instead.

-

@wharrgarbl said in Forum is hard to Google:

@xaade Giving the googlebot a page different than you give users was against the rules, last time I read something about it

So then signed-out users see 1 post pages by default.

-

@LB_ said in Forum is hard to Google:

@wharrgarbl said in Forum is hard to Google:

@xaade Giving the googlebot a page different than you give users was against the rules, last time I read something about it

So then signed-out users see 1 post pages by default.

Only one problem with that:

The posts-per-page setting for anonymous users is the maximum for all users.

-

@wharrgarbl said in Forum is hard to Google:

@xaade Giving the googlebot a page different than you give users was against the rules, last time I read something about it

I'm pretty sure that as long as the content is the same, it is fine.

I think it would be allowed to serve a static (HTML) page for `/post/${pid}` URLs, containing that post alone, and some pagination features (next post, previous post), which would be seen by GoogleBot (and users who don't have Javascript). The posts surrounding that one could all be loaded in Javascript with AJAX. You could have the server configured to redirect GoogleBot (or anyone, I guess) to a `/post/` URL whenever a `/topic/` URL is requested... although, I seem to recall that currently, `/post/` URLs redirect to `/topic/` URLs, so you'd probably need to change that or you'd probably get an infinite loop of redirects.
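The redirect-loop worry can be shown with a small sketch. The redirect table and paths here are hypothetical stand-ins, not real NodeBB routes, and `resolve` is an invented helper that just walks the table the way a client would follow redirects.

```python
class RedirectLoop(Exception):
    """Raised when following redirects never reaches a final page."""


def resolve(path: str, redirects: dict, max_hops: int = 10) -> str:
    """Follow redirects from path and return the final path, or raise on a loop."""
    seen = {path}
    for _ in range(max_hops):
        if path not in redirects:
            return path  # no further redirect: this is the page that gets served
        path = redirects[path]
        if path in seen:
            raise RedirectLoop(f"redirect loop at {path}")
        seen.add(path)
    raise RedirectLoop("too many redirects")


# Assumed current behavior: /post/ redirects to /topic/. That alone is fine.
current = {"/post/123": "/topic/45/7"}
print(resolve("/post/123", current))  # /topic/45/7

# Adding the proposed /topic/ to /post/ redirect without removing the old one loops.
both = {"/post/123": "/topic/45/7", "/topic/45/7": "/post/123"}
try:
    resolve("/post/123", both)
except RedirectLoop as err:
    print(err)
```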

-

@LB_ said in Forum is hard to Google:

@wharrgarbl said in Forum is hard to Google:

@xaade Giving the googlebot a page different than you give users was against the rules, last time I read something about it

So then signed-out users see 1 post pages by default.

Javascript can be used to load in a full page of posts for context. GoogleBot won't run the script; it'll just see the static HTML that you serve.

All you'd need to do is ensure that the static HTML contains just that one post, and links for GoogleBot to follow so that it can get to the other posts in the topic.
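As a rough illustration of that static page, here is a sketch that renders one post plus previous/next links for a crawler to follow. The function name, the markup, and the `/post/<pid>` link shape are assumptions made for the example, not NodeBB's actual templates.

```python
from html import escape
from typing import Optional


def render_post_page(pid: int, author: str, body: str,
                     prev_pid: Optional[int] = None,
                     next_pid: Optional[int] = None) -> str:
    """Return static HTML holding a single post and links to its neighbors."""
    links = []
    if prev_pid is not None:
        links.append(f'<a rel="prev" href="/post/{prev_pid}">Previous post</a>')
    if next_pid is not None:
        links.append(f'<a rel="next" href="/post/{next_pid}">Next post</a>')
    nav = " ".join(links)
    # User content is escaped so it cannot inject markup into the page.
    return (
        "<!DOCTYPE html>\n"
        f"<html><head><title>Post {pid}</title></head><body>\n"
        f'<article id="post-{pid}"><h1>{escape(author)}</h1>'
        f"<p>{escape(body)}</p></article>\n"
        f"<nav>{nav}</nav>\n"
        "</body></html>"
    )


print(render_post_page(2, "someone", "Hello & welcome", prev_pid=1, next_pid=3))
```

Client-side script could then fetch the surrounding posts for readers who want context, while the served HTML stays the same for everyone.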

-

@anotherusername said in Forum is hard to Google:

GoogleBot won't run the script; it'

In theory, it does

Just put the highlighted post in the meta description, and that is what Google will display for the link.
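Something like this sketch, which builds the tag from a post's text. The 160-character cutoff is an arbitrary assumption (Google does not promise any fixed snippet length), and `meta_description` is a made-up helper name.

```python
from html import escape


def meta_description(post_text: str, limit: int = 160) -> str:
    """Build a meta description tag from the text of the linked post."""
    # Collapse newlines and runs of spaces so the snippet reads as one line.
    text = " ".join(post_text.split())
    if len(text) > limit:
        text = text[: limit - 3].rstrip() + "..."
    # quote=True also escapes quotes, which matters inside an attribute value.
    return f'<meta name="description" content="{escape(text, quote=True)}">'


print(meta_description('Say "hi"\n  now'))
```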

-

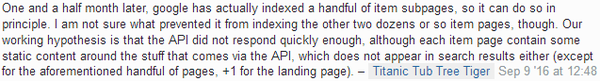

@wharrgarbl GoogleBot will do some pretty advanced analysis and will run certain types of scripts, including when you're using Javascript to add content to the page. But I don't think it supports using `XMLHttpRequest` to download external content.

There is one comment there that suggests that Google might be able to:

However I think that's more likely to be because someone somewhere created hard-links that GoogleBot could see to those pages of his site, and that's how it found them. The fact that it only found a handful of the pages suggests to me that human intervention had a hand to play in it.

-

@anotherusername Building stuff on assumptions about Google's limitations is a bad idea.

-

@anotherusername Based on logging from one of my websites, GoogleBot appears to run a near-complete JavaScript interpreter, including making XMLHttpRequests. I suspect they have something equivalent to PhantomJS.