I, ChatGPT

-

@Arantor said in I, ChatGPT:

@Gustav said in I, ChatGPT:

@Arantor said in I, ChatGPT:

@Gustav In which case you have the concept of reasoning, of observation and implementation. Still not a thing AI can do.

Reasoning is just about the first thing they made AI do, back when they called it expert systems. Observation is nearly a solved problem by now for the most part thanks to deep learning. Implementation boils down to taking known things and mixing them together.

Expert systems don’t themselves do the reasoning; the humans did that in constructing the rulesets. The machine just tries to solve for the criteria it has, and won’t spontaneously think of something out of left field that could be adapted.

One such system was one of the more spectacular "AI" fuckups recently.
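
To make the expert-systems point concrete, here's a minimal forward-chaining sketch. The rules and fact names are made up for illustration and not taken from any real system; the thing to notice is that every conclusion the loop can reach was put there by whoever wrote the rule list.

```python
def forward_chain(facts, rules):
    """Apply human-written rules until no new facts can be derived.

    facts: iterable of strings that are known to be true.
    rules: list of (premises, conclusion) pairs, where premises is a set
           of strings and conclusion is a single string.
    """
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            # A rule fires only when all of its premises are already known.
            if conclusion not in known and premises <= known:
                known.add(conclusion)
                changed = True
    return known


# Hypothetical ruleset: all of the "reasoning" lives in these two lines.
RULES = [
    ({"has_fever", "has_cough"}, "flu_suspected"),
    ({"flu_suspected"}, "recommend_rest"),
]

if __name__ == "__main__":
    print(sorted(forward_chain({"has_fever", "has_cough"}, RULES)))
```

Give it a fact the rule authors never anticipated and it simply does nothing with it, which is the "won't think of something out of left field" part.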

-

So here's a piece of comedy. I have my side project that we all know I'm not going to launch. Anyway, I took the name and a single line of description and punched it into usestyle.ai on recommendation of one of the tech bros of Twitter.

It gave me a landing page design with content and everything. What concerns me is that while it's not great (a real designer and a real copywriter will do much better), it's sufficiently not terrible that for some people it would actually be viable.

More interestingly it actually managed to suggest use cases as part of the marketing crap that I hadn't thought of.

-

@Arantor said in I, ChatGPT:

More interestingly it actually managed to suggest use cases as part of the marketing crap that I hadn't thought of.

Pattern matching in the wider space of generally known concepts is something that these AIs can do.

-

@dkf sure, but it was also interesting to see it demonstrated up close and a little too personal for comfort.

-

@Arantor said in I, ChatGPT:

up close and a little too personal for comfort.

It suggested you should shove your project in a very personal and uncomfortable place?

-

@HardwareGeek no, it’s more I’ve had this idea in my head for literal years now (with working proofs of concept etc) and I’ve spent a long time figuring out what uses you could use this for - and not coming up with that many. Hence some of the reticence in turning it into something more substantial. And yet I throw it at an AI, it suggests multiple sets of avenues that simply hadn’t occurred and I’m just… I spent a long time on this and now we’re here.

-

@Arantor said in I, ChatGPT:

@HardwareGeek no, it’s more I’ve had this idea in my head for literal years now (with working proofs of concept etc) and I’ve spent a long time figuring out what uses you could use this for - and not coming up with that many. Hence some of the reticence in turning it into something more substantial. And yet I throw it at an AI, it suggests multiple sets of avenues that simply hadn’t occurred and I’m just… I spent a long time on this and now we’re here.

It's a bit like bringing a different person (and their perspective) into a project. They have different exposure to what could be "natural" and so think of different things that could be done with the project. That doesn't invalidate your effort; they're just able to match different patterns. AI helps here because it is great at pattern matching, so long as the size of context isn't too large or the pattern too deep. Humans have the edge on both fronts... at least some of the time, though more so for some humans than others I guess. (Smarter people match larger contexts against larger collections of patterns to more depth. And even occasionally use logic too!)

-

@Arantor said in I, ChatGPT:

And yet I throw it at an AI, it suggests multiple sets of avenues that simply hadn’t occurred and I’m just… I spent a long time on this and now we’re here.

Don't worry that everything has been thought of. Just realize it to make a profit!

-

@dkf said in I, ChatGPT:

even occasionally use logic[citation needed]

-

@HardwareGeek Yeah. It isn't common.

-

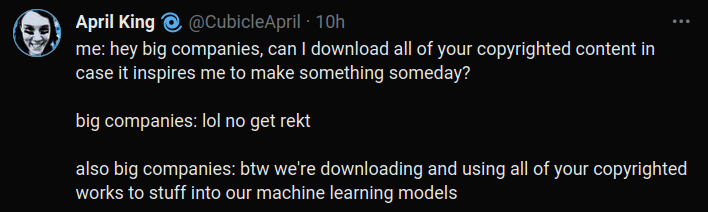

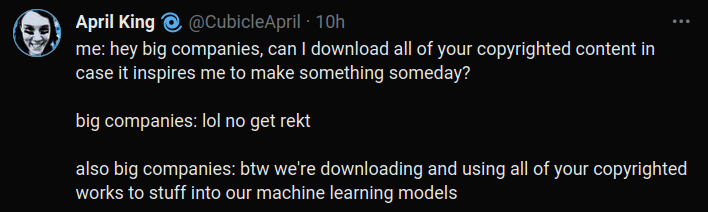

Ceterum censeo copyright is stupid but she still has a point

-

@LaoC You agreed to that. It was on page #7832 of the immortal soul contract you implicitly agreed to when you first opened facebook.com

-

Applying LLM techniques to proteins:

https://www.science.org/content/blog-post/try-antibody-over-here

That’s quite unnerving, considering that if you were handed a given antibody and told to improve it, you’d surely go after the problem via a combination of intensive structural modeling (for affinity to the antigen binding surface) and semi-random mutation (for other properties). Instead, this technique is more “OK, we know what all the other proteins look like - let’s take this sequence and make it look a bit more like those, whattaya say?” It’s finding a new way to leverage all those billions of years of natural protein evolution and extract actionable steps from it. And it looks like "improvement", from our perspective, turns out to be a wide-ranging effect.

Pretty neat.

-

@LaoC said in I, ChatGPT:

Ceterum censeo copyright is stupid but she still has a point

I understand the angst but I don't follow the logic. Big companies have allowed me to watch and consume their stuff in all sorts of ways. Well, absolute state of fax machines and all that.

-

@boomzilla said in I, ChatGPT:

Big companies have allowed me to watch and consume their stuff in all sorts of ways.

Consume, yeah. But use their content for profit without them getting a significant cut? Definitely not.

-

@Zerosquare OpenAI is clearly getting in on that, though: as third parties build products around ChatGPT, OpenAI is like 'right, we'll add that to the next version'. And so it continues, ouroboros style.

-

@Zerosquare said in I, ChatGPT:

@boomzilla said in I, ChatGPT:

Big companies have allowed me to watch and consume their stuff in all sorts of ways.

Consume, yeah. But use their content for profit without them getting a significant cut? Definitely not.

Eh, they get ripped off the same way by people remembering old shows or books or whatever all the time.

-

@boomzilla said in I, ChatGPT:

@Zerosquare said in I, ChatGPT:

@boomzilla said in I, ChatGPT:

Big companies have allowed me to watch and consume their stuff in all sorts of ways.

Consume, yeah. But use their content for profit without them getting a significant cut? Definitely not.

Eh, they get ripped off the same way by people remembering old shows or books or whatever all the time.

I’m sure Disney would stop that, too, if they could.

-

@topspin said in I, ChatGPT:

@boomzilla said in I, ChatGPT:

@Zerosquare said in I, ChatGPT:

@boomzilla said in I, ChatGPT:

Big companies have allowed me to watch and consume their stuff in all sorts of ways.

Consume, yeah. But use their content for profit without them getting a significant cut? Definitely not.

Eh, they get ripped off the same way by people remembering old shows or books or whatever all the time.

I’m sure Disney would stop that, too, if they could.

Only as long as anyone else does it. They have to be allowed to remix prior art into new things.

-

AI and vendor lock-in! What can possibly go wrong?

-

@Zecc said in I, ChatGPT:

@PleegWat said in I, ChatGPT:

@Carnage I would count any wireless vibrator as teledildonics. But I'm not sure if that helps the numbers much.

And here I thought they were all wireless. Are there some requiring a power cord?

Some properly powerful toys do have power cables.

-

Those microphones are weird-looking.

-

@Zerosquare said in I, ChatGPT:

Those microphones are weird-looking.

I have it on good authority they are throat-swabbers.

-

@Tsaukpaetra I feel like that explains so many things.

-

@DogsB said in I, ChatGPT:

now it may democratize the process of building an app, too.

Blakey, is that you?

-

@topspin said in I, ChatGPT:

@DogsB said in I, ChatGPT:

now it may democratize the process of building an app, too.

Blakey, is that you?

It'll only last until OpenAI bakes that functionality into ChatGPT itself.

-

@Carnage said in I, ChatGPT:

@Zecc said in I, ChatGPT:

@PleegWat said in I, ChatGPT:

@Carnage I would count any wireless vibrator as teledildonics. But I'm not sure if that helps the numbers much.

And here I thought they were all wireless. Are there some requiring a power cord?

Some properly powerful toys do have power cables.

I think I once saw a hobbyist video on a similar device suggesting that while the device came wired, it could easily be modified with a lithium cell which would power it for hours.

-

@PleegWat said in I, ChatGPT:

@Carnage said in I, ChatGPT:

@Zecc said in I, ChatGPT:

@PleegWat said in I, ChatGPT:

@Carnage I would count any wireless vibrator as teledildonics. But I'm not sure if that helps the numbers much.

And here I thought they were all wireless. Are there some requiring a power cord?

Some properly powerful toys do have power cables.

I think I once saw a hobbyist video on a similar device suggesting that while the device came wired, it could easily be modified with a lithium cell which would power it for hours.

Yeah it seems the actual unit runs on 13V so very probable.

-

@PleegWat Yeah, you don't need a yuuge amount of power to rotate a weight. Though, that particular wand is quite powerful compared to every other one I've tried. But it'd probably be perfectly fine with a battery.

-

@Carnage said in I, ChatGPT:

Some properly powerful toys do have power cables.

Also, don't forget the original!

-

@Zerosquare said in I, ChatGPT:

Those microphones are weird-looking.

Some people certainly do produce a lot of sound while using them.

-

@remi OTOH they don't usually expect it to be recorded

-

From shitting on AI to DIY wireless dildos. I don’t think anyone saw that derailment coming. Well done. Take a bow.

-

In case you guys didn't see it yet, OpenAI made this announcement this week:

New GPT-4 Turbo:

- We announced GPT-4 Turbo, our most advanced model. It offers a 128K context window and knowledge of world events up to April 2023.

- We’ve reduced pricing for GPT-4 Turbo considerably: input tokens are now priced at $0.01/1K and output tokens at $0.03/1K, making it 3x and 2x cheaper respectively compared to the previous GPT-4 pricing.

- We’ve improved function calling, including the ability to call multiple functions in a single message, to always return valid functions with JSON mode, and improved accuracy on returning the right function parameters.

- Model outputs are more deterministic with our new reproducible outputs beta feature.

- You can access GPT-4 Turbo by passing gpt-4-1106-preview in the API, with a stable production-ready model release planned later this year.
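
A quick back-of-the-envelope sketch of what those prices mean in practice. The Turbo prices are the ones quoted above; the previous GPT-4 prices are derived from the "3x and 2x cheaper" claim rather than looked up separately, and the token counts in the example are hypothetical.

```python
# Prices in USD per 1K tokens, as quoted in the announcement above.
GPT4_TURBO = {"input": 0.01, "output": 0.03}

# Assumption: derived from "3x and 2x cheaper respectively".
PREVIOUS_GPT4 = {
    "input": GPT4_TURBO["input"] * 3,
    "output": GPT4_TURBO["output"] * 2,
}


def cost_usd(prices, input_tokens, output_tokens):
    """Return the cost in USD of one request at the given per-1K prices."""
    return (
        (input_tokens / 1000) * prices["input"]
        + (output_tokens / 1000) * prices["output"]
    )


if __name__ == "__main__":
    # Hypothetical request: a long prompt and a short answer.
    prompt_tokens, answer_tokens = 100_000, 1_000
    for name, prices in (("turbo", GPT4_TURBO), ("previous", PREVIOUS_GPT4)):
        print(f"{name}: ${cost_usd(prices, prompt_tokens, answer_tokens):.2f}")
```

Note that the saving on a whole request depends on the input/output mix: prompt-heavy requests get close to the 3x figure, answer-heavy ones closer to 2x.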

-

@sockpuppet7 said in I, ChatGPT:

knowledge of world events up to April 2023.

barbie at cinemas in blackpool showtimes today

-

@sockpuppet7 said in I, ChatGPT:

We announced GPT-4 Turbo, our most advanced model. It offers a 128K context window and knowledge of world events up to April 2023.

Does it know what is meant by "mamelon" and "ravelin"?

-

I don't know if I mentioned this before, but one of my coworkers found an actual use for an LLM. He used it to filter the abstracts of ~15000 datasets to get the collection of actually interesting and relevant ones, which were about 800–850. Since the abstracts were basically just plain text with all sorts of their own stuff, filtering by hand would have taken forever. And we don't mind if it mis-categorises a few; just getting rid of all the archived rubbish was the object of the exercise.

Apparently the important thing was to reinitialize the context for each abstract, even though that pushed the cost up, as that reduces the rate at which the system goes completely delusional.
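
A rough sketch of what "reinitialize the context for each" could look like, and not the coworker's actual code. `ask_model` is a hypothetical callable standing in for whatever LLM client is used, and the yes/no answer convention is an assumption too. The only point is that the message list is rebuilt from scratch for every abstract, so nothing from earlier abstracts leaks into later ones.

```python
from typing import Callable, Iterable

Message = dict[str, str]


def filter_abstracts(
    abstracts: Iterable[str],
    ask_model: Callable[[list[Message]], str],
    instructions: str,
) -> list[str]:
    """Keep the abstracts for which the model answers 'yes'.

    ask_model is a hypothetical stand-in: it takes a list of chat messages
    and returns the model's reply as a string.
    """
    kept = []
    for abstract in abstracts:
        # Fresh context per abstract: the instructions are resent every time
        # (which is what pushes the cost up) instead of growing one long chat.
        messages = [
            {"role": "system", "content": instructions},
            {"role": "user", "content": abstract},
        ]
        answer = ask_model(messages)
        if answer.strip().lower().startswith("yes"):
            kept.append(abstract)
    return kept
```

Resending the instructions 15000 times is wasteful on tokens, but each decision only ever sees one abstract, which matches the trade-off described above.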

-

@boomzilla said in I, ChatGPT:

Applying LLM techniques to proteins:

https://www.science.org/content/blog-post/try-antibody-over-here

That’s quite unnerving, considering that if you were handed a given antibody and told to improve it, you’d surely go after the problem via a combination of intensive structural modeling (for affinity to the antigen binding surface) and semi-random mutation (for other properties). Instead, this technique is more “OK, we know what all the other proteins look like - let’s take this sequence and make it look a bit more like those, whattaya say?” It’s finding a new way to leverage all those billions of years of natural protein evolution and extract actionable steps from it. And it looks like "improvement", from our perspective, turns out to be a wide-ranging effect.

Pretty neat.

That's pretty amazing. Useful science FTW!

I mean, it makes absolutely no sense that this works at all, when you compare it with e.g. the effort put into predicting protein folding (computationally, by human mechanical-turks, or with other kinds of AI). But if it works, it works.

-

@topspin said in I, ChatGPT:

@boomzilla said in I, ChatGPT:

Applying LLM techniques to proteins:

https://www.science.org/content/blog-post/try-antibody-over-here

That’s quite unnerving, considering that if you were handed a given antibody and told to improve it, you’d surely go after the problem via a combination of intensive structural modeling (for affinity to the antigen binding surface) and semi-random mutation (for other properties). Instead, this technique is more “OK, we know what all the other proteins look like - let’s take this sequence and make it look a bit more like those, whattaya say?” It’s finding a new way to leverage all those billions of years of natural protein evolution and extract actionable steps from it. And it looks like "improvement", from our perspective, turns out to be a wide-ranging effect.

Pretty neat.

That's pretty amazing. Useful science FTW!

I mean, it makes absolutely no sense that this works at all, when you compare it with e.g. the effort put into predicting protein folding (computationally, by human mechanical-turks, or with other kinds of AI). But if it works, it works.

I think it makes sense. It's comparing the protein to other known stuff.

-

@GOG said in I, ChatGPT:

@sockpuppet7 said in I, ChatGPT:

We announced GPT-4 Turbo, our most advanced model. It offers a 128K context window and knowledge of world events up to April 2023.

Does it know what is meant by "mamelon" and "ravelin"?

More importantly, can it differentiate, visually, between a Mauser rifle and a javelin?

-

@Arantor said in I, ChatGPT:

More importantly, can it differentiate, visually, between a Mauser rifle and a javelin?

It is the very model of a modern language model.

-

@Arantor said in I, ChatGPT:

@GOG said in I, ChatGPT:

@sockpuppet7 said in I, ChatGPT:

We announced GPT-4 Turbo, our most advanced model. It offers a 128K context window and knowledge of world events up to April 2023.

Does it know what is meant by "mamelon" and "ravelin"?

More importantly, can it differentiate, visually, between a Mauser rifle and a javelin?

It's multimodal, so one can hope.

-

In cynical news, I have my side project and I've been wondering whether a) I can take the clever-as-hell algorithm at its heart and claim it's AI for the cheap win, or b) actually drop AI into it for no (limited?) good reason and sell it that way, because everyone's falling over themselves to implement things with AI in.

And knowing full well that even if ChatGPT did want to integrate what I have in mind, it won't do them any good because it needs much more attached stuff than just the blackbox to be useful.

-

c) Claim it's the next big thing that goes beyond AI: Real Intelligence.

-

@Zerosquare I need to be able to say it with a straight face and not entirely feel like I'm lying about it though.

-

Mmmh. You may not be quite management-ready material yet, after all.
