More Intel benchmarking shenanigans

-

It's amazing when a market leader with the best product still feels the need to skew the results in their own favor to look better than their competition. So, Intel releases a benchmark claiming up to 50% better gaming performance for the 9900K compared to the 2700X. Well...

9900K gets optimized memory timings, 2700X runs on default timings.

9900K gets a beefy Noctua cooler, 2700X gets the included cooler.

They enable Game Mode for the 2700X.

Sorry, that needs to be repeated: THEY ENABLE GAME MODE FOR THE 2700X.

Now, Game Mode is a feature meant for Threadripper CPUs, for whenever a program can't handle the large number of threads. It works by disabling one of the CCX modules, turning the 16c32t CPU into an 8c16t CPU. What happens when you enable it on a Ryzen? The exact same thing, turning the 8c16t CPU into a 4c8t CPU.

And that's not even touching the fact that all tech sites are under an NDA and forbidden to publish any benchmarks for the 9900K until its official release, meaning Intel are also using the advantage of nobody being allowed to publish real numbers for the 9900K yet.

https://www.patreon.com/posts/21950120

Ya know, Intel. First you are tending to my nerd side with that nice D12 packaging and then you remind me of why I've been eyeing AMD for a while. Good job!

-

@Atazhaia said in More Intel benchmarking shenanigans:

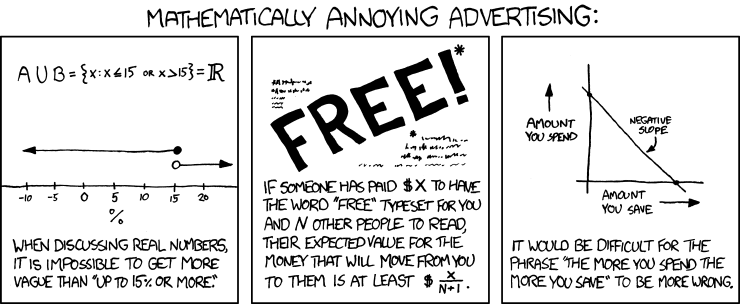

up to 50% better

Those are weasel words right there. 0.01% better would be validly counted. Arguably even making things worse would be…

-

@dkf It's like an up to 20Mbps ADSL line.

-

@PleegWat At least an up to 20Mbps ADSL line has a technical reason for being up to, as it's mainly dependent on distance from the phone exchange. Granted, the maximum would only be possible if you lived next to it but whatever.

I would assume Intel is doing this little stunt because the 9900K isn't that far ahead of the 2700X, but Intel needs to motivate that doubled pricetag somehow.

-

@Atazhaia

TRWTF is spending the scratch on an Intel X9XX series or the AMD equivalent instead of just getting the X7XX series, which is the same damn CPU except it failed like one upcheck so they disabled a couple turbo buckets, cut the price in half, and called it a day.

-

@izzion Because people want the best-of-the-best because it looks cool! But I agree, the only difference between a K/X model and a non-K/X model is that the first one runs a bit faster/has better turbo and in case of Intel also is unlocked for overclocking. (All Ryzen are unlocked regardless of extra letters.)

But yeah, for overclocking you need to pay that extra cash to Intel for that feature. Although, I can make a case for the Ryzen 2000-series X models: their dynamic overclocking is so good that it's pretty much not worth bothering to overclock them yourself, which suits people who want the benefits of OC but don't want to bother with it.

-

Something I didn't pick up on at first is that Intel calls the 9900K the "first mainstream 8c16t CPU". Then what is the Ryzen 7 according to Intel?

-

@Atazhaia said in More Intel benchmarking shenanigans:

Then what is the Ryzen 7 according to Intel?

Intel: "What's a wry-zehn?"

-

@dkf said in More Intel benchmarking shenanigans:

@Atazhaia said in More Intel benchmarking shenanigans:

up to 50% better

Those are weasel words right there. 0.01% better would be validly counted. Arguably even making things worse would be…

In the context of the benchmarks, it means the highest performance improvement recorded (in their extremely one-sided, not at all fair comparison that others are legally forbidden from challenging for a while yet) was 50%.

They don't really need to use weasel words because a benchmark is not a contract. Also, it was done by a third party, and commissioned by Intel.
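To make the "up to" reading concrete, here's a minimal sketch of how such a headline figure falls out of a set of results. The game names and FPS numbers are made up purely for illustration and are not from Intel's commissioned report; `up_to_percent` is a hypothetical helper.

```python
def up_to_percent(baseline_fps, contender_fps):
    """Return (largest, smallest) percentage gain of contender over baseline."""
    gains = {
        game: (contender_fps[game] / baseline_fps[game] - 1.0) * 100.0
        for game in baseline_fps
    }
    return max(gains.values()), min(gains.values())


# Hypothetical numbers, NOT real benchmark results.
baseline = {"game_a": 100.0, "game_b": 120.0, "game_c": 80.0}
contender = {"game_a": 150.0, "game_b": 126.0, "game_c": 80.0}

best, worst = up_to_percent(baseline, contender)
# The headline quotes only `best`; `worst` never makes it onto the slide.
print(f"up to {best:.0f}% better (worst case: {worst:.0f}%)")
```

The point being that "up to" only reports the maximum of the set, so a single outlier title is enough to produce the headline.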

-

@Atazhaia said in More Intel benchmarking shenanigans:

Something I didn't pick up on at first is that Intel calls the 9900K the "first mainstream 8c16t CPU". Then what is the Ryzen 7 according to Intel?

More to the point, what is my 8c16t i7-7820X? Is that not a mainstream CPU or something?

-

@Steve_The_Cynic said in More Intel benchmarking shenanigans:

More to the point, what is my 8c16t i7-7820X? Is that not a mainstream CPU or something?

That's a HEDT CPU, not a mainstream CPU.

-

Nvidia did the same thing recently with their new cards (20xx).

6 times better performance!

Real time ray tracing!

60 fps in 4K in every game!

And NDAs for media until release.

-

@Atazhaia said in More Intel benchmarking shenanigans:

@Steve_The_Cynic said in More Intel benchmarking shenanigans:

More to the point, what is my 8c16t i7-7820X? Is that not a mainstream CPU or something?

That's a HEDT CPU, not a mainstream CPU.

But it's been out for more than a year! Surely that makes it antiquated trash?

-

@Steve_The_Cynic HEDT moves a bit slower, so it's still the current HEDT lineup for Intel. At least until the 9xxx X-series CPUs are released.

-

@Atazhaia said in More Intel benchmarking shenanigans:

@Steve_The_Cynic HEDT moves a bit slower, so it's still the current HEDT lineup for Intel. At least until the 9xxx X-series CPUs are released.

Yeah, I know. I was yanking your chain a bit...

That said, I've been reminded recently of how painfully sluggish Win10 is if you don't have something fairly recent. My old PC is an i5-750 (4c4t, 2.8GHz) that I built in 2011, and compared to the 7820X, it's ... more ... than ... a ... bit ... slow. (Worse: neither PC has an SSD, and the difference is still dramatic.)

-

@Steve_The_Cynic My current PC is an i7-990X (6c12t, 3.47GHz) built in 2010 (and upgraded a few times during the years). And I am heavily considering an upgrade, once I can decide on what I should upgrade to.

-

@Atazhaia said in More Intel benchmarking shenanigans:

@Steve_The_Cynic My current PC is an i7-990X (6c12t, 3.47GHz) built in 2010 (and upgraded a few times during the years). And I am heavily considering an upgrade, once I can decide on what I should upgrade to.

Don't skimp on RAM speed. Once the software you're running gets big enough to spill out of the CPU's caches, if you've bought slower memory, you'll notice it.

-

@Steve_The_Cynic but also don't spend too much on it. The performance boost is heavily dependent on particular workflow, and in most workflows it's negligible.

-

@dkf said in More Intel benchmarking shenanigans:

@Atazhaia said in More Intel benchmarking shenanigans:

up to 50% better

Those are weasel words right there. 0.01% better would be validly counted. Arguably even making things worse would be…

That middle one isn't quite true. AS:RD is free and I'm expecting to lose about $3 per month running the stats website until the day I die. DFHack is free and completely run by volunteers. Dwarf Fortress is free but the website has a button you can use to buy crayon drawings.

-

@ben_lubar: but you're a pathological case.

Mathematically speaking, of course.

-

@Zerosquare said in More Intel benchmarking shenanigans:

@ben_lubar: but you're a pathological case.

Mathematically speaking, of course.

Do Go on...

-

@ben_lubar said in More Intel benchmarking shenanigans:

That middle one isn't quite true. AS:RD is free and I'm expecting to lose about $3 per month running the stats website until the day I die.

Well, it depends on what you define as “value” and how you expect to earn it.

-

@dkf said in More Intel benchmarking shenanigans:

@ben_lubar said in More Intel benchmarking shenanigans:

That middle one isn't quite true. AS:RD is free and I'm expecting to lose about $3 per month running the stats website until the day I die.

Well, it depends on what you define as “value” and how you expect to earn it.

The "value" I expect to earn is other people being happy, who may or may not ever tell me about it.

-

@ben_lubar said in More Intel benchmarking shenanigans:

The "value" I expect to earn is other people being happy, who may or may not ever tell me about it.

To bring you some value, I'm happy that I've never played it

-

@TimeBandit said in More Intel benchmarking shenanigans:

I'm happy that I've never played it

Why? You don't want to shoot guns at illegal space aliens?

-

@Jaloopa High-End Desktop. Extra expensive and extra powerful, but typically not for gaming.

-

@Atazhaia said in More Intel benchmarking shenanigans:

@Jaloopa High-End Desktop. Extra expensive and extra powerful, but typically not for gaming.

Works pretty damned well for gaming, actually.

-

@Steve_The_Cynic Yeah, but as a pure gaming PC it's kinda meh. The best gaming CPU is whatever is at the top of the mainstream segment, due to higher clocks as games are typically not very good at multithreading yet. Building something extreme like a dual 2080 Ti rig with a bunch of NVMe drives in RAID0 I can understand the need for extra PCIe lanes, though.

-

@Atazhaia said in More Intel benchmarking shenanigans:

@Jaloopa High-End Desktop. Extra expensive and extra powerful, but typically not for gaming.

Which makes this phrase:

@Atazhaia said in More Intel benchmarking shenanigans:

HEDT moves a bit slower

..a bit awkward (I pondered quoting it out of context), but we know what you meant.

-

@Atazhaia said in More Intel benchmarking shenanigans:

It's amazing when a market leader with the best product still feels the need to skew the results in their own favor to look better than their competition

Market leaders can get away with very annoying things. I started noticing this as a pattern after Samsung started installing crapware on its flagship phones. Now I avoid any market leader at anything.

-

@sockpuppet7 many market leaders are there for a reason.

-

@Atazhaia said in More Intel benchmarking shenanigans:

The best gaming CPU is whatever is at the top of the mainstream segment, due to higher clocks as games are typically not very good at multithreading yet.

Is that actually still the case? I thought that with the modern console designs (PS4 and Xbone have 4-8 slow cores) that games have been needing to be multithreaded more heavily for a while now.

-

@Unperverted-Vixen said in More Intel benchmarking shenanigans:

@Atazhaia said in More Intel benchmarking shenanigans:

The best gaming CPU is whatever is at the top of the mainstream segment, due to higher clocks as games are typically not very good at multithreading yet.

Is that actually still the case? I thought that with the modern console designs (PS4 and Xbone have 4-8 slow cores) that games have been needing to be multithreaded more heavily for a while now.

Still the case as far as I can see, I generally find one or two cores maxed out while the rest aren't doing a great deal.

I'm sure it's very game/engine dependent though.

-

@Gąska said in More Intel benchmarking shenanigans:

@sockpuppet7 many market leaders are there for a reason.

That, and pretty much anyone can get away with anything these days. Social media outrages can at best tank stock temporarily, but usually there's so much outrage over one or another thing all the time that any given one doesn't last long enough to notably impact long term sales, I'm afraid.

@Unperverted-Vixen said in More Intel benchmarking shenanigans:

Is that actually still the case? I thought that with the modern console designs (PS4 and Xbone have 4-8 slow cores) that games have been needing to be multithreaded more heavily for a while now.

They have, but "there's no replacement for displacement". A game can only run as fast as its main loop does.
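The usual way to put numbers on this is Amdahl's law (my choice of framing, it wasn't named above). A rough sketch, assuming a made-up split where only half of each frame's work can be spread across cores:

```python
def amdahl_speedup(parallel_fraction, cores):
    """Amdahl's law: overall speedup when only part of the work scales with cores."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)


# Assumption for illustration: 50% of the frame time is stuck in the main loop.
for cores in (4, 8, 16):
    print(cores, "cores ->", round(amdahl_speedup(0.5, cores), 2), "x")
```

With that assumed 50/50 split the speedup can never reach 2x no matter how many cores you add, which is why a faster single core still helps more than extra ones.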

-

@Cursorkeys Multithreaded optimisations are coming, just I guess the game industry is slow to adapt. It's why Intel still is king for gaming, as their CPUs are better at singlethreaded/fewthreaded performance.

Which also makes the whole thing about the 8-core 9900K being the "world's best gaming CPU" kinda ridiculous, because it's not mainly about the number of cores. It's that it has the best singlethreaded performance, which is shown by it not being significantly better than an 8700K/7700K for gaming (based off the information I've heard). It's just IPC improvements and a higher clock rate that allow it to be better. Multithreaded performance seems to be just ~10% better compared to a Ryzen 2700X, but for twice the price.

Natural progression should make multithreading better over time. But right now one of the things I would be interested in is seeing what could be done by taking a 4-core CPU and maxing out the speeds under the current TDP ceiling. Just to see how much higher it would go and how it would compare in game performance to an 8-core CPU.

-

A bigger problem for games I think is that the game models don't benefit much from the things the CPU is good at. You could have better physics, but unless you design game systems around those physics there is no point. Or better AI, but again you need something interesting for the AI to do that enriches gameplay.

The limitations aren't technological, the technology has been there for years. It's a lack of imagination that is holding them back.

-

@Kian said in More Intel benchmarking shenanigans:

game models don't benefit much from the things the CPU is good at

Let me phrase that in a different manner: an entire class of computing problems doesn't benefit much from being split (if that's possible at all) into a number of independently executable parts.

@Kian said in More Intel benchmarking shenanigans:

It's a lack of imagination

I'd argue, perhaps lack of necessity. You can imagine the most capable AI, but it will be useless. It will win every time. FEAR had a capable AI. And look at the shit suicidal cannon fodder we've got almost 15 years of... uh, innovation later. Because the general populace doesn't really want smart opponents. They want to shoot illegal aliens. The more capable bots become, the more difficult becomes the problem to make them pretend that they aren't.

-

@Applied-Mediocrity

They could at least apply their advances in AI to my units so that when I'm using a massive horde of onagers to AOE the crap out of the enemy base, my own units don't commit mass fratricide

-

There's a reason for AI being shit in games.

People in general don't want smarter enemies.

I did my masters thesis in building an AI from pretty much a list of AI techniques, that felt like playing against a human in FPSes. Two decades ago. It's not that hard to do. I was utter shit at programming back then, like most people not even yet out of school. :D

-

@Applied-Mediocrity said in More Intel benchmarking shenanigans:

I'd argue, perhaps lack of necessity. You can imagine the most capable AI, but it will be useless. It will win every time.

That's exactly why I said it's lack of imagination. We know how to make better, more humanlike ai, and that's just not fun. We haven't yet come up with a way to leverage the technology to make games more fun.

-

@Carnage @Applied-Mediocrity

The rationale of "people don't want to play against good AI" makes sense to me. And yet OpenAI Five required a restriction of removing over 80% of the champion pool (including all of the "mechanically difficult" champions), and still wound up being pretty one dimensional and exploitable by the humans after a few games to see what it was doing.

Do you think that's a limitation of the neural-net "self training" approach, or endemic to AI?

-

@izzion said in More Intel benchmarking shenanigans:

Do you think that's a limitation of the neural-net "self training" approach, or endemic to AI?

I wonder how much of it comes from the AI's perception compared to a human. How much do you notice? Do you always put an appropriate weight on everything's importance? If an AI is more consistently noticing what's going on that could have a huge effect on its performance.

-

@boomzilla said in More Intel benchmarking shenanigans:

If an AI is more consistently noticing what's going on that could have a huge effect on its performance.

Or if the AI knows about game events that are going on that it logically shouldn't know about and is pre-working to counter it.

-

@boomzilla

With the caveat that I don't specifically play DOTA (just one of its kid-cousin MOBAs), it appeared that the primary thing the DOTA bots ran into was that their self-training basically got into the part of determining "oh, hey, getting map control wins games" and never advanced to the part of "stalling the enemy's gain of map control while building up for late game lets us build advantages that makes the results more consistent and skill-based". So the AI would just throw a bunch of heroes at a single lane of towers and ram that lane down. At first, when the humans tried to answer it head on, they got smashed, because the bots "team fought" better (what do you know, electronic reflexes, even when set to "simulate" humans with a fixed delay of 80ms, are still faster/better). But when the humans adjusted to a strategy that could weaken / slow the push without directly engaging the bots, the AI kind of got lost and just kept shifting lane to lane to try to force things down, and fell way behind in resources since they weren't trying to farm the minions.

@e4tmyl33t

And, from my understanding, they did make sure the AI only had the game state knowledge that a human would have had - limited vision, etc.

-

@izzion there are a few different angles to keep in mind. One is strategy vs skill: in a fighting game, for example, an ai could pull frame perfect moves, parrying every attack and exploiting every opening of an opponent and be unbeatable, or a bot in a fps could have perfect aim and land every headshot a frame after you show your face.

As games require more than pure technical skill, the balance turns in favor of humans, as winning strategies can't be trivially coded. In your example though, I suspect that's more a limitation of neural nets. Expert systems work better for games in general.

-

@Kian said in More Intel benchmarking shenanigans:

@izzion there are a few different angles to keep in mind. One is strategy vs skill: in a fighting game, for example, an ai could pull frame perfect moves, parrying every attack and exploiting every opening of an opponent and be unbeatable, or a bot in a fps could have perfect aim and land every headshot a frame after you show your face.

As games require more than pure technical skill, the balance turns in favor of humans, as winning strategies can't be trivially coded. In your example though, I suspect that's more a limitation of neural nets. Expert systems work better for games in general.

And, that, I think, is what causes most of the consternation in the "debate" around AI and the merits of the term (and the frustrations of people thinking the "bots" are too hard). I wouldn't classify raw technical skill as "intelligence" -- rather, the intelligence part of the computer player would be the ability to play / "think" strategically and adapt its play style as the game state changed.

I agree, having computer player difficulty be the result of super-human execution (or just raw cheating, like the AI in Civ games at least up to Civ 4 -- I haven't played seriously since then to know how they handle difficulty in newer versions, but up to and including Civ 4, they made the AI more difficult by giving it more starting resources and more production bonuses) is ultimately nothing but an exercise in masochism and frustration. And it really grinds my gears to hear proclamations about "advances in AI" that ultimately come down to yet another dose of just turning the cheat dial up another notch.

-

@Carnage said in More Intel benchmarking shenanigans:

There's a reason for AI being shit in games.

People in general don't want smarter enemies.

I did my masters thesis in building an AI from pretty much a list of AI techniques, that felt like playing against a human in FPSes. Two decades ago. It's not that hard to do. I was utter shit at programming back then, like most people not even yet out of school. :D

Sounds interesting. Where can we find it?

-

@izzion right, rubber banding is the same in racing games. You want to give the player a consistent challenge no matter how far ahead they are, so they don't get bored of winning too easily, so you cheat.

At a certain point it's understandable. Players may not be able to distinguish a smart ai from a cheating one, so why go to the trouble of making one smart? If you are going to send a million NPCs to their deaths to be stomped on by the player, do you need them to be making sound strategic decisions? Your goal as a game designer is to entertain, not to advance the field of ai.
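For anyone who hasn't seen it, rubber banding can be as dumb as the following sketch. The function name, the `strength` and `max_boost` parameters and their values are all invented for illustration, not taken from any particular game.

```python
def rubber_band_speed(base_speed, ai_position, player_position,
                      strength=0.002, max_boost=0.25):
    """Scale an AI racer's speed by how far it is behind (or ahead of) the player.

    All tuning values here are hypothetical; real games pick their own.
    """
    gap = player_position - ai_position  # positive when the player is ahead
    # Clamp the cheat so the AI never gets more than max_boost faster or slower.
    boost = max(-max_boost, min(max_boost, gap * strength))
    return base_speed * (1.0 + boost)


# Player is 50 units ahead: the AI quietly gets roughly 10% more speed.
print(rubber_band_speed(100.0, ai_position=0.0, player_position=50.0))
```

No decision making involved at all, just a speed multiplier tied to the gap, which is exactly why it's so much cheaper than making the opponents smart.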

-

There are two very different things called "AI" in video games:

- Bots that emulate players. These are closer to actual AI.

- NPCs running scripts. These are not AI in any sense of the word, but for some reason are still called AI by nearly everyone. A boss fight in a video game is heavily scripted.

If you're making the first category, you run into a major problem: some things that are easy for computers are hard for humans, and some things that are easy for humans are nearly impossible for computers.

For example, in any kind of game where you can aim a projectile, a computer could calculate the perfect shot and immediately make it. However, this wouldn't be fun, so bots in these types of games usually have code specifically to make them worse at aiming or to shoot more bullets than they need to.
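As a rough sketch of what "code specifically to make them worse at aiming" can look like: compute the perfect angle, then deliberately blur it. The `skill` parameter, the 10 degree maximum error and the 2D setup are assumptions made for this example, not how any specific game does it.

```python
import math
import random


def bot_aim_angle(shooter, target, skill, rng=random):
    """Return the angle (radians) a bot fires at, with deliberate error.

    skill is in [0, 1]; 1.0 means perfect aim. The 10 degree cap on the
    error is an arbitrary value chosen for illustration.
    """
    perfect = math.atan2(target[1] - shooter[1], target[0] - shooter[0])
    max_error = math.radians(10.0) * (1.0 - skill)
    return perfect + rng.uniform(-max_error, max_error)


# A mediocre bot shooting from the origin at a target up and to the right.
print(bot_aim_angle((0.0, 0.0), (1.0, 1.0), skill=0.5))
```

The computer finds the perfect shot in one line; everything after that is there to throw the result away in a way that feels human.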