Programmatically generating sound in real time depending on other events

-

Consider this self-inflicted homework.

They say that generating audio is easy. You take a portable library that abstracts the sound input/output API of different OSes; you write a callback function that generates the audio you need; you hook it up to the API of the library that you chose, and off you go!

The libraries I've seen strongly prefer me to give them a callback function. For example, while PortAudio 'also supports a "Blocking I/O" model which uses read and write calls which may be more familiar to non-audio programmers', 'not all APIs support this functionality'. And libsoundio doesn't even imply anything besides the callback model, so I guess that the audio library will have to control the main loop here. Callback functions are supposed to return the sound they are requested to produce fairly quickly (no I/O, scheduling or mutexes) and in very precise amounts ("write at minimum frame_count_min frames and at maximum frame_count_max frames" ... For some backends "frame_count_min will be equal to frame_count_max").

The problem is, since the sound library controls my main loop and the callback isn't allowed to do anything interesting, how am I supposed to gather the outside events I'm generating the sounds in response to? To be specific, let's say I want my application to sound a click every time a socket becomes readable. If I wasn't generating sound, there would be a wide array of options ranging from Berkeley sockets API and poor old poll to asynchronous platform-abstract callbacks, but since the audio callback isn't supposed to do even non-blocking I/O (is it?), I don't see a way to do this without running two event loops at the same time, and that seems to require multiple threads (unless one is lucky to be writing in a language with native async/await and everything integrated in one event loop, I suppose). And running two threads only to have both of them spend time blocked waiting for events seems to me like bad design.

What's the correct solution here? How do voice chat applications do this, for example?

Should I go on with the two thread idea? That would imply a thread-safe ring buffer to store generated audio in, and somehow providing a mutex-free way for the audio thread to access it without formally invoking nasal demons. That sounds way too complicated (atomically updating shared offset value... manually issuing memory barriers after each write... I don't want to go this way).

Is the answer "get a smarter event loop" (including "use a language capable of async/await")? I suppose that Qt could be such a solution, since it integrates a lot of stuff.

-

@aitap said in Programmatically generating sound in real time depending on other events:

Should I go on with the two thread idea? That would imply a thread-safe ring buffer to store generated audio in, and somehow providing a mutex-free way for the audio thread to access it without formally invoking nasal demons. That sounds way too complicated (atomically updating shared offset value... manually issuing memory barriers after each write... I don't want to go this way).

This does seem like the way to go. And from the libsoundio page it seems like you can use mutexes, but you just can't wait for them. E.g. your sound callback should/could just return silence if the mutex is already locked.
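A minimal sketch of that "try the lock, otherwise play silence" idea, assuming POSIX threads and 16-bit mono samples. The names `shared_audio` and `fill_or_silence` are hypothetical and not part of libsoundio or PortAudio; the function is meant to be called from whatever callback the library hands you.

```c
#include <assert.h>
#include <pthread.h>
#include <stddef.h>
#include <stdint.h>
#include <string.h>

/* Hypothetical shared state: a producer thread appends to `pending`
 * while holding `lock`; the audio callback only ever try-locks it. */
struct shared_audio {
    pthread_mutex_t lock;
    int16_t pending[256];
    size_t pending_len;            /* number of valid samples in `pending` */
};

/* Hypothetical callback body: always writes exactly `frames` samples.
 * Returns 1 if queued samples were copied, 0 if only silence was written. */
static int fill_or_silence(struct shared_audio *s, int16_t *out, size_t frames)
{
    assert(s != NULL && out != NULL);
    memset(out, 0, frames * sizeof out[0]);        /* default to silence */

    if (pthread_mutex_trylock(&s->lock) != 0)
        return 0;                  /* lock is busy: never wait, stay silent */

    size_t n = s->pending_len < frames ? s->pending_len : frames;
    memcpy(out, s->pending, n * sizeof out[0]);
    /* Move whatever was not consumed to the front of the staging area. */
    memmove(s->pending, s->pending + n,
            (s->pending_len - n) * sizeof s->pending[0]);
    s->pending_len -= n;

    pthread_mutex_unlock(&s->lock);
    return n > 0;
}
```

The trade-off is audible: whenever the producer happens to hold the lock, the callback emits a block of silence, so the producer should keep its critical section as short as it can.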

-

@aitap said in Programmatically generating sound in real time depending on other events:

Callback functions are supposed to return the sound they are requested to produce fairly quickly (no I/O, scheduling or mutexes) and in very precise amounts ("write at minimum frame_count_min frames and at maximum frame_count_max frames" ... For some backends "frame_count_min will be equal to frame_count_max").

This definitely reads like the audio backend assumes it is in control of a thread. This may well be required to meet timing constraints. So yes, you'll need a second thread for other work.

-

@aitap said in Programmatically generating sound in real time depending on other events:

since the audio callback isn't supposed to do even non-blocking I/O (is it?), I don't see a way to do this without running two event loops at the same time

I had a very swift look through the implementation. It looks… complicated with a lot of juggling to get its desired soft-realtime operation mode working right. Put it in its own thread. (I think it'd be able to get rid of a lot of the complexity if it wasn't supporting lots of different underlying APIs, but the point of a portable library is to hide that stuff.)

-

Concurring with the other people who say "put it in its own thread." That's the only reasonable way to do a low-level audio API.

-

@aitap said in Programmatically generating sound in real time depending on other events:

Consider this self-inflicted homework.

@PaulaBean said in About the Coding Help category:

No homework problems please.

-

@aitap said in Programmatically generating sound in real time depending on other events:

And running two threads only to have both of them spend time blocked waiting for events seems to me like bad design.

Not at all. Things like this are done all the time. Go for it.

@aitap said in Programmatically generating sound in real time depending on other events:

That would imply a thread-safe ring buffer to store generated audio in, and somehow providing a mutex-free way for the audio thread to access it without formally invoking nasal demons. That sounds way too complicated (atomically updating shared offset value... manually issuing memory barriers after each write... I don't want to go this way).

There exist lockless queue algorithms. Just google up a library for your language of choice and let it do the work. While I never checked it, there might be some lockless ring buffers too.
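For a sense of scale, here is a sketch of a single-producer/single-consumer lock-free ring buffer using C11 atomics. It assumes exactly one thread calls `ring_push` and exactly one other thread calls `ring_pop`; the names and the capacity are made up for illustration, and a tested library is still the safer choice for real use.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stdbool.h>
#include <stddef.h>
#include <stdint.h>

#define RING_CAPACITY 1024u        /* hypothetical size; must be a power of two */
static_assert((RING_CAPACITY & (RING_CAPACITY - 1u)) == 0,
              "capacity must be a power of two");

struct spsc_ring {
    _Atomic size_t head;           /* next slot to read; written only by the consumer */
    _Atomic size_t tail;           /* next slot to write; written only by the producer */
    int16_t data[RING_CAPACITY];
};

/* Producer side. Returns false (and stores nothing) if the ring is full. */
static bool ring_push(struct spsc_ring *r, int16_t v)
{
    size_t tail = atomic_load_explicit(&r->tail, memory_order_relaxed);
    size_t head = atomic_load_explicit(&r->head, memory_order_acquire);
    if (tail - head == RING_CAPACITY)
        return false;
    r->data[tail & (RING_CAPACITY - 1u)] = v;
    /* Release: the sample must be visible before the new tail is. */
    atomic_store_explicit(&r->tail, tail + 1, memory_order_release);
    return true;
}

/* Consumer side. Returns false if the ring is empty. */
static bool ring_pop(struct spsc_ring *r, int16_t *out)
{
    size_t head = atomic_load_explicit(&r->head, memory_order_relaxed);
    size_t tail = atomic_load_explicit(&r->tail, memory_order_acquire);
    if (head == tail)
        return false;
    *out = r->data[head & (RING_CAPACITY - 1u)];
    /* Release: the slot may be overwritten only after it has been read. */
    atomic_store_explicit(&r->head, head + 1, memory_order_release);
    return true;
}
```

The indices are never reduced modulo the capacity; they simply keep counting, and unsigned wraparound keeps `tail - head` correct because the capacity is a power of two. The acquire/release pairs take the place of the "manually issued memory barriers" from the original question.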

-

Everyone, thanks for your advice!

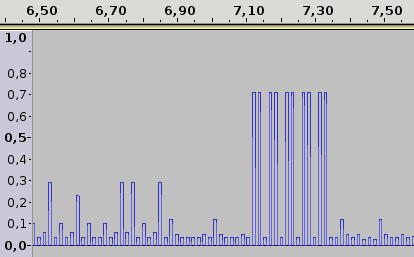

I now have a little program that emits a click for every network packet it sees, like a Geiger counter, with the amplitude proportional to the packet size:

It even works with the naive implementation of a mutex-protected ring buffer if I set the click length to a high enough value. Sounds like farts though; I should synthesize something more involved than square waves.

-

@aitap said in Programmatically generating sound in real time depending on other events:

It even works with the naive implementation of a mutex-protected ring buffer

If you're on Windows, that's not terribly surprising. On Linux, there's a very real chance it wouldn't have worked as well because for some reason acquiring a lock on an unlocked mutex is very slow.

-

Audio is a pain because you have to constantly feed samples at a fixed rate. Having a thread in charge of generating/reading those samples and talking to the audio subsystem is the only way that makes sense really.

I would have the entire ring buffer owned by that thread and your way of interacting with it is then not 'write samples into the buffer' but 'tell the audio thread that an event happened', and have it generate the samples and manage the buffer itself, unless you think sample generation is going to be so slow that thread can't keep up.

-

@bobjanova said in Programmatically generating sound in real time depending on other events:

Audio is a pain because you have to constantly feed samples at a fixed rate.

Only in extremely low-level programming, which is why basically every audio library ever has a high-level "play sound" API that takes a complete sound as input and handles all the details of playing it back under the hood.

Honestly, I think even the libraries having to do that is . If you can feed OpenGL an entire multi-megabyte image and give it a few simple commands that say "draw this image at these coordinates" and leave the mechanics of it to the hardware, why can't you do the same with audio? Is there any good reason why you can't feed your audio hardware a 20 MB uncompressed buffer and say "play this back" and let it handle the details?

-

@Mason_Wheeler Only in extremely low-level programming, which is why basically every audio library ever has a high-level "play sound" API

Well yeah but the OP here sounds like he wants to play with the low level API. You can feed high level frameworks audio files and tell them to play them - in a game engine you can typically tell it to play it at some location, too, so it handles the volume and stereo effects.

It isn't done at the hardware level though, there is no concept of 'clever' sound cards like how graphics cards have the shaders and rendering pipeline in hardware. I guess that's because sound isn't a complicated enough thing to justify moving it off the CPU, and it's not parallel in the same way which is why specialised hardware is a winner for graphics.

-

@Mason_Wheeler said in Programmatically generating sound in real time depending on other events:

Is there any good reason why you can't feed your audio hardware a 20 MB uncompressed buffer and say "play this back" and let it handle the details?

Two reasons. One is that the audio chipset is perhaps the most archaic part of a modern PC and is shit quality. The other is that it's a very niche use case - basically every kind of software needs or prefers short buffers updated periodically over having to provide a full minute of samples up front. Even media players only decode a small chunk of the file at a time.

-

@bobjanova said in Programmatically generating sound in real time depending on other events:

there is no concept of 'clever' sound cards

Worse. Such concepts do exist, but never had enough market penetration to matter.

-

@Mason_Wheeler said in Programmatically generating sound in real time depending on other events:

Is there any good reason why you can't feed your audio hardware a 20 MB uncompressed buffer and say "play this back" and let it handle the details?

Actually, that's how /dev/audio worked on some Unix systems in the 90s (SGI? DUX? both? can't remember) and contemporary Linux versions. The obvious problem was that the format was hardcoded (22 kHz 8-bit mono, if I remember correctly) and sending the wrong format was quite... unpleasant.

-

@dkf said in Programmatically generating sound in real time depending on other events:

@bobjanova said in Programmatically generating sound in real time depending on other events:

there is no concept of 'clever' sound cards

Worse. Such concepts do exist, but never had enough market penetration to matter.

I remember the very short window when sound cards with a hardware mixer were actually commercially available to the general public at a reasonable price (only twice as expensive as the cheapest no-name card).

-

@Kamil-Podlesak said in Programmatically generating sound in real time depending on other events:

@Mason_Wheeler said in Programmatically generating sound in real time depending on other events:

Is there any good reason why you can't feed your audio hardware a 20 MB uncompressed buffer and say "play this back" and let it handle the details?

Actually, that's how /dev/audio worked on some Unix systems in the 90s (SGI? DUX? both? can't remember) and contemporary Linux versions.

You can argue /dev/audio is a high-level API that does all the buffer fiddling for you.

-

@Gąska That's on Linux, but each click has to take upwards of ~10ms, which means that many packets have to be skipped.

-

@Kamil-Podlesak Even now I guess I could open a pipe to aplay -f $whatever, take its fd, nicely ask libpcap for its own fd (not guaranteed, but should work on Linux) and stuff both fds into epoll, doing everything in a single thread, but in an extremely unportable manner.
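A Linux-only sketch of the single-threaded variant, showing just the epoll part. The helpers `watch` and `wait_one` are hypothetical; in the real program one descriptor would come from libpcap (for example via `pcap_get_selectable_fd`) and be watched for `EPOLLIN`, while the write end of the pipe to aplay would be watched for `EPOLLOUT`.

```c
#include <assert.h>
#include <stdint.h>
#include <sys/epoll.h>
#include <unistd.h>

/* Register `fd` on the epoll instance `ep` for the given event mask
 * (EPOLLIN for the capture fd, EPOLLOUT for the pipe to the player).
 * Returns 0 on success, -1 on error, like epoll_ctl itself. */
static int watch(int ep, int fd, uint32_t events)
{
    struct epoll_event ev = { .events = events, .data.fd = fd };
    return epoll_ctl(ep, EPOLL_CTL_ADD, fd, &ev);
}

/* Wait up to `timeout_ms` for one registered descriptor to become ready.
 * Returns that descriptor, or -1 on timeout or error. */
static int wait_one(int ep, int timeout_ms)
{
    struct epoll_event ev;
    int n = epoll_wait(ep, &ev, 1, timeout_ms);
    return n == 1 ? ev.data.fd : -1;
}
```

The main loop would then call `wait_one`, read a packet when the capture descriptor is ready, and write samples when the pipe is writable. Timing is left to the pipe's buffering, which is part of why this is simpler but less precise than a callback API.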

-

@bobjanova said in Programmatically generating sound in real time depending on other events:

I would have the entire ring buffer owned by that thread and your way of interacting with it is then not 'write samples into the buffer' but 'tell the audio thread that an event happened', and have it generate the samples and manage the buffer itself, unless you think sample generation is going to be so slow that thread can't keep up.

I think that's what I ended up doing. The pcap thread writes events (packet sizes) into a ring buffer, and the sound thread synthesises clicks for each event it takes out of the ring buffer, or zeroes if the ring buffer is empty. Did you mean a different design?
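The design described here could look roughly like the sketch below. This is not the actual program: `click_synth`, `post_packet`, `render`, the click length and the 1500-byte cap are all assumptions chosen for illustration, and the event queue is a minimal single-producer/single-consumer ring built on C11 atomics.

```c
#include <assert.h>
#include <stdatomic.h>
#include <stddef.h>
#include <stdint.h>

#define EVENTS 256u                /* queue capacity, a power of two (assumed) */
#define CLICK_FRAMES 64            /* hypothetical click length in frames */

struct click_synth {
    _Atomic size_t head, tail;     /* SPSC queue indices */
    uint32_t sizes[EVENTS];        /* queued packet sizes */
    int remaining;                 /* frames left in the current click (audio thread only) */
    int32_t amplitude;             /* current amplitude (audio thread only) */
};

/* Capture thread: queue one packet size. Returns 0 if the queue is full. */
static int post_packet(struct click_synth *s, uint32_t size)
{
    size_t tail = atomic_load_explicit(&s->tail, memory_order_relaxed);
    if (tail - atomic_load_explicit(&s->head, memory_order_acquire) == EVENTS)
        return 0;
    s->sizes[tail % EVENTS] = size;
    atomic_store_explicit(&s->tail, tail + 1, memory_order_release);
    return 1;
}

/* Audio thread: write exactly `frames` mono samples, zeroes when idle. */
static void render(struct click_synth *s, int16_t *out, size_t frames)
{
    assert(s != NULL && out != NULL);
    for (size_t i = 0; i < frames; i++) {
        if (s->remaining == 0) {   /* idle: try to start the next click */
            size_t head = atomic_load_explicit(&s->head, memory_order_relaxed);
            if (head != atomic_load_explicit(&s->tail, memory_order_acquire)) {
                uint32_t size = s->sizes[head % EVENTS];
                atomic_store_explicit(&s->head, head + 1, memory_order_release);
                if (size > 1500u)
                    size = 1500u;  /* cap at an assumed maximum packet size */
                s->amplitude = (int32_t)(size * 32767u / 1500u);
                s->remaining = CLICK_FRAMES;
            }
        }
        if (s->remaining > 0) {
            /* Square wave flipping every 8 frames, with a simple decay. */
            int sign = ((s->remaining / 8) & 1) ? -1 : 1;
            out[i] = (int16_t)(sign * s->amplitude);
            s->amplitude -= s->amplitude / 16;
            s->remaining--;
        } else {
            out[i] = 0;
        }
    }
}
```

Only packet sizes cross the thread boundary, so the queue stays tiny and the audio thread never waits on the capture thread. Events that arrive faster than clicks can be played simply pile up until `post_packet` starts dropping them.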

-

@Mason_Wheeler said in Programmatically generating sound in real time depending on other events:

Honestly, I think even the libraries having to do that is . If you can feed OpenGL an entire multi-megabyte image and give it a few simple commands that say "draw this image at these coordinates" and leave the mechanics of it to the hardware, why can't you do the same with audio? Is there any good reason why you can't feed your audio hardware a 20 MB uncompressed buffer and say "play this back" and let it handle the details?

OpenAL used to work that way. In the simplest form, you allocated buffers, filled them, and then queued them for playback. OpenAL would then mix (and do some other optional transformations) and push the audio to the playback device. I don't remember the full details, but I'm fairly sure you would then ask OpenAL for what buffers were finished playing so that you could reuse them. For streaming something, you could pick the buffer size, where shorter buffers = lower latency, but more burden on queuing new ones at a sufficient rate, longer buffers = higher latency, but less chance of underrunning.
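To put numbers on the buffer-size trade-off, here is a small sketch that converts a buffer length into the time it covers. The helper names are hypothetical and nothing here calls OpenAL; it is only the arithmetic.

```c
#include <assert.h>
#include <stdint.h>

/* Playback time of one buffer of `frames` frames, in microseconds. */
static uint64_t buffer_duration_us(uint32_t frames, uint32_t sample_rate)
{
    assert(sample_rate > 0);
    return (uint64_t)frames * 1000000u / sample_rate;
}

/* Delay before newly queued audio is heard when `queued` buffers
 * of that size are already waiting ahead of it. */
static uint64_t queued_latency_us(uint32_t frames, uint32_t sample_rate,
                                  uint32_t queued)
{
    return buffer_duration_us(frames, sample_rate) * queued;
}
```

At 48 kHz, a 480-frame buffer lasts 10 ms and a 4800-frame buffer lasts 100 ms. With three 480-frame buffers queued, new audio starts about 30 ms late, but the application has to hand over a fresh buffer every 10 ms to avoid an underrun.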

Conceptually that was quite easy and convenient, but otherwise OpenAL wasn't ever a great API. It suffered from the same global-context, global-state design as OpenGL, which is a pain. Towards the end, there was an open-source, somewhat-maintained code base, but it seemed to stagnate (and at some point I stopped bothering keeping track). FWIW, the open source implementation called platform APIs, and did so via an internal thread that also performed the mixing (where needed).

-

@Gąska said in Programmatically generating sound in real time depending on other events:

On Linux, there's a very real chance it wouldn't have worked as well because for some reason acquiring a lock on an unlocked mutex is very slow.

Out of curiosity, where do you see this? I thought that the mutexes based on pthread_mutex use futexes internally, meaning that acquiring an uncontended lock is essentially an atomic CAS (plus a function call or two for API reasons, but no kernel transition). AFAIK, this is the same as CRITICAL_SECTION on Windows.

-

@dkf said in Programmatically generating sound in real time depending on other events:

Worse. Such concepts do exist, but never had enough market penetration to matter.

They did for a while, though, didn't they? Creative had the whole EAX thing in their cards, which I think was originally in hardware (and the cards were at least somewhat common). Later, CPUs kinda caught up in performance, the whole processing was moved to software, and nobody has a Creative sound card anymore.

Even at something like 16 channels, 32 bits, 96 kHz just isn't that much data to process anymore. (Albeit .. consoles still have specialized audio co-processors, unless I'm mistaken.)
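As a quick check of that claim, here is a sketch that computes the raw data rate of uncompressed PCM. The function name is made up for illustration.

```c
#include <assert.h>
#include <stdint.h>

/* Bytes per second of uncompressed PCM for the given stream layout. */
static uint64_t pcm_bytes_per_second(uint32_t channels,
                                     uint32_t bits_per_sample,
                                     uint32_t sample_rate)
{
    assert(bits_per_sample % 8 == 0);
    return (uint64_t)channels * (bits_per_sample / 8) * sample_rate;
}
```

For 16 channels of 32-bit samples at 96 kHz this gives 6,144,000 bytes per second, roughly 6 MB/s.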

-

@cvi said in Programmatically generating sound in real time depending on other events:

@Gąska said in Programmatically generating sound in real time depending on other events:

On Linux, there's a very real chance it wouldn't have worked as well because for some reason acquiring a lock on an unlocked mutex is very slow.

Out of curiosity, where do you see this? I thought that the mutexes based on pthread_mutex use futexes internally, meaning that acquiring an uncontended lock is essentially an atomic CAS (plus a function call or two for API reasons, but no kernel transition). AFAIK, this is the same as CRITICAL_SECTION on Windows.

I misremembered it a little. It wasn't a mutex, it was an rw-lock.

https://fy.blackhats.net.au/blog/html/2018/10/19/rust_rwlock_and_mutex_performance_oddities.html

That said, a lock-free ring buffer would be the ideal solution for the problem in the OP.

-

@cvi said in Programmatically generating sound in real time depending on other events:

Albeit .. consoles still have specialized audio co-processors, unless I'm mistaken.

AFAIK you are.

-

@Gąska Guess a quick Google search is needed then.

PS4: https://en.wikipedia.org/wiki/PlayStation_4_technical_specifications#Audio_processing_unit

(Apparently that one also exists in normal AMD PCs.)

Xbone: https://semiaccurate.com/2013/09/03/xbox-ones-sound-block-is-much-more-than-audio/

Edit: And PS5 likely has something too: https://www.vg247.com/2020/03/18/ps5-3d-audio-tempest-engine/

-

@cvi Oh well. ¯\_(ツ)_/¯