Moar Cooties

-

Is this forum software able to roll back its version? Might be nicer to roll back to a known stable version next time.

accalia is willing to maintain a test server and deployment process; I suggest you consider taking her up on that.

-

@Buddy said in Moar Cooties:

Is this forum software able to roll back its version?

Usually, yes. If there have been schema changes, then no. The last one was 25 days ago.

-

@julianlam would rolling back now fix the current issue? Are there any downsides to trying it?

-

@Buddy Wait, we're still having an issue...?

-

...leads to:

-

@boomzilla it seems things were the best around 13:00. Can we go back to that?

-

@RaceProUK Much more telling was the length of the I/O queue, apparently.

WTF is going on? Is it a spider gorging itself on all the old content? Those have a nasty habit of breaking all the caches and so shitting on responsiveness for everyone else…

-

@boomzilla with a little hump on the right hand side, you get a badly drawn cock to send to Jeff.

-

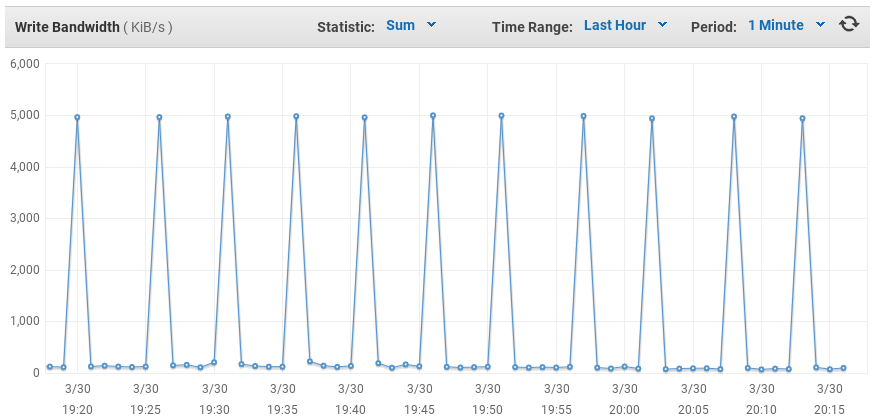

Why are we writing 5MB/s every 5 minutes? /cc @julianlam

-

@ben_lubar journaling?

What's our commitIntervalMS in mongo?

-

ORLY?

-

@HardwareGeek If it wasn't for the fact there's more than one of us in SockDev, I'd be so tempted to change that to "It's all fucking shit"

-

Another point in favor of something like spiders driving cootie storms:

-

@boomzilla I just told GoogleBot to slow down.

New crawl rate: 0.1 requests per second

Status: Begin within 2 days, effective for 90 days.

-

@ben_lubar is that the lowest it'll go without being off?

Do we have nginx logs/stats?

-

@swayde said in Moar Cooties:

Do we have nginx logs/stats?

Unedited except for redactions:

$ tail /var/log/nginx/access.log
66.249.66.165 - - [30/Mar/2016:22:25:42 +0000] "GET /socket.io/?EIO=3&transport=polling&t=LF4qU5Q&sid=[redacted] HTTP/1.1" 400 52 "https://what.thedailywtf.com/topic/19205/blakeyrat-pointing-out-nodebb-problems/913" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
[redacted] - - [30/Mar/2016:22:25:42 +0000] "GET /socket.io/?EIO=3&transport=polling&t=LF9eNp-&sid=[redacted] HTTP/1.1" 200 4 "https://what.thedailywtf.com/category/7/side-bar-wtf" "bypass_zscaler"
[redacted] - - [30/Mar/2016:22:25:42 +0000] "POST /socket.io/?EIO=3&transport=polling&t=LF9eTwd&sid=[redacted] HTTP/1.1" 200 2 "https://what.thedailywtf.com/category/7/side-bar-wtf" "bypass_zscaler"
[redacted] - - [30/Mar/2016:22:25:42 +0000] "GET /uploads/default/21406/f466434797c4f020.png HTTP/1.1" 200 17767 "https://what.thedailywtf.com/topic/18019/moar-cooties/81" "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/49.0.2623.87 Safari/537.36"
[redacted] - - [30/Mar/2016:22:25:42 +0000] "GET /plugins/nodebb-plugin-emoji-static/static/images/tdwtf/giggity.gif HTTP/1.1" 200 1505 "https://what.thedailywtf.com/topic/18019/moar-cooties/81" "Mozilla/5.0 (Windows NT 10.0; WOW64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/49.0.2623.87 Safari/537.36"
[redacted] - - [30/Mar/2016:22:25:42 +0000] "GET /socket.io/?EIO=3&transport=websocket&sid=[redacted] HTTP/1.1" 101 0 "-" "Mozilla/5.0 (Windows NT 6.1; WOW64; rv:45.0) Gecko/20100101 Firefox/45.0"
[redacted] - - [30/Mar/2016:22:25:42 +0000] "GET /topic/19454/nodebb-updates/29 HTTP/1.1" 503 1157 "-" "Mozilla/5.0 (Windows NT 6.1; WOW64; rv:45.0) Gecko/20100101 Firefox/45.0"
66.249.66.165 - - [30/Mar/2016:22:25:42 +0000] "GET /api/widgets/render?v=5b6ca047-9cff-4292-8893-f8a05b675472&locations%5B%5D=sidebar&locations%5B%5D=footer&locations%5B%5D=header&template=topic.tpl&url=topic%2F7637%2Fsave-the-pacific-northwest-tree-octopus%2F13 HTTP/1.1" 200 989 "https://what.thedailywtf.com/topic/7637/save-the-pacific-northwest-tree-octopus/13" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
100.43.90.12 - - [30/Mar/2016:22:25:42 +0000] "GET /topic/12697/the-it-anecdotes-thread/57 HTTP/1.1" 503 1157 "-" "Mozilla/5.0 (compatible; YandexBot/3.0; +http://yandex.com/bots)"
40.77.167.104 - - [30/Mar/2016:22:25:42 +0000] "GET /forums/p/13917/312145.aspx HTTP/1.1" 301 193 "-" "Mozilla/5.0 (compatible; bingbot/2.0; +http://www.bing.com/bingbot.htm)"

-

OK, I've rate limited any IP sending requests with a user-agent matching `/(bot|spider|slurp|crawler)/i` to 1 request every 10 seconds in nginx.
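For anyone following along, here is a rough Python sketch of the idea behind that rule. Only the regex and the "1 request every 10 seconds per IP" figure come from the post; the class and function names are made up for illustration, and this is not how nginx implements its limiting internally.

```python
import re
import time

# Pattern quoted in the post; everything else in this sketch is hypothetical.
BOT_UA = re.compile(r"(bot|spider|slurp|crawler)", re.IGNORECASE)


class PerIpLimiter:
    """Allow at most one request per `interval` seconds for each IP."""

    def __init__(self, interval=10.0, clock=time.monotonic):
        self.interval = interval
        self.clock = clock
        self.last_seen = {}  # ip -> time of the last allowed request

    def allow(self, ip):
        now = self.clock()
        last = self.last_seen.get(ip)
        if last is not None and now - last < self.interval:
            return False  # too soon; a real server would answer with an error
        self.last_seen[ip] = now
        return True


def should_serve(ip, user_agent, limiter):
    """Only bot-like user agents go through the limiter."""
    if not BOT_UA.search(user_agent):
        return True
    return limiter.allow(ip)
```

Note that the match is a plain case-insensitive substring search, so any user agent that happens to contain one of those four words would be treated as a bot.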

-

@ben_lubar Let's hope Mozilla never adds the name of its JS engine to the user agent then ;)

-

@RaceProUK hey, if they want to make their browsers get tagged as bots on every website ever, they can go right ahead!

-

@ben_lubar I think you may have hit servercooties with that as well... They are reporting 503s while I'm browsing ok...

-

@ben_lubar said in Moar Cooties:

hey, if they want to make their browsers get tagged as bots on every website ever, they can go right ahead!

Serves them right for using Firefox

-

@Nocha weird, the requests only have 1/30 as an error status, but the overall has 10/10 as 503 errors.

-

@ben_lubar It looks like it is being a little enthusiastic with its requests - I just saw a couple tagged as 23:53.34 one was 200, in topic/2, but the overall one was showing as 503...

-

@Nocha It's been a while since I last checked, but IIRC the 'Overall' is the worst of the individual status codes

-

Well that's a start...

-

@ben_lubar Do we know what the bots are crawling that's killing the server? Is it just them trying to access all the posts in all the categories?

-

@sloosecannon said in Moar Cooties:

Is it just them trying to access all the posts in all the categories?

Probably. Their insistence on following every link ever just slaughters cache coherency which forces the DB to churn the disk and that makes everything die, and it explains why we had several weeks of trouble-free use first: the spiders hadn't realised that everything had changed.

-

@sloosecannon well, it looks like whatever was causing the cooties went away when I rate limited the bots.

-

@ben_lubar said in Moar Cooties:

well, it looks like whatever was causing the cooties went away when I rate limited the bots.

Bet you it's baiduspider.

Filed under: I'm onto you, baiduspider!

-

if that mystery was solved.... bring back the t/1k!

-

@darkmatter said in Moar Cooties:

bring back the t/1k!

I'll be going to sleep soon. Not going to leave that up all night for you goons to molest. Maybe we'll try in the morning.

-

@boomzilla fair enough.

-

@ben_lubar I know it's a drop in the bucket probably, but where are the bots getting the idea of hitting socket.io? Aren't they served static content? How are they navigating to that?

-

@ben_lubar said in Moar Cooties:

@sloosecannon well, it looks like whatever was causing the cooties went away when I rate limited the bots.

Have we not learnt anything? You cannot just go around inspecting logs and form a hypothesis about what is wrong and then directly go and test your hypothesis like this! You need to first set up a test site, wait a couple of weeks for the problem to hit there too, then revert back to an older version of NodeBB to see if the problem persists. If it does, you need to write tests to cover all possible aspects of your hypothesis and run these on your test site for a couple of weeks. Then, and only then, should we bring back the main forum online again and try to fix it!

-

@Onyx Google's crawler executes JavaScript; I imagine other bots do too

-

@RaceProUK I'm aware, but since there's a "plain" version of the forums as well I expected that to be served to the bots.

-

@Onyx Crazytalk.

-

I wonder why there were Tomcat responses on this forum recently...

Did it switch to JVM?

-

@Adynathos That was when @ben_lubar took down the instance completely.

-

Oh jeez, I never thought of crawlers also connecting to the websocket server... there's really no need to, so I wonder if we can just blacklist them via user agent detection

-

@julianlam Any reason they are not just simply getting the plain HTML version? Is there crawlable data that's only visible with JS on?

-

@julianlam robots.txt is much better than serving different content to bots. Anything malicious will ignore both, so we may as well use the method that doesn't penalize us on search engines.
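A quick way to sanity-check what a robots.txt would tell a well-behaved crawler is Python's standard `urllib.robotparser`. The rules below are purely hypothetical (nobody in this thread posted the site's actual robots.txt); they only illustrate keeping crawlers off the `/socket.io/` endpoint and asking for a crawl delay. Not every crawler honors `Crawl-delay`, so treat that line as a request rather than a guarantee.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules for illustration only, not the forum's real robots.txt.
ROBOTS_TXT = """\
User-agent: *
Crawl-delay: 10
Disallow: /socket.io/
"""


def load_rules(text):
    """Parse robots.txt content from a string instead of fetching it."""
    parser = RobotFileParser()
    parser.parse(text.splitlines())
    return parser


rules = load_rules(ROBOTS_TXT)

# A compliant crawler should skip the polling endpoint but may fetch topics.
socket_url = "https://what.thedailywtf.com/socket.io/?EIO=3&transport=polling"
topic_url = "https://what.thedailywtf.com/topic/18019/moar-cooties/81"
print(rules.can_fetch("Googlebot", socket_url))
print(rules.can_fetch("Googlebot", topic_url))
print(rules.crawl_delay("Googlebot"))
```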

-

@Onyx It's their prerogative, we don't change our html for bots or users, and there's no content retrieved via js (at least on cold load)

-

@ben_lubar Cooties are back! Turn off iframely! NOWWWW!!!

OK, that was sarcasm. Did we identify why iframely could have caused cooties last week?

-

@NedFodder I'm not ben, but here's my guess:

We self host it, so those bots doing all those requests would have been putting extra load on that, too. So it was an "easy" reduction in server load.

-

cc @ben_lubar

It's not a disaster; the site's still working, just a bit sluggish. What I can't work out is if it's related to reactivating iFramely?

-

The purple is iframely, and it doesn't seem like it was taking any longer than usual during the cooties.

However, `Mozilla/5.0 (compatible; Baiduspider/2.0; +http://www.baidu.com/search/spider.html)` shows up as having an unusually long `GET /topic/11465/the-bad-ideas-thread/10011` (87.6 seconds of backend stuff, with iframely only contributing 2.34 of those seconds).

Let's see how many IP addresses Baiduspider has accessed the site from today.

$ grep '01/Apr/2016' /var/log/nginx/access.log | grep 'Baiduspider' | cut -d' ' -f 1 | sort -u | wc -l
132

So, with each IP limited to 1 request every 10 seconds, Baidu has the ability to send up to 13.2 requests per second across those 132 addresses. Ugh.
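The same count, and the arithmetic behind the 13.2 figure, can be sketched in Python. This mirrors the grep pipeline above (filter by date, filter by agent, take the first space-separated field, de-duplicate); the function names are invented for this example, and the per-IP limit of one request per 10 seconds is the nginx rule described earlier in the thread.

```python
def unique_ips(log_lines, date, agent):
    """Distinct first fields (client IPs) of lines mentioning both strings."""
    return {
        line.split(" ", 1)[0]
        for line in log_lines
        if date in line and agent in line
    }


def max_aggregate_rate(ip_count, per_ip_interval_s=10.0):
    """Upper bound in requests/second if every IP uses its full allowance."""
    return ip_count / per_ip_interval_s


def count_from_file(path, date, agent):
    """Convenience wrapper that reads an access log from disk."""
    with open(path, encoding="utf-8", errors="replace") as handle:
        return len(unique_ips(handle, date, agent))
```

For example, `max_aggregate_rate(132)` gives the worst-case rate for the 132 addresses counted above.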
