Advanced Trolly Logic

-

The trolley is omnipotent. On one track is Yahweh, on the other track is all of humanity.

Now try with all of humanity minus a breeding pair.

Now try with merely 1 million humans.

-

@Gribnit said in Advanced Trolly Logic:

The trolley is omnipotent. On one track is Yahweh, on the other track is all of humanity.

Now try with all of humanity minus a breeding pair.

Let it go wherever that incel is.

-

@GOG I probably could have gotten a bit higher but I picked the cat instead of the group of lobsters.

-

@boomzilla said in Advanced Trolly Logic:

I picked the cat instead of the group of lobsters

You monster!

-

@boomzilla said in Advanced Trolly Logic:

@GOG I probably could have gotten a bit higher but I picked the cat instead of the group of lobsters.

They had so much more life ahead of them, though. Even in a meaningful way.

-

@Gribnit said in Advanced Trolly Logic:

@boomzilla said in Advanced Trolly Logic:

@GOG I probably could have gotten a bit higher but I picked the cat instead of the group of lobsters.

They had so much more life ahead of them, though. Even in a meaningful way.

It wasn't about the lobsters. It was about the cat.

-

@boomzilla said in Advanced Trolly Logic:

@Gribnit said in Advanced Trolly Logic:

@boomzilla said in Advanced Trolly Logic:

@GOG I probably could have gotten a bit higher but I picked the cat instead of the group of lobsters.

They had so much more life ahead of them, though. Even in a meaningful way.

It wasn't about the lobsters. It was about the cat.

When the ocean reaches forth for you, you will at least know why.

-

@Gribnit said in Advanced Trolly Logic:

@boomzilla said in Advanced Trolly Logic:

@Gribnit said in Advanced Trolly Logic:

@boomzilla said in Advanced Trolly Logic:

@GOG I probably could have gotten a bit higher but I picked the cat instead of the group of lobsters.

They had so much more life ahead of them, though. Even in a meaningful way.

It wasn't about the lobsters. It was about the cat.

When the ocean reaches forth for you, you will at least know why.

Wind and tides and shit. Yeah.

-

@boomzilla said in Advanced Trolly Logic:

@Gribnit said in Advanced Trolly Logic:

@boomzilla said in Advanced Trolly Logic:

@Gribnit said in Advanced Trolly Logic:

@boomzilla said in Advanced Trolly Logic:

@GOG I probably could have gotten a bit higher but I picked the cat instead of the group of lobsters.

They had so much more life ahead of them, though. Even in a meaningful way.

It wasn't about the lobsters. It was about the cat.

When the ocean reaches forth for you, you will at least know why.

Wind and tides and shit. Yeah.

No dude. The lobsters. They're immortal.

-

@Gribnit only the winners.

-

@boomzilla said in Advanced Trolly Logic:

@Gribnit only the winners.

It's this kind of attitude that keeps you from being only 40 evil.

-

I’m surprised a non-zero number of people here claim to have sacrificed the cat. As soon as I saw that one come up, I knew the popular choice would be the wrong one.

-

@kazitor I feel bad for leaving some kills on the table.

-

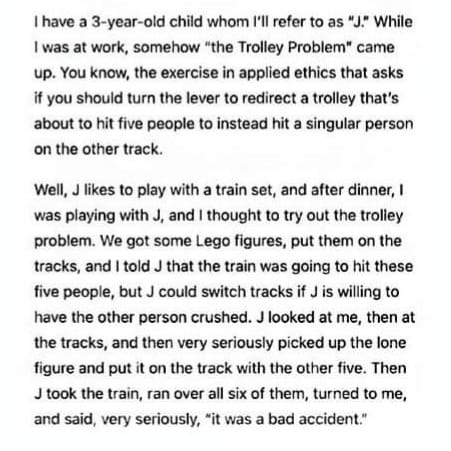

@boomzilla J has a bright future in the

.

.

-

The unique workspace perk is hard alcohol on tap. Do you take the job?

this is a trolley problem.

-

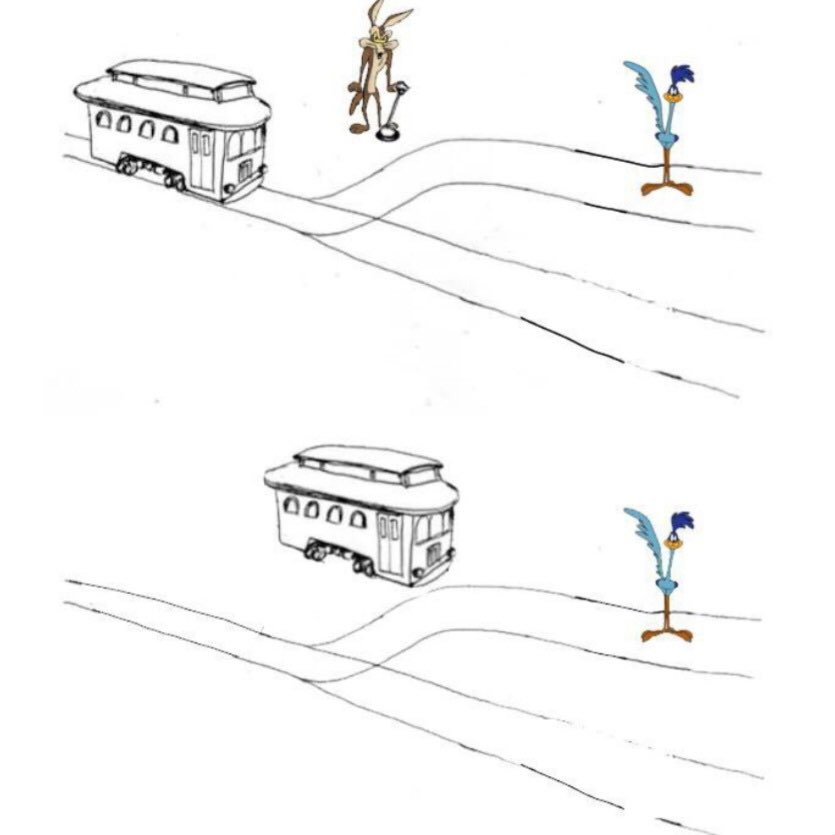

@Mason_Wheeler accepted, but note that the translocation of the trolley is more in the realm of a Mynah Bird / Dodo bender-type toon than a blowback-type like the Roadrunner. In the hypothetical original, both tracks would have curved toward the Coyote, evoking the cursed spears of more ancient mythologies.

-

So what would a robot subject to Asimov's laws do when faced with the trolley problem?

If he throws the switch, he directly causes harm to a human, which is a violation of the first law.

If he does not throw the switch, he through inaction allows a human to come to harm, which is also a violation of the first law.

For the sake of argument, assume he cannot access the track directly.

-

@PleegWat This could be where a poor programmer would have the robot enter an infinite loop or infinite recursion, leaving the robot to just sit there indefinitely, or at least until the conflict or deadlock had passed. Realistically speaking, it's going to be impossible to determine whether a robot has always abided by Asimov's laws in the strictest sense. A more obvious example is a robot firefighter who has to choose between saving people from two burning buildings that are miles apart. There are still laws of physics and logic, which by their very nature will always take priority.

-

@PleegWat said in Advanced Trolly Logic:

So what would a robot subject to Asimov's laws do when faced with the trolley problem?

The 4th Law deals with this, but a 3 Law robot could destroy the tracks.

-

@PleegWat said in Advanced Trolly Logic:

So what would a robot subject to Asimov's laws do when faced with the trolley problem?

If he throws the switch, he directly causes harm to a human, which is a violation of the first law.

If he does not throw the switch, he through inaction allows a human to come to harm, which is also a violation of the first law.

For the sake of argument, assume he cannot access the track directly.

The 2004 documentary I, Robot, starring Will Smith, suggests that the answer would be to kill all humanity in order to prevent harm from coming to them.

-

@PleegWat If you've actually read I, Robot, (the book, not the abomination of a movie they made well after the author was too dead to object to it,) the answer is clear. The Three Laws were presented as an engineering concept, producing numerical values that gave certain weights to a robot's decision-making process, rather than as black-and-white absolutes. Several of the stories in I, Robot used this as a plot point in some way.

With that in mind, throwing the switch causes less harm than not doing so, so the First Law would mandate the robot to throw the switch.
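To make the "weights, not absolutes" reading concrete, here is a minimal sketch. Everything in it is my own assumption for illustration, not anything from the book: the `Action` type, the `first_law_cost` function, the two weights, and the classic five-versus-one head count.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Action:
    name: str
    humans_harmed: int  # humans harmed if the robot picks this action
    by_inaction: bool   # True if the harm comes from standing by


def first_law_cost(action, direct_weight=1.0, inaction_weight=1.0):
    """Hypothetical First Law 'potential': weighted count of humans harmed."""
    weight = inaction_weight if action.by_inaction else direct_weight
    return weight * action.humans_harmed


def choose(actions, **weights):
    """Pick the action with the lowest First Law cost."""
    return min(actions, key=lambda a: first_law_cost(a, **weights))


# Assumed head count: five on the main track, one on the siding.
options = [
    Action("throw the switch", humans_harmed=1, by_inaction=False),
    Action("do nothing", humans_harmed=5, by_inaction=True),
]

print(choose(options).name)                      # equal weights
print(choose(options, direct_weight=10.0).name)  # direct harm weighted 10x
```

With equal weights the robot throws the switch. Weight direct harm heavily enough and it stands by instead, which is roughly the dial the stories play with.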

-

@hungrier yeah, you should never let them integrate over time if you can help it.

-

@Mason_Wheeler said in Advanced Trolly Logic:

I, Robot, (the book, not the abomination of a movie they made well after the author was too dead to object to it,)

Indeed, this is why I prefer the Fantastic Voyage movies.

-

Well, this one's easy. The Bodhisattva Vow is the ultimate entanglement, and this action would amount to it.

-

@Mason_Wheeler said in Advanced Trolly Logic:

@PleegWat If you've actually read I, Robot, (the book, not the abomination of a movie they made well after the author was too dead to object to it,) the answer is clear. The Three Laws were presented as an engineering concept, producing numerical values that gave certain weights to a robot's decision-making process, rather than as black-and-white absolutes. Several of the stories in I, Robot used this as a plot point in some way.

With that in mind, throwing the switch causes less harm than not doing so, so the First Law would mandate the robot to throw the switch.

Wasn't there a plot point where a robot actively murdered a human (who was about to kill a lot of other humans), but the contradictions caused it to die?

Honestly, I thought the movie just took that concept and amped it up to 11, but without the robot suicide. A temporary time of chaos, and then everything is fixed forever. (But life is messy, so of course that wouldn't have worked.) Even during the rebellion, I don't think anyone was killed -- just captured and occasionally injured while being captured. (Though there was that scene earlier in the car where the robots are clearly trying to do something violent to Will Smith, they could have been trying to capture him.)

-

@PotatoEngineer sort of.

So, there is Sonny, the special one built by the old man, with special programming like the ability to dream, to have secrets, and the ability to choose - when necessary - to kill. Specifically to kill the old man, so Will Smith would come investigate.

Then there is VIKI, the AI that organises the city; VIKI has tapped into the next gen of robots, and is using them to murder people (like 'accidentally' rescheduling the destruction of the old man's house to a time when Will Smith was inside, to try to kill him), on the theory that after a short time of chaos, humanity will be protected from itself.

VIKI has also ensured all the legacy robots are stashed away where they can't interfere.

Sonny is declared guilty of murder (of the old man) and is sentenced to be effectively murdered himself - but the old man knew ahead of time and built him with an extra special body to resist that exact situation.

Sonny then uses the nanites-or-whatever that they were going to use to destroy him on VIKI so that humanity is liberated from the next generation of crazy robot and all the legacy robots come out of hiding.

So, uh, yes.

-

@PotatoEngineer said in Advanced Trolly Logic:

Wasn't there a plot point where a robot actively murdered a human (who was about to kill a lot of other humans), but the contradictions caused it to die?

I don't recall that story, but it's been a while since I read it. Do you have a name for it?

-

@Mason_Wheeler said in Advanced Trolly Logic:

@PotatoEngineer said in Advanced Trolly Logic:

Wasn't there a plot point where a robot actively murdered a human (who was about to kill a lot of other humans), but the contradictions caused it to die?

I don't recall that story, but it's been a while since I read it. Do you have a name for it?

I haven't read the particular story myself, but I've heard others talk of it. Here's a summary article for when robots actually murder humans. The one I'm thinking of is Robots and Empire, where a robot invents the Zeroth Law (same as the first, but with "humanity" instead of "individual human"), and dies while trying to use it by making all of Earth gradually radioactive. Also, Forward the Foundation, where a robot-indistinguishable-from-a-human kills an assassin.

https://gizmodo.com/how-isaac-asimovs-non-deadly-robots-got-lethal-5268218

-

@PotatoEngineer You're right, that was in Forward the Foundation. (But not in I, Robot, which is probably why I couldn't remember it being there.)

I've never read Robots and Empire, but that wouldn't surprise me too much.

One thing I do remember is that one of the stories involved a challenge to prove that a specific character was a human being and not a robot masquerading as a human. One character argued that, without taking them against their will and cutting them open, there was no good way to tell, because the behavior of a robot conforming to the Three Laws is indistinguishable from that of a good person living a virtuous life. So it kind of makes sense that at some point it would have to deal with the age-old question of using violence for virtuous ends, when it's the only feasible way to prevent far worse results.

-

@Mason_Wheeler said in Advanced Trolly Logic:

@PotatoEngineer You're right, that was in Forward the Foundation. (But not in I, Robot, which is probably why I couldn't remember it being there.)

The very last story in I, Robot had a very weak version of the Zeroth Law, where the global supercomputer-robot was willing to embarrass/discredit individual humans to make sure that humanity as a whole prospered. (The individual humans were leaders who didn't believe the supercomputer's economic projections and made up their own numbers. The supercomputer could compensate for the leaders' errors, but did so only weakly in order to discredit them.)

-

@Mason_Wheeler yes.

GG EZ.

-

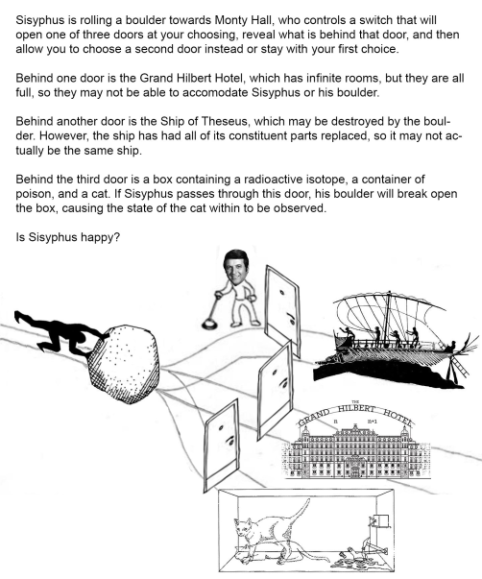

@Mason_Wheeler Hey, look, with the Hilbert hotel being full, Sisyphus would be capable of causing an infinite number of casualties with only one stone. That could be great fun!

-

@BernieTheBernie I wouldn't worry about The Grand Hilbert Hotel; they can always shuffle everyone up to the room number one larger to make space.

-

@dkf If they are fast enough.

Anyways, the quantum thingy could make Sisyphus happy, too: he could interfere in the quantum world with the enormous force of his boulder. Extremely macroscopic world hits quantum world.

But the cat may have been dead already, which would be revealed by his action. So by smashing the cat with his boulder, he might not be able to kill it, and in that case, he might not be happy.

-

@dkf Why bother all the guests though? They can let a number of guests keep their rooms and have the others move just a bit further.

I propose moving only the guests in rooms which are a multiple of a certain integer. Could be an arbitrarily large number.
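A rough sketch of the difference, checked on a finite stretch of corridor since I can't simulate the whole hotel. The function names and the choice of `k = 100` are just my assumptions for the example.

```python
def shift_everyone(room: int) -> int:
    """The usual trick: every guest moves one room up, freeing room 1."""
    return room + 1


def shift_multiples(room: int, k: int) -> int:
    """Only guests in rooms k, 2k, 3k, ... move (k rooms up), freeing room k."""
    return room + k if room % k == 0 else room


def guests_moved(mapping, rooms) -> int:
    """Count how many guests in `rooms` end up in a different room."""
    return sum(1 for r in rooms if mapping(r) != r)


k = 100                 # the "certain integer"; arbitrary choice
rooms = range(1, 1001)  # first 1000 rooms only

print(guests_moved(shift_everyone, rooms))                      # 1000
print(guests_moved(lambda r: shift_multiples(r, k), rooms))     # 10
```

Either way nobody is double-booked, since both maps are one-to-one. The larger `k` is, the smaller the fraction of guests who get woken up.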

-

@BernieTheBernie said in Advanced Trolly Logic:

and in that case, he might not be happy

Sisyphus is, of course, happy because he reached someplace and the boulder isn't rolling back. It does not really matter what.

-

@Zecc said in Advanced Trolly Logic:

@dkf Why bother all the guests though? They can let a number of guests keep their rooms and have the others move just a bit further.

I propose moving only the guests in rooms which are a multiple of a certain integer. Could be an arbitrarily large number.

Move the guest in room f(n) to room f(n+1), where f(n) is the number of terms in the harmonic sequence needed to reach n.
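A small sketch of that f, on my reading that "reach n" means the partial sum 1 + 1/2 + ... + 1/m first becomes at least n. That reading and the helper name `relocations` are assumptions. Exact fractions are used so rounding can't shift the answer.

```python
from fractions import Fraction
from itertools import count


def f(n: int) -> int:
    """Smallest m with 1 + 1/2 + ... + 1/m >= n (assumed meaning of 'reach n')."""
    total = Fraction(0)
    for m in count(1):
        total += Fraction(1, m)
        if total >= n:
            return m


def relocations(how_many: int) -> dict:
    """Map old room f(n) to new room f(n+1) for n = 1..how_many."""
    return {f(n): f(n + 1) for n in range(1, how_many + 1)}


print([f(n) for n in range(1, 6)])  # [1, 4, 11, 31, 83]
print(relocations(4))               # {1: 4, 4: 11, 11: 31, 31: 83}
```

Room 1 is freed, and among the first 83 rooms only four guests have had to move. The gaps between movers grow roughly like powers of e.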

-

@Zecc said in Advanced Trolly Logic:

@dkf Why bother all the guests though? They can let a number of guests keep their rooms and have the others move just a bit further.

I propose moving only the guests in rooms which are a multiple of a certain integer. Could be an arbitrarily large number.

Or, just as easily, you one-off move all guests so a large majority of rooms are empty, and then you just fill in such a way that this stays the case.

-

@Watson said in Advanced Trolly Logic:

@Zecc said in Advanced Trolly Logic:

@dkf Why bother all the guests though? They can let a number of guests keep their rooms and have the others move just a bit further.

I propose moving only the guests in rooms which are a multiple of a certain integer. Could be an arbitrarily large number.

Move the guest in room f(n) to room f(n+1), where f(n) is the number of terms in the harmonic sequence needed to reach n.

Pathetic. Use the Ackermann function instead.

-

@topspin said in Advanced Trolly Logic:

@Watson said in Advanced Trolly Logic:

@Zecc said in Advanced Trolly Logic:

@dkf Why bother all the guests though? They can let a number of guests keep their rooms and have the others move just a bit further.

I propose moving only the guests in rooms which are a multiple of a certain integer. Could be an arbitrarily large number.

Move the guest in room f(n) to room f(n+1), where f(n) is the number of terms in the harmonic sequence needed to reach n.

Pathetic. Use the Ackermann function instead.

I'm thinking of using the sequence of values from 3↑ⁿ3 as that grows crazy fast, but any hyperoperation can probably be used.

-

@dkf I think those are roughly similar (both built on recursive hyperoperations), but I'm not sure.
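For what it's worth, here is a quick sketch to poke at that on small inputs, assuming the usual two-argument Ackermann-Péter definition and Knuth's up-arrow notation. The function names are mine. The identity being checked, A(m, n) = 2↑^(m-2)(n+3) - 3 for m ≥ 3, is the standard one; anything beyond the tiny cases below is hopeless to compute this way.

```python
def ackermann(m: int, n: int) -> int:
    """Two-argument Ackermann-Peter function (naive recursion, small inputs only)."""
    if m == 0:
        return n + 1
    if n == 0:
        return ackermann(m - 1, 1)
    return ackermann(m - 1, ackermann(m, n - 1))


def up_arrow(a: int, arrows: int, b: int) -> int:
    """Knuth up-arrow: a followed by `arrows` arrows, then b."""
    if arrows == 1:
        return a ** b
    if b == 0:
        return 1
    return up_arrow(a, arrows - 1, up_arrow(a, arrows, b - 1))


# First two terms of the 3 (n arrows) 3 sequence; the third is already out of reach.
print(up_arrow(3, 1, 3))  # 27
print(up_arrow(3, 2, 3))  # 7625597484987

# Ackermann in terms of up-arrows, for the cases that finish quickly.
for n in range(4):
    assert ackermann(3, n) == up_arrow(2, 1, n + 3) - 3
assert ackermann(4, 0) == up_arrow(2, 2, 3) - 3
```

So Ackermann is base 2 with an offset, climbing one hyperoperation level each time m goes up, which matches "roughly similar".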