What niche? This could be free money.

Posts made by Matches

-

RE: "Everything is live. Why would you need a test environment?" posted in Side Bar WTF

-

RE: Visual Studio - Hide debug output? (Quickwatch) posted in Coding Help

@ben_lubar It is, will give this a try - thanks!

-

Visual Studio - Hide debug output? (Quickwatch) posted in Coding Help

Is there a way to block display of a specific variable in the on-hover tooltips, the 'Autos' window, and the 'Watch' window? Under normal circumstances this would be completely absurd, but I'm interested in doing some dev streams on Twitch.tv (which I've done in the past), and so far I've been blacking out my screen whenever I need to go into areas with potentially sensitive information. Being able to hide a variable from Autos, Watch, and on-hover would let me do that less often and provide an overall better viewer experience.

-

RE: Question on SQL Server Multiplexing posted in General Help

@cheong this is a loaded question because it depends on how your company is set up, how the servers are set up, your physical location, your server locations, etc. It's best to consult Microsoft licensing directly; they are very helpful and leave a paper trail.

-

RE: Opening emoji selector crashes browser posted in Bugs

@Lorne-Kates it's not a question, you're right. It's like "how can she slap!"

Seriously, though: sprite sheet and CSS selectors.

-

RE: Opening emoji selector crashes browser posted in Bugs

What is an emoji sprite sheet with CSS selectors, for fewer requests and smaller downloads, Alex?
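For the curious, a minimal Python sketch of how the CSS for a grid sprite sheet can be generated: one shared rule points every emoji at the same image (so the browser makes one request), and each emoji class shifts `background-position` to its own cell. The sheet file name, the 32px cell size, and the class names are made-up examples, not what any particular forum uses.

```python
def sprite_css(names, cell_px, columns, sheet_url):
    """Build CSS for a grid sprite sheet: one shared image, one offset per emoji."""
    # Every emoji element uses the same image, so the browser makes one request.
    rules = [
        f".emoji {{ background-image: url('{sheet_url}'); "
        f"width: {cell_px}px; height: {cell_px}px; }}"
    ]
    for index, name in enumerate(names):
        row, col = divmod(index, columns)  # cells run left-to-right, top-to-bottom
        # Negative offsets slide the sheet so the wanted cell shows through the box.
        x, y = -col * cell_px, -row * cell_px
        rules.append(f".emoji-{name} {{ background-position: {x}px {y}px; }}")
    return "\n".join(rules)


css = sprite_css(["smile", "wink", "frown", "heart"], cell_px=32, columns=2,
                 sheet_url="emoji-sheet.png")
print(css)
```

Markup would then combine both classes, e.g. `<span class="emoji emoji-wink"></span>`.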

-

RE: Status: Waiting on AWS posted in Error'd

Also relevant: "beginning to see recovery" != "recovered".

-

RE: SQL - easiest way to keep DRY posted in Coding Help

@lucas1 you can design your processes to not be redundant as all fuck.

-

RE: SQL - easiest way to keep DRY posted in Coding Help

@Karla said in SQL - easiest way to keep DRY:

DRY > optimization

It all depends on the size of your data set.

-

RE: SQL - easiest way to keep DRY posted in Coding Help

@Karla while I make liberal use of views and stored procedures, be very careful when introducing them. Nesting them creates chains that the query optimizer has difficulty with, which can drastically slow down your queries, and those chains get hard to follow once you start going deep. Functions (scalar user-defined functions especially) also tend to have awful performance in the long run.

Consider creating stored procs that accept parameters defining what type of query they run, so you query as little as possible and keep things snappy. Minimize joins in your views whenever possible. Conditional IF statements mostly only work in procs; views can't branch like that.
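SQLite has no stored procedures, so as a rough stand-in here is a Python function (using the built-in sqlite3 module) playing the role of a parameterized proc: one entry point whose optional arguments decide which filters get added, with values bound as parameters rather than concatenated. The `orders` table and `get_orders` function are hypothetical; in SQL Server this logic would live in the proc itself.

```python
import sqlite3


def get_orders(conn, customer_id=None, status=None):
    """Hypothetical 'proc': optional arguments add filters so only needed rows are read."""
    clauses, params = [], []
    if customer_id is not None:
        clauses.append("customer_id = ?")
        params.append(customer_id)
    if status is not None:
        clauses.append("status = ?")
        params.append(status)
    sql = "SELECT id, customer_id, status FROM orders"
    if clauses:
        # Only fixed column names are spliced in; caller values stay bound parameters.
        sql += " WHERE " + " AND ".join(clauses)
    return conn.execute(sql + " ORDER BY id", params).fetchall()


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, status TEXT)")
conn.executemany(
    "INSERT INTO orders (customer_id, status) VALUES (?, ?)",
    [(1, "open"), (1, "shipped"), (2, "open")],
)
by_customer = get_orders(conn, customer_id=1)
open_orders = get_orders(conn, status="open")
```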

-

RE: T-SQL syntax reference (with diagrams?) posted in Coding Help

@wft I don't care what you use. But if you said that to me and I were your boss I'd fire you.

-

RE: Google forgot how to English? posted in Side Bar WTF

@Jaloopa you misunderstand.

Google's recommendations are based on what other people are typing. The Google search box is based on what you're typing, and what Google sees as valid.

When you type "of the", Google flags it as incorrect. People who see that associate the flag with spelling errors from word processors, and change the text so the flag goes away. (Most probably reason that "of" and "the" are common words.) The number one go-to? Combining them: "ofthe".

So Google sees your phrase, warns you it will ignore your terms, and then, based on your current phrase, shows what people tried directly after this search.

Additionally, once you search it, you fuck all that up, because Google uses your history to treat it as a favorite phrase.

-

RE: A developer bets on UWP and loses (article) posted in General

@ScholRLEA wrong.

Source: I was on T-Mobile with a rooted phone before going fully carrier-free.

-

RE: Google forgot how to English? posted in Side Bar WTF

@anonymous234 I don't think that represents spelling errors, but rather common words that Google plans to ignore.

Try +of and +the, or quote the phrase.

The suggestions would be ways other people got around the ignored words.

-

RE: T-SQL syntax reference (with diagrams?) posted in Coding Help

@wft a condensed list won't generally do much good, mainly because the answer would be "well, which diagram do you want to see? We have creation, selection, data mining, maintenance, transformation, analytics in a million different ways, geo, queues..."

Instead, just use the MSDN documentation and the tutorials it provides. Use Stack Overflow for ten different implementation examples, or blogs for a start-to-finish, real(ish)-world example.

The thing about SQL Server is that it can perform in a ton of different ways for a ton of different styles of environment. It covers the overwhelming majority of use cases, which is why it's so frequently referenced. It has great query optimization, indexing support with filtered (conditional) indexes, partitioning, support for spreading data across multiple disks, etc.

It's something you just have to experiment with. Create a project ("I want to do X") and just do it. You'll learn how things interact, and what performs well and what doesn't.

Learn to use the estimated and actual execution plans, and you can make diagrams from those if you so choose. But a plan isn't a high-level view of the query; it shows what the query is actually doing when you run the statement. One of the reasons high-level diagrams will be hard is how much the query optimizer does: it manages execution order, parallelization, simplification of execution, etc. It is the kitchen sink.

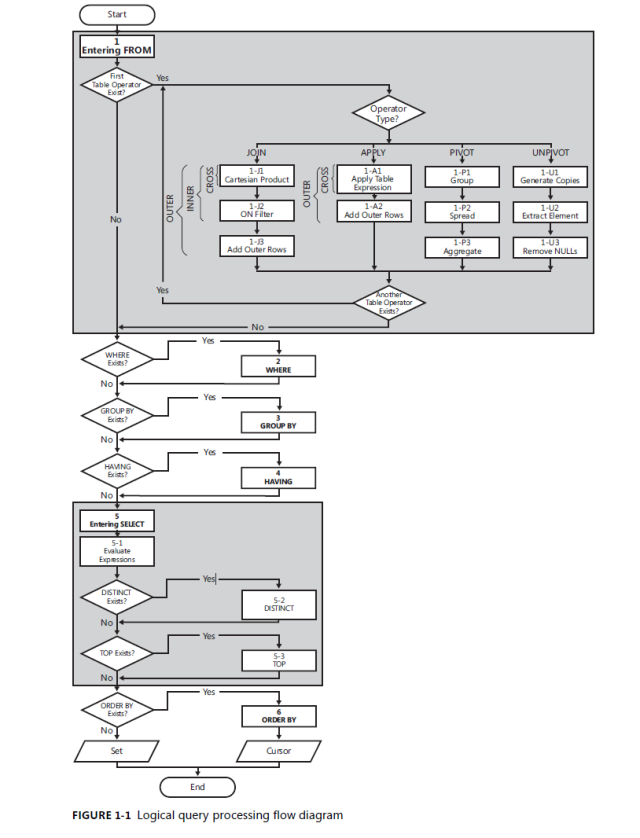

But seeing as this is coding help, start here:

It's about as high level as you can get.
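If you want to practice reading plans without a SQL Server instance handy, SQLite's `EXPLAIN QUERY PLAN` (run here through Python's sqlite3) shows the same basic idea in miniature: the same query switches from a full scan to an index search once a suitable index exists. The `orders` table and index name are hypothetical, and SQL Server's estimated/actual plans are far richer than this one-line summary.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, customer_id INTEGER, total REAL)")


def plan(sql, params=()):
    """Return the 'detail' text of each EXPLAIN QUERY PLAN row (the last column)."""
    return [row[-1] for row in conn.execute("EXPLAIN QUERY PLAN " + sql, params)]


query = "SELECT * FROM orders WHERE customer_id = ?"
before = plan(query, (42,))  # no usable index yet: expect a full scan of orders
conn.execute("CREATE INDEX idx_orders_customer ON orders (customer_id)")
after = plan(query, (42,))   # expect a search using idx_orders_customer

print(before)
print(after)
```

The exact wording of the detail text varies between SQLite versions, so compare the shape (scan vs. search) rather than the literal string.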

-

RE: So Microsoft broke my phone posted in General Help

@FrostCat Why do something like that when you could bring Go into the mix?

-

RE: So Microsoft broke my phone posted in General Help

@ben_lubar You could always make a Go browser to host the Hangouts Chrome extension, which issues a bring-to-front when a call or message is received.

-

RE: Sql Server (Embedded?) posted in General

@cartman82 absolutely do; it's just .NET 4, which is still pretty good, and it's not like the underlying code matters much for a database from a user's perspective, provided performance is good.

It was replaced with LocalDB, which supposedly can be installed and deployed inline like Compact, but I can't find any decent tutorials on how to deploy the DB or create it programmatically. I've made a LocalDB database before, connected to it, etc., but once it goes out to client machines it requires additional install steps, which I don't want the user to have to deal with.

Consider that I still know many large banks running SQL Server 2005 and 2008; SQL Server has always stood the test of time well.

I will be abstracting the process eventually so that it supports SQLite, Postgres, MySQL, etc., but I don't want to start with that: my initial targets will be relatively technology-illiterate, and that support won't matter until much later, when other options are required or even desired. I could likely even get away with just hooking into other systems, so I don't need to worry about supporting silent installs.

-

RE: Sql Server (Embedded?) posted in General

@accalia I've used SQLite plenty; the main reason I'm currently opting for SQL Server Compact 4 over SQLite is concurrency support: multiple read/write threads, that sort of thing.

SQLite has never played well with multiple readers or writers.
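For what it's worth, SQLite's write-ahead-log (WAL) mode softens part of this: readers no longer block on an open write transaction, though only one write transaction can run at a time. A minimal sketch with Python's sqlite3 showing both halves; the temp file and the `events` table are hypothetical.

```python
import os
import sqlite3
import tempfile

path = os.path.join(tempfile.mkdtemp(), "app.db")  # WAL needs a real file, not :memory:

# isolation_level=None: autocommit, so BEGIN/COMMIT below are issued explicitly.
writer = sqlite3.connect(path, isolation_level=None)
mode = writer.execute("PRAGMA journal_mode=WAL").fetchone()[0]
writer.execute("CREATE TABLE events (id INTEGER PRIMARY KEY, note TEXT)")
writer.execute("INSERT INTO events (note) VALUES ('committed')")

# The writer starts a write transaction and leaves it open.
writer.execute("BEGIN IMMEDIATE")
writer.execute("INSERT INTO events (note) VALUES ('pending')")

# A reader is not blocked; it sees the last committed snapshot.
reader = sqlite3.connect(path, timeout=0.1)
seen = reader.execute("SELECT note FROM events ORDER BY id").fetchall()

# A second writer is blocked: only one write transaction at a time.
other_writer = sqlite3.connect(path, timeout=0.1, isolation_level=None)
try:
    other_writer.execute("BEGIN IMMEDIATE")
    blocked = False
except sqlite3.OperationalError:  # "database is locked" after the 0.1 s timeout
    blocked = True

writer.execute("COMMIT")
for c in (reader, other_writer, writer):
    c.close()
```

So WAL helps many-readers/one-writer workloads, but it does not give you concurrent writers.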

-

RE: When is parallelization not a performance gain? posted in General Help

@Tsaukpaetra Using

'INSERT INTO table (col1, col2, col3) SELECT col1, col2, col3 FROM something'

is different from

'SELECT * INTO table FROM something'

The first one is much better in terms of lock contention.

I mainly mention it because people tend to get lazy and use the second form, which fucks up badly once things get busy.
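SQLite (used here through Python's sqlite3) has no `SELECT ... INTO`; `CREATE TABLE ... AS SELECT` is its closest analogue. This sketch only illustrates the two statement shapes, not SQL Server's locking behaviour: with the first form the target keeps the keys and constraints you declared up front, while the derived table gets none. All table names are hypothetical.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE source (id INTEGER, name TEXT)")
conn.executemany("INSERT INTO source VALUES (?, ?)", [(1, "a"), (2, "b")])

# Form 1: create the target up front (with its keys/constraints), then INSERT ... SELECT.
conn.execute("CREATE TABLE target_explicit (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")
conn.execute("INSERT INTO target_explicit (id, name) SELECT id, name FROM source")

# Form 2: SQLite's analogue of SELECT ... INTO; the table shape is derived from the query.
conn.execute("CREATE TABLE target_derived AS SELECT * FROM source")


def pk_columns(table):
    # PRAGMA table_info rows: (cid, name, type, notnull, dflt_value, pk)
    return [row[1] for row in conn.execute(f"PRAGMA table_info({table})") if row[5]]


print(pk_columns("target_explicit"), pk_columns("target_derived"))
```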

-

Sql Server (Embedded?) posted in General

So in my day-to-day life I use SQL Server (2012-2016), and generally speaking, SQL Server is my favorite database platform. I'm aware of SQL Server Compact (4.0) and know that it's been discontinued in favor of SQL Server LocalDB.

I generally work with remote DBs where I have full control over the server infrastructure, but here's my question:

Does SQL Server have anything newer than SQL Server Compact (4.0) for a no-install, single-user database? I haven't played much with LocalDB; does that work as an embedded database when .NET is already installed?

Yes, I know I could use SQLite, or portable MySQL, or an array of other solutions, but I specifically want to use an embedded SQL Server (syntax) database to minimize differences between a cloud and a local solution.

Assumptions that are safe to make:

- The user will have local administrator rights (they can install things if needed, but I don't really want a multi-install process. I'd like to have a ClickOnce installer and everything just 'works' for my application: no 'Go install .NET 4.6, go install SQL Server, go install my program, go install...')

- The folder will be writeable (so LocalDB can safely create a DB, insert/update/etc.)

- .NET 4.6 will be installed

- The application making the calls will be a C# app

The main focus here is 'No-Install' or 'Transparent Install' for the end user. The user experience is:

- Download my EXE to the folder they want the app installed in

- Double-click

- Magic. The app starts and is installed with a desktop icon, etc. (ClickOnce installer)

Also if someone could move this to coding help that would be great. (Why can't I do that myself?)

-

RE: When is parallelization not a performance gain? posted in General Help

@Tsaukpaetra make sure you're not using SELECT * INTO thing FROM source somewhere; that can be a real performance killer, as SQL Server waits for a lock on tempdb. (It doesn't happen often, but it will for sufficiently complex SQL or busy boxes.)

-

RE: When is parallelization not a performance gain? posted in General Help

@Dragnslcr even if the bottleneck is the network, it might still be better to parallelize the query, because you might free up competing queries that are blocking each other.

Here's a question: have you tried "SET TRANSACTION ISOLATION LEVEL READ UNCOMMITTED"?

If your query has several subqueries or several joins, or hits a hot table that's used by others, this could "magically" speed things up.

This would be mostly for testing, though, because if it does improve things you need to figure out how to share your space. Are dirty reads fine? Read uncommitted. Do readers require exact results? Have the non-important stuff take a back seat in priority. Is everything critical? Review your indexes so you get row locks instead of page/table locks.

Already at row-level locks, and everything is critical? Talk to your managers to establish a pecking order, get better hardware, get more hardware and merge data in a queue, etc.

Also, do everything in your power to not drink the ocean. Filter down to the most specific data you can, batch your updates in chunks of around 100k rows, and get with your coworkers to make sure they do the same.
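A minimal sketch of the batching idea, using Python's built-in sqlite3 as a stand-in for SQL Server. The `items` table and `processed` flag are hypothetical, and the batch size is 100 rather than 100k so the demo stays small. Each chunk runs as its own short transaction, so no single transaction has to touch every row at once:

```python
import sqlite3


def update_in_batches(conn, batch_size):
    """Flag unprocessed rows a chunk at a time; each chunk is its own short transaction."""
    batches = 0
    while True:
        with conn:  # commit after every chunk instead of once for the whole table
            cur = conn.execute(
                "UPDATE items SET processed = 1 WHERE id IN ("
                "SELECT id FROM items WHERE processed = 0 ORDER BY id LIMIT ?)",
                (batch_size,),
            )
        if cur.rowcount == 0:  # nothing left to update
            return batches
        batches += 1


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE items (id INTEGER PRIMARY KEY, processed INTEGER NOT NULL DEFAULT 0)")
conn.executemany("INSERT INTO items (id) VALUES (?)", [(i,) for i in range(1, 251)])
conn.commit()
batches = update_in_batches(conn, batch_size=100)  # 250 rows -> chunks of 100, 100, 50
```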

Make use of OFFSET/FETCH.
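OFFSET/FETCH is SQL Server's paging syntax (`ORDER BY ... OFFSET n ROWS FETCH NEXT m ROWS ONLY`); SQLite spells the same idea `LIMIT m OFFSET n`. A small sketch with Python's sqlite3 and a hypothetical `items` table that pulls one page at a time instead of the whole result:

```python
import sqlite3


def fetch_page(conn, page, page_size):
    """Return one page of ids; ORDER BY keeps page boundaries stable between calls."""
    # SQL Server equivalent: ORDER BY id OFFSET @skip ROWS FETCH NEXT @take ROWS ONLY
    return [row[0] for row in conn.execute(
        "SELECT id FROM items ORDER BY id LIMIT ? OFFSET ?",
        (page_size, page * page_size),
    )]


conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE items (id INTEGER PRIMARY KEY)")
conn.executemany("INSERT INTO items (id) VALUES (?)", [(i,) for i in range(1, 26)])

first = fetch_page(conn, page=0, page_size=10)  # ids 1..10
last = fetch_page(conn, page=2, page_size=10)   # ids 21..25
```

Large offsets still make the engine walk past the skipped rows, so for deep paging a `WHERE id > last_seen_id` filter is often cheaper.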

Consider batch loading into a temp table, where there will be no contention, and then running a MERGE (or an INSERT plus an UPDATE). If you're coming from C# or similar, use transactions for multi-row inserts/updates; SQL is always better with sets of data, and row-by-row manipulation is slow compared to bulk operations.
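A sketch of the load-then-merge pattern, again using Python's sqlite3 as a stand-in. SQLite has no MERGE, so this uses the "insert, update" variant. The `customers` table and the incoming rows are hypothetical. The batch goes into a temp staging table with one `executemany`, and the update and insert halves run as set-based statements inside a single transaction instead of row by row:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE customers (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")
conn.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Ann"), (2, "Bob")])
conn.commit()

# Hypothetical incoming feed: one changed row (2) and one new row (3).
incoming = [(2, "Robert"), (3, "Cara")]

# TEMP tables are private to this connection, so loading them causes no contention.
conn.execute("CREATE TEMP TABLE staging (id INTEGER PRIMARY KEY, name TEXT NOT NULL)")

with conn:  # one transaction for the whole batch; rolls back if anything fails
    conn.executemany("INSERT INTO staging VALUES (?, ?)", incoming)
    # "Update" half: rows that already exist get the staged values.
    conn.execute(
        "UPDATE customers SET name = (SELECT s.name FROM staging s WHERE s.id = customers.id)"
        " WHERE id IN (SELECT id FROM staging)"
    )
    # "Insert" half: staged rows with no match yet.
    conn.execute(
        "INSERT INTO customers (id, name)"
        " SELECT s.id, s.name FROM staging s"
        " WHERE NOT EXISTS (SELECT 1 FROM customers c WHERE c.id = s.id)"
    )

conn.execute("DROP TABLE staging")
rows = conn.execute("SELECT id, name FROM customers ORDER BY id").fetchall()
```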

Have them run a SQL query monitor every hour or half hour and save the results. If it's causing production issues, it should be pretty easy to get management sign-off. You'd be looking for locks or high load from rogue user SQL, a.k.a. EUC (end-user computing) Access applications.

-

RE: TRWTF is that they had to write this posted in Side Bar WTF

@Tsaukpaetra does the company name start with an S and end with an S?

-

RE: Yami learns Powershell part deux posted in Coding Help

@Yamikuronue I thought your DownloadFile method was a wrapper around a regular web request. Didn't realize it was an actual built-in method; I work with APIs all day and have never had reason to use that method, lol.

-

RE: Yami learns Powershell part deux posted in Coding Help

@Yamikuronue I'm still claiming credit for linking you to try/catch and the specific WebException, and you can't stop me.

-

RE: Yami learns Powershell part deux posted in Coding Help

@Yamikuronue web requests are a .NET framework feature, so you can catch the WebException (and then the general Exception) to dig into them. But usually web requests look like this:

var request = (HttpWebRequest)WebRequest.Create(url);

Note there is no new in that statement; WebRequest.Create is a factory method.

Your specific error says that something is calling DownloadFile with two arguments, but that DownloadFile does not take two arguments, so it doesn't know what to do.

-

RE: TRWTF is that they had to write this posted in Side Bar WTF

@Tsaukpaetra then there's only one thing to do.

Create a handful of new tables that have a foreign key to the truncated tables. It will make the truncates fail.

You don't happen to be working for a blue company related to houses?

-

RE: TRWTF is that they had to write this posted in Side Bar WTF

@Tsaukpaetra if your data involves a lot of edited/deleted records, it's actually faster in a lot of cases to truncate and bulk insert instead of running inserts, updates, and deletes. The main reason is the transaction log: TRUNCATE only logs page deallocations, and a bulk load can be minimally logged, whereas row-by-row changes log every row.

That being said, it's a very specific use case for very specific live-data environments, and it should never be used for sources of truth. But when you tick all the boxes, it is the best route for minimizing downtime relative to the number of record updates required.

Reporting and data aggregates tend to be the scenario where this makes sense, rather than the working system.
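A minimal sketch of the truncate-and-reload pattern for a reporting aggregate, using Python's sqlite3. SQLite has no TRUNCATE (an unqualified `DELETE FROM` is its nearest equivalent), so this shows the shape of the pattern, not SQL Server's transaction-log savings. The `sales` and `sales_by_region` tables are hypothetical. Both steps run in one transaction, so other connections see either the old report or the new one:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE sales (region TEXT, amount INTEGER)")
conn.execute("CREATE TABLE sales_by_region (region TEXT PRIMARY KEY, total INTEGER)")
conn.executemany("INSERT INTO sales VALUES (?, ?)", [("east", 10), ("west", 5), ("east", 7)])
conn.commit()


def refresh_report(conn):
    """Rebuild the aggregate from scratch instead of patching it row by row."""
    with conn:  # both statements commit together, or roll back together on error
        conn.execute("DELETE FROM sales_by_region")  # stand-in for TRUNCATE TABLE
        conn.execute(
            "INSERT INTO sales_by_region (region, total)"
            " SELECT region, SUM(amount) FROM sales GROUP BY region"
        )


refresh_report(conn)
report = conn.execute("SELECT region, total FROM sales_by_region ORDER BY region").fetchall()
```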

-

RE: Webservice, takes object as argument, but object is inherited posted in Coding Help

@Lorne-Kates generally, when you are sending objects that can be basically anything, it's best to wrap them in an envelope, so basically:

TypeID = something (enum, reflected type, whatever is easiest to handle)

Content = wrapped data object

Then the wrapped data object can contain metadata (i.e., send timestamp, flags, etc.) and a dynamic content field (or, for older versions or languages without dynamic, a serialized string).

That way you can manage data contracts and processing either through straight deserialization (if you only need a few item types) or via reflection (truly generic deserialization).

Do yourself a favor and get Json.NET through NuGet; it has methods like

JsonConvert.DeserializeObject<Type>(json)

JObject.Parse(json)

and supports generic methods such as

public T Deserialize<T>(string json) where T : class => JsonConvert.DeserializeObject<T>(json);
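A rough Python sketch of the envelope idea (this is not Json.NET; the `Invoice`/`Refund` classes, the `type_id` strings, and the `sent_at` metadata field are all hypothetical). The type id is read first and used to pick the class for the content, which corresponds to the "straight deserialize with a few item types" case; a reflection-based lookup would replace the hand-written registry.

```python
import json
from dataclasses import asdict, dataclass


@dataclass
class Invoice:
    number: str
    total: float


@dataclass
class Refund:
    invoice_number: str
    amount: float


# Hypothetical registry mapping the envelope's type id to a concrete class.
REGISTRY = {"invoice": Invoice, "refund": Refund}
TYPE_IDS = {cls: type_id for type_id, cls in REGISTRY.items()}


def wrap(obj, sent_at):
    """Put any registered object in an envelope: type id + metadata + content."""
    return json.dumps({
        "type_id": TYPE_IDS[type(obj)],
        "sent_at": sent_at,
        "content": asdict(obj),
    })


def unwrap(payload):
    """Read the type id first, then deserialize the content into the right class."""
    envelope = json.loads(payload)
    cls = REGISTRY[envelope["type_id"]]
    return cls(**envelope["content"])


msg = wrap(Refund(invoice_number="A-17", amount=12.5), sent_at="2017-01-01T00:00:00Z")
print(unwrap(msg))
```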

-

RE: Poll: do you miss polls in NodeBB? posted in Meta

@anonymous234 strawpoll uses web sockets, so probably could.

-

RE: Spam phone calls posted in General Help

@masonwheeler no, really, there was no data cap. I used 1 GB at 4G LTE, and another 300 GB on 3G. It was free.

The full-speed data had a specified limit; your usage did not.

-

RE: Spam phone calls posted in General Help

@masonwheeler except T-Mobile does unlimited on (all?) their plans; they just drop you to 3G speed when you exceed your limit.

*Plans may have changed in the last few years; previously I was on a plan with 1 GB of 4G LTE data included, and unlimited 3G after that for free.

-

RE: Spam phone calls posted in General Help

@russ0519 Google Maps doesn't need a data connection. Just download the map for your area and your phone works fine even if you go off route. I use Maps extensively.

-

RE: Spam phone calls posted in General Help

@russ0519 I don't make calls while driving. I don't text and drive. My work has wifi, my house has wifi, my friends have wifi, the store has wifi, fast food places have wifi, diners have wifi, the place that changes my oil has wifi. You can't travel around town and spit without hitting wifi.

Edit: and I currently live in Alabama, so don't you try to tell me it's not a thing.

It was a thing in Oregon, Cali, Texas, Ohio, Alabama, Utah, and Nevada.

-

RE: Spam phone calls posted in General Help

@russ0519 no, I mean all phone services. I am 100% VoIP with Google Hangouts/Google Voice. I make no payments for phone service because I'm always attached to wifi.

I bought a T-Mobile hotspot for emergency data in case I need it while traveling, but I've only used it twice in three years, and it was for convenience, not emergencies.

-

RE: Spam phone calls posted in General Help

@russ0519 I paid $20 to move my number to Google Voice, and cancelled all my carrier plans. I haven't paid for phone service in about 3 years.

-

RE: Spam phone calls posted in General Help

@Onyx depends on what is identifying them. If it's a free app like Whitepages, or any of the lookup apps, it's easy enough to have your victims flag you as Dell if you're spoofing as Dell. Get enough of those flags and the number gets marked as a Dell number.

Dell is also a big company; they could have decommissioned the number, which was later picked up by someone else.

You can register a new number with Dell as the display name, and most carriers don't care.

You can just straight up spoof the number and caller ID.

It's a pretty wide market of possibilities.

Of note (extending the first paragraph down here because NodeBB keeps deleting my text): some carriers include a caller ID app bundled with your phone software. It's just as unreliable as the Whitepages-style apps, but it's usually vendor-specific and embedded into your call UI.

-

RE: Spam phone calls posted in General Help

@Onyx often they are real numbers, but assigned to large outbound pools of numbers, Skype for example. They wire it up to use the outbound pool, and the big providers handle the proxying for them. It's a design being abused beyond its original purpose, but it's basically Tor for phones.

-

RE: Spam phone calls posted in General Help

@russ0519 the most effective way I've found to get to almost zero spam calls (I used to get 4+ a day) was switching to Google Voice. It actively filters out known spam callers for you and offers block and report options. Plus, Google Voice lets you control how people can ring you (time of night, only people on your contact list, blocking private numbers).

-

RE: WTF Bites posted in Megatopics

@Grunnen except in Access you are adding a WHERE, because it gets rearranged into a WHERE join. Access's query engine is meant for very simple queries, so you have to break joins down into very simple structures, or use a real database.

-

RE: WTF Bites posted in Megatopics

@Grunnen no, your problem is your age comparison. Check whether age is null or greater and it will work, but generally subqueries are less ambiguous:
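A small reproduction of the age-comparison problem, using Python's sqlite3 rather than Access (the `people`/`details` tables are hypothetical, since the original query isn't shown). Filtering on an outer-joined column in WHERE throws away the rows that had no match, because the comparison against NULL is never true; adding the null check keeps them:

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.executescript("""
CREATE TABLE people (id INTEGER PRIMARY KEY, name TEXT);
CREATE TABLE details (person_id INTEGER, age INTEGER);
INSERT INTO people VALUES (1, 'Ann'), (2, 'Bob'), (3, 'Cy');
INSERT INTO details VALUES (1, 40), (2, 17);  -- Cy has no details row
""")

# Filtering on the outer-joined column in WHERE silently drops Cy:
# d.age is NULL for him, and NULL >= 18 is not true.
naive = conn.execute("""
SELECT p.name FROM people p LEFT JOIN details d ON d.person_id = p.id
WHERE d.age >= 18 ORDER BY p.id
""").fetchall()

# "Check if age is null or greater": unmatched rows survive.
with_null_check = conn.execute("""
SELECT p.name FROM people p LEFT JOIN details d ON d.person_id = p.id
WHERE d.age IS NULL OR d.age >= 18 ORDER BY p.id
""").fetchall()

print(naive)            # [('Ann',)]
print(with_null_check)  # [('Ann',), ('Cy',)]
```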

SELECT thing

FROM

(SELECT stuff FROM table1) AS a

LEFT JOIN

(SELECT other_stuff FROM table2) AS b

ON a.key = b.key

WHERE b.key IS NULL OR (b matches a condition)

-

RE: streaming help needed posted in Coding Help

@FrostCat sounds like you got it under control then, keep at it.

-

RE: streaming help needed posted in Coding Help

@FrostCat since we're talking about ADO, I'm just going to assume you have access to C#. Maybe consider using a PDF library? iTextSharp is pretty good, but really any C# library that supports generating PDFs from a template, from HTML, or from a template plus stamping and flattening should do you just fine. When you generate the document, send it to the user as a byte stream and don't call the save methods.

You should probably look for a library that supports AcroFields if you want to support forms, so users can sign documents easily.

-

RE: streaming help needed posted in Coding Help

@Gąska I'm assuming contract terms or other sensitive data. We (my company, not Frost) build custom PDFs using HTML and send them out to EchoSign for signing. PDF is terrible to work with when you're doing custom work.

-

RE: The future with self-driving cars posted in Funny stuff

@loose this is consistently what I try to warn people about with automated driving: even if the company sells it as full automation (i.e., Google), keep your wits about you and watch the fucking road for danger, especially since you now have more time to do so. Computers programmed by people will always have edge cases and scenarios that are unaccounted for, because road conditions are not uniform, they do change, and new shit comes along all the time.

I fully support, embrace, and encourage progress towards automating driving, but you need to smack a bitch in the face if they suggest they can text, play games, watch movies or do anything other than watch the road when driving.