I'd like to share a nice(?) little(?) horror story from a job in years gone by... Apologies for the length, but there's a lot of stuff involved with this, and I've no doubt forgotten a fair number of details.

I'd gotten a temporary gig as a sysadmin at the local public library, where, due to union classifications and whatnot, you couldn't be a "systems administrator" or "IT guy" or whatever. You were a "Librarian Assistant".

It was a pretty standard IT gig. Run some servers (one OpenVMS box for the library catalogue/circulation system, and at the time, some NT4 Server systems), and support the desktops (NT4WS). One of the apps we had to try and support was a little gem called InterLEND, used to "automate" the inter-library loans (ILL) process (sending requests, tracking the progress of requests sent out, yada yada).

InterLEND had started its days as a DOS program, using a modem to dial up to various obsolete and (hopefully) long dead services to send requests to other libraries. It wasn't fancy, but it did what it had to with a minimum of fuss. And then the Windows age came along, and InterLEND was re-released as a Windows app, with all the usual bells and whistles. Menu bars, button bars, scroll bars, lots of colors, and all the other good stuff that comes with Windows (resource leaks, unexplained crashes, oh joy).

Now, to make one thing clear, InterLEND for Windows had one nice thing going for it: its data files were 100% backwards compatible with all previous versions, including the prehistoric DOS versions. You could use any version to read/write the data files and perform the daily tasks without problem, or so it was thought. This saved our butts a few times.

We found various problems with the application. Some by the users during normal work, some by us techies during testing of new versions. Amongst them:

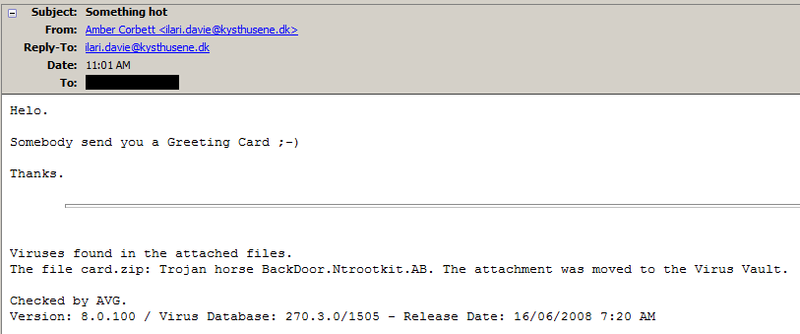

1) InterLEND for Windows was TCP/IP aware and could send requests via SMTP and retrieve them from POP mailboxes. However, any message in the mailbox which did not contain a standard ISO interlibrary loan protocol formatted message could (and pretty much always did) crash the Mailer portion of InterLEND, which then took down the rest of InterLEND. While running the mailer, you'd get a nice little status window showing the application babbling at itself and at the POP and SMTP servers. But it wouldn't tell you which incoming message was causing the crash. All you'd get is Doctor Watson telling you there was a problem. You'd have to fire up an email app (Outlook, Eudora, whatever...) to rummage through the mailbox and try to guess which message was the culprit.

Sometimes it was obvious: a spam message had crawled in (usually not a problem, as the email addresses for these requests were generally not posted publicly anywhere on the net). Sometimes it wasn't. If a misbehaving mailer at another library had sent out a malformed message, InterLEND would choke and hit the floor retching bits all over the place. To figure out which one was causing the crash, you'd print out a copy from the mail client, delete the message from the mailbox, and re-run InterLEND's mailer, until it managed to get past the spot causing the crash.

Then you'd take the messages you'd printed out (no, there was no wooden table involved) and see if they'd shown up in InterLEND. The one that wasn't in the database was the one causing the crash. Thankfully, the ISO format was plaintext with various padding and delimiter characters (mostly +'s), so extracting the message details wasn't too painful. You could manually enter the details into InterLEND as a new request and from there things would proceed normally.
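The whole print-delete-retry dance above exists only because the mailer didn't isolate failures per message. Here's a minimal sketch of what that isolation could look like. Everything in it is an assumption for illustration: `parse_ill_message` is a hypothetical stand-in that pretends a request is three `+`-separated fields, which is not the real ISO format, and nothing here reflects InterLEND's actual code.

```python
from dataclasses import dataclass, field


class MalformedMessage(ValueError):
    """Raised when a message does not look like an ILL request."""


def parse_ill_message(raw: str) -> dict:
    # Hypothetical stand-in parser: pretend a valid request looks like
    # "ILL+<request id>+<title>". The real ISO format is more involved.
    parts = raw.strip().split("+")
    if len(parts) != 3 or parts[0] != "ILL" or not parts[1]:
        raise MalformedMessage(f"not an ILL request: {raw[:40]!r}")
    return {"request_id": parts[1], "title": parts[2]}


@dataclass
class MailRun:
    accepted: list = field(default_factory=list)
    rejected: list = field(default_factory=list)  # (mailbox position, reason)


def process_mailbox(messages: list[str]) -> MailRun:
    run = MailRun()
    for index, raw in enumerate(messages, start=1):
        try:
            run.accepted.append(parse_ill_message(raw))
        except MalformedMessage as exc:
            # Record which message failed instead of taking the whole app down.
            run.rejected.append((index, str(exc)))
    return run


mailbox = ["ILL+1001+Moby Dick", "BUY CHEAP WATCHES NOW", "ILL+1002+Dune"]
result = process_mailbox(mailbox)
for index, reason in result.rejected:
    print(f"message {index} skipped: {reason}")
```

With something like this, the spam message gets reported by its position in the mailbox and the two good requests still land in the database.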

2) InterLEND for Windows' interface was, to put it mildly, quirky. It had approximately 15 tabs underneath the main menu bar, each representing an area of the program (Mailer, Requests, Clients, Search, etc...). They all had different colors to differentiate them, so that from a distance, InterLEND looked like a rainbow that had a serious personality disorder.

3) Some of the tabs, such as search, would naturally contain a lot of data. If you did a search for any requests whose item in question contained the word 'The', you'd get quite a few hits. So the search results were displayed in a scrollable window. Nothing wrong with that... but for some reason InterLEND presented *two* scrollbars, side-by-side. The outer one was the standard scrollbar any "tall" content will show in a window. The second, inner-most, scrollbar was... different. It also scrolled the content, but *ONLY* the content that had been retrieved from the database so far. If you did a query that returned, say, 5000 results, the outer scrollbar would size itself to scroll through those 5000 results, retrieving data only as you scrolled down through the recordset.

If you'd scrolled down to (maybe) record 1000, the outer bar would be moved down the scroll area by about 1/5th the available area. However, the inner scroll bar would be pinned at the bottom of the scroll area, as you were displaying record #990-1000 of the records retrieved so far.

I don't know why that second scroll bar was there. It completely duplicated the functionality of the outer bar. At best it served as a very bad "zoom" function to view previously retrieved data, at worst it was someone's "hey! that's cool!" idea for stuffing in more features.
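For anyone having trouble picturing the two bars, the arithmetic from the 5000-result example works out like this. It's only an illustration of the numbers quoted above; `thumb_position` is a made-up helper, not anything from InterLEND.

```python
def thumb_position(current_record: int, range_size: int) -> float:
    """Fraction of the scroll track covered, from 0.0 (top) to 1.0 (bottom)."""
    return min(current_record / range_size, 1.0)


total_results = 5000   # rows the query matched
fetched_so_far = 1000  # rows pulled from the database so far
current_record = 1000  # the user has scrolled to the last fetched row

# Outer bar measures against the full result set, inner bar only against
# what has been fetched so far.
outer = thumb_position(current_record, total_results)
inner = thumb_position(current_record, fetched_so_far)
print(f"outer bar at {outer:.0%}, inner bar at {inner:.0%}")
```

The outer bar sits a fifth of the way down while the inner one is jammed against the bottom, which is exactly the picture described above.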

4) InterLEND used some kind of private/proprietary database for storing all of its data. They didn't use any of the readily available "private" databases, such as Jet or Borland DE. Maybe this was due to InterLEND's history as a DOS program from the stone ages of computing, created before such fancy DB engines were available. Whatever engine they were using, it did what it had to. Stored data, updated data, deleted data, retrieved data. But on occasion, it would corrupt itself. Being proprietary meant, of course, that there were no real repair tools. And whatever its internal construction was, any corruption left the entire database a steaming pile of random bits. You couldn't rebuild an index, delete a corrupted record, or lop off a chunk of the file to excise the bad spots. A flipped bit somewhere hosed the whole thing.

This meant the database files had to be religiously backed up, lest you lose everything. Thankfully the corruption didn't occur too often, so a backup granularity of 1 day usually did the trick. At most you'd lose that morning's work (incoming requests were retrieved/processed in the mornings, and outgoing requests were done in the afternoons). But when it did hose itself, work stopped until the nightly backup tape could be fetched and last night's copy of the files restored. So standard SOP was to print out anything you'd been working on, in case the DB fried itself, which leads to....

5) One of InterLEND's selling features was that it would reduce the amount of paperwork needed to process a request. Interlibrary Loans, by their nature, are paper-intensive. The requesting library has to send a form for the actual request. The lending library has to send forms along with the item to specify due dates and shipping instructions. If the item's going across international borders, customs declarations have to be included. Oy veh. And as well, at both ends of the process, more forms are used for the requestor to tell the requesting library what they want, for the lending library to retrieve it from the stacks, etc... In the end, sending a single book from library A to library B could slurp up 10 or more sheets of paper. And then there's the form letters to the original requestor to tell them the item's arrived and that it can be picked up, and the form letters to tell the same person that it's now overdue and they owe $toomuch in overdue fines, etc...

No way to get around those 10 pages... paper trails must be maintained. But, there's quite a few stages to processing an ILL request. There's requests, returns, queries if the request wasn't specific enough ("did you really want 20 years worth of that magazine? or 20 pages from a specific issue?"), and status updates if either side isn't processing things fast enough. Each one requires digging out the paper trail and ticking off things on the forms to indicate what stage the process was in.

So now we've gone from a paper intensive manual process to a fully automatic .... paper intensive manual process.

Oh well... at least they were saving mad coin on postage due to emailing most of the queries and status updates. They could use some of it to help offset the cost of all that paper...

Now for the oddities in InterLEND...

1) A longstanding never-fixed bug was embedded in the mailer. There was no error handling on the POP end of things. If the mailbox was unavailable for any reason (network burp, typo in the user or server name, bad password, etc..), the InterLEND client would again barf bits and crash.

When this bug was found during new version rollout testing, InterLEND's supplier claimed it was our fault for having triggered the bug. After all, who in their right mind would enter deliberately incorrect information into an application?
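For contrast, here's roughly what handling those POP failures looks like. This is a sketch using Python's standard `poplib`, not anything from InterLEND. The host name, credentials, and the `connect` parameter (there only so the failure path can be exercised without a real server) are all assumptions.

```python
import poplib


def fetch_all(host: str, user: str, password: str, connect=poplib.POP3):
    """Return (messages, error); exactly one of the two is None."""
    try:
        conn = connect(host, timeout=10)
    except (OSError, poplib.error_proto) as exc:
        # DNS typo, refused connection, timeout, garbled greeting...
        return None, f"cannot reach {host}: {exc}"
    try:
        conn.user(user)
        conn.pass_(password)
        count, _size = conn.stat()
        messages = []
        for number in range(1, count + 1):
            _resp, lines, _octets = conn.retr(number)
            messages.append(b"\r\n".join(lines))
        return messages, None
    except (OSError, poplib.error_proto) as exc:
        # Bad user name or password, or the link dropped mid-session.
        return None, f"mailbox error on {host}: {exc}"
    finally:
        try:
            conn.quit()
        except (OSError, poplib.error_proto):
            pass  # The session is already dead; nothing more to clean up.


def unreachable(host, timeout):
    # Hypothetical stand-in for a network burp, so no real server is needed.
    raise ConnectionRefusedError("connection refused")


messages, error = fetch_all("pop.example.invalid", "ill", "secret", connect=unreachable)
print(error)
```

The caller gets an error string it can show the user, rather than a visit from Doctor Watson.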

2) InterLEND was supposedly network-aware. This was in the marketroid features checklist somewhere. What this meant was that it could be run off a network drive without problem, and that it could do SMTP and POP email.

What it didn't mean is multi-user concurrency. Only one person could be using it to update the database at any time, or it would chew itself to bits. Reading, however, wasn't a problem. As we had two people working on ILL processing at the time, this presented a problem... Either they'd have to a) take turns running the app, or, horribly, b) be very careful about when either of them would do an update on the database.

So of course, they opted for b). At least the two people had desks side-by-side and could tell each other when they were doing an update. But after a long day staring at forms, mistakes occasionally get made...
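The shout-across-the-desk protocol is basically a manual lock. A common low-tech way to automate it is a lock file next to the data files. The sketch below is only that, a sketch: the `interlend.lock` name is made up, and exclusive-create may not behave atomically on every network file system, so treat it as an illustration rather than something InterLEND could have dropped in as-is.

```python
import os
import tempfile
from contextlib import contextmanager


class DatabaseBusy(RuntimeError):
    """Raised when another writer already holds the lock."""


@contextmanager
def writer_lock(lock_path: str):
    try:
        # O_CREAT | O_EXCL fails if the file already exists, so only one
        # process at a time can create the lock.
        fd = os.open(lock_path, os.O_CREAT | os.O_EXCL | os.O_WRONLY)
    except FileExistsError:
        raise DatabaseBusy(f"someone else is updating ({lock_path})") from None
    try:
        os.write(fd, str(os.getpid()).encode())  # note who holds the lock
        yield
    finally:
        os.close(fd)
        os.remove(lock_path)


# Hypothetical lock location; a real one would live beside the data files.
lock_path = os.path.join(tempfile.mkdtemp(), "interlend.lock")

with writer_lock(lock_path):
    try:
        with writer_lock(lock_path):  # the coworker tries to update too
            second_writer_got_in = True
    except DatabaseBusy:
        second_writer_got_in = False

print("second writer allowed:", second_writer_got_in)
```

One known weakness of this approach: if the app crashes while holding the lock, the stale file has to be cleaned up by hand.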

3) InterLEND for Windows, as mentioned above, brought in all the fancy GUI features that come with Windows (indeed, it seems like the vendor tried to cram in as many GUI widgets as they possibly could). Along with this, it brought in proportionally-spaced fonts for the various printouts it could do. But those printouts were never updated to be "modern". Essentially they just dumped a plaintext ASCII-formatted page to the laser printer and told it to use Times-Roman, instead of sending it to an old dot-matrix the way they used to.

This meant the "nicely" designed forms with their columns and tab-aligned (well, it looked like they were tab-aligned) tables came out, at best, somewhat jagged. And of course there was no report designer of any sort anywhere in InterLEND. At most you could customize a few fields to specify return addresses and basic contact information.

This is where the backwards compatibility of the data files came into play. When it came time to print reports, they'd quit out of the Windows version, fire up the old DOS version, print whatever they had to, then hop out of DOS and back into Windows.

4) The GUI itself was never terribly stable. Simple things like scrolling around in a window could cause it to crash. Perhaps it was a race condition, as this only seemed to occur in windows that were displaying data retrieved from the database. But it wasn't frequent or consistent enough to say for sure.

But again, the vendor's response amounted to the old "Don't do that, then."

Fast forward a few years, and the decision comes down from on-high to replace InterLEND. Oh frabjous joy! Something better! Finally... <insert sound of screeching tires, followed by prolonged car crash sounds>.

Government grants for upgrading library services were found to be available, so after a few rounds of drafts and submissions, some Federal moolah rolls in, and the upgrade process is set loose.

To select which product to migrate to, a... *gasp* CONSULTANT *gasp* was hired to do a study of available alternatives. The various libraries in the province here that used InterLEND were queried as to what their ILL staff would like from a new version, a specification and features requirement was cobbled together from the wish list, and the consultant was set loose.

After a somewhat (and curiously) short study period, the consultant pronounced that product X (I'm not mentioning what, since it's still in use here these days) would fit the bill perfectly. It had all the features, was actively maintained, the licensing costs were rather reasonable for an app of this power, and quite affordable, given the budgets of some of the libraries which would be using it. But that's not what impressed the various library representatives who were present at the dog & pony show. No, their primary comment about the whole process was about how cute the consultant was. This should've been a warning sign...

So with great fanfare, contracts were signed, install media was supplied, test servers were configured and deployed, and training sessions were organized at the provincial library's headquarters for initial testing and basic training. Everything seemed to go quite well, and people were returning from the sessions excited at what they'd be able to do when the system went live.

A few months later, after the initial training sessions were concluded and general approval of product X was noted, the decision was made to go live with it. Cue the car crash sound effects.

Product X was by its nature a client/server application. The server app ran on a server (woah, what a concept), used MS SQL Server as its data store, and generally acted as a front-end for the client apps, so they'd never have to directly interface with the database. As this system would be used by various libraries spread across the whole province, this saved a considerable amount on MSSQL licensing. Only one license would have to be purchased for the server, and a few CALs for the concurrent accesses from the front-end server app.

Everything seemed to be working perfectly. Test requests were humming along and zipping through the system, things were speeding up considerably compared to the old InterLEND workflow, yay yeehaw pass the champagne. And then the rollout started, and the first remote clients began accessing the server to perform requests.

Now things started moving at a v.... e.... r..... y...... ssllloooowwwwwwwww crawl. The client application, which in testing had started up in <5 seconds from double-click to fully started, now took over 10 minutes to display the interface, and left the user stuck with a horrible splash screen that took up 50% of the desktop, and could not be put into the background or otherwise covered up.

If the user had the patience to sit through the glacially slow startup, the client itself took nearly forever to respond to actions. Opening a query screen to search for a particular request would take 2 or 3 minutes to display the query interface. Other actions took similar periods to complete, or even start.

Much head scratching ensued. The administrators of the server could not replicate this behavior on their end, and were starting to pass the blame buck back to the users, or the users' IT infrastructure ("It works fine for us!"). The users' IT people were lobbing the buck back at the server admins, saying everything's fine on their ends, yada yada yada.

A bit of investigating by a coworker finds the real problem. Any actions in the client program are essentially round-tripped through the server front-end program. Think of Windows Vista's User Account Control, implemented in a client app. Performing any action would cause various requests to be sent to the server, e.g. "does this user have permissions for this?" or "what's the contents of table 'massive amounts of data'?".

And worse yet, many of these queries were the equivalent of an SQL "SELECT * FROM SOMETABLE", with the client rummaging through all the returned records for whatever it needed.

Aha! Now the slowdown becomes clear. All the testing and training sessions had been done via local networks at LAN speeds with some test data. There might have been a few minor speed hiccups, but those could be blamed on Windows in general, which everyone knows hiccups louder and harder than anything else in the universe. Fast forward to live deployment, with remote users accessing the system over the public internet over at best T1 lines (major centers), or at worst, 56k dialup (remote rural branches) with large amounts of production data. Suddenly the masses of data that even the simplest action in the client triggers don't show up very fast anymore. And as the system becomes populated with more and more data, the slowdown will only get worse.
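To put rough numbers on that, here's a back-of-the-envelope calculation. The 2 MB payload is purely an assumed figure for "one table's worth of data" and the 100 Mbit/s LAN speed is a guess too; the calculation also ignores latency and protocol overhead, which would only make the slow links look worse.

```python
# Assumed payload for a single "give me the whole table" response.
payload_bytes = 2_000_000

# Nominal link speeds in bits per second. The LAN figure is an assumption;
# T1 and 56k dialup are the links mentioned above.
links = {
    "LAN (100 Mbit/s)": 100_000_000,
    "T1": 1_544_000,
    "56k dialup": 56_000,
}

# Raw transfer time in seconds: bits to move divided by bits per second.
times = {name: payload_bytes * 8 / bps for name, bps in links.items()}

for name, seconds in times.items():
    print(f"{name:>18}: {seconds:8.1f} s")
```

A fraction of a second on the test LAN turns into minutes on dialup for the same data, before counting the many round trips each action makes.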

Some investigating reveals that product X came in two versions. One was the generally available "shrink wrap" type that the vendor supplied by default. A second version, custom-built for another library system which had also been bitten by the network speed "bug", had been rejiggered and optimized to support slower and higher-latency network links. Oops... the consultant had told everyone that product X was designed entirely with internet usage in mind. He never mentioned this special edition.

Essentially this second version sprinkled a little bit of the new-fangled miraculous power of SQL "WHERE" clauses to the client->server messages.

Things got.... somewhat less laggy. Now, clicking on a button would get a response in 5 or 10 seconds, rather than 5 or 10 minutes. Woah... a few extra bytes of client->server network overhead reduced wait times by about 1.5 orders of magnitude, and also reduced server->client bandwidth usage as well. Bonus!
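The difference between the two editions can be sketched in a few lines. Product X sat on MS SQL Server; the example below uses an in-memory SQLite database as a stand-in, and the `requests` table, its columns, and the row counts are all made up for illustration.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE requests (id INTEGER PRIMARY KEY, title TEXT, status TEXT)"
)
conn.executemany(
    "INSERT INTO requests (title, status) VALUES (?, ?)",
    [(f"Title {n}", "overdue" if n % 100 == 0 else "active")
     for n in range(1, 5001)],
)

# Shrink-wrap style: ship every row to the client and filter there.
all_rows = conn.execute("SELECT * FROM requests").fetchall()
overdue_client_side = [row for row in all_rows if row[2] == "overdue"]

# Special-edition style: let the server filter, ship only the matches.
overdue_server_side = conn.execute(
    "SELECT * FROM requests WHERE status = ?", ("overdue",)
).fetchall()

print(len(all_rows), "rows shipped without WHERE")
print(len(overdue_server_side), "rows shipped with WHERE")
```

Both approaches end up with the same overdue requests; the only difference is how many rows have to cross the wire to get there.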

So that's where things stand these days. Product X is in full production use by most libraries in this province. A few opted out of it, and are using other systems, or sticking with InterLEND. And as for InterLEND itself, most locations still use it a bit, slowly whittling away at the pile of old requests still active within it, longing for the day when that last request becomes completed and they can nuke the program from their systems with extreme prejudice. And the locations which have opted to stick it out with InterLEND? Well, we don't talk about those places much anymore....

The moral of all this? If the primary comment from people returning from the consultant's presentations on which product to recommend is "He's cute!", perhaps a different consultant (and group of junket members) should be chosen. And perhaps the consultant's qualifications should be vetted as well, to see if he's got any library experience in his past. Experience which exceeds the "oh yeah, I've borrowed a book on occasion".