So I have some bash script that works fairly well, but I want to try to do it in mostly pure python. I am running into a lot of roadblocks and none of the GPTs are being very helpful.

aws s3 cp s3://bucket/file.txt - |zstdmt -9 | aws s3 cp s3://bucket/file.txt.zst

This bash command works and is super fast and can saturate all the CPUs on a fast enough connection to s3.

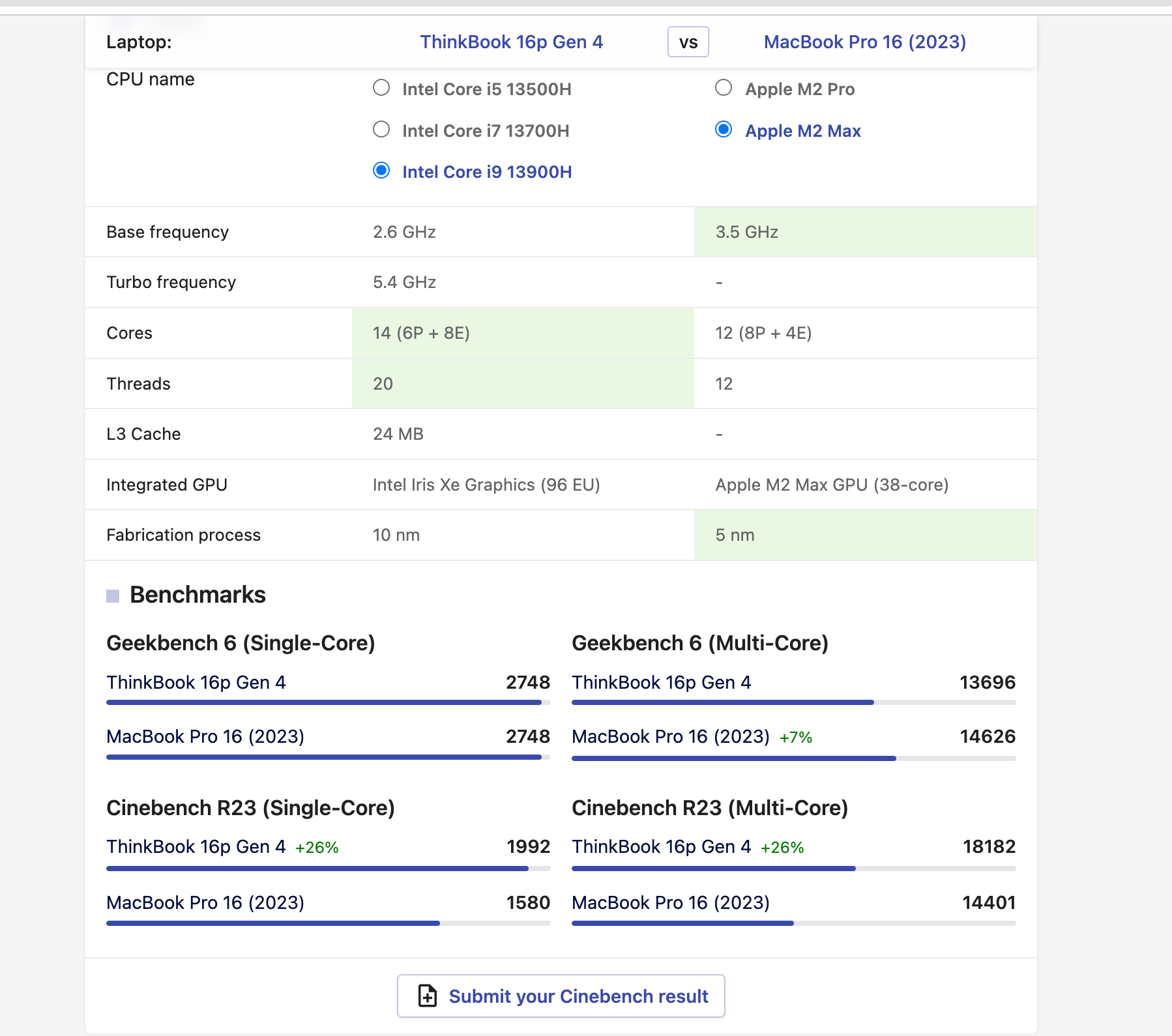

I know python has a GIL, but allegedly the zstdandard library uses cthreads so it can use more than a single CPU and boto3 is threaded on the download and upload, but only for the download_file and download_fileobj which deal with "files".

Download an S3 object to a file.

Variants have also been injected into S3 client, Bucket and Object.

You don't have to use S3Transfer.download_file() directly.

.. seealso::

:py:meth:`S3.Client.download_file`

:py:meth:`S 3.Client.download_fileobj`

Unfortunatelly this method doesn't seem to have been injected

import boto3

s3 = boto3.client('s3')

response = s3.get_object(Bucket='mybucket', Key='mykey')

data = response['Body'].read()

the response['Body'] returns a stream which would've been perfect to wrap a Zstdcompressor around and pass to the upload which also is not properly threaded when used with a stream.

I though it would be trivial to find something in python that can simulate a file object using a stream or sonething similar. Named pipes is my next idea, but even with that and dealing with multiple processes seems to be a PITA. I really though this would be easier to replicate in python.

if they said Mark with the sea instead of Marc with a "c", I can see where the confusion came from.

if they said Mark with the sea instead of Marc with a "c", I can see where the confusion came from.

is strong.

is strong.

FCC closing loophole that gave robocallers easy access to US phone numbers

FCC closing loophole that gave robocallers easy access to US phone numbers

) and Internet service with the same company. They have a website where I can block phone numbers and anyone calling me from a blocked number will get a recording that says something along the line of "This person is not accepting calls at this time".

) and Internet service with the same company. They have a website where I can block phone numbers and anyone calling me from a blocked number will get a recording that says something along the line of "This person is not accepting calls at this time".

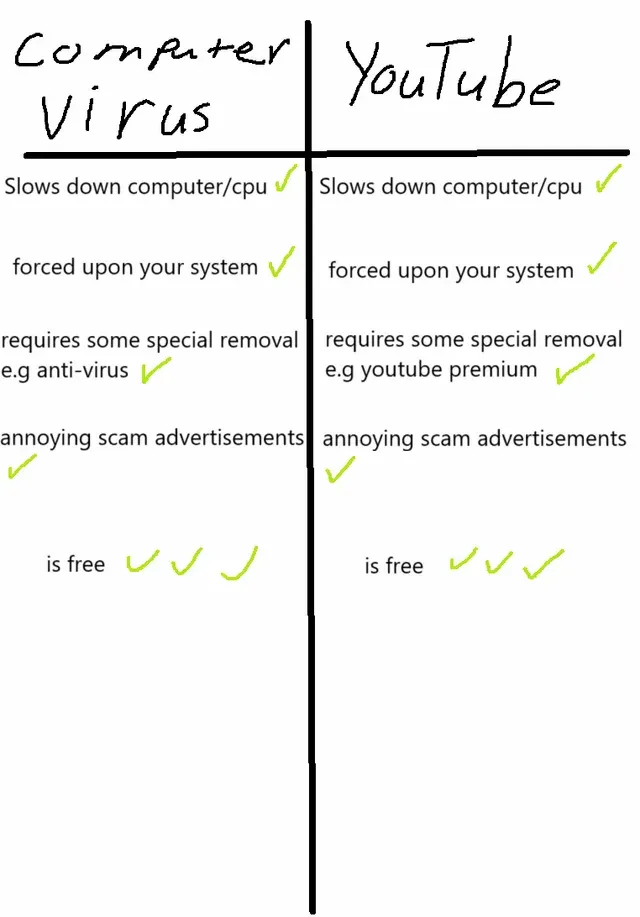

Items 2 and 3 on the YouTube side are incorrect.

Items 2 and 3 on the YouTube side are incorrect. why would I scratch my own car door? I hadn't unlocked a car by physically inserting a key in the door for 20 years until that one rental in Greece two years ago. The extra meters I walked just to return to the door to lock the car is staggering.

why would I scratch my own car door? I hadn't unlocked a car by physically inserting a key in the door for 20 years until that one rental in Greece two years ago. The extra meters I walked just to return to the door to lock the car is staggering.