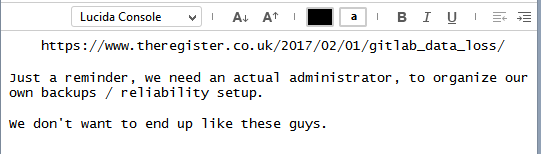

No thread about the GitLab fuckup yet?

-

@flabdablet I'm tending to "backup via Discourse", actually. Forum software, bug tracker, backup platform, what's not to like?

-

@boomzilla said in No thread about the GitLab fuckup yet?:

@flabdablet Is there anything that is? Every so often we get a thread moaning about how "It's $current_year why isn't this solved‽"

Yes, plenty of things. Thing is, you don't see people calling attention to how they don't work because they do now. :P

-

@masonwheeler Name and shame!

And see how long it takes for someone to post a counter example.

-

We do a test disaster recovery every year off-site to prove that our mission-critical systems can be restored and operable from backup.

-

@Onyx said in No thread about the GitLab fuckup yet?:

Which idiot upgraded the fucking system and didn't pay attention to YOUR FUCKING DATABASE TOOLING BEING UPDATED?

.......................................................................... jef

-

@RaceProUK said in No thread about the GitLab fuckup yet?:

@Vault_Dweller It's a bit like that question "If a tree falls and there's no-one to hear it, does it make a sound?"

If we check to see if a tree has fallen and it turns out, 40 years ago, the person who was supposed to plant the tree never did, and no one ever stopped by to check to see if there was actually a tree on the lot until right now-- does it make a sound?

-

@Lorne-Kates I actually like Pratchett's answer to that one:

There is always someone around to hear the tree falling. Even if it's only a squirrel which is wondering why its world is suddenly and rapidly turning sideways.

-

@Rhywden Even if it's just the Auditors?

-

@anonymous234 said in No thread about the GitLab fuckup yet?:

Databases and backups should (in most cases) be a solved problem by now.

My previous project would run a nightly database dump (and sync of data stored on cloud providers) and pitch it into a directory on our NAS, usually at around 21:30. The NAS would then automatically do a pair of backups at about midnight (to one off-site storage service) and 0200 (to another one) next morning, including the DB dumps and all the other data written during the previous day (highly variable). Restoring was awkward (for various unimportant-for-this-thread reasons), but we tested it and had reasonable confidence that it would work. It took a fair bit of setting up overall, but it was pretty good once we'd got it all done; we wanted it to be both turnkey and yet fully accessible to us.
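A restore test is the real proof, but a cheap check on each nightly dump can catch the case where the job "ran" and left an empty or stale file behind. A minimal sketch, assuming the dumps land as plain files on disk; `check_dump` and its thresholds are hypothetical and not part of the setup described above, and `find -mmin` is a GNU/BSD extension rather than POSIX.

```shell
# check_dump FILE MAX_AGE_MINUTES
# Succeeds only if FILE exists, is non-empty, and was modified recently.
# (Hypothetical helper; the name and arguments are illustrative.)
check_dump() {
    file=$1
    max_age_min=$2

    # -s is true only for a file that exists and has a size greater than zero.
    [ -s "$file" ] || {
        echo "FAIL: $file is missing or empty" >&2
        return 1
    }

    # find prints the path only if it was modified less than
    # max_age_min minutes ago, so empty output means the dump is stale.
    [ -n "$(find "$file" -mmin "-$max_age_min")" ] || {
        echo "FAIL: $file is older than $max_age_min minutes" >&2
        return 1
    }

    echo "OK: $file"
}
```

Run after the nightly job, a non-zero status from `check_dump` is the signal to alert someone. It says nothing about whether the dump can actually be restored; that still needs the periodic restore test.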

The current project… well, I'm still working on getting the code to the point where I can store state in a DB at all.

Long road ahead…

-

@asdf said in No thread about the GitLab fuckup yet?:

@Yamikuronue said in No thread about the GitLab fuckup yet?:

forgot to return the inner return value

Oh, yeah, that's a classic. Everyone who's ever touched Shell scripts has made that mistake once or twice. ;)

Almost all of our batch scripts have the "magic incantation" of exit /b 0. Just in case, you know, non-zero return. (not my scripts)
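For anyone who hasn't been bitten yet, here is roughly what "forgot to return the inner return value" looks like in a POSIX shell. This is a sketch with made-up function names (`do_backup`, `run_backup_buggy`, `run_backup_fixed`), not anyone's actual script. A function's exit status is that of its last command, so a trailing `echo` quietly turns a failure into success.

```shell
# Hypothetical stand-in for a real backup step that fails.
do_backup() {
    return 3
}

# Buggy wrapper: the function's status is the status of its last command,
# which is the echo, so the failure from do_backup is lost.
run_backup_buggy() {
    do_backup
    echo "backup step finished"
}

# Fixed wrapper: capture the inner status and hand it back to the caller.
run_backup_fixed() {
    do_backup
    rc=$?
    echo "backup step finished"
    return "$rc"
}

# Call both wrappers and report what the caller actually sees.
for wrapper in run_backup_buggy run_backup_fixed; do
    status=0
    "$wrapper" >/dev/null || status=$?
    echo "$wrapper exited with $status"
done
```

This prints `run_backup_buggy exited with 0` and `run_backup_fixed exited with 3`. An unconditional `exit /b 0` at the end of a batch script has the same effect as the buggy wrapper, only on purpose.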

-

@Lorne-Kates said in No thread about the GitLab fuckup yet?:

@RaceProUK said in No thread about the GitLab fuckup yet?:

@Vault_Dweller It's a bit like that question "If a tree falls and there's no-one to hear it, does it make a sound?"

If we check to see if a tree has fallen and it turns out, 40 years ago, the person who was supposed to plant the tree never did, and no one ever stopped by to check to see if there was actually a tree on the lot until right now-- does it make a sound?

Yes it does, and the sound goes

FFFFFFFFFUUUUUUUUUUUUUUUUUUUUUUUUUUUUUUUU.................................

-

@loopback0 said in No thread about the GitLab fuckup yet?:

@Vault_Dweller said in No thread about the GitLab fuckup yet?:

@loopback0 I think "weren't even setup" falls pretty heavily under the definition of "failed"

It's hard to fail if you're not even attempting something.

You can't lose if you don't play, right?

-

@loopback0 said in No thread about the GitLab fuckup yet?:

@Vault_Dweller said in No thread about the GitLab fuckup yet?:

@loopback0 I think "weren't even setup" falls pretty heavily under the definition of "failed"

It's hard to fail if you're not even attempting something.

It's not that hard. In this case, you're obviously failing to even attempt that thing.

-

@flabdablet said in No thread about the GitLab fuckup yet?:

It's quite comforting to keep a really close eye on the backup process. Found a failing source drive once just because backup was running slower than I'd come to expect. SMART and RAID logs showed nothing untoward. Did read-speed tests on all the drives in the set individually (hurrah for software RAID), replaced the one running at a quarter of the speed it should, and slapped it into service at my house as a secondary backup; two months later it started reallocating sectors.

This reminded me of something my dad once said. He had worked at a variety of things in the late 50s and early 60s before he got into IT support, and in one of those jobs he got to watch people testing electronic components (specifically high-current resistors, if memory serves, the sort that include a heat sink). The testers could often tell when duds were out of spec because of the speed at which the needles on the analogue meters would move, that is, before the needles had settled.

Boys and girls, you can't do that with digital meters.

-

@Steve_The_Cynic said in No thread about the GitLab fuckup yet?:

Boys and girls, you can't do that with digital meters.

You'd be surprised what you can do with a digital meter. A high-speed digital oscilloscope can measure all sorts of things, and those things are really just digital meters with a different display subsystem.

-

@dkf said in No thread about the GitLab fuckup yet?:

@Steve_The_Cynic said in No thread about the GitLab fuckup yet?:

Boys and girls, you can't do that with digital meters.

You'd be surprised what you can do with a digital meter. A high-speed digital oscilloscope can measure all sorts of things, and those things are really just digital meters with a different display subsystem.

Well, I meant digital multimeters, which typically have an update frequency of a few Hz at the most. They don't change fast enough to provide the instant feedback these testing folk were able to use to reject (some of) the duds.

-

@asdf said in No thread about the GitLab fuckup yet?:

@Yamikuronue said in No thread about the GitLab fuckup yet?:

and somewhere along the way it stopped working

Which means they ran a shell script without set -e

I was hoping that meant "set email address for errors to following". Alas.
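What `set -e` does do, sadly without emailing anyone, is make the shell exit as soon as a simple command fails. A small sketch of the difference, where `false` stands in for a hypothetical dump step that fails and the `script` variable is purely illustrative:

```shell
# Hypothetical backup script body: `false` stands in for a failing dump step.
script='false; echo "uploaded backup"'

# Without set -e the failure is ignored, the next step runs anyway,
# and the script as a whole reports success.
status=0
sh -c "$script" || status=$?
echo "without set -e: exit status $status"

# With set -e the shell stops at the first failing command
# and exits with that command's status.
status=0
sh -c "set -e; $script" || status=$?
echo "with set -e: exit status $status"
```

The first run prints `uploaded backup` and reports status 0; the second prints nothing from the script and reports status 1. It is not a complete safety net: failures in an `if` condition, on the left of `||` or `&&`, or in any pipeline stage but the last do not trigger the exit.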

@cartman82 said in No thread about the GitLab fuckup yet?:

@Yamikuronue said in No thread about the GitLab fuckup yet?:

I copied this to my new boss and team with "This is the best reason I've ever seen to run regular disaster drills"

Good idea.

Sending this to mine.

I see Eastern Europe hasn't moved on from grayscale yet.

-

@cartman82 said in No thread about the GitLab fuckup yet?:

When you pay gitlab

It is free, though. So...

-

Yeah, I've got code hosted on GitLab. From what I can tell (self-installed a copy too), they've got Discourse levels of dependency soup (also, it's written in Ruby. Wonder if there's a coincidence there). I couldn't even get it installed without using a Docker image. So it really wouldn't surprise me if that was part of the problem...

-

@sloosecannon The problem was with them trying to get postgres to replicate and then accidentally deleting some of the DB's files on the disk. I suspect it's orthogonal to their self sabotage WRT ruby.

-

Yeah, my university's engineering department uses a private GitLab, and I'm pretty happy with it, though I wish it had the GitHub functionality for creating releases; I don't want to have to make a tag to attach files. That actually affects the state of the repo, in a way that doesn't work out that well for what I'm doing with my repos.

-