Client Survey

-

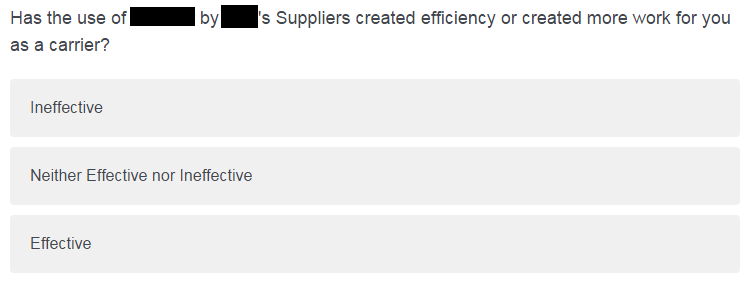

So earlier this year we had an integration project with one of our big clients. Now that it's been running for several months, they sent us a survey to see what we think of it. This is one of the questions:

You'd think surveyors would have figured out that responses to these questions mean absolutely shit. If the respondent can't figure out how the answers are supposed to apply to your question, then the response is worthless.

-

I suppose they meant something more like "Has the use of product by company's suppliers allowed you to be more effective in completing your work?"

-

Then that's what they should have said, instead of this unanswerable mess. What they should do is have a group of people on their side take the survey before sending it out to see if the questions make sense. A sort of Quality Assurance (le gasp), if you will.

-

Sad thing is, I know a lot of people whose surveys did indeed start that way, and then ended up like the one you posted because so many people complained and pushed and grumbled about it.

It's also why I had to extend the "Question Text" field in our home-grown survey system from 300 wide to 2000 wide.

-

I've seen this shit before when Highly Paid Survey Consultants get involved. Chances are the original answers were "made things more efficient", "had no effect", or "created more work". But just as "excellent to terrible" got turned into "extremely dissatisfied to extremely satisfied" and caused my old employers' error rates to soar, the answers got changed to shit, each one more alike than the last.