In which @Captain Transfers a 500GB File

-

I need to transfer a 500GB file from one Windows Server to another. Unfortunately, the source doesn't like it when we ask it to do too much, and services start to fail if we just let a transfer go at full speed. So we need to throttle down.

Is there a good BitTorrent client for Windows that meets these requirements?

- No Java dependency

- Scheduled transfer speed throttling

- Suitable for Windows Server

- Doesn't need a user logged in (run as a service?)

I would do Transmission if this was Linux or a Mac (or if they had a link to the Windows port on their download page). Any other options?

-

@Captain an older version of uTorrent?

-

@Captain Deluge maybe?

-

@Captain Is this across a LAN or WAN?

Robocopy has a flag (/ipg:N) that sets the gap between 64 KB packets, which lets you throttle the transfer.
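To get a feel for what N to pick, here is a rough back-of-the-envelope helper in Python. It assumes the gap is N milliseconds inserted after each 64 KB block and that the block itself goes out at the unthrottled link speed; the function name and the example rates are made up for illustration, and real throughput will differ, so treat the result as a starting point to tune from.

```python
BLOCK_BYTES = 64 * 1024  # block size the /ipg gap is assumed to apply to


def ipg_for_rate(target_bytes_per_s, link_bytes_per_s):
    """Estimate the inter-packet gap (ms) that averages out to the target rate.

    Assumes each 64 KB block is sent at full link speed and is then
    followed by the gap. Returns 0 if the link is already slower than
    the target.
    """
    seconds_per_block_at_target = BLOCK_BYTES / target_bytes_per_s
    seconds_on_wire = BLOCK_BYTES / link_bytes_per_s
    gap_seconds = seconds_per_block_at_target - seconds_on_wire
    return max(0, round(gap_seconds * 1000))


# Example: aim for roughly 1 MiB/s on a link that could do 100 MiB/s.
print(ipg_for_rate(1024 * 1024, 100 * 1024 * 1024))
```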

-

@Magus said in In which @Captain Transfers a 500GB File:

@Captain Deluge maybe?

This.

I'm using Tixati for my Linux distro downloading needs, but it doesn't have a headless mode.

-

Torrent seems like a kludge. Maybe try a better file copier? I use TeraCopy, but it doesn't do rate limiting. Maybe try one of these: http://www.instantfundas.com/2011/08/3-file-copy-programs-better-than.html

-

500GB is pretty large. A sneakernet transfer will probably be simpler. Possibly faster, too.

-

Caddy and if you got the space Parchive

-

@anotherusername if it's within LAN and servers have Gigabit Ethernet, it might indeed be faster to copy over wire. Especially if you factor in time needed to connect the disk.

-

@Gąska the talk about rate limiting the transfer leads me to suspect that it isn't that easy, though.

-

@anotherusername Speed isn't the problem, as such. The problem is that the hard drive sucks at random reads, and it brings services down if I run the transfer at full speed -- via USB or Ethernet or SATA or SAS.

So I need to rate limit during the day (or set the rate to 0 -- i.e., pause the transfer) and resume highest speeds when the office is closed.
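That schedule could be written as a small function that a copy loop checks before each chunk. A sketch in Python, where the 08:00 to 18:00 weekday office hours, the constant names, and the convention that 0 means "paused" and None means "unthrottled" are all assumptions for illustration:

```python
from datetime import datetime, time as dtime

OFFICE_OPEN = dtime(8, 0)    # assumed opening time
OFFICE_CLOSE = dtime(18, 0)  # assumed closing time
DAY_LIMIT = 0                # bytes per second; 0 means pause the transfer
NIGHT_LIMIT = None           # None means no throttling


def allowed_rate(now):
    """Return the rate cap for the given datetime.

    During assumed office hours on weekdays the transfer is paused;
    at night and on weekends it runs unthrottled.
    """
    is_weekday = now.weekday() < 5  # Monday=0 ... Friday=4
    in_office_hours = OFFICE_OPEN <= now.time() < OFFICE_CLOSE
    if is_weekday and in_office_hours:
        return DAY_LIMIT
    return NIGHT_LIMIT


# Usage: a copy loop would call allowed_rate(datetime.now()) before each chunk.
```

Setting DAY_LIMIT to a small positive number instead of 0 would give a daytime trickle rather than a full pause.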

-

Have you considered writing a simple program to do the transfer?

Just read it into a fixed sized buffer, send that, wait x (milli)seconds, repeat.
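A minimal Python sketch of that loop, for illustration only. The function name `throttled_copy`, the 1 MiB buffer, and the 0.1 s pause are assumptions, not anything from this thread. Reading the file strictly front to back also keeps the access pattern sequential, which should be kinder to a drive that struggles with random reads.

```python
import time


def throttled_copy(src_path, dst_path, chunk_size=1024 * 1024, pause_seconds=0.1):
    """Copy src_path to dst_path one chunk at a time, sleeping between chunks.

    Returns the number of bytes copied. The pause bounds the average
    rate at roughly chunk_size / pause_seconds bytes per second.
    """
    copied = 0
    with open(src_path, "rb") as src, open(dst_path, "wb") as dst:
        while True:
            chunk = src.read(chunk_size)  # sequential read into a fixed-size buffer
            if not chunk:                 # empty read means end of file
                break
            dst.write(chunk)
            copied += len(chunk)
            time.sleep(pause_seconds)     # wait before touching the disk again
    return copied
```

With the defaults this tops out around 10 MiB/s. It does not resume after an interruption; that would need the loop to seek both files to the size of the partial destination before continuing.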

-

@Captain said in In which @Captain Transfers a 500GB File:

The problem is that the hard drive sucks at random reads, and it brings services down if I run the transfer at full speed -- via USB or Ethernet or SATA or SAS.

that's....... that's bad.....

can you schedule some down time to take the machine offline and get the data off?

-

@accalia @Captain

By which she means, all the data, to a server with a functional storage system.

Please at least tell me that you have actual backups taken regularly of this data. Because if random file access is overwhelming production operations, the server is on the verge of proving that RAID isn't backup.

-

@izzion

Also, assuming you do have actual backups taken, why not just restore the backup to the new server? Either way transferring the file is going to require a "change freeze" as of some point in time, might as well be based on your already scheduled backup window.

-

@izzion said in In which @Captain Transfers a 500GB File:

Please at least tell me that you have actual backups taken regularly of this data. Because if random file access is overwhelming production operations, the server is on the verge of proving that RAID isn't backup.

I know I know I know, but I haven't allocated time to set up a secondary system yet.

-

@Captain said in In which @Captain Transfers a 500GB File:

@anotherusername Speed isn't the problem, as such. The problem is that the hard drive sucks at random reads, and it brings services down if I run the transfer at full speed -- via USB or Ethernet or SATA or SAS.

So I need to rate limit during the day (or set the rate to 0 -- i.e., pause the transfer) and resume highest speeds when the office is closed.

In that case... it might not be overkill to set up an Apache webserver so that the file in question is shared, and then use a client which supports rate-limiting the download speed, e.g. JDownloader 2.

-

@anotherusername said in In which @Captain Transfers a 500GB File:

500GB is pretty large. A sneakernet transfer will probably be simpler. Possibly faster, too.

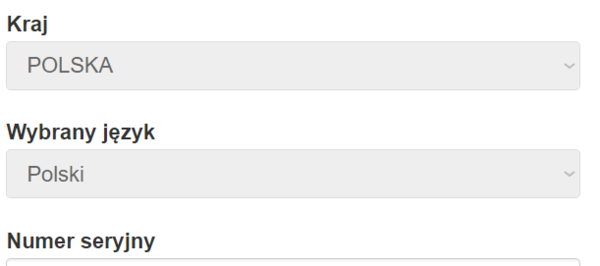

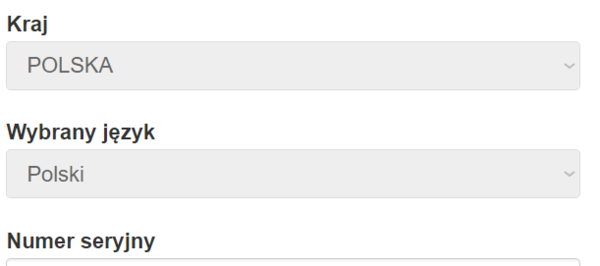

I bought the 1.5 TB version of this a couple of days ago. Very unimpressed with Seagate's product registration. There is an autorun on the drive which opens a browser (IE rather than Edge or my default, for some reason) to a page with the serial number as a parameter. But it searched for it rather than going directly, leading to a Bing page with no results found.

Manually pasting the address goes to a page like this:

Those dropdown boxes are disabled, and the second one (chosen language) was not chosen by me, but determined by my location rather than my browser language (again...)

In order to change it, you need to go back to the main Seagate page, change the location (no way to have one location with a different language, as we all know every country is made up of 100% natives), then re-paste the address.

Of course, I didn't register because none of this is needed in the EU, but I would bet that changing the language to English would have blocked me from using my real address.

-

@coldandtired said in In which @Captain Transfers a 500GB File:

I bought the 1.5 TB version of this a couple of days ago. Very unimpressed with Seagate's product registration. There is an autorun on the drive which opens a browser (IE rather than Edge or my default, for some reason) to a page with the serial number as a parameter. But it searched for it rather than going directly, leading to a Bing page with no results found.

Manually pasting the address goes to a page like this:

Those dropdown boxes are disabled, and the second one (chosen language) was not chosen by me, but determined by my location rather than my browser language (again...)

In order to change it, you need to go back to the main Seagate page, change the location (no way to have one location with a different language, as we all know every country is made up of 100% natives), then re-paste the address.

Of course, I didn't register because none of this is needed in the EU, but I would bet that changing the language to English would have blocked me from using my real address.

Stop being so negative. Someone went to a lot of effort to make it “easy”!

-

@coldandtired LOL. Shit like that is why I'll never trust hardware companies to do good software.

-

At the current technology level, I would say having a single file that size at all is […]. However, I am at a loss as to the alternative in this case.

Is splitting the file into a spanned archive feasible? It won't get around the main problem, but it could ease the transfer.

Maybe, I dunno, wait five years until that's a more commonly used quantity of data?

-

@ScholRLEA said in In which @Captain Transfers a 500GB File:

Is splitting the file into a spanned archive feasible?

That thought occurred to me also, but when he said that simply reading the whole file at max speed will tie up the hard drive enough to cause other services to start failing...

-

@anotherusername said in In which @Captain Transfers a 500GB File:

when he said that simply reading the whole file at max speed will tie up the hard drive enough to cause other services to start failing...

Copy it overnight when nothing else important is happening.

-

@dkf Just like sending a probe to the Sun!

-

@ScholRLEA Umm, yes, but with most businesses they really don't do all that much outside business hours. That's when it's a good time to move large amounts of data about.

Or I suppose they could stop trying to be cheap with networking and get a gigabit switch.

-

Set up a simple FTP server like FileZilla (Server) and limit the bandwidth there?

Plug a portable harddisk into a USB 2 port?

Connect a cheap USB-to-Ethernet dongle that only does 10 MBit/s, on a good day?

Just copy it over the network and constantly click "pause" and "resume" in the file copy dialog?

-

@ChrisH said in In which @Captain Transfers a 500GB File:

Just copy it over the network and constantly click "pause" and "resume" in the file copy dialog?

-

@dkf said in In which @Captain Transfers a 500GB File:

@anotherusername said in In which @Captain Transfers a 500GB File:

when he said that simply reading the whole file at max speed will tie up the hard drive enough to cause other services to start failing...

Copy it overnight when nothing else important is happening.

And preferably use the sneakernet option to make sure the transfer completes that night.

Unless running it at full speed actually no kidding brings the system down, in which case.... you're probably screwed

-

@sloosecannon said in In which @Captain Transfers a 500GB File:

Unless running it at full speed actually no kidding brings the system down, in which case.... you're probably screwed

FWIW, we move 200GB files off of Windows systems relatively often and that works fine.

-

@dkf said in In which @Captain Transfers a 500GB File:

@sloosecannon said in In which @Captain Transfers a 500GB File:

Unless running it at full speed actually no kidding brings the system down, in which case.... you're probably screwed

FWIW, we move 200GB files off of Windows systems relatively often and that works fine.

Yeah, sounds like his hardware is going very bad very quick.

@Captain you do have backups right? You better have backups.

-

@dkf said in In which @Captain Transfers a 500GB File:

FWIW, we move 200GB files off of Windows systems relatively often and that works fine.

Same. I'll put the second vote in for Robocopy though; you can set flags such that the copy's restartable if it fails in the middle (seems likely given the implied fragility) and you can multi-thread to go all out (seems like a bad idea in this case). I've never tried throttling as mentioned above. But of course the biggest advantage is that it's already on the server.

-

@Captain If you can make the file available over FTP or HTTP,

wget -N -c -t inf --limit-rate=50k 'url'

will do a rate-limited fetch that you can restart if the client dies partway.

-

@Captain said in In which @Captain Transfers a 500GB File:

it brings services down if I run the transfer at full speed -- via USB or Ethernet or SATA or SAS

If you jigger the USB drivers on your server to disable USB2 support, there is no way that a transfer to an external hard disk over USB1 is going to be quick enough to have any noticeable impact on the server whatsoever.

-

@ChrisH said in In which @Captain Transfers a 500GB File:

FTP

:shudder: yeah, if I'm not mistaken, FTP has no official standard for verifying copy integrity, and we're talking about a 500 GB file...

-

@anotherusername said in In which @Captain Transfers a 500GB File:

@ChrisH said in In which @Captain Transfers a 500GB File:

FTP

:shudder: yeah, if I'm not mistaken, FTP has no official standard for verifying copy integrity, and we're talking about a 500 GB file...

Just md5sum the file on the.... oh wait that'll crash the server

-

@sloosecannon Not to mention, if the MD5 is wrong, you have to retransfer the whole file.

That's at least a nice aspect about torrents... every chunk is checksum-verified.

Although, creating the torrent would probably bring down the server, since it'd have to compute the checksum for every chunk.

sigh
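For what it's worth, the per-chunk verification idea does not strictly need a torrent client. A rough Python sketch, assuming SHA-256 and 4 MiB chunks (both arbitrary choices, as are the function names): hash each chunk with sequential reads, optionally pausing between chunks so the source disk is not hammered, then compare the two lists to see which chunks would need to be sent again.

```python
import hashlib
import time


def chunk_digests(path, chunk_size=4 * 1024 * 1024, pause_seconds=0.0):
    """Return a list of SHA-256 hex digests, one per fixed-size chunk of the file."""
    digests = []
    with open(path, "rb") as f:
        while True:
            chunk = f.read(chunk_size)
            if not chunk:
                break
            digests.append(hashlib.sha256(chunk).hexdigest())
            time.sleep(pause_seconds)  # give the disk a rest between chunks
    return digests


def mismatched_chunks(source_digests, dest_digests):
    """Return indices of chunks that differ or exist on only one side."""
    longest = max(len(source_digests), len(dest_digests))
    return [
        i for i in range(longest)
        if i >= len(source_digests)
        or i >= len(dest_digests)
        or source_digests[i] != dest_digests[i]
    ]
```

Only the chunks whose indices come back from `mismatched_chunks` would need to be re-read and re-sent, instead of the whole 500 GB.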

-

@flabdablet said in In which @Captain Transfers a 500GB File:

@Captain said in In which @Captain Transfers a 500GB File:

it brings services down if I run the transfer at full speed -- via USB or Ethernet or SATA or SAS

If you jigger the USB drivers on your server to disable USB2 support, there is no way that a transfer to an external hard disk over USB1 is going to be quick enough to have any noticeable impact on the server whatsoever.

In a similar vein, if the destination machine is on your LAN and you can arrange for the file to be available to it via Windows file sharing, you can turn the destination machine's Ethernet port down to 10Mb/s to achieve a grindingly slow transfer rate.

-

@flabdablet said in In which @Captain Transfers a 500GB File:

@Captain said in In which @Captain Transfers a 500GB File:

it brings services down if I run the transfer at full speed -- via USB or Ethernet or SATA or SAS

If you jigger the USB drivers on your server to disable USB2 support, there is no way that a transfer to an external hard disk over USB1 is going to be quick enough to have any noticeable impact on the server whatsoever.

That's what, 12 Mbit/s?

mathmathmath...

500 GB at 12 Mb/s = ~93 hours

Is that right? ... yep:
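The arithmetic can be checked in a couple of lines of Python. This assumes decimal units (500 GB = 500 × 10^9 bytes, 12 Mb/s = 12 × 10^6 bits per second) and ignores protocol overhead, so a real USB1 transfer would take longer; the function name is just for illustration.

```python
def transfer_hours(size_bytes, link_bits_per_s):
    """Hours needed to move size_bytes over a link of the given raw bit rate."""
    seconds = size_bytes * 8 / link_bits_per_s
    return seconds / 3600


hours = transfer_hours(500e9, 12e6)
print(f"{hours:.1f} hours")  # prints "92.6 hours"
```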

-

I like rsync for transfers (of course it works better in Linux).

http://www.howtogeek.com/50794/keep-rsync-from-using-all-your-bandwidth/

-

@dangeRuss If you're going to use rsync, and you have to restart after a broken connection, make sure you use its --whole-file option to force it to do the whole thing again; otherwise it will hammer the shit out of the drives at both ends of the connection looking for data chunks the source and destination files have in common.

-

@flabdablet said in In which @Captain Transfers a 500GB File:

@dangeRuss If you're going to use rsync, and you have to restart after a broken connection, make sure you use its --whole-file option to force it to do the whole thing again; otherwise it will hammer the shit out of the drives at both ends of the connection looking for data chunks the source and destination files have in common.

You would probably want the in-place option as well.

What kind of file are we working with here? How big is the drive?

If the drive doesn't do well with random seeks, maybe it's worth it to copy it block by block (this would usually need to be done when the drive is not mounted, but I'm sure there are solutions that would let you do it with a live drive). If the partition is small enough, this may be your best bet.

And if you guys are having issues with services dying when you try to do things with the drive, maybe it's time to think about upgrading to SSDs. They are getting pretty cheap (if you stick to the consumer ones, which, while not enterprisey, will still be far better than some shitty drive that keeps having your services fail). I recommend something like the Samsung 850 EVO.

It comes in sizes up to 4TB (although getting exponentially more expensive as you go up in size).

For 500GB-1TB sizes, I doubt this is more expensive than an equivalent-sized SAS drive.

If you have a little more money get the Pro version.

-

@dangeRuss said in In which @Captain Transfers a 500GB File:

maybe it's time to think about upgrading to SSDs.

Not even that. Maybe it's time to think about upgrading to drives that aren't literally about to fail

-

@sloosecannon said in In which @Captain Transfers a 500GB File:

@dangeRuss said in In which @Captain Transfers a 500GB File:

maybe it's time to think about upgrading to SSDs.

Not even that. Maybe it's time to think about upgrading to drives that aren't literally about to fail

I don't think he's saying they're about to fail. I'm sure plenty of rust powered drives are shitty at random reads to the point of things starting to fail. I feel like there's no reason not to upgrade to SSDs vs Rust drives at this point.

-

@dangeRuss said in In which @Captain Transfers a 500GB File:

@sloosecannon said in In which @Captain Transfers a 500GB File:

@dangeRuss said in In which @Captain Transfers a 500GB File:

maybe it's time to think about upgrading to SSDs.

Not even that. Maybe it's time to think about upgrading to drives that aren't literally about to fail

I don't think he's saying they're about to fail. I'm sure plenty of rust powered drives are shitty at random reads to the point of things starting to fail. I feel like there's no reason not to upgrade to SSDs vs Rust drives at this point.

I've got several servers running on spinning rust drives, and trying to copy big things (granted not 500gb big, but still) off of them doesn't bring the server down...... Either those are some of the shittiest HDDs ever, or they're failing......

-

@sloosecannon ...it could also say more about the services in question than it says about the drive itself. If a few services all need real-time data, trying to copy a really big file might be the straw that brings it all down.

-

@anotherusername said in In which @Captain Transfers a 500GB File:

If a few services all need real-time data

I... suppose that's true. It depends on the services, I suppose. If you're running like tons of databases with random reads going on and stuff, I could see that kind of an issue

-

@sloosecannon it could also be swapping heavily already due to insufficient RAM, which will slow things down tremendously...

-

@sloosecannon Yes we do have backups.

-

@anotherusername 32 GB, maybe 28 are in use.

-

@Captain Can you not just copy the 500GB file off one of the backups, then?