Server cooties are being FIXED, NOT swapped

-

As of a month or so ago, we upgraded the pg gem that is used to connect to the Postgres database.

- We did this because of a rare but extremely annoying encoding bug where posts will simply stop rendering. (remember that?)

- Unfortunately, the new pg gem has an even bigger problem: a memory leak.

As of beta 8, we have reverted to the earlier version of the Postgres gem for now while we work with them to figure out why memory is leaking so badly.

This means anyone who was on an earlier 1.2 beta (anything from beta 4 onward) should upgrade to beta 8 immediately. If you do not, expect to run into out of memory errors regularly until you do.

-

WTF. What happened to beta 7? Did Discourse go Windows? Java?

-

Beta 7 was 3 hours ago, beta 7 vs 8 is only this fix.

-

Duuuuuuuuuuuuuuuuuuuuuuuuuuuuuude. It's just like the good old days!

-

Anywho....paging @PJH.

-

It's like the software releases I did when I was 8. Fuck something up, don't test it, release, and then test afterwards and release again because you found one of the billions and billions of bugs.

-

Yeah, the pub's probably closed.

-

So we might get Invisiposts™ back?

Ummm, yay?

-

Atwood likes Spolsky right? What does he think of the Netscape release plan? (Release when it builds; patch when there's a bug bad enough to appear in a newspaper.)

-

<maybe....

-

It's like the software releases I did when I was 8. Fuck something up, don't test it, release, and then test afterwards and release again because you found one of the billions and billions of bugs.

That’s still better than most Github projects, where there are no releases at all and you’re supposed to use the tip of the master branch.

-

That's evil.

-

..... that reminds me I need to tag @sockbot for a release soon

-

you’re supposed to use the tip of the master branch.

It's okay, though, it's just the tip, it won't hurt your inexperienced system...

-

DFHack updates the master branch whenever there's a release. Otherwise, it's all on the develop branch.

-

you’re supposed to use the tip of the master branch

The dating advice thread is that way

-

It's like the software releases I did when I was 8. Fuck something up, don't test it, release, and then test afterwards and release again because you found one of the billions and billions of bugs.

You did releases? I think I had just two things: "latest" and "looks like an old copy".

-

Why do you put all these high-effort posts buried in the middle of threads nobody reads? You should put fart jokes here, and the high-effort posts in new threads. So we can then reply to them with fart jokes.

-

Ohhh, I suppose that's what + Reply as linked Topic is for...

TBH, I don't think I had the intention of writing a book when I responded to Ben's post, it was going to be a "+1 been there, done that" type of response that kind of got away from me...

-

Fart.

-

Would you like us to move it to a different place?

-

You mean, like its own topic in General or Sidebar WTF? Something like that.

Sure, if you think it deserves it, why not! :D

-

I moved a post to a new topic: 1 year of professional C development

-

The cooties may have been swapped, but they're not gone.

-

I logged in just as it was happening; postgresql was at 100%. I need to track the query when this happens, so I will see if I can enable the slow log on pg.
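One way to catch the offending query while postgresql is pegged is to list whatever is running at that moment, longest first. This is only a sketch: it assumes PostgreSQL 9.2 or later, where `pg_stat_activity` exposes the `pid`, `state`, and `query` columns, and the thread does not say which version the server runs.

```sql
-- List non-idle backends, longest-running statement first.
-- Assumes PostgreSQL 9.2+ column names (pid, state, query).
SELECT pid,
       now() - query_start AS runtime,
       state,
       query
FROM pg_stat_activity
WHERE state <> 'idle'
  AND pid <> pg_backend_pid()   -- skip this monitoring session itself
ORDER BY runtime DESC;
```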

(whenever crazy happens here I get a message in slack)

-

@boomzilla were you restoring posts in /t/1000 before 504s hit? There was lag before, but it was kinda working...

whenever crazy happens here I get a message in slack

You must be swamped!

... oh, you mean the server.

-

https://meta.discourse.org/t/1-2-beta-users-please-upgrade-to-beta-8-immediately-due-to-critical-memory-leak/25186

Oh, boy...now that topic is "private or doesn't exist." Meet the new server cootie swap topic, same as the old server cootie swap topic:

-

It's actually not a swap anymore, the pg gem was a magenta herring. It was actually a memory leak in EventMachine, used by the message bus, at a rate of 16kb per second.

So, no swappage, and the leak is over! Happy times!

-

Memory leak?

In Ruby?

Are they sure? Because it's hard to tell when it uses 8GB to print Hello World.

-

In Ruby?

No, it's actually C code (or C++, I think, after looking at their git repo).

-

Because it's hard to tell when it uses 8GB to print Hello World.

And allocating 200,000 strings per request...

-

That’s still better than most Github projects, where there are no releases at all and you’re supposed to use the tip of the master branch.

Like, say, the venerable (read: old) JS tablesorter script, which has a glaring IE11 bug fixed since June 2014 that has yet to be in an actual release?

-

Making releases sucks.

-

And allocating 200,000 strings per request...

ASCII or UNICODE...?

I ask merely for amusement...

-

T'was a reference to Sam's postulation some time back that UNICODE was the reason behind our whitescreens...

-

The strings actually include a byte to indicate their encoding.

Also note that a considerable number of those are the empty string.

T'was a reference to Sam's postulation some time back that UNICODE was the reason behind our whitescreens...

Yeah, the postgres gem (call it a "driver") was returning the "blob of bytes" string encoding in certain situations.

-

btw, pg gem is on latest so white screen stuff should be gone.

only huge issue left here is that for some reason pg is pegging once in a while. I saw that yesterday about this time and we had a 40 sec outage. But there is a definite reduction in server cooties.

Will get pg logs in so we can isolate what query is causing this.

-

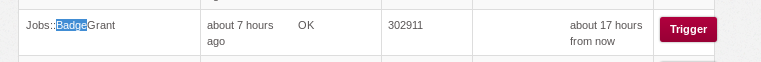

Will get pg logs in so we can isolate what query is causing this.

Is this likely to be one of mine or one of the behind-the-scenes ones? (Nervous/paranoid because whenever I see 'query' mentioned like that I think of the badges....)

-

I don't want to point fingers before I have any ammo :) but it's possible.

-

I don't want to point fingers before I have any ammo but it's possible.

Yeah, this one's worrying me and I'm keeping an eye on it...

-

Update, been monitoring and seen quite a few slow reqs over a day of monitoring

I enabled logging of all queries taking longer than 1 second and am watching that. I also raised work_mem on pg to 100MB (from 10MB) and shared_buffers to 1GB (from 200MB), since we have memory that is unused on the box.

Watching the logs to see what will happen and what are the slow queries.
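For reference, the changes described above map onto three PostgreSQL settings. A minimal sketch, assuming PostgreSQL 9.4 or later, where `ALTER SYSTEM` is available; on older versions the same names and values would go into `postgresql.conf` instead. The thread does not say how the settings were actually applied.

```sql
-- Log every statement that runs longer than 1 second.
ALTER SYSTEM SET log_min_duration_statement = '1s';

-- Memory available to each sort or hash operation, raised from 10MB.
ALTER SYSTEM SET work_mem = '100MB';

-- Shared page cache, raised from 200MB; only takes effect after a server restart.
ALTER SYSTEM SET shared_buffers = '1GB';

-- Apply the reloadable settings (the first two) without restarting.
SELECT pg_reload_conf();
```

Note that `work_mem` is a per-operation limit, so a single complex query, or many concurrent ones, can use several multiples of it.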

-

@PJH right off the bat noticing some badge queries that are fairly expensive and being run a lot:

    2015-02-20 03:03:53 UTC LOG: duration: 1151.014 ms statement:
    INSERT INTO user_badges(badge_id, user_id, granted_at, granted_by_id, post_id)
    SELECT 147, q.user_id, q.granted_at, -1, NULL
    FROM (
      WITH exclusions AS ( /* Which categories to exclude from counters */
        SELECT user_id, id, topic_id, post_number
        FROM posts
        WHERE raw LIKE '%magic uuid to exclude%'
          AND user_id IN (
            SELECT gu.user_id
            FROM group_users gu
            WHERE group_id IN (
              SELECT g.id FROM groups g WHERE g.name IN ('admins')
            )
          )
      )
      SELECT user_id, 0 post_id, current_timestamp granted_at
      FROM badge_posts
      WHERE topic_id NOT IN ( /* Topics with less than 10 posts */
          SELECT topic_id FROM badge_posts GROUP BY topic_id HAVING count(topic_id) < 10
        )
        AND topic_id NOT IN ( /* Excluded topics */
          SELECT topic_id FROM exclusions
        )
        AND ( /* Discourse requirements */
          'f' OR user_id IN (
            SELECT trigger_post.user_id
            FROM posts trigger_post
            WHERE trigger_post.id IN (240040)
          )
        )
      GROUP BY user_id
      HAVING count(*) >= POW(2, 0)
    ) q
    LEFT JOIN user_badges ub ON ub.badge_id = 147 AND ub.user_id = q.user_id
    WHERE (ub.badge_id IS NULL AND q.user_id <> -1)
    RETURNING id, user_id, granted_at

This is a 1.1 second query that runs right after I post something, so big bursts of posting can be quite crippling.

I wonder if either the exclusion clause can be heavily simplified/removed so it does not have to scan through every post an admin makes every time it runs OR if you can just run it daily instead.
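To check how much of the 1.1 seconds the exclusion clause is actually responsible for, the subquery can be timed on its own. This sketch reuses the `exclusions` subquery from the logged statement unchanged; the `BUFFERS` option assumes PostgreSQL 9.0 or later.

```sql
-- Time the exclusions subquery in isolation.
-- A LIKE pattern with a leading wildcard cannot use a plain b-tree index,
-- so raw has to be checked row by row for the candidate posts.
EXPLAIN (ANALYZE, BUFFERS)
SELECT user_id, id, topic_id, post_number
FROM posts
WHERE raw LIKE '%magic uuid to exclude%'
  AND user_id IN (
    SELECT gu.user_id
    FROM group_users gu
    WHERE group_id IN (
      SELECT g.id FROM groups g WHERE g.name IN ('admins')
    )
  );
```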

edited out magic uuid - a

-

Increased memory seems to have calmed the beast quite a lot, will post full logs in 4 hours or so.

-

Ideally, the exclusions would be a materialized view.
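A rough sketch of that idea, assuming PostgreSQL 9.3 or later (the first version with materialized views). The names `badge_exclusions` and `badge_exclusions_topic_id` are made up for illustration; the `SELECT` is the `exclusions` subquery from the logged badge query.

```sql
-- Precompute the excluded posts once instead of on every badge grant.
CREATE MATERIALIZED VIEW badge_exclusions AS
SELECT user_id, id, topic_id, post_number
FROM posts
WHERE raw LIKE '%magic uuid to exclude%'
  AND user_id IN (
    SELECT gu.user_id
    FROM group_users gu
    WHERE group_id IN (
      SELECT g.id FROM groups g WHERE g.name IN ('admins')
    )
  );

-- Hypothetical index to keep the lookup by topic cheap.
CREATE INDEX badge_exclusions_topic_id ON badge_exclusions (topic_id);

-- Re-run on a schedule (for example daily); the view is stale between refreshes.
REFRESH MATERIALIZED VIEW badge_exclusions;
```

The badge query would then use `topic_id NOT IN (SELECT topic_id FROM badge_exclusions)` in place of the inline subquery, at the cost of exclusions only taking effect after the next refresh.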