Microsoft switches to git

-

MS have made the switch to git!

[forked because this is a neat article about making git work for huge projects - bz]

-

I read this comment in Microsoft Sam's voice...

-

@LB_ They have a Git repo of Windows that is 300 GB, according to an Ars Technica report.

-

@lucas1 TFA alludes to that:

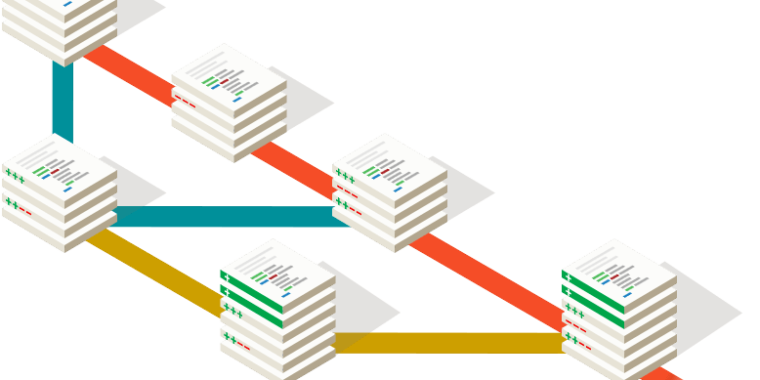

We tried an approach of “virtualizing” Git. Normally Git downloads everything when you clone. But what if it didn’t? What if we virtualized the storage under it so that it only downloaded the things you need. So clone of a massive 300GB repo becomes very fast. As I perform Git commands or read/write files in my enlistment, the system seamlessly fetches the content from the cloud (and then stores it locally so future accesses to that data are all local). The one downside to this is that you lose offline support. If you want that you have to “touch” everything to manifest it locally but you don’t lose anything else – you still get the 100% fidelity Git experience. And for our huge code bases, that was OK.
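The idea in that quote (download nothing up front, fetch on first access, keep a local copy afterwards) can be sketched as a toy model. This is only an illustration of the fetch-on-demand pattern, not how the real system is implemented; the class name `LazyObjectStore`, the `fetch_remote` callback and the fake `REMOTE` dictionary are all made up for the example.

```python
from typing import Callable, Dict, Iterable


class LazyObjectStore:
    """Toy model: fetch content remotely on first read, then serve it locally."""

    def __init__(self, fetch_remote: Callable[[str], bytes]) -> None:
        self._fetch_remote = fetch_remote   # hypothetical stand-in for a network call
        self._local: Dict[str, bytes] = {}  # content already stored locally
        self.remote_fetches = 0             # how many times we went to the "cloud"

    def read(self, path: str) -> bytes:
        # Only go to the remote if this path has never been read before.
        if path not in self._local:
            self._local[path] = self._fetch_remote(path)
            self.remote_fetches += 1
        return self._local[path]

    def hydrate_all(self, paths: Iterable[str]) -> None:
        """'Touch' every path so that later reads no longer need the remote."""
        for path in paths:
            self.read(path)


# A fake remote holding three files (assumption for the example).
REMOTE = {"a.c": b"int a;", "b.c": b"int b;", "c.c": b"int c;"}
store = LazyObjectStore(lambda p: REMOTE[p])

store.read("a.c")
store.read("a.c")  # second read is served from the local copy
fetches_after_two_reads = store.remote_fetches

store.hydrate_all(REMOTE)  # the "touch everything" step for offline use
fetches_after_hydrate = store.remote_fetches
```

In this model the "clone" costs nothing, and the offline limitation shows up as the need to call `hydrate_all` before disconnecting.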

-

@boomzilla quoted in Microsoft switches to git:

a massive 300GB repo

Why would you put everything in one?

-

On something the scale of an OS or Office, Git probably is the best tool for the job. As someone who uses TFVC and really likes it, though, I hope they keep the quality of that up too. For my project, I'd definitely rather use it than git.

-

@dkf said in Microsoft switches to git:

Why would you put everything in one?

It's what the repo was. If you RTFA, they worked on splitting stuff up as much as possible, including a dead-end attempt at using submodules.

-

@dkf Because branching is easy in git.

-

@boomzilla said in Microsoft switches to git:

As I perform Git commands or read/write files in my enlistment, the system seamlessly fetches the content from the cloud (and then stores it locally so future accesses to that data are all local). The one downside to this is that you lose offline support.

"We took a system that was completely unfit for what we want to do. Then we used a filesystem hack to actually implement what we want".

-

I'm very interested in the virtual filesystem - as far as I know, in Windows a virtual filesystem has to be a network location (like with VPNs) or have its own drive letter, and can't just be embedded within your existing filesystem. I wonder if they implemented new APIs to remove that limitation or if they just stuck to the existing ways of making virtual filesystems?

It's also nice to see that they've been contributing performance enhancements back to git for the benefit of everyone.

-

@anonymous234 said in Microsoft switches to git:

We took a system that was completely unfit for what we want to do.

Right. But only because no system is fit for what they need to do.

-

@boomzilla I don't understand what is wrong with taking something that does most of what you want and then building something better around it.

-

@lucas1 Me, neither.

-

@LB_ I don't know about virtual filesystems, but you can mount a disk into a folder rather than a drive letter. Maybe they're doing something similar.

-

@hungrier Since Vista or so, I think, but for the purpose described here that wouldn't be sufficient. You'd actually need some kind of overlay file system, I think?

-

@hungrier You think for 300 GB of a shared codebase they are doing that?

Nah I honestly think they might be using something like ZFS.

-

@PleegWat I don't see why they would need anything else. As long as their virtual FS, however they implement it, has an interface that Windows understands, and they can mount it in place of the .git folder, the rest of it is up to their solution. Git would just see it as a normal .git folder, and all the fetching and caching magic can happen behind the scenes.

-

Reading https://blogs.msdn.microsoft.com/visualstudioalm/2017/02/03/announcing-gvfs-git-virtual-file-system/, it looks like the file system uses a driver, presumably a kernel driver. Joy.

-

@lucas1 said in Microsoft switches to git:

Nah I honestly think they might be using something like ZFS.

RTFA

-

@boomzilla I know it doesn't use ZFS now ... also I don't overly care about this. BTW didn't you tell me I didn't read the article when it was quite clear that you didn't, and then you never admitted it?

-

@lucas1 said in Microsoft switches to git:

BTW didn't you tell me I didn't read the article when it was quite clear that you didn't, and then you never admitted it?

No.

-

@boomzilla I specifically remember it, it wasn't that long ago. So yes.

-

a massive 300GB repo

300GB doesn't seem all that "massive". Especially for something like Windows.

Between internal and external drives I've got 20TB of total capacity attached to my current home computer.

-

@El_Heffe On my shitty personal servers I am rocking about 10 TB ... but that isn't a software repo. It isn't quite the same.

-

@Magus said in Microsoft switches to git:

On something the scale of an OS or Office, Git probably is the best tool for the job. As someone who uses TFVC and really likes it, though, I hope they keep the quality of that up too. For my project, I'd definitely rather use it than git.

Yeah. TFVC works nicely for my use case.

I also have another use case I administer for another team. It's SVN - because a DVCS is utterly wrong for that particular job.

-

@Weng said in Microsoft switches to git:

- because a DVCS is utterly wrong for that particular job.

Yes it can be if you are using a large number of static assets.

Also be glad they are using a version control system at all.

-

I've been doing something... similar.

-

@ben_lubar I'm lost on SVN untracked branching madness

-

Bonus chatter: I remember some time ago somebody gave an example of a large git repo. "For example, the linux kernel repository at roughly eight years old is 800–900MB in size, has about 45,000 files, and is considered to have heavy churn, with 400,000 commits."

I found that adorable. You have 45,000 files. Yeah, call me when your repo starts to get big. The Windows repo has over three million files.

Four hundred thousand commits over eight years averages to around thirteen commits per day. This is heavy churn? That's so cute.

You know what we call a day with thirteen commits? "Catastrophic network outage."
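A quick sanity check of the figures in that quote, assuming 365-day years and ignoring leap days: as stated, 400,000 commits over eight years comes out at roughly 137 commits per day rather than thirteen.

```python
# Figures taken from the quoted passage.
commits = 400_000
years = 8

# Assumption: 365-day years, leap days ignored.
days = years * 365

commits_per_day = commits / days
print(round(commits_per_day))  # prints 137
```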

-

@dcon We have more commits per day than that across our set of repositories. With a team of six. People start to get antsy once there's more than 100 commits a day (unless they're smart enough to have mail filter rules set up). That's usually an indication of someone trying to debug a particularly obstreperous bit of automated build system…

OTOH, the Linux kernel is an especially bad example of what is going on with activity within a DVCS ecosystem, as the statistics you get from looking near the trunk of the tree of repositories really do not reflect the number of commits used to reach that point. Linux is basically run on squash-commits, and they're history-destroying. (I utterly hate squash commits, and only use them extremely rarely where I'm wiping out a true blunder or doing an unusually awkward merge.) The number of commits to something that might be called Linux across the distributed network is far larger than simple analysis would indicate.

-

@dcon and @dkf said in Microsoft switches to git:

commits per day

That says much more about whether you like big or small commits than about the actual activity.

-

@adynathos said in Microsoft switches to git:

@dcon and @dkf said in Microsoft switches to git:

commits per day

That says much more about whether you like big or small commits than about the actual activity.

Basically the main difference between my coworker and me (it's only two of us committing to the repo in question so strict rules aren't in place): He makes tiny commits, I make larger ones. Because I hate committing something that I know doesn't work yet (I don't mean bugs, I mean I know that it can't even be functional because it's missing parts).

While I can appreciate a more fine-grained control when reverting with small commits, if my rollback doesn't return me to a working version I might just as well just comment things out or use undo as a "revert" technique, I find it about as useful/reliable...

-

@dkf said in Microsoft switches to git:

squash-commits, and they're history-destroying.

And virtually impossible to 100% prevent

I am a big fan (in most situations) of extremely small commits, the smallest possible increment of work that does not break something.

More than once, the existence of these incremental commits has provided great insight into what the developer was thinking. Given that most applications have a lifecycle longer than the employment duration of the original developer, this can be the difference between success and disaster.

-

@thecpuwizard said in Microsoft switches to git:

I am a big fan (in most situations) of extremely small commits, the smallest possible increment of work that does not break something.

Me too.

And then another extreme that I used to follow is to commit IFF the code has been deployed to production. Anything in between is only shelved.

Checking in frequently does not go well if you checked in code for feature A, B, C and D in that order, then only got approval to push B and D to production.

-

One option for that scenario is to set up feature branches and merge batches of commits into master/production.

Sometimes I even do it!

I pretty rarely do one-off commits into master, though. For one thing, my development branch knows about its development environment; while master only knows about production. So I can't really test changes to master directly.

-

Many different ways, many different environments. NOT claiming one is universally better/worse; simply sharing what works for me (and most of my firm's clients) and some of the ramifications.

In general we have eliminated branches, except for when multiple versions are still "in support" and a change is required for something other than the current version.

Since this thread is about Git (and Microsoft's adoption) I don't want to go too much further in this discussion, but am always happy to talk about it...

-

@thecpuwizard said in Microsoft switches to git:

@dkf said in Microsoft switches to git:

squash-commits, and they're history-destroying.

And virtually impossible to 100% prevent

Oh, they're quite easy to 100% prevent: just don't use a VCS that allows history rewriting.

-

@masonwheeler said in Microsoft switches to git:

Oh, they're quite easy to 100% prevent: just don't use a VCS that allows history rewriting.

My understanding of git is that a squash-commit occurs when merging from one branch to another. Even in a VCS that prevents history rewriting (like TFS), what prevents you from doing a merge and munging all those changes together into one new massive changeset, squashing the history?

-

@unperverted-vixen I think you're not understanding Git correctly, then. I see it all the time on GitHub, where someone sends a PR which gets accepted (merged) and then the recipient repository gets several new commits added to its history. (In fact, sometimes the recipient doesn't like that, and asks the sender to squash it down into a single commit, which would be redundant if this were something that happened automatically.)

-

@masonwheeler Let me rephrase that: when a squash commit does happen, it's in the context of taking commits from one branch and applying them to another (aka merging). I didn't mean to imply that all merges/PRs result in squash commits.

-

@unperverted-vixen Oh, all right. That makes more sense.

-

@captain said in Microsoft switches to git:

my development branch knows about its development environment; while master only knows about production

-

@masonwheeler said in Microsoft switches to git:

@unperverted-vixen I think you're not understanding Git correctly, then. I see it all the time on GitHub, where someone sends a PR which gets accepted (merged) and then the recipient repository gets several new commits added to its history. (In fact, sometimes the recipient doesn't like that, and asks the sender to squash it down into a single commit, which would be redundant if this were something that happened automatically.)

This is a thing? I hate pulling in a bunch of pointless little commits from a feature branch. I want to see milestones (or at least features) when I'm looking at master.

Now, if only I could somehow keep changes on some files from propagating when I push/merge without ignoring them.

-

@captain This is a thing, because while you may not enjoy it when you're merging, a maintenance developer 3 years from now is likely to be much more appreciative of the fine-grained commits and specific, detailed commit messages explaining more clearly than at a "milestone" level how the code got to be the way it is.

-

@dkf I've been afflicted by coworkers producing two types of nigh-unreviewable pull requests:

- One developer who provides a single commit changing tens of files, comprising the entirety of a change ticket. The first one took me three days to pick apart. The second one didn't get reviewed.

- Others who provide tens of commits, showing each attempt in their debug cycle. With so many changes that get replaced later in the stream, the stream can only be reviewed in totality, essentially reducing to the single-mega-commit type.

The latter I was fortunately able to teach how to rewrite history as they go, prior to pull request. Now at least from them I get nice sequences of self-contained commits, permitting me to subdivide the task.

History prior to start of code review is not useful and tends to obfuscate. Rewriting it to be clean helps code review and subsequent maintenance.

-

@masonwheeler said in Microsoft switches to git:

@unperverted-vixen I think you're not understanding Git correctly, then. I see it all the time on GitHub, where someone sends a PR which gets accepted (merged) and then the recipient repository gets several new commits added to its history. (In fact, sometimes the recipient doesn't like that, and asks the sender to squash it down into a single commit, which would be redundant if this were something that happened automatically.)

Actually, it is something that can happen automatically: when merging, there's an option to squash it down to a single commit.
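To make the difference concrete, here is a toy model of a commit graph, assuming a linear feature branch. It is not how Git stores anything internally (the `Commit` class and the helper functions are invented for the illustration); it only shows what gets lost. In Git itself the option in question is `git merge --squash`.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class Commit:
    message: str
    changes: Dict[str, str]             # path -> content introduced by this commit
    parents: Tuple["Commit", ...] = ()


def commits_since(tip: Commit, base: Commit) -> List[Commit]:
    """Commits on a linear branch after `base` up to `tip`, oldest first."""
    chain = []
    node = tip
    while node is not base:
        chain.append(node)
        node = node.parents[0]
    return list(reversed(chain))


def merge(target: Commit, feature: Commit) -> Commit:
    # Regular merge: two parents, so every feature commit stays reachable.
    return Commit("Merge feature", {}, (target, feature))


def squash_merge(target: Commit, feature: Commit, base: Commit) -> Commit:
    # Squash: fold all feature changes into one commit with a single parent.
    combined: Dict[str, str] = {}
    for commit in commits_since(feature, base):
        combined.update(commit.changes)
    return Commit("Feature (squashed)", combined, (target,))


def history(tip: Commit) -> List[str]:
    """Messages of every commit reachable from `tip`."""
    seen, stack, messages = set(), [tip], []
    while stack:
        commit = stack.pop()
        if id(commit) in seen:
            continue
        seen.add(id(commit))
        messages.append(commit.message)
        stack.extend(commit.parents)
    return messages


base = Commit("base", {"app.py": "v1"})
f1 = Commit("wip 1", {"app.py": "v2"}, (base,))
f2 = Commit("wip 2", {"util.py": "v1"}, (f1,))
f3 = Commit("fix typo", {"app.py": "v3"}, (f2,))

merged = merge(base, f3)
squashed = squash_merge(base, f3, base)
```

In this model `history(merged)` still reaches all three feature commits, while `history(squashed)` contains only the squashed commit and `base`: the end state of the files is the same, but the intermediate steps are no longer part of the target's history.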

-

@greybeard said in Microsoft switches to git:

History prior to start of code review is not useful

You state that as an absolute. However, there are many cases where it is not true. The tiniest of details may provide significant value, and any information loss (in this case the granular evolution of the implementation) can prevent that value from being realized.

-

@greybeard said in Microsoft switches to git:

I've been afflicted by coworkers producing two types of nigh-unreviewable pull requests

I'm more lenient (review just the delta, not the path to get there) but I prefer to see many commits as that helps visibility within a team of what people are working on. (I know this doesn't scale perfectly, but for “small” teams it's a good idea to keep that sort of visibility about.)

Microsoft hosts the Windows source in a monstrous 300GB Git repository
